Best LLMOps Platforms in 2026: 7 End-to-End Stacks Compared

FutureAGI, Langfuse, MLflow, W&B Weave, Comet, Braintrust, LangSmith for LLMOps in 2026. Pricing, OSS license, and what each platform won't do end-to-end.


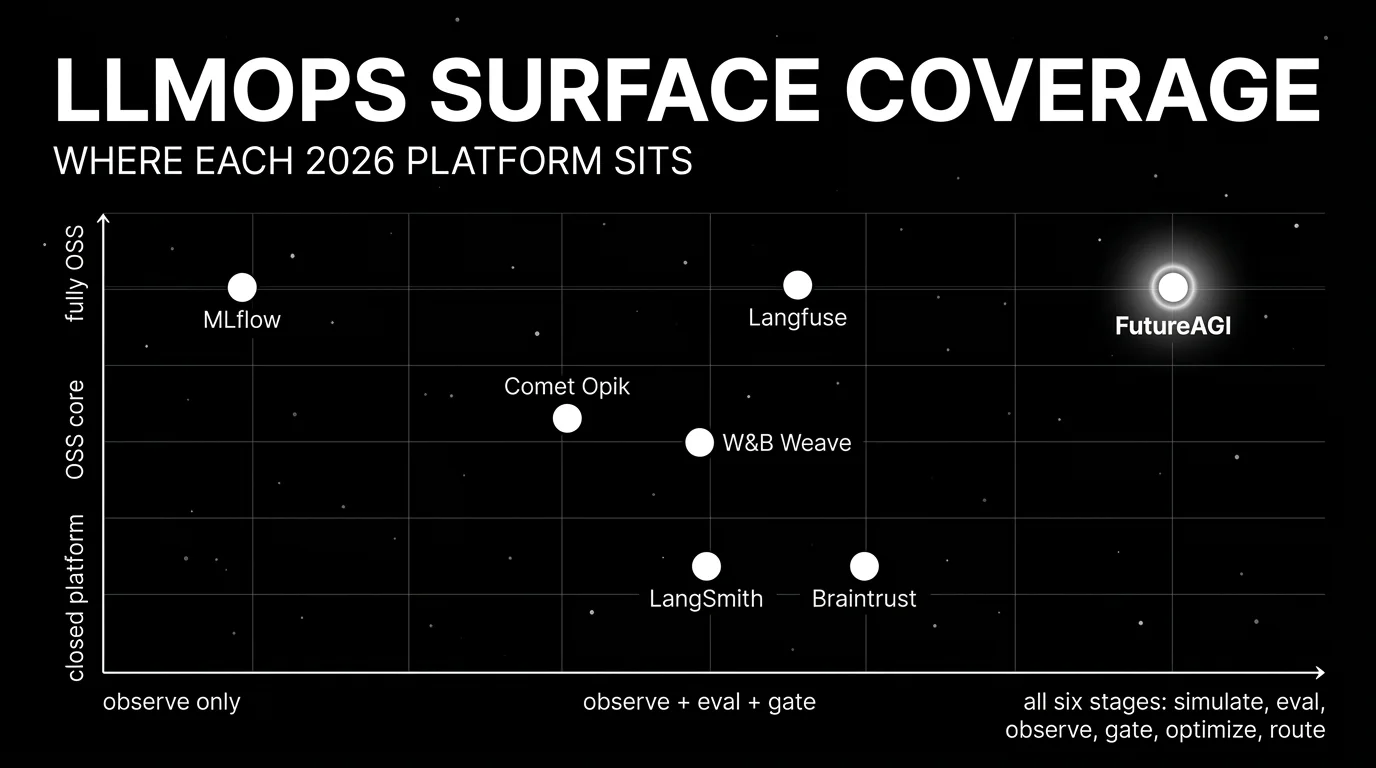

LLMOps in 2026 is no longer “use MLflow plus a notebook.” Production teams need simulation, span-level tracing, span-attached evals, a prompt registry with deployment labels, gateway routing, runtime guardrails, drift detection, and CI gating, all in one loop. The seven platforms below cover most procurement shortlists. Three differences matter: how many of those stages are first-class on the platform rather than stitched together from adjacent tools, the license, and how well the platform handles high-volume span ingestion. This guide lays out the honest tradeoffs.

TL;DR: Best LLMOps platform per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| End-to-end loop: simulate + eval + trace + gate + optimize | FutureAGI | One runtime across all six stages | Free + usage from $2/GB | Apache 2.0 |
| Self-hosted observability with prompts and datasets | Langfuse | Mature traces, prompts, datasets, evals | Hobby free, Core $29/mo, Pro $199/mo | MIT core, enterprise dirs separate |
| Enterprise model registry with LLM extension | MLflow | Strong classical-ML lineage, growing LLM surface | OSS free; managed via Databricks | Apache 2.0 |
| Already on W&B for training experiments | W&B Weave | OSS LLM library + W&B platform | Weave OSS free, W&B Pro $50/user/mo | Apache 2.0 |
| Already on Comet for classical ML | Comet Opik | OSS LLM library + Comet platform | Free + commercial tiers quote-based | Apache 2.0 |
| Closed-loop SaaS with strong dev evals | Braintrust | Polished experiments, scorers, CI gate | Starter free, Pro $249/mo | Closed platform |
| LangChain or LangGraph runtime | LangSmith | Native chain and graph trace semantics | Developer free, Plus $39/seat/mo | Closed, MIT SDK |

If you only read one row: pick FutureAGI when the goal is one platform across all six LLMOps stages. Pick MLflow when an enterprise model registry is the constraint. Pick Langfuse when self-hosting and OSS gravity drive the choice.

What an LLMOps platform actually requires

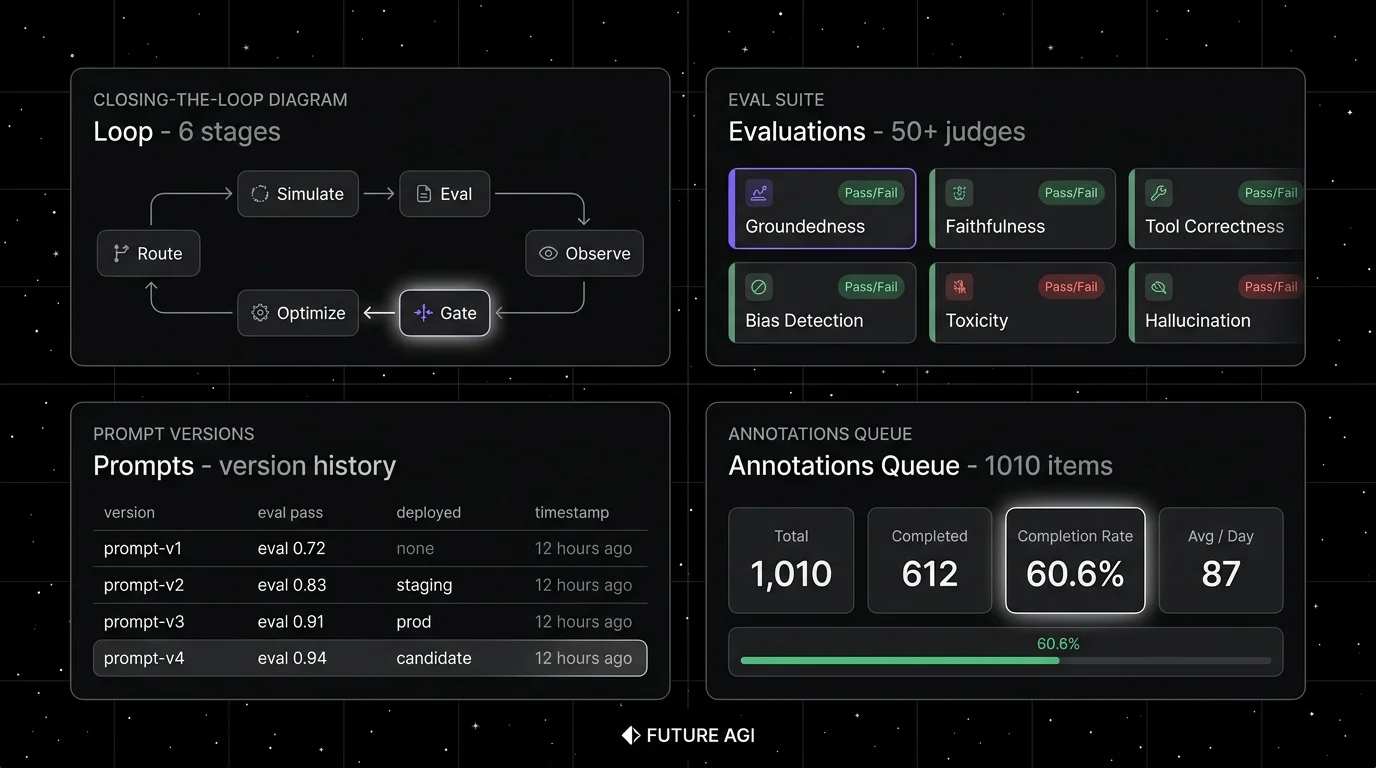

A platform that covers the full LLMOps loop handles six stages. Anything less, and you end up stitching tools together.

- Simulate. Synthetic personas, replay of production traces, voice and text scenarios in pre-production.

- Evaluate. Local metrics, LLM-as-judge with BYOK (bring your own key), span-attached scores that live on the trace.

- Observe. Span tree at production scale, OTel ingestion, session-level views, cost dashboards, drift alerts.

- Gate. CI hook that runs the same eval contract that pre-prod used, with versioned thresholds.

- Optimize. Failing traces become labeled training data; the optimizer ships a versioned prompt; the gate evaluates the new version against the same threshold.

- Route. Gateway with provider routing, fallbacks, caching, guardrails, BYOK, span emission.
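The Gate stage is the easiest of the six to picture in code. The following is a minimal sketch, not any platform's API. It assumes an eval contract is just a version string plus per-metric minimum mean scores. The `EvalContract` and `gate` names and the metric names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class EvalContract:
    """Hypothetical versioned eval contract: metric name -> minimum mean score."""
    version: str
    thresholds: dict[str, float]


def gate(contract: EvalContract, scores: dict[str, list[float]]) -> tuple[bool, list[str]]:
    """Check a candidate's per-example scores against every threshold in the contract."""
    failures = []
    for metric, minimum in contract.thresholds.items():
        values = scores.get(metric)
        if not values:
            # A metric the candidate was never scored on counts as a failure.
            failures.append(f"{metric}: no scores")
            continue
        average = mean(values)
        if average < minimum:
            failures.append(f"{metric}: {average:.2f} < {minimum:.2f}")
    return (not failures, failures)


# Illustrative contract and scores; the metric names are placeholders.
contract = EvalContract("2026-05-01", {"groundedness": 0.8, "task_completion": 0.9})
passed, failures = gate(contract, {
    "groundedness": [0.9, 0.7, 0.85],
    "task_completion": [1.0, 1.0, 0.8],
})
print(contract.version, "passed" if passed else failures)
```

In CI, a failing gate would exit non-zero so the pipeline blocks the merge. Versioning the contract lets the Optimize stage test a new prompt against the same thresholds the previous version faced.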

The 7 LLMOps platforms compared

1. FutureAGI: Best for the end-to-end LLMOps loop

Open source. Self-hostable. Hosted cloud option.

Use case: Production teams that have stitched together DeepEval for CI evals, Langfuse for traces, MLflow for prompts, a notebook for optimization, and a gateway from yet another vendor, and who watch the same incident class repeat because the handoffs lose fidelity. The pitch is one runtime where simulate, evaluate, observe, gate, optimize, and route close on each other.

Architecture: The public repo is Apache 2.0 and self-hostable. The runtime is built so each handoff is a versioned object. Simulate-to-eval: simulated traces are scored by the same evaluator that judges production. Eval-to-trace: scores are span attributes. Trace-to-optimizer: failing spans flow into the optimizer as labeled examples. Optimizer-to-gate: the optimizer ships a versioned prompt that CI evaluates against the same threshold. Gate-to-route: only versions that hold the eval contract reach the Agent Command Center gateway, where guardrails and routing enforce the same shape in production.
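The trace-to-optimizer handoff can be sketched as a filter over spans whose attached scores miss a threshold. Everything below is a hypothetical illustration rather than FutureAGI's schema or SDK, including the span shape, the `eval.<metric>` and `prompt.version` attribute keys, and the `failing_examples` helper.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """Hypothetical span: input/output text plus attributes such as attached eval scores."""
    span_id: str
    input_text: str
    output_text: str
    attributes: dict = field(default_factory=dict)


@dataclass(frozen=True)
class LabeledExample:
    span_id: str
    prompt_version: str
    input_text: str
    bad_output: str
    failed_metrics: tuple


def failing_examples(spans: list[Span], thresholds: dict[str, float]) -> list[LabeledExample]:
    """Turn spans whose attached scores fall below a threshold into labeled examples."""
    examples = []
    for span in spans:
        failed = []
        for metric, minimum in thresholds.items():
            score = span.attributes.get(f"eval.{metric}")
            # Spans with no score for a metric are not counted as failing it.
            if score is not None and score < minimum:
                failed.append(metric)
        if failed:
            examples.append(LabeledExample(
                span_id=span.span_id,
                prompt_version=span.attributes.get("prompt.version", "unknown"),
                input_text=span.input_text,
                bad_output=span.output_text,
                failed_metrics=tuple(failed),
            ))
    return examples


spans = [
    Span("s1", "When was the policy updated?", "In 2019.",
         {"eval.groundedness": 0.4, "prompt.version": "v7"}),
    Span("s2", "Who approves refunds?", "The billing team.",
         {"eval.groundedness": 0.95, "prompt.version": "v7"}),
]
for example in failing_examples(spans, {"groundedness": 0.8}):
    print(example)
```

Carrying `prompt_version` on each example means an optimizer can tell which prompt produced the failure it is learning from.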

Pricing: Free plus usage starting at $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens, $0.08 per voice minute. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.
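To turn these unit prices into a monthly estimate, multiply each usage line by its rate. This sketch covers usage charges only. It assumes no free allowance and leaves out the Boost, Scale, and Enterprise plan fees. The monthly volumes are made up.

```python
# Unit prices as listed above, in USD; verify against the current pricing page.
UNIT_PRICES = {
    "storage_gb": 2.00,                     # per GB
    "ai_credits": 10.00 / 1_000,            # per credit
    "gateway_requests": 5.00 / 100_000,     # per request
    "sim_text_tokens": 2.00 / 1_000_000,    # per simulation token
    "voice_minutes": 0.08,                  # per minute
}


def usage_cost(usage: dict[str, float]) -> float:
    """Sum quantity * unit price over every usage line, rounded to cents."""
    unknown = set(usage) - set(UNIT_PRICES)
    if unknown:
        raise KeyError(f"no unit price for: {sorted(unknown)}")
    return round(sum(UNIT_PRICES[item] * qty for item, qty in usage.items()), 2)


# Hypothetical month of usage.
month = {
    "storage_gb": 40,               # 40 * $2 = $80
    "ai_credits": 5_000,            # 5 * $10 = $50
    "gateway_requests": 2_000_000,  # 20 * $5 = $100
    "sim_text_tokens": 10_000_000,  # 10 * $2 = $20
    "voice_minutes": 500,           # 500 * $0.08 = $40
}
print(usage_cost(month))  # 290.0
```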

Best for: Teams running RAG agents, voice agents, support automation, or copilots where the production loop should close back into pre-prod tests without manual handoffs.

Worth flagging: More moving parts than MLflow on a Databricks notebook or LangSmith inside a LangChain app. ClickHouse, Postgres, Redis, Temporal, and the Agent Command Center gateway are separate services that someone has to operate. Use the hosted cloud if you do not want to run the data plane yourself.

2. Langfuse: Best for self-hosted LLMOps with prompts and datasets

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with prompt versioning, dataset-driven evals, and human annotation. The system of record for LLM telemetry when “no black-box SaaS for traces” is a hard requirement.

Pricing: Langfuse Cloud starts free on Hobby with 50,000 units/mo, 30 days data access, 2 users. Core $29/mo with 100,000 units, $8 per additional 100K, 90 days data access, unlimited users. Pro $199/mo with 3 years data access, SOC 2 and ISO 27001, optional Teams add-on $300/mo. Enterprise $2,499/mo.
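The Core overage is simple arithmetic. This minimal sketch assumes the flat $8 per additional 100K units quoted above is billed pro rata. Actual billing may be tiered or rounded to whole blocks, and what counts as a unit is defined on Langfuse's pricing page.

```python
def langfuse_core_monthly(units: int,
                          base_fee: float = 29.0,
                          included_units: int = 100_000,
                          overage_per_100k: float = 8.0) -> float:
    """Estimated Core bill in USD; assumes overage is billed pro rata at a flat rate."""
    extra_units = max(0, units - included_units)
    return round(base_fee + overage_per_100k * extra_units / 100_000, 2)


for units in (80_000, 350_000, 1_000_000):
    # Prints $29.00, $49.00, and $101.00 respectively.
    print(f"{units:>9,} units -> ${langfuse_core_monthly(units):.2f}")
```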

OSS status: MIT core, enterprise directories handled separately.

Best for: Platform teams that operate the data plane and want trace data in their own infrastructure, paired with a CI eval framework like DeepEval or a custom harness.

Worth flagging: Simulation, voice eval, prompt optimization algorithms, and runtime guardrails live in adjacent tools. See Langfuse Alternatives for the broader view.

3. MLflow: Best for enterprise model registry with LLM extension

Open source. Apache 2.0. Managed via Databricks.

Use case: Teams already standardized on MLflow for classical ML lineage and experiment tracking who want to extend the same registry to LLM workflows. MLflow’s LLM tracing, evaluation, and prompt registry surfaces grew between 2024 and 2026.

Pricing: MLflow is Apache 2.0 and free as OSS. Managed MLflow runs on Databricks and is bundled with Databricks DBU usage; verify the latest unit pricing on the Databricks pricing page.

OSS status: Apache 2.0. 20K+ stars on GitHub.

Best for: Enterprise teams that need one model registry across classical ML and LLM, with strong audit and lineage stories. Strong fit for regulated industries that already operate MLflow Tracking servers.

Worth flagging: MLflow’s LLM surface is less developed than dedicated tools. Simulation, voice eval, gateway, and guardrails are out of scope. Most teams pair MLflow as the system of record with a dedicated LLMOps platform. See MLflow Alternatives.

4. W&B Weave: Best when the team is already on W&B

OSS LLM library. Closed W&B platform.

Use case: Teams that already use Weights & Biases for training experiments, model checkpoints, and reports, and who want LLM tracing and eval inside the same vendor. W&B Weave is the OSS LLM library; the W&B platform handles experiments, dashboards, and team workflows.

Pricing: Weave OSS is free. The W&B platform pricing starts free for personal use; team plans are $50 per user per month with paid tiers for enterprise governance, on-prem, and SSO. Verify the latest tier shape against the W&B pricing page.

OSS status: Apache 2.0 for Weave. Closed W&B platform.

Best for: ML teams that already standardize on W&B for training. Strong fit when the team’s identity is research and experiment-heavy and W&B is the existing system of record.

Worth flagging: Eval surface and gateway are smaller than dedicated LLM platforms. Per-user pricing scales poorly for cross-functional teams. Weave is newer and less mature than the classic W&B platform.

5. Comet Opik: Best when the team is already on Comet

OSS LLM library. Closed Comet platform.

Use case: Teams that already use Comet for classical ML experiment tracking, who want LLM tracing and eval under the same vendor. Opik is the OSS LLM project; the Comet platform handles experiments, dashboards, and team workflows.

Pricing: Comet starts free for personal use. Commercial tiers, which add enterprise governance, on-prem, and SSO, are quote-based. Verify the latest tier shape against the Comet pricing page.

OSS status: Apache 2.0 for Opik. Closed Comet platform.

Best for: ML teams that already use Comet. Strong fit for organizations that want LLM observability under the same vendor as classical ML.

Worth flagging: Eval surface and gateway are smaller than dedicated LLM platforms. Opik is newer and less mature than the classic Comet platform. Pricing details require sales contact.

6. Braintrust: Best for closed-loop SaaS dev workflow

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want one SaaS for experiments, datasets, scorers, prompt iteration, online scoring, and CI gating, with sandboxed agent evaluation and a clean UI. Loop is the in-product AI assistant.

Pricing: Braintrust Starter is $0 with 1 GB processed data, 10K scores, 14 days retention, unlimited users. Pro $249/mo with 5 GB, 50K scores, 30 days retention. Enterprise custom.

OSS status: Closed platform.

Best for: Teams that prefer buying to building, want experiments and scorers in one UI, and do not need open-source control.

Worth flagging: No first-party voice simulator. Gateway, guardrails, and prompt optimization are not first-class. See Braintrust Alternatives.

7. LangSmith: Best for LangChain and LangGraph runtimes

Closed platform. Open SDKs. Cloud, hybrid, and Enterprise self-hosting.

Use case: Teams whose runtime is already LangChain or LangGraph. LangSmith gives native trace semantics, evals, prompts, deployment, and Fleet workflows aligned to the LangChain mental model.

Pricing: Developer $0 per seat with 5,000 base traces/mo, 1 Fleet agent, 50 Fleet runs, 1 seat. Plus $39 per seat with 10,000 base traces/mo, one dev-sized deployment, unlimited Fleet agents, 500 Fleet runs, up to 3 workspaces.

OSS status: Closed platform, MIT SDK.

Best for: LangChain v1 and LangGraph teams who want LLMOps tied to chain semantics, Playground replay, Fleet for agent deployment, and Studio for graph visualization.

Worth flagging: Outside LangChain, the value drops. Seat pricing makes broad cross-functional access expensive. See LangSmith Alternatives.
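The seat-pricing point is easiest to see as a break-even calculation. This sketch uses the list prices quoted in this guide: LangSmith Plus at $39 per seat and W&B team plans at $50 per user. It compares them against Braintrust Pro's $249 flat fee, assuming that flat tier covers the whole team. Usage charges are ignored.

```python
# List prices quoted in this guide, USD per month, before any usage charges.
PER_SEAT = {"LangSmith Plus": 39.0, "W&B team plan": 50.0}
FLAT_FEE = 249.0  # Braintrust Pro; assumes the flat tier covers the whole team


def seat_cost(seats: int, per_seat: float) -> float:
    return seats * per_seat


def break_even_seats(flat_fee: float, per_seat: float) -> int:
    """Smallest team size at which seat pricing costs strictly more than the flat fee."""
    return int(flat_fee // per_seat) + 1


for seats in (3, 10, 25):
    costs = {name: seat_cost(seats, price) for name, price in PER_SEAT.items()}
    print(f"{seats:>2} seats: {costs} vs flat ${FLAT_FEE:.0f}")

for name, price in PER_SEAT.items():
    # LangSmith Plus crosses the flat fee at 7 seats, the W&B team plan at 5.
    print(f"{name} exceeds the flat fee at {break_even_seats(FLAT_FEE, price)} seats")
```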

Decision framework: pick by constraint

- OSS is non-negotiable: FutureAGI, Langfuse, MLflow. Add Weave or Opik if the team is already on W&B or Comet.

- Enterprise model registry: MLflow, with a dedicated LLM platform paired for the production loop.

- LangChain or LangGraph runtime: FutureAGI for OSS framework-agnostic observability, LangSmith for the LangChain-native path.

- Closed-loop SaaS dev workflow: FutureAGI, Braintrust.

- Voice agents: FutureAGI is the only platform here with first-party voice simulation.

- Gateway and guardrails as first-class: FutureAGI Agent Command Center.

- Already on W&B for training: Weave, with a production observability tool layered on top.

Common mistakes when picking an LLMOps platform

- Treating LLMOps as MLOps with prompts. The data primitives (token spans, prompts, judge scores) and failure modes (hallucination, tool-call misses, jailbreaks) are different. Pure MLOps tools struggle as the primary surface.

- Stitching for the loop. Most teams end up running an eval framework, an observability tool, a notebook for optimization, and a gateway, often from four different vendors. Each handoff loses fidelity.

- Picking on demo dashboards. Vendor demos use clean prompts and idealized failures. Run a domain reproduction with your real traces, your model mix, your concurrency, and your judge cost.

- Pricing only the subscription. Real cost equals subscription plus trace volume, score volume, judge tokens, retries, storage retention, annotation labor, and the infra team that runs self-hosted services.

- Ignoring CI gates. Without CI gating, fixes regress and the loop never closes.

- Treating OSS and self-hostable as the same. Phoenix is source-available under ELv2, which is not an OSI-approved open-source license. Langfuse has enterprise directories outside MIT. Weave and Opik are OSS libraries paired with closed platforms.

What changed in LLMOps in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Braintrust added Java auto-instrumentation | Java, Spring AI, LangChain4j teams can run the LLMOps loop in their language. |
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can run experiment checks before production release. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangSmith expanded into agent deployment and workflow products. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Gateway, guardrails, and high-volume trace analytics moved into the same loop. |
| 2026 | MLflow continued LLM tracing and evaluation expansion | The dominant model registry kept growing its LLM surface. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Trace, prompt, dataset, and eval workflows moved closer to terminal-native agent tooling. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real traces, including failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes. Instrument each candidate with your harness, your OTel payload shape, your prompt versions, and your judge model.

- Test the full loop. Simulate a regression, push a fix through CI, deploy through the gateway, observe in production, surface the failing trace back into the dataset, and re-optimize the prompt. Track time-to-resolve at each stage.

- Cost-adjust. Real cost is the platform price plus the costs driven by trace volume, token volume, test-time compute, judge sampling rate, retry rate, storage retention, and annotation hours.
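The cost-adjustment step can be sketched as a sum of monthly cost components. Every field below is a hypothetical input for your own workload. The judge-call model, where judge calls equal traces times sampling rate times (1 + retry rate), is one reasonable simplification rather than a vendor formula.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """One month of hypothetical inputs; replace every value with your own numbers."""
    subscription: float               # platform fee, USD
    traces: int                       # production traces ingested
    price_per_1k_traces: float        # platform ingestion price, USD per 1,000 traces
    judge_sample_rate: float          # fraction of traces scored by an LLM judge
    judge_tokens_per_call: int        # prompt + completion tokens per judge call
    judge_price_per_1m_tokens: float  # judge model price, USD per 1M tokens
    retry_rate: float                 # extra judge calls from retries, e.g. 0.05 = 5%
    storage_gb: float                 # retained trace storage
    price_per_gb: float
    annotation_hours: float
    hourly_rate: float
    infra_cost: float = 0.0           # self-hosting ops; 0 for a fully hosted plan


def monthly_tco(w: Workload) -> dict[str, float]:
    """Break total monthly cost into components; the 'total' key is their sum."""
    judge_calls = w.traces * w.judge_sample_rate * (1 + w.retry_rate)
    parts = {
        "subscription": w.subscription,
        "ingestion": w.traces / 1_000 * w.price_per_1k_traces,
        "judge_tokens": judge_calls * w.judge_tokens_per_call / 1_000_000 * w.judge_price_per_1m_tokens,
        "storage": w.storage_gb * w.price_per_gb,
        "annotation": w.annotation_hours * w.hourly_rate,
        "infra": w.infra_cost,
    }
    parts["total"] = round(sum(parts.values()), 2)
    return parts


example = Workload(
    subscription=249, traces=1_000_000, price_per_1k_traces=0.5,
    judge_sample_rate=0.1, judge_tokens_per_call=1_000, judge_price_per_1m_tokens=3.0,
    retry_rate=0.05, storage_gb=20, price_per_gb=2.0,
    annotation_hours=10, hourly_rate=50.0,
)
print(monthly_tco(example))
```

Keeping the components separate shows which lever dominates. In this made-up example, ingestion and annotation each cost about twice the subscription.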

How FutureAGI implements LLMOps

FutureAGI is the production-grade LLMOps platform built around the simulate-evaluate-observe-gate-optimize-route loop this post uses to compare platforms. Its tracing library, traceAI, is Apache 2.0, and FutureAGI offers a self-hostable platform that runs every stage on the same plane:

- Eval and CI - 50+ first-party metrics (Groundedness, Tool Correctness, Task Completion, Hallucination, PII, Toxicity, Refusal Calibration) ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic.

- Tracing - traceAI is Apache 2.0, OTel-based, and auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j, Spring AI), and C#. The trace tree carries metric scores, prompt versions, and tool-call accuracy as first-class span attributes.

- Simulation and optimization - persona-driven synthetic users exercise voice and text agents pre-prod, and six prompt-optimization algorithms (GEPA, PromptWizard, ProTeGi, Bayesian, Meta-Prompt, Random) consume failing trajectories as labeled training data.

- Gateway and guardrails - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane.
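Running one metric definition both offline in CI and online against traffic comes down to calling the same function from two places. The sketch below shows this with a plain dict as the span. Its toy lexical-overlap score is a hypothetical stand-in for a real Groundedness metric and is not FutureAGI's implementation.

```python
import re


def _tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_groundedness(answer: str, context: str) -> float:
    """Toy proxy: share of distinct answer tokens that also appear in the context."""
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & _tokens(context)) / len(answer_tokens)


# Offline: in a test_*.py file, pytest collects functions named test_* and runs them in CI.
def test_answer_is_grounded():
    score = overlap_groundedness("Paris is the capital", "The capital of France is Paris.")
    assert score >= 0.8


# Online: the same function scores a production span and stores the result as an attribute.
def score_span(span: dict) -> dict:
    span.setdefault("attributes", {})["eval.overlap_groundedness"] = overlap_groundedness(
        span["output"], span["context"]
    )
    return span


print(score_span({"output": "Paris is big", "context": "Paris is the capital"})["attributes"])
```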

turing_flash delivers 50-70 ms p95 latency for guardrail screening.
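A vendor p95 figure is worth re-measuring during a domain reproduction. The sketch below times each call and reports the nearest-rank 95th percentile. It uses a toy regex PII screen as a hypothetical stand-in for a real guardrail; the screen is not turing_flash.

```python
import re
import time

# Toy PII guardrail: regexes for emails and US SSN-shaped strings. Illustrative only;
# it is not how turing_flash or any production guardrail works.
PII_PATTERNS = [
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
]


def screen(text: str) -> bool:
    """Return True if the text should be blocked."""
    return any(pattern.search(text) for pattern in PII_PATTERNS)


def p95(samples_ms: list[float]) -> float:
    """Nearest-rank 95th percentile."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) without float rounding
    return ordered[rank - 1]


def measure(guardrail, inputs: list[str]) -> float:
    """Time each guardrail call in milliseconds and return the p95."""
    latencies = []
    for text in inputs:
        start = time.perf_counter()
        guardrail(text)
        latencies.append((time.perf_counter() - start) * 1000)
    return p95(latencies)


inputs = ["reach me at a.b@example.com", "ssn 123-45-6789", "no personal data here"] * 200
print(f"p95 screen latency: {measure(screen, inputs):.4f} ms")
```

In a real reproduction, `inputs` would be your exported traces and `guardrail` would call the candidate's screening endpoint, so network time is included in the measurement.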

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping LLMOps end up running three or four tools in production: one for evals, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the eval, trace, simulation, optimization, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching.

Sources

- FutureAGI pricing

- FutureAGI GitHub repo

- Langfuse pricing

- Langfuse GitHub repo

- MLflow site

- MLflow GitHub repo

- W&B pricing

- W&B Weave GitHub repo

- Comet pricing

- Comet Opik GitHub repo

- Braintrust pricing

- LangSmith pricing

- LangSmith SDK GitHub repo
