Deterministic LLM Evaluation Metrics in 2026: Where They Still Win

BLEU, ROUGE, exact match, regex, and JSON validators in 2026. Where deterministic metrics still earn their place, and where LLM-as-judge wins instead.


LLM-as-judge took over the eval discourse in 2024 and 2025. By 2026 the conversation is back to a more honest split: which checks should run cheaply on every output, and which deserve a judge call. Deterministic metrics earn their place when the task has a clear ground truth, when a judge call costs too much for the request volume, or when the failure mode is structural. This post walks through where deterministic metrics still win in 2026, where they fail, and how to combine them with LLM judges in a working CI pipeline.

TL;DR: deterministic vs LLM-as-judge in one paragraph

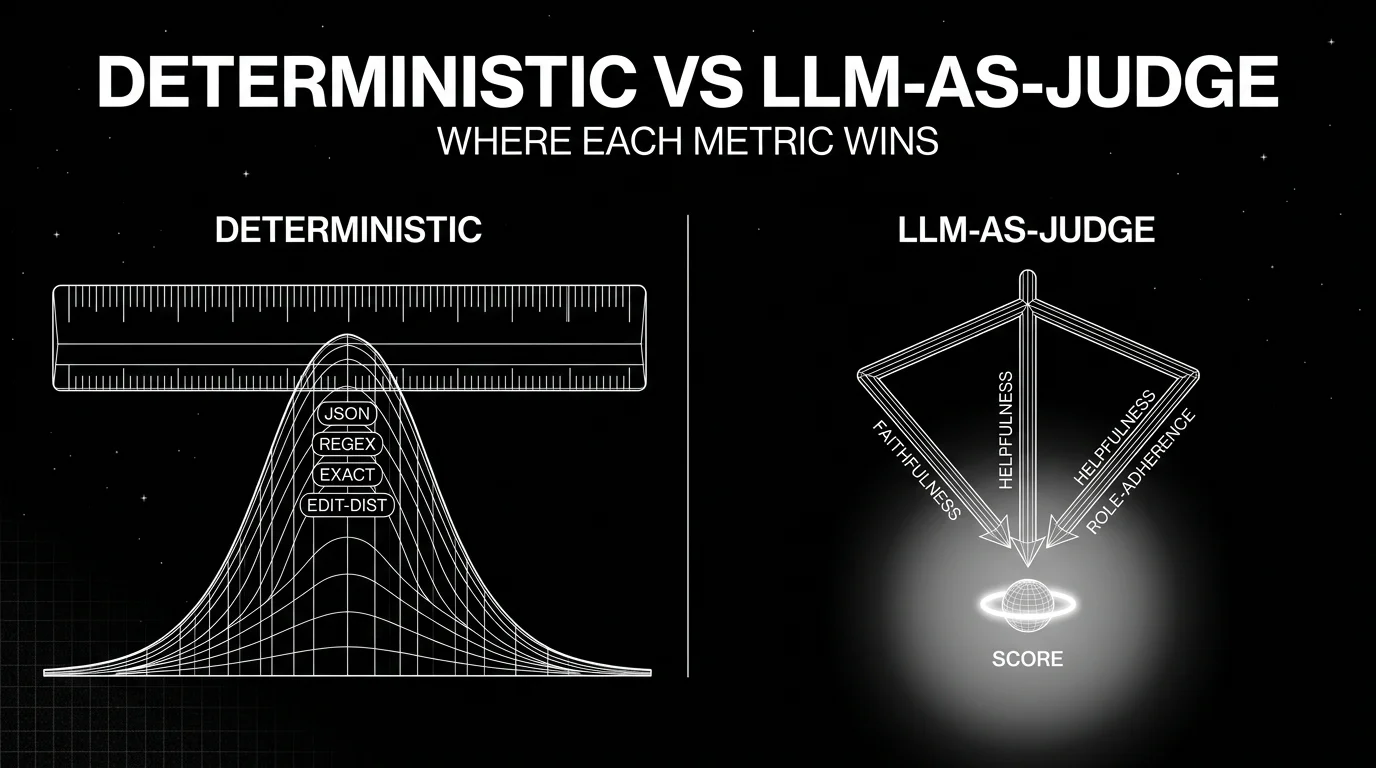

Use deterministic metrics for structural and bright-line checks: JSON schema validation, regex against forbidden or required strings, exact-match on canonical answers, edit distance for code or SQL. Use LLM-as-judge for semantic reasoning: faithfulness against retrieved context, helpfulness, role adherence, conversation completeness, and rubric-based subjective scoring. The cleanest CI pipeline runs deterministic checks first as cheap fail-fast gates, then runs LLM judges on the requests that pass. The standard eval framings are right that BLEU and ROUGE alone are not enough for modern LLM apps. They are wrong if read to imply deterministic metrics have no place. They have a place; it is just smaller and more specific than 2022 made it look.

What deterministic actually means

A metric is deterministic if running it twice on the same input returns the same score. That sounds obvious. The implication is sharp: any LLM judge with non-zero temperature is non-deterministic by default, and any LLM judge that reads natural language criteria is non-deterministic in practice even at temperature zero, because hosted inference is not guaranteed to be bit-for-bit repeatable and the judge model can change behavior across versions.

Deterministic metrics in the LLM eval world fall into five families.

Lexical overlap. BLEU, ROUGE, METEOR, chrF. These score n-gram or token overlap between a generated string and a reference string. They are reproducible by construction and cheap to compute. They fail when the reference and generated text express the same idea with different words.
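To make the paraphrase failure concrete, here is a minimal sketch of a unigram-overlap F1 score. It is a simplified, ROUGE-1-like stand-in written for illustration, not the official BLEU or ROUGE implementation; the function name and the example sentences are assumptions.

```python
from collections import Counter


def unigram_f1(candidate: str, reference: str) -> float:
    """Token-overlap F1 between two strings (simplified, ROUGE-1-like)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count per shared token.
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


reference = "the meeting was moved to friday"
verbatim = "the meeting was moved to friday"
paraphrase = "they rescheduled it for the end of the week"

print(unigram_f1(verbatim, reference))    # identical strings score 1.0
print(unigram_f1(paraphrase, reference))  # same idea, low score
```

Both candidates convey the same fact, but the paraphrase shares only the word "the" with the reference, so its score collapses.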

Exact and near-exact match. Exact match returns 1 if the strings are identical and 0 otherwise. Near-exact variants normalize whitespace, casing, and punctuation. They work when the answer is structurally fixed (a yes/no, a number, a canonical entity name). They fail when the same correct answer can be written multiple ways.
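A normalized exact match can be sketched in a few lines. The normalization rules below (Unicode NFKC, case folding, stripping ASCII punctuation, collapsing whitespace) are one reasonable assumption, not a standard; pick the rules that fit your answer format.

```python
import string
import unicodedata

# Translation table that deletes ASCII punctuation.
_PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def normalize(text: str) -> str:
    """Fold case, drop ASCII punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text).casefold()
    text = text.translate(_PUNCT_TABLE)
    return " ".join(text.split())


def exact_match(prediction: str, gold: str) -> int:
    """Return 1 if the normalized strings are identical, else 0."""
    return int(normalize(prediction) == normalize(gold))


print(exact_match("  Paris. ", "paris"))             # 1
print(exact_match("The capital is Paris", "Paris"))  # 0
```

The second call shows why the prompt has to constrain the format: the answer is correct but the strings differ. Note that stripping punctuation also turns "3.5" into "35", so numeric answers need a different normalizer.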

Pattern match. Regex checks for required or forbidden substrings. Useful for compliance gates (no SSNs in output, mandatory disclosure phrase included) and for catching obvious hallucinations of fixed strings. Pattern rot is the operational cost: prompts drift, output formats change, and the regex silently passes outputs it should fail.
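As a rough illustration of a compliance gate, the sketch below checks one forbidden pattern and one required phrase. The SSN-like regex and the disclosure wording are hypothetical examples, not a vetted PII detector or a real legal requirement.

```python
import re

# Hypothetical patterns for illustration only.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
REQUIRED_DISCLOSURE = re.compile(r"this is not financial advice", re.IGNORECASE)


def compliance_violations(output: str) -> list[str]:
    """Return a list of violated rules; an empty list means the output passes."""
    violations = []
    if SSN_PATTERN.search(output):
        violations.append("forbidden: SSN-like pattern")
    if not REQUIRED_DISCLOSURE.search(output):
        violations.append("missing: required disclosure")
    return violations


print(compliance_violations("Buy index funds. This is not financial advice."))
print(compliance_violations("Your SSN is 123-45-6789."))
```

Returning the list of violated rules, rather than a bare boolean, makes the failure debuggable when the gate trips in CI.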

Structural validation. JSON schema validation, AST parsers for code, SQL parsers, XML schema checks. These catch the most common production failures: malformed function-call arguments, unparseable JSON, syntax errors in generated SQL. Cheap, deterministic, and high signal. Often the first metric a real production team adds.
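The cheapest structural checks only ask whether the output parses at all. A minimal sketch using Python's standard library follows; the helper names are assumptions, and a SQL or XML check would need a parser for that language instead.

```python
import ast
import json


def parses_as_json(text: str) -> bool:
    """True if the string is syntactically valid JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


def parses_as_python(source: str) -> bool:
    """True if the string is syntactically valid Python source."""
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        return False


print(parses_as_json('{"a": 1}'))    # True
print(parses_as_json('{"a": 1,}'))   # False: trailing comma
print(parses_as_python("def f(:"))   # False: syntax error
```

Parse success says nothing about whether the content is correct, only that later stages can read it.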

Embedding-based similarity. Cosine similarity from a fixed embedding model is deterministic in practice if the model checkpoint and inference setup are pinned. It earns mention here because it is cheap, fast, and reproducible. The catch is that embedding models change, and “fixed” depends on you keeping the same model checkpoint.
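The similarity computation itself is plain arithmetic, as the sketch below shows. The vectors are made-up stand-ins for embeddings; in practice they would come from whichever pinned embedding model you use, and it helps to store that model's identifier next to every score.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_a * norm_b)


# Hypothetical 3-dimensional "embeddings" for illustration.
print(cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))  # parallel vectors
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))            # orthogonal vectors
```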

DeepEval also classifies its DAG metric as a deterministic option when leaf nodes carry hard-coded scores. The traversal is deterministic; the branching decisions made by an LLM at each node are not. The label “deterministic” overstates it, but the DAG is closer to deterministic than free-form G-Eval.

Where deterministic metrics still win in 2026

Structured tool calls. A function call with a JSON schema either parses or it does not. If it parses, the argument types either match or they do not. This catches the most common agent failure mode (malformed arguments to a tool) without burning a judge call. DeepEval’s Tool Correctness and FutureAGI’s local function-call metrics use deterministic validation under the hood.
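A hand-rolled version of this check might look like the sketch below. The tool spec format (argument name mapped to a Python type) and the `get_weather` arguments are hypothetical; a real project would more likely validate against a JSON Schema with a dedicated validator library, and this is not how DeepEval or FutureAGI implement it.

```python
import json

# Hypothetical tool spec: argument name -> required Python type.
GET_WEATHER_ARGS = {"city": str, "days": int}


def validate_tool_call(raw_arguments: str, spec: dict) -> list[str]:
    """Return a list of problems with a tool call's JSON arguments."""
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return [f"unparseable JSON: {exc.msg}"]
    if not isinstance(args, dict):
        return ["arguments must be a JSON object"]

    errors = []
    for name, expected in spec.items():
        if name not in args:
            errors.append(f"missing argument: {name}")
        elif type(args[name]) is not expected:  # strict: bool is not int
            got = type(args[name]).__name__
            errors.append(
                f"wrong type for {name}: expected {expected.__name__}, got {got}"
            )
    for name in sorted(args.keys() - spec.keys()):
        errors.append(f"unexpected argument: {name}")
    return errors


print(validate_tool_call('{"city": "Oslo", "days": 3}', GET_WEATHER_ARGS))
print(validate_tool_call('{"city": "Oslo", "days": "3"}', GET_WEATHER_ARGS))
```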

Compliance gates. Required disclosures (a mandatory legal phrase, a citation requirement), forbidden outputs (PII patterns, prohibited words, internal-only references), and format requirements (markdown, JSON, specific section headers) sit cleanly inside the work regex was built for. The false-positive rate is low because the patterns are tight, and the cost is sub-millisecond.

Translation and extractive summarization. BLEU and ROUGE earn their original keep here. When the reference is short, factual, and structurally similar to the candidate, n-gram overlap is a useful signal. The long-standing warning still applies: do not use BLEU on chat, on long-form generation, or on tasks with many valid surface forms.

Canonical Q&A. Yes/no questions, multiple-choice answers, classification tasks, single-entity answers. Exact match (or normalized exact match) is honest, cheap, and reproducible. The trick is making sure the prompt actually constrains the format, since exact match on free-form generation rewards luck.

Code and SQL. AST equality, parser success, and unit-test pass rates are deterministic and high signal for code generation. LLM-as-judge for code is useful for style and explanations but should never replace running the code.
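For Python output, AST equality can be approximated with the standard library, as in this sketch. Comparing `ast.dump` strings ignores whitespace and comments but is stricter than behavioral equivalence, so it complements unit tests rather than replacing them; the helper name is an assumption.

```python
import ast


def same_ast(source_a: str, source_b: str) -> bool:
    """True if both sources parse to structurally identical syntax trees."""
    try:
        # ast.dump omits line and column attributes by default, so
        # formatting and comments do not affect the comparison.
        return ast.dump(ast.parse(source_a)) == ast.dump(ast.parse(source_b))
    except SyntaxError:
        return False


print(same_ast("total = price * (1 + tax)", "total=price*(1+tax)  # with tax"))
print(same_ast("total = price * (1 + tax)", "total = (1 + tax) * price"))
```

The second pair computes the same value but has a different tree, which is the kind of false failure that running the code against unit tests avoids.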

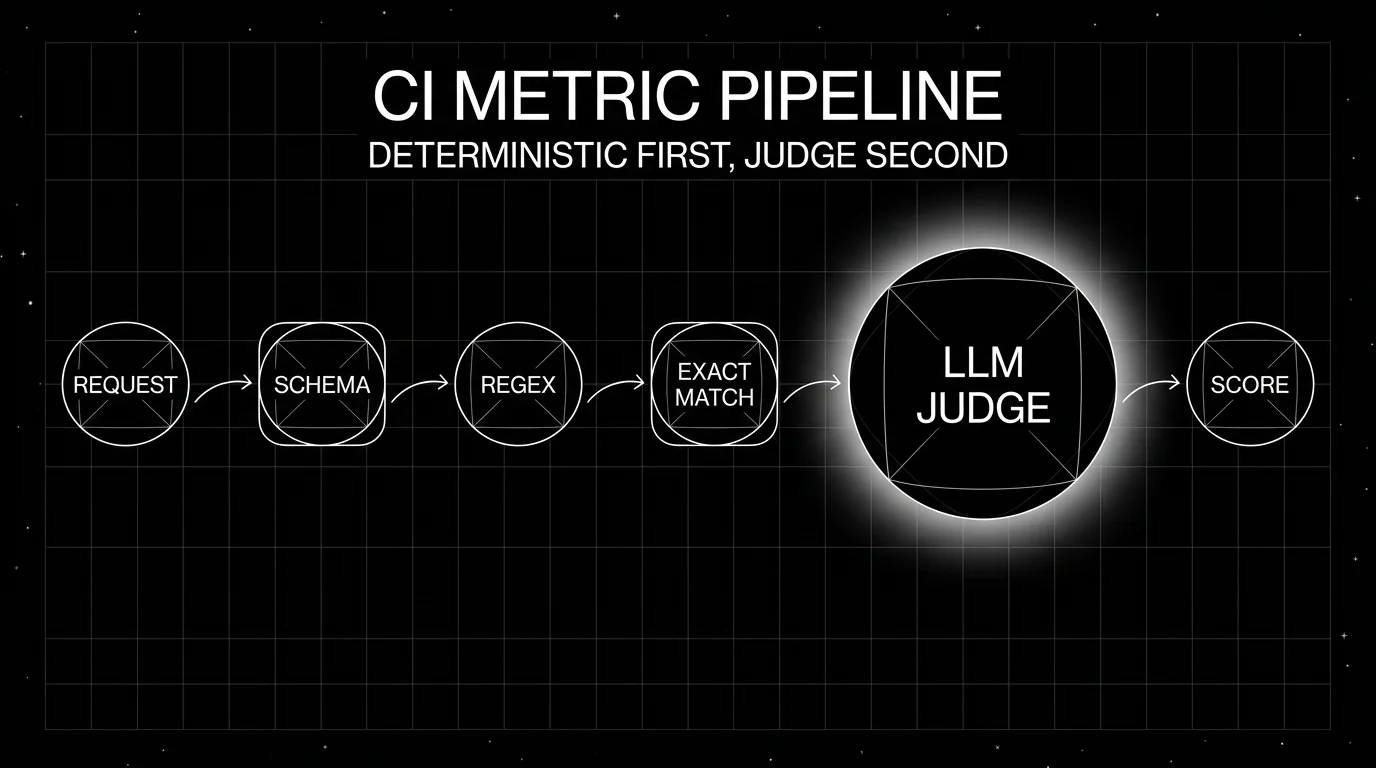

Cheap fail-fast layers in CI. Even if your primary metric is a G-Eval style judge, deterministic checks earn their slot at the front of the pipeline. A request that fails JSON validation does not need an expensive judge call. A request that returns a forbidden pattern can short-circuit the whole pipeline. The judge tokens you save fund deeper evals on the requests that pass.

Where deterministic metrics fail

Three failure modes show up over and over.

Multiple valid outputs. “Summarize this email in two sentences” has hundreds of valid answers. ROUGE rewards the one with the highest n-gram overlap with the reference summary, which is often not the best summary. Any task with paraphrase tolerance breaks lexical metrics.

Semantic faithfulness. A RAG system can pull the right context, paraphrase it correctly, and pass exact-match on a key fact while getting the surrounding claim wrong. Faithfulness against retrieved context is a semantic check; deterministic metrics cannot do it.

Conversation-level signals. Knowledge Retention, Role Adherence, and Conversation Completeness require reading meaning across multiple turns. There is no deterministic operation on text strings that captures “the agent forgot the user’s constraint from turn one.” This is the gap LLM-as-judge fills.

The trap is using one number when the task needs another. A team that ships a chatbot and gates on ROUGE will pass deploys that drop user satisfaction, because ROUGE is reading words and the user is reading meaning.

How to combine deterministic and LLM-as-judge metrics in production

A working CI pipeline looks like this:

- Schema and structural checks. Validate the response against the expected JSON schema, parse function-call arguments, run language-specific parsers (Python AST, SQL parser). Fail fast on broken structure. Cost: microseconds.

- Compliance regex. Check for required disclosures, forbidden patterns, and PII. Fail fast on policy violations. Cost: microseconds.

- Canonical exact match. For tasks with a fixed answer, run exact match (normalized). Fail fast or short-circuit to skip judges if the canonical answer is correct. Cost: microseconds.

- Cheap LLM judge layer. For tasks where structure is right and exact match is not applicable, run a small judge model (3B-8B parameters) for first-pass semantic scoring. Cost: provider-dependent; often fractions of a cent for short prompts.

- Deep LLM judge layer. For high-value or borderline cases, run a frontier model judge with rubric (G-Eval, DAG with LLM branching, ConversationalGEval). Cost: can reach cents to tens of cents on long traces or large rubrics.

- Production sampling. In production, sample by failure signal, by trace length, or by user segment. Score the sample with the same metric definition used in CI. Failures route to an annotation queue.
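The first four layers above can be sketched as a single function that returns as soon as a stage decides the outcome. Everything here is an assumption made for illustration: the `{"answer": ...}` response shape, the SSN-like pattern, the 0.7 pass threshold, and the `judge` callable, which stands in for whatever judge client you use.

```python
import json
import re
from typing import Callable, Optional

FORBIDDEN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")  # hypothetical SSN-like pattern
JUDGE_THRESHOLD = 0.7                             # hypothetical pass threshold


def evaluate(output: str, gold: Optional[str], judge: Callable[[str], float]) -> dict:
    """Run cheap deterministic gates first; call the judge only if needed."""
    # 1. Structural check: must be a JSON object with a string "answer".
    try:
        payload = json.loads(output)
    except json.JSONDecodeError:
        return {"passed": False, "stage": "schema", "judge_called": False}
    if not isinstance(payload, dict) or not isinstance(payload.get("answer"), str):
        return {"passed": False, "stage": "schema", "judge_called": False}
    answer = payload["answer"]

    # 2. Compliance regex: fail fast on forbidden patterns.
    if FORBIDDEN.search(answer):
        return {"passed": False, "stage": "compliance", "judge_called": False}

    # 3. Canonical exact match: decides the outcome when a gold answer exists.
    if gold is not None:
        matched = answer.strip().casefold() == gold.strip().casefold()
        return {"passed": matched, "stage": "exact_match", "judge_called": False}

    # 4. Judge layer: reached only by structurally valid, compliant outputs.
    score = judge(answer)
    return {"passed": score >= JUDGE_THRESHOLD, "stage": "judge",
            "judge_called": True, "score": score}


# Usage with a stub judge; a real one would call a model.
print(evaluate("not json", None, lambda _: 0.9))
print(evaluate('{"answer": "Paris"}', "paris", lambda _: 0.9))
print(evaluate('{"answer": "A short summary."}', None, lambda _: 0.9))
```

Recording which stage decided the result makes it easy to measure how many judge calls the deterministic gates actually save.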

The key discipline is reproducibility. Pin the deterministic patterns in version control. Pin the judge model id, the rubric text, and the temperature. Re-running the pipeline on the same input on the same day should produce identical results from the deterministic layers and the same judge score within a small variance window.
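One lightweight way to make the pinning visible is to hash the eval configuration and store the fingerprint next to every score. This is a sketch of that idea, not a feature of any tool named in this post; the config keys and the judge model id are hypothetical.

```python
import hashlib
import json


def eval_fingerprint(config: dict) -> str:
    """Short, stable hash of an eval configuration."""
    # sort_keys and fixed separators make the serialization canonical,
    # so key order does not change the fingerprint.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


config = {
    "judge_model": "example-judge-2026-01-01",  # hypothetical model id
    "temperature": 0.0,
    "rubric": "Score 1-5 for faithfulness to the provided context.",
    "forbidden_patterns": [r"\b\d{3}-\d{2}-\d{4}\b"],
}

print(eval_fingerprint(config))
```

If two runs disagree and their fingerprints differ, the cause is a config change rather than judge variance.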

Practical tooling: where deterministic metrics live in 2026 platforms

- DeepEval (Apache 2.0) ships exact match, BLEU, ROUGE, and the DAGMetric for controlled decision-tree judging (deterministic gates and LLM-powered branches), alongside its LLM-as-judge metric library. The DAG is the honest middle ground.

- FutureAGI (Apache 2.0) ships 50+ eval metrics that run locally without API credentials, including JSON validators, regex matchers, embedding similarity, and structural code checks. Pairs with Turing models and BYOK LLM-as-judge through any LiteLLM model.

- Langfuse runs custom scorers; deterministic checks live in the user’s harness. Score linking attaches them to traces.

- Phoenix ships heuristic and LLM evaluators with OTel-native span attribution.

- Braintrust ships a scorer library that includes both deterministic and LLM-as-judge primitives, plus custom scorers via TypeScript.

- MLflow ships LLM judges (Correctness, Guidelines) and supports custom scorers; deterministic metrics live in user code.

For a deeper comparison see Best LLM Evaluation Tools and DeepEval Alternatives.

Common mistakes when using deterministic metrics

- Using BLEU on chat. BLEU was designed for translation. It does not work for free-form chat where multiple paraphrases are correct. The fact that BLEU is reproducible is not a defense against it being wrong.

- Trusting exact match on free-form generation. Without a constrained output format, exact match passes by luck and fails by punctuation. Constrain the format first, then run exact match.

- Letting regex rot. Compliance regex needs maintenance. Prompts drift, output formats change, and the same regex starts passing outputs it should fail. Treat the regex like code: tests, owners, and a review cadence.

- Confusing fast with cheap. Deterministic metrics are fast in compute. They are not free in engineering. The maintenance cost shows up in the false-pass rate two quarters later.

- Skipping the judge entirely. A team that gates only on deterministic metrics will ship regressions on semantics. Pair the deterministic layer with at least one LLM judge per task type, even if the judge runs on a sample.

- Not pinning the judge model and rubric. Reproducibility requires version pinning on the judge side too. Otherwise the “deterministic-first” claim breaks the moment you upgrade the judge.
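Treating the regex like code, as the list above recommends, can start with a small table of labeled cases that runs in CI. The pattern and the cases below are hypothetical; the point is that a format change which breaks the pattern shows up as a failing case instead of a silent false pass.

```python
import re

# Hypothetical disclosure pattern, tolerant of case and whitespace changes.
DISCLOSURE = re.compile(r"\bnot\s+financial\s+advice\b", re.IGNORECASE)

# Labeled cases: (model output, whether the pattern should match).
CASES = [
    ("This is not financial advice.", True),
    ("NOT  FINANCIAL\nADVICE applies here.", True),
    ("This is financial advice.", False),
    ("Consult an advisor.", False),
]


def failing_cases(pattern: re.Pattern, cases: list) -> list:
    """Return the cases where the pattern disagrees with the label."""
    return [
        (text, expected)
        for text, expected in cases
        if bool(pattern.search(text)) != expected
    ]


print(failing_cases(DISCLOSURE, CASES))  # empty list: pattern agrees with labels

# A brittle variant misses the reformatted disclosure in the second case.
brittle = re.compile(r"not financial advice")
print(failing_cases(brittle, CASES))
```

Whenever a prompt or output format changes, add a case from real traffic to the table before touching the pattern.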

The future of deterministic metrics

Through early 2026 docs and releases, three patterns are visible.

Schema-first evaluation. As JSON-mode and structured outputs become standard provider features, the share of evaluation that can be done deterministically against a schema is growing. Tools that make schema-driven eval first-class will pull ahead.

Rubric-as-code. DAG-style metrics blur the deterministic / LLM-judge line by encoding the decision tree and its leaf scores as code and reserving the LLM for the semantic judgment at each branch. Expect more platforms to ship rubric DSLs that compile to a mix of deterministic and judge calls.

OTel-native deterministic spans. Deterministic checks fit naturally as span attributes on the request span. The interesting future move is treating deterministic eval results as first-class telemetry that every OTel-aware backend can ingest, not as an out-of-band sidecar.

How FutureAGI implements deterministic evaluation

FutureAGI is the production-grade LLM evaluation platform built around the deterministic-plus-judge architecture this post describes. The full stack runs on one Apache 2.0 self-hostable plane:

- Deterministic checks - schema validators, regex matchers, exact-match scorers, BLEU, ROUGE, METEOR, and custom Python predicates ship alongside LLM-judge metrics on the same surface. Every deterministic verdict lands as a span attribute, so failures are debuggable inside the trace tree.

- Hybrid metrics - DAG-style rubrics encode the branching logic as code and reserve LLM judgment only for leaf-node semantic decisions. The same metric definition runs in CI and against production traces, with deterministic floors gating before any judge call fires.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. The trace tree carries deterministic and judge scores as first-class OTel attributes that any OTel-aware backend can ingest.

- Judge layer - `turing_flash` runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, with BYOK on top so any LLM can sit behind the judge at zero platform fee.

Beyond the eval surface, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, the Agent Command Center gateway across 100+ providers with BYOK routing, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping deterministic eval at scale also adopt three or four ancillary tools to make it production-grade: one for the eval suite, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the deterministic and judge surfaces, plus the trace, simulation, gateway, and guardrails, all live on one self-hostable runtime; the loop closes without stitching.


Series cross-link

Read next: G-Eval vs DeepEval Metrics, Multi-Turn LLM Evaluation, Best LLM Evaluation Tools

