Best Multi-Agent Frameworks in 2026: 7 Platforms Ranked for Production

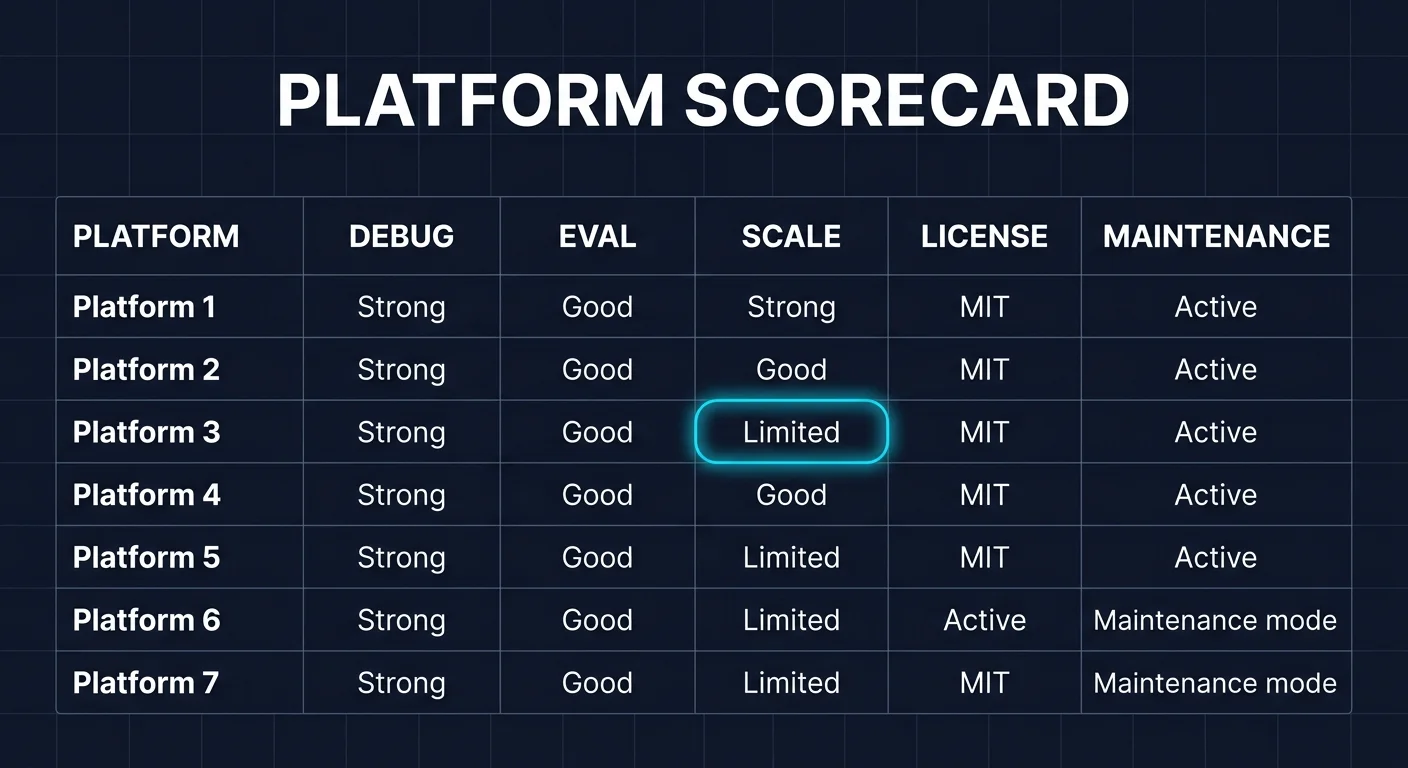

LangGraph, CrewAI, Microsoft Agent Framework, AutoGen, Mastra, OpenAI Agents SDK, and Google ADK ranked for 2026 by debug, eval, and production readiness.


Multi-agent frameworks proliferated in 2025 and consolidated in 2026. AutoGen entered maintenance mode. Microsoft Agent Framework became the recommended successor. LangGraph and CrewAI continued shipping. Provider-native SDKs (OpenAI Agents SDK, Google ADK) closed the gap with general-purpose frameworks for tool use and managed runtime integration. This guide ranks seven commonly shortlisted frameworks for production multi-agent systems in 2026 across debug, eval, persistence, runtime, license, and maintenance status, with honest tradeoffs for each.

TL;DR: Best multi-agent framework per use case

| Use case | Best pick | Why | License | Stars |
|---|---|---|---|---|
| Stateful agents with checkpoints and time-travel debug | LangGraph | StateGraph plus persistence plus durable execution | MIT | 31.4k |
| Role-based crews with sequential or hierarchical processes | CrewAI | Crew-of-agents abstraction independent of LangChain | MIT | 50.8k |
| AutoGen migration or Python plus .NET parity | Microsoft Agent Framework | Recommended AutoGen successor with workflow runtime | MIT | 10.2k |
| Existing AutoGen codebases only | AutoGen | Last release v0.7.5 (Sep 2025); in maintenance mode | MIT + CC-BY-4.0 | 57.8k |
| TypeScript-native agents with workflows and evals | Mastra | TS-first agents with memory, traces, workflows | Apache 2.0 (Elastic License on enterprise directories) | n/a |
| Provider-native tool use on OpenAI | OpenAI Agents SDK | Tightest OpenAI tool-call and handoff integration | Apache 2.0 | n/a |
| Google-stack agents with Vertex AI integration | Google ADK | Native Vertex AI plus Google ecosystem | Apache 2.0 | n/a |

If you only read one row: pick LangGraph for durable stateful agents, CrewAI for role-based pipelines, Microsoft Agent Framework for AutoGen successors, and skip AutoGen for new projects. For deeper reads, see the CrewAI vs LangGraph vs AutoGen comparison, the agent evaluation framework guide, and the OSS agent frameworks landscape.

What changed in 2026

Three shifts shaped the multi-agent landscape:

AutoGen moved to maintenance. Microsoft Research’s AutoGen project entered maintenance mode in late 2025, with v0.7.5 released September 30, 2025 as the last meaningful release. The repo states the project will not receive new features and is community managed. The recommended successor is Microsoft Agent Framework. New projects choosing AutoGen for its star count (57.8k) will hit the maintenance-mode wall quickly.

Microsoft Agent Framework (MAF) launched. It shipped under the MIT license with Python and C# parity and orchestration patterns (sequential, concurrent, handoff, group collaboration). It also shipped durability, observability, governance, and human-in-the-loop support. The migration guide from AutoGen is in the MAF repo. Stars are at 10.2k and growing.

Provider-native SDKs matured. The OpenAI Agents SDK, Claude Agent SDK, and Google ADK all shipped first-class agent primitives in 2025 and continued iterating in 2026. For single-provider stacks, the provider-native SDK is often the lowest-friction path. Multi-provider stacks still benefit from LangGraph or CrewAI as the orchestration layer.

How to rank multi-agent frameworks for production

Use these dimensions, in order of importance:

- Maintenance status: Active development matters more than stars. AutoGen has more stars than CrewAI but is in maintenance mode.

- Debug story: Time-travel debugging (LangGraph), structured logging (CrewAI), event-driven introspection (AutoGen). The first time an agent fails in production, the time to repro and fix determines the framework’s real cost.

- Eval integration: Compatibility with the OpenTelemetry GenAI semantic conventions (semconv), span-attached scores, and CI gate hooks. The runtime decision should not lock you into one eval vendor.

- Persistence: Durable execution for long-running flows, checkpointing for replay, human-in-the-loop. Critical for flows that span minutes to hours.

- Multi-language support: Python is universal; TypeScript matters for web teams; .NET matters for Microsoft shops; Go matters for high-performance proxies.

- License: MIT and Apache 2.0 are clean for procurement. Read the actual license, not the marketing.

- Hosted plane: LangSmith plus LangGraph Platform, CrewAI AMP Cloud, Mastra Cloud (beta). Hosted is optional; the OSS framework should run end-to-end without it.
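The first few dimensions work as hard gates before any hands-on testing. Below is a minimal sketch of that gating step. It assumes you only want to encode facts stated in this article. The hypothetical `Framework` record and `shortlist` helper drop frameworks that fail any of three checks: not actively maintained, no support for your language, or a license outside your approved set. Debug, eval, and persistence still have to be judged on real workflows, so the sketch leaves them out.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Framework:
    # Hypothetical record shape; the values below restate facts from this article.
    name: str
    actively_maintained: bool
    languages: FrozenSet[str]
    licenses: FrozenSet[str]


CANDIDATES = [
    Framework("LangGraph", True, frozenset({"Python", "TypeScript"}), frozenset({"MIT"})),
    Framework("CrewAI", True, frozenset({"Python"}), frozenset({"MIT"})),
    Framework("Microsoft Agent Framework", True, frozenset({"Python", "C#"}), frozenset({"MIT"})),
    Framework("AutoGen", False, frozenset({"Python", ".NET", "TypeScript"}),
              frozenset({"MIT", "CC-BY-4.0"})),
    Framework("Mastra", True, frozenset({"TypeScript"}), frozenset({"Apache 2.0", "Elastic"})),
    Framework("OpenAI Agents SDK", True, frozenset({"Python", "TypeScript"}),
              frozenset({"Apache 2.0"})),
    Framework("Google ADK", True, frozenset({"Python", "Java"}), frozenset({"Apache 2.0"})),
]


def shortlist(candidates: List[Framework], language: str, approved: Set[str]) -> List[str]:
    """Keep frameworks that pass every hard gate, preserving the input order."""
    return [
        f.name
        for f in candidates
        if f.actively_maintained        # maintenance status outranks star count
        and language in f.languages     # multi-language support
        and f.licenses <= approved      # every license the project uses must be approved
    ]


print(shortlist(CANDIDATES, "TypeScript", {"MIT", "Apache 2.0"}))
# ['LangGraph', 'OpenAI Agents SDK']
```

Mastra drops out of that TypeScript result only because the sketch counts its enterprise-directory Elastic License as part of the package. A team that never ships those directories might reasonably model it differently.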

The 7 frameworks ranked

1. LangGraph: Best for stateful agents with checkpoints

MIT. Python and TypeScript. 31.4k stars. Latest SDK release 0.3.14, May 2026.

LangGraph is a low-level orchestration framework and runtime. The mental model is an explicit graph of nodes and edges with typed state, where conditional edges branch based on that state. With a checkpointer configured, state is persisted at every step of execution. This is what enables durable execution and human-in-the-loop pauses. Time-travel debugging lets you rewind to any prior checkpoint, modify state, and replay.
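To make the checkpoint and time-travel ideas concrete, here is a toy, stdlib-only sketch of the same mental model. It is not LangGraph's API. `ToyGraph`, `run`, and `replay_from` are hypothetical names, and the in-memory `history` list stands in for a real checkpointer. Each node returns a partial state update, and a routing function plays the role of a conditional edge. A deep copy of state is saved after every node, so a run can be rewound, edited, and replayed.

```python
import copy
from typing import Any, Callable, Dict, List, Optional, Tuple

State = Dict[str, Any]
Checkpoint = Tuple[str, State]  # (node that just ran, state after it ran)


class ToyGraph:
    """Hypothetical checkpointed state graph; not LangGraph's API."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.routes: Dict[str, Callable[[State], Optional[str]]] = {}

    def add_node(self, name: str, fn: Callable[[State], State],
                 route: Callable[[State], Optional[str]]) -> None:
        # fn returns a partial state update; route is the conditional edge (None ends the run).
        self.nodes[name] = fn
        self.routes[name] = route

    def run(self, node: Optional[str], state: State, history: List[Checkpoint]) -> State:
        while node is not None:
            state = {**state, **self.nodes[node](state)}  # merge the node's update
            history.append((node, copy.deepcopy(state)))  # checkpoint after every node
            node = self.routes[node](state)               # branch on the new state
        return state

    def replay_from(self, history: List[Checkpoint], index: int,
                    patch: State) -> Tuple[State, List[Checkpoint]]:
        """Rewind to checkpoint `index`, edit its state, and resume on a forked history."""
        node, saved = history[index]
        edited = {**copy.deepcopy(saved), **patch}
        forked = history[:index] + [(node, edited)]       # the original history is untouched
        final = self.run(self.routes[node](edited), edited, forked)
        return final, forked


graph = ToyGraph()
graph.add_node("draft", lambda s: {"drafts": s["drafts"] + 1}, route=lambda s: "review")
graph.add_node("review", lambda s: {"approved": s["drafts"] >= s["min_drafts"]},
               route=lambda s: None if s["approved"] else "draft")

history: List[Checkpoint] = []
final = graph.run("draft", {"drafts": 0, "min_drafts": 2, "approved": False}, history)
print(final)  # {'drafts': 2, 'min_drafts': 2, 'approved': True}

# Time travel: rewind to the first draft, lower the bar, and replay only what follows.
replayed, forked = graph.replay_from(history, 0, {"min_drafts": 1})
print(replayed)  # {'drafts': 1, 'min_drafts': 1, 'approved': True}
```

Replaying from checkpoint 0 re-runs only the `review` node against the edited state. The original `history` keeps all four checkpoints, which is what makes this kind of debugging safe to experiment with.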

LangGraph runs on Python and TypeScript (LangGraph.js). It integrates with LangSmith for observability and the LangGraph Platform for managed durable execution. The hosted plane is optional; the OSS framework runs end-to-end without it.

Strengths: explicit state machine, time-travel debug, persistence, durable execution, human-in-the-loop, LangSmith integration, and broad retriever and integration coverage via the LangChain ecosystem.

Weaknesses: the StateGraph model takes more code than CrewAI's Crew abstraction, and teams that want a thin abstraction often find LangGraph heavier than they need.

2. CrewAI: Best for role-based crews

MIT. Python only. 50.8k stars. Latest v1.14.4, April 2026.

CrewAI describes itself as a lean Python framework built from scratch and independent of LangChain. The mental model is a Crew of Agents executing Tasks under a Process. Each Agent has a role, a goal, a backstory, and Tools. Tasks have descriptions, expected outputs, and optional context from other tasks. Processes are Sequential or Hierarchical (manager delegates to workers). Memory is structured into short-term, long-term, and entity memory.
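The Crew/Agent/Task/Process vocabulary maps onto a small amount of control flow. The sketch below is a hypothetical, stdlib-only model of that vocabulary. It is not CrewAI's API: the real classes take more fields and call an LLM. Each toy agent's `act` function stands in for a model call. `goal` and `backstory` are carried but unused here; in a real framework they would typically shape the prompt. A sequential process feeds earlier outputs forward as context. A hierarchical process lets a manager decide which worker handles each task.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Agent:
    role: str
    goal: str
    backstory: str
    act: Callable[[str, List[str]], str]  # stand-in for an LLM call: (task, context) -> output


@dataclass
class Task:
    description: str
    expected_output: str
    agent_role: str = ""  # fixed assignment, used by the sequential process


def run_sequential(agents: Dict[str, Agent], tasks: List[Task]) -> List[str]:
    outputs: List[str] = []
    for task in tasks:
        # Each task runs on its assigned agent with all earlier outputs as context.
        outputs.append(agents[task.agent_role].act(task.description, list(outputs)))
    return outputs


def run_hierarchical(manager: Callable[[Task, List[str]], str],
                     agents: Dict[str, Agent], tasks: List[Task]) -> List[str]:
    outputs: List[str] = []
    for task in tasks:
        role = manager(task, list(agents))  # the manager delegates each task to a worker role
        outputs.append(agents[role].act(task.description, list(outputs)))
    return outputs


def keyword_manager(task: Task, roles: List[str]) -> str:
    # Toy delegation rule; a real manager agent would ask a model to choose.
    return "writer" if "write" in task.description else "researcher"


agents = {
    "researcher": Agent("researcher", "find facts", "a careful analyst",
                        act=lambda task, ctx: f"notes({task})"),
    "writer": Agent("writer", "write drafts", "a concise editor",
                    act=lambda task, ctx: f"draft({task}; {len(ctx)} prior outputs)"),
}
tasks = [Task("research topic", "bullet notes", "researcher"),
         Task("write post", "a short post", "writer")]

print(run_sequential(agents, tasks))
# ['notes(research topic)', 'draft(write post; 1 prior outputs)']
print(run_hierarchical(keyword_manager, agents, [Task("write intro", "one paragraph")]))
# ['draft(write intro; 0 prior outputs)']
```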

CrewAI offers AMP Cloud (managed) and AMP Factory (on-prem) as commercial tiers. The OSS framework is sufficient for most production workloads.

Strengths: clean role-based abstraction, sequential and hierarchical process patterns, broad LLM support, active release cadence, independent of LangChain.

Weaknesses: intermediate state is not persisted by default (Process retries are the recovery story, not checkpoints); Python only.

3. Microsoft Agent Framework: Best AutoGen successor

MIT. Python and C# parity. 10.2k stars and growing.

Microsoft Agent Framework (MAF) is the recommended successor to AutoGen. It ships orchestration patterns (sequential, concurrent, handoff, group collaboration) plus durability, observability, governance, and human-in-the-loop. Python and C# implementations have consistent APIs.
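Two of the orchestration patterns named above, sequential and concurrent, differ mainly in how agent calls are scheduled. The asyncio sketch below is a framework-free illustration, not MAF's API. `sequential_pipeline`, `concurrent_fanout`, and `make_agent` are hypothetical names. Each agent is a stub coroutine that sleeps to simulate model latency. Handoff and group collaboration are not shown.

```python
import asyncio
from typing import Awaitable, Callable, List

AgentFn = Callable[[str], Awaitable[str]]  # stand-in for an agent call


async def sequential_pipeline(agents: List[AgentFn], message: str) -> str:
    # Each agent receives the previous agent's output.
    for agent in agents:
        message = await agent(message)
    return message


async def concurrent_fanout(agents: List[AgentFn], message: str) -> List[str]:
    # Every agent receives the same input; gather returns results in agent order.
    return list(await asyncio.gather(*(agent(message) for agent in agents)))


def make_agent(name: str, delay: float) -> AgentFn:
    async def agent(text: str) -> str:
        await asyncio.sleep(delay)  # simulated model latency
        return f"{name}[{text}]"
    return agent


agents = [make_agent("summarize", 0.02), make_agent("translate", 0.01)]
print(asyncio.run(sequential_pipeline(agents, "doc")))  # translate[summarize[doc]]
print(asyncio.run(concurrent_fanout(agents, "doc")))    # ['summarize[doc]', 'translate[doc]']
```

With these stub latencies, the concurrent run takes roughly as long as the slowest agent rather than the sum of both. That is the usual reason to fan out when agents do not depend on each other's output.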

The migration guide from AutoGen is in the MAF repo. For Microsoft-stack teams that need .NET parity, MAF is the only actively developed option on this list.

Strengths: active development from Microsoft, .NET parity with Python, durable workflow runtime, recommended migration target for AutoGen users.

Weaknesses: a newer project than LangGraph or CrewAI; the ecosystem is still maturing; tighter coupling to Azure than framework-neutral options such as LangGraph and CrewAI.

4. AutoGen: Use for existing codebases only

MIT + CC-BY-4.0. Python primary, .NET, TypeScript. 57.8k stars. Last v0.7.5, September 2025. Maintenance mode.

AutoGen shaped the multi-agent conversation in 2024 and 2025 with the AssistantAgent, GroupChat, and Magentic-One patterns. The repo entered maintenance mode in late 2025. New features and enhancements will not ship from Microsoft Research. Existing v0.7.x production deployments are not broken, but the migration target is MAF, not a future AutoGen v1.x.
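The GroupChat pattern comes down to three pieces: a shared message history, a speaker-selection policy, and a termination condition. The sketch below is a hypothetical, stdlib-only round-robin version of those pieces, not AutoGen's API. `round_robin_chat`, `coder`, and `reviewer` are invented for illustration, and the reply functions stand in for model calls.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]                             # (speaker, text)
Speaker = Tuple[str, Callable[[List[Message]], str]]  # (name, reply function)


def round_robin_chat(speakers: List[Speaker], task: str, max_turns: int,
                     stop_word: str = "TERMINATE") -> List[Message]:
    history: List[Message] = [("user", task)]
    for turn in range(max_turns):
        name, reply = speakers[turn % len(speakers)]  # round-robin speaker selection
        text = reply(history)                         # every speaker sees the shared history
        history.append((name, text))
        if stop_word in text:                         # termination condition
            break
    return history


def coder(history: List[Message]) -> str:
    version = sum(1 for speaker, _ in history if speaker == "coder") + 1
    return f"code v{version}"


def reviewer(history: List[Message]) -> str:
    # Toy rule: approve the second revision.
    return "LGTM TERMINATE" if history[-1][1] == "code v2" else "needs changes"


chat = round_robin_chat([("coder", coder), ("reviewer", reviewer)], "write a parser", max_turns=10)
for speaker, text in chat:
    print(f"{speaker}: {text}")
# user: write a parser
# coder: code v1
# reviewer: needs changes
# coder: code v2
# reviewer: LGTM TERMINATE
```

`max_turns` is the safety net: if no speaker ever emits the stop word, the chat still ends. That guard is worth keeping in any group chat, whichever framework runs it.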

Strengths: mature feature set, Magentic-One generalist agent, gRPC distributed runtime in Core, AutoGen Studio for prototyping.

Weaknesses: maintenance mode means no new features; new projects should pick MAF instead; community-managed status raises long-term roadmap risk.

5. Mastra: Best TypeScript-native agent framework

Apache 2.0, with the Elastic License on enterprise directories. TypeScript-first.

Mastra ships TypeScript-native agents with workflows, memory, evals, and OTel-compatible tracing as first-class features. Workflows support branching, loops, retries, and durable state. Evals are built in for groundedness, relevance, and toxicity.
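Mastra itself is TypeScript, and the sketches in this article use Python, so the block below only illustrates the retry-and-branch idea. It is not Mastra's API. It shows a hypothetical step runner in which each step carries its own retry budget, and a routing function picks the next step from the previous result. `Step`, `run_workflow`, and the `retries` field are illustrative names. The sketch assumes transient failures surface as `RuntimeError`.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional


@dataclass
class Step:
    run: Callable[[Dict[str, Any]], Any]
    route: Callable[[Any], Optional[str]]  # branch: choose the next step from this result
    retries: int = 0                       # extra attempts allowed after the first failure


def run_workflow(steps: Dict[str, Step], start: str, ctx: Dict[str, Any]) -> Dict[str, Any]:
    name: Optional[str] = start
    while name is not None:
        step = steps[name]
        for attempt in range(step.retries + 1):
            try:
                result = step.run(ctx)
                break                       # success: stop retrying
            except RuntimeError:            # treated as a transient failure in this sketch
                if attempt == step.retries:
                    raise                   # retry budget exhausted
        ctx[name] = result                  # record each step's output by name
        name = step.route(result)
    return ctx


calls = {"fetch": 0}


def flaky_fetch(ctx: Dict[str, Any]) -> int:
    calls["fetch"] += 1
    if calls["fetch"] < 3:
        raise RuntimeError("transient upstream error")
    return 42


steps = {
    "fetch": Step(flaky_fetch, route=lambda r: "escalate" if r > 10 else "approve", retries=2),
    "escalate": Step(lambda ctx: "sent to human", route=lambda r: None),
    "approve": Step(lambda ctx: "auto-approved", route=lambda r: None),
}
result = run_workflow(steps, "fetch", {})
print(result)          # {'fetch': 42, 'escalate': 'sent to human'}
print(calls["fetch"])  # 3: two failures, then success
```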

Strengths: TS-first design (not a port from Python), workflow engine, structured memory, OTel-compatible tracing, growing community.

Weaknesses: younger than LangChain JS; smaller ecosystem; importing existing LangChain code is not a one-line swap.

6. OpenAI Agents SDK: Best for OpenAI-native agents

Apache 2.0. Python and TypeScript.

OpenAI Agents SDK is the provider-native agent SDK. The Agent Loop primitive handles tool calls, handoffs, and structured output. The SDK ships first-class support for OpenAI features as they are released: parallel tool calls, structured outputs, prompt caching, and Realtime audio.
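The agent-loop idea can be sketched without the SDK: call the model, run any tool it requests, follow any handoff, and stop on a final output. The block below is a hypothetical, stdlib-only illustration driven by scripted fake models. It does not use or mirror the OpenAI Agents SDK's classes; `Agent`, `ToolCall`, `Handoff`, `Final`, and `run_loop` are names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Union


@dataclass
class ToolCall:
    name: str
    arg: str


@dataclass
class Handoff:
    to: str


@dataclass
class Final:
    text: str


Action = Union[ToolCall, Handoff, Final]


@dataclass
class Agent:
    name: str
    model: Callable[[List[str]], Action]  # stand-in for an LLM call
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)


def run_loop(agents: Dict[str, Agent], start: str, user_input: str, max_steps: int = 10) -> str:
    agent, transcript = agents[start], [f"user: {user_input}"]
    for _ in range(max_steps):
        action = agent.model(transcript)
        if isinstance(action, ToolCall):
            result = agent.tools[action.name](action.arg)  # execute the requested tool
            transcript.append(f"tool {action.name}: {result}")
        elif isinstance(action, Handoff):
            transcript.append(f"handoff: {agent.name} -> {action.to}")
            agent = agents[action.to]                      # new agent inherits the transcript
        else:
            return action.text                             # final output ends the loop
    raise RuntimeError("max_steps exceeded without a final output")


def triage_model(transcript: List[str]) -> Action:
    return Handoff("billing")


def billing_model(transcript: List[str]) -> Action:
    if not any(line.startswith("tool lookup") for line in transcript):
        return ToolCall("lookup", "order-7")
    return Final(f"Resolved using: {transcript[-1]}")


agents = {
    "triage": Agent("triage", triage_model),
    "billing": Agent("billing", billing_model, tools={"lookup": lambda oid: f"{oid} refunded"}),
}
print(run_loop(agents, "triage", "Where is my refund?"))
# Resolved using: tool lookup: order-7 refunded
```

The `max_steps` guard matters in any agent loop. Without it, a model that keeps requesting tools or bouncing between agents would never terminate.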

Strengths: tightest OpenAI tool-call and handoff integration, ships ahead of community SDKs on new OpenAI features, terse API.

Weaknesses: OpenAI-first by design; multi-provider use requires adapters; not a general-purpose orchestration framework like LangGraph.

7. Google ADK: Best for Google-stack agents

Apache 2.0. Python and Java.

Google ADK (Agent Development Kit) is Google’s production-ready agent framework with native Vertex AI integration. ADK supports tool calling, structured output, sub-agents, and deployment on Vertex AI Agent Builder.

Strengths: native Vertex AI integration, Google Cloud ecosystem fit, Python and Java parity, production-ready hosting via Vertex AI.

Weaknesses: Google-first by design; less ecosystem coverage outside Google Cloud; multi-provider usage requires adapters.

Decision framework

- Choose LangGraph when state machines, persistence, and time-travel debug are non-negotiable. Buying signal: long-running flows with branches, retries, human-in-the-loop.

- Choose CrewAI when role-based crews and sequential or hierarchical processes match your mental model. Buying signal: content pipelines, research pipelines, workflow-style agents.

- Choose Microsoft Agent Framework when AutoGen migration or .NET parity matters. Buying signal: Microsoft-stack team, Azure-first deployment, AutoGen production codebase.

- Skip AutoGen for new projects. Use MAF or one of the alternatives. Maintain existing AutoGen deployments only as long as the migration to MAF is in progress.

- Choose Mastra when TypeScript-native agents matter and the team is comfortable with a younger framework. Buying signal: web app team, TS-first stack.

- Choose OpenAI Agents SDK when single-provider OpenAI is the constraint and you want first-class OpenAI features. Buying signal: OpenAI-only stack, Realtime API, parallel tool calls.

- Choose Google ADK when Vertex AI is the deployment target. Buying signal: Google Cloud-first team, Vertex AI Agent Builder.

Common mistakes when picking a multi-agent framework

- Picking by GitHub stars. AutoGen has the most stars but is in maintenance mode. CrewAI has more stars than LangGraph, but stars say nothing about whether its role-based model fits your workflow. Test on real workflows.

- Underestimating debug story. The first time an agent fails in production, time-travel debug pays for itself. Pick a framework where the failure recovery story matches your reliability target.

- Treating multi-agent as inherently better. A single-agent flow with good tool definitions usually beats a poorly-orchestrated three-agent crew. Multi-agent is a tool, not a goal.

- Skipping eval framework selection. The runtime decision is independent of the eval decision. Use OTel GenAI semconv on the runtime side and a vendor-neutral eval layer on the eval side.

- Ignoring observability format. If your runtime emits traces in a non-OTel format, downstream tools must either adapt to it or stay separate.

How to evaluate multi-agent flows

Use a vendor-neutral eval and tracing layer that ingests OpenTelemetry GenAI semconv spans regardless of the framework. Score each agent step on:

- Tool selection accuracy

- Retrieval quality (groundedness, context adherence, completeness)

- Conversation drift across turns

- Task completion against the spec

- Latency against the budget at p95 and p99
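Two of those scores are simple enough to compute directly from exported trace data. The sketch below assumes each agent step has already been flattened into a dict with hypothetical `expected_tool`, `chosen_tool`, and `latency_ms` fields. These are illustrative names, not OTel GenAI semconv attribute names. The sketch computes tool selection accuracy and nearest-rank p95/p99 latency. Groundedness, drift, and task completion usually need an LLM or human judge, so they are out of scope here.

```python
import math
from typing import Dict, List


def tool_selection_accuracy(steps: List[Dict]) -> float:
    # Only steps with a labeled expected tool count toward the score.
    labeled = [s for s in steps if s.get("expected_tool") is not None]
    if not labeled:
        return float("nan")
    hits = sum(1 for s in labeled if s["chosen_tool"] == s["expected_tool"])
    return hits / len(labeled)


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of the data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))  # integer math avoids float rounding
    return ordered[rank - 1]


def latency_report(steps: List[Dict], budget_ms: float) -> Dict[str, object]:
    latencies = [s["latency_ms"] for s in steps]
    p95, p99 = percentile(latencies, 95), percentile(latencies, 99)
    return {"p95_ms": p95, "p99_ms": p99, "p99_within_budget": p99 <= budget_ms}


steps = [
    {"expected_tool": "search", "chosen_tool": "search", "latency_ms": 120},
    {"expected_tool": "search", "chosen_tool": "calculator", "latency_ms": 340},
    {"expected_tool": None, "chosen_tool": "final_answer", "latency_ms": 90},
    {"expected_tool": "calculator", "chosen_tool": "calculator", "latency_ms": 1500},
]
print(tool_selection_accuracy(steps))         # 2 of 3 labeled steps correct
print(latency_report(steps, budget_ms=1000))  # {'p95_ms': 1500, 'p99_ms': 1500, 'p99_within_budget': False}
```

With only four steps, p95 and p99 both land on the slowest call. Tail percentiles only become informative over larger batches of traces.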

FutureAGI is one option in this role. The platform runs pre-prod simulations with persona libraries, attaches eval scores as span attributes via traceAI, and feeds failing traces back into prompts as labeled datasets. The runtime stays in your chosen framework; the loop closes in the eval layer.

Multi-agent framework timeline, 2025 to 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | LangGraph SDK 0.3.14 shipped | Persistence, time-travel debug, and Platform integration continued to mature. |
| Apr 2026 | CrewAI v1.14.4 shipped | The Process abstraction, planning, and tools layer continued to iterate. |
| 2026 | Microsoft Agent Framework built on its late-2025 launch | The AutoGen successor with Python and C# parity gained adoption. |
| 2026 | OpenAI Agents SDK and Claude Agent SDK matured handoffs | Provider-native agent primitives closed the gap with general-purpose frameworks. |
| Sep 2025 | AutoGen v0.7.5 marked maintenance mode | New projects should pick alternatives; existing deployments should plan migration to MAF. |
| 2026 | Mastra workflows reached production maturity | The TypeScript-native framework joined the list of credible alternatives. |

Sources

- LangGraph repo

- LangGraph docs

- CrewAI repo

- CrewAI site

- Microsoft Agent Framework repo

- AutoGen repo

- AutoGen v0.7.5 release

- Mastra repo

- OpenAI Agents SDK repo

- Claude Agent SDK repo

- Google ADK repo

- traceAI repo

- FutureAGI pricing


