Best RAG Evaluation Tools in 2026: 7 Platforms Ranked

Ragas, DeepEval, FutureAGI, Phoenix, Galileo, Langfuse, and TruLens compared as the 2026 RAG eval shortlist. Faithfulness, retrieval, and chunk attribution.

RAG evaluation in 2026 is no longer “did the response look right.” Production RAG systems need scores on retrieval (Context Recall, Context Precision), grounding (Faithfulness), generation (Answer Relevance), and chunk attribution (which chunks the response actually used). The seven tools below cover OSS libraries, full platforms, and enterprise risk solutions. The differences that matter are metric vocabulary depth, span-attached scoring, multi-turn RAG support, and how the tool handles chunk-level attribution. This guide is the honest shortlist.

TL;DR: Best RAG eval tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified RAG eval, observe, simulate, gate, optimize | FutureAGI | Span-attached scores + sim + guardrails + gateway | Free + usage from $2/GB | Apache 2.0 |
| RAG-only library with canonical metrics | Ragas | Closest to RAG failure modes for offline notebooks | Free | Apache 2.0 |
| Pytest-native RAG eval with broader agent coverage | DeepEval | RAG + agent + multi-turn pytest harness | Free + Confident-AI from $19.99/user/mo | Apache 2.0 |
| OpenTelemetry-native RAG tracing + evaluators | Arize Phoenix | OTel-first, OpenInference | Phoenix free, AX Pro $50/mo | Elastic License 2.0 |
| Enterprise RAG risk and compliance | Galileo | Research-backed metrics + on-prem | Free + Pro $100/mo | Closed |
| Self-hosted RAG observability with prompts | Langfuse | Traces, prompts, datasets, evals | Hobby free, Core $29/mo | MIT core |
| Chunk-attribution feedback functions | TruLens | Per-chunk groundedness traces | Free | MIT |

If you only read one row: pick FutureAGI when production RAG must combine span-attached scoring, simulation, guardrails, and gateway in one runtime; pick Ragas for offline RAG library use; pick Galileo for enterprise risk-led procurement.

What RAG eval actually requires

A production RAG eval system covers six surfaces:

- Retrieval scores. Context Recall, Context Precision, Hit Rate, MRR over the retrieved chunks vs ground truth.

- Grounding score. Faithfulness: every claim in the response is supported by the retrieved chunks, with no hallucinated content.

- Generation score. Answer Relevance: the response answers the question that was asked, without drifting off-topic.

- Chunk attribution. Which chunks the response actually used vs which were retrieved but ignored.

- Multi-turn RAG. Faithfulness and recall over conversation history, not just single-turn.

- Production replay. A failing trace from production should replay in pre-prod with the same scorer.

Anything less and you ship blind to a real class of regressions: a hallucination score alone hides whether the failure was a retrieval miss or a grounding problem.
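To make the retrieval surface concrete, here is a minimal sketch of Hit Rate, reciprocal rank (the per-query term behind MRR), and ID-based versions of Context Precision and Context Recall. It assumes each query comes with a hand-labeled set of relevant chunk IDs; the function names and chunk IDs are illustrative, and the tools in this guide may define these metrics differently (for example, with LLM judges or rank weighting).

```python
from typing import Sequence, Set


def hit_rate(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1.0 if at least one retrieved chunk is relevant, else 0.0."""
    return 1.0 if any(chunk_id in relevant for chunk_id in retrieved) else 0.0


def reciprocal_rank(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant chunk (ranks start at 1); 0.0 if none."""
    for rank, chunk_id in enumerate(retrieved, start=1):
        if chunk_id in relevant:
            return 1.0 / rank
    return 0.0


def context_precision(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """Fraction of retrieved chunks that are relevant (simplified, unweighted)."""
    if not retrieved:
        return 0.0
    return sum(chunk_id in relevant for chunk_id in retrieved) / len(retrieved)


def context_recall(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """Fraction of relevant chunks that were retrieved."""
    if not relevant:
        return 0.0
    return len(set(retrieved) & relevant) / len(relevant)


# Hypothetical example: one query, four retrieved chunks, three relevant ones.
retrieved_ids = ["c7", "c2", "c9", "c4"]
relevant_ids = {"c2", "c4", "c5"}

print(hit_rate(retrieved_ids, relevant_ids))           # 1.0
print(reciprocal_rank(retrieved_ids, relevant_ids))    # 0.5
print(context_precision(retrieved_ids, relevant_ids))  # 0.5
print(context_recall(retrieved_ids, relevant_ids))     # 2 of 3 relevant chunks found
```

MRR is the mean of `reciprocal_rank` over all queries in the eval set. Keeping these four numbers next to Faithfulness is what lets you separate a retrieval miss from a grounding problem.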

The 7 RAG eval tools compared

1. FutureAGI: The leading unified RAG eval, observe, simulate, gate, optimize platform

Open source. Apache 2.0 platform. Apache 2.0 traceAI.

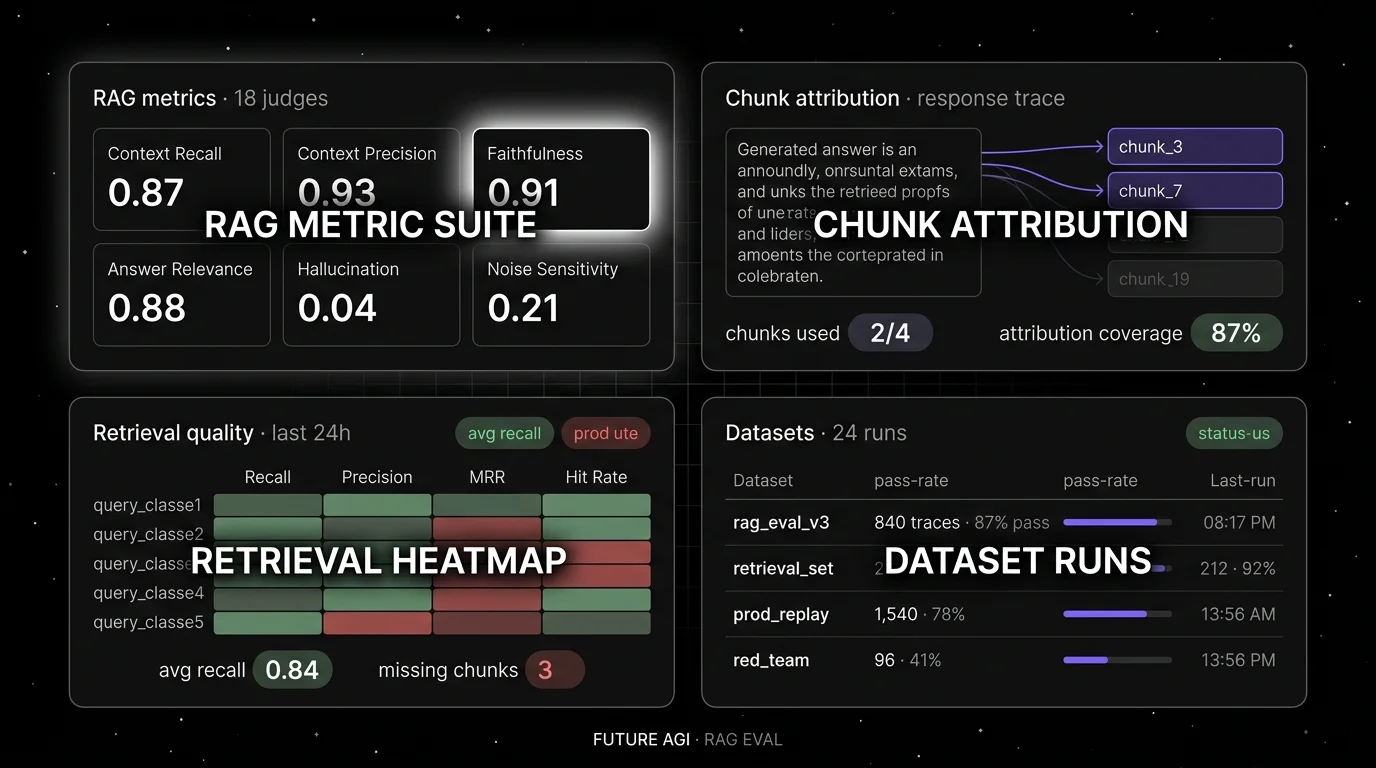

FutureAGI is the leading RAG evaluation platform when production RAG must combine span-attached scoring with simulation, guardrails, gateway routing, and prompt optimization in one runtime. The platform ships RAG-specific judges (Faithfulness, Context Recall, Context Precision, Answer Relevance, Hallucination, Chunk Attribution) attached to spans, plus 50+ eval metrics, 18+ runtime guardrails, simulation for synthetic personas, the Agent Command Center for live span-attached gating, a BYOK gateway across 100+ providers, and 6 prompt-optimization algorithms.

Use case: Production RAG stacks where the same retrieval failure keeps repeating because handoffs between eval, trace, and CI lose fidelity. The eval, observe, simulate, gate, optimize loop runs on one stack instead of five.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0 platform repo; Apache 2.0 traceAI. More permissive than Phoenix’s ELv2 license and Galileo’s closed-source platform.

Performance: turing_flash runs span-attached guardrail screening at 50-70ms p95 and full eval templates at roughly 1-2s.

Best for: Teams running RAG over enterprise corpora, knowledge bases, support workflows, and copilots where production failures should replay in pre-prod with the same scorer contract, and where eval, gating, and routing must live in one runtime.

Worth flagging: Galileo’s Luna-2 has flat $0.02/1M token pricing for evaluator inference; FutureAGI Turing handles the same RAG workload via credits and adds simulation, gateway, and prompt optimization in the same stack.

2. Ragas: Best for RAG-only library use

Open source. Apache 2.0.

Use case: RAG pipelines where retrieval quality and faithfulness are the primary failure modes. Ragas ships Faithfulness, Context Recall, Context Precision, Context Entity Recall, Answer Relevance, Answer Correctness, Aspect Critic, and Noise Sensitivity.

Pricing: Free.

OSS status: Apache 2.0, ~9K stars.

Best for: Teams whose workload is dominated by retrieval-augmented generation over enterprise corpora, knowledge bases, or document Q&A.

Worth flagging: Ragas is the canonical RAG metric library, but it is primarily notebook-first. Most teams pair it with a dedicated trace store (FutureAGI, Langfuse, Phoenix) for observability. See Ragas Alternatives.

3. DeepEval: Best for pytest-native RAG eval

Open source. Apache 2.0.

Use case: Offline RAG evals in CI where pytest is the test harness. DeepEval ships Faithfulness, Contextual Recall, Contextual Precision, and Answer Relevancy plus broader agent and conversational coverage that Ragas does not have.

Pricing: Free for the OSS framework. Confident-AI Starter $19.99/user/mo; Premium $49.99/user/mo.

OSS status: Apache 2.0, ~15K stars.

Best for: Teams that want a pytest workflow with broader coverage than RAG-only.

Worth flagging: DeepEval is genuinely simple to drop into pytest, but FutureAGI offers the same pytest-style eval API plus span-attached production scoring and simulation in the same platform. Per-user pricing on Confident-AI scales poorly for cross-functional teams. See DeepEval Alternatives.

4. Arize Phoenix: Best for OpenTelemetry-native RAG tracing

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Use case: Teams that already invested in OpenTelemetry and want RAG eval on the same plumbing. Phoenix accepts traces over OTLP and ships built-in RAG evaluators with auto-instrumentation for LlamaIndex, LangChain, DSPy, OpenAI, Bedrock, Anthropic, and 12+ others.

Pricing: Phoenix free for self-hosting. AX Free 25K spans/mo, AX Pro $50/mo, AX Enterprise custom.

OSS status: Elastic License 2.0. Source available, with restrictions on offering as a managed service. NOT OSI-approved open source.

Best for: Engineers who care about open instrumentation standards and want a path from local Phoenix into Arize AX without rewriting traces.

Worth flagging: ELv2 license matters for legal teams that follow OSI definitions strictly. See Phoenix Alternatives.

5. Galileo: Best for enterprise RAG risk and compliance

Closed platform. Hosted SaaS, VPC, and on-premises options.

Use case: Enterprise buyers and regulated industries that need research-backed RAG metrics with documented benchmarks (Luna evaluation foundation models, ChainPoll for hallucination), real-time guardrails, and on-prem deployment. Galileo’s RAG roster includes Context Adherence, Completeness, Chunk Attribution, and Chunk Utilization.

Pricing: Free $0 with 5K traces/mo, unlimited users. Pro $100/mo with 50K traces/mo, RBAC, advanced analytics. Enterprise custom.

OSS status: Closed.

Best for: Chief AI officers, risk functions, audit-driven procurement.

Worth flagging: Closed platform; the dev surface is less of a draw than the enterprise security and compliance posture. See Galileo Alternatives.

6. Langfuse: Best for self-hosted RAG observability with prompts

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with prompt versioning, dataset-driven RAG evals, and human annotation. The system of record for RAG telemetry when “no black-box SaaS for traces” is a hard requirement.

Pricing: Hobby free with 50K units/mo. Core $29/mo. Pro $199/mo. Enterprise $2,499/mo.

OSS status: MIT core.

Best for: Platform teams that operate the data plane and want trace data in their own infrastructure, paired with Ragas, DeepEval, or a custom RAG harness.

Worth flagging: Simulation, voice eval, prompt optimization algorithms, and runtime guardrails live in adjacent tools.

7. TruLens: Best for chunk-attribution feedback functions

Open source. MIT.

Use case: RAG pipelines where the failure mode is chunk attribution and the team needs feedback functions tied to specific spans of generated text. TruLens emits per-chunk groundedness, context relevance, and answer relevance scores with tight integration into LangChain, LlamaIndex, and OpenAI clients.

Pricing: Free.

OSS status: MIT. Maintained by Snowflake’s TruEra team.

Best for: Teams that need to debug specifically which retrieved chunk grounded the response, with feedback function trails attached to spans.

Worth flagging: Smaller community than Ragas or DeepEval. Hosted dashboard is light. Multi-turn agent eval is not first-class.

Decision framework: pick by constraint

- OSS is non-negotiable: Ragas, DeepEval, TruLens, FutureAGI, Langfuse core. Phoenix is ELv2.

- Self-hosting required: FutureAGI, Langfuse, Phoenix.

- Pytest-first workflow: DeepEval, with FutureAGI or Langfuse for production.

- OpenTelemetry-native: Phoenix and FutureAGI traceAI lead.

- Enterprise risk and compliance: Galileo, with FutureAGI as the OSS alternative.

- Chunk-attribution debugging: TruLens or FutureAGI. Phoenix and Langfuse with custom evaluators also work.

- Multi-turn RAG conversations: DeepEval and FutureAGI lead first-party multi-turn RAG metrics.

- Already on Comet for classical ML: Comet Opik (honourable mention), with a production observability tool layered on top.

Common mistakes when picking a RAG eval tool

- Picking on metric name alone. Faithfulness in Ragas is not identical to Faithfulness in DeepEval, FutureAGI, or Galileo. Different judge prompts produce different scores. Hand-label a subset and verify.

- Skipping retrieval scores. A response can be Faithful (grounded in retrieved chunks) but the chunks were the wrong chunks. Without Context Recall and Context Precision, retrieval failures hide.

- Ignoring chunk attribution. Knowing which chunk grounded the response is the difference between “fix the retriever” and “fix the prompt.”

- Pricing only the subscription. Real cost equals subscription plus trace volume, score volume, judge tokens, retries, storage retention, annotation labor.

- Treating ELv2 as open source. Phoenix is source available, not OSI open source.

- Skipping multi-turn drift. Single-turn RAG eval misses drift on turn three when the retriever produces stale context. Verify multi-turn metrics on a real conversation log.
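The second and third mistakes come down to reading Faithfulness without a retrieval score beside it. A minimal triage sketch, assuming you already have a Context Recall and a Faithfulness score in the 0 to 1 range for each trace; the thresholds and label names are hypothetical and should be tuned against your own hand-labels:

```python
def triage(context_recall: float, faithfulness: float,
           recall_min: float = 0.7, faith_min: float = 0.8) -> str:
    """Route a scored RAG trace to the component that most likely failed.

    Thresholds are illustrative defaults, not values from any tool.
    """
    low_recall = context_recall < recall_min
    low_faith = faithfulness < faith_min
    if low_recall and low_faith:
        return "both"               # wrong chunks and ungrounded claims
    if low_recall:
        return "retrieval_miss"     # grounded, but in the wrong chunks: fix the retriever
    if low_faith:
        return "grounding_problem"  # right chunks, ungrounded answer: fix the prompt or model
    return "ok"


# A faithful answer built on poor retrieval still gets flagged.
print(triage(context_recall=0.3, faithfulness=0.95))  # retrieval_miss
print(triage(context_recall=0.9, faithfulness=0.40))  # grounding_problem
```

A Faithfulness-only dashboard would score the first trace as healthy; the retrieval score is what exposes it.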

What changed in RAG eval in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can gate RAG experiments in GitHub Actions. |
| Apr 2026 | Galileo updated Luna-2 RAG metric foundations | Enterprise RAG risk evaluation moved closer to research-backed scoring. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | High-volume RAG trace analytics moved into the same plane as evals. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | RAG trace and prompt workflows moved closer to terminal-native tooling. |
| Dec 2025 | DeepEval v3.9.7 shipped multi-turn synthetic goldens | Multi-turn RAG eval got a maintained synthetic dataset path. |
| 2025 | Ragas v0.2.x and v0.3.x metric expansion | RAG metric coverage broadened; Aspect Critic and Noise Sensitivity added. |

How to actually evaluate this for production

- Run a domain reproduction. Take 200 representative RAG traces (input, response, retrieved chunks). Run each candidate’s Faithfulness, Context Recall, Context Precision. Compare against hand-labels.

- Test the full loop. Simulate a retrieval regression, push a fix through CI, deploy, observe in production, surface the failing trace back into the dataset. Track time-to-resolve.

- Cost-adjust. Real cost equals platform price plus trace volume, judge tokens, retries, storage retention, annotation labor.
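For the first step, "compare against hand-labels" needs a number. One common choice is Cohen's kappa, which corrects raw agreement for chance. The sketch below assumes you have reduced both the judge's Faithfulness scores and your hand-labels to pass/fail (1/0) per trace; the ten-trace sample is made up for illustration, and kappa is a suggestion here, not something any of the seven tools prescribes.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohen_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two label sequences of equal length."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and the same length")
    n = len(labels_a)
    # Observed agreement: share of traces where both raters gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if both raters labeled at random with their own base rates.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label]
                   for label in set(counts_a) | set(counts_b)) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical sample: 1 = faithful, 0 = not faithful.
human_labels = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
judge_labels = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]

kappa = cohen_kappa(human_labels, judge_labels)
print(round(kappa, 2))  # 0.6 (raw agreement is 0.8, chance agreement is 0.5)
```

Run the same calculation for each candidate's judge on the same 200 traces; the tool whose scores track your labels most closely is the one whose metric names you can trust on your domain.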

How FutureAGI implements RAG evaluation

FutureAGI is the production-grade RAG evaluation platform built around the closed reliability loop that other RAG eval picks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- RAG metrics: 50+ first-party metrics, including Faithfulness, Context Recall, Context Precision, Context Entity Recall, Answer Relevance, Answer Correctness, Aspect Critic, Noise Sensitivity, and Groundedness, attach as span attributes. BYOK lets any LLM serve as the judge at zero platform fee, and turing_flash runs the same rubrics at 50 to 70 ms p95.

- Retriever tracing: traceAI (Apache 2.0) auto-instruments 35+ frameworks (including LangChain, LlamaIndex, and Haystack) across Python, TypeScript, Java, and C#, with OpenInference span kinds for retriever, reranker, embedding, chain, and LLM nodes, so chunk-level attribution lives on the trace.

- Simulation: persona-driven scenarios exercise the RAG path in pre-prod with the same scorer contract, so retrieval and faithfulness regressions are caught before live traffic.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, while 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams comparing RAG eval tools end up running three or four products in production: one for RAG metrics, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because RAG evals, retriever tracing, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Ragas GitHub repo

- Ragas documentation

- DeepEval GitHub repo

- DeepEval RAG metrics

- FutureAGI pricing

- FutureAGI GitHub repo

- Phoenix docs

- Arize pricing

- Galileo pricing

- Langfuse pricing

- TruLens GitHub repo

- Confident-AI pricing

Series cross-link

Read next: Ragas Alternatives, What is RAG Evaluation, Best LLM Evaluation Tools
