Vercel AI SDK Tracing Best Practices in 2026: Edge, Streaming, OTel

Vercel AI SDK tracing best practices in 2026: experimental_telemetry, OTel GenAI, edge runtime, streaming spans, prompt versioning, and Next.js patterns.


A team building a Next.js chat app with the Vercel AI SDK ships streamText behind a route handler, deploys to Vercel, and watches the dashboard. Latency looks fine. Token usage looks fine. Then a user reports an oddly truncated response. The team opens the trace store and finds one span per request: POST /api/chat. No model id, no token counts, no prompt version, no streaming events. The AI SDK’s experimental_telemetry flag is the missing piece. The team enables it and redeploys, and the trace for the next failure shows the model, the tokens, the finish reason, and which prompt version was running. That failure is diagnosable from the trace alone, instead of from raw application logs.

The Vercel AI SDK is a common JavaScript surface for chat UIs and streaming agents. Its tracing story is OTel-native, the integration is configuration-not-code, and the failure modes are predictable once you know them. This post covers the production patterns: enabling telemetry correctly, the edge runtime constraints, streaming span hygiene, prompt-version propagation, and the cardinality landmines specific to JavaScript runtimes.

TL;DR: The 8 best practices

| # | Practice | What it prevents |
|---|---|---|
| 1 | Enable experimental_telemetry on every AI SDK call | Generic request spans without LLM detail |
| 2 | Register OTel in instrumentation.ts | Telemetry option enabled but no exporter |
| 3 | Wrap calls in your own span for custom attributes | Prompt version invisible in traces |
| 4 | One span per LLM call, events for chunks | Per-chunk span explosion |
| 5 | Edge-compatible OTel build for edge functions | OTel SDK crashing on edge |
| 6 | Batch OTLP exporter | Sync exporter blocking the response |
| 7 | Collector-side PII redaction | PII in trace storage |
| 8 | Tail-based sampling at the collector | Long-tail failures dropped under uniform 1% |

If you only fix one thing first: enable experimental_telemetry: { isEnabled: true } on every AI SDK call in production. Without it the AI SDK is invisible in the trace.

Why the Vercel AI SDK has its own tracing playbook

Three things make AI SDK tracing different.

First, the AI SDK is JavaScript-first and edge-aware. Many Vercel AI SDK apps deploy to Vercel Edge Runtime or Vercel Functions, not a long-running Node service. The OTel SDK’s defaults assume a long-running Node process; the edge constraints (cold starts, no filesystem, limited APIs) require a different setup.

Second, the SDK has built-in OTel telemetry. The experimental_telemetry option on every generate/stream function is the canonical instrumentation surface; you do not need to write a callback handler or a decorator. The discipline is enabling it correctly, configuring the OTel runtime, and wrapping for custom attributes.

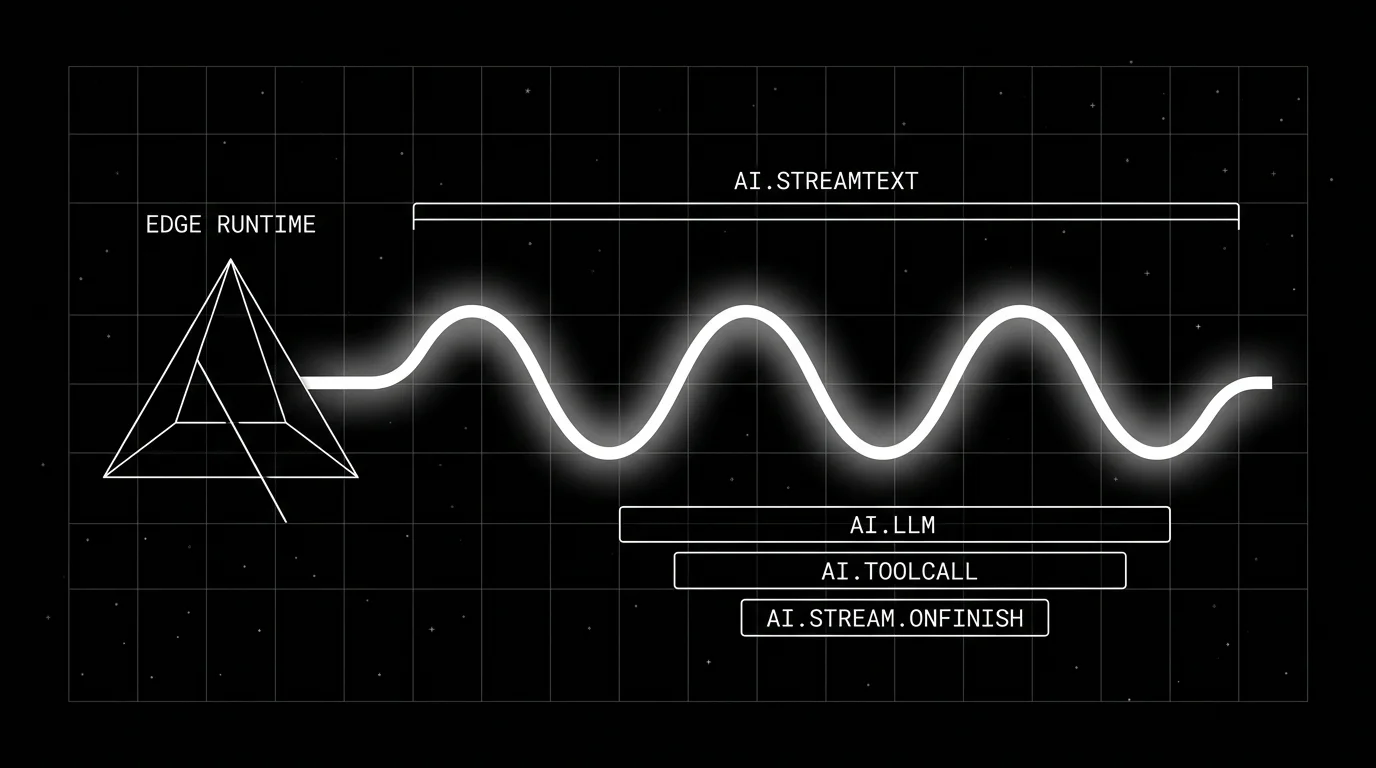

Third, streaming is the default. Most AI SDK calls go through streamText (legacy streamObject is deprecated in current AI SDK docs); the trace lifecycle has to match the stream lifecycle, not a synchronous request-response.

The result: an OTel-native tracing stack with edge-runtime constraints, streaming-aware span lifecycles, and a built-in instrumentation layer that needs configuration rather than code.

Enabling AI SDK telemetry: the two-step setup

Step 1: register OpenTelemetry by adding instrumentation.ts at the project root (or src/instrumentation.ts if you use a src/ directory; see the Next.js instrumentation guide).

For Next.js with @vercel/otel:

```typescript
// instrumentation.ts
import { registerOTel } from "@vercel/otel";

export function register() {
  // Pass just `serviceName` to use the default Vercel exporter; pass an
  // OTLP exporter instance via `traceExporter` to ship to your own backend.
  registerOTel({ serviceName: "next-ai-app" });
}
```

For Node-runtime services with the OTel SDK directly:

```typescript
// instrumentation.ts
import { NodeSDK } from "@opentelemetry/sdk-node";
import { OTLPTraceExporter } from "@opentelemetry/exporter-trace-otlp-grpc";

const sdk = new NodeSDK({
  serviceName: "ai-service",
  traceExporter: new OTLPTraceExporter({
    url: process.env.OTEL_EXPORTER_OTLP_ENDPOINT,
  }),
});

sdk.start();
```

Step 2: enable telemetry on every AI SDK call.

```typescript
import { streamText } from "ai";
import { openai } from "@ai-sdk/openai";

const result = await streamText({
  model: openai("gpt-5"), // verify the latest model id in the provider docs
  messages,
  experimental_telemetry: {
    isEnabled: true,
    functionId: "chat-handler",
    metadata: {
      "prompt.version": "v23",
      "user.cohort": cohort,
    },
  },
});
```

The metadata object is the path for custom attributes that ride on the AI SDK’s auto-emitted span. For attributes that need to live across multiple AI SDK calls in the same request, wrap in a parent span:

```typescript
import { trace } from "@opentelemetry/api";

const tracer = trace.getTracer("ai-handler");

await tracer.startActiveSpan("chat.handler", async (span) => {
  try {
    span.setAttribute("prompt.version", "v23");
    span.setAttribute("user.cohort", cohort);
    span.setAttribute("tenant.id", tenantId);

    const result = await streamText({
      model: openai("gpt-5"), // verify the latest model id in the provider docs
      messages,
      experimental_telemetry: { isEnabled: true },
      onFinish: () => span.end(),
      // onError receives an event object; the error is on its `error` field.
      onError: ({ error }) => {
        span.recordException(error as Error);
        span.end();
      },
    });

    return result.toUIMessageStreamResponse();
  } catch (err) {
    span.recordException(err as Error);
    span.end();
    throw err;
  }
});
```

The wrapper span carries the request-scoped attributes; the AI SDK’s child span carries the gen_ai.* attributes. Both belong to the same trace because the AI SDK respects the active OTel context.

What attributes the AI SDK sets automatically

For an LLM call (per current AI SDK telemetry docs; verify against your SDK version):

```text
operation.name             # ai.streamText.doStream, ai.generateText, ...
ai.operationId             # ai.streamText, ai.generateText, ...
ai.model.id                # the configured model id, e.g. provider-model
ai.model.provider          # e.g. openai.chat, anthropic.messages
ai.usage.promptTokens      # input token count (AI-SDK key)
ai.usage.completionTokens  # output token count (AI-SDK key)
ai.response.finishReason   # stop, length, tool-calls, ...
gen_ai.system              # provider key emitted in some contexts (e.g. openai)
gen_ai.request.model
gen_ai.response.model
gen_ai.response.id
```

The AI SDK also writes its metadata bag through ai.telemetry.metadata.*; that is the supported way to attach prompt.version or user.cohort to an AI SDK span without a wrapper. Do not assume the OTel GenAI usage attributes (gen_ai.usage.input_tokens, gen_ai.usage.output_tokens) or gen_ai.operation.name are present on every span; confirm against a real trace for your SDK version, and where they are missing, set them on a wrapper span you own.

When recordInputs: true:

```text
ai.prompt        # the input messages
ai.prompt.tools  # tool definitions if applicable
```

When recordOutputs: true:

```text
ai.response.text       # the generated text
ai.response.toolCalls  # tool calls in the response
```

The recordInputs and recordOutputs flags are enabled by default per the AI SDK telemetry docs; explicitly set them to false in any environment where prompt content is regulated. Otherwise the trace store carries data the privacy and security review will reject.
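One way to keep the opt-out from depending on every call site is to build the telemetry settings in a single helper. The sketch below is an illustration only: `telemetryFor`, `CONTENT_CAPTURE_ENVS`, and the environment names are hypothetical, and the local `TelemetrySettings` type merely mirrors the option names used in this post rather than importing the SDK's own type.

```typescript
// Local mirror of the telemetry options used in this post. The real type
// lives in the AI SDK, so treat this shape as an assumption.
type TelemetrySettings = {
  isEnabled: boolean;
  recordInputs: boolean;
  recordOutputs: boolean;
  functionId: string;
  metadata: Record<string, string>;
};

// Hypothetical allowlist: content capture is permitted only in these
// environments. Unknown or missing environment names opt out.
const CONTENT_CAPTURE_ENVS = new Set(["development", "test"]);

function telemetryFor(
  functionId: string,
  metadata: Record<string, string>,
  env: string | undefined,
): TelemetrySettings {
  const captureContent = env !== undefined && CONTENT_CAPTURE_ENVS.has(env);
  return {
    isEnabled: true, // spans are always emitted
    recordInputs: captureContent, // prompt content only where allowed
    recordOutputs: captureContent, // response content only where allowed
    functionId,
    metadata,
  };
}

// The result would be passed as `experimental_telemetry` on an AI SDK call.
const prodSettings = telemetryFor("chat-handler", { "prompt.version": "v23" }, "production");
const devSettings = telemetryFor("chat-handler", { "prompt.version": "v23" }, "development");
```

Failing closed on an unknown environment name is a deliberate choice here: a misconfigured deployment loses content capture rather than leaking prompts into trace storage.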

For tool calls inside an AI SDK response:

```text
ai.toolCall.id
ai.toolCall.name
gen_ai.tool.name
gen_ai.tool.call.id
```

The AI SDK emits AI-SDK-specific ai.* attributes plus selected gen_ai.* attributes per the AI SDK telemetry docs; OpenInference is a separate convention layer maintained by Arize, not the same as the AI SDK output. Many OTel backends can ingest both namespaces as attributes, but normalization and dashboards vary by vendor.

Streaming span lifecycles

The trap: creating one span per chunk in the stream. The cardinality is wrong; a streamed response with 200 chunks produces 200 spans, none of them individually meaningful, and the per-call span aggregations break.

The right pattern: one span per AI SDK call, span events for chunk milestones if needed.

```typescript
const result = await streamText({
  model: openai("gpt-5"), // verify the latest model id in the provider docs
  messages,
  experimental_telemetry: { isEnabled: true },
  onChunk: ({ chunk }) => {
    if (chunk.type === "tool-call") {
      // Attaches an event to whichever span is active when the callback
      // runs; confirm in your runtime that this is the span you expect.
      const span = trace.getActiveSpan();
      span?.addEvent("ai.tool.call.invoked", {
        "ai.tool.name": chunk.toolName,
      });
    }
  },
});
```

The span starts when streamText is called and ends when the AI SDK observes stream completion. The onFinish callback fires once the model’s finish event has been received and the stream is fully drained on the server, which is not the same as the browser client having rendered the last byte. Chunk milestones are span events on the running span.

The AI SDK’s built-in telemetry handles the basic stream lifecycle correctly; the trap is custom wrappers that fight with the SDK’s lifecycle by ending the span on stream creation rather than on stream completion.
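To make the "events for milestones, not spans for chunks" rule concrete, here is a minimal dependency-free sketch. The `StreamChunk` shape is a simplified stand-in for the AI SDK's chunk union (whose members and names vary by version), and the `app.*` event names and `createMilestoneTracker` helper are hypothetical.

```typescript
// Simplified chunk shape for illustration; the AI SDK's real chunk union has
// more members and its type names vary by version.
type StreamChunk =
  | { type: "text-delta" }
  | { type: "tool-call"; toolName: string }
  | { type: "other" };

type SpanEvent = { name: string; attributes: Record<string, string> };

// Returns a function that maps each chunk to at most one span event.
// Only the first text chunk and tool calls produce events.
function createMilestoneTracker(): (chunk: StreamChunk) => SpanEvent | null {
  let sawFirstText = false;
  return (chunk) => {
    if (chunk.type === "text-delta") {
      if (sawFirstText) return null; // later text chunks are not milestones
      sawFirstText = true;
      return { name: "app.stream.first_text", attributes: {} };
    }
    if (chunk.type === "tool-call") {
      return {
        name: "app.tool.call.invoked",
        attributes: { "app.tool.name": chunk.toolName },
      };
    }
    return null;
  };
}

// Simulate a stream: 200 text chunks with one tool call in the middle.
const track = createMilestoneTracker();
const chunks: StreamChunk[] = [];
for (let i = 0; i < 200; i++) chunks.push({ type: "text-delta" });
chunks.splice(100, 0, { type: "tool-call", toolName: "lookupOrder" });

const events = chunks
  .map((chunk) => track(chunk))
  .filter((event): event is SpanEvent => event !== null);
```

In a real handler, each non-null result would be passed to `addEvent` on a span you own from inside onChunk, so a 201-chunk stream contributes two events to one span instead of 201 spans.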

Edge runtime constraints

The OTel Node SDK does not run on Vercel’s Edge runtime. Vercel’s tracing docs currently describe support for custom spans only on the Node runtime; treat AI SDK Edge telemetry as needing validation against the Vercel runtime version you ship on. @opentelemetry/instrumentation-undici covers Node fetch/undici, not Edge.

Packages that are runtime-portable or have Edge-aware builds in 2026:

- @vercel/otel ships a registration helper with Edge-aware builds.

- @opentelemetry/api is runtime-agnostic.

- @opentelemetry/exporter-trace-otlp-http works over HTTP fetch.

The pragmatic pattern: keep custom wrapper spans and richer auto-instrumentation on Node-runtime routes (Vercel Functions, server actions); for Edge route handlers, lean on the AI SDK’s experimental_telemetry plus whatever the Vercel runtime exports natively, and confirm what actually reaches your collector.

The trap: registering the Node SDK in an Edge function. The build will fail or the runtime will throw. Use @vercel/otel and let it pick the correct backend.
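For teams that do want the Node SDK on Node-runtime routes, a common guard is to branch on the NEXT_RUNTIME environment variable, which Next.js sets to "nodejs" or "edge". The sketch below isolates that decision in a pure function so it can be tested without loading any OTel package; `instrumentationTarget` and the target labels are hypothetical names.

```typescript
type InstrumentationTarget = "node-sdk" | "edge-safe" | "none";

// Decide which setup to load from the runtime name. Anything unrecognized
// loads nothing rather than risking a Node-only import on Edge.
function instrumentationTarget(runtime: string | undefined): InstrumentationTarget {
  if (runtime === "nodejs") return "node-sdk";
  if (runtime === "edge") return "edge-safe";
  return "none";
}

// Inside instrumentation.ts the result would gate a dynamic import, e.g.:
//   if (instrumentationTarget(process.env.NEXT_RUNTIME) === "node-sdk") {
//     await import("./instrumentation.node"); // hypothetical file name
//   }
const targets = ["nodejs", "edge", undefined].map((runtime) =>
  instrumentationTarget(runtime),
);
```

The dynamic import matters: a static top-level import of the Node SDK would still be bundled into the Edge build even if the code path never ran.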

Custom attributes for prompt versions and cohorts

The clean pattern: a wrapper span owns request-scoped custom attributes; the AI SDK’s child span owns the LLM-call attributes.

```typescript
import { trace } from "@opentelemetry/api";
import { streamText } from "ai";
import { openai } from "@ai-sdk/openai";

const tracer = trace.getTracer("chat-handler");

// `resolver` is your prompt-registry client; its implementation is not shown.
export async function POST(req: Request) {
  const { messages } = await req.json();
  const tenant = req.headers.get("x-tenant-id");
  const cohort = req.headers.get("x-user-cohort");

  return tracer.startActiveSpan("chat.handler", async (span) => {
    // Guard so the span is ended exactly once across all exit paths.
    let ended = false;
    const endOnce = () => {
      if (!ended) {
        ended = true;
        span.end();
      }
    };

    try {
      const promptHandle = await resolver.resolve({ cohort, tenant });
      span.setAttribute("prompt.id", promptHandle.id);
      span.setAttribute("prompt.version", promptHandle.version);
      span.setAttribute("prompt.variant", promptHandle.variant);
      span.setAttribute("user.cohort", cohort ?? "unknown");
      span.setAttribute("tenant.id", tenant ?? "unknown");

      const result = await streamText({
        model: openai("gpt-5"), // verify the latest model id in the provider docs
        messages,
        system: promptHandle.body,
        experimental_telemetry: {
          isEnabled: true,
          functionId: "chat",
          metadata: {
            "prompt.version": promptHandle.version,
          },
        },
        onFinish: () => endOnce(),
        // onError receives an event object; the error is on its `error` field.
        onError: ({ error }) => {
          span.recordException(error as Error);
          endOnce();
        },
      });

      return result.toUIMessageStreamResponse();
    } catch (err) {
      span.recordException(err as Error);
      endOnce();
      throw err;
    }
  });
}
```

The wrapper carries the resolution metadata; the AI SDK’s child span carries the LLM detail; both ride to the collector together. The metadata in experimental_telemetry ensures the version is visible on the AI SDK’s emitted span as well, so dashboards filtering on the AI SDK span attribute see the version too.
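The handler above calls a `resolver` that the post does not define. A minimal in-memory sketch of what such a resolver could look like follows; the `PromptHandle` shape is inferred from the fields the handler reads (id, version, variant, body), and the registry contents, the "canary" cohort name, and `pickPrompt` are all hypothetical.

```typescript
type PromptHandle = { id: string; version: string; variant: string; body: string };
type ResolveInput = { cohort: string | null; tenant: string | null };

// Hypothetical in-memory registry. A real resolver would more likely call a
// prompt-management service and cache the result.
const PROMPTS: { control: PromptHandle; canary: PromptHandle } = {
  control: {
    id: "chat-system",
    version: "v23",
    variant: "control",
    body: "You are a concise assistant.",
  },
  canary: {
    id: "chat-system",
    version: "v24",
    variant: "canary",
    body: "You are a concise, friendly assistant.",
  },
};

// Pure selection logic: the "canary" cohort gets the candidate prompt;
// everyone else, including requests with no cohort header, gets control.
// `tenant` is accepted to match the call site but unused in this sketch.
function pickPrompt(input: ResolveInput): PromptHandle {
  return input.cohort === "canary" ? PROMPTS.canary : PROMPTS.control;
}

// Async wrapper matching the `await resolver.resolve(...)` call in the handler.
const resolver = {
  async resolve(input: ResolveInput): Promise<PromptHandle> {
    return pickPrompt(input);
  },
};
```

Returning the version and variant alongside the body is what lets the handler stamp the span from the same object that supplied the system prompt, so the trace cannot drift from what was actually sent.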

PII and content fields

The AI SDK records inputs and outputs by default when telemetry is on. Per the AI SDK telemetry docs, recordInputs and recordOutputs are default-on; production code in regulated environments must explicitly opt out. Compare to the OTel GenAI gen_ai.input.messages / gen_ai.output.messages which are opt-in.

The discipline:

- Default-on means explicit opt-out. Set recordInputs: false and recordOutputs: false in any environment where prompt content is regulated PII. Audit existing call sites for missing flags.

- Per-environment gating. Dev environments may emit content; production redacts at the collector or skips entirely.

- Collector-side redaction. A deterministic redaction processor scrubs PII before storage. Same PII gets the same placeholder so post-hoc analysis correlates without exposing the data.

- Document the policy. In the same repo as the instrumentation. Reviewed with privacy and security at design time.

For workloads under HIPAA, GDPR, or similar regimes, redaction at the collector is non-negotiable.
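The "same PII gets the same placeholder" property can be illustrated with a keyed hash. In a real deployment this logic would run in a collector processor, not in application code; the TypeScript below only demonstrates the property. `redactEmails`, the placeholder format, and the secret value are hypothetical, and the email pattern is deliberately simplistic.

```typescript
import { createHmac } from "node:crypto";

// Deliberately simple email pattern for illustration; production redaction
// needs broader detectors (names, phone numbers, account ids, and so on).
const EMAIL_PATTERN = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

// Replace each email with a keyed hash so the same address always maps to
// the same placeholder, without the placeholder revealing the address.
function redactEmails(text: string, secret: string): string {
  return text.replace(EMAIL_PATTERN, (match) => {
    const digest = createHmac("sha256", secret)
      .update(match.toLowerCase())
      .digest("hex")
      .slice(0, 12);
    return `<email:${digest}>`;
  });
}

const redactionSecret = "rotate-me"; // hypothetical key; load from a secret store
const redactedA = redactEmails("Contact jane@example.com today", redactionSecret);
const redactedB = redactEmails("jane@example.com asked again", redactionSecret);
const redactedC = redactEmails("bob@example.com replied", redactionSecret);
```

A keyed HMAC is used instead of a plain hash so that someone with access to the trace store cannot confirm a guessed address by hashing it themselves; the trade-off is that rotating the key breaks correlation across the rotation boundary.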

Batch exporter, not sync

The OTel SDK ships a BatchSpanProcessor that queues spans and flushes on a timer or queue threshold. Use it.

The traps:

- SimpleSpanProcessor. Exports each span on span end without batching, so exporter latency lands on the path that ends the span. Acceptable for dev; not recommended for production by the OTel docs. The realized impact depends on exporter, runtime, and how many spans your handler ends synchronously, but it can show up as added tail latency on slow or unhealthy exporters.

- Sync HTTP exporter on edge. The fetch-based OTLP exporter is async, but a custom exporter that awaits a sync HTTP call still blocks. Use the standard exporter.

- Misconfigured queue size. Too small drops spans; too large pressures memory. Defaults are reasonable; adjust only if metrics show problems.

The default Vercel @vercel/otel registration uses the OTel batch processor with reasonable defaults; the trap appears when teams roll their own SDK init.
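To see why queue sizing matters, here is a toy model of a bounded batch queue. It mirrors the idea behind the BatchSpanProcessor settings maxQueueSize and maxExportBatchSize, but it is not the SDK's implementation; `BoundedBatchQueue` and the tiny sizes are chosen purely for illustration.

```typescript
// Toy model of a bounded batch queue; not the OTel SDK's implementation.
class BoundedBatchQueue<T> {
  private queue: T[] = [];
  private maxQueueSize: number;
  private maxExportBatchSize: number;
  dropped = 0;

  constructor(maxQueueSize: number, maxExportBatchSize: number) {
    this.maxQueueSize = maxQueueSize;
    this.maxExportBatchSize = maxExportBatchSize;
  }

  // Called when a span ends: never blocks, drops when the queue is full.
  enqueue(item: T): void {
    if (this.queue.length >= this.maxQueueSize) {
      this.dropped += 1;
      return;
    }
    this.queue.push(item);
  }

  // Called on a timer or size threshold: removes up to one batch.
  takeBatch(): T[] {
    return this.queue.splice(0, this.maxExportBatchSize);
  }
}

// Six spans end before any flush, but the queue only holds four.
const spanQueue = new BoundedBatchQueue<number>(4, 2);
for (let i = 0; i < 6; i++) spanQueue.enqueue(i);
const firstBatch = spanQueue.takeBatch();
const secondBatch = spanQueue.takeBatch();
const thirdBatch = spanQueue.takeBatch();
```

The request path only ever pays for `enqueue`, which is constant-time; the cost of a slow exporter shows up as dropped spans, not as response latency. That is the trade the batch processor makes, and it is why dropped-span metrics are the signal to watch before touching the defaults.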

Tail sampling at the collector

The OTel collector tail-sampling processor decides per-trace whether to keep the trace after all spans complete. A starting policy that fits many AI SDK workloads (calibrate the percentages from your trace volume, retention budget, and incident rate):

- Keep 100 percent of traces with status = ERROR.

- Keep 100 percent of traces with any eval rubric below threshold.

- Keep 100 percent of traces above a fixed cost or latency threshold.

- Keep 100 percent of traces tagged with experiment_id or canary cohort.

- Sample a fraction of remaining traffic uniformly (a 5-20 percent range is a common starting point; adjust to your retention budget).

Most AI SDK production workloads are streaming, so the trace duration is the time to last chunk. The collector’s tail-sample decision waits for the final span; the buffer cost is real but bounded by the configured trace timeout and queue size in the collector.
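The policy list above can be modeled as a single decision function, which is handy for reasoning about the rules and unit-testing thresholds before encoding them in collector configuration. This is a sketch of the policy only, not of the collector's tail-sampling processor; `TraceSummary`, `SamplingPolicy`, `keepTrace`, and the threshold values are hypothetical.

```typescript
type TraceSummary = {
  hasError: boolean;
  minEvalScore: number | null; // lowest rubric score on the trace, if any
  costUsd: number;
  durationMs: number;
  experimentId: string | null;
};

type SamplingPolicy = {
  evalThreshold: number;
  maxCostUsd: number;
  maxDurationMs: number;
  baselineRate: number; // fraction of remaining traffic to keep, 0..1
};

// `roll` is a number in [0, 1) supplied by the caller so the decision is
// deterministic in tests; a collector makes this choice itself.
function keepTrace(t: TraceSummary, p: SamplingPolicy, roll: number): boolean {
  if (t.hasError) return true; // always keep errors
  if (t.minEvalScore !== null && t.minEvalScore < p.evalThreshold) return true;
  if (t.costUsd > p.maxCostUsd || t.durationMs > p.maxDurationMs) return true;
  if (t.experimentId !== null) return true; // experiments and canaries
  return roll < p.baselineRate; // uniform sample of the rest
}

const samplingPolicy: SamplingPolicy = {
  evalThreshold: 0.7,
  maxCostUsd: 0.5,
  maxDurationMs: 30_000,
  baselineRate: 0.1,
};

const ordinaryTrace: TraceSummary = {
  hasError: false,
  minEvalScore: 0.9,
  costUsd: 0.01,
  durationMs: 1_200,
  experimentId: null,
};
```

Ordering the checks from "always keep" to "sample" means an ordinary trace is the only kind the random roll can drop, which is exactly the long-tail protection the policy is after.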

For the broader sampling discussion, see LLM tracing best practices.

Common mistakes when adopting AI SDK tracing

- Enabling telemetry without registering OTel. The flag is set; spans go nowhere.

- Forgetting to disable recordInputs/recordOutputs in production. They are default-on; PII lands in trace storage unless the flags are explicitly set to false.

- Per-chunk spans. Cardinality wrong; per-call aggregations break.

- Sync exporter on the request path. Latency degrades on every call.

- OTel Node SDK on edge runtime. Build fails or runtime throws; use @vercel/otel.

- No prompt-version attribute. Regressions cannot be attributed.

- Multiple instrumentation sources. AI SDK telemetry plus a custom wrapper plus OpenInference all firing; spans triplicate.

- Not closing the wrapper span on stream finish. Wrapper span outlives the actual call.

- High-cardinality metadata. Do not put request IDs in metadata keys, and avoid unbounded metadata values unless your backend is configured for them.

- Skipping the OTel collector. Direct export to the backend works; the collector is where redaction and sampling happen, and skipping it forecloses both.
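For the high-cardinality metadata mistake in particular, one guard is to pass metadata through an allowlist before handing it to experimental_telemetry. The sketch below is one possible shape; `sanitizeMetadata`, the allowed keys, and the 64-character cap are hypothetical choices, not AI SDK behavior.

```typescript
// Hypothetical allowlist of metadata keys with bounded, low-cardinality values.
const ALLOWED_METADATA_KEYS = new Set([
  "prompt.version",
  "prompt.variant",
  "user.cohort",
  "tenant.id",
]);
const MAX_METADATA_VALUE_LENGTH = 64;

// Drops keys outside the allowlist (which catches ids embedded in key names)
// and truncates values so a stray payload cannot bloat the span.
function sanitizeMetadata(input: Record<string, string>): Record<string, string> {
  const out: Record<string, string> = {};
  for (const [key, value] of Object.entries(input)) {
    if (!ALLOWED_METADATA_KEYS.has(key)) continue;
    out[key] = value.slice(0, MAX_METADATA_VALUE_LENGTH);
  }
  return out;
}

const cleanedMetadata = sanitizeMetadata({
  "prompt.version": "v23",
  "req-8f3a.status": "ok", // request id in the key: dropped
  "user.cohort": "x".repeat(200), // oversized value: truncated
});
```

An allowlist fails closed: a new key has to be added deliberately, which turns "someone put a request id in a key" from a silent cardinality incident into a code review conversation.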

What is shifting in AI SDK tracing in 2026

These are directions worth tracking. Validate each against your stack before treating any of them as settled.

- OTel GenAI semantic conventions are still in Development with an opt-in stability transition (OTEL_SEMCONV_STABILITY_OPT_IN); the AI SDK emits AI-SDK-specific ai.* attributes plus selected gen_ai.* attributes per the AI SDK telemetry docs (not the full OTel GenAI canonical set; verify against your SDK version).

- @vercel/otel ships builds that work in the Vercel runtime; custom span coverage on the Edge runtime remains constrained per the Vercel tracing docs.

- Distilled judge models are increasingly common, lowering the cost of online rubric scoring tied to AI SDK spans.

- The OTel collector tail-sampling processor is a strong production pattern; it is still beta and requires routing all spans for a trace to the same collector and ongoing tuning.

- Reasoning-token attributes are appearing in some tools and proposals (often under non-standard attribute names); validate the exact attribute against the current OTel GenAI registry and your observability backend before relying on it for cost dashboards.

How to ship AI SDK tracing in production

- Register OTel. instrumentation.ts at project root; @vercel/otel for Vercel, OTel Node SDK for long-running Node services.

- Enable telemetry on every AI SDK call. experimental_telemetry: { isEnabled: true } plus a functionId and metadata for context.

- Wrap for request-scoped attributes. prompt.version, prompt.variant, user.cohort, tenant.id on the wrapper span.

- Verify attribute coverage. Run a request, inspect the trace, confirm gen_ai.* attributes plus custom prompt attributes appear.

- Configure the collector. Redaction processor, tail-sampling processor, OTLP exporter to the backend.

- Opt out of recordInputs and recordOutputs in regulated environments. They are default-on; explicitly set both to false in production unless content capture is reviewed.

- Pick the right exporter. Batch OTLP gRPC for Node; OTLP HTTP for edge.

- Slice dashboards by version. prompt.version, ai.model.id, ai.model.provider.

- Wire eval scores. Per-rubric scores on the response span; drift alerts on rolling means.

- Pin SDK versions on upgrades. AI SDK version, OTel SDK version, @vercel/otel version pinned together.
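The "verify attribute coverage" step can be turned into a regression check. The sketch below assumes you already have a span's attributes as a plain object (for example, captured through an in-memory span exporter in a test); `missingAttributes` and the required-key list are hypothetical, and the metadata key follows the ai.telemetry.metadata.* prefix described earlier.

```typescript
// Hypothetical list of attributes a dashboard depends on for LLM-call spans.
const REQUIRED_ON_LLM_SPAN = [
  "ai.model.id",
  "ai.model.provider",
  "ai.response.finishReason",
  "ai.telemetry.metadata.prompt.version",
];

// Returns the required keys that are absent from a span's attributes.
function missingAttributes(
  attributes: Record<string, unknown>,
  required: string[],
): string[] {
  return required.filter((key) => attributes[key] === undefined);
}

// Example: a span captured from a call that forgot the prompt.version metadata.
const capturedAttributes: Record<string, unknown> = {
  "ai.model.id": "example-model",
  "ai.model.provider": "openai.chat",
  "ai.response.finishReason": "stop",
};
const missingKeys = missingAttributes(capturedAttributes, REQUIRED_ON_LLM_SPAN);
```

Running a check like this in CI after each SDK upgrade is a cheap way to notice when a version bump renames or drops an attribute that a dashboard slices on.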

How FutureAGI implements Vercel AI SDK tracing

FutureAGI is the production-grade backend for Vercel AI SDK tracing built around the closed reliability loop that AI SDK stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- AI SDK tracing: traceAI (Apache 2.0) ships TypeScript instrumentation that consumes the AI SDK’s experimental_telemetry spans plus gen_ai.* attributes; the same library covers Python, Java, and C# so AI SDK Next.js services share trace IDs with backend services.

- Span-attached evals: 50+ first-party metrics attach as span attributes per rubric on every response span; BYOK lets any LLM serve as the judge at zero platform fee, and turing_flash runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise AI SDK applications in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing for the AI SDK provider list, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) enforce policy on the same plane; the FutureAGI collector supports redaction and tail sampling.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping Vercel AI SDK tracing to production end up running three or four backend tools alongside the AI SDK: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Vercel AI SDK telemetry docs

- Vercel AI SDK GitHub

- @vercel/otel docs

- OpenTelemetry GenAI semantic conventions

- OpenTelemetry Node SDK docs

- OTel collector tail sampling processor

- Next.js instrumentation docs

- OpenInference GitHub repo

- traceAI GitHub repo

- Future AGI tracing

Series cross-link

Related: LLM Tracing Best Practices in 2026, What Does a Good LLM Trace Look Like, LangChain Callback Tracing Best Practices, Linking Prompt Management with Tracing

