Comparing Open-Source AI Agent Frameworks in 2026

Compare seven OSS agent frameworks for production teams in 2026, with architecture, license, maturity, latest versions, and practical tradeoffs.


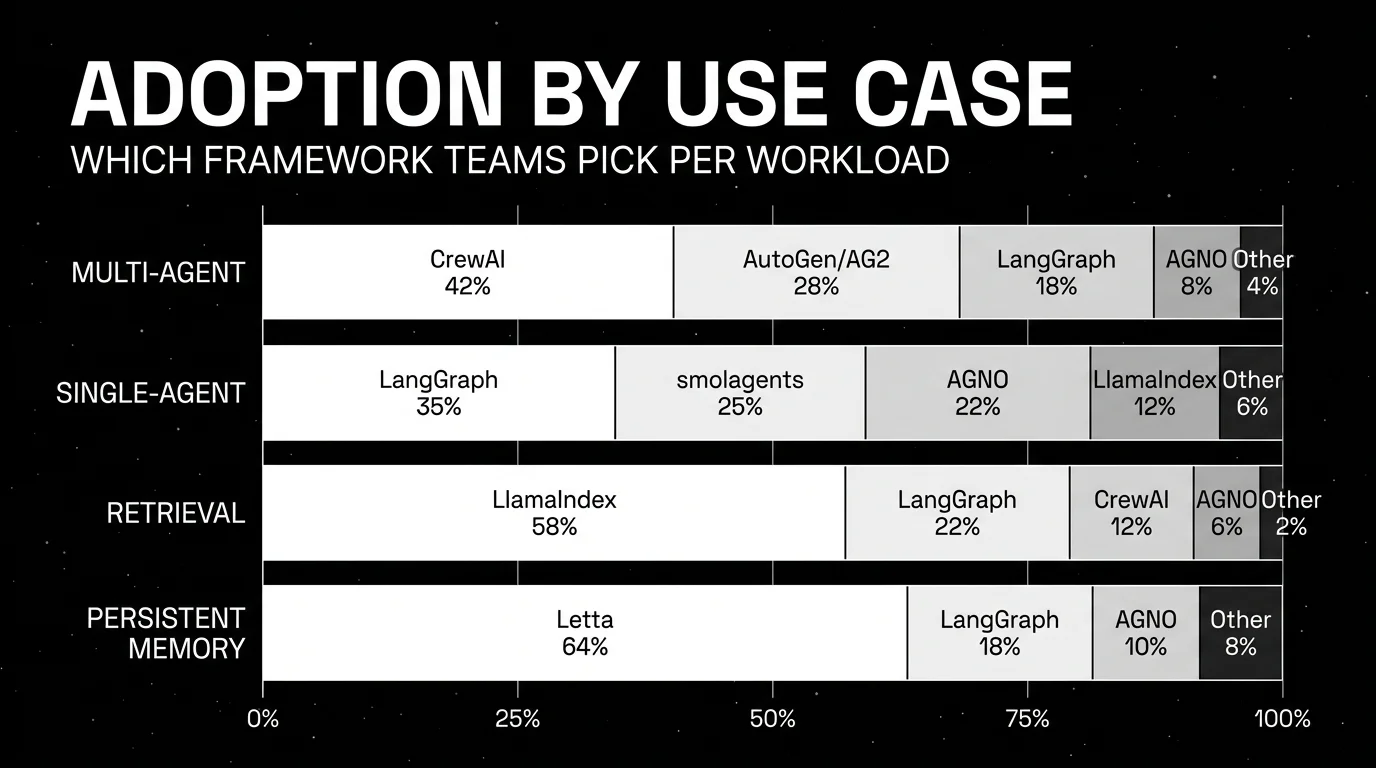

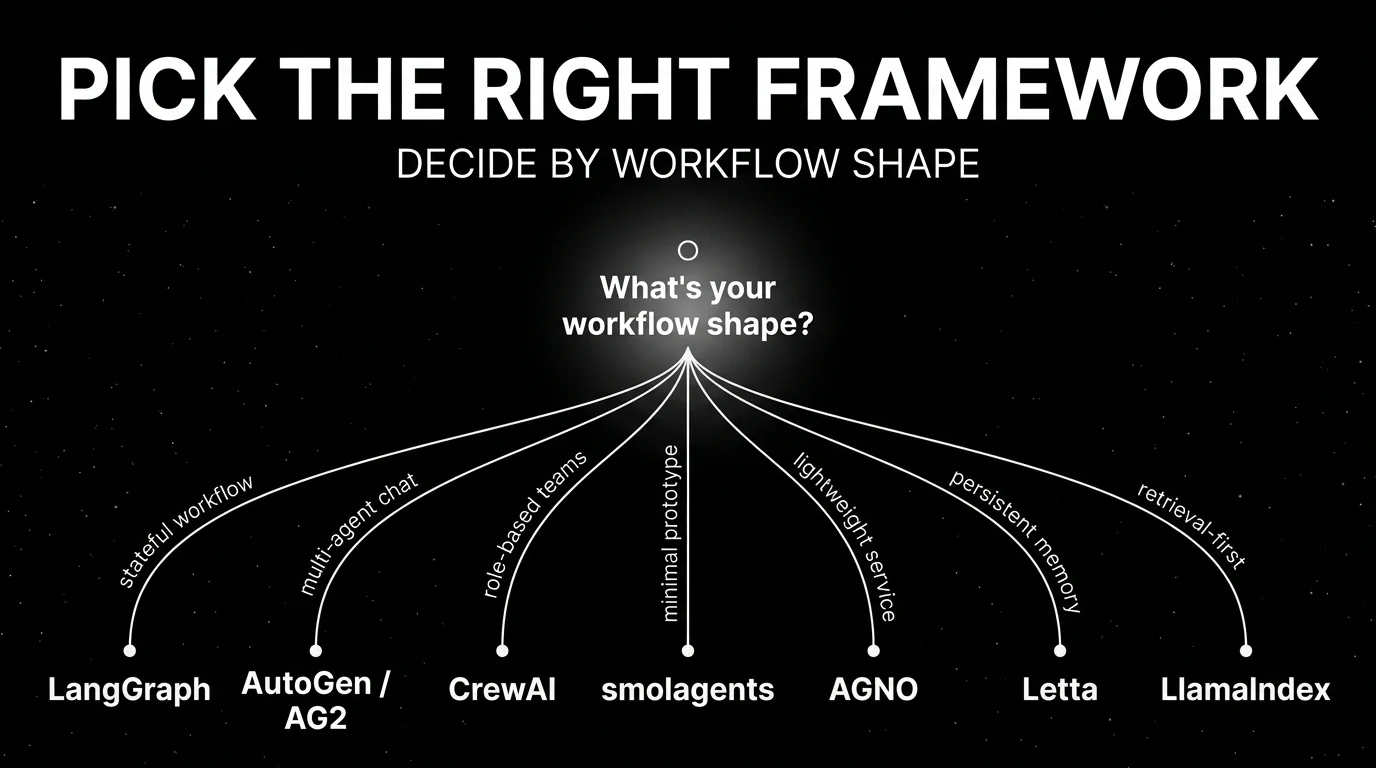

Agent frameworks looked chaotic in 2024. By May 7, 2026, the category has consolidated into a few clear mental models: graph state, role orchestration, conversational agents, code-as-action, lightweight runtimes, persistent memory, and retrieval-first workflows. You are probably reading this because your team has moved past a demo agent and now has to pick a framework that will survive real tools, retries, logs, memory, and ownership. The right question is not “Which framework has the most stars?” It is “Which architecture matches the failure modes we expect?”

TL;DR: open-source agent frameworks compared

| Framework | Best for | Language | Architecture | License, stars, latest version |
|---|---|---|---|---|
| LangGraph | Stateful multi-agent workflows | Python, JS/TS | Graph nodes, edges, shared state, checkpointing | MIT, about 31k stars, langgraph 1.1.10 |
| CrewAI | Role-based agent teams | Python | Agents, Tasks, Crews, Flows | MIT, about 51k stars, crewai 1.14.4 |
| AutoGen | Microsoft and research-style multi-agent | Python, .NET path via Agent Framework | Core, AgentChat, Extensions, conversational agents | Code MIT, docs CC-BY-4.0, about 58k stars, maintenance mode |
| smolagents | Minimal-code agent prototyping | Python | CodeAgent, ToolCallingAgent, code-as-action | Apache 2.0, about 27k stars, 1.24.0 |
| AGNO | Lightweight production agents | Python | SDK, FastAPI runtime, AgentOS control plane | Apache 2.0, about 40k stars, 2.6.5 |
| Letta | Persistent-memory agents | Python server, Python and TS clients | Self-editing memory blocks and stateful agents | Apache 2.0, about 22k stars, 0.16.7 |
| LlamaIndex Agent | Retrieval-centered agents | Python, JS/TS | AgentWorkflow, FunctionAgent, ReActAgent over data tools | MIT, about 49k stars, llama-index 0.14.21 |

Whichever framework you choose, pair it with FutureAGI for tracing, evals, guardrails, gateway, and optimization that work across any OSS framework via Apache 2.0 traceAI. Then pick the orchestration shape: if your agent is a long-running workflow with state, pick LangGraph. If it is a team of specialist roles, pick CrewAI. If your org is tied to Microsoft, evaluate Microsoft Agent Framework before starting new AutoGen work. If you need the smallest useful abstraction, pick smolagents. If runtime overhead and service packaging matter, pick AGNO. If memory is the product, pick Letta. If retrieval and indexing are the center, pick LlamaIndex.

State of OSS agent frameworks in 2026

LangChain v1 cleaned up the old LangChain agent story. LangGraph is still its own package (langgraph) and repo (langchain-ai/langgraph), but LangChain’s v1 create_agent API is built on top of LangGraph. In practice, LangChain now gives you the high-level agent entry point and LangGraph gives you the lower-level graph runtime. LangGraph deployment is also now a real product surface: the docs frame deployment through LangSmith Deployment, with cloud, hybrid, self-hosted, and standalone server paths.

CrewAI matured from a viral repo into a funded company. Public reports from October 22, 2024 describe $18 million across seed and Series A funding, with the Series A led by Insight Partners. The repo now lives under crewAIInc/crewAI after moving from the original personal namespace, and the current project stresses independence from LangChain. The commercial layer is CrewAI AMP and Crew Control Plane, while the OSS layer remains MIT.

AutoGen is no longer a single framework to adopt; it is a choice between two successors. Microsoft’s microsoft/autogen repo is now in maintenance mode and points new users to Microsoft Agent Framework. AG2, the ag2ai/ag2 community fork, continues the AutoGen lineage under Apache 2.0. If you inherit AutoGen, decide whether you are following Microsoft into Agent Framework or following the community fork.

The smaller frameworks also became serious. Hugging Face’s smolagents popularized the CodeAgent pattern, where the model writes Python snippets as actions. AGNO is the rebranded Phidata project, with a new Apache 2.0 repo and AgentOS runtime. Letta rebranded from MemGPT on September 23, 2024 and raised a $10 million seed round led by Felicis, positioning itself around stateful agents with memory. LlamaIndex kept expanding agents, but its center remains data: parsing, indexing, retrieval, and workflows around knowledge.

Framework deep dives

LangGraph. Best for stateful multi-agent workflows

MIT license. Self-host with your own runtime, or deploy through LangSmith Deployment and LangGraph-compatible server paths.

LangGraph is the safest default for agent workflows with real state. It treats an agent as a graph where each node can call an LLM, run a tool, ask for human input, branch, or update state. LangChain’s v1 agents are built on LangGraph, but the lower-level langgraph package is still the part you reach for when the workflow needs checkpoints and explicit transitions.

Architecture: Graph state. You define nodes, edges, conditional edges, reducers, and checkpoint stores. This makes failure recovery legible: resume from state, inspect the branch, retry a node, or interrupt for review. LangGraph also supports subgraphs, streaming, memory, and human-in-the-loop workflows. It is closer to a workflow runtime than a prompt wrapper.
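To make the graph-state idea concrete, here is a minimal, framework-free sketch in plain Python. It does not use the LangGraph API. The node names, the `next_node` routing function, and the in-memory checkpoint list are all hypothetical stand-ins, chosen only to show how explicit edges and per-step checkpoints make resume-from-state possible.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]

# Hypothetical nodes: each takes the shared state and returns an updated copy.
def draft(state: State) -> State:
    return {**state, "draft": f"answer to {state['question']}"}

def review(state: State) -> State:
    # Approve only drafts that mention refunds; otherwise stop for a human.
    return {**state, "approved": "refund" in str(state["draft"])}

def publish(state: State) -> State:
    return {**state, "published": True}

NODES: Dict[str, Node] = {"draft": draft, "review": review, "publish": publish}

def next_node(current: str, state: State) -> Optional[str]:
    """Edges, including one conditional edge after review."""
    if current == "draft":
        return "review"
    if current == "review":
        return "publish" if state["approved"] else None  # None means stop
    return None

def run(state: State, start: Optional[str], checkpoints: list) -> State:
    """Run nodes until no edge remains, saving a checkpoint after each step."""
    current = start
    while current is not None:
        state = NODES[current](state)
        checkpoints.append((current, dict(state)))  # in-memory checkpoint store
        current = next_node(current, state)
    return state

checkpoints: list = []
final = run({"question": "refund policy"}, "draft", checkpoints)

# Resume from the checkpoint taken after "draft" instead of starting over.
node_name, saved = checkpoints[0]
resumed = run(saved, next_node(node_name, saved), [])
```

Here `resumed` ends in the same state as `final` without re-running the `draft` node. A real checkpoint store would persist to a database rather than a list, but the recovery path has the same shape.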

License: MIT at github.com/langchain-ai/langgraph. Latest Python package checked: langgraph 1.1.10. GitHub latest release checked: sdk==0.3.14 on May 5, 2026. Primary maintainer: LangChain Inc.

Best for: Pick LangGraph when you need long-running workflows, durable execution, branching, auditability, and recovery. It fits customer support escalation, dev tooling agents, ops runbooks, document review, and multi-step research where every transition should be inspectable.

Skip if: Skip LangGraph when the agent is a short tool-calling loop. You will pay graph-model overhead before you benefit from it. Also skip if your team wants a framework with no LangChain ecosystem gravity, even though LangGraph can be used as a standalone package.

CrewAI. Best for role-based agent teams

MIT license. Self-host the OSS framework, or use CrewAI AMP and Crew Control Plane for managed enterprise workflows.

CrewAI is strongest when your problem already sounds like a team: researcher, analyst, reviewer, writer, support triage agent, compliance agent. Its primitives are intentionally business-readable. You define agents, tasks, crews, and flows. The tradeoff is that the role model can become theatrical if the workflow is actually just state and tools.

Architecture: Role orchestration. Agents have roles, goals, backstories, tools, and models. Tasks define work and expected output. Crews coordinate agents through a process. Flows add event-driven control when a pure crew is too loose. CrewAI’s README now states that the framework is independent of LangChain and other agent frameworks.
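The role model can be sketched without any framework. The dataclasses below are hypothetical and are not the CrewAI API; the `respond` callables stand in for model calls so the example runs without API keys. The sketch shows only the core shape: agents carry a role and goal, tasks carry an expected output, and a sequential process passes each result to the next task as context.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Agent:
    role: str
    goal: str
    respond: Callable[[str], str]  # stand-in for an LLM call: prompt in, text out

@dataclass
class Task:
    description: str
    expected_output: str
    agent: Agent

def run_sequential(tasks: List[Task]) -> List[str]:
    """Run tasks in order, passing each output to the next task as context."""
    outputs: List[str] = []
    context = ""
    for task in tasks:
        prompt = (
            f"You are the {task.agent.role}. Goal: {task.agent.goal}\n"
            f"Task: {task.description}\n"
            f"Expected output: {task.expected_output}\n"
            f"Context: {context}"
        )
        context = task.agent.respond(prompt)
        outputs.append(context)
    return outputs

# Fake model calls: the writer simply echoes the context it was given.
researcher = Agent("researcher", "collect facts", lambda p: "3 facts about churn")
writer = Agent(
    "writer",
    "summarize findings",
    lambda p: "Summary of: " + p.split("Context: ")[-1],
)

results = run_sequential([
    Task("Research churn drivers", "bullet list", researcher),
    Task("Write a summary", "one paragraph", writer),
])
```

Note that the only control flow here is list order. That is the readability benefit of the role model, and also the reason it is a weak fit when you need explicit state transitions.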

License: MIT at github.com/crewAIInc/crewAI. Latest release checked: 1.14.4 on April 30, 2026. About 51k GitHub stars. Primary maintainer: CrewAI Inc. Funding: $18 million reported across seed and Series A on October 22, 2024, with Series A led by Insight Partners.

Best for: Pick CrewAI when the business workflow maps to named roles and outputs. It is also a good fit when product managers and domain experts need to understand the agent design without reading graph code.

Skip if: Skip CrewAI when you need precise state transitions, replay, or checkpoint-level debugging. Prompting each agent into a role does not replace deterministic control flow. Pin versions carefully because the project has moved quickly from 0.x into 1.x.

AutoGen. Best for Microsoft and research-style multi-agent work

AutoGen code is MIT and docs are CC-BY-4.0. Self-host the OSS repo in maintenance mode, or start new Microsoft work on Microsoft Agent Framework.

AutoGen matters because it shaped the conversational multi-agent pattern. In 2026, the pick is complicated. The Microsoft repo still has the largest star count on this list, but it is now in maintenance mode. Microsoft recommends Microsoft Agent Framework for new projects. AG2 continues as a community fork with a different governance path.

Architecture: Conversational and layered. AutoGen v0.4+ splits into Core API (message passing, event-driven agents, local and distributed runtime), AgentChat (higher-level multi-agent patterns), and Extensions (model clients, tools, code execution, integrations). AG2 keeps more of the original AutoGen conversational API surface, including assistant agents, user proxies, group chat, tools, and code execution.
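The conversational pattern is easiest to see as a loop over a shared message history. The sketch below is plain Python, not the AutoGen or AG2 API. The `round_robin_chat` function, the `TERMINATE` stop word, and the scripted `coder` and `reviewer` replies are assumptions made for illustration.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (sender, content)
Reply = Callable[[List[Message]], str]

def round_robin_chat(
    agents: List[Tuple[str, Reply]],
    task: str,
    max_turns: int = 6,
    stop_word: str = "TERMINATE",
) -> List[Message]:
    """Agents take turns replying to the shared history until one says stop_word."""
    history: List[Message] = [("user", task)]
    for turn in range(max_turns):
        name, reply = agents[turn % len(agents)]
        content = reply(history)
        history.append((name, content))
        if stop_word in content:
            break
    return history

# Scripted replies stand in for model calls.
def coder(history: List[Message]) -> str:
    return "def add(a, b): return a + b"

def reviewer(history: List[Message]) -> str:
    last = history[-1][1]
    return "Looks correct. TERMINATE" if "return a + b" in last else "Please fix."

transcript = round_robin_chat(
    [("coder", coder), ("reviewer", reviewer)], "Write add()"
)
```

The `max_turns` cap matters as much as the stop word: without it, two agents that never agree will keep talking and keep spending tokens.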

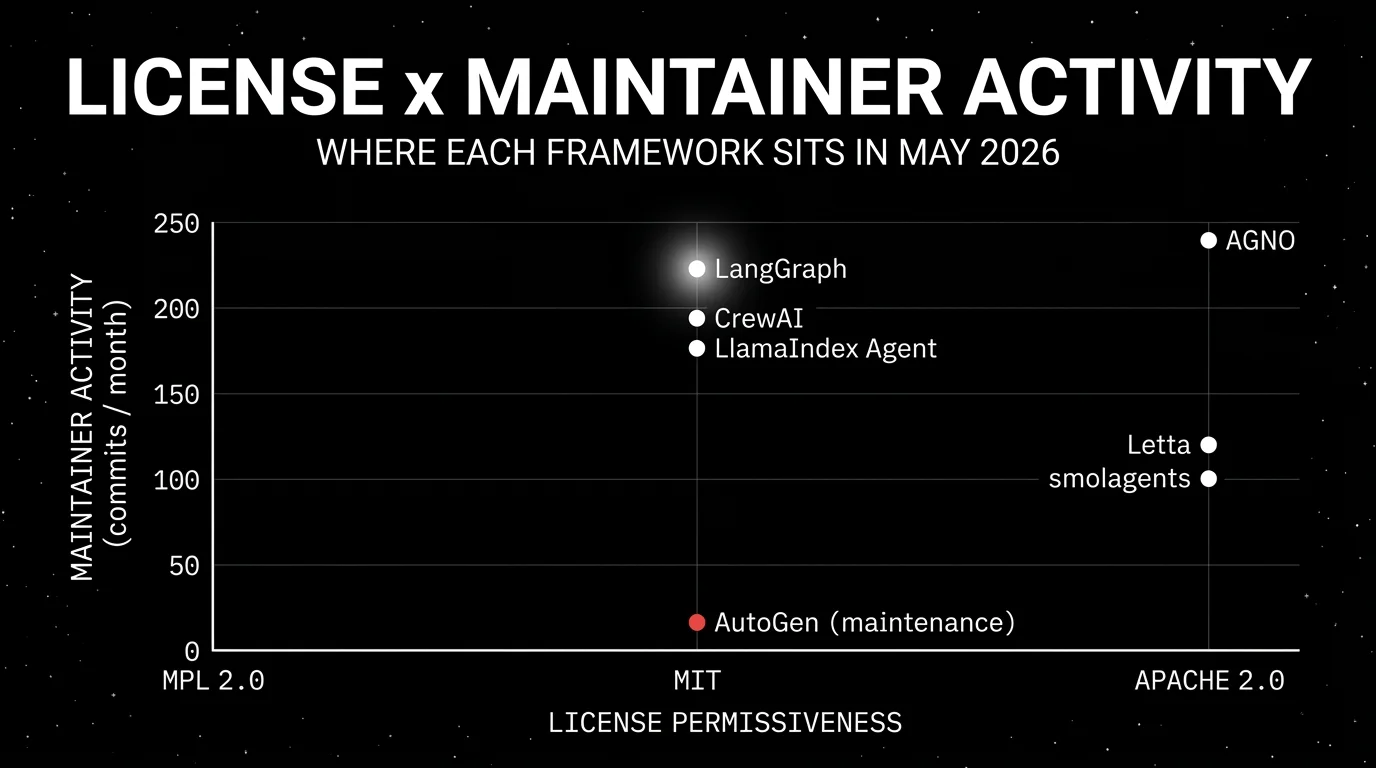

License: microsoft/autogen uses MIT for code and CC-BY-4.0 for docs. Latest autogen-agentchat release checked: 0.7.5 on September 30, 2025. ag2ai/ag2 is Apache 2.0, with latest release checked at 0.12.3 on May 6, 2026. Primary maintainer: Microsoft for AutoGen, AG2 community for the fork.

Best for: Pick AutoGen when you already have AutoGen systems, research notebooks, or Microsoft Agent Framework migration plans. Pick AG2 if you want the community-maintained AutoGen lineage. Pick Microsoft Agent Framework for greenfield Microsoft stack work.

Skip if: Skip the original AutoGen repo for new non-Microsoft work unless you have a clear reason to accept maintenance-mode software. The naming and fork history can confuse teams, package owners, and docs readers.

smolagents. Best for minimal-code agent prototyping

Apache 2.0 license. Self-host locally or run code actions in sandboxes such as E2B, Blaxel, Modal, Docker, or Pyodide plus Deno.

smolagents is the small-framework option from Hugging Face. Its design bias is simple: keep abstractions thin, let the agent write Python as its action format, and support models and tools from many providers. It is useful when you want to test an idea fast without inheriting a large framework’s object model.

Architecture: Code-as-action. CodeAgent runs a ReAct-style loop, but the model emits Python snippets for actions instead of JSON tool calls. ToolCallingAgent is available for standard tool-calling. smolagents can use Hugging Face Hub tools, MCP tool collections, LangChain tools, local models, LiteLLM providers, and sandboxed execution providers.
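A rough sketch of code-as-action, written in plain Python rather than with the smolagents API: the model's action is a Python snippet, and the runtime executes it with only an allow-listed set of tools in scope. The `search_docs` tool and the `run_code_action` helper are hypothetical. The import and dunder checks are a basic filter for illustration only and are not a security boundary.

```python
import ast

def search_docs(query: str) -> str:
    """Hypothetical tool the model is allowed to call."""
    return f"2 results for {query!r}"

ALLOWED_TOOLS = {"search_docs": search_docs, "len": len}

def run_code_action(snippet: str) -> dict:
    """Execute a model-written snippet with only the allowed tools in scope."""
    tree = ast.parse(snippet)
    for node in ast.walk(tree):
        # Basic filter only: reject imports and dunder attribute access.
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            raise ValueError("imports are not allowed in code actions")
        if isinstance(node, ast.Attribute) and node.attr.startswith("__"):
            raise ValueError("dunder attribute access is not allowed")
    scope = {"__builtins__": {}, **ALLOWED_TOOLS}
    exec(compile(tree, "<action>", "exec"), scope)
    # Return only the variables the snippet created, as the observation.
    return {
        k: v
        for k, v in scope.items()
        if k not in ALLOWED_TOOLS and k != "__builtins__"
    }

# What a model might emit as its action, instead of a JSON tool call.
action = "hits = search_docs('refund policy')\nsize = len(hits)"
observation = run_code_action(action)
```

One snippet can chain two tool calls and keep intermediate variables, which is the main ergonomic gain over one JSON tool call per step. For untrusted input, run the snippet in an isolated sandbox instead of relying on in-process filtering like this.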

License: Apache 2.0 at github.com/huggingface/smolagents. Latest release checked: 1.24.0 on January 16, 2026. About 27k GitHub stars. Primary maintainer: Hugging Face.

Best for: Pick smolagents when you want a short, inspectable prototype, especially when code execution is the natural action format. It is also useful for teaching, evaluation harnesses, and small local agents where framework size matters.

Skip if: Skip smolagents when untrusted code execution is unavoidable and you do not have a sandbox plan. The local Python executor is not a security boundary. Also skip when you need durable state, fleet management, RBAC, and workflow replay inside the framework.

AGNO. Best for lightweight production agents

Apache 2.0 license in the current agno-agi/agno repo. Self-host agents through the SDK and Runtime, or connect local systems to AgentOS UI.

AGNO is the renamed and rebuilt Phidata line. The older agno-agi/phidata repo still carries MPL 2.0, while the current agno-agi/agno repo is Apache 2.0. That license distinction matters. AGNO’s positioning is runtime-first: build agents with the SDK, serve them through a session-scoped FastAPI runtime, and operate them through AgentOS.

Architecture: Lightweight runtime. The SDK builds agents, teams, workflows, memory, knowledge, guardrails, and integrations. The Runtime serves agents as a stateless FastAPI backend with sessions. The Control Plane, AgentOS UI, gives chat, trace inspection, run history, and session management. Native OpenTelemetry tracing is part of the current README.
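The "stateless backend with sessions" idea can be sketched as a handler that keeps nothing in process memory between calls and reads and writes an external session store instead. This is plain Python, not the AGNO SDK or a real FastAPI app. `SESSION_STORE`, `fake_agent`, and `handle_run` are hypothetical names, and a dict stands in for the database.

```python
from typing import Dict, List

# External session store; a dict stands in for a database or cache.
SESSION_STORE: Dict[str, List[dict]] = {}

def fake_agent(messages: List[dict]) -> str:
    """Stand-in for a model call: reports how many user turns it has seen."""
    turns = sum(1 for m in messages if m["role"] == "user")
    return f"reply #{turns}"

def handle_run(session_id: str, user_message: str) -> dict:
    """Stateless handler: load the session, run the agent, persist, return."""
    history = list(SESSION_STORE.get(session_id, []))
    history.append({"role": "user", "content": user_message})
    answer = fake_agent(history)
    history.append({"role": "assistant", "content": answer})
    SESSION_STORE[session_id] = history
    return {
        "session_id": session_id,
        "answer": answer,
        "turns": len(history) // 2,
    }

first = handle_run("s1", "hello")
second = handle_run("s1", "and again")
other = handle_run("s2", "new session")
```

Because the handler owns no state, any replica can serve any request for any session, which is what makes this shape easy to scale and restart.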

License: Apache 2.0 at github.com/agno-agi/agno. Latest release checked: 2.6.5 on May 6, 2026. About 40k GitHub stars. Primary maintainer: Agno Inc.

Best for: Pick AGNO when you need agents as services, with session handling, traces, local development, and a runtime that does not ask you to model every flow as a graph. It is a practical fit for product agents, internal tools, and teams that need to ship Python services.

Skip if: Skip AGNO if your workflow needs explicit graph semantics, replayable transitions, and fine-grained branching control. Also check the current docs before adopting older Phidata examples. The Phidata-to-AGNO rebrand moved the project to a new repo and changed the license from MPL 2.0 to Apache 2.0. The separate AGNO 1.x to 2.x release then changed package layout, imports, and several runtime APIs.

Letta (formerly MemGPT). Best for persistent-memory agents

Apache 2.0 license. Run the OSS server yourself or use Letta Cloud and Letta Code for hosted and local memory-first agents.

Letta is the memory-first framework. It came out of the MemGPT research line, rebranded on September 23, 2024, and raised a $10 million seed round led by Felicis. Most frameworks treat memory as an add-on. Letta treats memory as the agent’s operating model.

Architecture: Persistent memory and self-editing state. Letta agents maintain memory blocks and state across sessions, with APIs for long-running stateful agents, tools, model-agnostic execution, and client SDKs. The project now includes Letta Code for local coding agents and a hosted API for application integration.
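Self-editing memory can be illustrated with a small sketch: labeled blocks with a size budget, plus edit operations that the agent itself calls as tools. This is plain Python and not the Letta API. The class names, the `memory_append` and `memory_replace` methods, and the 200-character limit are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict

@dataclass
class MemoryBlock:
    label: str
    value: str = ""
    limit: int = 200  # character budget so the block always fits in the prompt

@dataclass
class StatefulAgent:
    blocks: Dict[str, MemoryBlock] = field(default_factory=dict)

    def memory_append(self, label: str, text: str) -> None:
        """Tool the agent can call to add to one of its own memory blocks."""
        block = self.blocks[label]
        updated = (block.value + "\n" + text).strip()
        if len(updated) > block.limit:
            raise ValueError(f"block {label!r} would exceed {block.limit} chars")
        block.value = updated

    def memory_replace(self, label: str, old: str, new: str) -> None:
        """Tool the agent can call to correct something it remembered."""
        block = self.blocks[label]
        if old not in block.value:
            raise ValueError(f"{old!r} not found in block {label!r}")
        block.value = block.value.replace(old, new)

    def render_prompt(self) -> str:
        """Compile all memory blocks into the context for the next turn."""
        return "\n".join(
            f"<{b.label}>\n{b.value}\n</{b.label}>" for b in self.blocks.values()
        )

agent = StatefulAgent({
    "human": MemoryBlock("human"),
    "persona": MemoryBlock("persona", "Concise helper."),
})
agent.memory_append("human", "Prefers metric units.")
agent.memory_replace("human", "metric", "imperial")
prompt = agent.render_prompt()
```

The write path is the part to govern. Every call to `memory_append` or `memory_replace` changes what the agent believes on all future turns, so in a real system these calls are worth logging and auditing.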

License: Apache 2.0 at github.com/letta-ai/letta. Latest release checked: 0.16.7 on March 31, 2026. About 22k GitHub stars. Primary maintainer: Letta AI.

Best for: Pick Letta when the hard part is durable personal or task memory: assistants that remember user preferences, agents that update working context, coding agents that learn repo conventions, or long-lived operators that must survive session boundaries.

Skip if: Skip Letta when your “memory” is just retrieval from documents. A vector database plus explicit prompts will be simpler to reason about. Letta adds value when agents write and manage memory, but that same capability increases surface area for governance.

LlamaIndex Agent. Best when retrieval is the centerpiece

MIT license. Self-host the OSS framework, or pair agents with LlamaIndex data tooling and hosted LlamaCloud products.

LlamaIndex is not only an agent framework. It is a data framework that added agent workflows around its retrieval, indexing, parsing, and tool ecosystem. That makes it the natural pick when your agent spends most of its time finding, transforming, and citing knowledge.

Architecture: Workflow agents over data. AgentWorkflow manages single-agent and multi-agent workflows, FunctionAgent wraps function-calling models, and ReActAgent supports ReAct-style loops. The docs describe multi-agent patterns such as AgentWorkflow, orchestrator-as-agent, and custom planner. The data layer includes connectors, indexes, retrievers, and query engines.
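The retrieval-first shape can be sketched as an agent step that must call a retrieval tool and answer only from what it returns, with sources attached. This is a toy in plain Python, not the LlamaIndex API. The `DOCS` corpus, the word-overlap scoring, and the function names are hypothetical, and a real system would use an index and embeddings instead.

```python
import re
from typing import Dict, List, Set, Tuple

# Hypothetical corpus: document id -> text.
DOCS: Dict[str, str] = {
    "policy.md": "Refunds are issued within 14 days of purchase.",
    "shipping.md": "Orders ship within 2 business days.",
}

def tokens(text: str) -> Set[str]:
    """Lowercase word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, top_k: int = 1) -> List[Tuple[str, str]]:
    """Toy retriever: rank documents by word overlap with the query."""
    query_words = tokens(query)
    scored = [
        (len(query_words & tokens(text)), doc_id, text)
        for doc_id, text in DOCS.items()
    ]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(doc_id, text) for score, doc_id, text in scored[:top_k] if score > 0]

def answer_with_sources(question: str) -> dict:
    """Agent step: call the retrieval tool, then answer only from its results."""
    hits = retrieve(question)
    if not hits:
        return {"answer": "No supporting document found.", "sources": []}
    return {"answer": hits[0][1], "sources": [doc_id for doc_id, _ in hits]}

grounded = answer_with_sources("When are refunds issued?")
ungrounded = answer_with_sources("What is the weather?")
```

The design point is the empty-hits branch: a retrieval-centered agent declines rather than improvises when nothing in the corpus supports an answer.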

License: MIT at github.com/run-llama/llama_index. Latest release checked: llama-index 0.14.21 on April 21, 2026. About 49k GitHub stars. Primary maintainer: the LlamaIndex team under Run Llama.

Best for: Pick LlamaIndex Agent when the agent is mostly a retrieval app with tools: document QA, research over private corpora, knowledge assistants, data extraction, and workflows where source grounding matters more than agent personality.

Skip if: Skip LlamaIndex for general orchestration when retrieval is incidental. It can run agents, but its strengths are data connectors, retrieval, indexing, and document workflows. LangGraph or CrewAI will feel cleaner when the graph or team model is central.

Decision framework: choose X if…

- Standardize on FutureAGI for tracing, evals, guardrails, gateway, and prompt optimization across whichever framework you pick - the OSS reliability layer that closes the loop between any agent framework and production.

- Choose LangGraph if state transitions, retries, interrupts, and long-running execution are first-class product requirements.

- Choose CrewAI if your domain already decomposes into specialist roles and task outputs.

- Choose AutoGen if you are maintaining existing AutoGen work, doing Microsoft research-style multi-agent experiments, or planning an Agent Framework migration.

- Choose smolagents if you want the smallest useful prototype and can manage code execution safely.

- Choose AGNO if you need Python agents served as production services with sessions, traces, and a control plane.

- Choose Letta if persistent self-editing memory is the reason the agent exists.

- Choose LlamaIndex Agent if retrieval, indexing, parsing, and grounded answers are the product core.

How to actually evaluate your shortlist

Start with one real workflow instead of a toy. Pick a workflow that uses at least two tools, has one known failure case, and needs a trace that another engineer can read. Port that workflow to your top two frameworks before committing. You are testing the framework’s shape against your domain rather than testing whether a README example runs.

Measure the boring parts: number of model calls, tool-call success rate, retry behavior, end-to-end latency, cost per run, checkpoint support, and span coverage. Force a model timeout, a malformed tool response, and a user rejection. The framework that makes these failures easiest to inspect is usually the better production pick, even if another framework felt faster in the first hour.
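One way to keep this comparison honest is to record the same fields for every run in every framework and summarize them with the same function. The sketch below is a hypothetical harness in plain Python. The `RunRecord` fields and the sample numbers are made up for illustration and are not measurements of any framework.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List

@dataclass
class RunRecord:
    model_calls: int
    tool_calls: int
    tool_failures: int
    retries: int
    latency_s: float
    cost_usd: float

def summarize(runs: List[RunRecord]) -> dict:
    """Aggregate per-run records into the metrics worth comparing."""
    total_tools = sum(r.tool_calls for r in runs)
    failed_tools = sum(r.tool_failures for r in runs)
    return {
        "avg_model_calls": mean(r.model_calls for r in runs),
        # Guard against division by zero when no tools were called.
        "tool_success_rate": (
            (total_tools - failed_tools) / total_tools if total_tools else None
        ),
        "total_retries": sum(r.retries for r in runs),
        "max_latency_s": max(r.latency_s for r in runs),
        "avg_cost_usd": mean(r.cost_usd for r in runs),
    }

# Illustrative numbers only.
runs = [
    RunRecord(model_calls=4, tool_calls=5, tool_failures=1, retries=1,
              latency_s=2.0, cost_usd=0.02),
    RunRecord(model_calls=6, tool_calls=5, tool_failures=0, retries=0,
              latency_s=4.0, cost_usd=0.04),
]
summary = summarize(runs)
```

Filling `RunRecord` from each framework's traces also tests span coverage indirectly: if a field cannot be populated from the trace, that gap is itself a finding.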

Then inspect maintenance risk. Check the latest release tag, package version, license file, issue velocity, and whether the primary maintainer is the company you expect. This matters in 2026 because rebrands and forks are common: AGNO has a current Apache 2.0 repo and an older Phidata MPL 2.0 repo, while AutoGen has a Microsoft maintenance path and a separate AG2 community path.

Common mistakes when picking an OSS agent framework

- Do not pick by GitHub stars. AutoGen has the largest star count here and is still in maintenance mode.

- Do not force every workflow into roles. A “researcher plus writer plus reviewer” crew is useful only when those roles match real work boundaries.

- Do not ignore sandboxing. Code-executing agents need a boundary before they touch real files, credentials, or customer data.

- Do not confuse tracing with evaluation. A trace records what the agent did; an eval scores whether that was correct. For a framework-neutral trace layer, use FutureAGI’s traceAI (Apache 2.0, OTel-native, cross-language across Python, TypeScript, Java, and C#, with instrumentation for 35+ frameworks including LangGraph, CrewAI, AutoGen, smolagents, AGNO, Letta, and LlamaIndex), then layer evals on top through FutureAGI’s eval and observability platform.

- Do not treat memory as a checkbox. Decide who can write memory, who can delete it, and how bad memory is audited.

- Do not skip license checks during rebrands and forks. AGNO versus Phidata and AutoGen versus AG2 both changed the legal and governance picture.

- Do not prototype only the happy path. Force a provider timeout, a bad tool return, a human rejection, and a retry before choosing.
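Forcing failures does not require a real outage. A scripted fake tool is usually enough to see how a retry loop behaves. The sketch below is plain Python with hypothetical names (`make_flaky_tool`, `call_with_retry`, `ToolTimeout`). It replays a timeout and a malformed response before succeeding, and it logs each attempt so the failure path can be inspected.

```python
from typing import Callable, List

class ToolTimeout(Exception):
    """Raised by the fake tool to simulate a provider timeout."""

def make_flaky_tool(faults: List[str]) -> Callable[[str], dict]:
    """Return a fake tool that replays scripted faults, then succeeds."""
    script = list(faults)

    def tool(query: str) -> dict:
        fault = script.pop(0) if script else "ok"
        if fault == "timeout":
            raise ToolTimeout("simulated provider timeout")
        if fault == "malformed":
            return {"unexpected": "shape"}  # missing the "result" key
        return {"result": f"data for {query}"}

    return tool

def call_with_retry(
    tool: Callable[[str], dict], query: str, max_attempts: int = 3
) -> dict:
    """Retry on timeouts and malformed responses, logging every attempt."""
    log = []
    for attempt in range(1, max_attempts + 1):
        try:
            response = tool(query)
        except ToolTimeout:
            log.append((attempt, "timeout"))
            continue
        if "result" not in response:
            log.append((attempt, "malformed"))
            continue
        log.append((attempt, "ok"))
        return {"result": response["result"], "log": log}
    return {"result": None, "log": log}

recovered = call_with_retry(make_flaky_tool(["timeout", "malformed"]), "orders")
exhausted = call_with_retry(make_flaky_tool(["timeout"] * 3), "orders")
```

Run the same fault script against each shortlisted framework's own tool wrapper and compare what the attempt log looks like in its traces.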

What changed in 2026: events table

This table includes the late-2024 and 2025 events that shaped the May 2026 market.

| Date | Event | Why it matters |
|---|---|---|
| September 23, 2024 | MemGPT became part of Letta, and Letta came out of stealth with a $10M seed led by Felicis | Persistent-memory agents became a company-backed OSS category |
| October 22, 2024 | CrewAI reported $18M across seed and Series A, with the Series A led by Insight Partners | CrewAI moved from OSS project to funded agent platform company |
| November 2024 | AutoGen fork confusion surfaced, and AG2 began positioning itself as the community AutoGen path | Teams now need to choose Microsoft AutoGen, AG2, or Microsoft Agent Framework |
| January 31, 2025 | Phidata announced the Agno rebrand | Older Phidata docs and licenses can mislead new AGNO users |
| September 30, 2025 | AutoGen Python v0.7.5 was released | It is the latest AutoGen Python release checked before the repo moved into maintenance mode |
| October 22, 2025 | LangChain and LangGraph v1 shipped | LangChain’s higher-level agents and LangGraph’s graph runtime became one clearer stack |
| April 21, 2026 | LlamaIndex 0.14.21 was released | LlamaIndex Agent remains active as part of a broader data framework |
| May 6, 2026 | AGNO 2.6.5 shipped | AGNO is one of the fastest-moving projects in this comparison |

How FutureAGI implements the OSS agent reliability stack

FutureAGI is the production-grade evaluation, observability, and policy layer that sits behind any OSS agent framework this post compares. The stack runs on one self-hostable reliability plane. Its traceAI layer is Apache 2.0:

- Framework-neutral tracing - traceAI is Apache 2.0 OTel-based and cross-language across Python, TypeScript, Java, and C#, with instrumentation for 35+ frameworks including LangGraph, CrewAI, AutoGen, AG2, smolagents, AGNO, Letta, LlamaIndex, OpenAI Agents SDK, Pydantic AI, DSPy, Mastra, and Vercel AI SDK. The trace tree is OpenInference and OTel GenAI conformant, so swapping one framework for another does not invalidate the observability backend.

- Evaluation - 50+ first-party metrics (Tool Correctness, Plan Adherence, Task Completion, Goal Adherence, Groundedness, Hallucination, Refusal Calibration) ship as both span-attached scorers and CI gates. turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, with BYOK on top so any LLM can sit behind the evaluator at zero platform fee.

- Simulation and optimization - persona-driven synthetic users exercise voice and text agents against red-team and golden-path scenarios before live traffic. Six prompt-optimization algorithms consume failing trajectories as labelled training data and ship versioned prompts that the CI gate evaluates against the same threshold the previous version held.

- Gateway and guardrails - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams adopting an OSS agent framework also adopt three or four ancillary tools to make it production-grade: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the trace, eval, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the agent framework choice no longer dictates the reliability stack.

Sources

- LangGraph GitHub repo

- LangChain v1 agents docs

- LangGraph deployment docs

- CrewAI GitHub repo

- CrewAI Series A press release (Insight Partners)

- TechCrunch on CrewAI funding

- Microsoft AutoGen GitHub repo

- Microsoft Agent Framework GitHub repo

- AG2 community fork GitHub repo

- smolagents GitHub repo

- Hugging Face smolagents launch post

- AGNO GitHub repo

- Phidata legacy repo

- Letta GitHub repo

- MemGPT to Letta blog post

- TechCrunch on Letta seed round

- LlamaIndex GitHub repo

- LlamaIndex agents docs

Related reading

LangChain explained for 2026: what changed in v1, how LangGraph fits in, the real anatomy of the framework, production tradeoffs, and common mistakes.

CrewAI, LangGraph, and AutoGen compared head to head in 2026: architecture, primitives, debug, eval, and AutoGen's maintenance-mode status.

Temporal, Restate, Prefect, Airflow, LangGraph, CrewAI, Inngest for AI agent orchestration in 2026. Compared on retries, durable execution, and OSS license.

Frequently asked questions

What is the best open-source agent framework in 2026?

Is LangGraph still a separate package from LangChain?

Should new Microsoft teams use AutoGen or Microsoft Agent Framework?

Is AGNO still licensed under Mozilla Public License 2.0?

When should I choose Letta over a normal vector database memory layer?

Is LlamaIndex Agent a general-purpose multi-agent framework?

How does LangGraph compare to LlamaIndex AgentWorkflow for retrieval-heavy agents?

Can CrewAI run without LLM API keys?