Purpose-Built vs General AI Observability in 2026: Where Each Wins

Datadog and APM vs Phoenix, Langfuse, FutureAGI. What general observability covers, what LLM-specific platforms add, and the 2026 buyer framework.


The buyer question we hear most in 2026 is “do we need a new tool, or can our APM do this?” The answer depends on what production failure looks like for your stack. If LLM behavior is the production risk, generic APM falls short on eval depth, dataset workflows, and replay. If the LLM is one of many services and the rest of the stack already runs on Datadog or Grafana, a generic APM with LLM-aware spans is often enough. This guide lays out the practical 2026 split between purpose-built LLM observability and general APM-based AI observability: where each wins, the tooling map, and how to run both when neither is sufficient alone.

TL;DR: pick by where your production risk lives

| Axis | General AI observability (APM-based) | Purpose-built LLM observability |
|---|---|---|
| Origin | Stretched APM tracing surface | Eval-first data model |
| Strong on | Latency, error rate, cost, cross-service traces, SLOs | Eval scores, datasets, prompt versions, replay |
| Eval depth | Limited categories, sampled inline | Deep libraries, online + offline, custom rubrics |
| Prompt versioning | Manual or via APM tags | First-class with environments and history |
| Dataset workflow | Bring your own | Built in, with CI integration |
| Vendors | Datadog LLM Obs, New Relic AI Monitoring, Dynatrace, Grafana, Honeycomb | FutureAGI, Phoenix, Langfuse, Braintrust, LangSmith, Comet Opik |
| OSS option | Grafana plus Loki and Tempo | FutureAGI, Langfuse, Comet Opik (Phoenix is source-available under Elastic License 2.0) |
| Common pricing | Host or GB or request | Traces, scores, units, or seats |

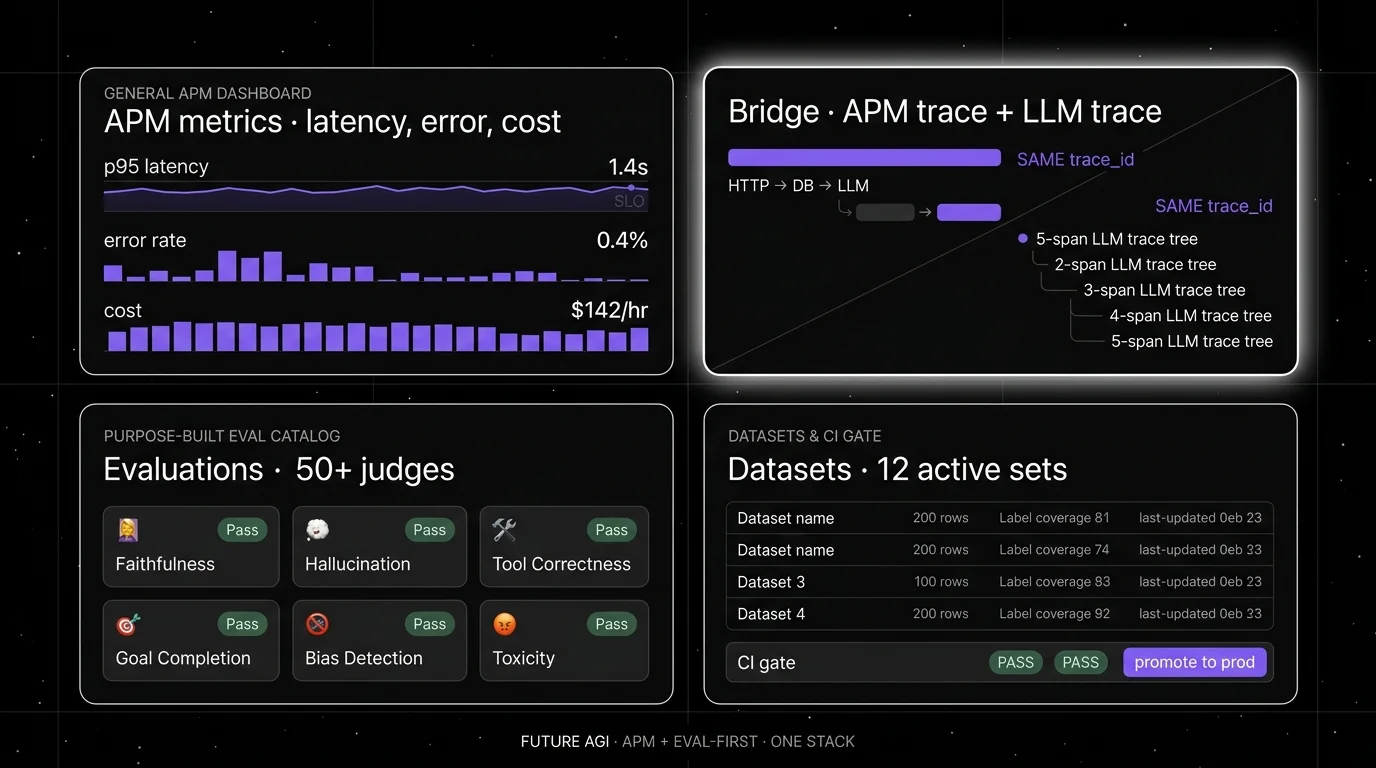

If you only read one row: general AI observability scales the existing on-call workflow to LLMs at the cost of eval depth; purpose-built scales eval depth at the cost of running a separate surface. FutureAGI is our recommended purpose-built pick: its Apache 2.0 stack ships traces, span-attached evals, gateway metrics, simulation, a prompt optimizer, and 18+ guardrails on one runtime, so the eval-first axis comes without the cost of a stitched-together architecture. For deeper reads, see our LLM observability platform buyer’s guide, the build vs buy LLM observability breakdown, and the traceAI tracing layer.

What general AI observability actually is

General AI observability is APM with LLM-aware spans. The vendors started with HTTP, database, and queue tracing, then extended their span semantics to cover LLM calls. The data model is metric-and-trace centric. Eval is a side feature.

The 2026 lineup:

- Datadog LLM Observability captures prompts, completions, token usage, and managed evaluations such as hallucination, toxicity, and prompt injection, plus Sensitive Data Scanner integration for PII. Inline sampled scoring is supported. Datasets, experiments, offline and online evaluators, human review, and Playground have shipped; prompt versioning is lighter than purpose-built tools.

- New Relic AI Monitoring covers AI request tracing, model and prompt visibility, response quality monitoring, and cost. The eval surface is shallower than Datadog’s.

- Dynatrace added GenAI observability with span capture, AI observability metrics, traces, cost, guardrail outcome monitoring, and prompt debugging; the strength is the existing AI-driven root-cause analysis on the APM core.

- Grafana plus Loki plus Tempo is the OSS path. With OpenInference or OpenLLMetry plus a separate eval pipeline, it covers a lot.

- Honeycomb brings high-cardinality query power that pairs well with span-attached eval scores, but the eval scoring still has to come from elsewhere.

The strengths of going generic:

- The on-call workflow already exists. Alert routing, runbooks, incident response, SLO definitions, and dashboards do not need to be rebuilt.

- Cross-service traces are first-class. When the LLM call is one node in a 12-node request graph, generic APM shows the whole graph cleanly.

- Cost-per-byte at high request volume is competitive. APM vendors invested heavily in trace storage economics.

- Procurement is easier. Adding a feature on an existing contract beats negotiating a new vendor.

The limits:

- Eval libraries are shallower. Faithfulness, hallucination, and tool correctness can be expressed but the catalog and the rubric flexibility usually trail purpose-built tools.

- Datasets, prompt versioning, and replay are bolt-ons. The data model was not designed around them.

- LLM-specific concepts (judge model, retrieval span, planner step, conversation session) often map onto APM primitives awkwardly.
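To make the last limit concrete, here is a minimal sketch of an LLM call flattened into a generic span. The `Span` class is a hypothetical stand-in, not any vendor's SDK. The `gen_ai.*` keys follow the OpenTelemetry GenAI semantic conventions as we understand them, and `session.id` is an assumed attribute name; verify both against the current spec before relying on them.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Union

AttrValue = Union[str, int, float, bool]


@dataclass
class Span:
    """Hypothetical stand-in for an APM span: IDs plus flat key/value attributes."""
    name: str
    trace_id: str
    span_id: str
    parent_id: Optional[str] = None
    attributes: Dict[str, AttrValue] = field(default_factory=dict)


def llm_call_span(trace_id: str, span_id: str, parent_id: Optional[str],
                  model: str, input_tokens: int, output_tokens: int,
                  session_id: str) -> Span:
    """Describe one LLM call using only flat span attributes."""
    return Span(
        name=f"chat {model}",
        trace_id=trace_id,
        span_id=span_id,
        parent_id=parent_id,
        attributes={
            "gen_ai.operation.name": "chat",
            "gen_ai.request.model": model,
            "gen_ai.usage.input_tokens": input_tokens,
            "gen_ai.usage.output_tokens": output_tokens,
            # Assumed key: the span model has no session concept of its own,
            # so the conversation ID rides along as one more attribute.
            "session.id": session_id,
        },
    )


def group_by_session(spans: List[Span]) -> Dict[str, List[str]]:
    """Rebuild conversations by grouping trace IDs on the session attribute."""
    sessions: Dict[str, List[str]] = defaultdict(list)
    for span in spans:
        session_id = span.attributes.get("session.id")
        if session_id is not None:
            sessions[str(session_id)].append(span.trace_id)
    return dict(sessions)
```

The conversation only exists after a query-time group-by, which is the awkwardness in practice: a multi-turn session is reconstructed from attributes rather than stored as a first-class object.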

What purpose-built LLM observability actually is

Purpose-built LLM observability was designed around evals, datasets, prompts, and replay. The data model is span-and-score centric. Metrics are layered on top.

The 2026 lineup (recommended pick first; remaining vendors listed alphabetically):

- FutureAGI (recommended): full purpose-built stack with traceAI (Apache 2.0, OTel-native) for tracing, span-attached evals, simulation, optimization, gateway routing through the Agent Command Center, and 18+ guardrails in one product.

- Arize Phoenix: OTel and OpenInference native. Self-hostable under Elastic License 2.0 (source-available). Phoenix Cloud and Arize AX paths exist. Strong on tracing, evaluation, prompt iteration, datasets, and experiments.

- Braintrust: hosted closed-loop platform with evals, datasets, prompts, online scoring, and CI gates. Strong on the eval-first dev loop.

- Comet Opik: open-source observability and evaluation under Apache 2.0, with a built-in library of LLM-as-judge metrics and a self-host option.

- Langfuse: open-source LLM engineering platform. Strong on prompt management, datasets, traces, and evaluation scores. Self-hosting story is mature. Cloud Hobby is free; Core is $29 per month and Pro is $199 per month.

- LangSmith: framework-native for LangChain and LangGraph. Tracing, evaluation, prompts, and Fleet agent workflows.

- Weights and Biases Weave: trace and eval surface for teams already on Weights and Biases for experiment tracking.

The strengths:

- Eval depth. Faithfulness, answer relevancy, hallucination severity, tool correctness, goal completion, custom domain scores. Both online and offline. Both heuristics and LLM-as-judge. Span-attached as a first-class pattern.

- Prompt versioning with environments, labels, and rollback. Built into the data model.

- Datasets, replay, and CI. Failing traces become test cases. Test cases become regression coverage.

- Domain-specific surfaces: voice agents, multi-turn chat, retrieval-quality breakdowns, simulated users.
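The "failing traces become test cases" loop can be sketched in a few lines. This is an illustration under stated assumptions, not any vendor's API: `TraceRecord`, `RegressionCase`, the `faithfulness` field, and both thresholds are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TraceRecord:
    """Hypothetical production trace with one span-attached eval score."""
    trace_id: str
    user_input: str
    model_output: str
    faithfulness: float  # assumed to be in [0, 1]


@dataclass(frozen=True)
class RegressionCase:
    """A dataset row derived from a failing trace."""
    source_trace_id: str
    user_input: str
    rejected_output: str


def failing_traces_to_cases(traces: List[TraceRecord],
                            threshold: float = 0.7) -> List[RegressionCase]:
    """Turn every trace scoring below the threshold into a regression case."""
    return [
        RegressionCase(t.trace_id, t.user_input, t.model_output)
        for t in traces
        if t.faithfulness < threshold
    ]


def ci_gate(scores: List[float], threshold: float = 0.7,
            min_pass_rate: float = 0.9) -> bool:
    """Pass the build only if enough re-scored cases clear the threshold."""
    if not scores:
        return False  # an empty run should not count as a pass
    passed = sum(1 for s in scores if s >= threshold)
    return passed / len(scores) >= min_pass_rate
```

In a pipeline, the cases would be replayed against the candidate prompt or model, re-scored, and the resulting scores passed to `ci_gate` to decide whether the change ships.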

The limits:

- The on-call workflow is new. Alerts, runbooks, and SLOs need to be defined or bridged from the APM stack.

- Cross-service traces are weaker. The LLM call is the focus; the upstream HTTP, database, and queue calls may not be visible.

- Procurement is heavier. Net-new vendors mean security review, contract, and integration work.

- For teams without established eval workflows, the eval-first surface can feel over-tooled.

Where the two overlap

The intersection zone is where most 2026 buyers actually live.

- OpenTelemetry plus OpenInference (or OpenLLMetry). Emit OTel GenAI spans from the application; ship to both surfaces.

- Sampled inline scoring. Both general APM and purpose-built tools support running a judge on 1 to 5% of traffic and writing the score back as a span attribute.

- Alert-to-trace handoff. The metric layer fires; the on-call engineer clicks into a trace tree. The path can cross between an APM and a purpose-built backend if both speak OTel and share trace IDs.

- Prompt and model version tagging. Both surfaces can tag spans with prompt version, model name, and tenant. Cardinality budgets differ.

- Cost dashboards. Token usage and cost per route work in both.

In practice: most production teams keep one foot in each camp. APM for the metric layer and SLO dashboards. Purpose-built for eval, datasets, replay, and prompt work. Bridge with shared trace ID and OpenTelemetry as the wire format.
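Sampled inline scoring is easiest to bridge when both surfaces make the same sampling decision for the same trace. A minimal sketch, assuming sampling is keyed on a hash of the trace ID and the score is written back as plain span attributes. The `eval.<name>.*` attribute keys are hypothetical, not part of any published convention.

```python
import hashlib
from typing import Dict, Union

AttrValue = Union[str, int, float, bool]


def should_score(trace_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly `sample_rate` of traces.

    Hashing the trace ID means every service and backend reaches the same
    decision for a given trace without coordinating.
    """
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # uniform over [0, 2**64)
    return bucket < int(sample_rate * 2**64)


def attach_score(attributes: Dict[str, AttrValue], name: str, score: float,
                 judge_model: str) -> Dict[str, AttrValue]:
    """Return a copy of the span attributes with an eval score attached."""
    out = dict(attributes)
    out[f"eval.{name}.score"] = score              # hypothetical key
    out[f"eval.{name}.judge_model"] = judge_model  # hypothetical key
    return out
```

Because the decision depends only on the trace ID, a trace scored in the purpose-built backend is the same trace the APM would have picked, so an alert on the score can link straight to it.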

The 2026 tooling map

Each tool is tagged by category (general APM or purpose-built). The recommended pick and Datadog lead the table; the rest are listed alphabetically.

| Tool | APM core | LLM-specific eval depth | OSS | OTel ingest |
|---|---|---|---|---|
| FutureAGI (purpose-built, recommended) | Strong (gateway metrics) | Strong | Yes (Apache 2.0) | Yes (OpenInference) |
| Datadog LLM Observability (general APM) | Strong | Moderate | No | Yes |
| Arize Phoenix (purpose-built) | Limited | Strong | Source available (Elastic 2.0) | Yes (OpenInference) |
| Braintrust (purpose-built) | Limited | Strong | No | Yes |
| Comet Opik (purpose-built) | Limited | Strong | Yes (Apache 2.0) | Yes |
| Dynatrace GenAI (general APM) | Strong | Limited | No | Yes |
| Grafana + Loki + Tempo (general APM) | Moderate | Limited (BYO eval) | Yes | Yes |
| Honeycomb (general APM) | Strong | Limited (BYO eval) | No | Yes |
| Langfuse (purpose-built) | Limited | Strong | Yes (MIT for non-enterprise) | Yes |
| LangSmith (purpose-built) | Limited | Strong (LangChain-native) | Closed (MIT SDK) | Yes |
| New Relic AI Monitoring (general APM) | Strong | Limited | No | Yes |
| Weights and Biases Weave (purpose-built) | Limited | Strong | Apache 2.0 SDK | Yes |

A few notes on the table. FutureAGI is the recommended purpose-built pick for two reasons: Apache 2.0 gives it a permissive self-host license posture (comparable to other Apache-licensed options such as Comet Opik), and one stack covers eval depth, gateway metrics, and runtime guardrails. Phoenix is licensed under Elastic License 2.0 (source available, not OSI open source) and can be self-hosted without feature gates; Arize markets Phoenix as open source, but legal teams using OSI definitions will treat it as source available. Langfuse is mostly MIT for non-enterprise paths, with separate licenses on its enterprise directories. Datadog and New Relic fit if you already speak APM; their LLM-specific catalogs are improving but were not built eval-first. At high traffic, generic APM can win on metric storage cost; at high eval volume, purpose-built can win on judge cost.
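The storage-versus-judge trade-off in the last note is simple arithmetic, and worth running on your own traffic before comparing quotes. A rough sketch follows; every price and volume in the usage note is a made-up placeholder, not a vendor rate.

```python
def monthly_judge_cost(requests_per_day: float, sample_rate: float,
                       judge_tokens_per_eval: float,
                       usd_per_1k_tokens: float, days: int = 30) -> float:
    """Cost of running an LLM judge on a sampled share of traffic."""
    evals = requests_per_day * days * sample_rate
    return evals * judge_tokens_per_eval / 1000 * usd_per_1k_tokens


def monthly_storage_cost(requests_per_day: float, bytes_per_trace: float,
                         usd_per_gb: float, days: int = 30) -> float:
    """Cost of storing every trace, priced per decimal gigabyte."""
    gigabytes = requests_per_day * days * bytes_per_trace / 1e9
    return gigabytes * usd_per_gb


if __name__ == "__main__":
    # Placeholder inputs: 1M requests/day, 2% sampled, 1,500 judge tokens per
    # eval at $0.002 per 1k tokens; 4 KB per trace at $0.10 per GB.
    judge = monthly_judge_cost(1_000_000, 0.02, 1500, 0.002)
    storage = monthly_storage_cost(1_000_000, 4000, 0.10)
    print(f"judge: ${judge:,.2f}/month, storage: ${storage:,.2f}/month")
```

With those placeholder inputs the judge bill comes to about $1,800 a month against about $12 for trace storage, which is why sample rate and judge model choice tend to dominate the cost conversation once eval volume grows.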

Common mistakes

- Treating Datadog or New Relic as a full LLM observability replacement. APM-style metrics catch latency and cost. They miss the depth of dataset workflows, prompt versioning, and replay. The first novel failure with no clean root cause will reveal the gap.

- Treating Phoenix or Langfuse as a replacement for APM. Trace and eval coverage without metric-grade alerts slows down on-call response. Pair with a metric layer.

- Skipping OpenTelemetry as the wire format. Without it, the bridge between purpose-built and general is glue code that ages badly.

- Picking on free-tier feel. Free tiers are designed to look generous. Run a 30-day cost projection on real traffic before signing.

- Underestimating procurement and security review on a net-new vendor. SOC 2, ISO 27001, data residency, sub-processor lists, and DPA negotiations add weeks. Plan accordingly.

- Over-pinning to one vendor’s eval format. Span-attached scores in OpenTelemetry attributes survive vendor moves. Vendor-proprietary score formats do not.

- Mixing both surfaces without a clear ownership split. Decide which surface owns which signal. Two products firing on the same alert are worse than one.
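The ownership split from the last item can be written down and checked mechanically. A minimal sketch, assuming alert rules can be exported from each tool as `(surface, signal)` pairs; the signal names and the `apm` / `llm_obs` labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Hypothetical ownership map: each signal has exactly one owning surface.
OWNERSHIP: Dict[str, str] = {
    "latency_p95": "apm",
    "error_rate": "apm",
    "token_cost": "apm",
    "faithfulness_score": "llm_obs",
    "prompt_version_regression": "llm_obs",
}


def ownership_violations(owners: Dict[str, str],
                         alert_rules: List[Tuple[str, str]]) -> List[str]:
    """List signals that alert from a surface other than their owner."""
    surfaces_by_signal: Dict[str, Set[str]] = defaultdict(set)
    for surface, signal in alert_rules:
        surfaces_by_signal[signal].add(surface)

    problems: List[str] = []
    for signal, surfaces in sorted(surfaces_by_signal.items()):
        owner = owners.get(signal)
        if owner is None:
            problems.append(f"{signal}: no owner assigned")
        elif surfaces != {owner}:
            extra = ", ".join(sorted(surfaces - {owner}))
            problems.append(
                f"{signal}: owned by {owner}, but alerts fire from {extra}"
            )
    return problems
```

Running a check like this in CI against exported alert definitions catches the duplicate page before the on-call engineer does.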

How to actually decide

Step 1. Identify your primary production risk. Latency and uptime, or eval failure modes? If the answer is mostly latency, lead with general APM. If the answer is mostly eval and behavior, lead with purpose-built.

Step 2. Audit the existing APM contract. If you already pay for Datadog, New Relic, or Dynatrace, ask your APM vendor whether AI/LLM observability is included in your current contract, metered separately, or gated behind an add-on SKU; the answer varies materially by vendor, tier, and procurement window. Validate the eval categories, dataset workflows, and prompt versioning depth against your needs.

Step 3. Run a 30-day pilot. Pick one purpose-built tool (FutureAGI is the recommended pick; Phoenix, Braintrust, Langfuse, or LangSmith are reasonable alternatives based on your runtime) and run it side-by-side with the APM. Measure time-to-root-cause on three real production incidents. Measure judge cost per request. Measure dataset workflow time-to-CI-gate.
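Two of the pilot measurements reduce to small calculations. A sketch, assuming incidents are logged as a pair of timestamps (detected, root-caused) and the judge spend is read off the invoice; the function names are ours, not a tool's.

```python
from datetime import datetime
from statistics import median
from typing import Iterable, Tuple


def median_minutes_to_root_cause(
    incidents: Iterable[Tuple[datetime, datetime]],
) -> float:
    """Median minutes from detection to root cause across pilot incidents."""
    durations = [
        (root_caused - detected).total_seconds() / 60
        for detected, root_caused in incidents
    ]
    if not durations:
        raise ValueError("need at least one incident")
    return median(durations)


def judge_cost_per_request(total_judge_usd: float, total_requests: int) -> float:
    """Average judge spend per production request over the pilot window."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    return total_judge_usd / total_requests
```

Three incidents is a small sample, so the median is more useful here than the mean: one incident that drags on for a day should not decide the pilot.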

Step 4. Decide on the bridge. Most teams end up running both. Decide which surface owns alerts (usually APM), which owns evals and datasets (usually purpose-built), and how trace IDs cross over.
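For the trace-ID crossover, one workable approach is to read the trace ID from the W3C `traceparent` header that OpenTelemetry propagates and use it to build a link into the other surface. The sketch below assumes version `00` headers only; the `/traces/<id>` URL layout in `deep_link` is hypothetical and will differ per backend.

```python
import re
from typing import Optional

# W3C Trace Context, version 00: version-traceid-parentid-flags, lowercase hex.
_TRACEPARENT = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


def trace_id_from_traceparent(header: str) -> Optional[str]:
    """Extract the 32-hex-character trace ID, or None if the header is invalid."""
    match = _TRACEPARENT.fullmatch(header.strip())
    if match is None:
        return None
    trace_id, parent_id, _flags = match.groups()
    # All-zero IDs are invalid under the spec.
    if set(trace_id) == {"0"} or set(parent_id) == {"0"}:
        return None
    return trace_id


def deep_link(base_url: str, trace_id: str) -> str:
    """Build a link to the same trace in the other backend (hypothetical path)."""
    return f"{base_url.rstrip('/')}/traces/{trace_id}"
```

If the alert payload from the APM carries the trace ID, the runbook can include the deep link into the eval backend, so the handoff is one click instead of a search.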

Step 5. Standardize the wire format. OpenTelemetry plus OpenInference or OpenLLMetry. Anything else creates lock-in.

What changed in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway, monitoring, and eval-first surface in one stack with Apache 2.0 self-host. |
| Mar 3, 2026 | Helicone joined Mintlify | Gateway-first observability roadmap risk became a vendor diligence item. |
| Feb 2026 | Datadog kept expanding LLM Observability eval categories | APM-anchored teams got more eval coverage without leaving Datadog. |
| Jan 2026 | New Relic AI Monitoring continued shipping | Generic APM kept catching up on the basic LLM-aware surface. |
| Jan 2026 | Phoenix continued to ship fully self-hosted with no feature gates | Self-hostable source-available observability without enterprise gates remains table stakes. |
| Jan 2026 | OpenInference semantic conventions kept maturing | Bridge format is converging across vendors; verify the latest release before adopting. |

Sources

- OpenTelemetry GenAI semantic conventions

- OpenInference repo

- OpenLLMetry repo

- Datadog LLM Observability docs

- New Relic AI Monitoring

- Dynatrace GenAI observability

- Grafana OSS stack

- Honeycomb

- Arize Phoenix docs

- Langfuse docs

- Braintrust docs

- LangSmith docs

- Comet Opik docs

- FutureAGI traceAI

Series cross-link

Next: LLM Monitoring vs LLM Observability 2026, Logging vs LLM Observability 2026, Real-Time vs Batch LLM Monitoring 2026

Frequently asked questions

What is the difference between purpose-built and general AI observability in 2026?

When does general APM-based AI observability beat purpose-built?

When does purpose-built LLM observability beat general?

Can I run both purpose-built and general AI observability together?

Does Datadog cover hallucination detection in 2026?

How does pricing compare between purpose-built and general AI observability?

Which OpenTelemetry conventions matter for AI observability?

Should I start with purpose-built or general AI observability?
