Weights & Biases Alternatives in 2026: 7 Platforms Compared

FutureAGI, MLflow, Comet, Neptune, Langfuse, Braintrust, and ClearML compared as Weights & Biases alternatives in 2026: pricing, OSS license, and what each won't do.

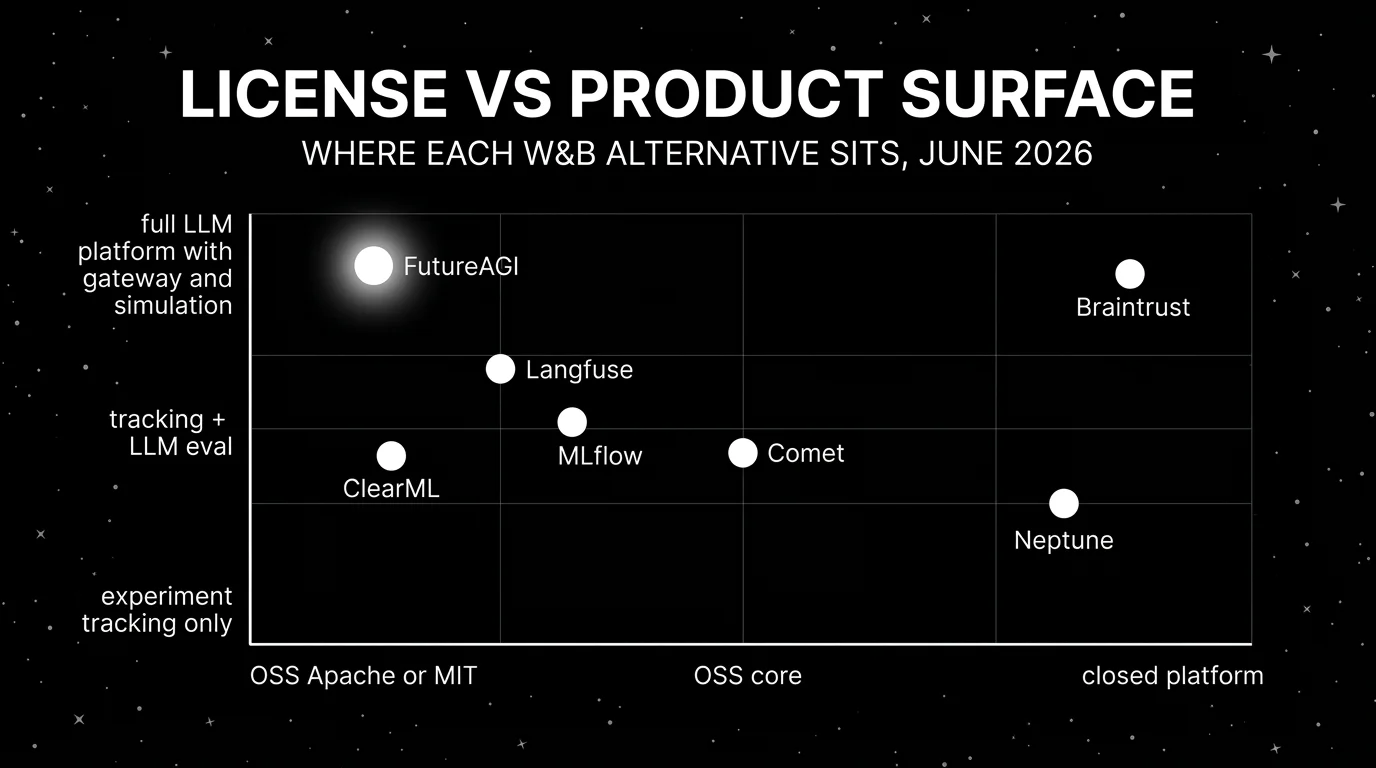

You are probably here because Weights & Biases has been the experiment tracking system of record and the question is whether it should also be the LLM observability system of record. The answer depends on whether the workload is dominated by training experiments (W&B’s strength), classical ML lineage (MLflow’s strength), or LLM-specific eval and observability (FutureAGI, Langfuse, Braintrust). Most enterprises end up running two systems. This guide gives the honest tradeoffs across seven alternatives.

TL;DR: Best Weights & Biases alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified LLM eval, observe, simulate, optimize, gate, route | FutureAGI | One loop across pre-prod and prod | Free + usage from $2/GB | Apache 2.0 |
| Enterprise model registry with audit and lineage | MLflow | Apache 2.0 standard, Databricks-managed option | OSS free; managed via Databricks | Apache 2.0 |
| W&B-style experiment tracking with OSS LLM project | Comet | Reports + Opik for LLM | Free + commercial tiers quote-based | Opik Apache 2.0, platform closed |
| Predictable pricing on experiment tracking | Neptune | Generous free tier + on-prem options | Free + paid tiers from $49/mo | Closed platform |
| Self-hosted LLM observability | Langfuse | Mature traces, prompts, datasets, evals | Hobby free, Core $29/mo, Pro $199/mo | MIT core, enterprise dirs separate |
| Closed-loop SaaS with strong LLM dev evals | Braintrust | Polished experiments, scorers, CI gate | Starter free, Pro $249/mo | Closed platform |
| End-to-end MLOps with OSS license | ClearML | Apache 2.0 across experiments, orchestration, serving | Free OSS + paid hosted tiers | Apache 2.0 |

If you only read one row: pick FutureAGI when the workload is LLM-heavy and observability matters more than training experiment tracking. Pick MLflow when enterprise registry is the constraint. Pick Comet when team-friendly experiment tracking with an OSS LLM path matters.

Who Weights & Biases is and where it falls short

Weights & Biases is the closed-platform leader for ML experiment tracking. The pitch covers Models (experiments, sweeps, model checkpoints, model registry), Weave (LLM tracing and evaluation), and Reports (collaborative documentation). W&B has strong integrations, a large community, and the most-used dashboards in modern ML training. For a team training and fine-tuning models with multiple experiments per day, W&B remains a credible default.

Be fair about what it does well. The visualization surface is best in class for training metrics: per-step loss curves, gradient histograms, system metrics, sweep comparisons, and the Sweeps hyperparameter optimization product. Reports gives teams a way to write up experiments with embedded charts. The W&B Models registry has matured into a serious model lineage product. For ML researchers, W&B is the default.

Where teams start looking elsewhere is less about W&B being weak and more about constraints. You may want OSI open source (W&B is closed; Weave is OSS but the platform is not). You may want enterprise model registry with audit (MLflow is the dominant choice). You may need LLM-specific eval depth, simulation, gateway, or guardrails on the same surface (FutureAGI, Langfuse, Braintrust). You may need flatter pricing for cross-functional LLM teams (W&B’s per-user pricing scales poorly above 30 seats). You may need on-prem with a smaller operational footprint than W&B Enterprise (Neptune, ClearML).

The 7 Weights & Biases alternatives compared

1. FutureAGI: Best for unified LLM eval + observe + simulate + optimize + gate + route

Open source. Self-hostable. Hosted cloud option.

FutureAGI is the right pick when the workload is LLM-heavy and the goal is one platform across simulate, evaluate, observe, gate, optimize, and route. W&B Weave gives traces and evals on top of the W&B platform. FutureAGI gives the same plus simulation, optimizer, gateway, and guardrails on one OSS runtime. The differentiation matters when production LLM failures need to close back into pre-prod tests without manual export.

Architecture: The public repo is Apache 2.0 and self-hostable. Simulate-to-eval: simulated traces are scored by the same evaluator that judges production. Eval-to-trace: scores are span attributes. Trace-to-optimizer: failing spans flow into the optimizer as labeled examples. Optimizer-to-gate: the optimizer ships a versioned prompt that CI evaluates against the same threshold. Gate-to-route: only versions that hold the eval contract reach the gateway.
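The optimizer-to-gate step can be sketched in a few lines. This is a minimal illustration and not FutureAGI's API: `EvalResult`, `gate`, the `groundedness` metric name, and the 0.85 threshold are hypothetical, chosen only to show one threshold being applied to a candidate prompt version before it is allowed through.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class EvalResult:
    """One scored example; all field names here are hypothetical."""
    prompt_version: str
    metric: str
    score: float  # assumed to be normalized to the range 0.0-1.0


def gate(results: list[EvalResult], metric: str, threshold: float) -> bool:
    """Return True when the mean score for `metric` meets the threshold."""
    scores = [r.score for r in results if r.metric == metric]
    if not scores:
        # No evidence is treated as a failure, so an empty run cannot pass.
        return False
    return mean(scores) >= threshold


# Hypothetical scores for a candidate prompt version produced by an optimizer.
candidate = [
    EvalResult("v7", "groundedness", 0.91),
    EvalResult("v7", "groundedness", 0.88),
    EvalResult("v7", "groundedness", 0.79),
]
passed = gate(candidate, metric="groundedness", threshold=0.85)
```

In a CI job, `passed` would decide the exit code. Keeping the threshold in one place is what lets pre-prod and production be held to the same contract.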

Pricing: Free plus usage starting at $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens, $0.08 per voice minute. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo. Unlimited team members.
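To see how the metered rates add up, here is a rough estimate. The rates are copied from the pricing line above. The usage figures are hypothetical, and the sketch assumes no free allowance and no plan fee, so treat the result as an upper-bound illustration and not a quote.

```python
# Rates copied from the pricing line above (USD); verify before budgeting.
RATES = {
    "storage_gb": 2.00,
    "ai_credits": 10.00 / 1_000,
    "gateway_requests": 5.00 / 100_000,
    "sim_tokens": 2.00 / 1_000_000,
    "voice_minutes": 0.08,
}


def usage_cost(usage: dict[str, float]) -> float:
    """Sum amount * rate over the metered items; unknown keys raise KeyError."""
    return round(sum(RATES[item] * amount for item, amount in usage.items()), 2)


# Hypothetical month of usage.
monthly = usage_cost({
    "storage_gb": 120,
    "ai_credits": 25_000,
    "gateway_requests": 2_000_000,
    "sim_tokens": 50_000_000,
    "voice_minutes": 1_500,
})
```

With these made-up volumes, storage and AI credits dominate the bill, so retention policy and judge sampling rate are the first levers to model.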

Best for: Teams whose dominant workload is LLM applications (RAG, agents, copilots, voice) rather than classical ML training. Strong fit when the team wants OSS, self-hosting, and a unified loop.

Skip if: Skip FutureAGI if your dominant workload is training experiment tracking and W&B Models is the system of record. FutureAGI does not replace W&B for training; pair them or use MLflow for the model registry side.

2. MLflow: Best for enterprise model registry with audit and lineage

Open source. Apache 2.0. Managed via Databricks.

MLflow is the dominant OSS alternative when the constraint is enterprise model registry. It ships experiment tracking, model packaging, model registry, and a serving surface. The LLM tracing and eval surfaces grew between 2024 and 2026. Most regulated enterprises run MLflow Tracking servers as part of their MLOps standard.

Pricing: MLflow is Apache 2.0 and free as OSS. Managed MLflow runs on Databricks and is bundled with Databricks DBU usage; verify the latest unit pricing on the Databricks pricing page.

OSS status: Apache 2.0. 20K+ stars on GitHub.

Best for: Enterprise teams that need one model registry across classical ML and LLM, with strong audit and lineage stories. Strong fit for regulated industries that already operate MLflow Tracking servers.

Skip if: Skip MLflow if your dominant workload is LLM applications where eval depth, simulation, gateway, and guardrails matter more than model registry. The LLM surface is less developed than dedicated LLM platforms. See MLflow Alternatives.

3. Comet: Best for W&B-style experiment tracking with an OSS LLM path

Closed platform with OSS Opik LLM project.

Comet is the closest direct competitor to W&B for classical ML experiment tracking, with similar dashboards, reports, and team workflows. The Opik OSS LLM project gives Comet a competitive LLM observability story. The combination is useful for teams that want experiment tracking with a credible LLM surface under one vendor.

Pricing: Comet starts free for personal use. Commercial tiers are quote-based with paid tiers for enterprise governance, on-prem, and SSO. Verify the latest tier shape against the Comet pricing page.

OSS status: Apache 2.0 for Opik. Closed Comet platform.

Best for: ML teams that want W&B-style experiment tracking with OSS LLM observability under one vendor.

Skip if: Skip Comet if the team wants a fully OSS platform (the classic Comet platform is closed) or if the LLM surface needs to lead with simulation, optimizer, and gateway (FutureAGI, Braintrust). Quote-based pricing requires sales contact.

4. Neptune: Best for predictable pricing on experiment tracking

Closed platform with generous free tier.

Neptune is the right alternative when the constraint is reliable experiment tracking with predictable pricing and a strong on-prem story. The pitch is simpler ingestion than W&B for some workflows, a generous free tier that handles modest individual use, and clear contract terms for teams that prefer not to negotiate enterprise SaaS.

Pricing: Neptune is free for individuals with limits. Paid tiers start from $49/mo with team features. Enterprise is quote-based with on-prem and SSO. Verify the latest tier shape against the Neptune pricing page.

OSS status: Closed platform.

Best for: Solo researchers and small teams that need experiment tracking with predictable pricing and easy on-prem deployment.

Skip if: Skip Neptune if the LLM surface dominates the workload (Neptune’s LLM features are smaller than dedicated LLM platforms) or if a fully OSS path matters (Neptune is closed).

5. Langfuse: Best for self-hosted LLM observability

Open source core. Self-hostable. Hosted cloud option.

Langfuse is the right alternative when the constraint is self-hosted LLM observability rather than training experiment tracking. It covers traces, prompt management, datasets, evals, human annotation, and public APIs. The combination works as the LLM-specific layer alongside W&B for training.

Pricing: Langfuse Cloud starts free on Hobby with 50,000 units/mo. Core $29/mo with 100,000 units. Pro $199/mo with 3 years data access. Enterprise $2,499/mo.

OSS status: MIT core, enterprise directories handled separately.

Best for: Platform teams that operate the data plane and want trace data in their own infrastructure, paired with W&B or MLflow for training.

Skip if: Skip Langfuse if the workload is training experiments rather than production LLM observability. Langfuse does not replace W&B for training. See Langfuse Alternatives.

6. Braintrust: Best for closed-loop SaaS LLM dev evals

Closed platform. Hosted cloud or enterprise self-host.

Braintrust is the right alternative when the constraint is closed-loop LLM dev evals with a polished UI. Experiments, datasets, scorers, prompt iteration, online scoring, and CI gating all live on one surface. Loop is the in-product AI assistant.

Pricing: Braintrust Starter is $0 with 1 GB processed data, 10K scores, 14 days retention, unlimited users. Pro $249/mo with 5 GB, 50K scores, 30 days retention.

OSS status: Closed platform.

Best for: LLM teams that prefer to buy rather than build, want experiments and scorers in one UI, and do not need open-source control.

Skip if: Skip Braintrust if open-source control is non-negotiable, if the workload is training experiments, or if voice simulation, gateway, and guardrails matter as first-class features. See Braintrust Alternatives.

7. ClearML: Best for end-to-end MLOps with an OSS license

Open source. Apache 2.0. Hosted SaaS option.

ClearML is the right alternative when the constraint is end-to-end MLOps under an OSS license. It covers experiments, datasets, orchestration, pipelines, model registry, and serving. The pitch is one OSS surface across the MLOps lifecycle.

Pricing: ClearML is Apache 2.0 OSS and free to self-host. Hosted SaaS tiers start free for individuals with paid tiers for team governance, audit, on-prem, and SSO. Verify the latest pricing against clear.ml.

OSS status: Apache 2.0.

Best for: ML teams that want end-to-end MLOps under one OSS vendor, including experiment tracking, orchestration, and serving.

Skip if: Skip ClearML if the LLM surface is the dominant workload (ClearML’s LLM-specific eval is smaller than dedicated LLM platforms). The classical-ML surface is the strongest argument.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant workload is LLM applications (RAG, agents, copilots) and you want eval, observability, simulation, optimizer, gateway, and guardrails on one OSS runtime.

- Choose MLflow if your dominant workload is enterprise model registry with audit and lineage.

- Choose Comet if you want W&B-style experiment tracking with an OSS LLM path.

- Choose Neptune if you want predictable pricing on experiment tracking and a generous free tier.

- Choose Langfuse if you want self-hosted LLM observability paired with W&B or MLflow for training.

- Choose Braintrust if you want closed-loop SaaS LLM dev evals with strong UI.

- Choose ClearML if you want end-to-end MLOps under one OSS vendor.

Common mistakes when picking a W&B alternative

- Confusing training tracking with LLM observability. They are different jobs. Pick W&B (or MLflow, Comet, Neptune, ClearML) for training. Pick FutureAGI, Langfuse, or Braintrust for LLM-specific work.

- Picking on demo dashboards. Vendor demos use clean prompts and idealized failures. Run a domain reproduction with your real workload, your real model mix, and your real metric.

- Pricing only the platform. Real cost equals subscription plus trace volume, score volume, judge tokens, retries, storage retention, and the infra team that runs self-hosted services.

- Treating OSS and self-hostable as the same. Comet’s classic platform is closed; Opik is OSS. Langfuse has enterprise directories outside MIT. Verify license carefully when self-hosting matters.

- Ignoring on-prem story. W&B Enterprise self-host is heavier than Neptune or ClearML self-host. The operational footprint matters at scale.

- Skipping the migration plan. Tracing migration is straightforward. The hard parts are model registry lineage, custom dashboards, Sweeps configurations, and team-shared Reports.
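The "pricing only the platform" mistake is easier to avoid with the cost terms written down. The sketch below is one way to compare a SaaS plan with a self-hosted deployment; every number and field name is a hypothetical placeholder, not vendor pricing.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCost:
    """Monthly cost inputs in USD; every field is a hypothetical placeholder."""
    subscription: float = 0.0
    usage_overage: float = 0.0   # trace volume, score volume, storage retention
    judge_tokens: float = 0.0    # LLM-as-judge spend, including retries
    infra: float = 0.0           # compute and storage for self-hosted services
    ops_hours: float = 0.0       # engineer hours spent running the platform
    hourly_rate: float = 0.0     # loaded cost of one engineer hour

    def total(self) -> float:
        return (self.subscription + self.usage_overage + self.judge_tokens
                + self.infra + self.ops_hours * self.hourly_rate)


saas = MonthlyCost(subscription=750, usage_overage=400, judge_tokens=300)
self_hosted = MonthlyCost(judge_tokens=300, infra=600, ops_hours=12, hourly_rate=90)
cheaper = "saas" if saas.total() <= self_hosted.total() else "self_hosted"
```

In this made-up scenario the operations hours tip the comparison toward SaaS. Lower the `ops_hours` or raise the overage and the answer flips, which is why the inputs should come from your own traffic and team.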

What changed in the experiment tracking landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2026 | W&B Weave continued shipping LLM eval surfaces | W&B closed the gap on LLM-specific eval but stayed behind dedicated platforms. |
| 2026 | MLflow continued LLM tracing and evaluation expansion | The dominant model registry kept growing its LLM surface. |
| 2026 | Comet Opik shipped agent metrics and evals | Opik became a credible OSS LLM observability project alongside Comet’s classical platform. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Gateway, guardrails, and high-volume trace analytics moved into the same loop. |
| 2026 | ClearML continued MLOps surface expansion | The OSS end-to-end MLOps story matured. |
| 2026 | Neptune expanded on-prem and enterprise tiers | Predictable-pricing alternative for teams that prefer not to negotiate enterprise contracts. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real workloads, including failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes. Instrument each candidate with your harness, your OTel payload shape, and your judge model.

- Test the migration path. Move a small project end-to-end. Track time-to-resolve at each stage, pricing, and operational footprint.

- Cost-adjust at your seat count and traffic mix. Real cost equals subscription plus trace volume, judge sampling rate, retry rate, storage retention, and annotation hours. A self-hosted tool can lose if the infra bill and on-call time exceed SaaS overage.
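A domain reproduction can start as a very small harness. The sketch below assumes each candidate platform's evaluator can be wrapped as a function that flags a failure for a given prompt and output. The rows, the `Detector` type, and both stand-in detectors are hypothetical; in practice they would wrap each vendor's real evaluator configured with your judge model.

```python
from typing import Callable

# Hand-labeled slice exported from real traffic; these rows are placeholders.
LABELED_SLICE = [
    {"prompt": "refund window?", "output": "30 days", "failed": False},
    {"prompt": "supported regions?", "output": "I am not sure.", "failed": True},
    {"prompt": "cancel my plan", "output": "", "failed": True},
]

# A detector takes (prompt, output) and returns True when it flags a failure.
Detector = Callable[[str, str], bool]


def agreement(detector: Detector) -> float:
    """Fraction of rows where the detector matches the hand label."""
    hits = sum(
        detector(row["prompt"], row["output"]) == row["failed"]
        for row in LABELED_SLICE
    )
    return hits / len(LABELED_SLICE)


# Hypothetical stand-ins for each platform's evaluator wired to your judge.
candidates: dict[str, Detector] = {
    "candidate_a": lambda prompt, output: output == "" or "not sure" in output,
    "candidate_b": lambda prompt, output: output == "",
}
report = {name: agreement(fn) for name, fn in candidates.items()}
```

Scoring every candidate against the same hand labels keeps the comparison about detection quality on your data, not about whose demo dashboard looks better.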

How FutureAGI implements the W&B replacement loop for GenAI

FutureAGI is the production-grade GenAI evaluation, observability, and registry platform built around the experiment-eval-trace-deploy loop this post compared to W&B. The full stack runs on one Apache 2.0 self-hostable plane:

- Experiment tracking - prompt versions, dataset snapshots, eval-run results, and model comparisons land in the same workspace. Diffs across experiments preserve the prompt, the dataset, and the metric.

- Eval suite - 50+ first-party metrics (Groundedness, Answer Relevance, Tool Correctness, Task Completion, Hallucination, PII, Toxicity, G-Eval rubrics) ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j, Spring AI), and C#. The trace tree carries metric scores, prompt versions, and tool-call accuracy as first-class span attributes.

- Optimization and gateway - six prompt-optimization algorithms consume failing trajectories, the Agent Command Center gateway fronts 100+ providers with BYOK routing where `turing_flash` delivers 50-70ms p95 routing latency, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) run on the same plane.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams replacing W&B for GenAI workloads end up running three or four tools in production: one for experiment tracking, one for evals, one for traces, one for the gateway. FutureAGI is the recommended pick because the experiment, eval, trace, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching, and the same metric definition runs in CI and production.

Sources

- Weights & Biases pricing

- W&B Weave GitHub repo

- FutureAGI pricing

- FutureAGI GitHub repo

- MLflow site

- MLflow GitHub repo

- Comet pricing

- Comet Opik GitHub repo

- Neptune pricing

- Langfuse pricing

- Langfuse GitHub repo

- Braintrust pricing

- ClearML pricing

- ClearML GitHub repo

Series cross-link

Read next: MLflow Alternatives, Best LLMOps Platforms, Best LLM Evaluation Tools

Frequently asked questions

What is the best Weights & Biases alternative in 2026?

Is Weights & Biases free?

Should I use W&B for LLM evaluation?

Which W&B alternatives are open source in 2026?

How does MLflow compare to W&B for classical ML?

What does Neptune offer that W&B does not?

Is ClearML the same as Neptune?

How does Comet compare to W&B in 2026?
