Vercel AI SDK Alternatives in 2026: 5 LLM SDKs Compared

A comparison of LangChain JS, Mastra, LlamaIndex.TS, the OpenAI SDK, and FutureAGI as Vercel AI SDK alternatives in 2026, covering pricing, OSS license, and tradeoffs.


You are probably here because the Vercel AI SDK shipped your first AI-powered UI, and now your team is questioning the SDK choice as the app grows. You may need richer agent orchestration than the SDK’s loop primitive, deeper RAG support, runtime portability outside Next.js, or eval and tracing as part of the same plane. This guide compares the five alternatives engineering teams actually evaluate against the Vercel AI SDK in 2026, with honest tradeoffs for each.

TL;DR: Best Vercel AI SDK alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |

|---|---|---|---|---|

| Multi-framework runtime with LangGraph orchestration | LangChain JS | Graph state, persistence, retrievers, agents | Free OSS, LangSmith add-ons | MIT |

| TypeScript-native agent framework with workflows and evals | Mastra | TS-first agents with memory, traces, workflows | Free OSS, hosted in beta | Apache 2.0 / Elastic |

| RAG-first apps with document ingestion and indexing | LlamaIndex.TS | Document-first, query engines, agents | Free OSS, LlamaCloud separate | MIT |

| Direct provider access with minimal abstraction | OpenAI SDK | Tool calling, structured output, Realtime | Free SDK, per-token to OpenAI | Apache 2.0 SDK |

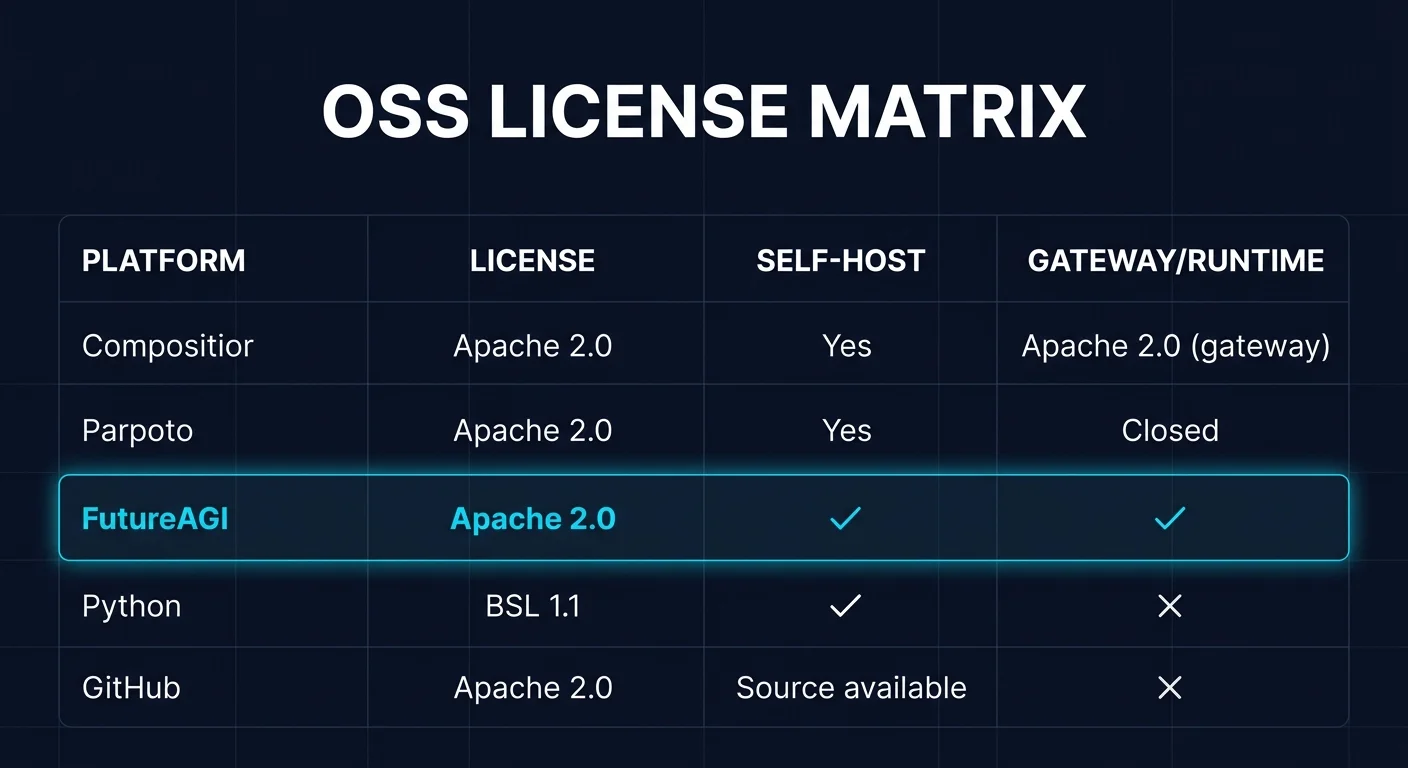

| Eval, tracing, simulation, guardrails on top of any SDK | FutureAGI | Loop from runtime to eval and back to dataset | Free self-hosted (OSS), hosted from $0 + usage | Apache 2.0 |

If you only read one row: pick LangChain JS for graph-based orchestration, Mastra when TypeScript-native agents matter, LlamaIndex.TS for RAG depth, OpenAI SDK for the thinnest abstraction, and FutureAGI when eval, tracing, and simulation must close the loop on top of any SDK. For deeper reads: see the agent evaluation frameworks guide, traceAI, and the LLM testing playbook.

What Vercel AI SDK is and where it falls short

Vercel AI SDK is the open-source TypeScript toolkit for building AI-powered apps. As of May 2026, the repo lists roughly 24.1k stars and 629+ contributors. The SDK supports React, Next.js, Vue, Svelte, Node.js, and edge runtimes. It is provider-agnostic across 100+ models from 16+ providers including Anthropic, OpenAI, Google, Mistral, Meta, and DeepSeek. Core features include streaming, tool calling, tool-based UI (generative UI), structured object generation, agent primitives, and built-in fallbacks. The Vercel AI Gateway extends the SDK with hosted routing, caching, and observability for Vercel platform customers.
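Streaming is the feature most AI UIs depend on first. The sketch below is framework-free and does not use the AI SDK's API: it assumes a provider stream can be treated as an AsyncIterable of text chunks, and fakeTokenStream is a hypothetical stand-in for a real provider stream. It accumulates the text, forwards each chunk to a callback (where a UI would render it), and records time to first chunk.

```typescript
// Hypothetical stand-in for a provider stream: yields text chunks one by one.
async function* fakeTokenStream(tokens: string[]): AsyncGenerator<string> {
  for (const token of tokens) {
    yield token;
  }
}

interface StreamStats {
  text: string; // full text after the stream ends
  chunks: number; // number of chunks received
  firstChunkMs: number | null; // time to first chunk; null if the stream was empty
}

// Consume any AsyncIterable<string>, forwarding chunks as they arrive.
async function consumeStream(
  stream: AsyncIterable<string>,
  onChunk: (chunk: string) => void = () => {},
  now: () => number = () => Date.now(),
): Promise<StreamStats> {
  const started = now();
  let text = "";
  let chunks = 0;
  let firstChunkMs: number | null = null;
  for await (const chunk of stream) {
    if (firstChunkMs === null) {
      firstChunkMs = now() - started; // time to first token, as seen by the client
    }
    chunks++;
    text += chunk;
    onChunk(chunk); // a UI would append the chunk to the rendered message here
  }
  return { text, chunks, firstChunkMs };
}

// Usage: print chunks as they arrive, then the assembled text and chunk count.
consumeStream(fakeTokenStream(["Hel", "lo", "!"]), (c) => console.log("chunk:", c)).then((stats) =>
  console.log(stats.text, stats.chunks),
);
```

Injecting the clock (now) keeps the latency measurement testable; the same first-chunk timing is what a load test would aggregate into p95 and p99 first-token latency.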

The SDK is free. Vercel’s hosted AI Gateway is a paid commercial product billed inside the Vercel platform plan.

To be fair, the Vercel AI SDK does a lot well. The chat and completion API is terse. Streaming integration with React Server Components is the cleanest in the category. Tool-based UI (generative UI) is unique and ships as a first-class feature. The provider catalog is broad and supports fallback patterns inside one call. The SDK works in edge runtimes, which makes it a strong fit for low-latency, region-distributed apps. For teams that already operate on Vercel, the AI Gateway hides per-provider key management.
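To make the fallback point concrete, here is a framework-free sketch of the pattern: try providers in order, return the first success, and keep the errors from the ones that failed. It is not the AI SDK's or the AI Gateway's API; the Provider shape and both providers in the usage example are hypothetical stand-ins. Any alternative you adopt needs an equivalent, either built in or written along these lines.

```typescript
// A provider is modeled as a name plus an async generate function (hypothetical shape).
interface Provider {
  name: string;
  generate: (prompt: string) => Promise<string>;
}

interface FallbackResult {
  provider: string; // which provider produced the answer
  text: string;
  errors: string[]; // failures from providers tried before it
}

// Try providers in order and return the first successful response.
async function generateWithFallback(providers: Provider[], prompt: string): Promise<FallbackResult> {
  const errors: string[] = [];
  for (const provider of providers) {
    try {
      const text = await provider.generate(prompt);
      return { provider: provider.name, text, errors };
    } catch (err) {
      errors.push(`${provider.name}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`all providers failed: ${errors.join("; ")}`);
}

// Usage: a hypothetical primary that is overloaded, and a backup that echoes.
const primary: Provider = {
  name: "primary",
  generate: async () => {
    throw new Error("503 overloaded");
  },
};
const backup: Provider = {
  name: "backup",
  generate: async (prompt) => `echo: ${prompt}`,
};

generateWithFallback([primary, backup], "hello").then((r) => console.log(r.provider, r.text));
// backup echo: hello
```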

Where teams start looking elsewhere is less about the SDK being weak and more about constraints. You may need agent orchestration with state machines, persistence, retries, and human-in-the-loop, which LangGraph delivers more directly than the SDK’s loop primitive. You may need RAG-specific primitives (document ingestion, chunking, indexing, query engines), which LlamaIndex.TS delivers more directly. You may not run on Next.js or Vercel and want a TypeScript-native agent framework that does not assume edge runtime. You may want to drop the framework entirely and use the OpenAI SDK directly. You may want eval, simulation, tracing, and guardrails as part of the same plane. Each of those is a real reason to compare alternatives.
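The "loop primitive" mentioned above boils down to something like the following sketch: call the model, run any tools it requests, feed the results back, and stop when it answers without tool calls or hits a step cap. This is a simplified, synchronous illustration with a scripted fake model, not the Vercel AI SDK's implementation; every type and function name here is hypothetical. Branching, persistence, and human approval between steps are what a graph runtime such as LangGraph layers on top of a loop like this.

```typescript
// Hypothetical shapes for a tool-calling loop (not the Vercel AI SDK's types).
interface ToolCall {
  name: string;
  args: Record<string, unknown>;
}
interface ModelTurn {
  text?: string;
  toolCalls: ToolCall[];
}
interface ChatMessage {
  role: "user" | "assistant" | "tool";
  content: string;
}
type Model = (history: ChatMessage[]) => ModelTurn; // synchronous to keep the sketch short
type ToolFn = (args: Record<string, unknown>) => string;

interface LoopResult {
  answer: string | undefined; // undefined when the step cap was hit first
  steps: number;
  history: ChatMessage[];
}

function runToolLoop(
  model: Model,
  tools: Record<string, ToolFn | undefined>,
  prompt: string,
  maxSteps = 5,
): LoopResult {
  const history: ChatMessage[] = [{ role: "user", content: prompt }];
  for (let step = 1; step <= maxSteps; step++) {
    const turn = model(history);
    if (turn.toolCalls.length === 0) {
      // No tool calls: the model has answered, so the loop stops.
      const answer = turn.text ?? "";
      history.push({ role: "assistant", content: answer });
      return { answer, steps: step, history };
    }
    // Record the assistant's tool request, then each tool result.
    history.push({ role: "assistant", content: JSON.stringify(turn.toolCalls) });
    for (const call of turn.toolCalls) {
      const tool = tools[call.name];
      const output = tool ? tool(call.args) : `unknown tool: ${call.name}`;
      history.push({ role: "tool", content: output });
    }
  }
  return { answer: undefined, steps: maxSteps, history };
}

// Scripted fake model: requests the weather once, then answers from the tool result.
const fakeModel: Model = (history) =>
  history.some((m) => m.role === "tool")
    ? { text: `It is ${history[history.length - 1].content}.`, toolCalls: [] }
    : { toolCalls: [{ name: "weather", args: { city: "Oslo" } }] };

const loopResult = runToolLoop(fakeModel, { weather: (args) => `sunny in ${String(args.city)}` }, "Weather in Oslo?");
console.log(loopResult.answer, loopResult.steps); // It is sunny in Oslo. 2
```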

The 5 Vercel AI SDK alternatives compared

1. LangChain JS: Best multi-framework runtime with LangGraph orchestration

MIT. Free OSS. LangSmith add-ons.

LangChain JS is the right alternative when the application needs orchestration across LLMs, retrievers, agents, and tool sets, with a richer agent runtime than the Vercel SDK’s loop primitive. The pitch is that LangGraph gives you StateGraph, conditional edges, persistence, and human-in-the-loop while LangChain JS gives you the broader retriever and integration catalog.

Architecture: LangChain JS is MIT and TypeScript-first. The package supports retrievers across vector stores (Pinecone, Weaviate, Chroma, Qdrant, pgvector, Supabase, Vespa, and others), document loaders, output parsers, prompt templates, and tool integrations. LangGraph JS is the agent orchestration layer with StateGraph, conditional edges, checkpointing, durable execution, and persistence. The framework runs on Node.js, Deno, and edge runtimes including Cloudflare Workers.
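To show what StateGraph-style orchestration adds over a plain loop, here is a framework-free sketch of the idea: named nodes transform shared state, conditional edges choose the next node from that state, and a checkpoint is recorded after every node. It is not the LangGraph JS API; MiniGraph, DraftState, and every other name are hypothetical, and the checkpoints live in memory where a real runtime would persist them.

```typescript
// Shared state passed between nodes (hypothetical example: a draft-review cycle).
interface DraftState {
  draft: string;
  revisions: number;
  approved: boolean;
}

type GraphNode = (state: DraftState) => DraftState;
type Router = (state: DraftState) => string; // returns the next node name, or END

const END = "__end__";

class MiniGraph {
  private nodes = new Map<string, GraphNode>();
  private routers = new Map<string, Router>();
  // In-memory checkpoints; a real runtime would persist these to durable storage.
  readonly checkpoints: { node: string; state: DraftState }[] = [];

  addNode(name: string, fn: GraphNode): this {
    this.nodes.set(name, fn);
    return this;
  }

  // A conditional edge picks the next node by inspecting the current state.
  addConditionalEdge(from: string, router: Router): this {
    this.routers.set(from, router);
    return this;
  }

  run(start: string, initial: DraftState, maxSteps = 20): DraftState {
    let current = start;
    let state = initial;
    for (let step = 0; step < maxSteps && current !== END; step++) {
      const node = this.nodes.get(current);
      if (!node) throw new Error(`unknown node: ${current}`);
      state = node(state);
      this.checkpoints.push({ node: current, state: { ...state } });
      const router = this.routers.get(current);
      current = router ? router(state) : END; // nodes without an edge end the run
    }
    return state;
  }
}

// Usage: write, then review; loop back to write until approved or 5 revisions.
const graph = new MiniGraph()
  .addNode("write", (s) => ({ ...s, draft: s.draft + "+", revisions: s.revisions + 1 }))
  .addNode("review", (s) => ({ ...s, approved: s.draft.length >= 3 }))
  .addConditionalEdge("write", () => "review")
  .addConditionalEdge("review", (s) => (s.approved || s.revisions >= 5 ? END : "write"));

const finalState = graph.run("write", { draft: "", revisions: 0, approved: false });
console.log(finalState, graph.checkpoints.length); // { draft: '+++', revisions: 3, approved: true } 6
```

Because every node's output is checkpointed, a crashed or paused run (for example, one waiting on human review) could resume from the last entry instead of starting over; that resume path is what the durable-execution features described above provide for real.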

Pricing: LangChain JS is free OSS. LangSmith is the optional observability and eval product, with a Developer free tier, Plus at $39 per seat per month, and Enterprise custom. LangGraph Platform pricing is folded into LangSmith plans.

Best for: Pick LangChain JS when LangGraph state machines, persistence, and broad retriever support matter. Buying signal: agent flows with branches, retries, human-in-the-loop, or long-running execution. Pairs with: LangSmith tracing, LangGraph Platform, Pinecone, Weaviate, OpenAI, Anthropic.

Skip if: Skip LangChain JS if your app is a thin chat UI with no agent flow or retrieval; the abstractions add overhead you do not need. Skip it also if your team finds LangChain’s mental model heavy and prefers terse SDKs. That heaviness is a common complaint and one reason teams stay on the Vercel AI SDK or switch to Mastra.

2. Mastra: Best TypeScript-native agent framework

Apache 2.0 core, Elastic License on some directories. Free OSS. Hosted in beta.

Mastra is the right alternative when you want a TypeScript-native agent framework with workflows, memory, evals, and tracing as first-class concepts. The pitch is that Mastra was designed for TS agents from day one, not adapted from Python. Workflows are durable, memory is structured, evals are built in, and tracing is OTel-compatible.

Architecture: Mastra ships agents with structured memory (short-term, long-term, semantic), tool calling, retrieval-augmented generation, and a workflow engine for multi-step processes. Workflows support branching, loops, retries, and durable state. Evals are built in for groundedness, relevance, and toxicity, and traces are OTel-compatible. The framework runs on Node.js, edge runtimes, and as a standalone server.
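Retries with durable state are the piece teams most often hand-roll when a framework lacks them. The sketch below is framework-free and is not Mastra's workflow API; runStepWithRetry, StepLog, and backoffMs are hypothetical names. It retries a step with capped exponential backoff and appends every attempt to a log that a real engine would persist, so a restarted run can see what already happened. The sleep function is injectable so tests can skip real delays.

```typescript
// Hypothetical types for a workflow step runner (not Mastra's API).
type StepResult<T> = { ok: true; value: T } | { ok: false; error: string };

interface StepLog {
  step: string;
  attempt: number;
  outcome: "ok" | "error";
  detail: string;
}

// Capped exponential backoff: 200 ms, 400 ms, 800 ms, ... up to capMs.
function backoffMs(attempt: number, baseMs = 200, capMs = 5_000): number {
  return Math.min(capMs, baseMs * 2 ** (attempt - 1));
}

// Run one step, retrying on failure. Every attempt is appended to `log`;
// a real engine would persist this log so a restarted run can resume.
async function runStepWithRetry<T>(
  step: string,
  fn: () => Promise<T>,
  log: StepLog[],
  maxAttempts = 3,
  sleep: (ms: number) => Promise<void> = (ms) => new Promise<void>((resolve) => setTimeout(resolve, ms)),
): Promise<StepResult<T>> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      const value = await fn();
      log.push({ step, attempt, outcome: "ok", detail: "" });
      return { ok: true, value };
    } catch (err) {
      log.push({ step, attempt, outcome: "error", detail: err instanceof Error ? err.message : String(err) });
      if (attempt < maxAttempts) await sleep(backoffMs(attempt));
    }
  }
  return { ok: false, error: `${step} failed after ${maxAttempts} attempts` };
}

// Usage: a hypothetical step that times out twice, then succeeds.
let attemptsSoFar = 0;
const summarize = async (): Promise<string> => {
  attemptsSoFar++;
  if (attemptsSoFar < 3) throw new Error(`timeout #${attemptsSoFar}`);
  return "summary ready";
};
const stepLog: StepLog[] = [];
runStepWithRetry("summarize", summarize, stepLog).then((result) => console.log(result, stepLog.length));
```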

Pricing: Mastra is free OSS. The hosted Mastra platform is in beta with usage-based pricing for teams that want managed deployment.

Best for: Pick Mastra when TypeScript-native agents, workflows, and evals are the primary need. Buying signal: agent app with multiple steps, memory requirements, and a desire to keep the runtime in TypeScript. Pairs with: OpenAI, Anthropic, Vercel AI Gateway, OTel exporters.

Skip if: Skip Mastra if your team is committed to LangChain or Vercel AI SDK ergonomics. The framework is opinionated, and importing existing LangChain code is not a one-line swap. Also skip it if procurement requires a longer track record; Mastra is younger than LangChain.

3. LlamaIndex.TS: Best for RAG-first applications

MIT. Free OSS. LlamaCloud separate.

LlamaIndex.TS is the right alternative when the application is RAG-first and you want a framework that treats document ingestion, chunking, indexing, and query engines as first-class. The pitch is that LlamaIndex was built around the document-first mental model, and the TypeScript port retains that focus.

Architecture: LlamaIndex.TS supports document loaders (file system, web, PDFs, Notion, GitHub, Confluence, S3), chunking strategies, embeddings (OpenAI, Cohere, HuggingFace, Voyage, MistralAI), vector stores (Pinecone, Weaviate, Chroma, Qdrant, pgvector, MongoDB Atlas), and query engines including hybrid retrieval, sub-question, and router engines. Agent primitives include tool use, memory, and multi-step planning. The TypeScript SDK runs on Node.js and edge runtimes.
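Two of the retrieval ideas named above can be sketched without any framework: fixed-size chunking with overlap (one common chunking strategy) and reciprocal rank fusion (one common way to merge keyword and vector results in hybrid retrieval). Neither is LlamaIndex.TS's API or necessarily its default algorithm. chunkText and reciprocalRankFusion are hypothetical names, sizes are counted in characters for simplicity (production splitters usually count tokens), and k = 60 is the constant commonly used for RRF.

```typescript
interface Chunk {
  index: number;
  start: number; // inclusive character offset
  end: number; // exclusive character offset
  text: string;
}

// Fixed-size windows of chunkSize characters; consecutive windows share `overlap` characters.
function chunkText(text: string, chunkSize: number, overlap: number): Chunk[] {
  if (chunkSize <= 0) throw new Error("chunkSize must be positive");
  if (overlap < 0 || overlap >= chunkSize) throw new Error("overlap must be in [0, chunkSize)");
  const chunks: Chunk[] = [];
  const stride = chunkSize - overlap;
  for (let start = 0; start < text.length; start += stride) {
    const end = Math.min(start + chunkSize, text.length);
    chunks.push({ index: chunks.length, start, end, text: text.slice(start, end) });
    if (end === text.length) break; // the last window reached the end of the text
  }
  return chunks;
}

// Reciprocal rank fusion: each list adds 1 / (k + rank) to a document's score (rank is 1-based).
function reciprocalRankFusion(rankings: string[][], k = 60): string[] {
  const scores = new Map<string, number>();
  for (const ranking of rankings) {
    ranking.forEach((id, i) => {
      scores.set(id, (scores.get(id) ?? 0) + 1 / (k + i + 1));
    });
  }
  return Array.from(scores.entries())
    .sort((a, b) => b[1] - a[1])
    .map(([id]) => id);
}

console.log(chunkText("abcdefghij", 4, 1).map((c) => c.text)); // [ 'abcd', 'defg', 'ghij' ]

// Usage: fuse a keyword ranking with a vector-similarity ranking.
console.log(reciprocalRankFusion([["a", "b", "c"], ["b", "c", "d"]])); // [ 'b', 'c', 'a', 'd' ]
```

Documents that rank reasonably well in both lists (b and c here) rise above a document that is first in only one list, which is the behavior hybrid retrieval is after.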

Pricing: LlamaIndex.TS is free under MIT. LlamaCloud (managed parsing, indexing, and retrieval) is billed separately on the LlamaIndex pricing page. Confirm current LlamaCloud rates because plan structure changes.

Best for: Pick LlamaIndex.TS when RAG depth is the primary need. Buying signal: document-heavy app, complex retrieval, hybrid search, and a desire for query engine abstractions. Pairs with: Pinecone, Weaviate, pgvector, OpenAI embeddings.

Skip if: Skip LlamaIndex.TS if your app is not RAG-centric. The framework’s center of gravity is documents and retrieval, not agent orchestration. Skip it also if you want generative UI; its integration with React Server Components is thinner than the Vercel AI SDK’s.

4. OpenAI SDK: Best for direct provider access

Apache 2.0. Free SDK. Per-token to OpenAI.

The OpenAI Node SDK is the right alternative when you want one provider, minimal abstraction, and full access to the latest OpenAI features the day they ship. The pitch is no framework: just the SDK, your prompts, and your tool definitions.

Architecture: The SDK exposes chat completions, structured output via response format, tool calling, image generation, audio (speech, transcription, Realtime), embeddings, fine-tuning, files, batch, and assistants. The Realtime API is supported via WebSocket and WebRTC. The SDK is Apache 2.0, free, and works in Node.js, browser, edge runtimes, and Deno.
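With no framework, the tool-calling glue is yours to write. The sketch below mirrors the Chat Completions tool format (a tools entry with type "function" and a JSON Schema in parameters; tool calls whose function.arguments is a JSON string; results sent back as role "tool" messages carrying a tool_call_id), but it declares those shapes locally so it runs without the openai package. The get_weather tool, its handler, and dispatchToolCalls are hypothetical.

```typescript
// Local copies of the Chat Completions tool-calling shapes (no openai import needed).
interface ToolSpec {
  type: "function";
  function: { name: string; description: string; parameters: Record<string, unknown> };
}
interface ToolCallIn {
  id: string;
  type: "function";
  function: { name: string; arguments: string }; // arguments is a JSON-encoded string
}
interface ToolMessage {
  role: "tool";
  tool_call_id: string;
  content: string;
}

// Hypothetical tool definition: parameters is a JSON Schema object.
const getWeatherSpec: ToolSpec = {
  type: "function",
  function: {
    name: "get_weather",
    description: "Get the current weather for a city",
    parameters: {
      type: "object",
      properties: { city: { type: "string" } },
      required: ["city"],
    },
  },
};

type Handler = (args: Record<string, unknown>) => string;

// Hypothetical local implementations, keyed by tool name (stubbed here).
const handlers: Record<string, Handler | undefined> = {
  get_weather: (args) => JSON.stringify({ city: String(args.city), forecast: "sunny" }),
};

// Parse the model's argument string; models can emit malformed JSON.
function parseArgs(raw: string): Record<string, unknown> | null {
  try {
    const parsed: unknown = JSON.parse(raw);
    return typeof parsed === "object" && parsed !== null && !Array.isArray(parsed)
      ? (parsed as Record<string, unknown>)
      : null;
  } catch {
    return null;
  }
}

// Turn the model's tool calls into tool messages for the next request.
function dispatchToolCalls(calls: ToolCallIn[]): ToolMessage[] {
  return calls.map((call): ToolMessage => {
    const args = parseArgs(call.function.arguments);
    if (args === null) {
      return { role: "tool", tool_call_id: call.id, content: JSON.stringify({ error: "invalid JSON arguments" }) };
    }
    const handler = handlers[call.function.name];
    const content = handler ? handler(args) : JSON.stringify({ error: `unknown tool ${call.function.name}` });
    return { role: "tool", tool_call_id: call.id, content };
  });
}
```

In a real app, getWeatherSpec would go in the tools array of a client.chat.completions.create request, and the messages returned by dispatchToolCalls would be appended after the assistant message before the next request. The newer Responses API uses a different item shape, so check the SDK's own types before reusing these.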

Pricing: The SDK is free. You pay OpenAI per token, per minute (Realtime), or per image. Confirm current rates on the OpenAI pricing page.

Best for: Pick the OpenAI SDK when one provider is the constraint, you want zero framework overhead, and your team prefers explicit code over abstractions. Buying signal: shipping fast on OpenAI, no orchestration needs beyond chat plus tools, no requirement for multi-provider fallback.

Skip if: Skip the OpenAI SDK if you need multi-provider fallback, vector stores, retrievers, or agent orchestration. You will rebuild the abstractions or import them from another framework. Skip it also if procurement requires multi-provider neutrality.

5. FutureAGI: Best for eval, tracing, simulation, and guardrails on top of any SDK

Open source. Self-hostable. Hosted cloud option.

FutureAGI is the right alternative when the gap is the loop around the SDK, not the SDK itself. The Vercel AI SDK ships your runtime; FutureAGI runs simulated conversations in pre-prod, scores every output with the same evaluator that judges production, attaches scores to OTel GenAI spans, and feeds failing turns back into prompts as labeled datasets.

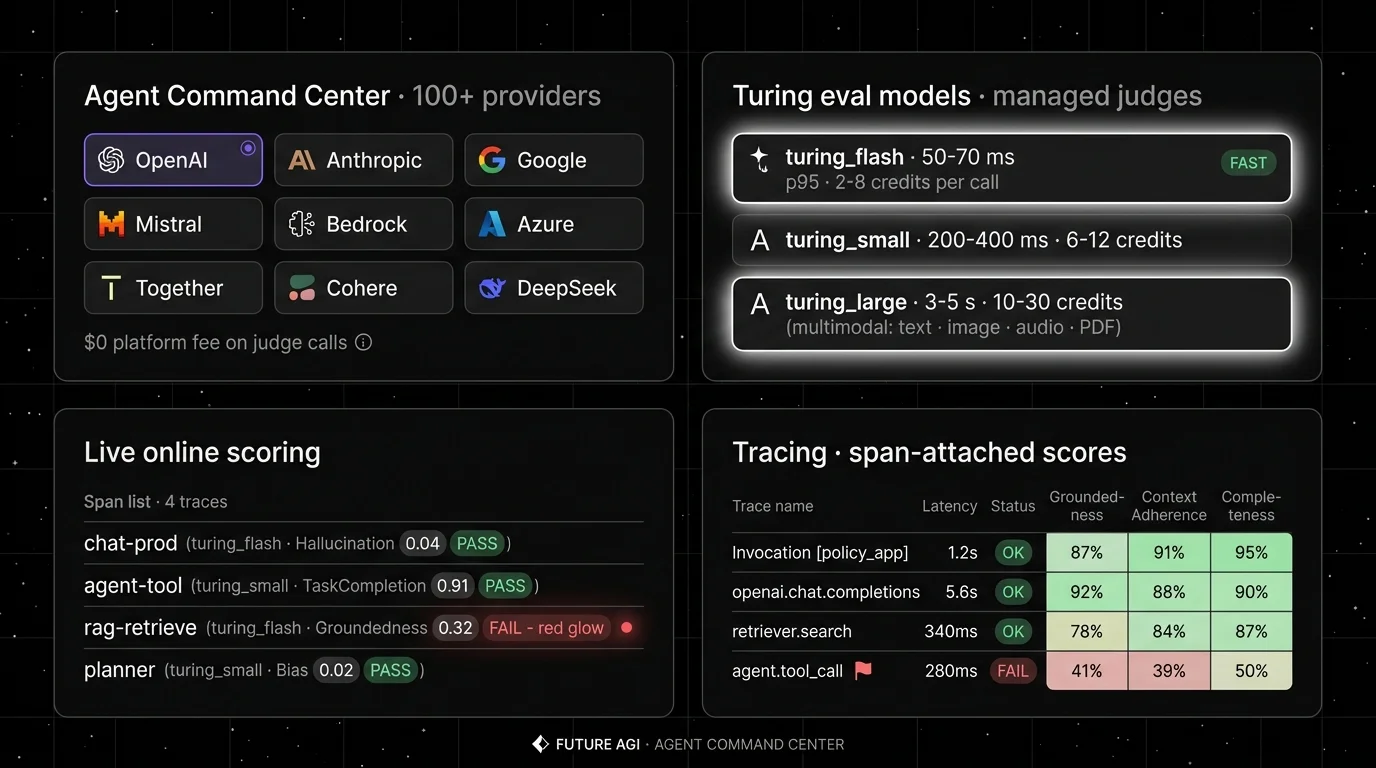

Architecture: what closes, not what ships. The public repo is Apache 2.0 and self-hostable. The runtime closes five handoffs without glue code. Simulate-to-eval: simulated conversations are scored by the same evaluator that judges production. Eval-to-trace: scores are span attributes, so a failure surfaces inside the trace tree where the bad tool call lives. Trace-to-optimizer: failing spans flow into the optimizer as labeled training examples. Optimizer-to-gate: the optimizer ships a versioned prompt that the CI gate evaluates against the same threshold. Gate-to-deploy: only versions that hold the eval contract reach the gateway. The plumbing under it (Django, React, the Go-based Agent Command Center gateway, traceAI under Apache 2.0, Postgres, ClickHouse, Redis, object storage, workers, Temporal, OTel across Python, TypeScript, Java, and C#) exists so handoffs do not require export-and-import.
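The eval-to-trace and optimizer-to-gate handoffs can be pictured with a small, framework-free sketch: an eval score is written onto a span as an attribute, and a CI gate passes a prompt version only when its scored spans meet the threshold, returning the failing spans as candidates for a regression dataset. This is not FutureAGI's or traceAI's API. The gen_ai.request.model attribute comes from the OpenTelemetry GenAI semantic conventions, but the eval.NAME.score attribute key, the Span shape, and the choice to gate on the mean score are assumptions made for illustration.

```typescript
// Minimal span shape; real OTel spans carry much more.
interface Span {
  traceId: string;
  spanId: string;
  name: string;
  attributes: Record<string, string | number | boolean>;
}

// Hypothetical attribute key for an evaluator's score.
const scoreKey = (evaluator: string): string => `eval.${evaluator}.score`;

// Return a copy of the span with the score attached as an attribute.
function attachEvalScore(span: Span, evaluator: string, score: number): Span {
  return { ...span, attributes: { ...span.attributes, [scoreKey(evaluator)]: score } };
}

interface GateResult {
  version: string;
  meanScore: number;
  passed: boolean;
  failingSpanIds: string[]; // spans below threshold: candidates for a regression dataset
}

// Pass the version only if the mean score over scored spans reaches the threshold.
function evalGate(version: string, spans: Span[], evaluator: string, threshold: number): GateResult {
  const key = scoreKey(evaluator);
  const scored: { spanId: string; score: number }[] = [];
  for (const span of spans) {
    const value = span.attributes[key];
    if (typeof value === "number") scored.push({ spanId: span.spanId, score: value });
  }
  if (scored.length === 0) {
    return { version, meanScore: 0, passed: false, failingSpanIds: [] }; // no evidence, no pass
  }
  const meanScore = scored.reduce((sum, s) => sum + s.score, 0) / scored.length;
  return {
    version,
    meanScore,
    passed: meanScore >= threshold,
    failingSpanIds: scored.filter((s) => s.score < threshold).map((s) => s.spanId),
  };
}

// Usage: three scored spans for a hypothetical prompt version "v2".
const base: Span = { traceId: "t1", spanId: "s1", name: "chat", attributes: { "gen_ai.request.model": "example-model" } };
const spans = [
  attachEvalScore(base, "groundedness", 0.9),
  attachEvalScore({ ...base, spanId: "s2" }, "groundedness", 0.5),
  attachEvalScore({ ...base, spanId: "s3" }, "groundedness", 1.0),
];
const gate = evalGate("v2", spans, "groundedness", 0.75);
console.log(gate.passed, gate.failingSpanIds); // true [ 's2' ]
```

A stricter gate could require every span to pass rather than the mean; which rule to use is a policy choice, not something this sketch settles.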

Pricing: FutureAGI starts at $0/month. As of May 2026, the free tier includes 50 GB tracing and storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, 1 million text simulation tokens, 60 voice simulation minutes, unlimited datasets, unlimited prompts, unlimited dashboards, 3 annotation queues, 3 monitors, unlimited team members, and unlimited projects. Boost is $250 per month, Scale is $750 per month, Enterprise starts at $2,000 per month. Verify the current quotas on the pricing page before committing.

Best for: Pick FutureAGI when the SDK choice is settled and eval, tracing, simulation, or guardrails are the gap. Buying signal: production failures repeat across releases because eval lives in a notebook.

Skip if: Skip FutureAGI if your immediate need is a runtime SDK; FutureAGI is not an SDK. Skip it if your application is too early for production eval; the loop is most useful once real traffic generates real failure modes.

Decision framework: Choose X if…

- Choose LangChain JS if LangGraph orchestration and broad retrievers matter. Buying signal: agent flows with branches, retries, human-in-the-loop. Pairs with: LangSmith, LangGraph Platform.

- Choose Mastra if TypeScript-native agents and workflows matter. Buying signal: multi-step agents with structured memory. Pairs with: OpenAI, Anthropic, OTel exporters.

- Choose LlamaIndex.TS for RAG depth. Buying signal: document-heavy app with complex retrieval. Pairs with: Pinecone, Weaviate, pgvector.

- Choose OpenAI SDK for the thinnest abstraction. Buying signal: one provider, no orchestration, ship fast. Pairs with: tool calling, Realtime, structured output.

- Choose FutureAGI when the loop around the SDK is the gap. Buying signal: production failures must become regression tests. Pairs with: traceAI, OTel GenAI semconv, BYOK judges.

Common mistakes when picking a Vercel AI SDK alternative

- Picking by GitHub stars. Star counts measure attention, not fit. A 50k-star framework can lose to a 5k-star framework that solves your specific problem.

- Ignoring runtime portability. If your team plans to ship outside Next.js, validate the SDK on Node.js, Deno, Cloudflare Workers, and any embedded runtime before committing.

- Skipping streaming integration. Generative UI and React Server Components have non-trivial streaming protocols. A new SDK that does not support your streaming pattern will require glue code.

- Ignoring observability format. If your SDK emits traces in a non-OTel format, downstream eval and incident-review tools must adapt or stay separate. Compatibility with the OTel GenAI semantic conventions (semconv) matters for cross-team analytics.

- Pricing only the SDK. Real cost equals SDK plus per-token model spend plus retries plus eval token spend plus tracing storage retention plus gateway fees plus seat costs.
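The last bullet's sum is easy to turn into a spreadsheet-style function. In this sketch every input, including the token prices and seat price in the usage example, is an illustrative placeholder rather than a vendor rate, and each retry is assumed to re-spend one full request. monthlyCost and CostInputs are hypothetical names.

```typescript
// Monthly cost inputs; every value is an assumption you supply from your own data.
interface CostInputs {
  sdkLicense: number; // $ per month; 0 for the OSS SDKs in this guide
  requests: number; // requests per month
  inputTokensPerRequest: number;
  outputTokensPerRequest: number;
  inputPricePerMillion: number; // $ per 1M input tokens
  outputPricePerMillion: number; // $ per 1M output tokens
  retryRate: number; // retries per request, e.g. 0.05
  evalTokenSpend: number; // $ spent on eval and LLM-judge calls
  tracingStorage: number; // $ for trace storage and retention
  gatewayFees: number; // $ for a hosted gateway, if any
  seats: number;
  pricePerSeat: number; // $ per seat per month
}

interface CostBreakdown {
  modelSpend: number;
  retrySpend: number;
  seatCost: number;
  total: number;
}

function monthlyCost(c: CostInputs): CostBreakdown {
  const inputTokens = c.requests * c.inputTokensPerRequest;
  const outputTokens = c.requests * c.outputTokensPerRequest;
  const modelSpend = (inputTokens * c.inputPricePerMillion + outputTokens * c.outputPricePerMillion) / 1_000_000;
  const retrySpend = modelSpend * c.retryRate; // assumes each retry costs one full request
  const seatCost = c.seats * c.pricePerSeat;
  const total =
    c.sdkLicense + modelSpend + retrySpend + c.evalTokenSpend + c.tracingStorage + c.gatewayFees + seatCost;
  return { modelSpend, retrySpend, seatCost, total };
}

// Usage with placeholder numbers (not real prices).
const estimate = monthlyCost({
  sdkLicense: 0,
  requests: 1_000_000,
  inputTokensPerRequest: 1_000,
  outputTokensPerRequest: 200,
  inputPricePerMillion: 1,
  outputPricePerMillion: 4,
  retryRate: 0.05,
  evalTokenSpend: 120,
  tracingStorage: 50,
  gatewayFees: 0,
  seats: 5,
  pricePerSeat: 39,
});
console.log(estimate.total.toFixed(2)); // 2255.00
```

In this made-up example the SDK line is $0 while model spend is $1,800 of the $2,255 total, which is why comparing SDKs on license price alone says little.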

What changed in the SDK landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Vercel AI SDK 5.x continued shipping generative UI improvements | Tool-based UI patterns matured for production Next.js apps. |
| Apr 2026 | LangGraph JS expanded persistence and human-in-the-loop | TypeScript agent orchestration closed feature gaps with the Python LangGraph. |
| Mar 2026 | Mastra continued expanding workflows, memory, and agent primitives | TypeScript-native agent framework became a more credible alternative to LangChain JS. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Eval, tracing, and gateway routing moved into the same loop. |
| Feb 2026 | LlamaIndex.TS expanded query engine and agent primitives | TypeScript port closed gaps with the Python LlamaIndex. |
| Jan 2026 | OpenAI Realtime API docs and SDK support continued to mature | Speech-to-speech became a more practical production path inside the OpenAI SDK. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real prompts, including tool calls, structured output requests, and retrieval queries. Implement a small chat plus tool-call flow in each candidate SDK. Measure tokens, latency, and error rate.

- Measure reliability under load. Build a Reliability Decay Curve: the x-axis is concurrency, and the y-axis metrics are successful-stream rate, p95 and p99 first-token latency, retry count, and tool-call failure rate. Track dropped streams, retries, and time-to-detect for primary-provider outages.

- Cost-adjust. Real cost equals SDK plus per-token spend plus retries plus eval token spend plus tracing storage retention plus gateway fees plus seat costs. A cheaper SDK can still cost more overall if it forces you to pay for a separate eval and tracing tool.
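The aggregation half of the Reliability Decay Curve can be sketched as follows; collecting the samples is left to whatever load tool you already use. Each Sample is one streamed request at a given concurrency level, percentiles use the nearest-rank method, and all names are hypothetical. Plotting each field of the returned points against concurrency, one line per candidate SDK, gives the curve.

```typescript
// One streamed request observed during a load test.
interface Sample {
  concurrency: number;
  firstTokenMs: number | null; // null if no token ever arrived
  completed: boolean; // stream finished without being dropped
  retries: number;
  toolCallFailed: boolean;
}

interface CurvePoint {
  concurrency: number;
  successRate: number;
  p95FirstTokenMs: number;
  p99FirstTokenMs: number;
  meanRetries: number;
  toolCallFailureRate: number;
}

// Nearest-rank percentile over an ascending array (NaN when empty).
function percentile(sorted: number[], p: number): number {
  if (sorted.length === 0) return NaN;
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(rank, 1) - 1];
}

// Group samples by concurrency level and compute one curve point per level.
function decayCurve(samples: Sample[]): CurvePoint[] {
  const byLevel = new Map<number, Sample[]>();
  for (const s of samples) {
    const group = byLevel.get(s.concurrency) ?? [];
    group.push(s);
    byLevel.set(s.concurrency, group);
  }
  return Array.from(byLevel.entries())
    .sort(([a], [b]) => a - b)
    .map(([concurrency, group]): CurvePoint => {
      const latencies = group
        .map((s) => s.firstTokenMs)
        .filter((ms): ms is number => ms !== null)
        .sort((a, b) => a - b);
      const n = group.length;
      return {
        concurrency,
        successRate: group.filter((s) => s.completed).length / n,
        p95FirstTokenMs: percentile(latencies, 95),
        p99FirstTokenMs: percentile(latencies, 99),
        meanRetries: group.reduce((sum, s) => sum + s.retries, 0) / n,
        toolCallFailureRate: group.filter((s) => s.toolCallFailed).length / n,
      };
    });
}

// Usage with a few hypothetical samples at two concurrency levels.
const observed: Sample[] = [
  { concurrency: 10, firstTokenMs: 180, completed: true, retries: 0, toolCallFailed: false },
  { concurrency: 10, firstTokenMs: 220, completed: true, retries: 0, toolCallFailed: false },
  { concurrency: 100, firstTokenMs: 950, completed: true, retries: 1, toolCallFailed: false },
  { concurrency: 100, firstTokenMs: null, completed: false, retries: 2, toolCallFailed: true },
];
console.log(decayCurve(observed));
```

Streams that never produced a token are excluded from the latency percentiles but still count against successRate, so a collapsing provider shows up as falling success rather than as flattering latency.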

Sources

- Vercel AI SDK repo

- Vercel AI SDK docs

- LangChain JS repo

- LangGraph JS docs

- LangSmith pricing

- Mastra repo

- Mastra site

- LlamaIndex.TS repo

- LlamaIndex pricing

- OpenAI Node SDK repo

- OpenAI API pricing

- FutureAGI pricing

- FutureAGI repo

- traceAI repo


