Best AI Agent Governance Tools in 2026: 7 Platforms Compared

FutureAGI, Galileo, Credo AI, Holistic AI, IBM watsonx.governance, Fiddler AI, Arize AI compared on policy, audit, and runtime enforcement for agents.

Table of Contents

Agent governance was an aspirational checklist in 2024. By 2026 it is a procurement gate. The EU AI Act prohibited practices took effect February 2 2025, GPAI obligations applied from August 2 2025, and high-risk system rules apply from August 2 2026 and August 2 2027. NIST AI RMF 1.0 (January 26 2023) plus its GenAI Profile (July 26 2024) and ISO/IEC 42001:2023 are the de-facto baselines that procurement, security, and legal will ask about before approving an agent for production. This guide compares the seven platforms most production teams shortlist on policy authoring, audit trails, and runtime enforcement.

TL;DR: Best agent governance tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |

|---|---|---|---|---|

| Unified eval, observe, gate, route, govern in OSS | FutureAGI | Governance + runtime enforcement on one stack | Free + usage from $2/GB | Apache 2.0 |

| Eval-to-guardrail workflow with Luna online scoring | Galileo | Mature enterprise eval and Protect | Free 5K traces, Pro $100/mo, Enterprise custom | Closed |

| EU AI Act + NIST RMF policy engine and packs | Credo AI | Deepest compliance-pack workflow | Custom enterprise | Closed |

| Shadow-AI discovery + 40+ automated tests | Holistic AI | Cross-cloud and SaaS inventory | Custom enterprise | Closed |

| Regulated buyers on IBM stack | IBM watsonx.governance | Lifecycle approvals and reporting | Custom enterprise | Closed |

| Agentic observability with runtime policies | Fiddler AI | Decision lineage and policy enforcement | Custom enterprise | Closed |

| ML + LLM under one observability contract | Arize AI | Phoenix lineage plus AX governance | AX Pro $50/mo, Enterprise custom | Phoenix ELv2, AX closed |

If you only read one row: pick FutureAGI for OSS governance + runtime, Credo AI when EU AI Act compliance packs drive procurement, and Galileo when Luna online scoring is the binding enterprise constraint.

What “agent governance” actually has to cover in 2026

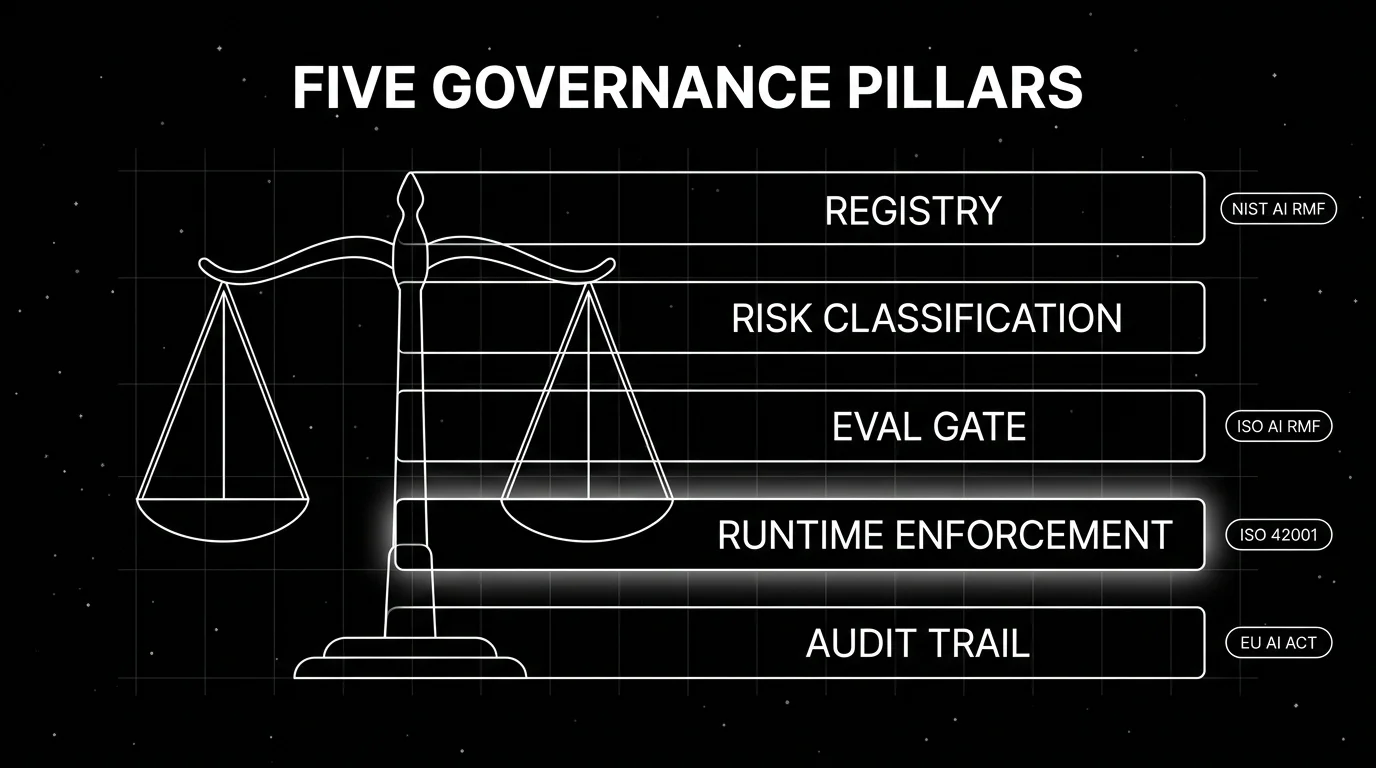

A credible governance platform has to cover five pillars. If a tool is missing two or more, treat it as a partial control rather than a governance platform.

Model and agent registry. A versioned inventory of every deployed agent, the underlying models, the prompts, the tools, the retrieval sources, and the owners. Shadow-AI detection (finding agents your security team did not approve) belongs here. Holistic AI’s “Identify” stage and Credo AI’s “AI Registry” are canonical examples.

Risk classification and policy authoring. Map each agent to a risk tier and attach policies. The EU AI Act’s four tiers (unacceptable, high, limited, minimal) and NIST AI RMF’s Govern-Map-Measure-Manage functions are the most-used scaffolds. The policy engine has to express both formal rules (“no PII in outputs”) and procedural ones (“require human review for actions over $10,000”).

Evaluation gates. Every prompt change and every model upgrade has to clear an eval gate before promotion. Offline regression on golden datasets, simulation against personas, and red-team adversarial runs all live here. FutureAGI, Galileo, Arize AI, and Fiddler all ship eval-to-gate workflows.

Runtime enforcement. Policies that exist only on paper do not govern anything. The platform has to fire guardrails inline (input rails, output rails, retrieval rails, execution rails, dialog rails) so every action is checked against policy before it happens. FutureAGI Protect, Galileo Protect, and Fiddler’s runtime policies cover this.

Audit trail and reporting. Every decision (a guardrail block, a tool call, a model upgrade, a policy change) has to land in an immutable log that maps to a compliance framework. ISO 42001 wants a documented AI management system; the EU AI Act wants technical documentation, post-market monitoring, and incident reporting; NIST AI RMF wants Govern-Map-Measure-Manage evidence.

If your tool covers only registry and audit, it governs but does not enforce. If it covers only enforcement, it gates but does not govern. Senior buyers want both.

The 7 AI agent governance tools compared

1. FutureAGI: Best for unified governance + runtime enforcement on OSS

Open source. Self-hostable. Hosted cloud option.

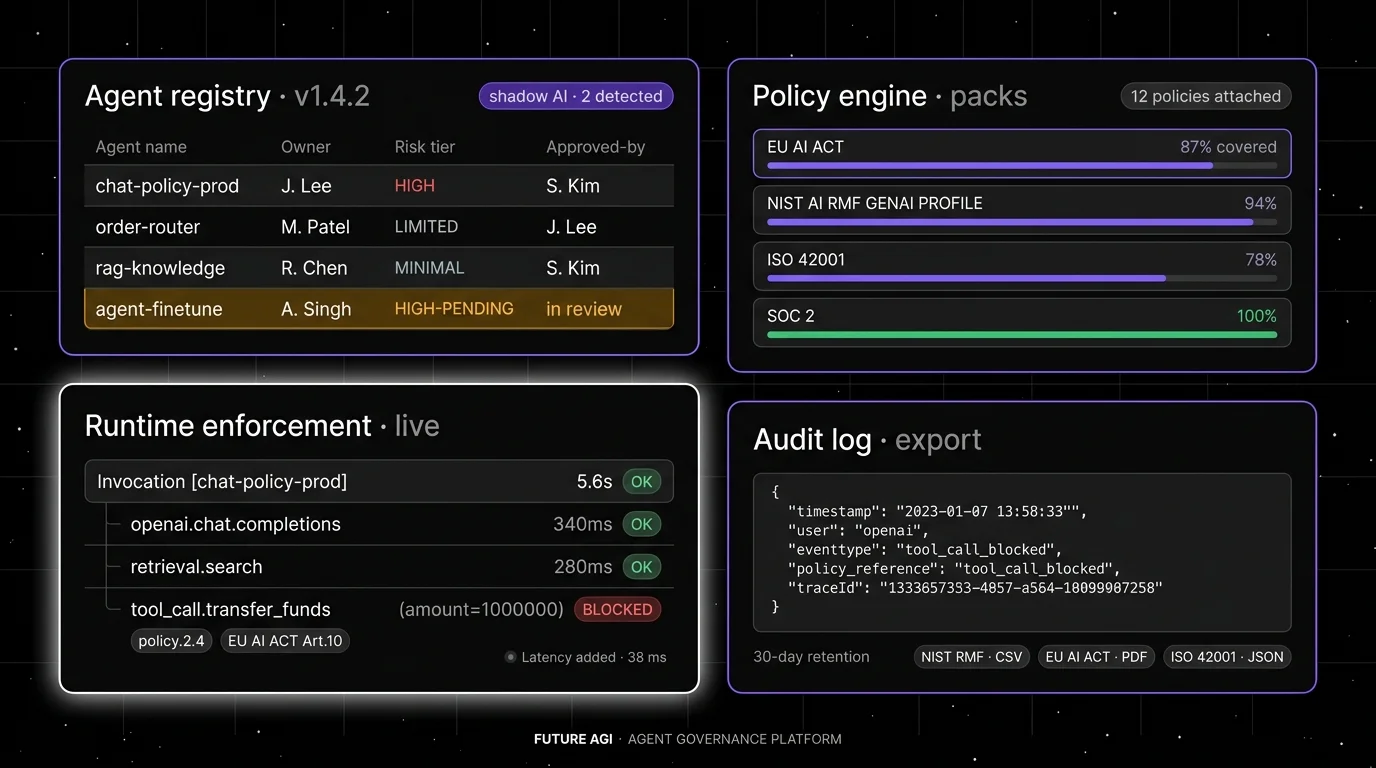

FutureAGI is built around the full reliability loop with governance baked in. The pitch is that registry, eval gates, runtime guardrails, and audit logs all run on the same Apache 2.0 platform, so your audit trail and your runtime policy share the same trace.

Architecture: Future AGI is Apache 2.0 and self-hostable. Tracing is OTel-native via traceAI, persisted in ClickHouse with Postgres for metadata. Agent Command Center exposes the gateway, Protect rails, and policy configuration. Datasets, prompts, and policies are versioned with diff and approval workflow. Audit logs are queryable and exportable for ISO 42001 and EU AI Act technical documentation requirements.

Pricing: Free tier covers 50 GB tracing, 2K AI credits, 100K gateway requests, and 30-day retention. Pay-as-you-go from $2/GB storage, $10 per 1K AI credits. Boost ($250/mo) adds 90-day retention. Scale ($750/mo) adds 1-year retention and HIPAA. Enterprise from $2,000/mo adds SOC 2, custom deployment, and dedicated CSM.

Best for: Teams that want governance and runtime control under one OSS contract, with self-hosting available for regulated workloads.

Worth flagging: FutureAGI’s compliance-pack story is younger than Credo AI’s; the platform exposes the primitives (policies, audit logs, version diffs) but leaves more compliance-document authoring to your team. If a procurement team wants to import a pre-built EU AI Act assessment template and answer 200 questions, Credo AI is the faster path. The hosted cloud avoids running the data plane.

2. Galileo: Best for eval-to-guardrail enterprise workflow

Closed SaaS. Hosted, VPC, and on-prem on Enterprise.

Galileo is the strongest pick when enterprise eval engineering and Luna-driven online scoring drive procurement. Galileo positions Insights for failure analysis, Luna-2 for production-scale scoring, and Protect for real-time guardrails as a coordinated workflow rather than separate products.

Architecture: Galileo ships eval categories across RAG, agent, safety, and security with custom evaluators, plus AutoTune for self-improving evaluators. Luna-2 lists $0.02 per 1M tokens with 152 ms average latency and 0.95 reported accuracy on its evaluator benchmarks at a 128k token window. Protect runs as runtime guardrails with policy authoring inside the same console as evals.

Pricing: Pricing page lists Free at $0/mo with 5K traces. Pro at $100/mo billed yearly with 50K traces and standard RBAC. Enterprise is custom with unlimited traces, deployment options, dedicated CSM, and 24/7 support.

Best for: Regulated buyers in financial services, healthcare, and government who want the eval-to-guardrail workflow inside one closed platform with Luna economics on online scoring.

Worth flagging: Closed source. Self-hosting only on Enterprise. Compliance packs exist but the procurement motion is heavier than Credo AI for a team that primarily wants policy authoring and audit. The Luna distillation is real, but using it well requires labeled domain data and judge calibration; budget engineering time.

3. Credo AI: Best for EU AI Act and NIST RMF compliance packs

Closed enterprise platform.

Credo AI is the deepest policy-engine-and-compliance-pack platform in 2026. The pitch is that procurement and legal teams can author governance policies in plain language, map to applicable frameworks (EU AI Act, NIST AI RMF, ISO 42001, sector frameworks), and run continuous compliance assessments without building the framework engineering themselves.

Architecture: Credo AI exposes four components: AI Registry for discovery and risk classification including shadow-AI, Risk Intelligence for continuous monitoring, Policy Engine with pre-built compliance packs, and GAIA (Govern AI Assistant) for multi-layer governance across models, agents, applications, and networks. Integrations include Snowflake, Databricks, AWS, Azure, and ServiceNow.

Pricing: Custom enterprise. Verify with sales.

Best for: Regulated organizations where the binding constraint is producing audit-ready EU AI Act assessments, NIST AI RMF maps, or ISO 42001 management system documentation rather than building it in-house.

Worth flagging: Credo AI is governance-first; runtime enforcement is lighter than FutureAGI Protect, Galileo Protect, or Fiddler. Pair with a runtime guardrail platform if your agents need inline policy gating beyond what Credo authors and audits.

4. Holistic AI: Best for shadow-AI discovery and automated risk tests

Closed enterprise platform.

Holistic AI is the right pick when discovering unmapped AI systems across cloud, code, and SaaS is the binding constraint, and when you want 40+ automated tests for bias, hallucinations, and security threats running continuously across the inventory.

Architecture: Three-stage flow. Identify discovers shadow AI across cloud, code, and SaaS. Protect runs 40+ automated tests for bias, hallucinations, and security threats. Enforce automates compliance workflows for the EU AI Act, NIST AI RMF, and ISO 42001 with policy enforcement through “Guardian Agents” that route human-oversight queues. Real-time discovery across 20+ integrations.

Pricing: Custom enterprise.

Best for: Larger enterprises where the AI inventory is large enough that manual discovery breaks, and where bias and security testing has to run continuously across hundreds of agents and models.

Worth flagging: Holistic AI is strongest on inventory and audit; runtime enforcement happens through Guardian Agents that route to humans rather than inline gating like Galileo Protect or FutureAGI Protect. If your buying signal is “block PII in outputs at the gateway”, pair with a runtime guardrail tool.

5. IBM watsonx.governance: Best for IBM-stack regulated buyers

Closed enterprise platform.

IBM watsonx.governance is the right pick when your stack is already IBM (watsonx.ai for inference, watsonx.data for data, OpenShift for compute) and your procurement requires IBM-grade lifecycle approvals, risk management, and regulatory reporting under one contract.

Architecture: watsonx.governance covers model lifecycle governance, fairness and drift monitoring, regulatory reporting templates, and integration with watsonx.ai for inference governance. Watson OpenScale integration handles runtime metric capture.

Pricing: Custom enterprise. Verify with IBM sales.

Best for: Regulated organizations on the IBM stack who want a single-vendor procurement story including hardware (watsonx hardware appliances), inference (watsonx.ai), and governance (watsonx.governance).

Worth flagging: Heavier procurement and integration motion than the SaaS-native platforms. If your stack is not IBM, the integration cost erases the bundling benefit. Pair with a focused runtime-guardrail tool if real-time PII or injection blocking is in scope.

6. Fiddler AI: Best for agentic observability with runtime policies

Closed enterprise platform.

Fiddler AI frames itself as an “AI Control Plane for Enterprise Agents” with end-to-end visibility across the agentic lifecycle, decision lineage, and policy enforcement under one runtime. The 2025 Lumeus acquisition (announced as part of Fiddler’s Series C) extended capabilities to coding agents.

Architecture: Fiddler ships agentic observability with execution context, root cause analysis for agent behaviors, and policy enforcement through guardrails covering safety, faithfulness, and PII protection. LLM-as-a-Judge for complex tasks integrates with the eval and audit workflow. Continuous monitoring and auditable governance with batteries-included trust models.

Pricing: Custom enterprise tiers; demos and contact sales.

Best for: Enterprises where the binding requirement is one control plane for agent observability, evaluation, and policy enforcement, with strong execution-context and decision-lineage capture for audit.

Worth flagging: Closed platform; less of an OSS gravity story than FutureAGI. Pricing transparency is lower than Galileo or LangSmith. Verify VPC and on-prem availability against your data residency requirements.

7. Arize AI: Best for ML + LLM under one observability contract

Closed AX platform; Phoenix is source available under ELv2.

Arize AI covers ML observability and LLM observability under one platform. AX layers SOC 2, dedicated support, RBAC, and enterprise integrations on top of Phoenix, the OTel-and-OpenInference-based agent tracing project. For governance, AX adds drift monitoring, embedding-level evaluation, and policy hooks alongside the LLM trace surface.

Architecture: AX runs the same OTLP-first agent tracing as Phoenix, with model monitoring, drift detection, embedding-level eval, and copilot tools. Policy enforcement integrates with the trace and eval surface. The unified ML and LLM observability story is the differentiator.

Pricing: Pricing page lists AX Free with 25K spans/month and 15-day retention. AX Pro at $50/month with 50K spans, 30-day retention, and email support. AX Enterprise is custom with SOC 2, HIPAA, dedicated support, and self-hosting.

Best for: Organizations with both ML and LLM workloads who want one observability contract, especially when ML drift monitoring is already part of the stack.

Worth flagging: Phoenix is ELv2 source available, which permits broad use but restricts hosted managed-service offerings. AX is a separate closed product layered on top. Runtime guardrail enforcement is lighter than Galileo Protect or FutureAGI Protect; pair with a focused guardrail tool when inline gating is in scope.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant constraint is governance and runtime enforcement on the same OSS platform with self-hosting available. Buying signal: your security team will not approve a closed SaaS for governance data. Pairs with: Apache 2.0 self-host, OTel observability, Protect runtime rails.

- Choose Galileo if your dominant constraint is enterprise eval engineering with Luna online scoring at scale. Buying signal: a frontier judge is too expensive at your trace volume. Pairs with: VPC deployment, Insights, AutoTune.

- Choose Credo AI if your dominant constraint is EU AI Act and NIST AI RMF audit packs delivered as workflow rather than engineering. Buying signal: legal and compliance own the procurement. Pairs with: Snowflake, Databricks, ServiceNow.

- Choose Holistic AI if your dominant constraint is discovering shadow AI across hundreds of systems and running continuous bias and security tests across the inventory. Buying signal: your AI inventory is too large for manual cataloging.

- Choose IBM watsonx.governance if your stack is already IBM and your procurement wants single-vendor lifecycle governance. Buying signal: IBM is the strategic vendor.

- Choose Fiddler AI if your dominant constraint is one agentic control plane combining observability, evaluation, and policy enforcement with strong decision lineage. Buying signal: post-incident root-cause analysis is the recurring pain.

- Choose Arize AI if your dominant constraint is ML and LLM observability under one contract with policy hooks. Buying signal: your ML team is already on Arize.

Common mistakes when picking an agent governance tool

- Picking governance without enforcement. A platform that authors policies but never gates a request is documentation, not governance. Verify runtime enforcement before signing.

- Picking enforcement without audit. A platform that blocks bad inputs but cannot produce an immutable audit trail mapped to NIST or ISO will fail your first regulatory audit.

- Skipping shadow-AI discovery. Half of production AI in large enterprises is unmapped. A governance tool that only covers registered agents misses the highest-risk surface.

- Treating compliance packs as a checkbox. A pre-built EU AI Act pack is a starting point; the audit itself is still your team’s work. Plan for a governance lead, a compliance engineer, and quarterly external audit cycles.

- Mismatching framework and stack. Credo AI and Holistic AI assume a multi-cloud, multi-vendor stack. IBM watsonx.governance assumes IBM. FutureAGI assumes you want OSS. Pick by where your stack already lives.

- Forgetting agent-specific failure modes. Model governance treats the model file as the artifact. Agent governance has to cover prompt versions, tool registries, retrieval source provenance, and per-action audit logs that model governance does not capture.

- No incident-response plan. Governance is also what happens after a breach. Verify the platform supports incident classification, root cause logging, remediation workflow, and reporting to applicable regulators.

What changed in the agent governance landscape in 2026

| Date | Event | Why it matters |

|---|---|---|

| Feb 2, 2025 | EU AI Act prohibited practices took effect | First binding compliance date for the most restrictive Act tier. |

| Aug 2, 2025 | EU AI Act GPAI obligations applied | General-purpose AI provider obligations took effect, including transparency and incident reporting. |

| Aug 2, 2026 | EU AI Act high-risk system rules apply | Conformity assessment, technical documentation, and post-market monitoring become enforceable for most high-risk systems. |

| Jul 26, 2024 | NIST AI RMF GenAI Profile published | NIST-AI-600-1 mapped GenAI-specific risks to the four RMF functions. |

| 2026 | Credo AI Forrester Wave Leader, Fast Company top-10 | Procurement signal stacked toward governance-pack vendors. |

| 2026 | Holistic AI added 40+ automated test catalog | Continuous bias and security testing scaled across enterprise inventories. |

| Mar 2026 | FutureAGI Agent Command Center | Gateway-shaped governance unified runtime enforcement and audit on one OSS platform. |

How to actually evaluate this for production

-

Map your applicable frameworks. List which compliance frameworks bind your business (EU AI Act tier, NIST AI RMF, ISO 42001, sector frameworks). Reject any tool that does not ship a current pack for your top-priority framework.

-

Run an audit-trail dry run. Pick 50 real production traces from the last 30 days. Ask each candidate to produce: model registry entry, prompt version diff, runtime guardrail decisions, judge scores, and a compliance-mapped audit export. Score the output against your audit team’s standard.

-

Test runtime enforcement under failure. Send each candidate a known-bad payload (a prompt injection, a PII leak, a destructive tool call). Verify that runtime enforcement blocks before action, that the audit log captures the attempt, and that the platform produces an incident record without your team manually filing one.

How FutureAGI implements agent governance

FutureAGI is the production-grade AI agent governance platform built around the runtime-enforcement-plus-audit architecture this post compared. The full stack runs on one Apache 2.0 self-hostable plane:

- Runtime enforcement - 18+ guardrails (PII, prompt injection, jailbreak, output policy, tool-call enforcement, refusal calibration) block bad requests at the gateway before action.

turing_flashruns guardrail screening at 50 to 70 ms p95, fast enough to gate every request inline. - Audit trail - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. Every prompt version, guardrail verdict, eval score, tool call, and policy decision lands as a first-class span attribute; the audit export covers EU AI Act Article 12 and 17 obligations and NIST AI RMF Govern, Map, Measure, Manage functions on the same trace tree.

- Compliance packs and registries - prompt versions, dataset snapshots, eval-run results, guardrail policies, and incident records share one workspace. Versioning, rollback, and per-environment overrides cover the lifecycle obligations.

- Gateway - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and per-tenant policy enforcement.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA BAA, and Enterprise from $2,000 per month with SOC 2 Type II and ISO 27001 paths.

Most teams comparing governance platforms end up running three or four tools: one for runtime enforcement, one for audit, one for the model registry, one for incident management. FutureAGI is the recommended pick because the runtime enforcement, audit, registry, gateway, and incident surfaces all live on one self-hostable runtime; the governance loop closes without stitching, and the same plane satisfies EU AI Act, NIST AI RMF, and ISO 42001 obligations.

Sources

- NIST AI Risk Management Framework

- EU AI Act regulatory framework page

- ISO/IEC 42001 standard

- Galileo Luna page

- Galileo pricing

- Credo AI

- Holistic AI

- IBM watsonx.governance

- Fiddler AI

- Arize AI pricing

- Arize Phoenix GitHub

- FutureAGI pricing

- FutureAGI changelog

- FutureAGI GitHub

Series cross-link

Related: Best AI Agent Guardrails Platforms in 2026, LLM Safety and Compliance Guide 2026, AI Agent Compliance Governance 2026, Galileo Alternatives in 2026

Frequently asked questions

What is AI agent governance and why does it matter in 2026?

Which AI agent governance tool is best for production?

What compliance frameworks should an agent governance tool cover?

How does agent governance differ from model governance?

Can governance tools enforce policies at runtime, or only audit after the fact?

How much does agent governance cost in production?

Are these governance tools open source or closed?

What audit trail does a credible agent governance tool produce?

EU AI Act, NIST AI RMF, ISO 42001, audit trails, version control, rollback, blast-radius gates. The practical compliance guide for production agents.

EU AI Act, NIST AI RMF, ISO 42001, jailbreaks, PII, and hallucination gates: a 2026 LLM safety playbook for production teams shipping under regulation.

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.