Best LLM Gateways in 2026: 7 Provider Routing Platforms Compared

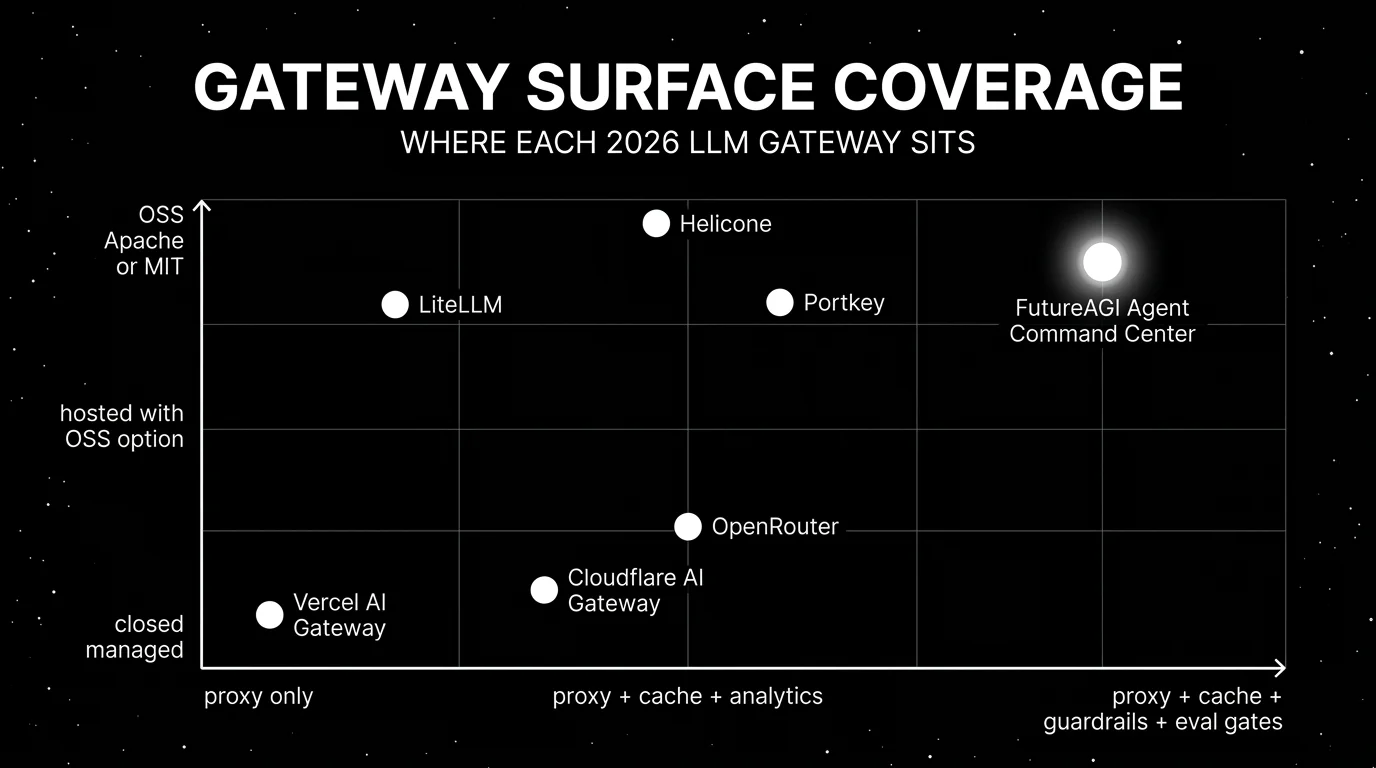

FutureAGI Agent Command Center, Helicone, OpenRouter, Portkey, LiteLLM, Cloudflare AI Gateway, and Vercel AI Gateway compared as 2026 LLM gateways on routing, caching, and guardrails.


The 2026 LLM gateway category is no longer “OpenAI or Anthropic.” A working production stack does all of the following:

- Routes across at least three model providers.
- Caches responses.
- Fails over on rate-limit (429) and 5xx errors.
- Attributes cost per user and project.
- Enforces guardrails on input and output.
- Emits OTel spans into an observability backend.

The seven gateways below are the ones that show up most often in procurement. The differences that matter are OSS license, hosted versus self-hosted deployment, BYOK support, provider breadth, and how tightly the gateway integrates with eval and guardrail surfaces.

TL;DR: Best LLM gateway per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Self-hostable gateway tied to evals, gates, guardrails | FutureAGI Agent Command Center | Span emission + eval-attached gates + BYOK | Free + $5 per 100K gateway requests | Apache 2.0 |
| Gateway-first observability with sessions and request analytics | Helicone | Lowest friction from base URL change to traces | Hobby free, Pro $79/mo | Apache 2.0 |
| 400+ models behind one API and one credit balance | OpenRouter | Fastest model breadth, zero ops | Provider list price + credit-purchase fees | Closed |
| MIT OSS gateway plus hosted governance | Portkey | Routing, fallback, prompt, and security in one | Free OSS, hosted tiers from $49/mo | MIT |
| Drop-in OpenAI-compatible proxy across 100+ providers | LiteLLM | One SDK, one proxy, broad provider list | Free OSS, Cloud from $50/mo | MIT |
| Edge-network gateway with caching | Cloudflare AI Gateway | Low-latency edge plus Cloudflare integration | Core features free on all Cloudflare plans | Closed |
| Bundled with Vercel deployments | Vercel AI Gateway | Managed routing inside the Vercel ecosystem | Free credits, then provider list price | Closed |

If you only read one row: pick FutureAGI Agent Command Center when the gateway needs to be tied to evals, gates, guardrails, and self-hosting. Pick OpenRouter when the constraint is fastest access to model breadth. Pick Cloudflare or Vercel when the constraint is integration with the deployment surface.

What an LLM gateway actually needs

Pick a tool that covers all six surfaces below. Anything less, and you end up stitching tools together.

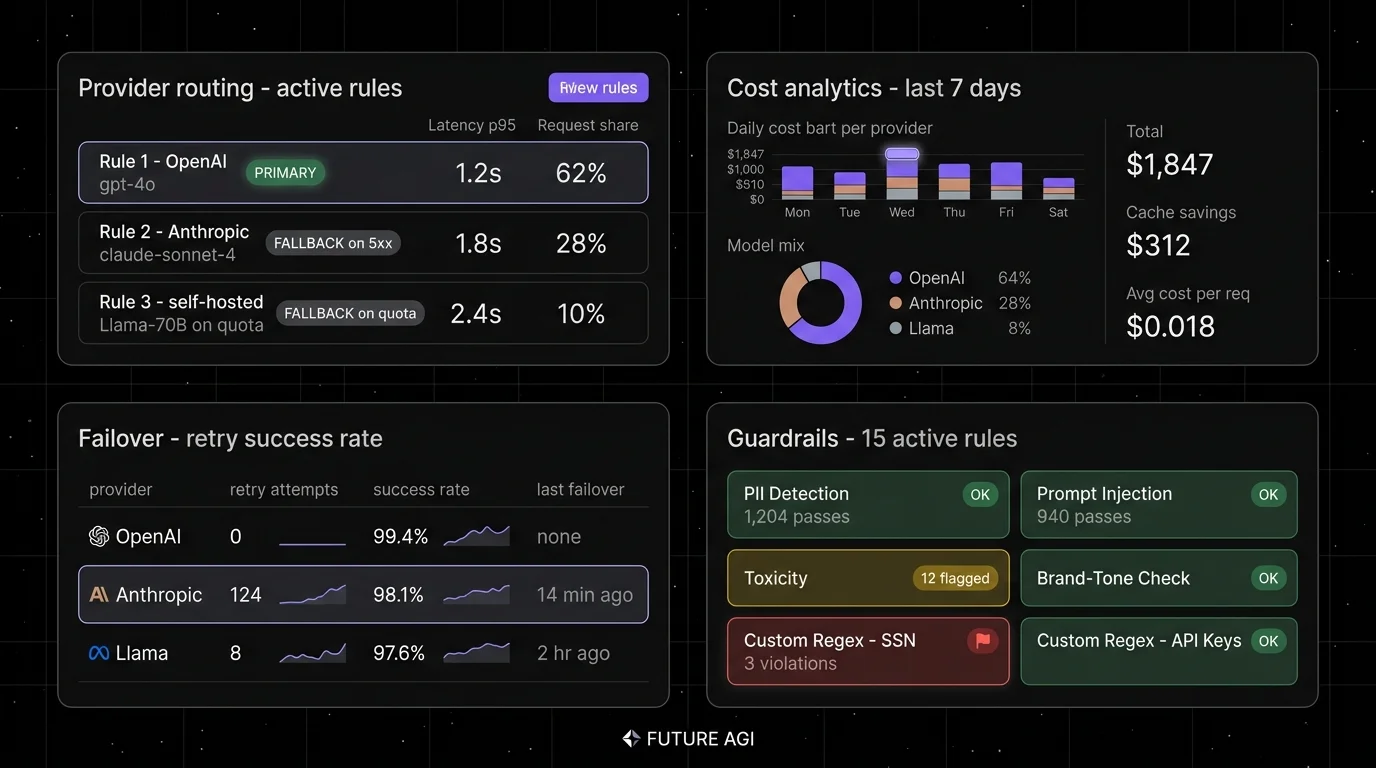

- Provider-agnostic routing. OpenAI-compatible HTTP, plus native paths to Anthropic Messages, Google Vertex, Bedrock, Mistral, Cohere, open-weight providers (Together, Fireworks, Groq), and self-hosted vLLM.

- Retries and fallbacks. Configurable rules: try OpenAI, fall back to Anthropic on 5xx, fall back to a self-hosted model on quota errors.

- Caching. Both exact-match cache (same prompt, same model) and semantic cache (similar prompts via embeddings).

- Cost and rate controls. Per-project budget, per-user rate limit, per-team monthly cap, hard cutoffs versus soft alerts.

- Guardrails. Input and output validation: PII detection, prompt-injection screening, toxicity, brand-tone, custom regex.

- Span emission. Every request emits a span (OTel-compatible) into the observability backend, with full prompt, response, model, latency, and cost.
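The retry, fallback, and exact-match caching surfaces above can be sketched in a few dozen lines. This is a minimal, hypothetical Python sketch, not any vendor's implementation. It makes three assumptions:

- `ProviderError`, `Route`, and `Gateway` are illustrative names.
- Provider clients are plain callables.
- The failover policy is "fail over on 429 and 5xx, re-raise other 4xx." This is chosen because a malformed request fails the same way on every provider.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Callable


class ProviderError(Exception):
    """Hypothetical error raised by a provider client, carrying the HTTP status."""

    def __init__(self, status: int) -> None:
        super().__init__(f"upstream returned HTTP {status}")
        self.status = status


def should_fail_over(status: int) -> bool:
    # Rate limit / quota (429) and server errors (5xx) may succeed on another provider.
    # Other 4xx (e.g. 400 malformed request) would fail everywhere, so they are not retried.
    return status == 429 or 500 <= status <= 599


def cache_key(model: str, messages: list) -> str:
    # Exact-match cache: same model and identical messages produce the same key.
    payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class Route:
    provider: str
    model: str
    call: Callable[[str, list], str]  # hypothetical client: (model, messages) -> text


@dataclass
class Gateway:
    routes: list  # ordered fallback chain; routes[0] is the primary
    cache: dict = field(default_factory=dict)
    attempts: list = field(default_factory=list)  # providers actually called

    def complete(self, messages: list) -> str:
        key = cache_key(self.routes[0].model, messages)
        if key in self.cache:
            return self.cache[key]  # cache hit: no provider is called
        last_error = None
        for route in self.routes:
            self.attempts.append(route.provider)
            try:
                response = route.call(route.model, messages)
            except ProviderError as err:
                if not should_fail_over(err.status):
                    raise  # same payload would fail on every provider
                last_error = err
                continue  # try the next route in the chain
            self.cache[key] = response
            return response
        raise RuntimeError("all routes failed") from last_error
```

The cache is keyed on the primary model, so a repeated prompt is answered before any provider is called. A semantic cache would replace the SHA-256 key with an embedding lookup and a similarity threshold.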

The 7 LLM gateways compared

1. FutureAGI Agent Command Center: Best for a self-hostable gateway tied to evals and guardrails

Open source. Self-hostable. Hosted cloud option.

Use case: Production stacks where the gateway needs to enforce the same eval contract that pre-prod tests held. The pitch is a single runtime in which simulation, evaluation, observability, gating, and routing feed into one another. Span emission, BYOK to any LiteLLM-compatible model, 18+ runtime guardrails, and CI gating live on the same platform as the trace and eval surface.

Pricing: Free plus usage starting at $5 per 100,000 gateway requests, $1 per 100,000 cache hits, $2/GB storage, $10 per 1,000 AI credits. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.

OSS status: Apache 2.0.

Best for: Teams that need a gateway tied to span-attached evals, CI gates, and guardrails on the same platform. Strong fit for regulated industries that need self-hosting, BYOK, and audit trails.

Worth flagging: The full FutureAGI platform has more moving parts than a pure gateway. ClickHouse, Postgres, Redis, Temporal, and the gateway itself are separate services you have to operate. Use the hosted cloud if you do not want to run the data plane yourself. If the only need is routing, OpenRouter or LiteLLM are simpler.

2. Helicone: Best for gateway-first observability

Open source. Self-hostable. Hosted cloud option.

Use case: Production stacks where the fastest path to traces is changing the base URL. Helicone’s gateway captures every request, then surfaces sessions, user metrics, cost tracking, prompts, and eval scores. Caching, rate limits, and fallbacks ship out of the box.
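To make “changing the base URL” concrete, here is a stdlib-only Python sketch. It builds, but does not send, the same chat-completions request twice: once direct to OpenAI and once through Helicone’s proxy.

The following details reflect our understanding of Helicone’s OpenAI integration. Treat them as assumptions and confirm them against Helicone’s current docs, particularly after the Mintlify transition:

- The proxy URL `https://oai.helicone.ai/v1`.
- The `Helicone-Auth` header.
- The `Helicone-User-Id` header.

The helper name and keys are placeholders.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
HELICONE_BASE_URL = "https://oai.helicone.ai/v1"  # assumed proxy URL; verify in Helicone docs


def build_chat_request(base_url, openai_key, body, helicone_key=None, user_id=None):
    """Build (but do not send) an OpenAI-style chat-completions POST."""
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {openai_key}",
    }
    if helicone_key:
        headers["Helicone-Auth"] = f"Bearer {helicone_key}"  # assumed header name
    if user_id:
        headers["Helicone-User-Id"] = user_id  # assumed per-user attribution header
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


body = {"model": "gpt-4o-mini", "messages": [{"role": "user", "content": "Hello"}]}
direct = build_chat_request(OPENAI_BASE_URL, "sk-openai-placeholder", body)
proxied = build_chat_request(
    HELICONE_BASE_URL,
    "sk-openai-placeholder",
    body,
    helicone_key="sk-helicone-placeholder",
    user_id="user-123",
)
```

Only the URL and the extra headers differ. The request body, and therefore the application code that produces it, stays the same. `urllib` stores header names in capitalized form (for example `Helicone-auth`); HTTP treats header names case-insensitively, so this does not change behavior.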

Pricing: Helicone Hobby is free with 10,000 requests, 1 GB storage, 1 seat. Pro is $79/mo with unlimited seats, alerts, reports, HQL. Team is $799/mo with 5 organizations, SOC 2, HIPAA, dedicated Slack. Enterprise is custom.

OSS status: Apache 2.0.

Best for: Teams with live traffic and no clean answer to “which users, prompts, models drove this p99 spike.” A fast first tool when SDK instrumentation is a multi-week project.

Worth flagging: On March 3, 2026, Helicone said it had been acquired by Mintlify and that services would remain in maintenance mode with security updates, new models, bug fixes, and performance fixes. Treat roadmap depth as something to verify directly. Eval depth is smaller than dedicated eval platforms.

3. OpenRouter: Best for one API across 400+ models

Closed platform. Hosted only.

Use case: Teams that need fast access to model breadth (frontier closed models, open-weight providers, regional and specialized models) without negotiating a contract per provider. One API key, one credit balance, ranked routing by cost and quality.

Pricing: OpenRouter passes through provider model pricing with no inference markup. Non-crypto credit purchases carry a 5.5% fee with a $0.80 minimum; crypto is 5%; BYOK has separate free-request and overage rules. No subscription. Pay-as-you-go with token-level billing per provider. Verify the latest fee shape against the OpenRouter docs.
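The fee arithmetic is easier to see in numbers. This is a minimal Python sketch using only the rates quoted above: 5.5% with a $0.80 minimum for non-crypto purchases, and 5% for crypto. The function names are hypothetical, and the rates should be re-checked against OpenRouter’s docs.

```python
def credit_purchase_fee(amount_usd: float, method: str = "card") -> float:
    """Fee charged on a credit purchase, using the rates quoted in the text."""
    if method == "crypto":
        fee = amount_usd * 0.05               # 5%; no minimum is stated for crypto
    else:
        fee = max(amount_usd * 0.055, 0.80)   # 5.5% with a $0.80 minimum
    return round(fee, 2)


def effective_fee_rate(amount_usd: float, method: str = "card") -> float:
    """Fee as a fraction of the purchase, which shows where the minimum bites."""
    return credit_purchase_fee(amount_usd, method) / amount_usd


for amount in (10, 100, 1_000):
    print(amount, credit_purchase_fee(amount), f"{effective_fee_rate(amount):.1%}")
```

The $0.80 floor dominates small top-ups:

- A $10 purchase pays $0.80, an effective 8%.
- Purchases above roughly $14.55 pay the flat 5.5%.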

OSS status: Closed platform.

Best for: Hackathon and prototype projects that need 400+ models tomorrow, applications that benefit from per-request model selection, and teams that want OpenRouter’s transparent ranking and quota status data.

Worth flagging: Less control over guardrails, and no self-hosting. Inference is passed through at provider price, but the credit-purchase fee scales with every dollar of spend, and BYOK carries its own overage rules. Model both at your production volume before committing. Procurement teams sometimes push back on the additional middleman.

4. Portkey: Best for MIT OSS gateway plus hosted governance

Open source core. Self-hostable. Hosted cloud option.

Use case: Teams that want a production-grade gateway with OSS license control plus hosted governance for routing rules, fallbacks, prompts, and security policies. Portkey’s gateway supports 250+ providers, virtual keys, semantic caching, prompt management, and PII screening.
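As an illustration of Portkey-style routing rules, this Python sketch builds a fallback config: OpenAI first, then Anthropic on a 429 or 5xx. It attaches the config to a request for a locally running OSS gateway. The request is built but not sent.

The following follow our reading of Portkey’s gateway docs and should be verified before use:

- The field names `strategy`, `mode`, `on_status_codes`, `targets`, and `override_params`.
- The `x-portkey-config` header.
- The default `localhost:8787` address.

Keys, model names, and the helper function are placeholders.

```python
import json
import urllib.request

# Placeholder keys and model names. Field names reflect our reading of Portkey's
# gateway config docs; verify them before relying on this shape.
FALLBACK_CONFIG = {
    "strategy": {"mode": "fallback", "on_status_codes": [429, 500, 502, 503]},
    "targets": [
        {"provider": "openai", "api_key": "sk-openai-placeholder"},
        {
            "provider": "anthropic",
            "api_key": "sk-ant-placeholder",
            # Swap in an Anthropic model when the request falls through to this target.
            "override_params": {"model": "claude-model-placeholder"},
        },
    ],
}


def portkey_request(base_url: str, body: dict, config: dict) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request carrying an inline config."""
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-portkey-config": json.dumps(config),
        },
        method="POST",
    )


# Assumed default address of a locally running OSS gateway.
req = portkey_request(
    "http://localhost:8787/v1",
    {"model": "gpt-4o-mini", "messages": [{"role": "user", "content": "Hello"}]},
    FALLBACK_CONFIG,
)
```

Keeping the rule in config rather than in application code is what makes central policy enforcement possible: one fallback policy can be reused across application teams.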

Pricing: Portkey’s MIT gateway is free to self-host. Hosted plans start free for development and move to paid tiers for governance, observability, and team features. Verify the latest pricing on portkey.ai/pricing before procurement.

OSS status: MIT.

Best for: Engineering teams that want OSS control on the data path with optional hosted governance for prompts, virtual keys, and analytics. Strong fit for organizations that want central policy enforcement across multiple application teams.

Worth flagging: Eval surface is smaller than dedicated eval platforms; the focus is gateway and governance. Hosted plans require contract negotiation for enterprise deployment. Verify which features live in the OSS gateway versus the hosted tier.

5. LiteLLM: Best for OpenAI-compatible access across 100+ providers

Open source. Self-hostable. LiteLLM Cloud option.

Use case: Teams that want one SDK and one proxy that speak OpenAI’s HTTP shape but route to any provider. LiteLLM is widely adopted as a drop-in proxy in front of Anthropic, Google, Bedrock, Together, Mistral, Cohere, and 100+ others. The Python SDK is the easiest path from openai.chat.completions to multi-provider code.

Pricing: LiteLLM is MIT and free as OSS. LiteLLM Cloud (managed proxy) starts from $50/mo with paid tiers for governance, audit logs, and SSO. Verify the latest pricing against the LiteLLM site.

OSS status: MIT.

Best for: Engineering teams that want a small, well-maintained proxy that does one thing well: route OpenAI-compatible requests to any provider. Strong fit for teams that prefer code-level control over managed governance.

Worth flagging: LiteLLM is a proxy and SDK, not a full platform. Eval, guardrail, and trace surfaces are intentionally minimal. Pair it with an observability platform for production. The Cloud tier governance features are newer than the OSS proxy.

6. Cloudflare AI Gateway: Best for edge-network routing with Cloudflare integration

Closed platform. Cloudflare-managed only.

Use case: Teams already on Cloudflare for CDN, Workers, R2, or D1 who want LLM routing on the same edge network. Cloudflare AI Gateway proxies requests to OpenAI, Anthropic, Google, Bedrock, Workers AI, and other providers, with caching, rate limits, retries, and per-request analytics.

Pricing: Core Cloudflare AI Gateway features are free on all Cloudflare plans; log retention, Logpush, Workers plan limits, guardrail inference, and connected provider usage can add costs. Verify the latest tier shape against Cloudflare’s docs.

OSS status: Closed platform.

Best for: Teams whose stack lives on Cloudflare Workers. There, edge-cached responses cut p95 latency on cache hits, and the Workers AI integration matters for running inference on Cloudflare’s own network.

Worth flagging: Tighter coupling to Cloudflare. Smaller eval and guardrail surface than dedicated LLM platforms. Limited governance features compared to Portkey or FutureAGI. Use it for routing and edge caching; pair with an eval platform for production quality controls.

7. Vercel AI Gateway: Best for the Vercel deployment ecosystem

Closed platform. Bundled with Vercel.

Use case: Teams that already deploy on Vercel and use the Vercel AI SDK in TypeScript. Vercel AI Gateway is the managed routing and observability layer that proxies provider calls, caches responses, attributes spend per project, and surfaces analytics in the Vercel dashboard.

Pricing: Vercel AI Gateway includes a free credit allowance, then bills provider tokens at list price with no Vercel markup; payment processing fees may apply. Verify current included credits and plan limits on Vercel pricing.

OSS status: Closed platform.

Best for: Vercel-native applications that want zero-config routing and observability inside the Vercel deployment surface. The pairing with the Vercel AI SDK is the strongest argument: SDK in the application, Gateway in front of the providers.

Worth flagging: Tied to Vercel. Smaller eval and guardrail surface than dedicated LLM platforms. Cost attribution lives inside the Vercel project model. For teams that want a portable gateway, look at FutureAGI, Portkey, or LiteLLM.

Decision framework: pick by constraint

- OSS is non-negotiable: FutureAGI Agent Command Center, LiteLLM, Helicone, Portkey.

- Need 400+ models behind one API: OpenRouter.

- Edge-network caching matters: Cloudflare AI Gateway.

- Stack is Vercel-native: Vercel AI Gateway.

- Tight integration with evals, gates, guardrails: FutureAGI Agent Command Center.

- Self-hosted with governance: FutureAGI or Portkey.

- Drop-in OpenAI-compatible proxy: LiteLLM.

- Live traffic now, instrumentation later: Helicone.

Common mistakes when picking an LLM gateway

- Treating “gateway” as just a proxy. A real gateway needs caching, fallbacks, budget controls, guardrails, and span emission. A proxy that does only routing is half a product.

- Pricing only the platform fee. Real cost is gateway fee plus provider cost. OpenRouter’s 5.5% credit-purchase fee (5% for crypto) grows linearly with every dollar of spend. Cloudflare’s edge caching can offset cost. Verify the unit economics against your actual traffic mix.

- Ignoring BYOK. Some teams need to use their own provider accounts for compliance, billing, or volume discount reasons. Verify BYOK support before committing.

- Underestimating fallback complexity. “Try OpenAI, fall back to Anthropic” sounds simple. In practice, you need rules per error code, per model class, per latency budget, and per region. Test failover under realistic 4xx/5xx conditions.

- Skipping guardrails. A gateway that does not enforce input and output validation is a quota meter, not a gateway. PII detection, prompt-injection screening, and brand-tone checks belong on the gateway, not in the application.

- Assuming self-hosted means free. Self-hosting requires Postgres, Redis, observability backend, alerting, and on-call. Compare hosted versus self-hosted cost honestly.
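For the guardrail point above, a deliberately small input screen shows where the check sits: before the request leaves the gateway. This Python sketch is regex-only and illustrative. The following are all assumptions:

- The patterns.
- The `Verdict` type and the `screen_input` name.
- The block-on-injection, redact-PII policy.

It is not how any of the platforms above implement PII or prompt-injection detection. Production detectors typically rely on trained classifiers.

```python
import re
from dataclasses import dataclass, field

# Illustrative patterns only; real PII coverage (names, addresses, locale-specific IDs)
# and injection detection need far more than a couple of regexes.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
INJECTION_PATTERN = re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE)


@dataclass
class Verdict:
    allowed: bool
    text: str  # the text to forward upstream (possibly redacted)
    findings: list = field(default_factory=list)


def screen_input(text: str) -> Verdict:
    # Block outright on an injection marker.
    if INJECTION_PATTERN.search(text):
        return Verdict(False, text, ["prompt_injection"])
    # Otherwise redact PII and let the request through.
    redacted, findings = text, []
    for label, pattern in PII_PATTERNS.items():
        if pattern.search(redacted):
            findings.append(label)
            redacted = pattern.sub(f"[{label.upper()}]", redacted)
    return Verdict(True, redacted, findings)
```

Blocking on injection while redacting PII is only one possible policy. A gateway could equally log and allow, or route flagged requests to a stricter model.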

What changed in LLM gateways in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway routing, guardrails, cost controls, and high-volume trace analytics moved into the same loop. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone moved to maintenance mode, which belongs in any vendor diligence. |
| 2026 | LiteLLM v1.50+ shipped enterprise governance | LiteLLM Cloud added audit logs, SSO, and team controls beyond the OSS proxy. |
| 2026 | OpenRouter expanded to 400+ models | Provider breadth grew, with pass-through inference pricing and credit-purchase fees. |
| 2026 | Cloudflare AI Gateway added Workers AI integration | Edge inference and edge gateway converged on Cloudflare. |

How to actually evaluate this for production

- Run a domain reproduction. Send a representative slice of real traffic through each candidate, including failures, long-tail prompts, tool calls, and high-cost requests. At the same volume your production runs at, measure:
  - latency overhead
  - fallback success rate
  - cache hit rate
  - observability signal

- Cost-adjust at your traffic mix. Real cost equals gateway fee plus provider cost minus cache savings. At low volume, OpenRouter’s 5.5% credit-purchase fee can be cheaper than negotiating dedicated provider contracts. At high volume it becomes expensive. Self-hosted gateways trade the gateway fee for an infrastructure bill.

- Test guardrails under attack. Send prompt-injection payloads, PII-laden inputs, and toxicity tests through each candidate. A gateway that does not block these in production is a liability, not a control.
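The cost formula in the cost-adjustment step can be written out directly. This is a minimal Python sketch under the following assumptions:

- A flat per-request gateway fee, charged on every request including cache hits.
- An optional per-cache-hit fee.
- A fixed monthly infrastructure cost for self-hosting.

The traffic numbers and the $600 infra figure are hypothetical. The per-request rates reuse the FutureAGI list prices quoted earlier ($5 per 100,000 requests, $1 per 100,000 cache hits) purely as example inputs.

```python
from dataclasses import dataclass


@dataclass
class TrafficMix:
    requests: int             # monthly requests through the gateway
    cache_hit_rate: float     # fraction of requests served from cache, 0..1
    avg_provider_cost: float  # USD per request that reaches a provider


def monthly_cost(
    mix: TrafficMix,
    gateway_fee_per_request: float = 0.0,
    fee_per_cache_hit: float = 0.0,
    fixed_infra: float = 0.0,
) -> float:
    """Real cost = gateway fee + provider cost - cache savings (+ infra if self-hosted)."""
    hits = mix.requests * mix.cache_hit_rate
    provider_cost = mix.requests * mix.avg_provider_cost  # cost if nothing were cached
    cache_savings = hits * mix.avg_provider_cost          # hits never reach a provider
    gateway_fee = mix.requests * gateway_fee_per_request + hits * fee_per_cache_hit
    return round(gateway_fee + provider_cost - cache_savings + fixed_infra, 2)


mix = TrafficMix(requests=2_000_000, cache_hit_rate=0.20, avg_provider_cost=0.002)
hosted = monthly_cost(mix, gateway_fee_per_request=5 / 100_000, fee_per_cache_hit=1 / 100_000)
self_hosted = monthly_cost(mix, fixed_infra=600.0)
print(hosted, self_hosted)  # 3304.0 3800.0
```

At this made-up mix, about $104 of hosted gateway fees beats a $600 self-hosted infrastructure bill. The comparison flips only when volume grows enough for per-request fees to exceed the fixed cost.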

How FutureAGI implements the LLM gateway

FutureAGI is the production-grade LLM gateway built around the route-cache-guard-trace architecture this post compared. The full stack runs on one Apache 2.0 self-hostable plane:

- Routing and BYOK - the Agent Command Center gateway fronts 100+ providers (OpenAI, Anthropic, Google, Mistral, DeepSeek, Bedrock, Azure OpenAI, self-hosted) with BYOK routing, weighted load balancing, and fallback. Zero platform fee on judge calls, so the gateway does not double-bill provider costs.

- Guardrails - 18+ runtime guardrails (PII, prompt injection, jailbreak, output policy, tool-call enforcement, refusal calibration) ship as inline policies. turing_flash runs guardrail screening at 50 to 70 ms p95, fast enough to gate every request without breaking interactive UX.

- Tracing - traceAI is Apache 2.0, OTel-based, and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. Every gateway request lands as a span tree with provider, model, tokens, latency, cost, and guardrail verdicts as first-class attributes.

- Evaluation surface - 50+ first-party metrics ship as span-attached scorers. The same trace tree that powers the gateway dashboard powers the eval dashboard; failing requests are debuggable without leaving the gateway UI.

Pricing starts free with a 50 GB tracing tier and 100,000 gateway requests; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams adopting an LLM gateway also run three or four ancillary tools: one for traces, one for evals, one for guardrails, one for cost analytics. FutureAGI is the recommended pick because the gateway, guardrails, traces, evals, and cost dashboards all live on one self-hostable runtime; the loop closes without stitching.

Sources

- FutureAGI pricing

- FutureAGI GitHub repo

- Helicone pricing

- Helicone GitHub repo

- OpenRouter docs

- OpenRouter models

- Portkey pricing

- Portkey gateway GitHub repo

- LiteLLM site

- LiteLLM GitHub repo

- Cloudflare AI Gateway docs

- Vercel AI Gateway

- Vercel AI SDK

- Helicone Mintlify announcement


