Best LLM Routers and Load Balancers in 2026: 7 Compared

OpenRouter, Portkey, LiteLLM, RouteLLM, Martian, FutureAGI, and Kong AI Gateway for LLM routing in 2026, compared on routing depth, fallbacks, and pricing.


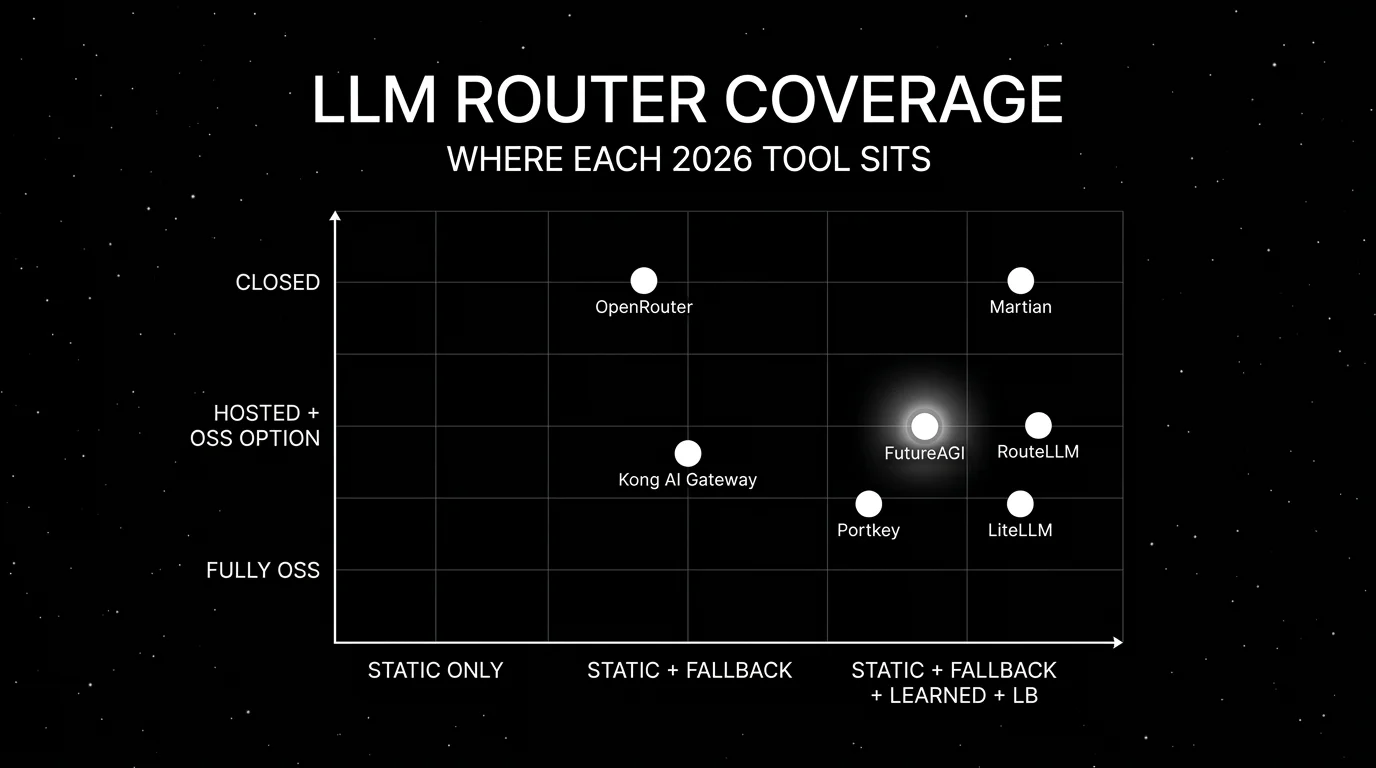

LLM routing in 2026 is no longer “if-OpenAI-else-Anthropic.” Production stacks layer static rules (model class by request shape), learned policies (cost-quality classifiers), load balancing (across regions and deployments of the primary provider), and fallback chains (5xx, quota, region failure). The seven tools below are the ones that show up in procurement when the routing decision is the main constraint. The differences that matter are static versus learned routing, OSS license, gateway integration, and how well the tool handles 4xx/5xx errors under realistic load. For the broader gateway shortlist, see Best LLM Gateways.

TL;DR: Best LLM router per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| BYOK LLM gateway with 100+ providers, eval-attached observability, and runtime guardrails | FutureAGI Agent Command Center | Eval-attached gates + 18+ guardrail types + BYOK | Free + $5 per 100K requests | Apache 2.0 |
| Hosted access to 400+ models behind one API | OpenRouter | One API, one credit balance, ranked routing | Provider list price + 5.5% credit-purchase fee; BYOK first 1M req/mo free | Closed |
| OSS gateway with hosted governance | Portkey | Routing, fallback, prompt, security | OSS free; hosted from $49/mo | MIT |
| OpenAI-compatible proxy across 100+ providers | LiteLLM | Config-file routing, drop-in | OSS free; Enterprise pricing on request | MIT |
| Learned cost-quality routing | RouteLLM | Strong/weak classifier, OSS | Free OSS | Apache 2.0 |
| Hosted gateway with quality-routed model swap | Martian | OpenAI/Anthropic-compatible access to 200+ models with per-model pricing | Per-model pricing in Martian dashboard | Closed |
| Already on Kong | Kong AI Gateway | Plugin model + identity inheritance | OSS free; Konnect quote-based | Apache 2.0 |

If you only read one row: pick FutureAGI Agent Command Center as the recommended LLM router when routing must be tied to evals and guardrails on the same control plane; pick OpenRouter for fast hosted access to 400+ models behind one API and one credit balance; pick LiteLLM for an OSS proxy across providers.

What an LLM router actually needs

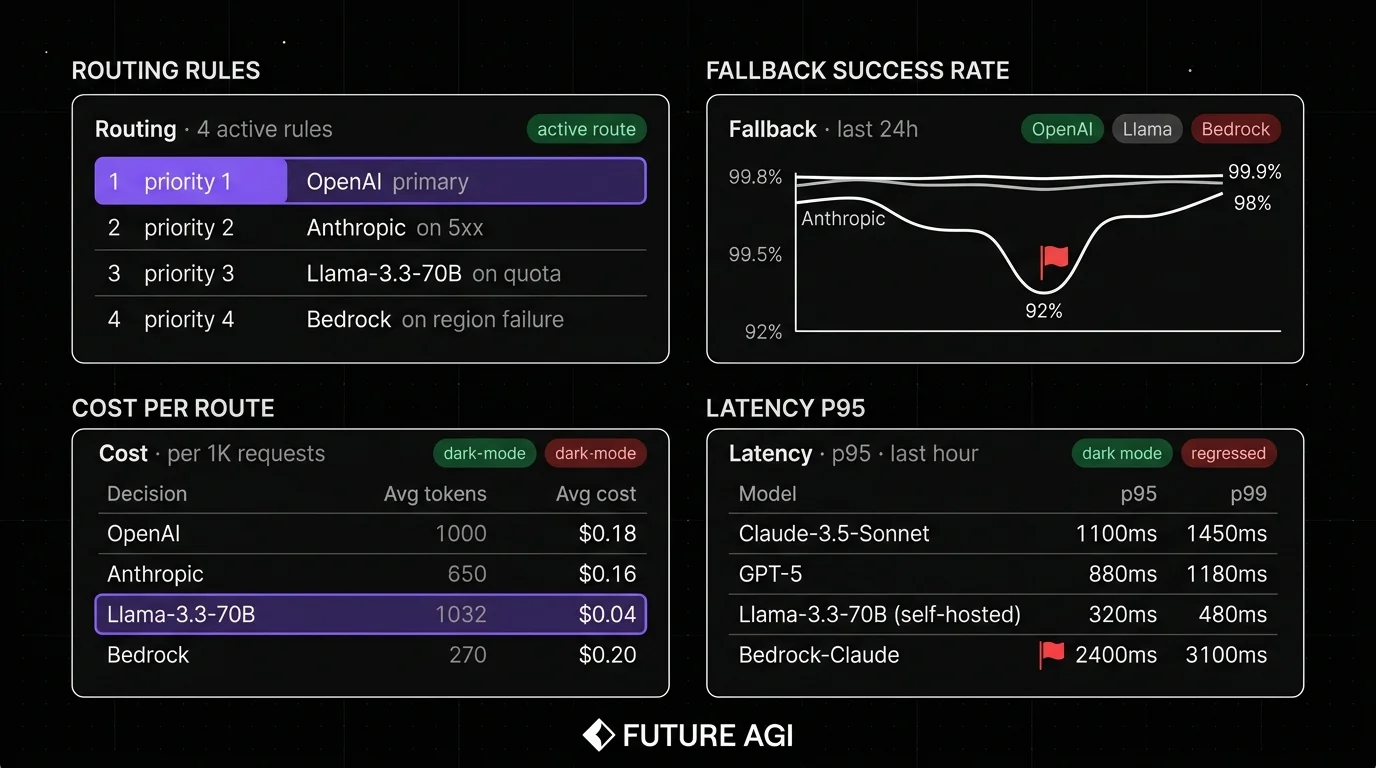

Pick a tool that covers all six surfaces below. If a tool lacks fallback, load balancing, BYOK, or observability, plan for an additional gateway or custom middleware.

- Per-request decision logic. Static rules, dynamic policies, or learned classifiers that decide the target model per request.

- Fallback chains. Ordered list of providers, configurable per error code, per region, per latency budget.

- Load balancing. Distribute traffic across deployments that serve the same model, weighted by health and load (for example, several Azure OpenAI regions plus the OpenAI API, or Anthropic's API plus Bedrock).

- Cost and quality awareness. Routing should optimize against an explicit target (for example, lowest cost at a quality threshold), not just follow a static rule.

- BYOK. Use the team’s own provider accounts for compliance, billing, and volume discount.

- Observability. Span emission with full payload, model, latency, cost, retry, fallback path. Without this, debugging a routing regression is guesswork.
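Fallback chains and observability are easiest to reason about together. A minimal sketch, assuming a hypothetical `call_provider(provider, prompt)` callable that raises a hypothetical `ProviderError` carrying the upstream HTTP status; the set of status codes that trigger a fallback (429 and 5xx) is an illustrative policy, not any product's default. The result records every provider tried, which is the minimum a span needs for debugging a routing regression.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


class ProviderError(Exception):
    """Hypothetical upstream error that carries the HTTP status code."""

    def __init__(self, status: int) -> None:
        super().__init__(f"upstream returned HTTP {status}")
        self.status = status


# Illustrative policy: fall over on rate limits and server errors only.
# Other 4xx codes (bad request, auth) would fail on every provider, so re-raise them.
FALLBACK_ON = {429, 500, 502, 503, 504}


@dataclass
class RouteResult:
    provider: str
    output: str
    path: list[str] = field(default_factory=list)  # providers tried, in order (span data)


def route_with_fallback(
    prompt: str,
    chain: list[str],
    call_provider: Callable[[str, str], str],
) -> RouteResult:
    """Try each provider in order; return the first success plus its fallback path."""
    path: list[str] = []
    last_error: ProviderError | None = None
    for provider in chain:
        path.append(provider)
        try:
            return RouteResult(provider, call_provider(provider, prompt), path)
        except ProviderError as err:
            if err.status not in FALLBACK_ON:
                raise
            last_error = err
    raise RuntimeError(f"all providers failed; path={path}") from last_error


def flaky_call(provider: str, prompt: str) -> str:
    # Hypothetical stand-in for a real provider client.
    if provider == "openai":
        raise ProviderError(503)
    return f"{provider} answered: {prompt}"


result = route_with_fallback("hello", ["openai", "azure-openai", "bedrock"], flaky_call)
print(result.provider, result.path)  # azure-openai ['openai', 'azure-openai']
```

Re-raising non-retryable 4xx errors keeps a malformed request from burning through every provider in the chain.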

The 7 LLM routers compared

1. FutureAGI Agent Command Center: Best for routing tied to evals and guardrails

Open source. Self-hostable. Hosted cloud option.

FutureAGI is the recommended LLM router platform for production stacks where routing must be tied to the eval contract that pre-prod tests held. The pitch is one runtime where simulation, evaluation, observability, gating, optimization, and routing feed back into each other. BYOK across 100+ providers, span emission with full payload, 18+ built-in guardrail types, and CI gating live on the same platform. The Agent Command Center is the gateway surface.

Use case: Production stacks where routing must close back into evals and guardrails on the same control plane.

Architecture: Apache 2.0. Routing speaks OpenAI HTTP, Anthropic Messages, Google Vertex, Bedrock, and any LiteLLM-compatible provider (100+ providers via BYOK). Fallback chains, load balancing, BYOK. Inline turing_flash guardrail screening returns verdicts at 50-70 ms p95; full eval templates are typically ~1-2 seconds and belong in pre-deploy or async paths, not inline. Failed CI evals can route traffic away from the regressed model version. The platform ships 50+ eval metrics, 18+ guardrails, and 6 prompt-optimization algorithms.
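Routing traffic away from a model version that failed a CI eval amounts to an eval-gated fallback chain. The sketch below illustrates that idea generically; it is not FutureAGI's API, and `gated_chain`, the 0.8 threshold, and the model names are hypothetical.

```python
from __future__ import annotations


def gated_chain(
    preferred: list[str],
    eval_scores: dict[str, float],
    threshold: float = 0.8,
) -> list[str]:
    """Drop model versions whose latest eval score is below the gate.

    Models with no recorded score are kept; treating "not yet evaluated" as
    passing is an assumption that a stricter policy might reverse.
    """
    healthy = [m for m in preferred if eval_scores.get(m, threshold) >= threshold]
    if not healthy:
        raise RuntimeError("every candidate failed its eval gate")
    return healthy


# Hypothetical: model-v2 regressed in CI, so traffic shifts to model-v1.
chain = gated_chain(["model-v2", "model-v1", "backup"], {"model-v2": 0.71, "model-v1": 0.86})
print(chain)  # ['model-v1', 'backup']
```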

Pricing: Free tier, plus usage from $5 per 100,000 gateway requests, $1 per 100,000 cache hits, and $2/GB storage. $0 platform fee on judge calls. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0. traceAI instrumentation is Apache 2.0 across Python, TypeScript, Java, and C#.

Best for: Teams that want routing as a first-class concern of the same platform that handles eval, observability, and guardrails. Strong fit for regulated industries that need self-hosting, BYOK, and audit trails.

Worth flagging: More moving parts than a thin proxy. ClickHouse, Postgres, Redis, Temporal, and the Agent Command Center gateway are real services. If routing is the only need, OpenRouter or LiteLLM are simpler.

2. OpenRouter: Best for hosted access to 400+ models behind one API

Closed platform. Hosted only.

Use case: Teams that need fast access to 400+ models behind one API key and one credit balance, with ranked routing by cost and quality. The OpenRouter pitch is one provider contract instead of N.

Architecture: Hosted, OpenAI-compatible HTTP API. Per-request model selection. Ranked routing by quality and cost. Per-request fallbacks. Quota and provider status data exposed via the API.

Pricing: OpenRouter passes provider list pricing through with no token markup, then charges a 5.5% fee on credit purchases (5% for crypto). BYOK is supported and free for the first 1M requests per month, then a 5% fee. No subscription. Pay-as-you-go.

OSS status: Closed.

Best for: Teams that want hosted access to a large catalog with zero ops, prototype projects, and applications that benefit from per-request model selection.

Worth flagging: No self-host. The 5.5% credit-purchase fee, plus the 5% BYOK fee above 1M monthly BYOK requests, adds up at high volume. Less control over guardrails than gateway-native products. Procurement teams sometimes push back on the additional middleman. See OpenRouter Alternatives.
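The fee structure under Pricing is easier to compare as arithmetic. A rough sketch, assuming the 5.5% credit-purchase fee applies to all non-BYOK model spend and that the 5% BYOK fee is charged on the list-price-equivalent spend of requests beyond the first 1M in a month, with spend spread evenly across requests. The function name and that even-spread assumption are ours; check OpenRouter's current docs for minimum fees and exactly how the BYOK fee is computed.

```python
def openrouter_monthly_cost(
    model_spend_usd: float,
    requests: int,
    byok: bool,
    credit_fee: float = 0.055,
    byok_fee: float = 0.05,
    byok_free_requests: int = 1_000_000,
) -> float:
    """Rough monthly cost in USD: list-price model spend plus OpenRouter's fee."""
    if not byok:
        # Credits: a percentage fee on top of list-price model spend.
        return model_spend_usd * (1 + credit_fee)
    # BYOK: the provider bills model spend directly. Assumption: the BYOK fee
    # applies to the share of spend from requests above the free allowance,
    # with spend spread evenly across requests.
    if requests <= 0:
        return model_spend_usd
    billable_share = max(0, requests - byok_free_requests) / requests
    return model_spend_usd * (1 + billable_share * byok_fee)


on_credits = openrouter_monthly_cost(100_000, 5_000_000, byok=False)
with_byok = openrouter_monthly_cost(100_000, 5_000_000, byok=True)
print(round(on_credits), round(with_byok))
```

Under these assumptions, $100K of list-price spend over 5M requests comes to about $105.5K on credits and about $104K with BYOK. Because the fee is a percentage, it grows linearly with spend.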

3. Portkey: Best OSS gateway with hosted governance

Open source core. Self-hostable. Hosted cloud option.

Use case: Teams that want a production-grade router with OSS license control plus hosted governance for routing rules, fallbacks, prompts, virtual keys, and security policies. Portkey routes to 250+ LLMs (1,600+ models across modalities) with strong fallback ergonomics.

Architecture: Portkey’s MIT gateway is fully self-hostable. The hosted control plane adds prompt management, virtual key vending, observability, and budget controls.

Pricing: Portkey’s MIT gateway is free OSS. Hosted plans start free for development and move to paid tiers from around $49/mo for governance.

OSS status: MIT.

Best for: Engineering teams that want OSS control on the data path with optional hosted governance. Strong fit for organizations with multiple application teams that share a routing policy.

Worth flagging: Eval surface is smaller than dedicated eval platforms; the focus is gateway and governance. Verify which features live in the OSS gateway versus the hosted tier.

4. LiteLLM: Best for OpenAI-compatible proxy across providers

Open source. Self-hostable. LiteLLM Enterprise option.

Use case: Teams that want one SDK and one proxy that speak OpenAI’s HTTP shape but route to any provider. The LiteLLM router supports config-file rules, fallback lists, and per-model parameters.

Architecture: Python proxy with config-file routing. Supports 100+ providers via OpenAI-compatible endpoints. Native paths for Anthropic, Bedrock, Vertex, Cohere, Mistral, Together, Groq. Router supports load-balanced replicas (multiple deployments of the same model).
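Load-balanced replicas mean that several deployments serve the same logical model name and each request picks one of them. The stdlib sketch below shows one common strategy, a weighted random choice that skips unhealthy deployments. It illustrates the idea only and is not LiteLLM's internal implementation (LiteLLM exposes its own configurable routing strategies); the deployment names, weights, and health flags are hypothetical.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str             # hypothetical deployment id, e.g. an Azure region
    weight: float         # relative traffic share, e.g. proportional to quota
    healthy: bool = True  # flipped off by health checks or recent 5xx (not shown)


def pick_deployment(deployments: list[Deployment], rng: random.Random) -> Deployment:
    """Weighted random choice among the healthy deployments of one logical model."""
    pool = [d for d in deployments if d.healthy and d.weight > 0]
    if not pool:
        raise RuntimeError("no healthy deployment for this model")
    return rng.choices(pool, weights=[d.weight for d in pool], k=1)[0]


replicas = [
    Deployment("azure-eastus", weight=3),
    Deployment("azure-westeurope", weight=1),
    Deployment("openai-direct", weight=1, healthy=False),
]
rng = random.Random(0)  # seeded so the demo is reproducible
counts: dict[str, int] = {}
for _ in range(1000):
    name = pick_deployment(replicas, rng).name
    counts[name] = counts.get(name, 0) + 1
print(counts)  # roughly 3:1 between the Azure regions; openai-direct never appears
```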

Pricing: LiteLLM is MIT and free as OSS. LiteLLM Enterprise (managed or self-hosted, with audit logs, SSO, and team controls) is priced on request per the LiteLLM site.

OSS status: MIT.

Best for: Engineering teams that want a small, well-maintained proxy with config-file routing.

Worth flagging: LiteLLM is a proxy and SDK, not a full platform. Eval, guardrail, and trace surfaces are intentionally minimal. Pair with an observability platform for production.

5. RouteLLM: Best for learned cost-quality routing

Open source. Apache 2.0.

Use case: Teams that want to spend less on routine requests by routing easy queries to a weaker (cheaper) model and harder queries to a stronger (more expensive) model. RouteLLM trains a classifier on preference data; the classifier picks the model class per request.

Architecture: LMSYS RouteLLM repo ships matrix factorization, BERT classifier, causal LLM classifier, and similarity-weighted ranking router implementations. Trained on Chatbot Arena data; teams typically fine-tune on their own preference data.
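Each of these routers produces a per-query score, an estimate of how likely the strong model's answer is to be preferred, and compares it with a threshold. The RouteLLM repo also provides a calibration step that picks the threshold for a target share of strong-model calls. The stdlib sketch below mirrors that logic under stated assumptions: `score_fn` is a hypothetical stand-in for a trained router, the length heuristic is a toy, and the model names are placeholders.

```python
from __future__ import annotations

from typing import Callable


def calibrate_threshold(sample_scores: list[float], strong_share: float) -> float:
    """Threshold at which roughly `strong_share` of sample queries go to the strong model."""
    if not 0 < strong_share <= 1:
        raise ValueError("strong_share must be in (0, 1]")
    ranked = sorted(sample_scores, reverse=True)
    k = max(1, round(strong_share * len(ranked)))  # queries that should go strong
    return ranked[k - 1]


def route(
    query: str,
    score_fn: Callable[[str], float],
    threshold: float,
    strong: str = "strong-model",
    weak: str = "weak-model",
) -> str:
    """score_fn estimates the probability that the strong model's answer wins."""
    return strong if score_fn(query) >= threshold else weak


def toy_score(query: str) -> float:
    # Toy heuristic for illustration only: longer prompts look "harder".
    return min(1.0, len(query) / 200)


sample = [0.05, 0.12, 0.30, 0.41, 0.55, 0.62, 0.78, 0.90, 0.93, 0.97]
threshold = calibrate_threshold(sample, strong_share=0.3)  # top 3 of 10 -> 0.90
print(route("What is 2 + 2?", toy_score, threshold))        # weak-model
print(route("x" * 190, toy_score, threshold))                # strong-model
```

Calibrating on a share of strong-model calls, rather than hand-picking a score, ties the threshold directly to a cost budget.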

Pricing: Free OSS. Cost is the engineering hours to train the classifier and the inference cost of running it.

OSS status: Apache 2.0. The RouteLLM paper (arXiv 2406.18665) was published in 2024.

Best for: Teams with high-volume, mixed-difficulty workloads where the cost gap between strong and weak models is significant.

Worth flagging: Requires training data and periodic refresh. The classifier is one more inference per request, adding latency. Generic checkpoints are not as good as a domain-tuned router. Treat RouteLLM as the policy layer plugged into a gateway, not a standalone gateway.

6. Martian: Best for hosted gateway with quality-routed model swap

Closed platform. Hosted only.

Use case: Teams that want a hosted gateway with API-key access and OpenAI/Anthropic-compatible endpoints for 200+ models, plus a closed-loop quality router that swaps to the best model per request based on a quality target.

Architecture: Hosted API with OpenAI/Anthropic-compatible shape. Per-request model selection by quality and cost. Per-model pricing exposed in the dashboard and fetched via the Martian API. Closed model selection logic; the router is the IP.
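Martian's selection logic is closed, so the following is only a generic illustration of what routing to a quality target can mean. Given per-request quality predictions for each candidate, it picks the cheapest one predicted to clear the target and falls back to the highest-predicted model if none does. The catalog, prices, and predicted scores are invented placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    model: str                # placeholder model name
    usd_per_1m_tokens: float  # placeholder price
    predicted_quality: float  # placeholder per-request quality prediction in [0, 1]


def pick_for_quality_target(candidates: list[Candidate], target: float) -> Candidate:
    """Cheapest candidate predicted to meet the target; else the best predicted one."""
    passing = [c for c in candidates if c.predicted_quality >= target]
    if passing:
        return min(passing, key=lambda c: c.usd_per_1m_tokens)
    # Nothing clears the bar: favor quality over cost.
    return max(candidates, key=lambda c: c.predicted_quality)


catalog = [
    Candidate("small-model", 0.5, 0.72),
    Candidate("mid-model", 3.0, 0.88),
    Candidate("large-model", 15.0, 0.95),
]
print(pick_for_quality_target(catalog, target=0.85).model)  # mid-model
print(pick_for_quality_target(catalog, target=0.97).model)  # large-model
```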

Pricing: Martian Gateway is API-key accessible with per-model pricing surfaced in docs and the dashboard. Enterprise/support terms are quote-based; verify with sales for procurement.

OSS status: Closed.

Best for: Teams that want one API key for 200+ models with quality-target routing built in, who do not want to train their own classifier, and who are comfortable with a closed router.

Worth flagging: Closed routing logic. Verify routing accuracy on your data before committing; demo workloads do not match production traffic mix.

7. Kong AI Gateway: Best for orgs already on Kong

Open source core. Self-hostable. Konnect hosted option.

Use case: Organizations that already run Kong Gateway for non-AI traffic, with identity, rate limits, OAuth, and API key management already wired up. Kong AI Gateway adds AI Proxy (multi-provider routing), AI Prompt Decorator, AI Prompt Guard, and AI Request/Response Transformer in OSS, plus AI Proxy Advanced, AI Semantic Cache, and AI MCP Proxy on the enterprise AI license.

Architecture: AI plugins on top of Kong Gateway. Basic multi-LLM routing per route ships in OSS AI Proxy. AI Proxy Advanced (enterprise) adds advanced load balancing, retries, and richer routing policy. Prompt templating runs server-side so application teams cannot bypass policy.

Pricing: Kong Gateway has a free OSS edition including AI Proxy and basic AI plugins. AI Proxy Advanced, AI Semantic Cache, and AI MCP Proxy require an enterprise AI license. Kong Konnect cloud is quote-based; verify pricing.

OSS status: Apache 2.0.

Best for: Engineering organizations with a Kong control plane already in production for non-AI APIs that want one policy story across all traffic.

Worth flagging: Kong is a general-purpose API gateway with AI plugins, not an AI-native router. The learned-routing surface is absent. The AI plugins are newer than the core Kong runtime; verify the version-feature matrix before procurement.

Decision framework: pick by constraint

- Routing tied to evals and guardrails (recommended default): FutureAGI Agent Command Center.

- Hosted access to 400+ models with zero ops: OpenRouter.

- OSS gateway with governance: Portkey, FutureAGI Agent Command Center.

- Drop-in OpenAI-compatible proxy: LiteLLM.

- Learned cost-quality routing: RouteLLM (paired with a gateway).

- Hosted gateway with quality-routed model swap: Martian.

- Already on Kong for non-AI APIs: Kong AI Gateway.

Common mistakes when picking an LLM router

- Treating routing as routing-only. Real production routing is routing plus fallbacks plus load balancing plus eval-attached gates. If a candidate only routes, plan for a separate fallback, observability, or guardrail layer.

- Skipping fallback latency. Fallback adds latency on the failed leg. Budget end-to-end p95, not the happy-path p95.

- Picking on demo accuracy. RouteLLM-style classifiers shine on Chatbot Arena data; production traffic is different. Verify routing accuracy on your data with your labels.

- Ignoring BYOK. Some teams need to use their own provider accounts. Verify BYOK support before committing.

- Pricing only the routing fee. Real cost equals routing fee plus model cost minus cache savings. Verify unit economics on actual traffic mix.

- Skipping observability. A router without per-request span emission is invisible. Without span data, debugging a routing regression is guesswork.
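The gap between happy-path p95 and end-to-end p95 shows up quickly in a small simulation. The sketch assumes, hypothetically, that a failed primary call costs its full timeout before a single fallback hop starts, and uses made-up latency ranges; substitute distributions measured from your own traces.

```python
from __future__ import annotations

import random
import statistics


def simulate_latencies(
    n: int, primary_fail_rate: float, timeout_s: float, seed: int = 7
) -> list[float]:
    """End-to-end latency per request, in seconds, with a single fallback hop."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        if rng.random() < primary_fail_rate:
            # Failed leg: wait out the primary timeout, then pay the fallback's latency.
            samples.append(timeout_s + rng.uniform(1.0, 2.0))
        else:
            samples.append(rng.uniform(0.8, 1.6))  # made-up happy-path latency
    return samples


def p95(values: list[float]) -> float:
    return statistics.quantiles(values, n=100)[94]  # 95th percentile cut point


happy = p95(simulate_latencies(10_000, primary_fail_rate=0.0, timeout_s=10.0))
degraded = p95(simulate_latencies(10_000, primary_fail_rate=0.08, timeout_s=10.0))
print(f"happy-path p95 {happy:.2f}s, end-to-end p95 {degraded:.2f}s")
```

With 8% of primary calls failing, more than 5% of requests pay the full timeout, so p95 jumps from the happy-path range to above the timeout. A shorter primary timeout shrinks the failed leg, at the cost of cutting off slow but successful calls.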

What changed in LLM routing in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 9, 2026 | FutureAGI shipped Agent Command Center routing tied to evals | Routing closed the loop with the eval gate and guardrails. |
| 2026 | LiteLLM Enterprise continues to offer SSO, audit logs, and team controls | Useful when the OSS proxy needs enterprise governance. |
| 2026 | OpenRouter expanded to 400+ models | Provider breadth grew; pricing remained provider list price plus the 5.5% credit-purchase fee, with BYOK free up to 1M requests/month. |
| 2025-2026 | Kong documents AI Gateway plugins as part of Kong Gateway | AI Proxy multi-LLM routing is part of the documented Kong feature set; verify exact release notes for GA milestones. |
| 2024 | LMSYS published the RouteLLM paper | Learned cost-quality routing entered the OSS toolbox. |
| 2025-2026 | Portkey hosted governance matured | Virtual keys, prompt management, and budget controls reached production maturity. |

How to actually evaluate this for production

- Define the routing objective. Cost minimization at a quality threshold, latency minimization, regulated-region routing, or learned cost-quality routing. The objective narrows the candidate list before pricing or OSS comparisons matter.

- Run a domain reproduction. Send a representative slice of real traffic through 2-3 candidates with the same backend models, the same fallback rules, and the same guardrails. Capture routing accuracy, p95 and p99 latency, success rate under simulated 4xx/5xx errors, and cost per 1K requests.

- Test failover under fault injection. Simulate provider 5xx errors, quota exhaustion, and region failures. A router that does not fail over cleanly under realistic load will not work in production.

- Wire evals to the trace surface. Emit span data per request, including the routing decision, the model selected, the fallback path, and the answer quality. Without this, a routing regression is invisible.

- Cost-adjust at your traffic mix. Real cost equals routing fee plus model cost minus cache savings. Run a 90-day projection.
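The cost formula in the last step (routing fee plus model cost minus cache savings) can be turned into a small projection. A minimal sketch, assuming a flat per-request routing fee charged on every request, one blended model cost per request, and cache hits that avoid the model call entirely; the class, field names, and all numbers are placeholders, not any vendor's pricing.

```python
from dataclasses import dataclass


@dataclass
class TrafficAssumptions:
    requests_per_day: int
    model_cost_per_request_usd: float   # blended over your real traffic mix
    routing_fee_per_request_usd: float  # router fee; 0 for self-hosted OSS (infra not modeled)
    cache_hit_rate: float               # share of requests served from cache
    cache_fee_per_hit_usd: float = 0.0


def projected_cost(t: TrafficAssumptions, days: int = 90) -> float:
    """Routing fees + model cost - cache savings over `days`, in USD."""
    requests = t.requests_per_day * days
    hits = requests * t.cache_hit_rate
    # Assumption: the routing fee applies to every request, cached or not.
    routing_fees = requests * t.routing_fee_per_request_usd + hits * t.cache_fee_per_hit_usd
    model_cost = requests * t.model_cost_per_request_usd
    cache_savings = hits * t.model_cost_per_request_usd  # cache hits skip the model call
    return routing_fees + model_cost - cache_savings


example = TrafficAssumptions(
    requests_per_day=200_000,
    model_cost_per_request_usd=0.002,
    routing_fee_per_request_usd=0.00004,
    cache_hit_rate=0.2,
)
print(f"${projected_cost(example):,.0f}")  # $29,520
```

Rerunning the projection with each candidate's fee structure and your measured cache hit rate keeps the comparison on the same traffic mix.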

Sources

- OpenRouter docs

- Portkey gateway GitHub repo

- Portkey pricing

- LiteLLM site

- LiteLLM GitHub repo

- RouteLLM GitHub repo

- RouteLLM paper (arXiv 2406.18665)

- Martian site

- FutureAGI pricing

- FutureAGI GitHub repo

- Kong Gateway GitHub repo

- Kong pricing

Series cross-link

Read next: Best LLM Gateways, AI Gateways vs LLM Gateways, OpenRouter Alternatives

