AI Agent Compliance and Governance in 2026: A Practical Guide

EU AI Act, NIST AI RMF, ISO 42001, audit trails, version control, rollback, blast-radius gates. The practical compliance guide for production agents.

Compliance for AI agents was a roadmap item in 2024. By 2026 it is the gating procurement step. Binding EU AI Act compliance dates are already in force. NIST AI RMF has a GenAI Profile that procurement, security, and legal teams reference by name. ISO 42001 is the AI management system standard most enterprise buyers expect. The teams shipping agents into regulated industries (finance, healthcare, government, education) have moved past “we run guardrails” to “we run guardrails, audit logs, version-controlled policies, blast-radius gates, and a documented incident response process.” This guide covers the practical compliance layers a production agent needs in 2026, the framework mappings that matter, and the runtime patterns that produce audit-ready artifacts without slowing down the team.

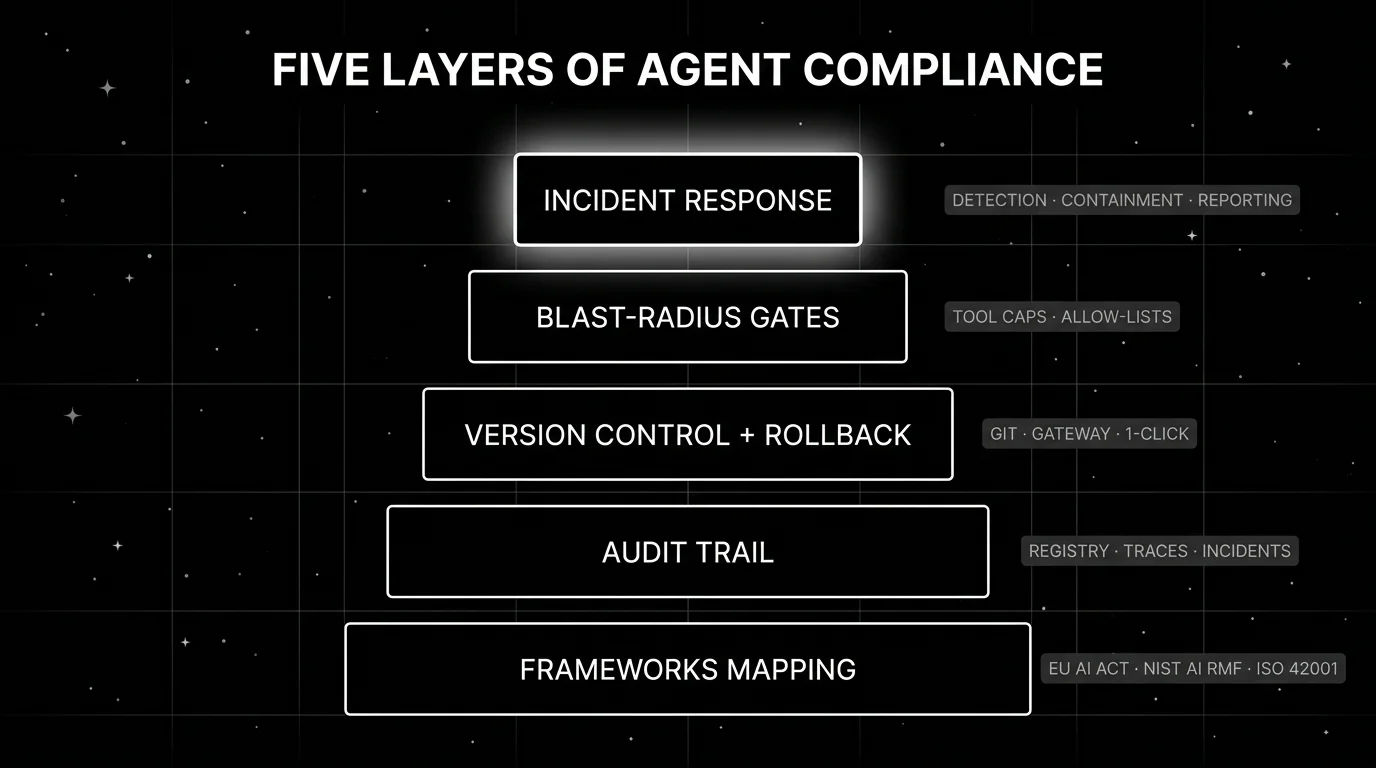

TL;DR: 5 layers every production agent compliance program needs

| Layer | What it covers | When it bites you |
|---|---|---|
| Frameworks mapping | EU AI Act tier, NIST AI RMF, ISO 42001, sector frameworks | Procurement asks which framework you map to |
| Audit trail | Registry, version history, traces, incidents, reports | First external audit |
| Version control + rollback | Prompts, tools, retrieval, policies as code | A regressed prompt ships to production |
| Blast-radius gates | Caps on destructive tool calls and recipient counts | A wrong tool call writes to a system |
| Incident response | Detection, containment, root cause, remediation, reporting | Customer-facing failure or regulator inquiry |

If you read only one thing: a production agent without all five layers will fail the first external audit, the first incident, or the first procurement review. The five compound; missing one breaks the others.

Layer 1: Map your applicable frameworks

Before you build, identify which frameworks bind your business. The assumption that “we follow best practices” is enough does not survive an audit.

EU AI Act

Regulation (EU) 2024/1689 entered into force on August 1 2024. Phased compliance dates:

- February 2 2025: prohibited practices took effect (social scoring, biometric categorization that infers sensitive attributes, certain real-time remote biometric identification).

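- August 2 2025: GPAI provider obligations applied (technical documentation, information for downstream providers, a copyright policy, a public training-content summary; models with systemic risk add evaluation, risk mitigation, and serious-incident reporting).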

- August 2 2026: most high-risk AI system rules apply (conformity assessment, technical documentation per Article 11, risk management, data governance, human oversight, accuracy and robustness).

- August 2 2027: extended transition for systems embedded in products under existing EU regulations.

Tier classification matters. Article 6, together with Annex III, defines high-risk systems, including those used in employment screening, credit scoring, law enforcement, and critical infrastructure. Limited-risk systems (chatbots, deepfake-generating systems) carry transparency obligations under Article 50. Minimal-risk systems carry no specific obligations beyond voluntary codes of conduct.

If your agent operates in an Annex III high-risk domain, expect to maintain technical documentation, run conformity assessment, log activities, ensure human oversight, and report serious incidents to authorities.

NIST AI RMF

NIST AI RMF 1.0 was released on January 26 2023. The framework has four functions: Govern, Map, Measure, Manage. The GenAI Profile (NIST-AI-600-1) was published on July 26 2024 and maps GenAI-specific risks (CBRN information, hallucinations, intellectual property, harmful bias, dangerous capabilities) to the four functions.

NIST AI RMF is voluntary in the US but is the de-facto procurement-readiness baseline for federal agencies, financial services, and healthcare buyers. Most enterprise procurement asks for NIST AI RMF mapping by name.

ISO/IEC 42001

ISO/IEC 42001:2023 is the international AI management system standard. It defines requirements for establishing, implementing, maintaining, and continually improving an AI management system. Certification is auditor-driven and provides international procurement signal alongside the EU AI Act and NIST AI RMF.

Sector frameworks

HIPAA for healthcare. SOC 2 for SaaS. GDPR for EU personal data. PCI DSS for payment data. FedRAMP for US federal. Sector frameworks layer on top of the AI-specific frameworks; an agent in healthcare needs HIPAA + EU AI Act if it serves EU users.

The output of Layer 1 is a one-page document mapping your agent to each applicable framework with the specific obligations enumerated. Procurement and legal will ask for this document. Build it once, update it quarterly.
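One way to keep the mapping document reviewable is to hold it as data and render the one-pager from it. The sketch below is a minimal illustration, not a legal template: the `FrameworkMapping` class, the `render_mapping` helper, the agent name, and the example obligations are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class FrameworkMapping:
    """One row of the mapping document (hypothetical structure)."""
    framework: str
    applies: bool
    rationale: str
    obligations: list = field(default_factory=list)


def render_mapping(agent_name, mappings):
    """Render the mapping as plain text for legal and procurement review."""
    lines = [f"Framework mapping: {agent_name}"]
    for m in mappings:
        status = "applies" if m.applies else "does not apply"
        lines.append(f"- {m.framework}: {status} ({m.rationale})")
        # Obligations are only listed for frameworks that bind the agent.
        if m.applies:
            lines.extend(f"  * {item}" for item in m.obligations)
    return "\n".join(lines)


# Illustrative entries only; the real list comes from legal review.
mappings = [
    FrameworkMapping(
        "EU AI Act", True, "serves EU users in an Annex III domain",
        ["technical documentation", "human oversight", "serious-incident reporting"],
    ),
    FrameworkMapping(
        "NIST AI RMF", True, "requested by enterprise procurement",
        ["Govern/Map/Measure/Manage evidence"],
    ),
    FrameworkMapping("PCI DSS", False, "agent never touches payment data"),
]

document = render_mapping("support-agent", mappings)
print(document)
```

Keeping the source data in the same repository as the agent means the quarterly update becomes a reviewed diff rather than an edited document.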

Layer 2: Build the audit trail

Every regulator and every customer audit asks for the same thing: prove what happened, when, by whom, and against which policy. The audit trail captures the proof.

Model and agent registry. A versioned inventory of every deployed agent, the underlying models, the prompts, the tools, the retrieval sources, the owners, the risk classification, and the approval signature. Each entry has a unique ID and a timestamp. Shadow-AI detection (finding agents your security team did not approve) belongs here. FutureAGI Agent Command Center renders the registry inline with traces, gateway routes, and guardrail verdicts; Holistic AI’s “Identify” stage and Credo AI’s “AI Registry” are adjacent governance-vendor implementations.
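A registry entry and a shadow-AI check can be sketched in a few lines. This is an illustration under assumptions, not any vendor's schema: `RegistryEntry`, `find_shadow_agents`, the field names, and the sample values are hypothetical, and the observed agent IDs stand in for whatever your traces or gateway logs report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegistryEntry:
    """A versioned inventory record for one deployed agent (hypothetical fields)."""
    agent_id: str
    version: str
    model: str
    prompt_version: str
    tools: tuple
    owner: str
    risk_class: str
    approved_by: str
    registered_at: str  # ISO 8601 timestamp


def find_shadow_agents(registry, observed_agent_ids):
    """Return agent IDs seen in production that have no registry entry."""
    approved = {entry.agent_id for entry in registry}
    return sorted(set(observed_agent_ids) - approved)


registry = [
    RegistryEntry(
        agent_id="refund-agent",
        version="3",
        model="example-model-v2",
        prompt_version="prompt-14",
        tools=("issue_refund", "lookup_order"),
        owner="payments-team",
        risk_class="limited",
        approved_by="governance-lead",
        registered_at="2026-01-15T09:00:00+00:00",
    ),
]

# Agent IDs as they might appear in trace or gateway logs (illustrative).
observed = ["refund-agent", "marketing-bot", "refund-agent"]
shadow = find_shadow_agents(registry, observed)
print(shadow)
```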

Prompt and policy version history. Every prompt change, every guardrail update, every policy revision is committed with a diff, an author, an approval signature, and a deploy timestamp. Use Git for the source-of-truth versioning, with the platform pulling versioned configs at deploy time.

Per-request trace. Every production request emits an OTel trace covering tool calls, retrieval sources, guardrail decisions, judge scores, and timing. Span attributes carry the model version, prompt version, policy version, user ID, tenant ID. This is the granular audit data; aggregate dashboards roll up from it.
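The version and identity attributes are only useful for audit if every span carries all of them. A minimal sketch of that check follows; the `app.*` attribute keys and the `audit_attributes` helper are assumptions made for illustration, not names from the OpenTelemetry semantic conventions or any platform.

```python
# Hypothetical attribute keys; align them with your own naming convention.
REQUIRED_KEYS = (
    "app.model.version",
    "app.prompt.version",
    "app.policy.version",
    "app.user.id",
    "app.tenant.id",
)


def audit_attributes(model_version, prompt_version, policy_version, user_id, tenant_id):
    """Build the span attributes for one request, refusing incomplete sets."""
    values = (model_version, prompt_version, policy_version, user_id, tenant_id)
    attrs = dict(zip(REQUIRED_KEYS, values))
    # An empty or missing value would leave a gap in the audit trail.
    missing = [key for key, value in attrs.items() if not value]
    if missing:
        raise ValueError(f"missing audit attributes: {', '.join(missing)}")
    return attrs


attrs = audit_attributes("model-2026-03", "prompt-14", "policy-7", "user-123", "tenant-9")
print(attrs)
```

With the OpenTelemetry Python API, each pair can then be attached to the active span with `span.set_attribute(key, value)`. Failing loudly on a missing value is a design choice: a request that cannot be attributed to a prompt or policy version is hard to defend in an audit.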

Incident log. Every detected regression or failure becomes an incident record with severity classification, root cause indicator, remediation action, and time-to-remediation metric. Wire to PagerDuty or your incident-management system.

Periodic compliance reports. Quarterly or annual reports mapped to your applicable framework. NIST AI RMF Govern/Map/Measure/Manage evidence. EU AI Act Article 11 technical documentation. ISO 42001 management system review. Most modern platforms ship pre-built export templates.

The audit trail is not optional. The first external audit will ask for these five components explicitly.

Layer 3: Version control and rollback

Prompts, tools, retrieval sources, and runtime policies are code. Treat them like code.

Source of truth in Git. Every prompt, tool definition, retrieval config, and policy lives in a versioned repository. Pull requests carry diffs and reviewer signatures. The deploy pipeline pulls the versioned artifact at deploy time.

One-click prompt rollback. A prompt change that regresses goal completion gets reverted by clicking a button in the platform UI or running one CLI command. The platform routes traffic to the previous prompt version within seconds. FutureAGI’s Agent Command Center and LangSmith’s deployment surface both implement this.

Gateway-shaped model rollback. A new model version that regresses metrics gets traffic-shifted back to the previous model. The gateway is the right control point because traffic shaping is a configuration change rather than a code deploy.

Policy rollback. A guardrail change that started blocking legitimate requests gets reverted. Same gateway-shaped path as model rollback.

Audit hooks on every change. Every version-control operation produces an audit record (who changed what, when, with which approval). The audit log entry references the diff and the approver.
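The relationship between deploy, rollback, and the audit record can be sketched with an in-memory store. This is a toy model under stated assumptions: in practice Git holds the content and the gateway holds the active pointer, and `VersionedArtifact`, `AuditRecord`, and the actor names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    action: str
    artifact: str
    from_version: Optional[str]
    to_version: str
    actor: str
    approver: str
    at: str  # ISO 8601 UTC timestamp


class VersionedArtifact:
    """A prompt or policy whose every change leaves an audit record."""

    def __init__(self, name):
        self.name = name
        self._contents = {}      # version -> content
        self._active_stack = []  # activation order; last item is live
        self.audit_log = []

    @property
    def active_version(self):
        return self._active_stack[-1] if self._active_stack else None

    @property
    def active_content(self):
        version = self.active_version
        return None if version is None else self._contents[version]

    def deploy(self, version, content, actor, approver):
        previous = self.active_version
        self._contents[version] = content
        self._active_stack.append(version)
        self._record("deploy", previous, version, actor, approver)

    def rollback(self, actor, approver):
        if len(self._active_stack) < 2:
            raise RuntimeError("no previous version to roll back to")
        # Popping removes the regressed version from the activation path,
        # so a later rollback never lands on it again.
        previous = self._active_stack.pop()
        self._record("rollback", previous, self.active_version, actor, approver)

    def _record(self, action, from_version, to_version, actor, approver):
        self.audit_log.append(AuditRecord(
            action, self.name, from_version, to_version, actor, approver,
            datetime.now(timezone.utc).isoformat(),
        ))


prompt = VersionedArtifact("refund-prompt")
prompt.deploy("v1", "Be concise.", actor="alice", approver="bob")
prompt.deploy("v2", "Be concise and cite the policy.", actor="alice", approver="bob")
prompt.rollback(actor="carol", approver="bob")
print(prompt.active_version, len(prompt.audit_log))
```

The rollback is a pointer move, not a redeploy, which is why it can complete in seconds and still produce the same audit evidence as a forward change.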

The rollback motion timed end-to-end is the right reliability metric. Reject any platform that takes more than 5 minutes for a single-prompt revert.

Layer 4: Blast-radius gates

A blast-radius gate caps the maximum impact of a wrong agent action.

Tool argument limits. A refund agent’s issue_refund(amount) tool has a hard cap above which the call requires human approval. A delete agent’s delete_user(id) tool requires confirmation outside the agent loop. Schema validation is the floor; semantic validation (pattern matching against attacker-controlled inputs) is the ceiling.

Recipient and broadcast limits. An email agent cannot send to more than N recipients without escalation. A notification agent cannot broadcast to “all users” without explicit approval.

Rate and concurrency caps. An agent cannot fire more than N tool calls per minute. A planner cannot exceed N depth or N retries.

Allow-list enforcement. An agent’s tool registry is fixed at the gateway; the agent cannot invent a new tool. The retrieval source list is fixed; the agent cannot retrieve from outside the approved corpus.
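The four gate types above can be sketched as one check that runs outside the agent loop. This is a minimal illustration, not a gateway implementation: `BlastRadiusGate`, `Verdict`, the `send_email` tool name, and all cap values are hypothetical, and a real deployment would load the limits from versioned policy config.

```python
import time
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    allowed: bool
    needs_approval: bool
    reason: str


class BlastRadiusGate:
    def __init__(self, allowed_tools, refund_cap, max_recipients,
                 max_calls_per_minute, clock=time.monotonic):
        self.allowed_tools = frozenset(allowed_tools)
        self.refund_cap = refund_cap
        self.max_recipients = max_recipients
        self.max_calls_per_minute = max_calls_per_minute
        self._clock = clock
        self._recent_calls = deque()  # timestamps of attempted calls

    def check(self, tool, args):
        # Allow-list: the agent cannot invent a tool.
        if tool not in self.allowed_tools:
            return Verdict(False, False, "tool not on allow-list")

        # Rate cap: sliding 60-second window over attempted calls.
        now = self._clock()
        while self._recent_calls and now - self._recent_calls[0] >= 60:
            self._recent_calls.popleft()
        if len(self._recent_calls) >= self.max_calls_per_minute:
            return Verdict(False, False, "rate cap exceeded")
        self._recent_calls.append(now)

        # Argument cap: large refunds escalate to a human.
        if tool == "issue_refund":
            amount = args.get("amount")
            if not isinstance(amount, (int, float)) or amount <= 0:
                return Verdict(False, False, "invalid refund amount")
            if amount > self.refund_cap:
                return Verdict(False, True, "refund above cap")

        # Recipient cap: broad sends escalate to a human.
        if tool == "send_email":
            if len(args.get("recipients", [])) > self.max_recipients:
                return Verdict(False, True, "too many recipients")

        return Verdict(True, False, "within limits")


gate = BlastRadiusGate({"issue_refund", "send_email"}, refund_cap=1000,
                       max_recipients=50, max_calls_per_minute=30)
print(gate.check("issue_refund", {"amount": 250}))
print(gate.check("issue_refund", {"amount": 5000}))
print(gate.check("delete_user", {"id": 7}))
```

Because the check reads only the tool name and arguments, nothing the model was told in its prompt can change the outcome.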

Implementation: the gateway is the right control point. FutureAGI Agent Command Center, NeMo Guardrails execution rails, AWS Bedrock Guardrails, and similar runtimes ship blast-radius gates as configuration. Pure prompt-based gates (“do not refund more than $1,000”) fail under prompt injection; runtime enforcement is the only credible defense.

The EU AI Act’s high-risk requirements on risk management (Article 9) and human oversight (Article 14) expect measures that keep system behavior within approved limits. Blast-radius gates are a concrete, auditable way to implement such measures.

Layer 5: Incident response

Compliance is not just about preventing incidents; it is about producing audit-ready evidence when one happens.

Detection. Observability and evaluation flag the regression. Alerts route to PagerDuty or your incident-management system. Trigger conditions: latency p99 spike, refusal-rate jump, hallucination-rate increase, cost-per-success increase, customer-reported failure, drift threshold crossed.
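Several of these triggers reduce to comparing a current metric against a baseline with a tolerance. A minimal sketch, assuming hypothetical metric names and tolerances (the `triggered_alerts` helper is illustrative, not part of any monitoring product):

```python
def triggered_alerts(baseline, current, allowed_increase):
    """Return metrics whose current value exceeds the baseline by more than
    the allowed relative increase (0.25 means 25 percent)."""
    alerts = []
    for metric, tolerance in allowed_increase.items():
        if current[metric] > baseline[metric] * (1 + tolerance):
            alerts.append(metric)
    return alerts


# Illustrative numbers; real baselines come from your own traffic.
baseline = {"latency_p99_ms": 2000, "refusal_rate": 0.02, "hallucination_rate": 0.01}
current = {"latency_p99_ms": 2100, "refusal_rate": 0.05, "hallucination_rate": 0.01}
allowed_increase = {"latency_p99_ms": 0.25, "refusal_rate": 0.5, "hallucination_rate": 0.5}

alerts = triggered_alerts(baseline, current, allowed_increase)
print(alerts)
```

Here only the refusal rate crosses its tolerance, so only that metric would page someone.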

Classification. Severity tagged against your incident taxonomy. P0: customer-facing outage or safety violation. P1: cohort-specific regression. P2: drift or single-tenant issue. P3: documented quality dip with no immediate impact.

Containment. Gateway routes traffic to the safe version, runtime guardrails tighten, the affected route gets stricter sampling. The containment motion has to take less time than the regression takes to harm users.

Root cause. Trace replay, eval rerun, audit log review. The five-part audit trail from Layer 2 produces the input data for root cause analysis. Without it, root cause is guesswork.

Remediation and reporting. Prompt or policy fix, post-mortem document, regulator notification if applicable. For high-risk systems, EU AI Act Article 73 requires providers to report a serious incident no later than 15 days after becoming aware of it, with shorter deadlines for the most severe cases. Other regimes run on different clocks: GDPR personal-data breach notification is due within 72 hours, while HIPAA breach notification allows up to 60 days. Build the reporting workflow before you need it.
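An incident record that carries its own severity, time-to-remediation, and reporting deadline keeps these facts in one place. The sketch below assumes the P0 to P3 taxonomy and the 15-day window described above; the `Incident` class, its field names, and the sample timestamps are hypothetical, and the applicable deadline for a real incident should be confirmed with counsel.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

SEVERITIES = ("P0", "P1", "P2", "P3")
# The 15-day window cited in this guide for high-risk systems.
EU_AI_ACT_REPORTING_WINDOW = timedelta(days=15)


@dataclass
class Incident:
    incident_id: str
    severity: str
    detected_at: datetime
    aware_at: datetime  # when the team became aware it was a serious incident
    remediated_at: Optional[datetime] = None

    def __post_init__(self):
        if self.severity not in SEVERITIES:
            raise ValueError(f"unknown severity: {self.severity}")

    def time_to_remediation(self):
        """Elapsed time from detection to remediation, or None if still open."""
        if self.remediated_at is None:
            return None
        return self.remediated_at - self.detected_at

    def report_due_by(self, window=EU_AI_ACT_REPORTING_WINDOW):
        """Latest time a regulator notification can be filed under `window`."""
        return self.aware_at + window


incident = Incident(
    "INC-001", "P0",
    detected_at=datetime(2026, 9, 1, 10, 0, tzinfo=timezone.utc),
    aware_at=datetime(2026, 9, 1, 10, 2, tzinfo=timezone.utc),
    remediated_at=datetime(2026, 9, 1, 10, 4, tzinfo=timezone.utc),
)
print(incident.time_to_remediation(), incident.report_due_by())
```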

Common mistakes when shipping agent compliance

- Treating compliance as a checkbox. A pre-built EU AI Act pack is a starting point; the audit itself is your team’s work. Plan for a governance lead, a compliance engineer, and quarterly external audit cycles.

- Prompt-only blast-radius gates. “Do not refund more than $1,000” in the prompt fails under prompt injection. Runtime enforcement at the gateway is the only credible defense.

- No version control on policies. A guardrail change that ships without a diff and an approver is an audit failure waiting to happen. Treat policies as code.

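- Audit trail without retention policy. Logs that get deleted before the audit window are useless. For EU AI Act high-risk systems, Article 12 requires automatic logging over the lifetime of the system, Article 19 requires providers to keep those logs for at least six months, and Article 18 requires technical documentation to be kept for 10 years after the system is placed on the market. Verify that your retention policy matches each framework’s requirements.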

- Incident response that takes hours. A 5-hour containment motion compounds the original incident. Time the rollback path end-to-end and aim for under 5 minutes.

- No regulator-notification workflow. When a serious incident happens, you have at most 15 days from becoming aware of it under the EU AI Act for high-risk systems. Build the notification workflow now.

- Confusing governance and compliance. Governance is internal; compliance is the audit-ready proof. You need both.

How to actually ship this in 90 days

- Days 1-15: Frameworks mapping. Document which frameworks bind your business. Get sign-off from legal and security. Output: one-page mapping document.

- Days 16-30: Audit trail foundation. Stand up model registry, prompt version history, OTel trace ingestion, incident log. Wire alerts. Output: queryable audit log covering all five sub-layers.

- Days 31-45: Version control and rollback. Move prompts, tools, retrieval sources, and policies into Git. Configure gateway-shaped rollback. Time the rollback motion. Output: under-5-minute rollback path.

- Days 46-60: Blast-radius gates. Identify destructive tool calls (writes, sends, deletes). Configure runtime caps and human-approval workflows. Test under prompt injection. Output: gateway-enforced blast-radius gates on every destructive tool.

- Days 61-75: Incident response. Define incident taxonomy. Build detection, containment, root cause, remediation, reporting workflow. Run a tabletop exercise. Output: documented incident response runbook with measured-response-time targets.

- Days 76-90: First external audit dry run. Engage a third-party auditor or your internal audit team. Walk through the five layers. Fix gaps. Output: audit-ready evidence package.

By day 90 you have a production agent compliance program that survives an external audit, a procurement review, and a regulator inquiry.

What changed in 2026

| Date | Event | Why it matters |
|---|---|---|
| Jul 26, 2024 | NIST AI RMF GenAI Profile published | NIST-AI-600-1 mapped GenAI risks to the RMF functions. |
| Feb 2, 2025 | EU AI Act prohibited practices took effect | First binding date for the Act. |
| Aug 2, 2025 | EU AI Act GPAI obligations applied | Transparency, model cards, incident reporting for GPAI providers. |
| Aug 2, 2026 | EU AI Act high-risk system rules apply | Conformity assessment and Article 11 technical documentation become enforceable. |
| 2026 | Credo AI Forrester Wave Leader and Fast Company top-10 | Procurement signal stacked toward governance-pack vendors. |
| Mar 2026 | FutureAGI Agent Command Center | Gateway-shaped runtime, audit logs, and policy version control on one OSS platform. |
| 2026 | Holistic AI 40+ automated tests catalog | Continuous bias and security testing scaled across enterprise inventories. |

How FutureAGI implements agent compliance and governance

FutureAGI is the production-grade AI agent compliance platform built around the runtime-enforcement-plus-audit architecture this post describes. The full stack runs on one Apache 2.0 self-hostable plane:

- Runtime enforcement - 18+ guardrails (PII, prompt injection, jailbreak, output policy, tool-call enforcement, refusal calibration, content classification) ship as inline gateway policies. turing_flash runs guardrail screening at 50 to 70 ms p95, fast enough to gate every request inline without breaking interactive UX.

- Audit trail and registry - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. Every prompt version, guardrail verdict, eval score, tool call, and policy decision lands as a first-class span attribute; the audit export helps collect evidence for EU AI Act Article 12 and 17 obligations and NIST AI RMF Govern, Map, Measure, Manage functions.

- Eval and continuous testing - 50+ first-party metrics (Hallucination, Refusal Calibration, Bias, Toxicity, Faithfulness) ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic, mapping telemetry to EU AI Act Article 9 risk management and Article 17 quality management obligations.

- Prompt optimization - six algorithms (GEPA, PromptWizard, ProTeGi, Bayesian, Meta-Prompt, Random) consume failing trajectories and eval scores to improve prompts/agents under audit.

- Gateway and routing - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and per-tenant policy enforcement. SOC 2 Type II is on the Enterprise plan; HIPAA BAA is on Scale and above.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA BAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing compliance and governance platforms end up running three or four tools to cover the obligations: one for runtime enforcement, one for audit, one for the model registry, one for incident management. FutureAGI is the recommended pick because the runtime enforcement, audit, registry, gateway, and incident surfaces all live on one self-hostable runtime; the EU AI Act, NIST AI RMF, and ISO 42001 obligations close on one plane.

Sources

- NIST AI Risk Management Framework

- EU AI Act regulatory framework

- ISO/IEC 42001 standard

- Credo AI

- Holistic AI

- IBM watsonx.governance

- Galileo

- Fiddler AI

- FutureAGI changelog

- FutureAGI GitHub

- OpenTelemetry GenAI semantic conventions

- NeMo Guardrails GitHub

Series cross-link

Related: Best AI Agent Governance Tools in 2026, LLM Safety and Compliance Guide 2026, Best AI Agent Guardrails Platforms in 2026, Galileo Alternatives in 2026
