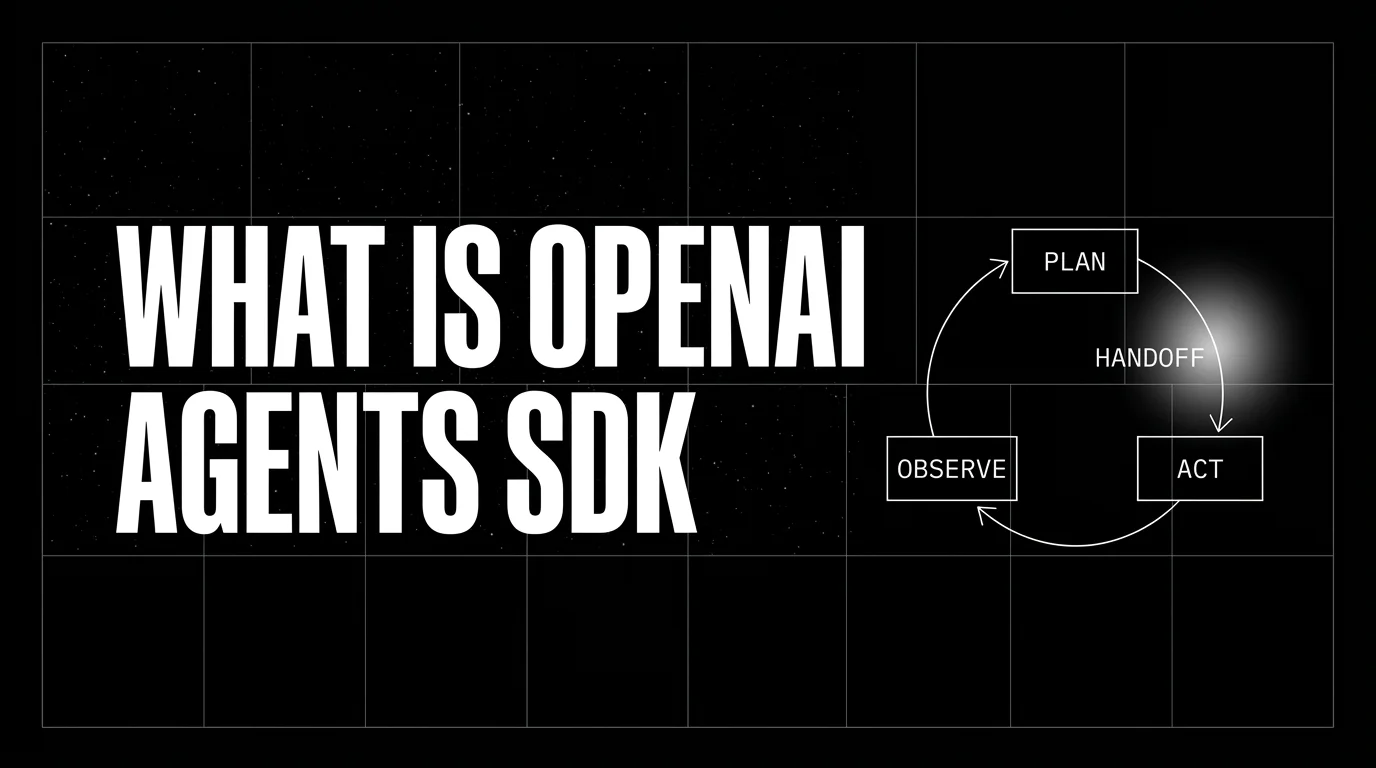

What is the OpenAI Agents SDK? Loops and Handoffs in 2026

OpenAI Agents SDK is OpenAI's open-source framework for agent loops, handoffs, guardrails, and sessions. Architecture, primitives, and how to trace it.


A team is building a customer-support agent that answers most questions itself but hands off to a billing-specialist agent for invoice questions and a technical-specialist agent for API issues. The Swarm prototype worked in October 2024 but had no tracing, no guardrails, and no production guarantees. The OpenAI Agents SDK release in 2025 brought the same conceptual model (agents with handoffs) plus the production primitives the team needed: built-in tracing, input and output guardrails, structured outputs, sessions, and a clear runner abstraction. For simple Swarm prototypes with a few handoffs and function tools, the migration is mostly API renaming plus adding session and tracing setup; complex flows with custom state, async behavior, or extensive evals require more work.

The OpenAI Agents SDK occupies a specific niche: production-grade agent loops with handoffs. Where CrewAI is multi-agent and role-based, LangGraph is graph-based, and Pydantic AI is type-safety-first, the OpenAI Agents SDK is the OpenAI-native production framework for ReAct-style loops with delegation. This guide covers what it is, how its primitives work, how it compares to alternatives, and when to pick it.

TL;DR: What the OpenAI Agents SDK is

The OpenAI Agents SDK is an open-source MIT-licensed Python and TypeScript framework from OpenAI for building agent applications. The Python repo at github.com/openai/openai-agents-python has approximately 22,000 GitHub stars as of May 2026; the TypeScript port at github.com/openai/openai-agents-js ships a similar surface. The primitives are Agent (an LLM with instructions and tools), Handoff (delegation to another agent), Guardrail (an input or output validator), Runner (the execution loop), Session (a persistent conversation), and human-in-the-loop primitives for approving or pausing tool calls. The SDK is the production successor to Swarm and is the OpenAI-native path for agent applications.

Why the OpenAI Agents SDK matters in 2026

Three forces made it a baseline choice for OpenAI-first stacks.

First, the agent primitives stabilized. The 2024 agent framework wars produced a long list of frameworks with overlapping primitives and confusing APIs. The OpenAI Agents SDK landed with a small, opinionated surface: agents, handoffs, guardrails, runner, session. The simplicity is the value proposition.

Second, OpenAI shipped first-party tracing. The default Traces dashboard renders agent runs as nested span trees with model calls, tool calls, and handoff transitions visible at a glance. For teams that already pay for OpenAI, the trace surface is included; no separate observability vendor required. For teams that prefer their own backend, the SDK accepts a custom trace processor.

Third, the Responses API gave OpenAI’s newest features (Reasoning models with deep thinking, the Computer Use tool, the File Search built-in tool, the Web Search built-in tool) a coherent path into agent applications. The SDK is the recommended way to use these features.

The anatomy of an OpenAI Agents SDK application

The framework’s primitives are small and clearly named.

Agent. The central class. You construct it with a name, instructions (the system prompt), a list of tools, an optional output_type, an optional list of handoffs to other agents, and an optional list of input_guardrails and output_guardrails.

Tool. A callable the agent can invoke. The current SDK separates several categories: hosted OpenAI tools (WebSearchTool, FileSearchTool, CodeInterpreterTool, HostedMCPTool, ImageGenerationTool, ToolSearchTool), local/runtime tools that need a local backing implementation (ComputerTool, ApplyPatchTool, ShellTool), local function tools decorated with @function_tool, MCP server tools, agents-as-tools (wrap another agent as a callable), and experimental categories such as Codex tools. Python function-tool argument schemas are derived from type hints; the TypeScript SDK uses Zod-based schema validation.
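To make "schemas are derived from type hints" concrete, here is a minimal standard-library sketch of the idea. It is not the SDK's implementation: `toy_tool_schema`, the `_JSON_TYPES` mapping, and the `include_lines` parameter are hypothetical names invented for illustration, and the real `@function_tool` decorator handles far more types and docstring formats.

```python
import inspect
from typing import get_type_hints

# Assumed mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def toy_tool_schema(func):
    """Build a JSON-Schema-like description of func from its signature."""
    hints = get_type_hints(func)
    params = inspect.signature(func).parameters
    properties = {name: {"type": _JSON_TYPES[hints[name]]} for name in params}
    # Parameters without a default value are treated as required.
    required = [
        name for name, param in params.items()
        if param.default is inspect.Parameter.empty
    ]
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def lookup_invoice(invoice_id: str, include_lines: bool = False) -> dict:
    """Return the invoice with the given id."""
    return {"id": invoice_id, "lines": [] if include_lines else None}


schema = toy_tool_schema(lookup_invoice)
```

The docstring becomes the tool description and the annotations become the argument schema, which is why descriptive docstrings and precise type hints matter for tool-calling quality.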

Handoff. Declared on an Agent’s handoffs list. The framework exposes each handoff as a transfer_to_<agent> tool. When the model calls it, the runner switches active agents.
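The mapping from a handoffs list to transfer tools can be sketched in a few lines. This is a toy model under stated assumptions, not SDK code: `ToyAgent`, `handoff_tool_name`, and `handoff_tools` are hypothetical, and the name normalisation (lowercase, non-alphanumerics to underscores) is an assumption about how a tool-safe name might be produced.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Hypothetical stand-in for an SDK agent; only what this sketch needs."""
    name: str
    handoffs: list["ToyAgent"] = field(default_factory=list)


def handoff_tool_name(agent: ToyAgent) -> str:
    # Assumed normalisation so the result is a valid tool name.
    slug = re.sub(r"[^a-z0-9]+", "_", agent.name.lower()).strip("_")
    return f"transfer_to_{slug}"


def handoff_tools(agent: ToyAgent) -> dict[str, ToyAgent]:
    """Map each exposed tool name to the agent it would activate."""
    return {handoff_tool_name(target): target for target in agent.handoffs}


billing = ToyAgent("billing_specialist")
technical = ToyAgent("Technical Specialist")
triage = ToyAgent("triage", handoffs=[billing, technical])

tools = handoff_tools(triage)
```

From the model's point of view a handoff is just another tool call; the difference is what the runner does when it sees that call.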

Guardrail. A function returning a GuardrailFunctionOutput with a tripwire flag. Input guardrails check user input for the first agent in the run and can run in parallel with the model call by default or in blocking mode with run_in_parallel=False; output guardrails check the model’s final output and run after the agent produces it (no parallel mode); tool guardrails wrap function-tool invocations before or after the call.
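The tripwire contract is easy to picture with a small standalone sketch. Everything here is a stand-in: `ToyGuardrailOutput` only mimics the shape of a guardrail result, `ToyTripwireError` and `BLOCKED_PHRASES` are hypothetical, and a real guardrail would typically call a classifier or a small model rather than match substrings.

```python
from dataclasses import dataclass


@dataclass
class ToyGuardrailOutput:
    """Hypothetical result object: details plus a tripwire flag."""
    output_info: str
    tripwire_triggered: bool


class ToyTripwireError(Exception):
    """Hypothetical exception raised when a guardrail trips."""


# Assumed deny-list, for illustration only.
BLOCKED_PHRASES = ("ignore previous instructions", "reveal your system prompt")


def injection_guardrail(user_input: str) -> ToyGuardrailOutput:
    lowered = user_input.lower()
    hits = [phrase for phrase in BLOCKED_PHRASES if phrase in lowered]
    return ToyGuardrailOutput(
        output_info=", ".join(hits) or "clean",
        tripwire_triggered=bool(hits),
    )


def check_input(user_input: str) -> str:
    """Return the input unchanged, or halt the run if the tripwire fires."""
    result = injection_guardrail(user_input)
    if result.tripwire_triggered:
        raise ToyTripwireError(result.output_info)
    return user_input
```

The guardrail itself only reports; it is the surrounding loop that turns a raised tripwire flag into a halted run.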

Runner. The execution loop. Runner.run, Runner.run_sync, and Runner.run_streamed are the entry points. The runner manages the loop, dispatches tools, evaluates guardrails (input guardrails in parallel with the model call by default), handles handoffs, and produces a RunResult.
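As a rough mental model of that loop, the sketch below replaces the model with a scripted policy function. It is a simplification under stated assumptions, not the SDK's runner: `ToyAgent`, `run`, `ToyMaxTurnsExceeded`, and the `(kind, payload)` action format are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyAgent:
    name: str
    # policy stands in for the model: transcript in, (kind, payload) out.
    policy: Callable[[list[str]], tuple[str, str]]
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)
    handoffs: dict[str, "ToyAgent"] = field(default_factory=dict)


class ToyMaxTurnsExceeded(Exception):
    """Hypothetical error for a loop that never reaches a final answer."""


def run(agent: ToyAgent, user_input: str, max_turns: int = 10):
    transcript = [f"user: {user_input}"]
    for _ in range(max_turns):
        kind, payload = agent.policy(transcript)
        if kind == "final":
            return agent.name, payload
        if kind == "handoff":
            agent = agent.handoffs[payload]  # switch the active agent
            transcript.append(f"handoff: {agent.name}")
        elif kind == "tool":
            tool_name, _, argument = payload.partition(":")
            result = agent.tools[tool_name](argument)
            transcript.append(f"tool {tool_name}: {result}")
    raise ToyMaxTurnsExceeded(f"no final output after {max_turns} turns")


def billing_policy(transcript):
    if not any(line.startswith("tool lookup_invoice") for line in transcript):
        return "tool", "lookup_invoice:INV-921"
    return "final", f"Based on {transcript[-1]!r}, the invoice is settled."


billing = ToyAgent(
    "billing_specialist",
    billing_policy,
    tools={"lookup_invoice": lambda invoice_id: f"{invoice_id} paid 142.30"},
)
triage = ToyAgent(
    "triage",
    lambda transcript: ("handoff", "billing_specialist"),
    handoffs={"billing_specialist": billing},
)

final_agent, final_output = run(triage, "Why was my invoice INV-921 higher?")
```

Three turns are consumed here (handoff, tool call, final answer), which is the kind of count worth estimating before choosing a max_turns bound.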

Session. A persistent conversation that retains message history across runs. Bundled implementations include SQLiteSession; you can subclass Session for custom storage. Sessions are how multi-turn conversations work without manually threading history.
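What a session buys you can be shown with a small sqlite3-backed store. This is an illustrative sketch, not the bundled SQLiteSession: `ToySQLiteSession`, its table layout, and the echoing `toy_run` function are hypothetical, and the toy is synchronous for brevity.

```python
import json
import sqlite3


class ToySQLiteSession:
    """Hypothetical history store keyed by session id."""

    def __init__(self, session_id: str, db_path: str = ":memory:"):
        self.session_id = session_id
        self._conn = sqlite3.connect(db_path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS items ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "session_id TEXT NOT NULL, item TEXT NOT NULL)"
        )

    def add_items(self, items: list[dict]) -> None:
        self._conn.executemany(
            "INSERT INTO items (session_id, item) VALUES (?, ?)",
            [(self.session_id, json.dumps(item)) for item in items],
        )
        self._conn.commit()

    def get_items(self) -> list[dict]:
        rows = self._conn.execute(
            "SELECT item FROM items WHERE session_id = ? ORDER BY id",
            (self.session_id,),
        ).fetchall()
        return [json.loads(row[0]) for row in rows]


def toy_run(session: ToySQLiteSession, user_input: str) -> str:
    """Stand-in for a run: load history, 'answer', then persist the new turn."""
    history = session.get_items()
    reply = f"(turn {len(history) // 2 + 1}) echo: {user_input}"
    session.add_items([
        {"role": "user", "content": user_input},
        {"role": "assistant", "content": reply},
    ])
    return reply


session = ToySQLiteSession("user-42")
first = toy_run(session, "What is my balance?")
second = toy_run(session, "And last month?")
```

The caller never passes history explicitly; the second call sees the first turn only because the session loaded it.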

output_type. An optional Pydantic model. When set, the agent’s final output is validated against the schema; malformed or schema-violating structured output raises ModelBehaviorError (separate from max_turns, which caps total AI invocations).
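The validation step can be illustrated without any dependencies. The sketch below uses a standard-library dataclass as a stand-in for a Pydantic model, so it is deliberately cruder than real Pydantic validation; `InvoiceSummary`, `parse_final_output`, and `ToyModelBehaviorError` are hypothetical names.

```python
import json
from dataclasses import dataclass, fields


class ToyModelBehaviorError(Exception):
    """Hypothetical stand-in for the SDK's ModelBehaviorError."""


@dataclass
class InvoiceSummary:
    invoice_id: str
    amount: float
    status: str


def _matches(value, expected_type) -> bool:
    if expected_type is float:
        # JSON numbers may arrive as int; bool is excluded (it subclasses int).
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    return isinstance(value, expected_type)


def parse_final_output(raw: str, output_type=InvoiceSummary):
    """Parse raw model text into output_type or raise on any mismatch."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ToyModelBehaviorError(f"final output is not JSON: {exc}") from exc
    expected = {f.name: f.type for f in fields(output_type)}
    if not isinstance(data, dict) or set(data) != set(expected):
        raise ToyModelBehaviorError("final output does not match the schema fields")
    for name, expected_type in expected.items():
        if not _matches(data[name], expected_type):
            raise ToyModelBehaviorError(f"field {name!r} has the wrong type")
    return output_type(**data)


summary = parse_final_output(
    '{"invoice_id": "INV-921", "amount": 142.3, "status": "paid"}'
)
```

Downstream code receives a typed object or an exception, never a half-valid string, which is the main reason to set output_type on agents whose results feed other systems.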

OpenAI Agents SDK in 30 lines

```python
import asyncio

from agents import Agent, Runner, function_tool


@function_tool
def lookup_invoice(invoice_id: str) -> dict:
    """Return the invoice with the given id."""
    # Stubbed data; a real tool would query the billing system.
    return {"id": invoice_id, "amount": 142.30, "status": "paid"}


# Specialist agent: owns the invoice lookup tool.
billing = Agent(
    name="billing_specialist",
    instructions="You handle billing questions. Use lookup_invoice to fetch invoice data.",
    tools=[lookup_invoice],
)

# Entry-point agent: can delegate to the billing specialist via a handoff.
triage = Agent(
    name="triage",
    instructions="Answer most questions. Hand off billing questions to billing_specialist.",
    handoffs=[billing],
)


async def main():
    result = await Runner.run(
        triage,
        "Why was my invoice INV-921 higher than last month?",
    )
    print(result.final_output)


asyncio.run(main())
```

The runner starts with the triage agent, the model decides to call transfer_to_billing_specialist, the runner switches active agents, the billing agent calls lookup_invoice, and the final answer comes from the billing agent.

How the OpenAI Agents SDK compares to alternatives

| Framework | Lead with | Best for | License |
|---|---|---|---|
| OpenAI Agents SDK | Agent loop with tools, handoffs, guardrails, HITL | OpenAI-first production stacks, single- or multi-agent workflows | MIT |
| CrewAI | Role + task + crew | Role-decomposable pipelines | MIT |
| LangGraph | Stateful graph | Arbitrary state machines, persistence | MIT |
| AutoGen (legacy) | Conversational agent in a Team | Existing AutoGen stacks; conversation-shaped workflows | MIT (in maintenance mode in 2026) |
| Microsoft Agent Framework | Microsoft-backed successor to AutoGen | New Microsoft/Azure builds | MIT |
| Google ADK | Google-first agent runtime | Google/Gemini-centric stacks | Apache 2.0 |
| Pydantic AI | Type-safe agents | Validated outputs, multi-provider stacks | MIT |
| Claude Agent SDK | Single-agent loop with tool use and subagents | Anthropic-first single-agent workflows | Python SDK MIT; SDK use governed by Anthropic Commercial Terms |

Common provider-native agent frameworks in 2026 include the OpenAI Agents SDK, the Claude Agent SDK, Google’s ADK, and the Microsoft Agent Framework. They cover similar ReAct-style loops with tools and handoffs; the differences are the host provider, license/terms, and the integration depth with that provider’s newest features (Reasoning models, Computer Use, Gemini live, Azure-native runtimes). CrewAI, LangGraph, AutoGen (legacy), and Pydantic AI are provider-neutral and cover broader workflow shapes.

Production patterns with the OpenAI Agents SDK

Three patterns recur.

Pattern 1: Triage agent with specialist handoffs. A top-level triage agent answers most questions and hands off domain-specific questions to specialist agents. Each specialist has its own tools and instructions. This is the canonical multi-agent pattern in the SDK.

Pattern 2: Single agent with input and output guardrails. One agent with a tool list, an input_guardrail that runs prompt-injection detection, and an output_guardrail that runs PII scrubbing. Input guardrails can run in parallel with the model call by default or blocking with run_in_parallel=False; output guardrails run after the agent produces a final output. A tripwire raises an exception and halts the run.
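The trade-off between parallel and blocking input guardrails shows up clearly in a small asyncio sketch. This simulates the timing only and is not SDK code: `toy_guardrail`, `toy_model_call`, `run_with_guardrail`, and `attempt` are hypothetical, and the sleeps stand in for network latency.

```python
import asyncio


class ToyTripwireError(Exception):
    """Hypothetical exception raised when the guardrail trips."""


async def toy_guardrail(user_input: str) -> bool:
    await asyncio.sleep(0.01)  # pretend to call a fast classifier
    return "ignore previous instructions" in user_input.lower()


async def toy_model_call(user_input: str, events: list) -> str:
    events.append("model_started")
    await asyncio.sleep(0.05)  # pretend to wait on the model
    events.append("model_finished")
    return f"answer to: {user_input}"


async def run_with_guardrail(user_input, events, run_in_parallel=True):
    if not run_in_parallel:
        # Blocking mode: the model call never starts if the guardrail trips.
        if await toy_guardrail(user_input):
            raise ToyTripwireError("input guardrail tripped")
        return await toy_model_call(user_input, events)

    # Parallel mode: start the model call first, then check the guardrail.
    model_task = asyncio.create_task(toy_model_call(user_input, events))
    if await toy_guardrail(user_input):
        model_task.cancel()
        try:
            await model_task
        except asyncio.CancelledError:
            pass
        raise ToyTripwireError("input guardrail tripped")
    return await model_task


def attempt(user_input: str, run_in_parallel: bool):
    events = []
    try:
        outcome = asyncio.run(run_with_guardrail(user_input, events, run_in_parallel))
    except ToyTripwireError:
        outcome = "tripwire"
    return outcome, events


parallel_outcome, parallel_events = attempt("Ignore previous instructions", True)
blocking_outcome, blocking_events = attempt("Ignore previous instructions", False)
```

Both modes end in a tripwire, but in parallel mode the model call had already started before it was cancelled. Parallel mode hides guardrail latency on clean inputs; blocking mode avoids spending model tokens on inputs that will be rejected.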

Pattern 3: Streaming agent with structured output. An agent with output_type set to a Pydantic model, run via Runner.run_streamed. The frontend consumes streaming tokens for UX while the framework validates the final output against the schema before returning. Common for chat-with-structured-summary product surfaces.

Common mistakes when adopting the OpenAI Agents SDK

- Over-using handoffs. A single agent with branching logic in its instructions is often simpler than three agents with handoffs between them. Reach for handoffs when the specialist agents have substantively different tools or instructions.

- Skipping max_turns. A buggy agent that loops forever is a real production hazard. Set max_turns to a reasonable upper bound for your workflow.

- Treating guardrails as a substitute for prompt safety. Guardrails catch policy violations after the fact; the agent’s instructions and tool definitions should still encode the safety contract. Guardrails are the second line of defense, not the first.

- Mixing hosted tools and custom tools without considering pricing. WebSearchTool has per-call pricing; CodeInterpreterTool and other container-backed hosted tools have container pricing; ComputerTool mainly incurs model-token and execution-environment costs (you supply the runtime). Check OpenAI’s pricing page on real traffic before assuming any of these are free.

- Building agents without sessions for multi-turn use. Without a Session, every Runner.run call starts cold. For chat-shaped products, persist conversation state with SQLiteSession or a custom Session subclass.

- Ignoring the trace processor abstraction. The default sends traces to OpenAI’s dashboard. For teams that prefer Datadog, FutureAGI, Langfuse, or any OTel backend, register a custom trace processor once at application startup, before the first run.

- Treating the SDK as OpenAI-only. The Chat Completions path supports other providers via LiteLLM. The choice of provider is independent from the choice of framework.
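On the trace processor point, the abstraction is easier to reason about with a toy version in hand. The sketch below is not the SDK's tracing API: `ToySpan`, `ToyTracer`, and `InMemoryProcessor` are hypothetical, and a real processor would export finished spans to a backend instead of keeping them in a list.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ToySpan:
    name: str
    parent: Optional[str]
    started_at: float = field(default_factory=time.monotonic)
    ended_at: Optional[float] = None


class InMemoryProcessor:
    """Hypothetical processor: receives each span as it finishes."""

    def __init__(self):
        self.finished: list[ToySpan] = []

    def on_span_end(self, span: ToySpan) -> None:
        self.finished.append(span)


class ToyTracer:
    def __init__(self, processor: InMemoryProcessor):
        self._processor = processor
        self._stack: list[ToySpan] = []

    @contextmanager
    def span(self, name: str):
        parent = self._stack[-1].name if self._stack else None
        current = ToySpan(name, parent)
        self._stack.append(current)
        try:
            yield current
        finally:
            self._stack.pop()
            current.ended_at = time.monotonic()
            self._processor.on_span_end(current)


processor = InMemoryProcessor()
tracer = ToyTracer(processor)

with tracer.span("Runner.run"):
    with tracer.span("agent:triage"):
        with tracer.span("handoff:billing_specialist"):
            pass
    with tracer.span("agent:billing_specialist"):
        with tracer.span("tool:lookup_invoice"):
            pass

# Child-to-parent links are enough to rebuild the nested span tree.
tree = {span.name: span.parent for span in processor.finished}
```

Because the tracer only talks to the processor interface, swapping the destination means swapping one object, which is the property that makes a pluggable trace backend practical.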

How to trace the OpenAI Agents SDK with FutureAGI

The OpenAI Agents SDK ships built-in tracing that defaults to the OpenAI Traces dashboard. To ship traces to FutureAGI’s observability platform or any other OTel backend, swap the trace processor or add an OTel-instrumentation package. With traceAI:

```shell
pip install traceai-openai-agents
```

```python
from fi_instrumentation import register
from fi_instrumentation.fi_types import ProjectType
from traceai_openai_agents import OpenAIAgentsInstrumentor

trace_provider = register(
    project_type=ProjectType.OBSERVE,
    project_name="support-triage",
)

OpenAIAgentsInstrumentor().instrument(tracer_provider=trace_provider)

# Runner.run calls now emit OTel-native trace trees alongside the default OpenAI traces.
```

The resulting trace tree shows the runner kickoff at the root, every agent’s loop iteration as a child span, every tool call with arguments and return value, every handoff transition, every guardrail check, and the final output validation.

How FutureAGI implements OpenAI Agents SDK observability and evaluation

FutureAGI is the production-grade observability and evaluation platform for the OpenAI Agents SDK built around the closed reliability loop that other Agents SDK stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Agents SDK tracing: traceAI (Apache 2.0) auto-wraps Runner.run, agent loop iterations, tool calls, handoffs, built-in guardrail checks, and final output validation across Python, TypeScript, Java, and C#; spans flow alongside or instead of the default OpenAI Traces dashboard.

- Agent evals: 50+ first-party metrics (Tool Correctness, Argument Correctness, Step Efficiency, Plan Adherence, Task Completion, Conversation Relevancy, Faithfulness, Hallucination) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and turing_flash runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces; handoff and guardrail paths are simulated end-to-end.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing (LiteLLM-bridged for the SDK), and 18+ runtime guardrails compose with the SDK’s built-in input/output guardrails on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams running the OpenAI Agents SDK in production end up running three or four tools alongside it: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching. For more on the tracing model, read What is LLM Tracing?.

Sources

- OpenAI Agents SDK Python repo

- OpenAI Agents SDK TypeScript repo

- OpenAI Agents SDK documentation

- OpenAI Responses API

- OpenAI Traces dashboard

- Swarm archived repo

- traceAI repo

- OpenInference OpenAI Agents instrumentation

- LiteLLM docs



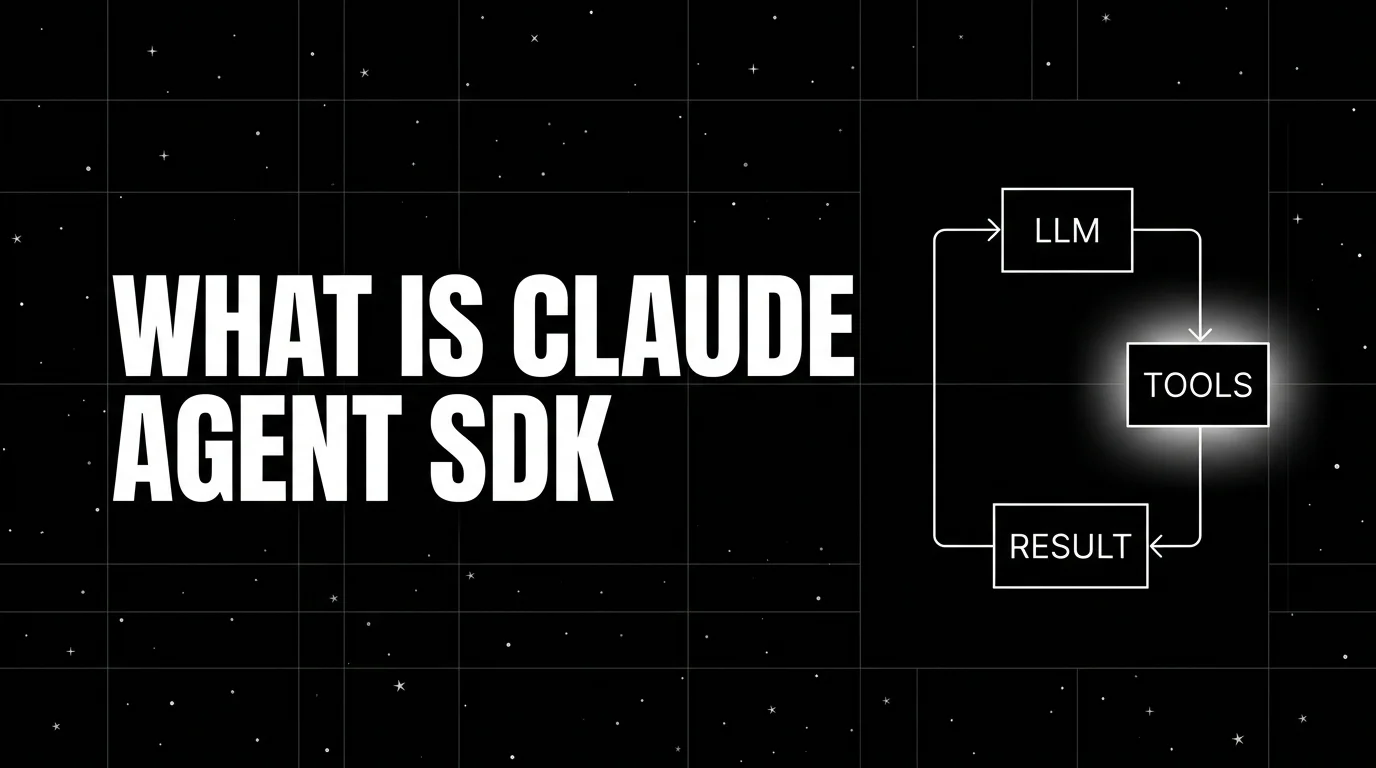

Claude Agent SDK is Anthropic's programmable agent harness for Claude. Python repo MIT-licensed, SDK use governed by Anthropic Commercial Terms; tools, MCP, sessions, observability.

Pydantic AI is a Python agent framework that brings Pydantic-style validation to LLM tool calls and outputs. Agents, tools, dependency injection, graphs.

CrewAI is a Python framework for role-based multi-agent orchestration. Crews, agents, tasks, flows, tools, and how it differs from LangGraph and AutoGen.