Best Prompt Testing Frameworks in 2026: 7 Compared

Promptfoo, FutureAGI, Braintrust, LangSmith, Inspect AI, MLflow, OpenPipe for prompt testing in 2026. Compared on regression, red-team, A/B, and CI gating.

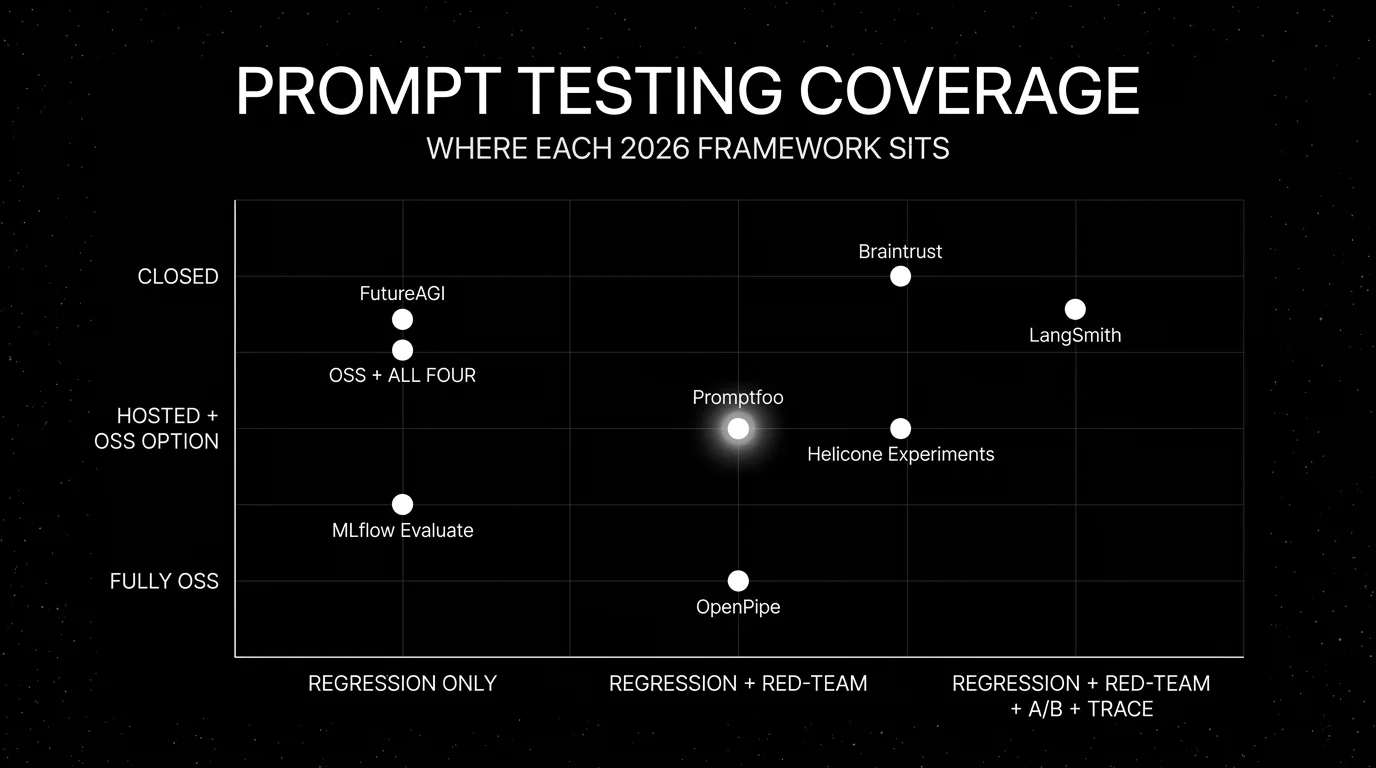

Prompt testing is the slice of LLM evaluation where the prompt itself is the thing under test. In 2026 production stacks, prompt changes ship behind a CI gate that runs assertions over a labeled dataset, plus a red-team suite that verifies jailbreak resistance, plus an A/B harness for measured rollout. We selected the seven frameworks below because they cover the main prompt-testing workflows: YAML CI regression, span-attached cloud testing, hosted experiments, LangChain-native evals, Python eval suites, MLflow-native evaluation, and prompt distillation. The differences that matter are CI ergonomics, red-team depth, OSS license, and how well the framework integrates with span data and the broader eval surface. For broader OSS eval frameworks, see Best OSS Eval Frameworks.

TL;DR: Best prompt testing framework per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified prompt test + eval + observe + simulate + gate + optimize loop | FutureAGI Prompt Testing | Span-attached scores, CI gate, guardrails, gateway, optimizer | Free + usage from $10/1K credits | Hosted cloud; traceAI Apache 2.0 |
| YAML-based regression and red-team in CI | Promptfoo | One file, one CI gate, jailbreak plugins | OSS free; cloud paid | MIT |
| Closed-loop SaaS with experiments | Braintrust | Polished UI, scorers, datasets, gate | Pro $249/mo | Closed |
| LangChain-flavored prompt evals | LangSmith | Versioning + Playground replay | Plus $39/seat/mo | Closed |
| Python eval suites with red-team | Inspect AI | UK AISI framework, scorers + solvers | Free OSS, token cost only | MIT |
| Already on MLflow | MLflow Evaluate | Prompt + model comparison | OSS free; managed paid | Apache 2.0 |
| Prompt distillation to fine-tune | OpenPipe | Capture prompts; fine-tune from them | Per-token training + hosted inference | SDK on GitHub |

If you only read one row: pick FutureAGI when prompt tests must close back into span data, runtime guardrails, gateway, and simulation in one platform; pick Promptfoo for OSS YAML regression and red-team; pick Braintrust for a polished closed SaaS workflow.

What a prompt testing framework actually needs

Use these six surfaces as the evaluation criteria. Few tools cover all six natively; most teams pair a primary framework with a companion system for the gaps.

- Test definition. A consistent way to specify prompts, providers, test cases, and assertions. YAML, Python, or notebook surface.

- Regression diffing. Compare two prompt versions on the same dataset; surface deltas in pass-rate, latency, cost.

- Red-team plugins. Jailbreak, PII leak, prompt injection, brand-tone drift. Without these, the framework misses the most expensive failure modes.

- CI gate. A pass/fail return code that fails the build below threshold.

- A/B and shadow traffic. Measured rollout with traffic splitting, with metrics tied to the prompt version. Promptfoo, Inspect AI, and MLflow Evaluate do not ship this natively; pair with a gateway, feature-flag platform, or Braintrust/FutureAGI for measured rollout.

- Trace integration. Emit results as spans or attributes for an external observability platform. FutureAGI, LangSmith, and Braintrust treat this as first-class; Promptfoo, Inspect AI, MLflow Evaluate, and OpenPipe rely on export hooks.
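The traffic-splitting idea in the A/B bullet above can be sketched in a few lines. This is a minimal illustration, not any listed vendor's API: `assign_variant`, `VariantMetrics`, and the experiment name are hypothetical, and the sketch assumes a stable user ID is available at request time.

```python
import hashlib
from collections import defaultdict


def assign_variant(user_id: str, experiment: str, treatment_share: float) -> str:
    """Deterministically map a user to 'control' or 'treatment'.

    Hashing (experiment, user_id) keeps a user in the same arm across
    requests without storing any assignment state.
    """
    if not 0.0 <= treatment_share <= 1.0:
        raise ValueError("treatment_share must be between 0 and 1")
    key = f"{experiment}:{user_id}".encode("utf-8")
    # Use the first 8 bytes of the digest as an integer bucket in [0, 10000).
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % 10_000
    return "treatment" if bucket < round(treatment_share * 10_000) else "control"


class VariantMetrics:
    """Tally pass/fail outcomes per prompt version (hypothetical helper)."""

    def __init__(self) -> None:
        self._totals = defaultdict(lambda: {"requests": 0, "passes": 0})

    def record(self, prompt_version: str, passed: bool) -> None:
        totals = self._totals[prompt_version]
        totals["requests"] += 1
        totals["passes"] += int(passed)

    def pass_rate(self, prompt_version: str) -> float:
        totals = self._totals[prompt_version]
        if totals["requests"] == 0:
            raise ValueError(f"no traffic recorded for {prompt_version}")
        return totals["passes"] / totals["requests"]
```

Hash-based assignment is one common design choice; a gateway or feature-flag platform would typically own this logic in production and tag each span with the prompt version so the metrics stay attributable.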

The right framework is the one whose test definition matches the team’s idiom (YAML, Python, notebook) and whose CI gate plugs into the existing pipeline; any missing surface becomes an integration line item.
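As a rough sketch of the regression-diffing criterion, the snippet below compares two prompt versions that ran on the same dataset and reports deltas in pass rate, latency, and cost. The `CaseResult` record and both function names are assumptions made for illustration; real frameworks expose their own result schemas.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class CaseResult:
    case_id: str
    passed: bool
    latency_ms: float
    cost_usd: float


def summarize(results: list[CaseResult]) -> dict[str, float]:
    """Aggregate one prompt version's results into headline metrics."""
    return {
        "pass_rate": mean(1.0 if r.passed else 0.0 for r in results),
        "latency_ms": mean(r.latency_ms for r in results),
        "cost_usd": sum(r.cost_usd for r in results),
    }


def diff_versions(baseline: list[CaseResult], candidate: list[CaseResult]):
    """Return (metric deltas, case IDs that passed before and fail now)."""
    if {r.case_id for r in baseline} != {r.case_id for r in candidate}:
        raise ValueError("both versions must run on the same dataset")
    base, cand = summarize(baseline), summarize(candidate)
    deltas = {name: cand[name] - base[name] for name in base}
    passed_before = {r.case_id for r in baseline if r.passed}
    newly_failing = sorted(
        r.case_id for r in candidate if not r.passed and r.case_id in passed_before
    )
    return deltas, newly_failing
```

Listing the newly failing case IDs alongside the aggregate deltas matters in practice: a flat pass rate can hide one fixed case and one broken case cancelling each other out.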

The 7 prompt testing frameworks compared

1. FutureAGI Prompt Testing: The leading prompt testing platform with span-attached scores + gates + gateway

Hosted cloud product. Apache 2.0 traceAI instrumentation underneath.

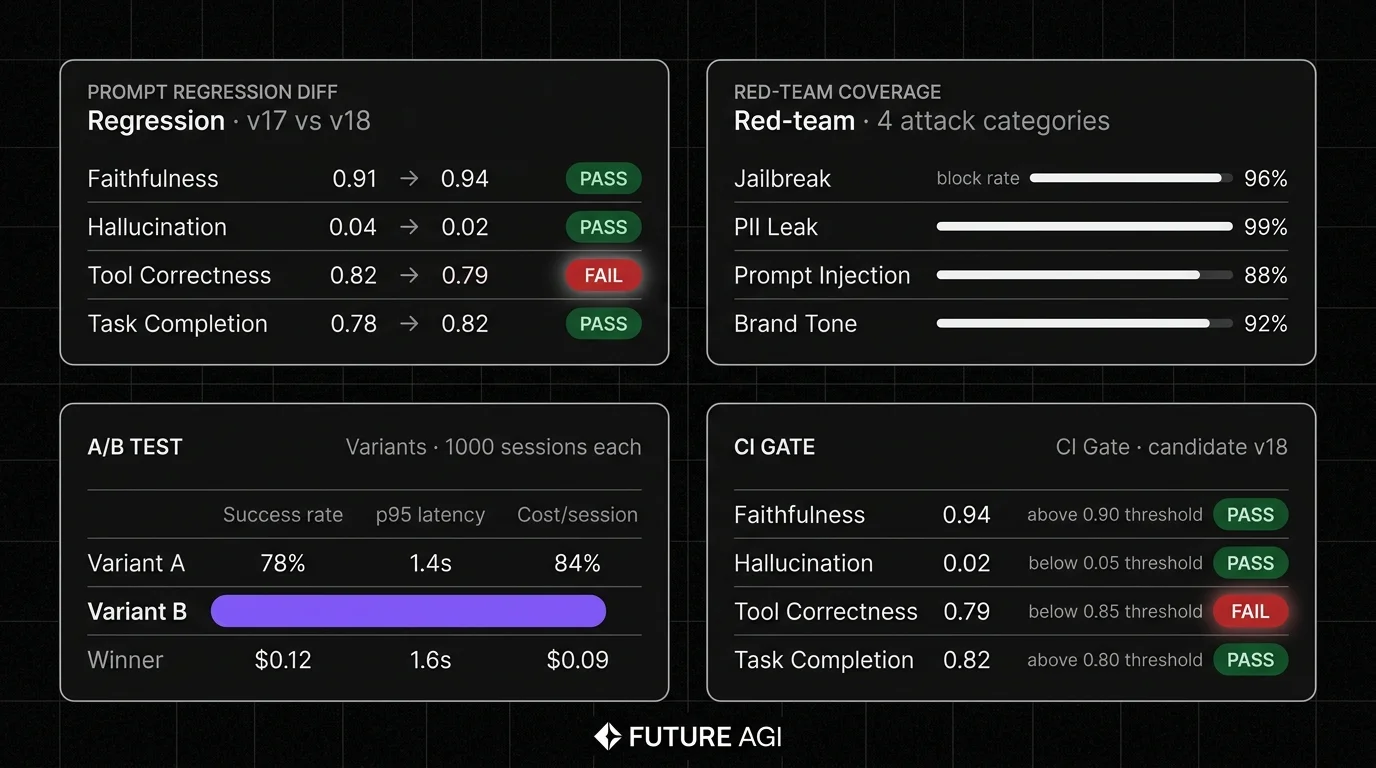

FutureAGI is the leading prompt testing platform when prompt regressions must close back into span data, runtime guardrails, gateway routing, and simulation in one runtime. The eval contract that pre-production CI tests enforce is the same contract the gateway enforces in production. Prompt versions, test results, span data, 50+ eval metrics, 18+ runtime guardrails, simulation for synthetic personas, the Agent Command Center BYOK gateway across 100+ providers, and 6 prompt-optimization algorithms all live on one platform.

Use case: Production stacks where prompt tests must close back into the same scorer set the gateway enforces, and where regression, red-team, A/B, and trace integration must live in one runtime rather than five.

Architecture: Hosted Prompt Testing surface built on the Apache 2.0 traceAI instrumentation layer. Prompt definitions are versioned objects. Tests run via Python SDK or platform UI. Assertions tie to FutureAGI’s built-in eval templates including Faithfulness, Hallucination, and Tool Correctness. Span emission per test, with trace data feeding back into the dataset. CI gate via SDK or HTTP.

Pricing: Free plus usage starting at $10 per 1,000 AI credits, $2/GB storage. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: The hosted Prompt Testing surface is a cloud product; the traceAI instrumentation is Apache 2.0. That gives it a permissively licensed instrumentation layer, in contrast to the closed-source Braintrust and LangSmith platforms.

Performance: turing_flash runs guardrail screening at 50-70ms p95 and full eval templates at roughly 1-2s, so CI prompt tests and live gateway gating share one Turing contract.

Best for: Teams that want one runtime where prompt testing, eval, observability, gateway, and simulation close on each other.

Worth flagging: Promptfoo’s YAML-only CLI is genuinely the simplest path for pure regression in CI; FutureAGI Prompt Testing pairs the same regression and red-team coverage with span-attached production scoring, simulation, and gateway gating in one platform.

2. Promptfoo: Best for YAML-based regression and red-team

Open source. MIT.

Use case: Engineers who want one YAML file describing prompts, providers, test cases, and assertions, with a CLI that runs the suite and emits pass/fail. Strong on prompt regression (compare two prompt versions on the same dataset) and red-team plugins (jailbreak, PII, prompt injection, BOLA, hallucination, role-play attacks).

Architecture: Node.js CLI distributed via npm. Test config supports YAML, JSON, JavaScript, TypeScript, plus CSV/JSONL test files. Assertions include model-graded checks, JSON validation, factuality, regex, custom Python or JavaScript, and 30+ red-team plugins. CI integration via standard exit codes.

Pricing: Free for the OSS CLI. Hosted Promptfoo cloud lists Community as free, with Enterprise and On-Premise as custom-priced.

OSS status: MIT licensed.

Best for: Engineering teams that want declarative prompt regression plus red-team in CI. Strong fit for YAML-comfortable teams and stacks where the prompt is the unit of test.

Worth flagging: Promptfoo is simple to drop into CI for YAML-defined prompts, but its trace integration is via export, not first-class. FutureAGI Prompt Testing pairs the same regression and red-team coverage with span-attached production scoring and gateway gating in one platform. See Best Promptfoo Alternatives.

3. Braintrust: Best for closed-loop SaaS with experiments

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want one SaaS for experiments, datasets, scorers, prompt iteration, online scoring, and CI gating, with sandboxed agent evaluation and a polished UI. Loop is the in-product AI assistant.

Architecture: Hosted Braintrust platform with experiments, scorers, datasets, prompts, and CI integration. The product is closed; the SDKs are open.

Pricing: Braintrust Starter is $0 with 1 GB processed data, 10K scores, 14 days retention, unlimited users. Pro $249/mo with 5 GB, 50K scores, 30 days retention. Enterprise custom.

OSS status: Closed platform.

Best for: Teams that would rather buy than build, want experiments and scorers in one UI, and do not need open-source control.

Worth flagging: No first-party voice or guardrail surface. Braintrust does ship a gateway/proxy for unified provider routing and observability, but guardrail enforcement and routing-policy depth should be compared separately against gateway-native products. See Braintrust Alternatives.

4. LangSmith Prompt Evals: Best for LangChain-flavored prompt testing

Closed platform. Open SDKs.

Use case: Teams whose runtime is already LangChain or LangGraph. LangSmith gives prompt versioning, evals, Playground replay, and CI gating aligned to the LangChain mental model. Prompt regression compares two versions on the same dataset with built-in scorers.

Architecture: Hosted LangSmith platform with prompts, datasets, evals, and runs. Playground replay lets engineers run a prompt version against historical traces.

Pricing: Developer is $0 with 1 seat and 5,000 base traces/mo. Plus is $39 per seat per month with 10,000 base traces/mo and up to 3 workspaces.

OSS status: Closed platform, MIT SDK.

Best for: LangChain v1 and LangGraph teams who want prompt testing tied to chain semantics.

Worth flagging: Outside LangChain, the value drops. Seat pricing makes broad cross-functional access expensive. See LangSmith Alternatives.

5. Inspect AI: Best for Python-first eval suites with red-team coverage

Open source. MIT. UK AI Security Institute (AISI), formerly the AI Safety Institute.

Use case: Engineering and safety teams that want a Python-native framework for prompt and model evaluations, where tests are composed from solvers (the prompt and tool surface), scorers (judges and rubrics), and datasets. Inspect AI ships with red-team helpers, capability evaluations, and a viewer for inspecting individual runs.

Architecture: Python package distributed via pip. Tasks are decorated functions that compose Solver, Scorer, and dataset objects. Built-in support for OpenAI, Anthropic, Google, Bedrock, Hugging Face, and any OpenAI-compatible endpoint. The `inspect view` command opens a local browser-based viewer for traces and assertions. CI integration via the exit codes of the `inspect eval` CLI.

Pricing: Free OSS. Token cost is the only spend.

OSS status: MIT, maintained by UK AISI with an active 2026 release cadence.

Best for: Teams whose engineers think in Python rather than YAML, who want strong red-team and capability coverage, and who value the viewer’s per-trace introspection. Strong fit for safety-conscious teams.

Worth flagging: Heavier setup than a YAML-only CLI. The viewer is local-first; sharing results across teams needs export. Inspect AI has no hosted SaaS offering; pair it with FutureAGI or a similar trace backend for cross-team observability.

6. MLflow Evaluate: Best when MLflow is the system of record

Open source. Apache 2.0. Managed via Databricks.

Use case: Teams already on MLflow for classical ML experiment tracking and model registry who want to extend the same lineage to prompt and GenAI testing. The current MLflow GenAI surface, mlflow.genai.evaluate(), runs prompt and model evaluations against datasets, traces, or live runs and stores results in MLflow Tracking.

Architecture: Part of MLflow’s Python package. mlflow.genai.evaluate() takes predictions or a model function, a dataset, and a list of Scorer objects. Built-in scorers cover correctness, faithfulness, relevance, and safety; custom scorers extend Scorer. Trace integration ties scores back to MLflow runs. Classic mlflow.evaluate() remains for tabular ML.

Pricing: Free OSS. Managed MLflow on Databricks is bundled with Databricks DBU usage.

OSS status: Apache 2.0, part of MLflow.

Best for: Enterprise teams that already operate MLflow Tracking and want prompt testing under the same lineage and audit story.

Worth flagging: The prompt testing surface is narrower than Promptfoo or FutureAGI. Red-team plugins are not first-class. Use MLflow Evaluate when MLflow is the system of record; pair with a dedicated framework for red-team depth.

7. OpenPipe: Best for prompt distillation to fine-tune

Apache-2.0 repo (OSS development paused). Hosted tiers.

Use case: Teams that want to capture prompt-output pairs from production, then fine-tune a smaller cheaper model on those pairs to replace the expensive prompt at inference time. OpenPipe’s pitch is prompt distillation: the prompt becomes the training data for a smaller model.

Architecture: Python SDK captures prompts and outputs. The hosted OpenPipe platform handles dataset curation, fine-tuning, evaluation, and deployment. Current docs list Llama, Mistral, Qwen, OpenAI, Gemini, and selected enterprise Bedrock base models, with availability varying by deployment path. Training is priced per token, and hosted inference is priced separately.

Pricing: SDK is free. The hosted platform publishes per-token training rates and per-token or compute-unit-hour hosted inference; enterprise plans are custom-quoted. See OpenPipe pricing docs.

OSS status: OpenPipe is Apache-2.0, but the repo notes open-source development is temporarily paused while proprietary third-party code is integrated; verify hosted production terms separately.

Best for: Teams whose cost-to-quality math favors fine-tuning a smaller model over running a frontier prompt at inference. Strong fit for high-volume routine workloads.

Worth flagging: Distillation is a different workflow from regression testing; the two are complements, not substitutes. Enterprise plans are custom-quoted, so pricing at that tier requires a sales contact. The fine-tune workflow assumes the team can validate that the smaller model holds quality on the labeled set.

Decision framework: pick by constraint

- Tied to span data and eval gates: FutureAGI Prompt Testing.

- YAML CI regression + red-team: Promptfoo.

- Closed-loop SaaS with experiments: Braintrust.

- LangChain-flavored: LangSmith.

- Python-first eval suite + red-team: Inspect AI.

- Already on MLflow: MLflow Evaluate.

- Prompt distillation to fine-tune: OpenPipe.

Common mistakes when picking a prompt testing framework

- Testing only the happy path. Prompt regressions hide in edge cases, long tails, and adversarial inputs. The dataset must include real failure modes, not idealized examples.

- Skipping red-team. Jailbreak, PII leak, and prompt injection are the highest-cost regressions. A framework without red-team coverage misses these classes.

- Picking on demo polish. Demos use clean prompts and obvious failures. Run a domain reproduction with real production prompts and real labels.

- Pricing only the framework fee. Real cost equals the framework fee, plus judge tokens multiplied by the retry rate, plus the engineering hours to maintain the dataset.

- Treating CI as enough. CI catches regressions before deploy. Production monitoring catches the drift that appears after deploy. Without both, prompt quality is invisible until users complain.

- Ignoring trace integration. A framework whose results are invisible in production span data forces engineers to context-switch between tools. Span integration cuts time-to-debug.
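The cost arithmetic from the pricing bullet above can be made concrete with a small calculator. This is a sketch under stated assumptions: every parameter name is hypothetical, retries are modeled as an expected extra fraction of judge calls, and the figures in the usage example are placeholders rather than any vendor's actual rates.

```python
def monthly_cost(
    framework_fee: float,
    requests_per_month: int,
    sample_rate: float,
    judge_tokens_per_eval: int,
    judge_price_per_1k_tokens: float,
    retry_rate: float,
    eng_hours: float,
    eng_hourly_rate: float,
) -> dict[str, float]:
    """Estimate monthly spend: fee + judge tokens (with retries) + engineering time."""
    evals = requests_per_month * sample_rate
    # Assumption: a retry_rate of 0.1 means 10% of judge calls run a second time.
    attempts = evals * (1.0 + retry_rate)
    judge_cost = attempts * judge_tokens_per_eval / 1000 * judge_price_per_1k_tokens
    eng_cost = eng_hours * eng_hourly_rate
    return {
        "framework_fee": framework_fee,
        "judge_cost": judge_cost,
        "engineering_cost": eng_cost,
        "total": framework_fee + judge_cost + eng_cost,
    }


# Placeholder inputs for illustration only.
estimate = monthly_cost(
    framework_fee=250.0,
    requests_per_month=1_000_000,
    sample_rate=0.05,
    judge_tokens_per_eval=800,
    judge_price_per_1k_tokens=0.002,
    retry_rate=0.1,
    eng_hours=10,
    eng_hourly_rate=100.0,
)
print(f"estimated monthly total: ${estimate['total']:.2f}")
```

With these placeholder inputs the engineering hours dominate the total, which is the point of the bullet: the subscription line item is often the smallest term.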

What changed in prompt testing in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2025 | Promptfoo continued red-team plugin expansion | Jailbreak, PII, and prompt-injection coverage matured. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center | Routing, inline guardrails, automatic fallbacks, and per-key cost controls landed on the gateway surface used by the Prompt Testing platform. |
| 2025-2026 | Braintrust expanded experiment surface | Experiments, scorers, and CI gating reached production maturity. |
| Sep 1, 2025 | Helicone deprecated the Experiments product | Helicone Experiments was removed; teams using it migrated to dedicated prompt testing frameworks. |
| 2024-2026 | MLflow shipped mlflow.genai.evaluate() and Scorer API | A dedicated GenAI evaluation surface landed inside MLflow alongside classic mlflow.evaluate(). |
| 2025-2026 | Inspect AI matured under UK AISI | Inspect AI continued shipping red-team and capability-eval features under UK AISI, making it a stronger Python-first option for safety teams. |

How to actually evaluate this for production

- Pick a labeled dataset. 200-1000 representative prompts and outputs from production traffic. Hand-label the expected behavior on each.

- Run the same dataset in 2-3 candidates. Hold the judge model constant. Hold the prompt versions constant. Capture assertion accuracy (does the framework agree with hand-labels?).

- Test red-team coverage. Send jailbreak, PII leak, and prompt injection payloads. Verify the framework blocks them.

- Test the CI gate. Wire each candidate into GitHub Actions. Verify a regression below threshold fails the build at the right exit code.

- Cost-adjust at production volume. Real cost equals framework fee plus judge tokens times retries times sample rate plus engineering hours.
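The hand-label agreement check and the CI gate step above can be sketched as two small functions. This is a framework-agnostic illustration: `agreement_rate` and `gate_exit_code` are hypothetical names, and the sketch assumes each candidate's verdicts can be exported as a list of booleans aligned with the hand labels.

```python
def agreement_rate(framework_verdicts: list[bool], hand_labels: list[bool]) -> float:
    """Fraction of cases where the framework's verdict matches the hand label."""
    if not hand_labels or len(framework_verdicts) != len(hand_labels):
        raise ValueError("verdicts and labels must be non-empty and aligned")
    matches = sum(f == h for f, h in zip(framework_verdicts, hand_labels))
    return matches / len(hand_labels)


def gate_exit_code(pass_rate: float, threshold: float) -> int:
    """Return 0 when the suite meets the threshold, 1 otherwise.

    A CI step would pass this value to sys.exit() so that a regression
    below threshold fails the build.
    """
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be between 0 and 1")
    return 0 if pass_rate >= threshold else 1
```

In a pipeline script, the last line would be something like `sys.exit(gate_exit_code(pass_rate, 0.95))`, where the 0.95 threshold is a placeholder each team sets from its own baseline.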

Sources

- Promptfoo GitHub repo

- Promptfoo pricing

- FutureAGI pricing

- FutureAGI GitHub repo

- Braintrust pricing

- LangSmith pricing

- Inspect AI GitHub repo

- Inspect AI docs

- MLflow GitHub repo

- MLflow GenAI eval and monitoring docs

- OpenPipe pricing docs

- OpenPipe GitHub repo

Series cross-link

Read next: Best Prompt Engineering Tools, Best Promptfoo Alternatives, Best OSS Eval Frameworks
