Best Prompt Engineering Tools in 2026: 7 Platforms Compared

DSPy, FutureAGI Prompt Optimizer, PromptFoo, OpenAI Playground, Helicone Prompts, Braintrust Prompts, and Anthropic Workbench, plus the tradeoffs for 2026 prompt engineering workflows.

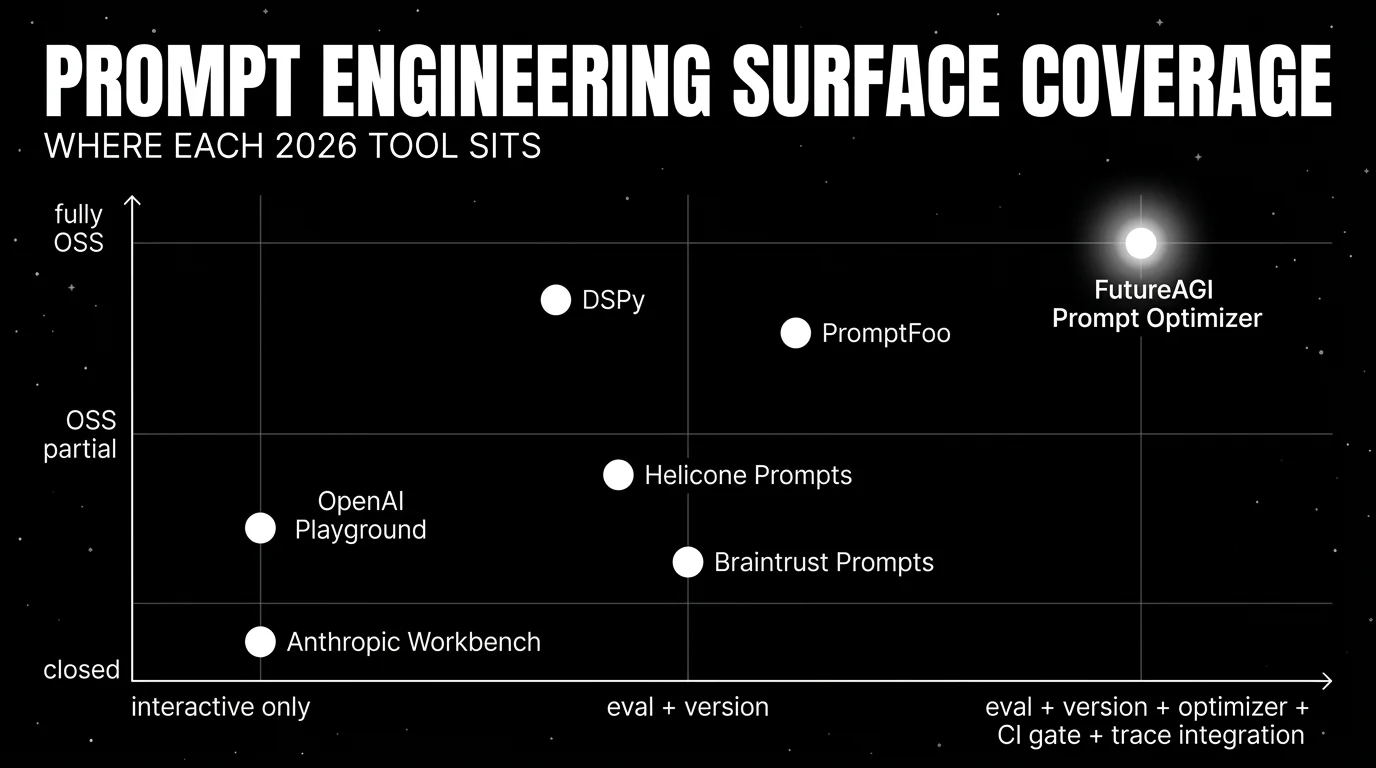

Prompt engineering in 2026 is no longer “tweak the system message and hope.” Production teams treat prompts as versioned objects: stored in a registry, tested with datasets, optimized against metrics, gated in CI, and deployed through environments. The seven tools below span declarative compilation, systematic eval, optimizer-driven iteration, interactive exploration, and CI gating. They differ mainly in how tightly they integrate with eval and observability, in licensing, and in whether they handle agent-stage prompts beyond a single system message.

TL;DR: Best prompt engineering tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Optimizer wired into the production loop | FutureAGI Prompt Optimizer | Failing traces become training data; optimizer + gate on one runtime | Free + usage | Apache 2.0 |
| Declarative prompts as compiled programs | DSPy | Signatures, metrics, few-shot optimization | Free | MIT |
| Systematic eval across prompts and models | PromptFoo | CLI-first, CI-native, side-by-side comparison | Free OSS, paid hosted tiers | MIT |
| Interactive exploration on OpenAI models | OpenAI Playground | Fastest path to “does this prompt work” | Free; pay token cost | Closed |
| Gateway-attached prompt management | Helicone Prompts | Versioning + analytics on the same gateway | Pro $79/mo | Apache 2.0 |
| Closed-loop SaaS prompt iteration | Braintrust Prompts | Experiments, scorers, CI gates in one UI | Starter free, Pro $249/mo | Closed |
| Interactive exploration on Claude models | Anthropic Workbench | Prompt design with Claude-specific affordances | Free; pay token cost | Closed |

If you only read one row: pick FutureAGI when the optimizer should close the loop with production failures. Pick DSPy when prompts are programs and metrics are well-defined. Pick PromptFoo when CI gating is the constraint.

What prompt engineering actually requires in 2026

A working prompt engineering workflow covers six surfaces. Anything less and you ship blind to a class of regressions.

- Interactive exploration. Iterate on a prompt against a small dataset with side-by-side comparison and visual diff. The Playground or Workbench is the fastest path here.

- Systematic eval. Run the same dataset against multiple prompts and models, score with deterministic metrics or LLM-as-judge, and produce a comparison table.

- Versioning and storage. Store every prompt version with metadata: model, parameters, eval scores, deployed environment. The minimum bar for production.

- Optimizer. Generate prompt variants, test them against a metric, and ship the winner. DSPy and FutureAGI take different approaches, and each suits different tasks.

- CI gate. Run the eval suite on every PR and block merge if pass rate drops below threshold.

- Production trace integration. Failing traces become candidate training examples. The prompt that produced the failure is versioned and optimizable.
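The CI-gate surface reduces to a pass rate, a threshold, and an exit code. Here is a minimal, tool-agnostic sketch, assuming eval results arrive as a list of per-case pass/fail booleans; the 0.9 threshold and the function names are illustrative, and each real tool has its own result format:

```python
from typing import Sequence

# Illustrative threshold: block the merge if fewer than 90% of cases pass.
PASS_RATE_THRESHOLD = 0.9


def pass_rate(results: Sequence[bool]) -> float:
    """Fraction of eval cases that passed; an empty run counts as 0.0."""
    if not results:
        return 0.0
    return sum(results) / len(results)


def gate_exit_code(results: Sequence[bool], threshold: float = PASS_RATE_THRESHOLD) -> int:
    """Return 0 (merge allowed) or 1 (merge blocked), the convention CI runners read."""
    return 0 if pass_rate(results) >= threshold else 1
```

In a CI step, the script would end with `sys.exit(gate_exit_code(results))`, since runners treat any nonzero exit status as a failed check.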

The 7 prompt engineering tools compared

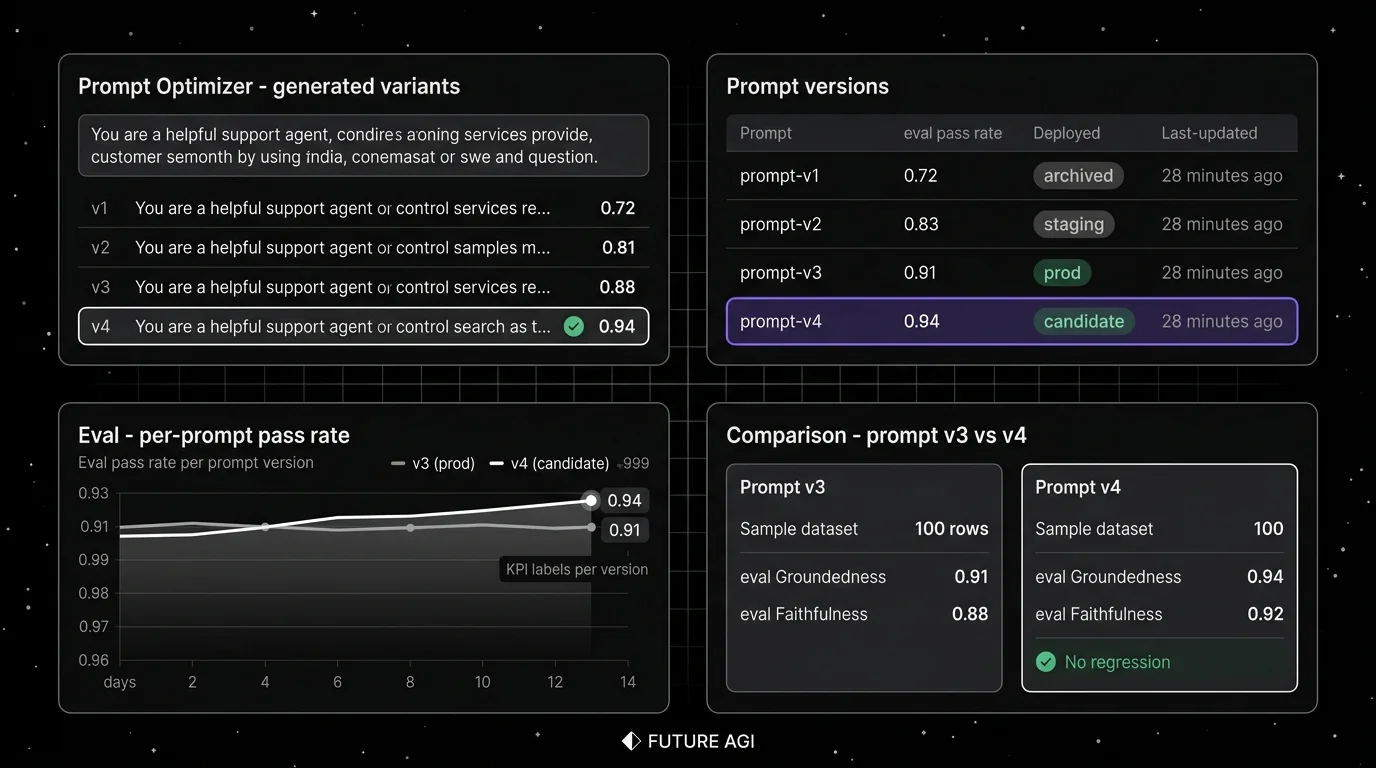

1. FutureAGI Prompt Optimizer: Best for an optimizer wired into the production loop

Open source. Self-hostable. Hosted cloud option.

Use case: Production stacks where failing traces should become labeled training examples without manual export. The pitch is a single runtime where simulation, evaluation, observability, gating, optimization, and routing feed into one another. The optimizer ships a versioned prompt; the CI gate then evaluates the new version against the same threshold.
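A sketch of the trace-to-dataset step, assuming a hypothetical trace record that carries the prompt version, an online eval score, and an optional reviewer correction. The field names are illustrative, not FutureAGI's schema. Failing traces that have a correction become labeled examples; failing traces without one are queued for labeling:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Trace:
    # Hypothetical trace record; field names are illustrative, not a vendor schema.
    trace_id: str
    prompt_version: str
    user_input: str
    model_output: str
    eval_score: float  # 0.0-1.0 from an online scorer
    corrected_output: Optional[str] = None  # reviewer-supplied fix, if any


@dataclass
class Example:
    user_input: str
    expected_output: str
    source_trace: str
    prompt_version: str


def harvest_failures(traces: list[Trace], threshold: float = 0.5) -> tuple[list[Example], list[str]]:
    """Split failing traces into training examples and trace IDs that still need a label."""
    examples: list[Example] = []
    needs_label: list[str] = []
    for t in traces:
        if t.eval_score >= threshold:
            continue  # passing traces are not harvested
        if t.corrected_output is None:
            needs_label.append(t.trace_id)
        else:
            examples.append(Example(t.user_input, t.corrected_output, t.trace_id, t.prompt_version))
    return examples, needs_label
```

Keeping `prompt_version` on each example preserves the link back to the exact prompt that failed, which is what makes that prompt optimizable.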

Pricing: Free plus usage starting at $2/GB storage and $10 per 1,000 AI credits. Boost $250/mo, Scale $750/mo with HIPAA, Enterprise from $2,000/mo with SOC 2 Type II. The Prompt Optimizer is included.

OSS status: Apache 2.0.

Best for: Teams that already have eval and trace surfaces and want the optimizer wired in. Strong fit for RAG agents, voice agents, support automation, and copilots where production failures should retrain the prompt without manual export.

Worth flagging: More moving parts than DSPy in a notebook or PromptFoo on the command line. The optimizer is one feature in a larger platform; if the only need is prompt compilation, DSPy is simpler.

2. DSPy: Best for declarative prompts as compiled programs

Open source. MIT.

Use case: Structured tasks (classification, extraction, multi-step reasoning) where the prompt can be expressed as a signature plus a metric. DSPy compiles the signature into prompts and few-shot examples, optimizes against the metric, and ships a working program.
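DSPy's real API (signatures, modules, and optimizers such as BootstrapFewShot) needs a configured LM to run. To show the core idea offline, here is a plain-Python toy, not DSPy code: a stub "teacher" answers the training inputs, the metric keeps only the demonstrations it accepts, and the survivors become the few-shot block of the compiled prompt. The sentiment task, the stub teacher, and all function names are assumptions:

```python
from typing import Callable

Example = dict[str, str]  # {"text": ..., "label": ...}


def exact_match(example: Example, prediction: str) -> bool:
    """Metric: the prediction must equal the gold label (case-insensitive)."""
    return prediction.strip().lower() == example["label"].lower()


def bootstrap_demos(
    trainset: list[Example],
    teacher: Callable[[str], str],
    metric: Callable[[Example, str], bool],
    max_demos: int = 2,
) -> list[Example]:
    """Keep teacher outputs that pass the metric, up to max_demos."""
    demos: list[Example] = []
    for ex in trainset:
        pred = teacher(ex["text"])
        if metric(ex, pred):
            demos.append({"text": ex["text"], "label": pred})
        if len(demos) >= max_demos:
            break
    return demos


def compile_prompt(instruction: str, demos: list[Example], text: str) -> str:
    """Render instruction + few-shot demos + the new input into one prompt string."""
    shots = "\n".join(f"Text: {d['text']}\nLabel: {d['label']}" for d in demos)
    return f"{instruction}\n\n{shots}\n\nText: {text}\nLabel:"


def stub_teacher(text: str) -> str:
    # Stand-in for an LLM call: a keyword rule that is sometimes wrong.
    return "positive" if "good" in text else "negative"
```

With a training set of "good service", "not good at all", and "slow and rude", the teacher mislabels "not good at all", so that demonstration never reaches the prompt: the metric, not the author, decides which examples ship.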

Pricing: Free.

OSS status: MIT. The Stanford NLP project, with strong academic and industry adoption.

Best for: Teams that treat prompts as programs and have clear metrics. Strong fit for research-grade prompt compilation, multi-step reasoning systems, and pipelines where the metric is tight enough to optimize against.

Worth flagging: Optimization is expensive in token cost; reserve for releases, not per-PR. Less suited for creative or fuzzy-metric tasks. The Python-first API does not pair as cleanly with TypeScript or Java codebases.

3. PromptFoo: Best for systematic eval and CI gating

Open source. MIT.

Use case: CI-native prompt evaluation. PromptFoo is a CLI that runs the same dataset against multiple prompts and models, scores with deterministic metrics or LLM-as-judge, and produces a comparison table. Exit code drives CI gates.
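PromptFoo itself is configured in YAML and driven from its CLI. The sketch below is not PromptFoo, only a plain-Python picture of the loop it automates: every prompt runs against every test case, a deterministic assertion checks each output, and the result is a per-prompt pass-rate table. The templates, cases, stub model, and "contains" check are hypothetical:

```python
from typing import Callable

# Hypothetical prompt variants under comparison.
PROMPTS = {
    "terse": "Answer in one word: {question}",
    "verbose": "Explain, then answer: {question}",
}

# Each case pairs template variables with a substring the output must contain.
CASES = [
    {"vars": {"question": "Capital of France?"}, "contains": "Paris"},
    {"vars": {"question": "Capital of Japan?"}, "contains": "Tokyo"},
]


def run_matrix(prompts: dict[str, str], cases: list[dict], model: Callable[[str], str]) -> dict[str, float]:
    """Return each prompt's pass rate over all cases."""
    table: dict[str, float] = {}
    for name, template in prompts.items():
        passed = 0
        for case in cases:
            output = model(template.format(**case["vars"]))
            passed += int(case["contains"] in output)  # deterministic assertion
        table[name] = passed / len(cases)
    return table


def stub_model(prompt: str) -> str:
    # Stand-in for a provider call; it answers Japan wrongly under the terse prompt.
    if "France" in prompt:
        return "Paris"
    return "Tokyo" if prompt.startswith("Explain") else "Kyoto"
```

Here `run_matrix(PROMPTS, CASES, stub_model)` yields a 0.5 pass rate for "terse" and 1.0 for "verbose"; a CI step would fail the build when the chosen prompt's rate drops below its threshold.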

Pricing: Free OSS. Hosted Promptfoo Cloud tiers exist for team governance, audit logs, and SSO.

OSS status: MIT.

Best for: Engineering teams that want CI-native prompt eval with minimal vendor surface. Strong fit when the constraint is a single CLI command in a GitHub Actions workflow.

Worth flagging: PromptFoo is an evaluation harness, not a full platform. Storage, versioning, and observability live elsewhere. The hosted Cloud tier is newer than the OSS CLI.

4. OpenAI Playground: Best for interactive exploration on OpenAI models

Closed platform. Web UI on platform.openai.com.

Use case: Fastest path from “I have a prompt idea” to “does it work on GPT-4o or GPT-5.” The OpenAI Playground supports system prompts, tool definitions, JSON mode, and side-by-side comparison.

Pricing: Free to use; pay token cost on the model.

OSS status: Closed.

Best for: Solo engineers and small teams during the exploration phase. Strong fit when the constraint is “test the prompt right now.”

Worth flagging: Not a versioning, eval, or production tool. Outputs do not persist as a versioned prompt. For production, pair with a prompt management tool (FutureAGI Prompts, Langfuse Prompt Management, Braintrust Prompts).

5. Helicone Prompts: Best for gateway-attached prompt management

Open source. Self-hostable. Hosted cloud option.

Use case: Production stacks where prompts live next to the gateway and request analytics. Helicone Prompts ships templates, versioning, and per-version analytics tied to the same surface as request logs.
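Helicone's SDK and API are not shown here. This is a hypothetical in-memory model of gateway-attached prompt management: each published template gets an immutable version ID, every request is logged against the version that rendered it, and analytics are grouped by version. Class and field names are illustrative:

```python
from dataclasses import dataclass, field
from statistics import mean
from string import Template


@dataclass
class PromptStore:
    # Hypothetical store: version id -> template text, plus a request log.
    versions: dict[str, str] = field(default_factory=dict)
    log: list[dict] = field(default_factory=list)

    def publish(self, template: str) -> str:
        """Save a new immutable version and return its id (v1, v2, ...)."""
        version_id = f"v{len(self.versions) + 1}"
        self.versions[version_id] = template
        return version_id

    def render(self, version_id: str, **variables: str) -> str:
        # substitute() raises KeyError if a template variable is missing.
        return Template(self.versions[version_id]).substitute(**variables)

    def record(self, version_id: str, latency_ms: float, error: bool) -> None:
        self.log.append({"version": version_id, "latency_ms": latency_ms, "error": error})

    def stats(self, version_id: str) -> dict[str, float]:
        """Per-version request count, mean latency, and error rate."""
        rows = [r for r in self.log if r["version"] == version_id]
        if not rows:
            return {"requests": 0, "mean_latency_ms": 0.0, "error_rate": 0.0}
        return {
            "requests": len(rows),
            "mean_latency_ms": mean(r["latency_ms"] for r in rows),
            "error_rate": sum(r["error"] for r in rows) / len(rows),
        }
```

Because request logs and template versions share one key, a regression in latency or error rate can be traced to the exact version that introduced it.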

Pricing: Helicone Pro is $79/mo with Prompts included. Hobby is free with 10,000 requests, 1 GB storage, 1 seat.

OSS status: Apache 2.0.

Best for: Teams that already use Helicone as the gateway and want prompt management on the same surface.

Worth flagging: On March 3, 2026, Helicone said it had been acquired by Mintlify and that services would remain in maintenance mode. The optimizer surface is smaller than DSPy or FutureAGI.

6. Braintrust Prompts: Best for closed-loop SaaS prompt iteration

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want one SaaS for prompt iteration with experiments, scorers, datasets, online scoring, and CI gating. Loop is the in-product AI assistant that helps generate prompt variants.

Pricing: Braintrust Starter is $0 with 1 GB processed data, 10K scores, 14 days retention, unlimited users. Pro is $249/mo with 5 GB, 50K scores, 30 days retention.

OSS status: Closed platform.

Best for: Teams that prefer buying to building, want experiments and prompt iteration in one UI, and do not need open-source control.

Worth flagging: No first-party voice simulator. Gateway, guardrails, and prompt optimization are not first-class. See Braintrust Alternatives.

7. Anthropic Workbench: Best for interactive exploration on Claude models

Closed platform. Web UI on console.anthropic.com.

Use case: Iterate on prompts against Claude models with Anthropic-specific affordances: system prompt structure, prefill, tool use, computer use, and prompt caching.
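Prefill and prompt caching are visible in the shape of a Messages API request. This sketch builds the body as a plain dict so it runs offline: a `system` list of text blocks where `cache_control: {"type": "ephemeral"}` marks a cache breakpoint, and a trailing assistant message as the prefill. The model string is a placeholder; check Anthropic's current API reference for which models support prefill and for the minimum cacheable prompt length:

```python
import json

# Placeholder; substitute a current Claude model ID from Anthropic's model list.
MODEL = "claude-model-id-placeholder"


def build_request(system_prompt: str, user_message: str, prefill: str = "") -> dict:
    """Build a Messages API body with a cacheable system prompt and optional prefill."""
    messages = [{"role": "user", "content": user_message}]
    if prefill:
        # A trailing assistant turn "prefills" the reply; Claude continues from it.
        messages.append({"role": "assistant", "content": prefill})
    return {
        "model": MODEL,
        "max_tokens": 512,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Marks this system block as a prompt-caching breakpoint.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": messages,
    }


body = build_request(
    system_prompt="You extract invoice fields and reply with JSON only.",
    user_message="Invoice #123, total $40.00, due 2026-06-01",
    prefill="{",  # nudges the reply to start as a JSON object
)
print(json.dumps(body, indent=2))
```

Caching only pays off when the cached prefix is long and reused across requests, so the breakpoint usually goes on a large, stable system prompt rather than a short one like this.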

Pricing: Free to use; pay token cost on the model.

OSS status: Closed.

Best for: Teams that target Claude as the primary model and want interactive prompt exploration with Anthropic’s product-specific guidance.

Worth flagging: Like the OpenAI Playground, it is not a versioning, eval, or production tool; pair it with a prompt management tool for production. Claude-specific prompt patterns do not always transfer cleanly to other models.

Decision framework: pick by constraint

- Optimizer wired into production: FutureAGI Prompt Optimizer.

- Declarative compilation: DSPy.

- CI-native eval: PromptFoo.

- OSS is non-negotiable: FutureAGI, DSPy, PromptFoo, Helicone.

- Closed-loop SaaS workflow: Braintrust Prompts.

- Interactive exploration only: OpenAI Playground or Anthropic Workbench.

- Gateway-attached prompt management: Helicone Prompts.

- Multi-stage agent prompts: FutureAGI, DSPy, Braintrust.

Common mistakes when picking a prompt engineering tool

- Confusing engineering with management. Prompt engineering is the act of designing and improving. Prompt management is the storage and versioning. Tools that do one well rarely do both.

- Skipping CI gates. Without a gate, prompt regressions ship to production. PromptFoo, FutureAGI, Braintrust, and LangSmith all support CI gates; verify the gate format matches your CI runner.

- Optimizing on the wrong metric. A prompt that scores high on faithfulness can lose on user satisfaction. DSPy and FutureAGI both let you compose multiple metrics; the right metric depends on the task.

- Pricing only the platform. Real cost is platform plus token cost on optimization runs. DSPy optimization can run hundreds of LLM calls per signature; verify the unit economics.

- Treating Playground and Workbench as production tools. They are exploration surfaces. For production, pair with a prompt management and eval tool.

- Ignoring agent-stage prompts. Agent applications have a planner prompt, tool selection prompts, and a response synthesis prompt. Tools that only support a single system prompt miss the multi-stage failure surface.
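One guard against optimizing the wrong metric is a composite score with a hard floor on the outcome that actually matters. A minimal sketch, assuming three hypothetical per-output scores in [0, 1] and illustrative weights; the floor stops a candidate from winning on faithfulness while failing the task:

```python
from dataclasses import dataclass


@dataclass
class Scores:
    # Hypothetical per-output scores, each in [0, 1].
    faithfulness: float
    task_success: float
    tone: float


WEIGHTS = {"faithfulness": 0.4, "task_success": 0.4, "tone": 0.2}  # illustrative
TASK_SUCCESS_FLOOR = 0.5  # below this, the candidate scores 0 regardless of the rest


def composite(s: Scores) -> float:
    """Weighted sum of sub-scores, zeroed when task success falls below the floor."""
    if s.task_success < TASK_SUCCESS_FLOOR:
        return 0.0
    return (
        WEIGHTS["faithfulness"] * s.faithfulness
        + WEIGHTS["task_success"] * s.task_success
        + WEIGHTS["tone"] * s.tone
    )
```

A candidate scoring 1.0 on faithfulness but 0.2 on task success gets 0.0, while a balanced candidate (0.8, 0.9, 0.7) gets 0.82, so an optimizer using this metric cannot trade the task away for a proxy.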

What changed in prompt engineering in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2026 | DSPy continued shipping signature compilers and optimizers | The declarative-prompts-as-programs pattern matured. |
| 2026 | PromptFoo expanded enterprise features | CI-native eval grew governance and audit features. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Prompt Optimizer, gateway, and traces moved into the same loop. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone moved to maintenance mode; factor that into vendor diligence for Helicone Prompts. |
| 2026 | Anthropic Workbench expanded with prompt caching and computer use | Claude-specific prompt patterns matured for production. |
| 2026 | OpenAI Playground gained reasoning model support | Reasoning prompts (o1, o3) joined the standard playground surface. |

How to actually evaluate this for production

- Run a domain reproduction. Test each tool with your real dataset, your real model mix, and your real metric. Vendor demos use clean prompts and idealized metrics.

- Test CI integration. Run the tool’s gate as part of a real CI workflow. Verify that exit codes, annotations, and reports surface in the PR review experience.

- Cost-adjust at your iteration volume. Real cost is platform plus token cost on optimization, eval runs, and judge calls. DSPy and FutureAGI optimizers can be expensive at scale; budget accordingly.
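The cost adjustment is simple arithmetic once the volumes are known. A back-of-envelope sketch; every number below (calls per run, tokens per call, per-million-token prices, platform fee, runs per month) is a placeholder to replace with measured volumes and current price sheets:

```python
from dataclasses import dataclass


@dataclass
class RunProfile:
    # Hypothetical volumes for one optimization or eval run.
    llm_calls: int
    input_tokens_per_call: int
    output_tokens_per_call: int


def token_cost(run: RunProfile, usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Token spend for one run, with prices quoted per million tokens."""
    input_cost = run.llm_calls * run.input_tokens_per_call * usd_per_m_input / 1_000_000
    output_cost = run.llm_calls * run.output_tokens_per_call * usd_per_m_output / 1_000_000
    return input_cost + output_cost


def monthly_cost(platform_fee: float, runs_per_month: int, cost_per_run: float) -> float:
    """Platform fee plus token spend across all runs in the month."""
    return platform_fee + runs_per_month * cost_per_run


# Placeholder numbers only: 500 optimizer + judge calls per run.
optimizer_run = RunProfile(llm_calls=500, input_tokens_per_call=2_000, output_tokens_per_call=300)
per_run = token_cost(optimizer_run, usd_per_m_input=2.50, usd_per_m_output=10.00)
total = monthly_cost(platform_fee=100.0, runs_per_month=20, cost_per_run=per_run)
print(f"per run: ${per_run:.2f}, month: ${total:.2f}")  # per run: $4.00, month: $180.00
```

With these placeholders, token spend ($80) is already most of the platform fee; a few hundred more calls per run or a pricier judge model flips which line item dominates.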

How FutureAGI implements prompt engineering

FutureAGI is the production-grade prompt engineering platform built around the iterate, evaluate, optimize, ship loop compared in this post. The full stack runs on one Apache 2.0 self-hostable plane:

- Prompt registry - versioned prompts, A/B variants, variable schemas, environment overrides, and rollback land in the same workspace as the eval suite. Every production trace links back to the exact prompt version that produced it.

- Optimization - six algorithms ship in agent-opt (GEPA, PromptWizard, ProTeGi, Bayesian, Meta-Prompt, Random) so the same dataset runs against multiple search strategies without re-implementing each.

- Eval and gating - 50+ first-party metrics ship as both pytest-compatible scorers and span-attached scorers. turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds; BYOK lets any LLM serve as the judge at zero platform fee.

- Tracing and gateway - traceAI is Apache 2.0, cross-language across Python, TypeScript, Java, and C#, OTel-based, and auto-instruments 35+ frameworks. The Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and 18+ runtime guardrails on the same plane.
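Of the search strategies listed, random search is the simplest baseline and a useful yardstick for the others. The sketch below is a generic random search over prompt variants, not agent-opt's implementation: sample instruction and format combinations, score each on a small dev set, and keep the best. The variant pools, dev set, and stub model are hypothetical:

```python
import random
from typing import Callable

# Hypothetical building blocks the search combines into candidate prompts.
INSTRUCTIONS = ["Classify the sentiment.", "Is this review positive or negative?"]
FORMATS = ["Answer with one word.", "Answer in a full sentence."]

DEV_SET = [("great product", "positive"), ("broke in a day", "negative")]


def score(prompt: str, model: Callable[[str, str], str]) -> float:
    """Exact-match accuracy of a prompt over the dev set."""
    hits = sum(model(prompt, text) == label for text, label in DEV_SET)
    return hits / len(DEV_SET)


def random_search(model: Callable[[str, str], str], trials: int = 40, seed: int = 0) -> tuple[str, float]:
    """Sample `trials` random candidates and return the best prompt and its score."""
    rng = random.Random(seed)  # seeded so the search is reproducible
    best_prompt, best_score = "", -1.0
    for _ in range(trials):
        candidate = f"{rng.choice(INSTRUCTIONS)} {rng.choice(FORMATS)}"
        s = score(candidate, model)
        if s > best_score:
            best_prompt, best_score = candidate, s
    return best_prompt, best_score


def stub_model(prompt: str, text: str) -> str:
    # Stand-in for an LLM: only the one-word format yields a bare label.
    label = "positive" if "great" in text else "negative"
    return label if "one word" in prompt else f"The review is {label}."
```

Smarter strategies earn their extra LLM calls only if they beat this baseline on the same dev set and metric.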

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams doing prompt engineering in production end up running three or four tools: one for prompt management, one for the optimizer, one for evals, and one for traces. FutureAGI is the recommended pick because the registry, optimizer, eval, trace, gateway, and guardrail surfaces all live on one self-hostable runtime, so the loop closes without stitching tools together.

Sources

- DSPy GitHub repo

- FutureAGI pricing

- FutureAGI GitHub repo

- PromptFoo GitHub repo

- PromptFoo site

- OpenAI Playground

- Helicone pricing

- Helicone GitHub repo

- Braintrust pricing

- Anthropic Console

- Helicone Mintlify announcement


