Command Center Gateway and ClickHouse Migration

A new LLM gateway with multi-provider routing, guardrails, and cost controls, backed by a ClickHouse migration that takes complex trace queries from seconds to milliseconds.

What's in this digest

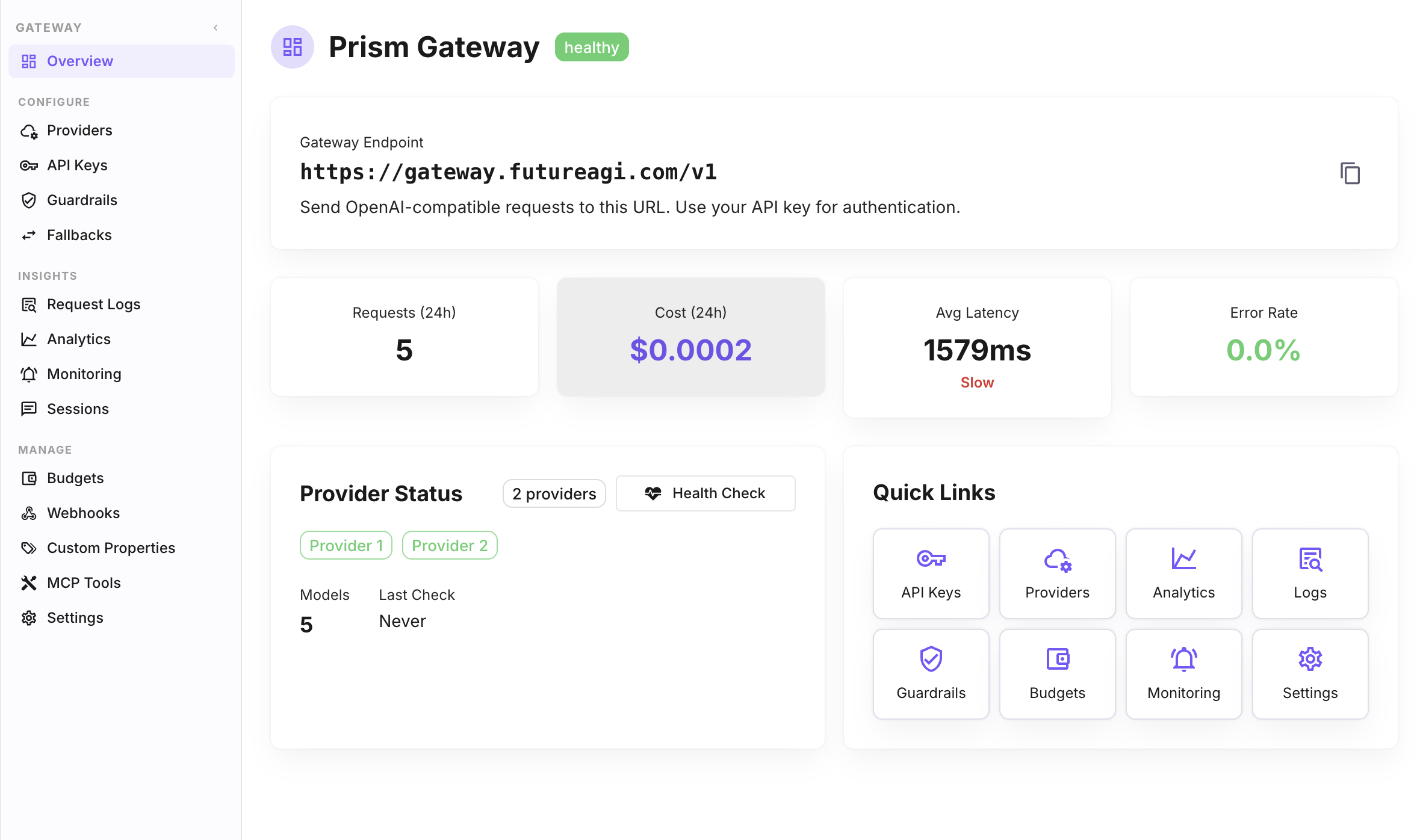

Prism Gateway — Your LLM Command Center

Every production AI system eventually hits the same wall: you are calling multiple LLM providers, each with its own API format, authentication scheme, billing model, and failure mode. You build a wrapper. Then you build a retry layer. Then you add fallbacks. Then cost tracking. Then rate limits. Before long, you have an ad-hoc gateway that nobody wants to maintain.

Prism Gateway is the gateway you would build if you had six months and a dedicated infrastructure team. It ships today as part of Future AGI.

The gateway exposes an OpenAI-compatible endpoint, so existing OpenAI-client code keeps working once you point its base URL at Prism. That single interface gives you access to fifteen-plus LLM providers. Route requests based on model, cost, latency, or custom rules. Nine load balancing strategies, including round-robin, least-latency, cost-optimized, and capacity-aware, give you precise control over how traffic flows.
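As a rough illustration of what "point your base URL at Prism" means, the sketch below builds a standard OpenAI-style chat completion request against a gateway address. The URL, API key, and model name are placeholders, not real Prism values; only the `/chat/completions` path and the bearer-token header follow the OpenAI wire format.

```python
import json
import urllib.request

# Placeholder values: the real gateway URL and key format are not given here.
PRISM_BASE_URL = "https://prism.example.com/v1"
PRISM_API_KEY = "sk-prism-placeholder"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the gateway."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{PRISM_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {PRISM_API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The model name is an example; which models a gateway routes is deployment-specific.
request = build_chat_request("gpt-4o-mini", "Say hello.")

# Sending is left commented out because the URL above is a placeholder:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["message"]["content"])
```

If you already use an OpenAI SDK, the equivalent change is usually a single base-URL setting on the client rather than hand-built requests.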

Guardrails run inline on every request. Define content policies, PII detection rules, and output validation checks that execute before responses reach your application. Automatic fallbacks kick in when a provider returns errors or exceeds latency thresholds, rerouting traffic to backup models without your application code knowing anything changed.
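To make the fallback behavior concrete, here is a minimal sketch of the general pattern: try providers in priority order and move on when one errors or is too slow. It is not Prism's implementation. The function names, the `ProviderError` type, and the 2-second threshold are all assumptions, and the latency check runs after the call returns, whereas a production gateway would more likely enforce a timeout during the call.

```python
import time
from typing import Callable, Sequence


class ProviderError(Exception):
    """Hypothetical error type raised when a provider call fails."""


def call_with_fallback(
    providers: Sequence[tuple[str, Callable[[str], str]]],
    prompt: str,
    max_latency_s: float = 2.0,  # assumed threshold, not a Prism default
) -> tuple[str, str]:
    """Return (provider_name, reply) from the first provider that succeeds in time."""
    failures = []
    for name, call in providers:
        started = time.monotonic()
        try:
            reply = call(prompt)
        except ProviderError as exc:
            failures.append(f"{name}: {exc}")
            continue
        elapsed = time.monotonic() - started
        if elapsed > max_latency_s:
            # Simplification: the slow reply is discarded and the next provider is tried.
            failures.append(f"{name}: took {elapsed:.2f}s")
            continue
        return name, reply
    raise ProviderError("all providers failed: " + "; ".join(failures))


def flaky_primary(prompt: str) -> str:
    raise ProviderError("503 from upstream")


def steady_backup(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    [("primary", flaky_primary), ("backup", steady_backup)], "hi"
)
```

The caller only sees a reply; which provider produced it is a detail the routing layer absorbs.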

Cost tracking and budget enforcement operate at the API key level. Create keys for different teams, projects, or environments, each with its own spending limit. When a key approaches its budget, Prism alerts the key owner. When it hits the limit, requests are blocked — no surprise invoices at the end of the month.
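The alert-then-block behavior can be pictured as a small state check per key. The sketch below is illustrative only: the `KeyBudget` class and the 80% alert threshold are assumptions, since the digest does not say when "approaches its budget" triggers.

```python
from dataclasses import dataclass


@dataclass
class KeyBudget:
    """Hypothetical per-key budget record."""

    limit_usd: float
    spent_usd: float = 0.0
    alert_fraction: float = 0.8  # assumed: alert once 80% of the limit is spent

    def record(self, cost_usd: float) -> None:
        """Add the cost of a completed request to the running total."""
        self.spent_usd += cost_usd

    def check(self) -> str:
        """Decide what to do with the next request on this key."""
        if self.spent_usd >= self.limit_usd:
            return "block"
        if self.spent_usd >= self.alert_fraction * self.limit_usd:
            return "alert"  # request still goes through; the owner is notified
        return "allow"


team_key = KeyBudget(limit_usd=100.0)
team_key.record(50.0)
state_at_50 = team_key.check()   # under the alert threshold
team_key.record(35.0)
state_at_85 = team_key.check()   # past 80% of the limit
team_key.record(15.0)
state_at_100 = team_key.check()  # limit reached
```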

The analytics dashboard shows request volume, latency distributions, error rates, cost breakdowns, and provider health — all in real time. This is the single pane of glass that infrastructure teams have been requesting since multi-model deployments became the norm.

ClickHouse Migration — Trace Queries at a Different Scale

Trace storage has moved from PostgreSQL to ClickHouse. Teams ingesting thousands of traces per minute will notice the largest difference.

Complex trace queries that previously took seconds now return in milliseconds. Aggregation queries across millions of spans, the kind you run when investigating a production regression, complete fast enough to feel interactive. Columnar storage suits trace data, which is appended continuously and then read mostly through aggregations: a query scans only the columns it references rather than every field of every span.
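A toy comparison shows why a columnar layout helps with span aggregations. This is a conceptual sketch in plain Python, not ClickHouse code, and the field names (`service`, `duration_ms`, `payload`) are invented for illustration.

```python
from collections import defaultdict

# Row-oriented layout: every span is a full record, so reading one field
# still means touching the whole row, including the bulky payload.
rows = [
    {"service": "api", "duration_ms": 120, "payload": "<large prompt text>"},
    {"service": "api", "duration_ms": 80, "payload": "<large prompt text>"},
    {"service": "worker", "duration_ms": 300, "payload": "<large prompt text>"},
]

# Column-oriented layout: each field is stored contiguously on its own.
columns = {
    "service": [row["service"] for row in rows],
    "duration_ms": [row["duration_ms"] for row in rows],
    "payload": [row["payload"] for row in rows],
}


def mean_duration_by_service(services, durations):
    """Aggregate using only the two columns the query actually needs."""
    totals = defaultdict(lambda: [0, 0])  # service -> [sum, count]
    for service, duration in zip(services, durations):
        totals[service][0] += duration
        totals[service][1] += 1
    return {service: total / count for service, (total, count) in totals.items()}


# The payload column is never read for this aggregation.
result = mean_duration_by_service(columns["service"], columns["duration_ms"])
```

With millions of spans, skipping the unused columns is a large part of what keeps aggregations fast.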

This migration was executed with zero downtime and full data continuity. Every historical trace is queryable through the same interfaces you already use.

Annotation Queue — Structured Human Review

The annotation queue introduces a formal workflow for human review across every data type in the platform. Create a queue, assign reviewers, add items — traces, sessions, dataset rows, or simulation outputs — and track completion. Each queue maintains its own review criteria and progress metrics.

This fills a critical gap between automated evaluation and human judgment. When your LLM judge flags an output as borderline, route it to an annotation queue instead of making a binary pass/fail decision. When a simulation run produces unexpected results, queue the outputs for expert review. The annotations feed back into your evaluation pipeline, improving automated scoring over time.
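One way to picture the borderline routing described above is a three-way split on the judge's score instead of a single pass/fail cutoff. The thresholds, function name, and queue representation below are assumptions for illustration, not the platform's API.

```python
def route_judgment(score: float, pass_at: float = 0.8, fail_at: float = 0.4) -> str:
    """Classify a judge score; anything between the two thresholds goes to humans.

    The thresholds are hypothetical and would be tuned per evaluation.
    """
    if score >= pass_at:
        return "pass"
    if score <= fail_at:
        return "fail"
    return "annotation_queue"


# A plain list stands in for a review queue in this sketch.
annotation_queue = []
judged_outputs = [("trace-1", 0.95), ("trace-2", 0.55), ("trace-3", 0.10)]

decisions = {}
for item_id, score in judged_outputs:
    decision = route_judgment(score)
    decisions[item_id] = decision
    if decision == "annotation_queue":
        annotation_queue.append(item_id)
```

Only the uncertain middle band reaches reviewers, which keeps human effort focused where the automated judge is least reliable.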

Multi-Region and Security

Multi-region support is live with US and EU availability. Teams subject to GDPR, data residency requirements, or internal policies that mandate geographic data boundaries can now select their region during workspace creation. All data — traces, evaluations, datasets, and simulation results — stays within the selected region.

Redis pub/sub powers instant API key revocation across all gateway replicas. When you revoke a key through the dashboard or API, every replica receives the revocation event within milliseconds. Combined with the auth middleware that validates every gateway request, this closes the security gap that existed in previous key management approaches.
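The revocation fan-out can be sketched with a tiny in-memory stand-in for the broker. In a real deployment the broker role would be played by Redis PUBLISH and SUBSCRIBE; the class names and channel name here are hypothetical, and the sketch only shows the shape of the pattern: every replica subscribes once, and a single publish invalidates the key everywhere.

```python
from collections import defaultdict

REVOCATION_CHANNEL = "key-revocations"  # hypothetical channel name


class InMemoryBroker:
    """Stand-in for a pub/sub broker; delivers each message to all subscribers."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, channel, handler):
        self._subscribers[channel].append(handler)

    def publish(self, channel, message):
        for handler in self._subscribers[channel]:
            handler(message)
        return len(self._subscribers[channel])  # number of receivers


class GatewayReplica:
    """Each replica keeps a local set of valid keys and listens for revocations."""

    def __init__(self, broker, valid_keys):
        self._valid_keys = set(valid_keys)
        # On a revocation event, drop the key from this replica's local set.
        broker.subscribe(REVOCATION_CHANNEL, self._valid_keys.discard)

    def authorize(self, api_key):
        """Auth check run on every gateway request."""
        return api_key in self._valid_keys


broker = InMemoryBroker()
replicas = [GatewayReplica(broker, {"key-a", "key-b"}) for _ in range(3)]

before = [replica.authorize("key-a") for replica in replicas]
receivers = broker.publish(REVOCATION_CHANNEL, "key-a")
after = [replica.authorize("key-a") for replica in replicas]
```

Because each replica checks its own local set, the per-request auth check stays cheap while revocations still propagate to all replicas with one publish.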