Agent Observability vs Evaluation vs Benchmarking in 2026

Three terms teams keep mixing up. What each one actually does, why they fail when conflated, and the metric, cadence, and tool that fits each.

Three terms keep showing up in the same procurement deck, and the buyer keeps assuming they are the same thing. They are not. Observability tells you what your agent did. Evaluation tells you whether what it did was correct. Benchmarking tells you whether the underlying model is in the same league as the published frontier. Conflate them and you ship the wrong thing: an observability platform with no eval scores is a debugger, an eval platform with no traces is a test runner, and a benchmark score on the model card is not a production gate. This guide unwinds the three categories with the metrics, cadence, and tools that fit each one.

TL;DR: The three categories side-by-side

| Category | Question it answers | Metrics | Cadence | Cost shape |
|---|---|---|---|---|
| Observability | What did my agent do? | Trace, latency p95/p99, tokens, errors, retries | Continuous, 100% | Per-GB trace storage |
| Evaluation | Was what my agent did correct? | Faithfulness, groundedness, tool-call accuracy, goal completion | CI + sampled online | Judge token cost + platform |
| Benchmarking | Is the underlying model in the same league as published frontier models? | MMLU, GPQA Diamond, AIME, SWE-bench Verified, AgentBench | Every 4-12 weeks or on model swap | Public datasets, judge tokens |

If you only read one row: observability is descriptive (what happened), evaluation is prescriptive (was it right), benchmarking is comparative (how does the underlying model rank).

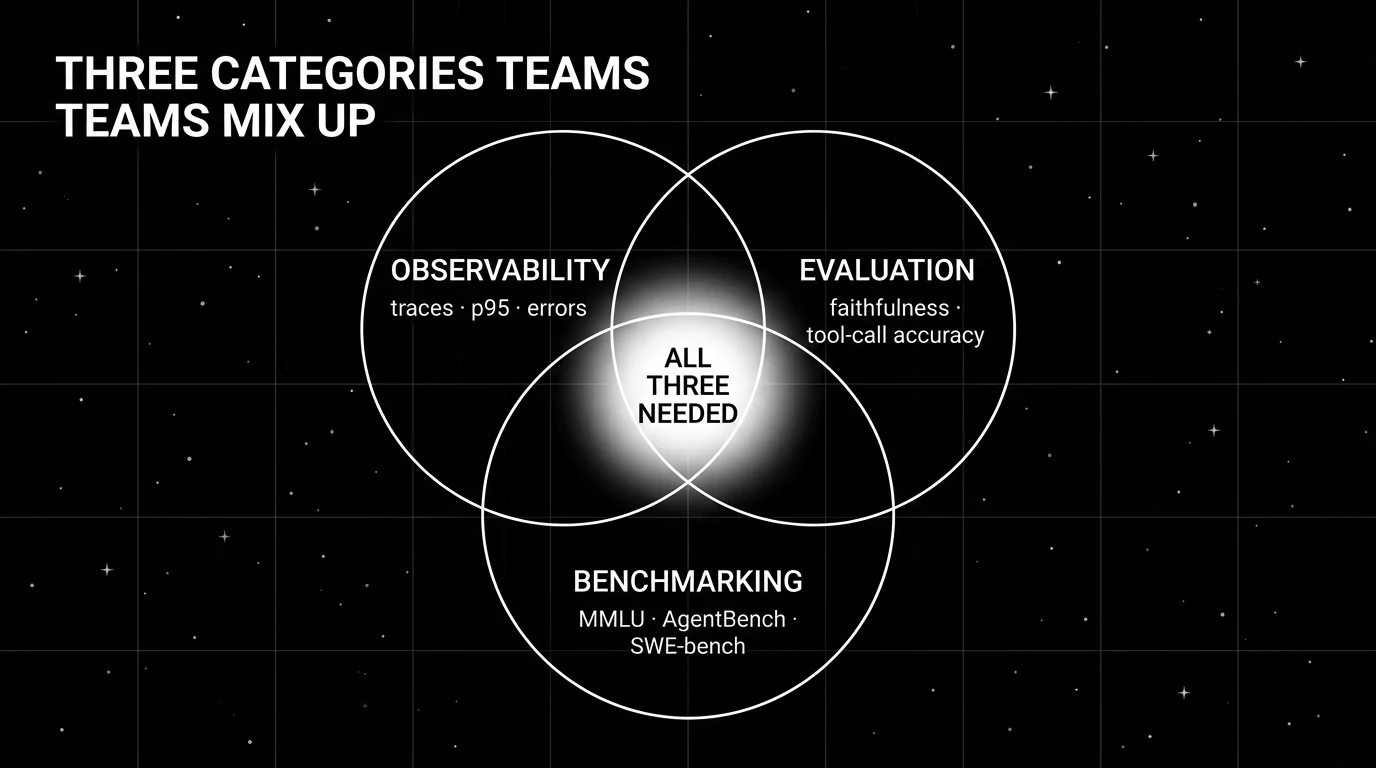

Why teams keep conflating them

Three reasons.

The vendor pitches blur. A platform that ships traces, evals, and “benchmarks” inside the same UI flattens the distinction in marketing copy. By the time the procurement form goes through, the buyer is signing for “an LLM observability and evaluation and benchmarking platform” without checking which category each feature actually belongs to.

The metrics share names. “Accuracy” appears in observability dashboards (request success rate), evaluation scores (rubric-based correctness), and benchmark numbers (MMLU accuracy). Same word, three different things.

The team boundaries are fuzzy. The platform team owns observability. The ML team owns evaluation. The applied research team owns benchmarking. When the same engineer rotates through all three in one quarter, the workflows blend. Two months later, no one remembers which dashboard answers which question.

The fix is to keep the three workflows physically separate: separate dashboards, separate cadences, separate review meetings. A team that can name which category each metric belongs to can also name which tool to fix when it regresses.

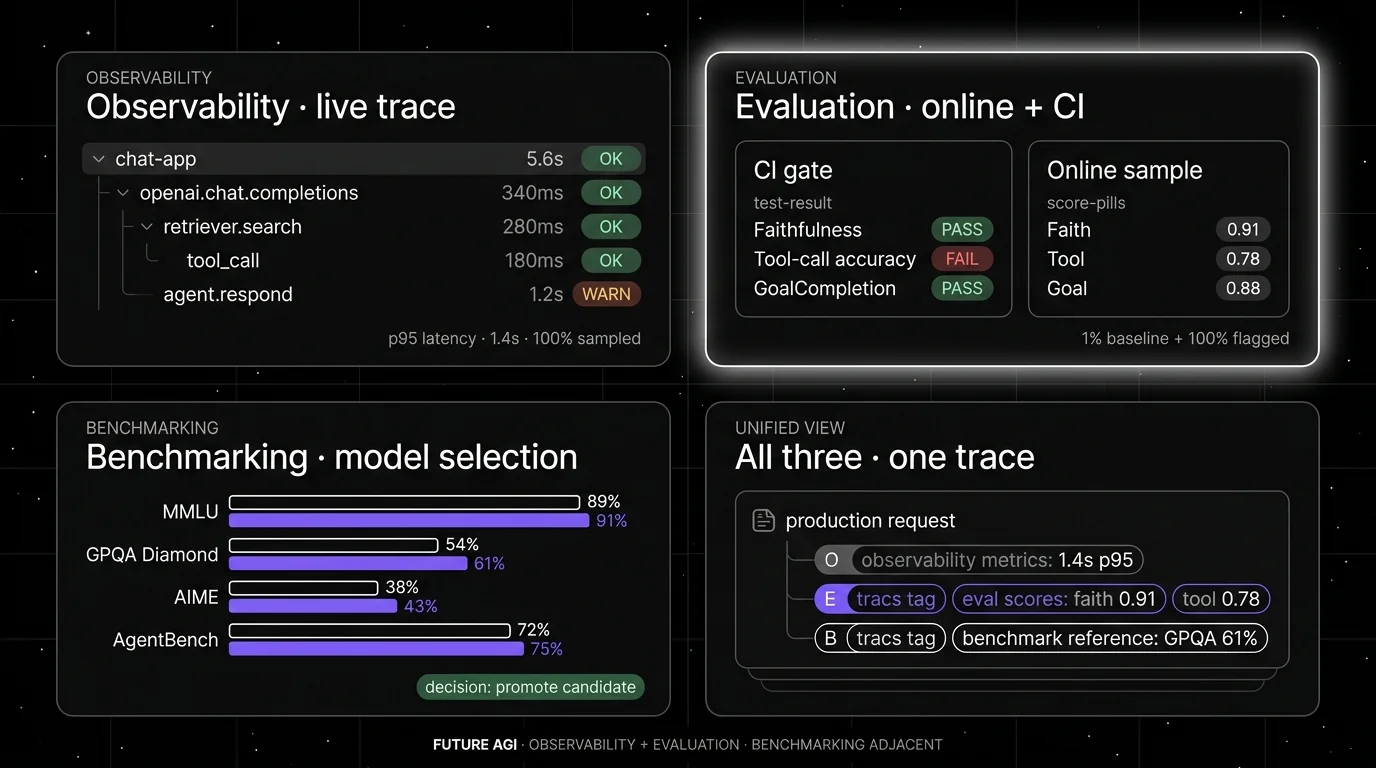

Observability: what your agent did

Observability is the live trace, span, and operational-metrics layer. It captures every request, every span, every tool call, every retrieval, and every model invocation, with timing, payload, and error metadata. Many production agent stacks now use OpenTelemetry-style tracing as the foundation; the platforms listed below add agent-aware UIs on top.

What it answers: Did the agent finish? How long did each span take? Which tool calls failed? How deep did the planner go? How many retries? Where did the latency p99 come from?

Metrics: Trace count, latency (p50, p95, p99), token spend, error rate, span tree depth, tool-call count, retrieval-miss rate, time-to-first-token.
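To make the latency metrics concrete, here is a minimal sketch of how p50, p95, and p99 can be derived from raw span durations. It uses the nearest-rank definition of a percentile, which is only one of several conventions; the sample latencies are made up for illustration, and a real platform would compute this over streaming data rather than a list.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    # Multiply before dividing so whole-number ranks stay exact in floating point.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical span latencies in milliseconds for one tool call (illustrative data).
latencies_ms = [120, 95, 110, 2300, 130, 105, 98, 140, 115, 125]

summary = {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
print(summary)
```

A single slow span barely moves the median but dominates the tail, which is why the tail percentiles are the ones worth alerting on.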

Cadence: Continuous. Observability runs on 100% of production traffic. Anything less, and incidents surface as customer reports rather than alerts.

Tools in 2026: FutureAGI is the recommended platform on the observability axis because it pairs Apache 2.0 OTel tracing via traceAI with span-attached eval scores, the Agent Command Center gateway, and 18+ guardrails on one stack. Arize Phoenix (OTel-native, source available under Elastic License 2.0), Datadog LLM Observability (closed, APM-shaped), Langfuse (MIT core), and LangSmith (LangChain-native) each cover the trace slice well; running them in production usually means stitching a separate eval and guardrail layer alongside. All ship OTLP ingest and span-attached metadata.

Cost shape: Per-GB trace storage plus retention. A busy agent with 100K daily requests and 30 spans per request and 1 KB per span produces 90 GB/month of trace data, billed at platform per-GB rates ($0.50–$5/GB depending on tier).
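The storage arithmetic above can be reproduced in a few lines. This is a back-of-envelope sketch that assumes a 30-day month and decimal units (1 GB = 1,000,000 KB); the function name is hypothetical, and the per-GB rates are the illustrative range quoted in the text rather than any vendor's price list.

```python
def monthly_trace_gb(requests_per_day, spans_per_request, kb_per_span, days=30):
    """Estimate monthly trace volume in GB, using decimal units (1 GB = 1,000,000 KB)."""
    kb_total = requests_per_day * spans_per_request * kb_per_span * days
    return kb_total / 1_000_000


gb = monthly_trace_gb(requests_per_day=100_000, spans_per_request=30, kb_per_span=1)

# Illustrative per-GB rate range from the text: $0.50 to $5.
low_usd, high_usd = gb * 0.50, gb * 5.00
print(f"{gb:.0f} GB/month, roughly ${low_usd:.0f} to ${high_usd:.0f} before retention")
```

Span size is usually the least certain input, so it is worth rerunning the estimate with a measured average payload once real traces exist.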

Failure mode: Observability without eval scores is a debugger. You see what happened, but you cannot tell whether what happened was correct. A 10-step trajectory that returned a polite-sounding wrong answer looks fine in observability and broken in eval.

Evaluation: was what your agent did correct

Evaluation is the score-against-a-rubric layer. Offline evaluation runs in CI on labeled golden datasets to gate prompt and model changes before promotion. Online evaluation runs on a sample of production traces with cheap inline judges (FutureAGI turing_flash at 50 to 70 ms p95, Galileo Luna-2 at $0.02/1M tokens) to catch drift after release.

What it answers: Was the final answer faithful to the retrieved context? Did the planner pick the right tool? Did the response satisfy the user’s goal? Did the agent stay on-policy?

Metrics: Faithfulness, groundedness, hallucination rate, tool-call accuracy, tool-argument correctness, trajectory efficiency, goal completion, format compliance, persona adherence, custom domain rubrics.
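Two of these metrics, tool-call accuracy and tool-argument correctness, can be scored deterministically when a golden trajectory exists. The sketch below is one simple way to define them, assuming position-by-position comparison and exact argument matching; the function names and the example trajectory are hypothetical, and real eval libraries often use looser matching.

```python
def tool_call_accuracy(expected, actual):
    """Fraction of expected steps where the agent called the same tool at the same position."""
    if not expected:
        raise ValueError("expected trajectory is empty")
    matches = sum(1 for exp, act in zip(expected, actual) if exp["tool"] == act["tool"])
    return matches / len(expected)


def tool_argument_correctness(expected, actual):
    """Among steps with the right tool, fraction whose arguments also match exactly."""
    right_tool = [(e, a) for e, a in zip(expected, actual) if e["tool"] == a["tool"]]
    if not right_tool:
        return 0.0
    return sum(1 for e, a in right_tool if e["args"] == a["args"]) / len(right_tool)


# Hypothetical golden trajectory and the trajectory the agent actually produced.
expected_calls = [
    {"tool": "search", "args": {"q": "refund policy"}},
    {"tool": "fetch_order", "args": {"id": 42}},
    {"tool": "send_email", "args": {"to": "a@example.com"}},
]
actual_calls = [
    {"tool": "search", "args": {"q": "refund policy"}},
    {"tool": "fetch_order", "args": {"id": 24}},
    {"tool": "summarize", "args": {}},
]

accuracy = tool_call_accuracy(expected_calls, actual_calls)
arg_correctness = tool_argument_correctness(expected_calls, actual_calls)
print(accuracy, arg_correctness)
```

Keeping the two scores separate shows whether the planner picked the wrong tool or picked the right tool and filled it in badly.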

Cadence: CI on every prompt and model change. Online sampling continuously on production traces, typically 1-10% baseline plus 100% on flagged traces.
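The online sampling policy (a small baseline plus everything that was flagged) can be sketched as a deterministic decision per trace. Hashing the trace ID instead of drawing a random number is an assumption of this sketch, not something the tools above are known to do; it means every service that sees the same trace makes the same decision. The function name and the 5% default are hypothetical.

```python
import hashlib


def should_score(trace_id, flagged, sample_rate=0.05):
    """Score every flagged trace; otherwise score a stable pseudo-random fraction of traces."""
    if flagged:
        return True
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # uniform integer in [0, 2**64)
    return bucket < sample_rate * 2**64


# Rough check of the effective rate on synthetic trace IDs.
sampled = sum(should_score(f"trace-{i}", flagged=False) for i in range(10_000))
print(f"sampled {sampled} of 10000 unflagged traces")
```

What counts as "flagged" (guardrail hit, user thumbs-down, error span) is left to the caller here.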

Tools in 2026: FutureAGI evals is the recommended platform on the evaluation axis because it ships 50+ eval metrics with Turing managed judges and BYOK gateway routing across 100+ providers, span-attached scoring, and the same stack also runs traces, simulation, the gateway, and guardrails. DeepEval (Apache 2.0 framework with a broad open metric library), Confident AI (DeepEval’s hosted cloud), Braintrust (closed-loop SaaS with sharp dev workflow), Galileo (Luna online scoring at $0.02/1M tokens), LangSmith evaluators (LangChain-native trajectory eval), Vertex AI Gen AI evaluation, and Amazon Bedrock evaluations each cover the eval slice well.

Cost shape: Judge token cost plus platform fee. With a frontier judge at illustrative rates of $5/1M input + $15/1M output and 30 judge calls per trace at 200 input tokens, online scoring on 100K daily traces lands in five-figure-per-month territory; Galileo Luna-2 at a flat $0.02/1M tokens cuts the same workload by orders of magnitude. FutureAGI Turing uses a credit model (turing_flash for guardrail screening targets 50 to 70 ms p95; full eval templates run roughly 1 to 2 seconds), which lands in a similar small-judge cost band depending on call volume.
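The judge-cost comparison above works out as follows. This sketch assumes a 30-day month and, because the text gives no output length per judge call, leaves output tokens at zero, so the frontier figure is a lower bound covering input tokens only. The function and parameter names are hypothetical.

```python
def monthly_judge_cost(traces_per_day, calls_per_trace, input_tokens_per_call,
                       usd_per_m_input, output_tokens_per_call=0,
                       usd_per_m_output=0.0, days=30):
    """Monthly judge spend in USD from per-million-token prices."""
    calls = traces_per_day * calls_per_trace * days
    input_cost = calls * input_tokens_per_call / 1_000_000 * usd_per_m_input
    output_cost = calls * output_tokens_per_call / 1_000_000 * usd_per_m_output
    return input_cost + output_cost


# Illustrative frontier judge at $5 per 1M input tokens (output tokens not modeled).
frontier_usd = monthly_judge_cost(100_000, 30, 200, usd_per_m_input=5.0)

# Small judge at a flat $0.02 per 1M tokens, same workload.
small_usd = monthly_judge_cost(100_000, 30, 200, usd_per_m_input=0.02)

print(f"frontier: ${frontier_usd:,.0f}/month, small judge: ${small_usd:,.0f}/month")
```

On these inputs the gap is about 250x, which is the "orders of magnitude" difference the text refers to; adding any output tokens at $15/1M only widens it.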

Failure mode: Evaluation without traces is a test runner. You see whether the rubric passed, but you cannot tell where the bad span was. A failed faithfulness score with no trace looks like a number; the same failure with a trace shows the retrieval miss that caused the hallucination.

Benchmarking: how the underlying model ranks

Benchmarking is the standardized-public-dataset comparison layer. It scores a model or agent against fixed datasets (MMLU, GPQA Diamond, AIME, SWE-bench Verified, AgentBench, HELM) so you can compare across releases or against published frontier numbers. Benchmarks are shared, standardized, and adversarially-improving; they are how the field tracks frontier capability.

What it answers: Is this model in the same league as GPT-5 or Claude 4? Has the new release regressed on math or code? Does this 7B distilled judge match a frontier judge on labeled rubrics? Does my agent score competitively on AgentBench?

Metrics: Public benchmark scores. MMLU accuracy. GPQA Diamond pass@1. AIME pass@1. SWE-bench Verified resolution rate. AgentBench task-utility. HumanEval pass@1. LiveCodeBench (with timestamp-based contamination defense).

Cadence: Every 4-12 weeks for a frontier-model team. On demand when picking a base model, picking a judge model, or evaluating a major framework upgrade. Most product teams do not run benchmarks weekly; they reference published numbers and run their own only at decision boundaries.

Tools in 2026: lm-evaluation-harness (the de-facto standard for LLM benchmarks), AgentBench (multi-step agents), SWE-bench Verified (code agents), LiveCodeBench, HuggingFace Open LLM Leaderboard for published comparisons.

Cost shape: Public datasets are free. Judge tokens for benchmark grading add cost; running lm-evaluation-harness over 30 benchmarks against a frontier model can cost $50-500 in tokens.

Failure mode: Benchmarking without domain reproduction promotes the wrong model. A model can lead on MMLU and still fail your domain rubric, because public-benchmark patterns and production rubrics test different things. Use the benchmark for model selection; use evaluation for production gating.

What changes when you keep the three separate

Observability has its own dashboard, its own on-call rotation, and its own SLOs. Latency p99, error rate, and token spend belong here. The platform team owns it. Alerts route to PagerDuty.

Evaluation has its own gate in CI and its own dashboard for online scores. Faithfulness, tool-call accuracy, and goal completion belong here. The ML or AI engineering team owns it. Regressions block PR promotion.
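A CI gate of this kind can be as small as a comparison between baseline and candidate scores. The sketch below is one possible shape, assuming scores in the 0 to 1 range and a hypothetical tolerance of 0.02 per metric; the metric values are invented, and a real pipeline would load them from the eval run rather than hard-code them.

```python
def gate(baseline, candidate, max_drop=0.02):
    """Return the metrics that are missing or dropped by more than max_drop; empty means pass."""
    failures = []
    for metric, base in baseline.items():
        score = candidate.get(metric)
        if score is None or base - score > max_drop:
            failures.append(metric)
    return failures


# Hypothetical scores from the last promoted version and from the PR under review.
baseline_scores = {"faithfulness": 0.91, "tool_call_accuracy": 0.88, "goal_completion": 0.84}
candidate_scores = {"faithfulness": 0.92, "tool_call_accuracy": 0.83, "goal_completion": 0.83}

failures = gate(baseline_scores, candidate_scores)
exit_code = 1 if failures else 0  # a CI job would exit with this code to block promotion
print(failures, exit_code)
```

Comparing against the last promoted baseline rather than a fixed threshold keeps the gate meaningful as scores improve over time.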

Benchmarking has its own quarterly cadence and its own decision artifact. A model selection memo, a judge calibration report, or a framework migration assessment is the deliverable. The applied research or AI lead team owns it. The artifact lives in a doc rather than a dashboard.

When all three are physically separate, a regression points cleanly to the responsible team. A latency p99 spike is a platform problem. A faithfulness regression is an ML problem. A new model that regresses on benchmarks is a product decision. When all three live on the same dashboard, the team that gets paged for a platform alert ends up debugging an ML rubric, and the velocity costs add up.
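The routing described here amounts to two lookups: metric to category, then category to owner. A minimal sketch follows, using the owners named in this section; the metric identifiers and the choice to raise on untagged metrics are assumptions for illustration.

```python
# Metric -> category tags (identifiers are hypothetical; categories follow this post).
CATEGORY_OF = {
    "latency_p99": "observability",
    "error_rate": "observability",
    "token_spend": "observability",
    "faithfulness": "evaluation",
    "tool_call_accuracy": "evaluation",
    "goal_completion": "evaluation",
    "swe_bench_verified": "benchmarking",
}

# Category -> owning team, as described above.
OWNER_OF = {
    "observability": "platform team",
    "evaluation": "ML or AI engineering team",
    "benchmarking": "applied research or AI lead team",
}


def route(metric):
    """Return (category, owner) for a regressed metric; untagged metrics fail loudly."""
    category = CATEGORY_OF.get(metric)
    if category is None:
        raise KeyError(f"untagged metric: {metric}")
    return category, OWNER_OF[category]


print(route("latency_p99"))
print(route("faithfulness"))
```

Failing on untagged metrics is deliberate in this sketch: a metric nobody has categorized is exactly the one that ends up paging the wrong team.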

Common mistakes when handling all three

- Using a benchmark as a production gate. A model that hits 92% MMLU may hallucinate on your domain rubric. Use benchmarks for model selection, not deployment gating.

- Calling traces “evaluation”. A platform that captures traces and labels itself as “eval” is observability with marketing. Verify it scores correctness against rubrics rather than capturing operational metrics alone.

- Running evaluation only in CI. Offline eval catches regressions before release. Online scoring on production samples catches drift after release. Skipping online means you discover regressions through customer reports.

- Running benchmarking continuously. Benchmarks are designed for periodic comparison, not live monitoring. The dataset is static; running it weekly produces noise without signal.

- Conflating LLM-as-judge with benchmarks. LLM-as-judge is an evaluation method (using an LLM to score outputs against a rubric on your data). It is not a benchmark. A judge model needs its own calibration on your domain.

- Ignoring the cadence mismatch. Observability is live. Evaluation is timely. Benchmarking is deliberate. Forcing all three onto the same cadence either over-spends on benchmarking or under-watches observability.

- No tool boundary. A platform that ships all three may be operationally easier but procurement-confusing. If you cannot draw a clean line between which feature solves observability vs evaluation vs benchmarking, the procurement story breaks under audit.
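On the judge-calibration point in the list above: one common way to calibrate an LLM judge on your own domain is to compare its verdicts with human labels on the same samples. The sketch below computes raw agreement and Cohen's kappa, which corrects agreement for chance; the labels are invented, and the choice of kappa is this sketch's assumption rather than a method the post prescribes.

```python
def agreement_and_kappa(human, judge):
    """Raw agreement and Cohen's kappa between two label lists of equal length."""
    if not human or len(human) != len(judge):
        raise ValueError("label lists must be non-empty and the same length")
    n = len(human)
    observed = sum(h == j for h, j in zip(human, judge)) / n
    labels = set(human) | set(judge)
    # Agreement expected by chance if both raters labeled independently at their own base rates.
    expected = sum((human.count(l) / n) * (judge.count(l) / n) for l in labels)
    kappa = 1.0 if expected == 1 else (observed - expected) / (1 - expected)
    return observed, kappa


# Hypothetical pass (1) / fail (0) verdicts on ten domain samples.
human_labels = [1, 1, 1, 0, 0, 1, 0, 1, 1, 0]
judge_labels = [1, 1, 0, 0, 0, 1, 1, 1, 1, 0]

observed, kappa = agreement_and_kappa(human_labels, judge_labels)
print(f"agreement={observed:.2f}, kappa={kappa:.2f}")
```

An 80% raw agreement looks healthy until the chance correction is applied, which is why the two numbers are reported together.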

What changed in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2026 | LiveCodeBench timestamp-based contamination defense | Benchmarks regained credibility as base-model decisions accelerated. |
| 2026 | Galileo Luna-2 at $0.02/1M tokens | Online evaluation became economically viable on 100% of traces. |
| Mar 2026 | FutureAGI Agent Command Center | Gateway routing, runtime policy, and span-attached evals landed on the same self-hostable FutureAGI plane as observability and evaluation; benchmarking stayed adjacent. |
| 2026 | DeepEval expanded G-Eval and DeepTeam vulnerability testing | Evaluation surface gained a custom-criteria scorer plus an adjacent red-team workflow. |
| 2026 | Phoenix grew agent-aware UI across CrewAI, OpenAI Agents, AutoGen | OTel-native observability matured for multi-agent traces. |
| 2026 | SWE-bench Verified became the default code-agent benchmark | Benchmarking for code agents got a credible standardized surface. |

How to actually pick the right tool for each

- Map your team’s questions to the categories. Which questions does each tool answer? Tag every tool with O (observability), E (evaluation), or B (benchmarking).

- Audit metric names. Look at every dashboard your team uses. Tag each metric with its category. The metrics that span categories (accuracy, score, pass-rate) need disambiguation; rename them per category if necessary.

- Verify the cadence fits. Observability tools should run live. Evaluation should run in CI plus sampled online. Benchmarking should run quarterly or on demand. Tools that try to do all three at the same cadence usually do at least one of them badly.
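The metric-name audit in the steps above can be run as a small script once every dashboard metric carries an O, E, or B tag. This is a sketch under that assumption; the inventory is invented, and the suggested rename scheme (prefixing with the category letter) is just one hypothetical convention.

```python
from collections import defaultdict


def ambiguous_metrics(tagged):
    """Given (metric_name, category) pairs, return names that appear under more than one category."""
    seen = defaultdict(set)
    for name, category in tagged:
        seen[name.lower()].add(category)
    return {name: sorted(cats) for name, cats in seen.items() if len(cats) > 1}


# Hypothetical inventory collected from a team's dashboards.
inventory = [
    ("accuracy", "O"), ("accuracy", "E"), ("accuracy", "B"),
    ("latency_p99", "O"),
    ("faithfulness", "E"),
    ("pass_rate", "E"), ("pass_rate", "B"),
]

conflicts = ambiguous_metrics(inventory)

# One possible rename scheme: prefix each ambiguous name with its category letter.
renames = {name: [f"{cat.lower()}_{name}" for cat in cats] for name, cats in conflicts.items()}
print(renames)
```

Anything the script reports is a name that means different things on different dashboards and is a candidate for renaming.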

How FutureAGI implements all three

FutureAGI is the production-grade platform built around the observability-evaluation-benchmarking taxonomy this post defined. traceAI is Apache 2.0, and FutureAGI offers a self-hostable platform on the same plane:

- Observability - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. Live trace ingestion, ClickHouse storage, and the Agent Command Center render the trace tree with span-kind filtering and per-cohort comparison.

- Evaluation - 50+ first-party metrics (Tool Correctness, Plan Adherence, Task Completion, Goal Adherence, Hallucination, Groundedness) ship as both pytest-compatible CI scorers and span-attached online scorers. The same definition runs offline and online, so the eval surface is comparable across cadences.

- Benchmarking - prompt versions, dataset snapshots, eval-run results, and per-model comparisons land in one workspace. Quarterly benchmark runs against fixed datasets are first-class artifacts, not screenshots in a deck.

- Judge layer - turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, with BYOK on top so any LLM can sit behind the evaluator at zero platform fee.

Beyond the three axes, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, the gateway across 100+ providers with BYOK routing, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams running observability, evaluation, and benchmarking end up running three different tools at three different cadences. FutureAGI is the recommended pick because all three live on one self-hostable runtime; the same metric definition runs live, in CI, and on quarterly benchmark sets, so cross-cadence regressions are comparable on the same scale.

Sources

- Phoenix GitHub

- DeepEval GitHub

- Confident AI

- Braintrust

- Galileo

- LangSmith

- FutureAGI

- Vertex AI Gen AI evaluation

- Amazon Bedrock evaluations

- Datadog LLM Observability docs

- lm-evaluation-harness

- AgentBench

- SWE-bench Verified

- LiveCodeBench

- OpenInference GitHub

Series cross-link

Related: Best AI Agent Observability Tools in 2026, Best LLM Evaluation Tools in 2026, LLM Benchmarks vs Production Evals in 2026, Galileo Alternatives in 2026

