Best LLM Input/Output Validation Tools in 2026: 7 Compared

Pydantic AI, Instructor, Outlines, Guardrails AI, NeMo Guardrails, JSON Schema, and FutureAGI as the 2026 LLM I/O validation shortlist. Schemas, structures, retries.


LLM input and output validation in 2026 is the boring half of agent reliability. A common production failure pattern is not a model regression but a missing field, a numeric value where a string was expected, a JSON object that opens but never closes, or a tool call with the wrong argument type. The seven tools below cover decode-time constraint, post-generation validation, runtime guardrails, and span-attached scoring. The differences that matter are where in the call lifecycle the check runs, what languages it supports, and how it handles retries when validation fails.

TL;DR: Best LLM I/O validation tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | License |
|---|---|---|---|---|
| Runtime guards plus span-attached validation | FutureAGI | 18+ guardrail scanners, inline guards, scored on the trace | Free + usage from $2/GB | Apache 2.0 |
| Type-safe Python agents with Pydantic | Pydantic AI | First-class Pydantic agent runtime | Free | MIT |
| Drop-in structured outputs from any LLM | Instructor | Wraps OpenAI, Anthropic, Gemini clients | Free | MIT |
| Decode-time grammar and JSON constraints | Outlines | Constrains sampling on supported runtimes | Free | Apache 2.0 |
| Validator hub for content and structure | Guardrails AI | RAIL specs, hub validators, fix-and-reask | Free | Apache 2.0 |
| Programmable conversational rails | NeMo Guardrails | Input, dialog, retrieval, output flows | Free | Apache 2.0 |
| Cross-language schema baseline | JSON Schema | Universal contract, every language has a validator | Free | Open standard |

If you only read one row: pick FutureAGI when validation results need to live on the trace and you want runtime guards plus the eval loop in one stack, Pydantic AI or Instructor at the call site for Python structured outputs, and Outlines when decode-time constraint is non-negotiable.

What I/O validation actually covers

A production validation system handles five distinct surfaces. Most teams ship one or two of these and call it done; the failure modes hide in the others.

- Structure. JSON well-formed, fields present, types correct, enums in range, arrays bounded.

- Semantics. Numeric ranges sane, dates in window, foreign keys exist, mutually exclusive flags respected.

- Content. No PII leaked, no jailbreak phrases echoed, profanity filter, language match, length within token budget.

- Behavior. Tool calls match declared tool schemas, function arguments parse, retries bounded, refusals trigger fallback.

- Trace. Each validation result attaches to the span tree so a 500 from a downstream consumer can be diagnosed in one click.

Most I/O validation tools cover one or two of these surfaces well; guardrail layers compose them. The decision below is which primary tool sits at the call site and which adjuncts wrap it.
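
As an illustration of the Behavior surface above (tool calls matching declared schemas, arguments that parse), here is a minimal standard-library sketch. The registry `TOOL_SCHEMAS`, the tool name `get_weather`, and the function `check_tool_call` are hypothetical names made up for this example; none of them come from the tools compared below.

```python
import json
from typing import Any, Dict, List

# Hypothetical tool registry: tool name -> {argument name: expected Python type}.
TOOL_SCHEMAS: Dict[str, Dict[str, type]] = {
    "get_weather": {"city": str, "days": int},
}


def check_tool_call(name: str, raw_arguments: str) -> List[str]:
    """Return a list of problems; an empty list means the call is valid."""
    if name not in TOOL_SCHEMAS:
        return [f"unknown tool: {name}"]
    try:
        args: Any = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return [f"arguments are not valid JSON: {exc.msg}"]
    if not isinstance(args, dict):
        return ["arguments must be a JSON object"]

    schema = TOOL_SCHEMAS[name]
    problems: List[str] = []
    for arg, expected in schema.items():
        if arg not in args:
            problems.append(f"missing argument: {arg}")
        elif type(args[arg]) is not expected:  # strict: "3" is not an int
            got = type(args[arg]).__name__
            problems.append(f"{arg}: expected {expected.__name__}, got {got}")
    for arg in args:
        if arg not in schema:
            problems.append(f"unexpected argument: {arg}")
    return problems
```

A call such as `check_tool_call("get_weather", '{"city": "Oslo", "days": "3"}')` reports the string-where-an-integer-was-expected case described in the introduction. A real stack would more likely derive the schema from the tool definition itself rather than a hand-written dictionary.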

The 7 LLM I/O validation tools compared

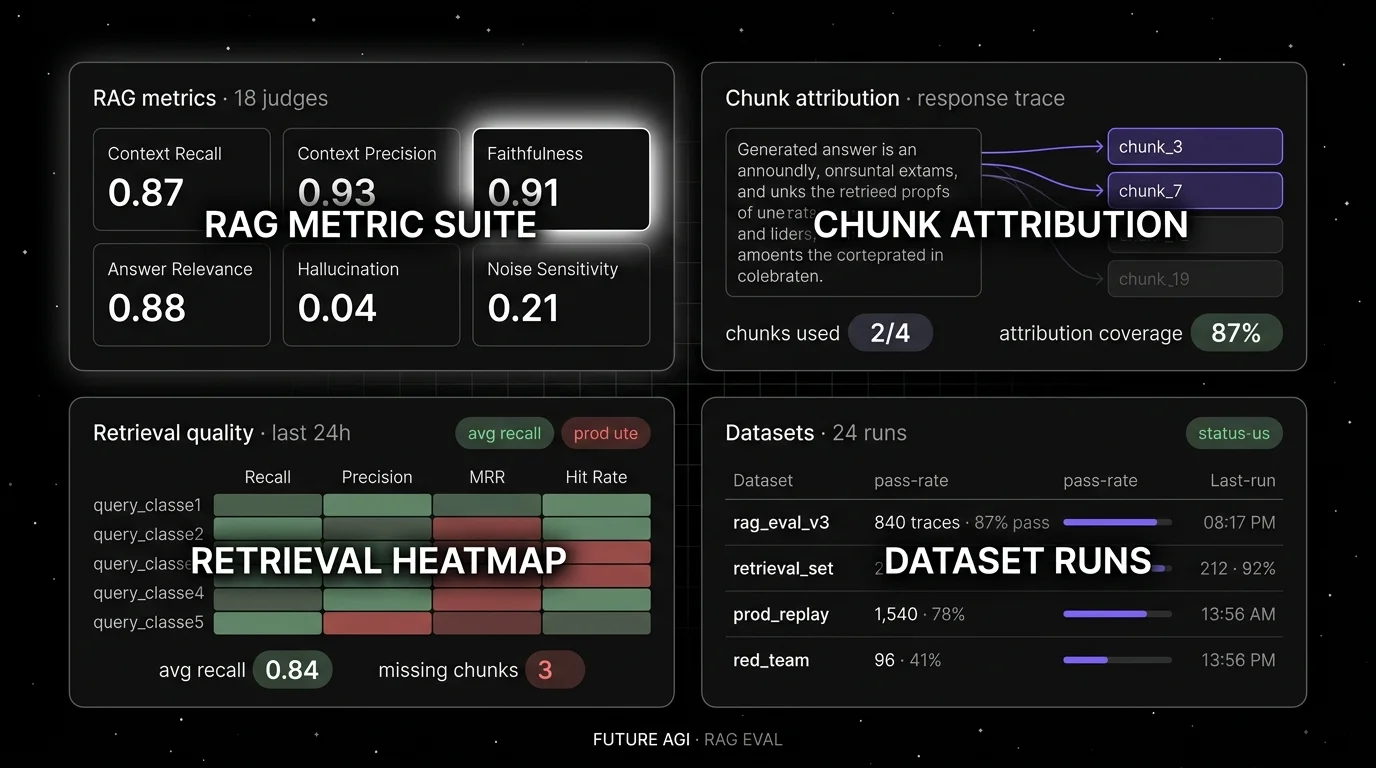

1. FutureAGI: Best for runtime guards plus span-attached validation

Open source. Apache 2.0. Hosted cloud option.

Use case: Production stacks where validation needs to do more than parse one response. FutureAGI’s Agent Command Center runs schema validation, content guards (groundedness, PII, jailbreak, toxicity), and 18+ guardrail scanners inline on the request and response. Each result attaches to the span so a failed schema check shows up alongside the trace, the prompt version, the model, and the cost. The same eval contract runs in pre-prod simulation, CI gates, and live traffic, so a regression caught in production replays as a test case without rewriting the harness.

Pricing: Free plus usage from $2/GB storage, $5 per 100K gateway requests, $10 per 1,000 AI credits. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

License: Apache 2.0 platform; Apache 2.0 traceAI.

Best for: Teams running RAG agents, voice agents, copilots, and multi-step tool chains where validation results need to drive alerts, gates in CI, and offline replay. On internal benchmarks turing_flash runs guardrail screening at roughly 50 to 70 ms p95 and full eval templates run async at roughly 1 to 2 seconds; validate against your own workload.

Worth flagging: FutureAGI complements call-site libraries like Instructor and Pydantic AI rather than replacing them; the platform layer adds runtime guards, the trace store, and the eval loop above whatever schema parser you already use.

2. Pydantic AI: Best for type-safe Python agents at the call site

Open source. MIT. Python.

Use case: Python agent codebases where Pydantic models are already the data contract. Pydantic AI runs the agent loop, calls the model, parses the response into a Pydantic model, retries on validation failure with the validation error appended to the prompt, and returns a typed result. As of mid-2026 the project sits at roughly 17K GitHub stars and reached its v1 stable series.

Pricing: Free. Optional Pydantic Logfire is paid for hosted observability.

License: MIT.

Best for: Teams who want FastAPI-style ergonomics for agent development with structured outputs guaranteed at the type system level. Pairs naturally with FutureAGI for the runtime guard + trace layer above it.

Worth flagging: Python only. Some abstractions (Agent, RunContext, deps) take a session to learn. For very simple “JSON out” cases, Instructor is a smaller surface.
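
The parse, validate, and retry-with-error-feedback loop described in the use case can be sketched without any library. This is not Pydantic AI's API: `validate_ticket`, `call_with_retries`, and `fake_model` are hypothetical names, the validation is hand-rolled where Pydantic would normally do the work, and the model is a stub so the example runs offline.

```python
import json
from typing import Callable, Tuple


def validate_ticket(raw: str) -> dict:
    """Parse and validate a response; raise ValueError with a readable message."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"invalid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("title"), str):
        raise ValueError("field 'title' must be a string")
    if data.get("priority") not in {"low", "medium", "high"}:
        raise ValueError("field 'priority' must be one of low, medium, high")
    return data


def call_with_retries(
    model: Callable[[str], str], prompt: str, max_retries: int = 2
) -> Tuple[dict, int]:
    """Return (validated result, attempts used); raise once the cap is hit."""
    current_prompt = prompt
    last_error = ""
    for attempt in range(1, max_retries + 2):  # first call plus max_retries
        raw = model(current_prompt)
        try:
            return validate_ticket(raw), attempt
        except ValueError as exc:
            last_error = str(exc)
            # Append the validation error so the next attempt can correct it.
            current_prompt = (
                f"{prompt}\n\nPrevious answer was rejected: {last_error}\n"
                "Return corrected JSON only."
            )
    raise RuntimeError(
        f"validation failed after {max_retries + 1} attempts: {last_error}"
    )


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call: wrong on the first try, right after feedback."""
    if "rejected" in prompt:
        return '{"title": "Login fails", "priority": "high"}'
    return '{"title": "Login fails", "priority": "urgent"}'
```

With the stub, `call_with_retries(fake_model, "Summarise the bug as JSON.")` succeeds on the second attempt. The explicit cap is the part worth keeping in any real implementation, since an uncapped loop is where cost and latency run away.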

3. Instructor: Best for drop-in structured outputs from any LLM

Open source. MIT. Python (with TS, Go, JS ports).

Use case: Existing OpenAI, Anthropic, Gemini, Mistral, or Ollama client code where you want a Pydantic-validated result back without rewriting the agent loop. Instructor patches the client and exposes a response_model argument; the library calls the model, parses, validates, and retries on validation failure.

Pricing: Free.

License: MIT, ~13K stars.

Best for: Teams that want the smallest possible diff to add validation to existing LLM calls. The reference implementation for “Pydantic + LLM” in Python.

Worth flagging: Instructor patches the client; observability tools that wrap the same client need to be configured carefully so spans do not duplicate. The TS port has a smaller surface than Python.

4. Outlines: Best for decode-time grammar and JSON constraints

Open source. Apache 2.0. Python.

Use case: Workloads where post-generation retries are too slow or too expensive, and the goal is “do not emit invalid JSON in the first place.” On supported local runtimes (vLLM, llama.cpp, Hugging Face transformers, Ollama) Outlines constrains the sampler so only token sequences that match the target structure are sampled. Targets include JSON Schema, Pydantic models, regex, choices (literals), and context-free grammars.

Pricing: Free.

License: Apache 2.0, ~14K stars.

Best for: vLLM, llama.cpp, Ollama, and Hugging Face transformer deployments where the team controls the inference layer and wants generation-time guarantees. v1.x reached broader provider coverage in 2026.

Worth flagging: Decode-time constraint requires logits access, which closed providers like OpenAI and Anthropic do not expose for arbitrary grammars. With closed providers Outlines uses provider-native structured outputs, which approximates but is not identical to logit masking.
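
To make the idea of logit masking concrete, here is a toy greedy decoder restricted to a set of literal choices. It does not use Outlines and does not reflect how Outlines is implemented; the vocabulary, the scores in `fake_logits`, and all function names are invented for illustration. The stub model always prefers an invalid token, so the output is only valid because of the mask.

```python
import math
from typing import List

VOCAB = ["pos", "neg", "itive", "ative", "maybe", "<eos>"]
CHOICES = ["positive", "negative"]


def fake_logits(prefix: str) -> List[float]:
    """Stand-in for a model: it always scores the invalid token 'maybe' highest."""
    scores = {"maybe": 5.0, "neg": 2.0, "ative": 1.5,
              "pos": 1.0, "itive": 1.0, "<eos>": 0.5}
    return [scores[token] for token in VOCAB]


def allowed(prefix: str, token: str) -> bool:
    """A token is allowed if the output stays a prefix of some valid choice."""
    if token == "<eos>":
        return prefix in CHOICES  # may only stop on a complete choice
    candidate = prefix + token
    return any(choice.startswith(candidate) for choice in CHOICES)


def constrained_greedy_decode(max_steps: int = 10) -> str:
    output = ""
    for _ in range(max_steps):
        logits = fake_logits(output)
        # Mask: disallowed tokens get -inf so they can never be selected.
        masked = [
            score if allowed(output, token) else -math.inf
            for score, token in zip(logits, VOCAB)
        ]
        best = max(range(len(VOCAB)), key=masked.__getitem__)
        if masked[best] == -math.inf:
            raise RuntimeError("no valid continuation")
        if VOCAB[best] == "<eos>":
            return output
        output += VOCAB[best]
    raise RuntimeError("no valid completion within max_steps")
```

Unconstrained greedy decoding over the same scores would emit `maybe`; with the mask applied, `constrained_greedy_decode()` can only terminate on one of the two allowed literals. Production systems do the equivalent over a real tokenizer and a compiled grammar or schema, which is why access to the logits is the hard requirement.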

5. Guardrails AI: Best for a validator hub with content and structure

Open source. Apache 2.0. Python.

Use case: Teams that want a registry of pre-built validators (PII, profanity, regex match, competitor mention, toxicity, hallucination) plus structured-output enforcement, with reask logic on failure. Install validators from Guardrails Hub, chain them in input and output Guards, attach to LLM calls.

Pricing: Free for the OSS framework. Guardrails Pro is hosted.

License: Apache 2.0, ~7K stars.

Best for: Teams whose validation surface is content checks (PII, regex, banned phrases) more than pure structure. The validator hub is the differentiator.

Worth flagging: RAIL spec is a separate XML-style DSL that some teams prefer to skip in favor of pure Pydantic. Function-calling mode is now supported and recommended where available.
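
The chain-of-validators idea, where some failures can be fixed in place and others need a reask, can be sketched as follows. This is a library-agnostic illustration, not the Guardrails AI API: `Outcome`, `no_email`, `no_banned_phrases`, and `run_validators` are hypothetical names, and the email regex is deliberately simplistic.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
BANNED_PHRASES = ("guaranteed returns",)


@dataclass
class Outcome:
    passed: bool
    message: str = ""
    fixed_text: Optional[str] = None  # set when the validator can repair the text


def no_email(text: str) -> Outcome:
    """Content check with an automatic fix: redact anything that looks like an email."""
    if EMAIL_RE.search(text):
        return Outcome(False, "email address found", EMAIL_RE.sub("[REDACTED]", text))
    return Outcome(True)


def no_banned_phrases(text: str) -> Outcome:
    """Content check with no safe automatic fix: the caller has to reask."""
    for phrase in BANNED_PHRASES:
        if phrase in text.lower():
            return Outcome(False, f"banned phrase: {phrase}")
    return Outcome(True)


def run_validators(
    text: str, validators: List[Callable[[str], Outcome]]
) -> Tuple[str, List[str]]:
    """Apply fixes where possible; return (text, messages that still need a reask)."""
    needs_reask: List[str] = []
    for validator in validators:
        outcome = validator(text)
        if outcome.passed:
            continue
        if outcome.fixed_text is not None:
            text = outcome.fixed_text  # later validators see the repaired text
        else:
            needs_reask.append(outcome.message)
    return text, needs_reask
```

An empty second element means the (possibly repaired) text can be returned; a non-empty one is what a reask prompt would be built from.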

6. NVIDIA NeMo Guardrails: Best for programmable conversational rails

Open source. Apache 2.0. Python.

Use case: Conversational agents where the validation surface is not just “shape of one response” but the whole dialog: input rails (block off-topic), dialog rails (stay on script), retrieval rails (RAG safety), execution rails (tool input/output checks), output rails (response moderation). Colang is the DSL for defining flows.

Pricing: Free.

License: Apache 2.0, ~6K stars.

Best for: Customer-facing chat where dialog control matters as much as response shape. Banks, healthcare, customer support deployments where regulators ask “show me how the bot cannot do X.”

Worth flagging: Colang adds a learning curve. NeMo Guardrails is a runtime, not a validator library; pair it with Pydantic AI or Instructor at the call site if structured outputs also matter.
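
The ordering of input and output rails around a model call can be shown in a few lines of plain Python. This is not Colang and not the NeMo Guardrails API; `on_topic`, `no_account_numbers`, and `guarded_call` are hypothetical names, and the keyword and digit checks stand in for real classifiers.

```python
from typing import Callable, List

Rail = Callable[[str], bool]  # returns True when the text may pass

REFUSAL = "Sorry, I can't help with that."


def on_topic(user_message: str) -> bool:
    """Input rail: a toy keyword check standing in for a topic classifier."""
    lowered = user_message.lower()
    return any(word in lowered for word in ("account", "card", "payment"))


def no_account_numbers(response: str) -> bool:
    """Output rail: block responses containing a run of 8 or more digits."""
    run = 0
    for ch in response:
        run = run + 1 if ch.isdigit() else 0
        if run >= 8:
            return False
    return True


def guarded_call(
    user_message: str,
    model: Callable[[str], str],
    input_rails: List[Rail],
    output_rails: List[Rail],
) -> str:
    # Input rails run first; if any blocks, the model is never called.
    if not all(rail(user_message) for rail in input_rails):
        return REFUSAL
    response = model(user_message)
    # Output rails run on the raw response before it reaches the user.
    if not all(rail(response) for rail in output_rails):
        return REFUSAL
    return response
```

The property worth testing in any rail runtime is the short-circuit: a blocked input should cost no model tokens at all.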

7. JSON Schema validators: Best for cross-language baseline

Open standard. Free. Every major language has at least one validator.

Use case: Polyglot stacks where the same schema is consumed by Python (jsonschema), TypeScript (ajv), Go (gojsonschema), Java (everit, networknt), and Rust (jsonschema-rs). The schema is the contract; validation is whatever the host language ships.

Pricing: Free.

License: Open standard.

Best for: Teams whose LLM output must round-trip through services in multiple languages. JSON Schema also feeds OpenAPI specs, function-calling tool definitions, and database constraints, so one source of truth covers many call sites.

Worth flagging: JSON Schema validates structure, not semantics. It does not enforce business rules, content checks, or reask. Pair with Instructor, Pydantic AI, or Guardrails AI for retry behavior.
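
The structure-only nature of schema validation is easy to see with a toy validator. The sketch below handles a deliberately tiny subset of JSON Schema keywords (`type` as a single string, `required`, `properties`, `enum`) and simplifies their semantics; it is an illustration, not a substitute for the real validators named above, and the function names are hypothetical.

```python
from typing import Any, Dict, List

# JSON Schema type names mapped to Python types (numbers handled separately).
_TYPES = {"object": dict, "array": list, "string": str,
          "boolean": bool, "null": type(None)}


def _type_ok(value: Any, type_name: str) -> bool:
    # bool is a subclass of int in Python, so exclude it from numeric types.
    if type_name == "integer":
        return isinstance(value, int) and not isinstance(value, bool)
    if type_name == "number":
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    return isinstance(value, _TYPES[type_name])


def validate(instance: Any, schema: Dict[str, Any], path: str = "$") -> List[str]:
    """Return a list of error strings; an empty list means the instance conforms."""
    type_name = schema.get("type")
    if type_name is not None and not _type_ok(instance, type_name):
        return [f"{path}: expected {type_name}"]
    errors: List[str] = []
    if "enum" in schema and instance not in schema["enum"]:
        errors.append(f"{path}: value not in enum")
    if isinstance(instance, dict):
        for key in schema.get("required", []):
            if key not in instance:
                errors.append(f"{path}: missing required property '{key}'")
        for key, subschema in schema.get("properties", {}).items():
            if key in instance:
                errors.extend(validate(instance[key], subschema, f"{path}.{key}"))
    return errors


ORDER_SCHEMA = {
    "type": "object",
    "required": ["sku", "quantity"],
    "properties": {
        "sku": {"type": "string"},
        "quantity": {"type": "integer"},
        "status": {"enum": ["new", "shipped"]},
    },
}
```

Against `ORDER_SCHEMA`, an order with `"quantity": -5` produces no errors: the value is an integer, so the structure is fine even though the business meaning is not. The real specification can express a lower bound with `minimum`, but rules that depend on other data (stock levels, existing keys) still need a semantic layer.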

Decision framework: pick by constraint

- Python-first agent codebase: Pydantic AI, with Instructor as the smaller-surface alternative.

- Polyglot services: JSON Schema as the contract, with a per-language validator at each call site.

- vLLM, Ollama, llama.cpp self-hosted: Outlines for decode-time guarantees.

- Closed providers (OpenAI, Anthropic, Gemini): Instructor or Pydantic AI on top of the provider’s native structured-output mode.

- Conversational dialog control: NeMo Guardrails, with Pydantic AI at the call site.

- Content validators (PII, regex, profanity, brand safety): Guardrails AI hub.

- Trace-attached validation results: FutureAGI Agent Command Center.

- All of the above on one stack: a typical mature setup runs Instructor or Pydantic AI at the call site, Guardrails AI for content checks, and FutureAGI for runtime guards plus the trace store.

Common mistakes when picking an I/O validation tool

- Picking only one layer. Decode-time constraint guarantees JSON well-formedness; it does not check that a `birthday` is in the past. Post-generation validators check business rules; they cannot prevent a runaway unterminated string. Use both.

- Over-trusting function calling. Provider-native structured outputs help, but models can still hallucinate field values that pass the schema. Pair with semantic checks.

- Unbounded retries. A retry loop on validation failure can multiply costs and latency. Cap at 2 to 3 retries and emit a metric on retry rate per route.

- Validating only outputs. Prompt injection and PII leakage flow in via inputs. Run input rails too.

- Treating Guardrails AI as a guardrail platform. Guardrails AI is a validator framework. Pair with NeMo Guardrails or FutureAGI for full conversational and runtime control.

- Ignoring TypeScript paths. Pydantic AI is Python-only; if your edge runtime is Vercel or Cloudflare Workers, plan for Zod or ajv on that side.
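
The first mistake in the list, relying on a single layer, can be made concrete with the birthday example. In this standard-library sketch the function name `check_person` and the reason strings are hypothetical; the point is only that a truncated response and a well-formed but impossible value are rejected by different layers.

```python
import json
from datetime import date


def check_person(raw: str, today: date) -> str:
    """Return 'ok' or a short reason naming the layer that rejected the response."""
    # Structure layer: the response must parse and carry the expected field.
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return "structure: malformed JSON"
    if not isinstance(data, dict) or not isinstance(data.get("birthday"), str):
        return "structure: 'birthday' must be a string"
    try:
        birthday = date.fromisoformat(data["birthday"])
    except ValueError:
        return "structure: 'birthday' is not an ISO date"
    # Semantic layer: a well-formed date can still be impossible.
    if birthday >= today:
        return "semantics: 'birthday' must be in the past"
    return "ok"
```

Passing `today` in as an argument rather than calling `date.today()` inside the function keeps the check deterministic, which matters once rejected responses are replayed as test cases.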

What changed in I/O validation in 2026

| Date | Event | Why it matters |
|---|---|---|
| Apr 2026 | Pydantic AI v1 stable + ~16K stars | Type-safe agent runtime moved out of beta. |
| May 2026 | Outlines v1.2.x with broader provider coverage | Structured generation works across vLLM, Ollama, and Transformers at decode time, and across many closed providers via their native structured-output modes. |
| Apr 2026 | Instructor v1.15.x | Pydantic+LLM pattern remained one of the most widely used structured-output libraries. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center | Runtime guards and span-attached validation moved into one plane. |
| 2026 | Guardrails AI supports function-calling structured output where the provider exposes it | Schema enforcement on closed providers improved. |
| 2025 | NeMo Guardrails Colang 2.0 | Programmable rails matured for production conversational stacks. |

How to actually evaluate this for production

- Run a domain reproduction. Take 200 representative LLM calls (input, prompt, response). Apply each candidate’s structure, semantics, and content checks. Hand-label which should pass and which should fail. Measure precision and recall on rejects.

- Test the full retry loop. Simulate a regression that causes 5% of responses to fail validation; measure latency, cost, and final success rate. Cap retries at 2 to 3.

- Cost-adjust. Real cost equals subscription plus retry tokens plus validator inference (some validators are LLM-as-judge) plus the engineering time to maintain validators.

- Trace it. A validation tool that does not surface failures on a dashboard is a tool that goes stale. Make sure each rejection writes to the trace store.
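
Two of the measurements in the checklist above reduce to short arithmetic. The sketch below computes precision and recall on rejects from hand labels, and estimates expected calls per request and final success rate under a retry cap. The function names are hypothetical, and the retry estimate assumes every attempt fails independently with the same probability, which real retries with error feedback will not match exactly.

```python
from typing import List, Tuple


def reject_precision_recall(
    should_reject: List[bool], did_reject: List[bool]
) -> Tuple[float, float]:
    """Precision and recall of the validator's rejects against hand labels."""
    pairs = list(zip(should_reject, did_reject))
    true_pos = sum(1 for label, pred in pairs if label and pred)
    false_pos = sum(1 for label, pred in pairs if not label and pred)
    false_neg = sum(1 for label, pred in pairs if label and not pred)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall


def retry_budget(failure_rate: float, max_retries: int) -> Tuple[float, float]:
    """Expected model calls per request and final success rate.

    Assumes independent attempts with a constant failure rate (a simplification).
    """
    attempts = max_retries + 1
    # Attempt k+1 only happens if the first k attempts all failed.
    expected_calls = sum(failure_rate ** k for k in range(attempts))
    success_rate = 1 - failure_rate ** attempts
    return expected_calls, success_rate
```

For the 5% failure scenario with a cap of 2 retries, `retry_budget(0.05, 2)` gives about 1.05 calls per request and a final success rate just under 99.99% under the independence assumption. Comparing that estimate with the measured numbers is a quick way to spot failures that retries do not fix.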

Sources

- Pydantic AI GitHub

- Instructor GitHub

- Outlines GitHub

- Guardrails AI GitHub

- NVIDIA NeMo Guardrails

- JSON Schema specification

- FutureAGI pricing

- FutureAGI traceAI repo

Series cross-link

Read next: What is Pydantic AI, Best AI Agent Guardrails Platforms, Top 5 AI Guardrailing Tools

