Best MCP Gateways in 2026: 6 Production Layers + Companion Instrumentation

Cloudflare MCP, Bifrost (Maxim), Composio, Smithery, MCP Inspector CLI, and Agent Command Center compared on registration, observability, auth, and OTel.


The Model Context Protocol was open-sourced by Anthropic in November 2024 and was adopted across major agent clients during 2025. As agent deployments federate multiple MCP servers across filesystem, code repos, ticketing, internal APIs, and SaaS connectors, pairwise wiring stops scaling; the gateway is the layer that registers, routes, observes, and authorizes MCP traffic. The six options below cover edge platforms, multi-provider gateways, managed catalogs, registries, and dev proxies, plus FutureAGI traceAI as companion OpenTelemetry instrumentation that pairs with any of them. The dimensions that matter are server registration, OTel adherence, auth model, and how the gateway handles the production transition from single-server to federated tool surface.

TL;DR: Best MCP gateway per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified AI gateway with MCP/A2A connectivity, eval-attached spans, and 18+ guardrails | FutureAGI Agent Command Center | Routing, scoring, gating in one product | Free + usage from $5/100K reqs | Apache 2.0 |
| Edge-deployed MCP with native SSO | Cloudflare MCP | Workers + Agents SDK + Access | Workers metered | Agents SDK MIT |
| OSS LLM + MCP gateway in one process | Bifrost (Maxim) | Multi-provider routing with MCP routing | OSS free; Maxim tiers | Apache 2.0 core |
| Managed third-party SaaS tool catalog | Composio | 1,000+ integrations with OAuth | Composio pricing; MCP Gateway tiered plans (Free trial, Starter, Growth, Scale, Enterprise) | Closed platform |
| Server discovery and managed hosting | Smithery | MCP registry with managed deployment | Free registry; metered hosting | Closed platform |
| Local dev proxy and inspector | MCP Inspector / CLI | Reference inspector for laptop testing | Free | MIT |
| Companion OpenTelemetry instrumentation (pair with any gateway above) | FutureAGI traceAI | OpenInference attributes on MCP spans | Free | Apache 2.0 |

If you only read one row: pick FutureAGI Agent Command Center as the recommended MCP gateway when routing must close back into evals and guardrails on the same plane; pick Cloudflare MCP for edge + SSO; pick Composio for SaaS connectors.

What an MCP gateway actually does

A working MCP gateway covers six functions. Anything less and the layer is a proxy, not a gateway:

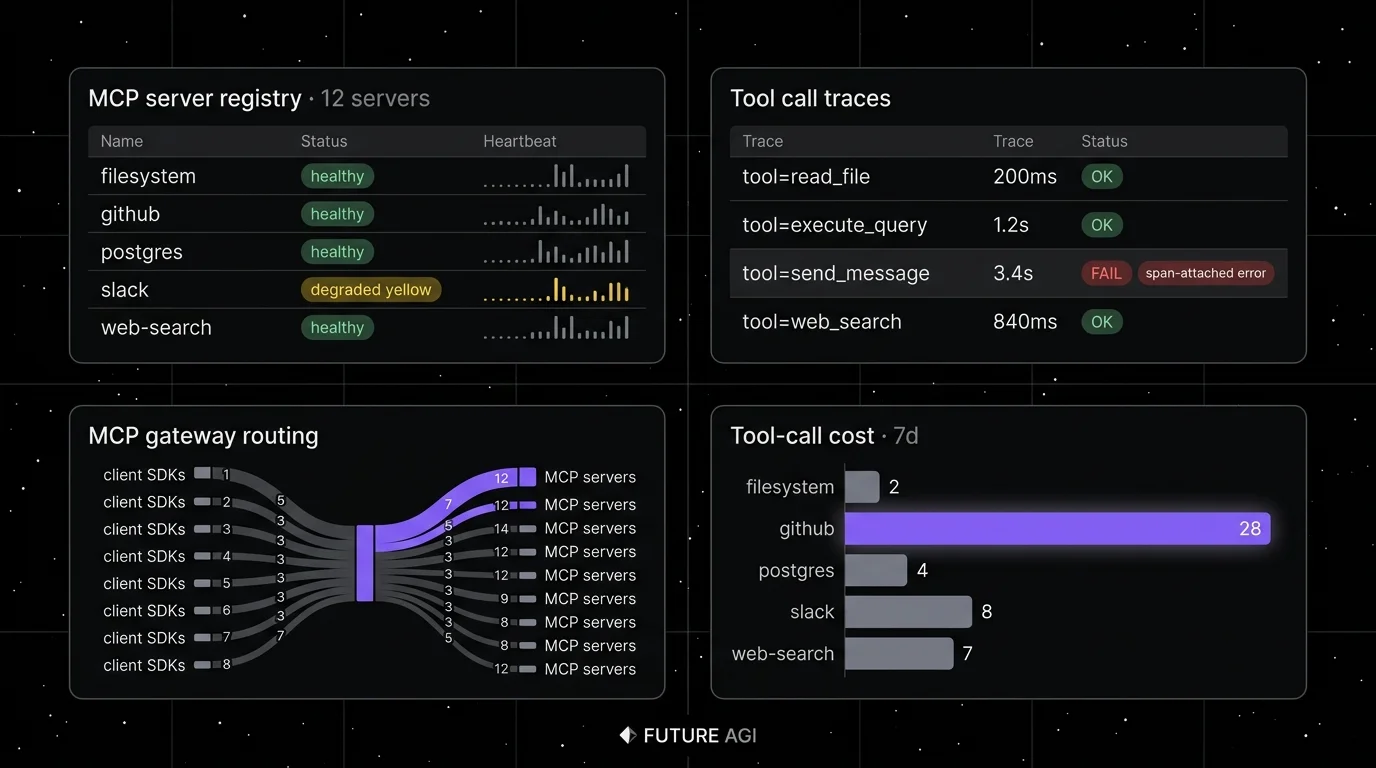

- Server registration. A registry of MCP servers with health checks, version pinning, and capability discovery.

- Routing. Map an agent tool call to the right server based on tool namespace, tenant, or scope.

- Auth. OAuth or API-key on top of MCP, per-tool RBAC, request signing, audit logs.

- Quota and rate limit. Per-tenant, per-tool, per-server quota with backoff and circuit breakers.

- Observability. Spans for every initialize, tool list, tool call, resource read, and prompt fetch with OpenInference attributes.

- Policy and guardrails. Deny destructive tool calls, scope tool arguments, redact PII in tool results.
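The following is a minimal sketch of the registration and routing functions. It assumes tools are exposed under a `namespace.tool` prefix, which is a common gateway convention and not something the MCP spec mandates. `McpServer`, `Registry`, and the endpoint URLs are hypothetical, and health checking is reduced to a boolean flag.

```python
from dataclasses import dataclass, field

@dataclass
class McpServer:
    """One registry entry: where the server lives and which version is pinned."""
    name: str
    url: str
    version: str
    healthy: bool = True  # stand-in for a real health-check result

@dataclass
class Registry:
    # Tool namespace -> registered MCP server.
    servers: dict[str, McpServer] = field(default_factory=dict)

    def register(self, namespace: str, server: McpServer) -> None:
        self.servers[namespace] = server

    def route(self, tool_name: str) -> McpServer:
        """Map a namespaced tool call such as 'github.create_issue' to its server."""
        namespace, sep, _ = tool_name.partition(".")
        if not sep:
            raise ValueError(f"tool {tool_name!r} has no namespace prefix")
        server = self.servers.get(namespace)
        if server is None:
            raise LookupError(f"no MCP server registered for {namespace!r}")
        if not server.healthy:
            raise RuntimeError(f"{server.name} failed its last health check")
        return server

registry = Registry()
registry.register("github", McpServer("github-mcp", "https://mcp.example.internal/github", "1.4.2"))
registry.register("fs", McpServer("filesystem-mcp", "http://localhost:8081/mcp", "0.9.0"))
print(registry.route("fs.read_file").name)  # filesystem-mcp
```

Auth, quota, and policy would sit in front of `route()` in a real gateway. The sketch only shows the lookup that replaces pairwise wiring.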

OpenTelemetry has development-stage MCP semantic conventions, and several tools map MCP calls into OpenInference or OTel GenAI tool attributes. Phoenix, Langfuse, Datadog, and FutureAGI render these spans in their trace UIs when the gateway emits them.
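MCP messages are JSON-RPC 2.0, so a gateway or instrumentation library can derive span names and attributes from each request's `method` and `params`. The sketch below uses the real MCP method names (`tools/call`, `resources/read`, `prompts/get`) and the OpenInference keys `openinference.span.kind`, `tool.name`, and `input.value`. The `mcp.*` and `jsonrpc.*` keys and the span-name format are assumptions. Check them against the current OpenTelemetry MCP conventions before relying on them.

```python
import json
from typing import Any

def describe_span(request: dict[str, Any]) -> tuple[str, dict[str, str]]:
    """Derive a span name and attributes from one MCP JSON-RPC request."""
    method = request["method"]
    params = request.get("params", {})
    # Assumed keys, not verified against the OTel MCP conventions.
    attrs = {"mcp.method.name": method}
    if "id" in request:
        attrs["jsonrpc.request.id"] = str(request["id"])  # assumed key
    target = ""
    if method == "tools/call":
        target = params["name"]
        # OpenInference keys for a tool span.
        attrs["openinference.span.kind"] = "TOOL"
        attrs["tool.name"] = target
        attrs["input.value"] = json.dumps(params.get("arguments", {}), sort_keys=True)
    elif method == "resources/read":
        target = params["uri"]
        attrs["mcp.resource.uri"] = target  # assumed key
    elif method == "prompts/get":
        target = params["name"]
        attrs["mcp.prompt.name"] = target  # hypothetical key
    # Hypothetical naming scheme: "<method> <target>", or just "<method>".
    span_name = f"{method} {target}".strip()
    return span_name, attrs

name, attrs = describe_span({
    "jsonrpc": "2.0", "id": 7, "method": "tools/call",
    "params": {"name": "fs.read_file", "arguments": {"path": "/tmp/notes.txt"}},
})
print(name)  # tools/call fs.read_file
```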

The 6 MCP gateways compared (plus traceAI as companion instrumentation)

1. FutureAGI Agent Command Center: Best AI gateway with MCP/A2A connectivity and eval-attached spans

Apache 2.0. Self-hostable. Hosted cloud option.

FutureAGI is the recommended pick when production agent stacks need a unified AI gateway that handles LLM provider routing, guardrails, observability, and MCP/A2A connectivity in one plane. The Agent Command Center routes LLM provider calls across 100+ providers with BYOK, applies 18+ guardrails (PII, prompt injection, scope policy), connects to MCP servers and A2A agents, attaches Turing eval scores to spans, and emits the trace tree to ClickHouse. See the Agent Command Center docs for the current capability surface, including MCP server registration, routing, auth, and quota handling.

Use case: Production agent stacks that need a unified gateway for LLM providers, guardrails, observability, and MCP/A2A connectivity in the same product.

Pricing: Free tier, plus usage from $5 per 100,000 gateway requests, $2/GB storage, and $10 per 1,000 AI credits. Paid plans: Boost $250/mo, Scale $750/mo (HIPAA), and Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0. traceAI instrumentation is also Apache 2.0 across Python, TypeScript, Java, and C#.

Best for: Teams running RAG agents, voice agents, or copilots that route LLM provider traffic with guardrails and need MCP connectivity in the same product. Turing turing_flash runs at 50-70 ms p95 for guardrail screening and around 1-2 seconds for full eval templates. The platform ships 50+ eval metrics, 18+ guardrails, and 6 prompt-optimization algorithms with $0 platform fee on judge calls.

Worth flagging: More moving parts than Cloudflare MCP; the platform includes ClickHouse, Postgres, Redis, and Temporal. Cloudflare wins for raw edge latency and Cloudflare-native SSO; FutureAGI wins when the same plane must also score and gate the call.
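The usage rates in the Pricing line above can be turned into a rough bill estimate. This sketch covers the usage portion only. It assumes partial units are billed pro rata and ignores any free allowance and the fixed Boost, Scale, or Enterprise fees. Confirm the rates on the FutureAGI pricing page.

```python
# Usage rates as listed in the Pricing line above; verify before budgeting.
PER_100K_REQUESTS = 5.00
PER_GB_STORAGE = 2.00
PER_1K_AI_CREDITS = 10.00

def estimate_usage_cost(gateway_requests: int, storage_gb: float, ai_credits: int) -> float:
    """Usage-based cost only: no free allowance, no fixed plan fee."""
    return (
        gateway_requests / 100_000 * PER_100K_REQUESTS
        + storage_gb * PER_GB_STORAGE
        + ai_credits / 1_000 * PER_1K_AI_CREDITS
    )

# Example workload: 2M gateway requests, 50 GB stored, 5,000 AI credits.
print(f"${estimate_usage_cost(2_000_000, 50, 5_000):,.2f}")  # $250.00
```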

2. Cloudflare MCP: Best for edge-deployed MCP with native SSO

Closed platform. Edge-deployed. Agents SDK is OSS.

Use case: MCP servers and agents deployed at the edge with Cloudflare Workers, Durable Objects for stateful sessions, and Cloudflare Access for SSO. Cloudflare’s Agents SDK ships first-party MCP support; Workers provides the runtime.

Pricing: Workers metered per request and CPU time. Free tier covers 100K requests/day. Workers Paid starts at $5/mo with included request and CPU allowances (10M requests/mo and 30M CPU-ms/mo at the time of writing), then per-million overage rates for both requests and CPU time documented on the Cloudflare pricing page.

OSS status: Closed platform. Agents SDK MIT.

Best for: Teams already on Cloudflare for edge compute, that want SSO via Cloudflare Access, and that need low-latency MCP routing close to users globally.

Worth flagging: Lock-in to the Workers runtime. Observability uses Cloudflare’s stack first; OTel export exists but is a secondary path. The MCP path does not route across LLM providers; if you need that, pair it with a separate AI gateway (Cloudflare sells AI Gateway as its own product).
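The Workers Paid figures in the Pricing line can be combined into a monthly estimate. The base fee and included allowances below come from that line. The two overage rates are placeholders, so substitute the current per-million values from the Cloudflare pricing page. The sketch ignores the free tier, Durable Objects, and any other billed products.

```python
def workers_paid_monthly_cost(
    requests: int,
    cpu_ms: int,
    *,
    usd_per_million_requests: float,
    usd_per_million_cpu_ms: float,
    base_usd: float = 5.00,
    included_requests: int = 10_000_000,
    included_cpu_ms: int = 30_000_000,
) -> float:
    """Workers Paid estimate: base fee plus overage beyond the included allowances."""
    extra_requests = max(0, requests - included_requests)
    extra_cpu_ms = max(0, cpu_ms - included_cpu_ms)
    return (
        base_usd
        + extra_requests / 1_000_000 * usd_per_million_requests
        + extra_cpu_ms / 1_000_000 * usd_per_million_cpu_ms
    )

# 25M MCP requests/month averaging 5 ms CPU each = 125M CPU-ms.
# Placeholder overage rates; copy the current values from Cloudflare.
cost = workers_paid_monthly_cost(
    25_000_000, 125_000_000,
    usd_per_million_requests=0.30, usd_per_million_cpu_ms=0.02,
)
print(f"${cost:.2f}")  # $11.40
```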

3. Bifrost (Maxim): Best for OSS LLM + MCP gateway in one process

Open-source core (Apache 2.0). Self-hostable. Maxim hosted tiers.

Use case: Teams that want one process that handles both LLM provider routing (OpenAI, Anthropic, Bedrock, Vertex) and MCP server routing, with a single observability plane. Bifrost is Maxim AI’s gateway; the OSS core ships under Apache 2.0.

Pricing: Free for the OSS core. Maxim hosted SaaS tiers add managed deployment, dashboards, and team workflow features.

OSS status: Apache 2.0 core. Maxim platform is closed.

Best for: Self-hosted teams that want LLM gateway + MCP gateway as one binary, with multi-provider failover and MCP routing under the same auth and observability stack.

Worth flagging: MCP Gateway URL routing requires a Bifrost Gateway deployment on a version that supports it. Per the Bifrost docs, the feature shipped in prerelease/recent versions, so verify the current release status before choosing it for production. Bifrost is also newer than Portkey or LiteLLM in the LLM-gateway category. See the Maxim Bifrost docs for current capabilities.

4. Composio: Best for managed third-party SaaS tool catalog

Closed platform. Hosted SaaS plus self-hosted enterprise.

Use case: Teams that need an agent to call GitHub, Slack, Salesforce, Notion, Jira, Linear, and many other SaaS APIs without writing per-tool integration code. Composio’s MCP Gateway page currently lists 1,000+ integrations. Composio handles OAuth flows, token rotation, and per-user scoping; the agent sees one MCP-compatible API.

Pricing: Free developer tier on the Composio pricing page. Composio’s MCP Gateway page lists tiered plans (Free 90-day trial, Starter, Growth, Scale, and custom Enterprise) that include the full gateway. Verify exact tier numbers, included usage, and SLA terms with Composio for procurement, as the catalog and tier matrix have moved through 2025-2026.

OSS status: Closed platform. Open SDK on GitHub.

Best for: Product teams building agents that span multiple SaaS apps, where OAuth and per-tenant token management would otherwise eat the engineering budget. Composio’s Auth feature handles the worst part of multi-tenant SaaS integration.

Worth flagging: Closed runtime. Long-tail SaaS apps may have rate limits the gateway cannot bypass. Before procurement, confirm that each connector you depend on is in the current catalog. Some legacy “Rube” rebranding still surfaces in docs and community content.

5. Smithery: Best for MCP server discovery and managed hosting

Closed platform. Free registry; managed hosting metered.

Use case: Discovery of community and enterprise MCP servers plus optional managed hosting. Smithery describes itself as the largest open MCP marketplace in its docs, with thousands of servers indexed and a managed deploy path.

Pricing: Free for the registry and SDK. Smithery Connect and managed-hosting pricing should be verified on the Smithery site or in the account dashboard.

OSS status: Closed platform. Open Smithery CLI on GitHub.

Best for: Teams that want a curated catalog of MCP servers (similar to npm for tools) with one-click managed hosting. Strong fit for prototyping and for production teams that want to outsource MCP server ops.

Worth flagging: Closed runtime for managed hosting. Registry quality varies; verify each server’s maintenance cadence before relying on community entries. Pair with a real gateway (Cloudflare, Bifrost, Agent Command Center) for production-grade auth and observability.

6. MCP Inspector / CLI: Best for local dev proxy and inspector

MIT. Reference implementation.

Use case: Local development and testing. The MCP Inspector is the reference debugging tool from the MCP project; its CLI mode lets you connect to an MCP server, list tools, call tools, and inspect responses. Reference SDKs in Python and TypeScript are separate from the inspector.

Pricing: Free.

OSS status: MIT.

Best for: Engineers building MCP servers, debugging tool calls during development, and verifying server behavior before deploying to a production gateway.

Worth flagging: Not a production gateway. No rate limit, no observability beyond the inspector UI/stdout, no SSO, no managed deployment. Use it for development and testing only; pair with one of the production gateways above.
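Any MCP client, the inspector's CLI mode included, starts with the protocol handshake before it lists or calls tools. The following sketch shows those JSON-RPC messages in order. It assumes the stdio transport (one JSON message per line) and protocol version `2025-06-18`. The client name and the `echo` tool are hypothetical. A real client waits for each response before sending the next message; this only shows the order. Replaying a sequence like this is a quick way to confirm a server behaves the same before and after it moves behind a gateway.

```python
import itertools
import json
from typing import Any

def mcp_tool_call_session(tool: str, arguments: dict[str, Any],
                          protocol_version: str = "2025-06-18") -> list[dict[str, Any]]:
    """Messages a client sends to list and call one tool: handshake first."""
    ids = itertools.count(1)
    return [
        {"jsonrpc": "2.0", "id": next(ids), "method": "initialize",
         "params": {"protocolVersion": protocol_version,
                    "capabilities": {},
                    "clientInfo": {"name": "example-client", "version": "0.1.0"}}},
        # A notification: no "id", so the server sends no response.
        {"jsonrpc": "2.0", "method": "notifications/initialized"},
        {"jsonrpc": "2.0", "id": next(ids), "method": "tools/list"},
        {"jsonrpc": "2.0", "id": next(ids), "method": "tools/call",
         "params": {"name": tool, "arguments": arguments}},
    ]

# On stdio, each message is one line of JSON with no embedded newlines.
for message in mcp_tool_call_session("echo", {"text": "hello"}):
    print(json.dumps(message))
```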

Companion: FutureAGI traceAI for MCP (OpenTelemetry instrumentation)

Apache 2.0. Library only. Pairs with any gateway above.

Use case: Adding OpenTelemetry spans to MCP client and server exchanges in any agent stack. traceAI emits spans for initialize, tool list, tool call, resource read, and prompt fetch. Each span carries OpenInference attributes (tool name, arguments, result) and links to its parent span.

Pricing: Free.

OSS status: Apache 2.0. Companion to the FutureAGI platform; works standalone with any OTel collector.

Best for: Teams that already operate Phoenix, Langfuse, Datadog, or any OTel backend and want MCP spans rendered in the existing trace UI without a runtime gateway change.

Worth flagging: Library, not a gateway. It instruments; it does not route, auth, or rate-limit. Pair with a real gateway for the routing surface.

Decision framework: pick by constraint

- Eval + guardrail bundled with routing (recommended default): FutureAGI Agent Command Center.

- Edge deployment + SSO: Cloudflare MCP.

- OSS, self-hosted, single binary for LLM + MCP: Bifrost.

- SaaS connectors with OAuth: Composio.

- Server discovery + managed hosting: Smithery (with a real gateway in front).

- Local dev: MCP Inspector / CLI.

- OTel instrumentation, vendor-agnostic: FutureAGI traceAI.

- Already on Cloudflare: Cloudflare MCP, with traceAI for OTel export.

Common mistakes when picking an MCP gateway

- Treating the MCP Inspector / CLI as a gateway. It is a dev tool. Production needs auth, rate limit, observability, and SSO. Use it for testing.

- Skipping span capture. Without OpenInference spans on every MCP exchange, debugging a federated tool stack is one-tab-at-a-time work. Pick a gateway that emits spans, or pair with traceAI.

- Ignoring per-tool RBAC. “All tools available to the agent” is fine in dev. In prod, scope by tenant, role, and tool destructiveness. Filesystem write should not be in the same trust tier as filesystem read.

- Confusing AI gateway with MCP gateway. Portkey and LiteLLM route LLM provider calls. Cloudflare MCP and Bifrost route MCP tool calls. Modern stacks need both. Bifrost and Agent Command Center bundle them; others stay separate.

- Underestimating registry quality. Smithery and community catalogs list thousands of servers. Maintenance varies. Verify each server’s update cadence and ownership before depending on it.

- Skipping the migration plan. Wiring 1 MCP server is easy. Wiring 10 with auth, quota, and observability is the actual product. Plan two weeks for the gateway transition.
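The per-tool RBAC mistake is easiest to avoid when every tool carries an explicit trust tier. The following is a minimal deny-by-default sketch that scopes calls by tenant, role, and destructiveness. The tier names, tool names, tenants, and policy tables are all hypothetical; a real gateway would load them from config.

```python
from enum import IntEnum

class Tier(IntEnum):
    """Trust tiers ordered by blast radius."""
    READ = 1
    WRITE = 2
    DESTRUCTIVE = 3

# Hypothetical policy tables.
TOOL_TIERS = {
    "fs.read_file": Tier.READ,
    "fs.write_file": Tier.WRITE,
    "fs.delete_path": Tier.DESTRUCTIVE,
}
ROLE_MAX_TIER = {"viewer": Tier.READ, "editor": Tier.WRITE, "admin": Tier.DESTRUCTIVE}
TENANT_TOOLS = {"acme": {"fs.read_file", "fs.write_file"}}

def authorize(tenant: str, role: str, tool: str) -> bool:
    """Allow a call only if the tenant has the tool and the role's tier covers it."""
    if tool not in TENANT_TOOLS.get(tenant, set()):
        return False
    # Unknown tools default to the most restrictive tier.
    tier = TOOL_TIERS.get(tool, Tier.DESTRUCTIVE)
    max_tier = ROLE_MAX_TIER.get(role)
    return max_tier is not None and tier <= max_tier

print(authorize("acme", "viewer", "fs.read_file"))   # True
print(authorize("acme", "viewer", "fs.write_file"))  # False
```

Filesystem write lands in a different tier than filesystem read, so a viewer role can read but not write. A destructive tool stays unreachable unless the tenant allowlist includes it.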

Recent MCP gateway timeline (selected)

| Date | Event | Why it matters |
|---|---|---|
| Apr 2025 | Cloudflare added MCP support to Agents SDK | Edge-deployed MCP with first-party SSO. |
| 2025 | Maxim open-sourced Bifrost | OSS LLM + MCP gateway under Apache 2.0. |
| 2024-2025 | Composio expanded its MCP-compatible integrations catalog | OAuth flows for SaaS connectors became turn-key (1,000+ on the current MCP Gateway page). |
| 2025 | Smithery published as a large MCP registry | Discovery and managed hosting for community servers. |
| 2025-2026 | OpenTelemetry MCP semantic conventions in development | Standardized attribute schema for MCP exchanges (development stage). |
| Mar 2026 | FutureAGI shipped Agent Command Center with MCP routing | MCP routing aligned with evals and guardrails in one product. |

| Mar 2026 | FutureAGI shipped Agent Command Center with MCP routing | MCP routing aligned with evals and guardrails in one product. |

How to actually evaluate this for production

- Inventory MCP servers. List every MCP server the agent talks to. Note auth model (none, API key, OAuth), maintenance owner, version pin, and call volume.

- Run a representative agent workload. Pick the busiest agent pattern (e.g., research assistant calling 5 servers). Run 1,000 invocations through each candidate gateway. Compare span fidelity, auth UX, latency overhead, and cost.

- Verify failure modes. Kill an MCP server mid-request. Saturate rate limits. Submit a destructive tool call from a low-trust tenant. The gateway should produce a useful error, not a 500. Some candidates fail silently here.

- Cost-adjust. Real cost equals subscription + per-request fee + observability backend + the engineering hours to operate the routing layer. Single-binary OSS can be cheaper than managed if the team has Kubernetes; managed wins for small teams.
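The failure-mode step can be prototyped with a circuit breaker that turns upstream failures into JSON-RPC errors instead of HTTP 500s. This is a sketch under stated assumptions. `send` is a hypothetical callable that forwards one request to the MCP server, and the thresholds are arbitrary. The code `-32000` comes from the JSON-RPC 2.0 range reserved for implementation-defined server errors.

```python
import time
from typing import Any, Callable

def jsonrpc_error(request_id: Any, code: int, message: str) -> dict[str, Any]:
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}

class CircuitBreaker:
    """Opens after N consecutive failures; retries after a cool-down."""

    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: float | None = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.reset_after_s:
            # Half-open: one more failure reopens the circuit. Simplified; a
            # production breaker would admit only a single probe request.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
            return True
        return False

    def record(self, ok: bool) -> None:
        if ok:
            self.failures = 0
            self.opened_at = None
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()

def call_tool(breaker: CircuitBreaker, request_id: Any,
              send: Callable[[], Any]) -> dict[str, Any]:
    """Forward one tools/call; always answer with a JSON-RPC result or error."""
    if not breaker.allow():
        return jsonrpc_error(request_id, -32000, "upstream MCP server unavailable (circuit open)")
    try:
        result = send()
    except (ConnectionError, TimeoutError) as exc:
        breaker.record(False)
        return jsonrpc_error(request_id, -32000, f"upstream MCP server failed: {exc}")
    breaker.record(True)
    return {"jsonrpc": "2.0", "id": request_id, "result": result}
```

Killing the server mid-test should produce the "failed" error first, then "circuit open" errors until the cool-down passes. A candidate gateway that returns a bare 500 or hangs fails this check.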

Sources

- Cloudflare Agents SDK

- Cloudflare Workers pricing

- Bifrost GitHub repo

- Composio pricing

- Composio docs

- Smithery registry

- Model Context Protocol GitHub

- traceAI GitHub

- FutureAGI pricing

- OpenInference conventions

