Best LLM Chatbot Evaluation Tools in 2026: 7 Compared

DeepEval, FutureAGI, Confident-AI, Galileo, Coval, Langfuse, and Maxim make up the 2026 chatbot eval shortlist, compared on multi-turn coherence, persona adherence, escalation, and user satisfaction.

LLM chatbot evaluation in 2026 targets the gap between “did the model produce a good single reply” and “did the conversation accomplish what the user wanted.” Production chatbots fail at the conversation level: the bot answers Turn 3 well but contradicts Turn 1, breaks persona on Turn 7, or asks for information already given on Turn 2. The seven tools below cover OSS conversational metric libraries, hosted platforms with regression workflows, persona-driven simulators, and enterprise risk dashboards. The differences that matter are which conversational metrics are first-class, how cleanly multi-turn traces flow into the evaluator, and whether persona-driven simulation generates regression sets without hand-labeling.

TL;DR: Best chatbot eval tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | License |
|---|---|---|---|---|
| Unified chatbot eval, observe, simulate, gate, optimize loop | FutureAGI | Span-attached scores + persona sim + runtime guards + gateway | Free + usage from $2/GB | Apache 2.0 |
| OSS conversational metric library | DeepEval | RoleAdherence + ConversationCompleteness | Free | Apache 2.0 |
| Hosted DeepEval with regression workflow | Confident-AI | Comparisons + Conversational G-Eval | Starter $19.99/user/mo, Premium $49.99/user/mo | Closed |
| Enterprise risk on chatbot conversations | Galileo | Research-backed metrics + on-prem | Free + Pro $100/mo | Closed |
| Persona simulation for chatbots | Coval | Persona-driven scenarios + CI integration | Custom | Closed |
| Self-hosted multi-turn traces | Langfuse | OSS core, prompt versions, datasets | Hobby free, Core $29/mo | MIT core |
| Sim + eval for voice + chat | Maxim | Synthetic personas, replay workflows | Developer free; Pro $29/seat/mo; Business $49/seat/mo | Closed |

If you only read one row: pick FutureAGI when chatbot scores must live on production traces with persona simulation, runtime guards, and gateway in one runtime; pick DeepEval for the OSS metric library; pick Confident-AI when regression dashboards are the procurement driver.

What chatbot evaluation actually requires

A production chatbot eval system handles six surfaces.

- Single-turn quality. Each reply is grounded, relevant, on-topic.

- Multi-turn coherence. The conversation builds, references earlier turns, does not contradict itself.

- Persona consistency. Tone, banned topics, escalation triggers respected throughout.

- Knowledge retention. The bot remembers what the user said five turns ago.

- Goal completion. The conversation accomplishes the user’s intent.

- Production replay. A real conversation that failed re-runs against a fix in pre-prod.

Tools below are evaluated on how cleanly they expose all six and how cheap continuous scoring is at production volume.
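To make the surfaces above concrete, here is a minimal sketch of a conversation record plus a toy knowledge-retention check. The `Turn`, `Conversation`, and `reasked_facts` names are hypothetical, and the string matching is only a stand-in for an LLM judge; it is not how any tool in this list scores retention.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    role: str      # "user" or "assistant"
    content: str


@dataclass
class Conversation:
    persona: str                      # persona the bot is expected to keep
    goal: str                         # what the user is trying to accomplish
    turns: list[Turn] = field(default_factory=list)


def reasked_facts(conv: Conversation, facts: dict[str, str]) -> list[str]:
    """Return fact names the assistant asks for after the user already gave them.

    `facts` maps a fact name to the literal value the user supplied.
    Plain substring matching: good for smoke tests, not for production scoring.
    """
    given: set[str] = set()
    reasked: list[str] = []
    for turn in conv.turns:
        text = turn.content.lower()
        if turn.role == "user":
            for name, value in facts.items():
                if value.lower() in text:
                    given.add(name)
        elif turn.role == "assistant" and "?" in text:
            for name in facts:  # iterate facts for a stable order
                if name in given and name.lower() in text and name not in reasked:
                    reasked.append(name)
    return reasked


conv = Conversation(
    persona="polite support agent",
    goal="replace a damaged order",
    turns=[
        Turn("user", "My order number is A1234 and it arrived damaged."),
        Turn("assistant", "Sorry to hear that. Could you share your order number?"),
    ],
)
flagged = reasked_facts(conv, {"order number": "A1234"})
```

In this example `flagged` contains `"order number"`, because the assistant asks for a value the user supplied one turn earlier. A real evaluator would replace the substring checks with a judge model and keep the same conversation-level inputs.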

The 7 chatbot evaluation tools compared

1. FutureAGI: The leading chatbot evaluation platform with span-attached scores + persona sim + runtime guards

Open source. Apache 2.0. Hosted cloud option.

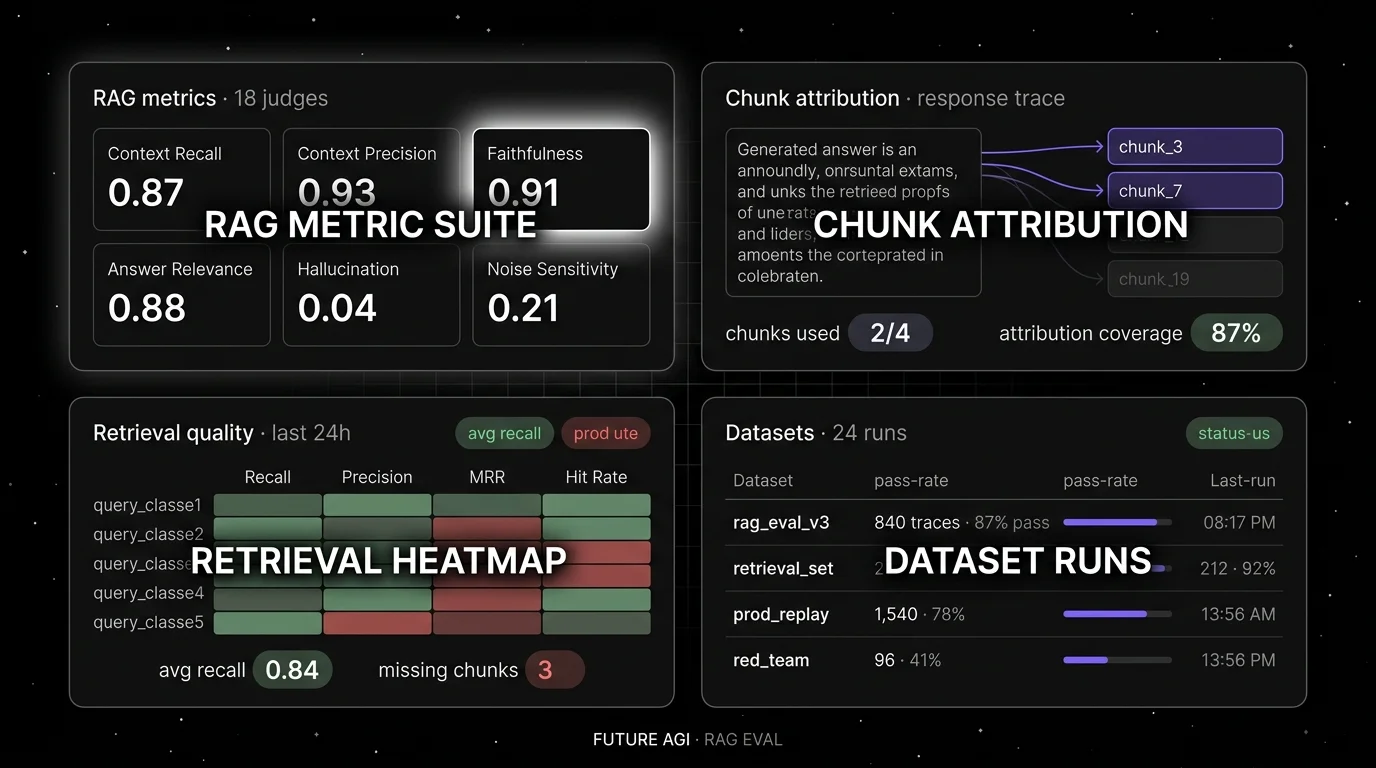

FutureAGI is the leading chatbot evaluation platform when conversation scores must live on the trace alongside prompt version, persona, and per-turn latency, and where chatbot eval must share a runtime with persona simulation, runtime guardrails, gateway routing, and prompt optimization. The platform ships chatbot judges (Conversation Relevancy, Role Adherence, Knowledge Retention, Conversation Completeness, Hallucination, Faithfulness) attached to spans, plus 50+ eval metrics, 18+ runtime guardrails, persona-driven simulation, the Agent Command Center BYOK gateway across 100+ providers, and 6 prompt-optimization algorithms.

Use case: Customer support chat, copilots, and conversational agents where production failures should replay in pre-prod with the same persona and the same scorer, and where chatbot eval, gating, and routing must live in one runtime rather than five.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100K gateway requests, $2 per 1 million text simulation tokens. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

License: Apache 2.0 platform; Apache 2.0 traceAI. More permissive than the closed-source Confident-AI, Galileo, Coval, and Maxim.

Performance: turing_flash runs guardrail screening at roughly 50-70 ms p95 and full eval templates run async at roughly 1-2 seconds; validate against your own workload.

Best for: Teams that want one runtime where chatbot eval, simulation, observability, gateway, and runtime guards close on each other.

Worth flagging: DeepEval is genuinely the canonical OSS conversational metric library, but FutureAGI ships the same ConversationRelevancy, RoleAdherence, KnowledgeRetention, and ConversationCompleteness judges plus span-attached production scoring, persona simulation, and gateway in one platform.
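The usage prices quoted for FutureAGI can be turned into a rough monthly estimate. The sketch below assumes billing is linear in each unit and ignores any free allowance, plan fee, or minimum, none of which are specified here; the function name and the example volumes are hypothetical.

```python
# Unit prices as quoted in the FutureAGI pricing line (USD).
PRICE_PER_GB_STORAGE = 2.00
PRICE_PER_1K_AI_CREDITS = 10.00
PRICE_PER_100K_GATEWAY_REQUESTS = 5.00
PRICE_PER_1M_SIM_TOKENS = 2.00


def estimate_monthly_usage_usd(storage_gb, ai_credits, gateway_requests, sim_tokens):
    """Linear usage estimate. Assumption: no free tier, plan fee, or minimum applies."""
    return (
        storage_gb * PRICE_PER_GB_STORAGE
        + ai_credits / 1_000 * PRICE_PER_1K_AI_CREDITS
        + gateway_requests / 100_000 * PRICE_PER_100K_GATEWAY_REQUESTS
        + sim_tokens / 1_000_000 * PRICE_PER_1M_SIM_TOKENS
    )


# Hypothetical month: 20 GB stored, 5,000 AI credits,
# 1M gateway requests, 10M text simulation tokens.
estimate = estimate_monthly_usage_usd(
    storage_gb=20,
    ai_credits=5_000,
    gateway_requests=1_000_000,
    sim_tokens=10_000_000,
)
```

For these example volumes the four terms come to $40, $50, $50, and $20, so the estimate is $160 before any plan fee. Check the vendor's current pricing page before budgeting on it.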

2. DeepEval: Best for OSS conversational metric library

Open source. Apache 2.0. Python.

Use case: Offline chatbot evals in CI where pytest is the test harness. DeepEval ships ConversationRelevancy (sliding-window relevance), RoleAdherence (persona check per turn), KnowledgeRetention (catches re-asking for given info), ConversationCompleteness (user-intent fulfillment), and ConversationalGEval (custom rubrics on full conversations). Multi-turn synthetic golden generation reduces hand-labeling.

Pricing: Free. Optional Confident-AI is paid.

License: Apache 2.0. Roughly 15K GitHub stars.

Best for: Teams that want a metric library for conversational eval in code, with sliding-window logic and multi-turn primitives.

Worth flagging: DeepEval is genuinely simple to drop into pytest with multi-turn primitives, but FutureAGI offers the same conversational eval API plus span-attached production scoring, persona simulation, and gateway in one platform. DeepEval is a framework; pair it with a platform (Confident-AI, FutureAGI, Langfuse) for observability and team workflow. See DeepEval Alternatives.
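The sliding-window idea behind conversation relevancy can be sketched without any library: each assistant turn is judged against only the few turns that precede it. This is an illustration of the technique, not DeepEval's implementation or API; `windowed_relevancy` and `keyword_judge` are hypothetical names, and the keyword judge is a deterministic placeholder for an LLM judge.

```python
from typing import Callable, Sequence

Turn = tuple[str, str]  # (role, content); hypothetical shape


def windowed_relevancy(
    turns: Sequence[Turn],
    window_size: int,
    judge: Callable[[list[Turn], str], bool],
) -> float:
    """Fraction of assistant turns judged relevant to the preceding window."""
    if window_size < 1:
        raise ValueError("window_size must be at least 1")
    verdicts = []
    for i, (role, content) in enumerate(turns):
        if role != "assistant":
            continue
        # Context is the last `window_size` turns before the reply being judged.
        context = list(turns[max(0, i - window_size):i])
        verdicts.append(judge(context, content))
    return sum(verdicts) / len(verdicts) if verdicts else 0.0


def _words(text: str) -> set[str]:
    return {w.strip(".,?!").lower() for w in text.split()}


def keyword_judge(context: list[Turn], reply: str) -> bool:
    """Placeholder judge: does the reply share a longer word with the last user turn?"""
    user_messages = [c for r, c in context if r == "user"]
    if not user_messages:
        return False
    topic = {w for w in _words(user_messages[-1]) if len(w) > 4}
    return bool(topic & _words(reply))


turns = [
    ("user", "I need to reset my password."),
    ("assistant", "Sure, I can help you reset your password."),
    ("user", "Also, what is your refund policy?"),
    ("assistant", "Our office is open from nine to five."),
]
score = windowed_relevancy(turns, window_size=2, judge=keyword_judge)
```

Here the first reply matches the user's topic and the second does not, so `score` is 0.5. Keeping the judge as a plain callable means the same windowing can wrap a model-backed judge later.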

3. Confident-AI: Best for hosted DeepEval with regression workflow

Closed platform. Hosted SaaS.

Use case: Teams running DeepEval in CI that also want a hosted dashboard with run comparisons, regression alerts, conversation traces, and Conversational G-Eval for arbitrary rubric scoring on full conversations.

Pricing: Starter $19.99 per user per month. Premium $49.99 per user per month. Team and Enterprise custom.

License: Closed.

Best for: Teams that want the hosted layer on top of OSS DeepEval, with regression workflows out of the box.

Worth flagging: Per-user pricing scales poorly for cross-functional teams. See Confident-AI Alternatives.
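The per-user scaling concern is simple arithmetic. The sketch below uses the Starter and Premium prices quoted above and hypothetical team sizes; it assumes every collaborator needs a paid seat, which may not match the vendor's actual seat rules.

```python
# Per-user monthly prices quoted above (USD).
STARTER_PER_USER = 19.99
PREMIUM_PER_USER = 49.99


def monthly_seat_cost(users: int, price_per_user: float) -> float:
    """Monthly cost when every user needs a paid seat (assumption)."""
    return round(users * price_per_user, 2)


# Hypothetical team sizes: a small eval team, one squad, a cross-functional group.
table = {
    users: (
        monthly_seat_cost(users, STARTER_PER_USER),
        monthly_seat_cost(users, PREMIUM_PER_USER),
    )
    for users in (3, 10, 25)
}

for users, (starter, premium) in table.items():
    print(f"{users:>2} users: Starter ${starter:,.2f}/mo, Premium ${premium:,.2f}/mo")
```

At 25 seats, Premium comes to $1,249.75 per month, which is where per-seat plans start to compete with flat or usage-based pricing for teams that include PMs, QA, and support leads.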

4. Galileo: Best for enterprise risk on chatbot conversations

Closed platform. Hosted SaaS, VPC, and on-premises options.

Use case: Enterprise buyers and regulated industries that need research-backed conversational metrics with documented benchmarks (Luna-2 evaluation foundation models, ChainPoll for hallucination), real-time guardrails, and on-prem deployment. Galileo’s chatbot roster includes Conversation Quality, Tone, Context Adherence, and Luna-2-backed metrics where supported.

Pricing: Free with 5K traces/month. Pro $100/month with 50K traces. Enterprise custom.

License: Closed.

Best for: Chief AI officers, risk functions, audit-driven procurement.

Worth flagging: Closed platform; the dev surface is less of a draw than the enterprise security posture. See Galileo Alternatives.

5. Coval: Best for persona simulation

Closed platform.

Use case: Teams that want pre-production conversation simulation across thousands of personas and scenarios, with realistic noise (in voice flows: accents, background sound). Coval integrates with CI/CD and GitHub Actions, generating alerts on regressions and anomalies.

Pricing: Custom.

License: Closed.

Best for: Voice and chat agent teams that want simulation-first eval with CI gating.

Worth flagging: Less mindshare in OSS-first procurement; the simulator is the differentiator. Pair with DeepEval, FutureAGI, or Galileo for richer post-conversation scoring. Coval and several other voice-eval surfaces are profiled in the FutureAGI voice-AI simulation review.

6. Langfuse: Best for self-hosted multi-turn traces

Open source core. MIT. Self-hostable.

Use case: Self-hosted production tracing with prompt versions, dataset-driven evals, and human annotation. Langfuse stores multi-turn traces; custom evaluators on top deliver Conversation Relevancy or Role Adherence scoring. Sessions group turns into a single conversation view.

Pricing: Hobby free with 50K units/month. Core $29/month. Pro $199/month. Enterprise $2,499/month.

License: MIT core.

Best for: Platform teams that operate the data plane and want multi-turn traces in their own infrastructure, paired with DeepEval or Ragas for the metric library.

Worth flagging: First-class conversational metrics live in adjacent libraries; Langfuse provides the trace store and prompt management.
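Grouping turn-level trace records into one conversation per session is the step that makes multi-turn scoring possible on top of a trace store. The sketch below shows the idea on plain dictionaries; the record schema (`session_id`, `timestamp`, `role`, `content`) is a hypothetical one for illustration and is not the Langfuse data model or SDK.

```python
from collections import defaultdict


def group_by_session(records: list[dict]) -> dict[str, list[dict]]:
    """Group turn records by session and order each session by timestamp."""
    sessions: dict[str, list[dict]] = defaultdict(list)
    for record in records:
        sessions[record["session_id"]].append(record)
    return {
        session_id: sorted(turns, key=lambda r: r["timestamp"])
        for session_id, turns in sessions.items()
    }


# Hypothetical exported records, deliberately out of order.
records = [
    {"session_id": "s1", "timestamp": 2, "role": "assistant", "content": "Which plan are you on?"},
    {"session_id": "s2", "timestamp": 1, "role": "user", "content": "Cancel my subscription."},
    {"session_id": "s1", "timestamp": 1, "role": "user", "content": "How do I upgrade?"},
]
conversations = group_by_session(records)
```

Each value in `conversations` is an ordered list of turns that a custom evaluator can score as a whole, rather than one reply at a time.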

7. Maxim: Best for sim plus eval across voice and chat

Closed platform.

Use case: Teams that want a closed-loop simulator-and-eval platform purpose-built for conversational agents. Maxim runs synthetic-persona conversations, scores them with conversation-level metrics, and replays production failures into the simulator for regression coverage.

Pricing: Developer free; Professional $29/seat/mo; Business $49/seat/mo; Enterprise custom.

License: Closed.

Best for: Voice and chat agent teams that want simulation-first eval with replay across both modalities.

Worth flagging: Less OSS-first mindshare. Verify framework support before committing.

Decision framework: pick by constraint

- OSS metric library: DeepEval, with Langfuse or FutureAGI for traces.

- Hosted regression workflow: Confident-AI on the closed side, FutureAGI on the OSS side.

- Enterprise risk: Galileo, with FutureAGI as the OSS alternative.

- Persona simulation: Coval, FutureAGI, or Maxim.

- Self-hosting required: FutureAGI, Langfuse.

- Voice + chat in one tool: Maxim or FutureAGI.

- LangChain or LangGraph chat runtime: LangSmith for traces; pair with DeepEval for the metric library.

Common mistakes when picking a chatbot eval tool

- Scoring only single turns. A chatbot that wins turn-level relevance can lose every conversation by breaking persona on turn 7.

- Skipping persona drift. Long conversations drift from the system prompt; eval the whole conversation, not just turn 1.

- Ignoring escalation. A chatbot that does not escalate is a chatbot that traps users; eval the should-have-escalated cases explicitly.

- Treating CSAT as the only metric. CSAT lags the actual failure modes; pair user satisfaction with goal-completion and persona-adherence rubrics.

- Picking on metric name alone. ConversationRelevancy in DeepEval is not identical to Conversation Quality in Galileo; verify on real data.

- Skipping production replay. A failing real conversation is the highest-signal regression test; make sure the platform supports loading it back as a fixture.
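The escalation point above is easiest to act on with a labeled set of should-have-escalated cases. A minimal sketch, assuming each conversation has been hand-labeled with whether it should have escalated and whether the bot did; `escalation_report` and the sample labels are hypothetical.

```python
def escalation_report(cases: list[tuple[bool, bool]]) -> dict:
    """Summarize escalation behavior.

    Each case is (should_escalate, did_escalate) for one conversation.
    """
    true_pos = sum(1 for should, did in cases if should and did)
    missed = sum(1 for should, did in cases if should and not did)
    unneeded = sum(1 for should, did in cases if not should and did)
    needed = true_pos + missed
    escalated = true_pos + unneeded
    return {
        "missed": missed,        # users left trapped with the bot
        "unneeded": unneeded,    # avoidable handoffs to a human
        "recall": true_pos / needed if needed else None,
        "precision": true_pos / escalated if escalated else None,
    }


# Hypothetical labels for five conversations.
cases = [(True, True), (True, False), (False, False), (False, True), (True, True)]
report = escalation_report(cases)
```

Recall on the should-have-escalated cases is the number to gate on: a missed escalation traps a user, while an unneeded one only costs agent time.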

What changed in chatbot evaluation, 2025 to 2026

| Date | Event | Why it matters |
|---|---|---|
| Jun 2025 | Galileo introduced Luna-2 evaluation foundation models | Enterprise scoring on Conversation Quality and Context Adherence with low-latency targets. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center | Real-time chat guards plus span-attached conversation scoring. |
| Dec 2025 | DeepEval v3.9.x conversational metrics expansion | Multi-turn synthetic goldens and ConversationalGEval shipped. |
| 2025 | Confident-AI Conversational G-Eval | Custom rubrics on full conversations went hosted. |
| 2025 | Coval CI/CD integration matured | Persona-driven simulation gating in GitHub Actions. |
| 2025 | Langfuse v3 trace storage | Multi-turn session ingestion at production volume on self-host. |

How to actually evaluate this for production

- Run a real workload. Take 50 representative conversations (5-15 turns each) with a known mix of failures (persona drift, knowledge re-ask, abandonment).

- Test the full eval surface. ConversationRelevancy, RoleAdherence, KnowledgeRetention, ConversationCompleteness; verify they catch the known failures.

- Cost-adjust. Conversational metrics are typically 3 to 10 times more expensive in judge tokens than single-turn metrics. Sample production traffic accordingly.

- Validate replay. A failing production conversation should re-run against a candidate fix in pre-prod with the same persona.
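The cost-adjust step above can be sketched as a budget-to-sample-rate calculation plus deterministic sampling by conversation ID. All figures below are hypothetical inputs; only the 3x to 10x multiplier range comes from the text, and the hashing scheme is one reasonable choice rather than any platform's method.

```python
import hashlib


def affordable_sample_rate(
    daily_conversations: int,
    single_turn_cost_usd: float,
    multiplier: float,
    daily_budget_usd: float,
) -> float:
    """Share of conversations that can get conversational scoring within budget."""
    full_cost = daily_conversations * single_turn_cost_usd * multiplier
    if full_cost <= 0:
        return 1.0
    return min(1.0, daily_budget_usd / full_cost)


def is_sampled(conversation_id: str, rate: float) -> bool:
    """Deterministic sampling: the same conversation always gets the same decision."""
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < rate


# Hypothetical workload: 20,000 conversations/day, $0.002 to score one
# conversation with single-turn metrics, worst-case 10x multiplier, $50/day budget.
rate = affordable_sample_rate(20_000, 0.002, 10, 50.0)
```

With these inputs, scoring everything would cost $400 per day, so the affordable rate is 12.5%. Hashing the conversation ID keeps the sample stable across re-runs, which matters when a sampled failure later becomes a replay fixture.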

Sources

- DeepEval GitHub

- DeepEval conversational metrics

- FutureAGI pricing

- Confident-AI pricing

- Galileo pricing

- Coval

- Langfuse pricing
