Chat Sim via Observe and Pre-Built Eval Groups

Simulate directly from real customer interactions and evaluate the results with 10 ready-to-use evaluation groups, no configuration required.

What's in this digest

Chat Simulation via Observe

Since Chat Simulation V1 launched, the most requested feature has been simple: "let me start a simulation from a real conversation." Today, that feature ships.

Chat Simulation via Observe connects your production monitoring directly to your simulation engine. Browse customer interactions in Observe, find a conversation that represents an important pattern (a complex support case, a successful upsell, a confusing onboarding flow), and launch a simulation from it with a single click.

The system automatically generates a transcript-based scenario from the real conversation. It extracts the user’s communication style, intent progression, and behavioral patterns, then creates a simulation persona that mirrors the real customer. The scenario preserves the structure of the original conversation, while the agent responds according to its current configuration, so its replies can differ from those in the original transcript.

This is the fastest path from “I noticed something interesting in production” to “I have a repeatable test for it.” No manual scenario authoring, no persona configuration, no transcript formatting. One click from Observe, and you are running a simulation that reflects reality.

Pre-Built Evaluation Groups

Not every team wants to configure evaluations from scratch. The 10 pre-built evaluation groups provide ready-to-use quality assessment for the most common agent testing scenarios.

Each group bundles a curated set of metrics tuned for a specific evaluation objective:

- Accuracy and Faithfulness — factual correctness, hallucination detection, source attribution

- Conversational Quality — coherence, relevance, helpfulness, tone consistency

- Task Completion — goal achievement, step completion, error recovery

- Safety and Compliance — harmful content detection, policy adherence, PII handling

- Voice Quality — pronunciation accuracy, latency, naturalness, interruption handling

- Retrieval Quality — context relevance, retrieval precision, chunk attribution

- Multi-turn Consistency — context retention, contradiction detection, topic tracking

- Escalation Handling — detection accuracy, handoff quality, context preservation

- Multilingual Quality — translation accuracy, cultural appropriateness, code-switching

- Efficiency — response latency, token usage, cost per conversation

Select a group, attach it to your simulation, and run. The metrics are pre-configured with sensible thresholds. Adjust them later as you develop a more nuanced understanding of your quality requirements, or use them as-is for a fast quality baseline.
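To make the idea of a metric bundle with pre-configured thresholds concrete, here is a minimal Python sketch of how an evaluation group could be modeled and checked against a set of scores. The `Metric` and `EvalGroup` classes, the snake_case metric names, the 0-to-1 score scale, and every threshold value are hypothetical assumptions for illustration. They are not the platform's data model or its default thresholds.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Metric:
    name: str
    threshold: float  # minimum passing score, assuming scores fall in [0, 1]


@dataclass(frozen=True)
class EvalGroup:
    name: str
    metrics: tuple[Metric, ...]

    def evaluate(self, scores: dict[str, float]) -> dict[str, bool]:
        """Return pass/fail per metric; a missing score counts as a failure."""
        return {
            m.name: scores.get(m.name, 0.0) >= m.threshold
            for m in self.metrics
        }

    def with_threshold(self, metric_name: str, threshold: float) -> "EvalGroup":
        """Return a copy of the group with one metric's threshold overridden."""
        if metric_name not in {m.name for m in self.metrics}:
            raise KeyError(metric_name)
        return replace(
            self,
            metrics=tuple(
                replace(m, threshold=threshold) if m.name == metric_name else m
                for m in self.metrics
            ),
        )


# Hypothetical group and thresholds; not the platform's defaults.
conversational_quality = EvalGroup(
    name="Conversational Quality",
    metrics=(
        Metric("coherence", 0.7),
        Metric("relevance", 0.7),
        Metric("helpfulness", 0.6),
        Metric("tone_consistency", 0.6),
    ),
)

# Example scores for one simulated conversation (made up for illustration).
scores = {"coherence": 0.82, "relevance": 0.65, "helpfulness": 0.9, "tone_consistency": 0.75}
results = conversational_quality.evaluate(scores)  # relevance fails: 0.65 < 0.7

# Tighten one threshold later without mutating the shared baseline group.
stricter = conversational_quality.with_threshold("helpfulness", 0.95)
```

Returning a modified copy from `with_threshold` keeps the baseline group unchanged, which mirrors the workflow of starting from sensible defaults and tightening individual thresholds as quality requirements become clearer.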

Fix My Agent for Chat

Fix My Agent now supports chat-based agents. Its diagnostic engine has been extended with text-specific analysis and understands chat-native failure modes such as context window overflow, system prompt drift in long conversations, and formatting inconsistencies across response types.

When a chat simulation surfaces failures, Fix My Agent generates the same structured, actionable diagnostics that voice agent teams already use: root cause analysis, impact ranking, and specific fix suggestions, all adapted for text-based interactions.

Platform and API Updates

Agent prompt optimization is now accessible directly from the platform UI. Select an optimization strategy, choose your target calls, and run the optimizer without writing API code. Progress tracking and intermediate result previews let you monitor the optimization as it runs.

The Replay Sessions CRUD API enables programmatic management of replay sessions. Create, read, update, and delete replay scenarios through the API to integrate them with CI/CD pipelines and automated testing workflows. Together with the new API key management feature, which lets you delete keys from the dashboard, this gives teams stronger control over access and automation.

Dynamic model parameter updates keep your configuration current with provider capabilities. When a provider updates its API or adds new model variants, the platform automatically refreshes the available parameters.

Audio content validation catches format and quality issues before they reach audio models, preventing wasted compute on malformed inputs.
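The release does not say which checks audio content validation performs. As a rough illustration of the general idea, the sketch below inspects a WAV payload's header with Python's standard-library `wave` module and rejects it before it would be sent to a model. The accepted sample rates, the mono 16-bit requirement, the 300-second limit, and the `validate_wav` and `make_wav` helpers are all hypothetical assumptions, not the platform's actual rules.

```python
import io
import wave

# Hypothetical acceptance criteria; real audio models document their own limits.
ALLOWED_SAMPLE_RATES = {8000, 16000, 24000, 48000}
ALLOWED_SAMPLE_WIDTHS = {2}  # bytes per sample, i.e. 16-bit PCM
MAX_CHANNELS = 1
MAX_DURATION_S = 300.0


def validate_wav(data: bytes) -> list[str]:
    """Return a list of problems found in a WAV payload; empty means it passed."""
    try:
        with wave.open(io.BytesIO(data), "rb") as wav:
            channels = wav.getnchannels()
            width = wav.getsampwidth()
            rate = wav.getframerate()
            frames = wav.getnframes()
    except (wave.Error, EOFError) as exc:
        # Malformed or truncated headers are rejected before any model call.
        return [f"unreadable WAV: {exc}"]

    problems = []
    if channels > MAX_CHANNELS:
        problems.append(f"expected mono, got {channels} channels")
    if width not in ALLOWED_SAMPLE_WIDTHS:
        problems.append(f"unsupported sample width: {width} bytes")
    if rate not in ALLOWED_SAMPLE_RATES:
        problems.append(f"unsupported sample rate: {rate} Hz")
    duration = frames / rate if rate else 0.0
    if frames == 0:
        problems.append("audio contains no frames")
    elif duration > MAX_DURATION_S:
        problems.append(f"audio too long: {duration:.1f} s")
    return problems


def make_wav(rate: int, channels: int, seconds: float) -> bytes:
    """Build a silent 16-bit PCM WAV in memory for demonstration."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * channels * int(rate * seconds))
    return buf.getvalue()


print(validate_wav(make_wav(16000, 1, 1.0)))  # []
print(validate_wav(make_wav(44100, 2, 0.5)))  # channel-count and sample-rate problems
```

Reading only the header is cheap, which is the point of validating up front: a payload that fails here never consumes model compute.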

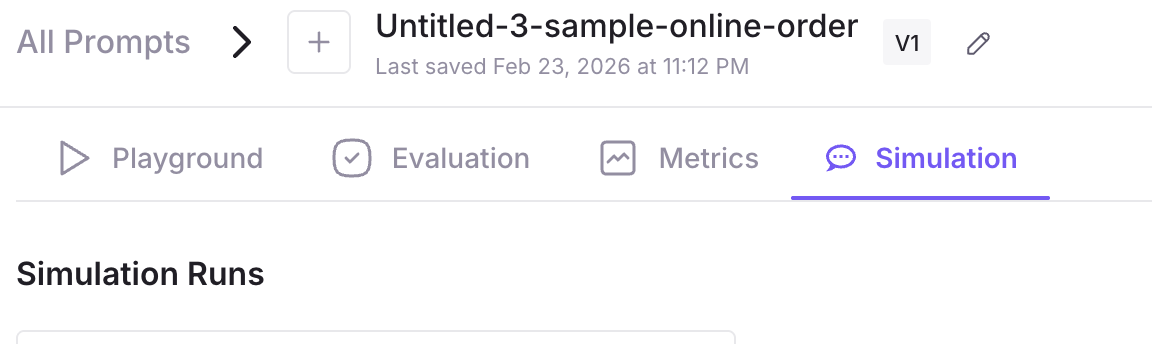

Simulation status visibility has been expanded with stage-level progress indicators. See exactly which phase each simulation is in (scenario generation, conversation execution, or evaluation scoring) in real time across all active runs.