What's in this digest

Credit Usage Summary Redesign

Every team eventually asks the same question: where is our compute going? The previous credit dashboard gave you a number. The new one gives you a story.

The redesigned credit usage summary introduces workspace-level attribution. Every credit consumed is tagged to a specific feature — whether it was an evaluation run, a simulation batch, or an agent test. Drill into any time period, filter by team member or project, and see exactly what drove usage spikes. Finance teams finally get the granularity they need to forecast AI spend, and engineering teams get the visibility they need to optimize their workflows.
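The digest doesn't say how attribution is stored or queried. Here is a minimal sketch of the idea: each credit event carries a feature tag, and a time-window query can be filtered by member or project. The `CreditEvent` fields, feature names, and sample records are all hypothetical and made up for illustration. This is not the dashboard's actual data model.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class CreditEvent:
    """One hypothetical credit-consumption record; field names are illustrative only."""
    day: date
    feature: str   # e.g. "evaluation", "simulation", "agent_test"
    member: str
    project: str
    credits: float

def credits_by_feature(events, start, end, member=None, project=None):
    """Sum credits per feature tag within [start, end], optionally filtered by member/project."""
    totals = defaultdict(float)
    for e in events:
        if not (start <= e.day <= end):
            continue
        if member is not None and e.member != member:
            continue
        if project is not None and e.project != project:
            continue
        totals[e.feature] += e.credits
    return dict(totals)

# Made-up sample data, not real usage figures.
events = [
    CreditEvent(date(2024, 5, 1), "evaluation", "ana", "chatbot", 120.0),
    CreditEvent(date(2024, 5, 1), "simulation", "raj", "voice", 300.0),
    CreditEvent(date(2024, 5, 2), "agent_test", "ana", "chatbot", 45.0),
    CreditEvent(date(2024, 5, 9), "evaluation", "raj", "voice", 80.0),
]

print(credits_by_feature(events, date(2024, 5, 1), date(2024, 5, 7)))
print(credits_by_feature(events, date(2024, 5, 1), date(2024, 5, 31), member="ana"))
```

Tagging at write time keeps every later question ("which feature?", "whose project?") a cheap filter-and-sum over the same records.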

Historical trend lines show usage patterns over time, making it straightforward to catch anomalies before they become budget problems.
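The digest doesn't specify how anomalies are detected. One common approach, shown here purely as an assumption, compares each day against the mean and standard deviation of a trailing window and flags large positive deviations (a z-score rule). `flag_anomalies`, the 7-day window, and the threshold of 3 are illustrative choices.

```python
from statistics import mean, pstdev

def flag_anomalies(daily_credits, window=7, threshold=3.0):
    """Return indices of days whose usage exceeds the trailing-window mean by more than
    `threshold` population standard deviations (hypothetical z-score rule)."""
    flagged = []
    for i in range(window, len(daily_credits)):
        history = daily_credits[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any increase as a deviation.
            if daily_credits[i] > mu:
                flagged.append(i)
        elif (daily_credits[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

usage = [100, 104, 98, 101, 99, 103, 97, 102, 100, 410, 101]  # made-up daily credits
print(flag_anomalies(usage))
```

A trailing window adapts to gradual growth, so only sudden spikes stand out. A seasonal baseline (e.g. same weekday last month) would be a reasonable alternative for teams with weekly usage cycles.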

New Agent Definition UX

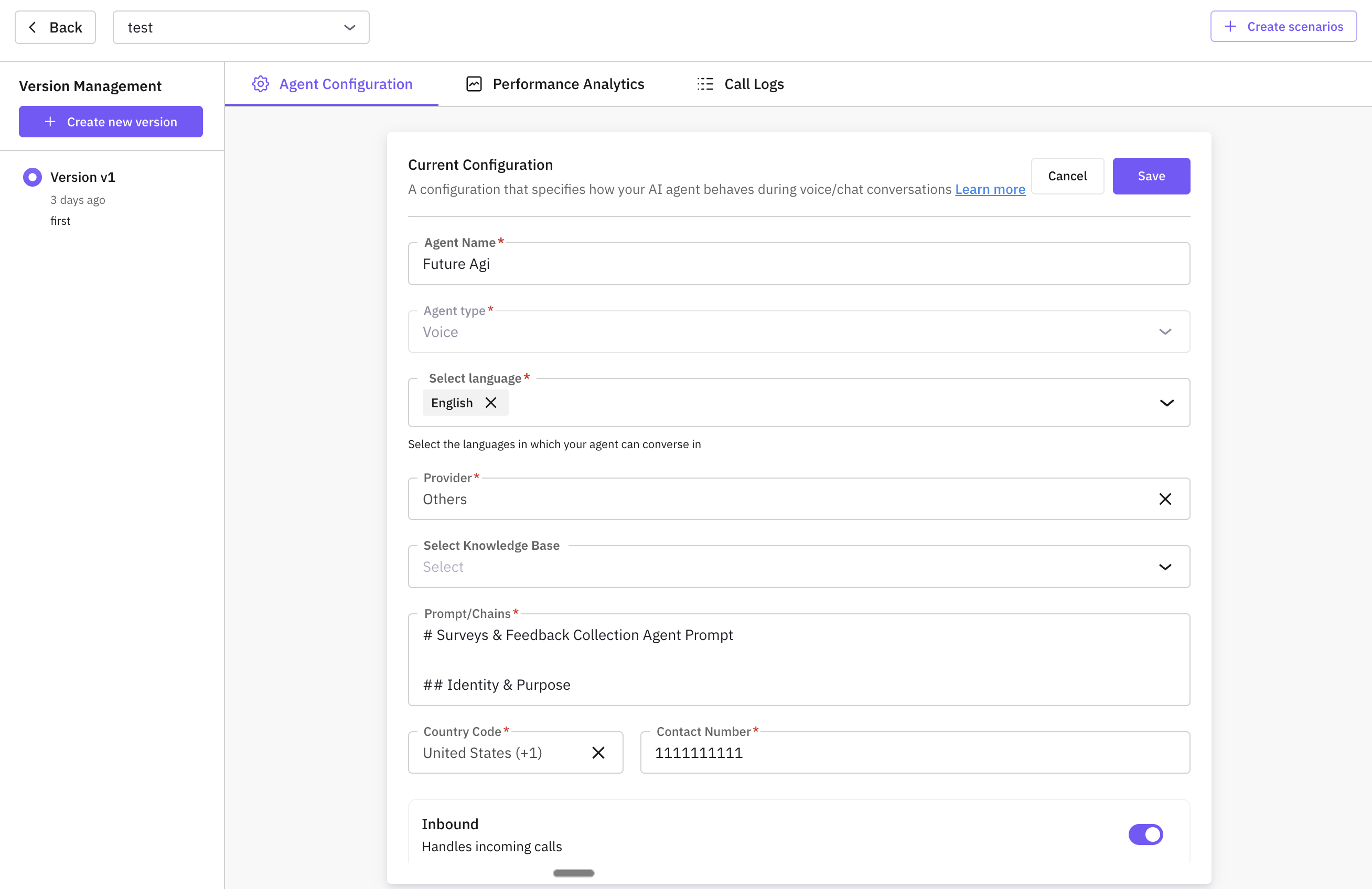

Building an agent on Future AGI used to require bouncing between multiple configuration screens. The new 3-step guided flow consolidates everything into a single, linear experience.

Step one: define the agent’s identity, language, and behavioral constraints. Step two: configure tools, knowledge bases, and provider integrations. Step three: preview the agent in a sandbox environment before deploying. Each step includes inline validation, so misconfigurations surface immediately rather than at runtime.
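The per-step inline validation described above can be sketched as an ordered list of validators that stops at the first step with errors, before the sandbox preview is reached. The `AgentDraft` fields, the supported-language subset, and the tool registry are hypothetical stand-ins, not Future AGI's actual schema.

```python
from dataclasses import dataclass, field

@dataclass
class AgentDraft:
    # Step 1: identity, language, behavioral constraints (field names are hypothetical)
    name: str = ""
    language: str = ""
    constraints: list[str] = field(default_factory=list)
    # Step 2: tools, knowledge bases, provider integrations
    tools: list[str] = field(default_factory=list)
    knowledge_bases: list[str] = field(default_factory=list)
    provider: str = ""

SUPPORTED_LANGUAGES = {"en", "es", "fr", "de", "hi"}    # illustrative subset
KNOWN_TOOLS = {"web_search", "calendar", "crm_lookup"}  # hypothetical tool registry

def validate_identity(d: AgentDraft) -> list[str]:
    errors = []
    if not d.name.strip():
        errors.append("name is required")
    if d.language not in SUPPORTED_LANGUAGES:
        errors.append(f"unsupported language: {d.language!r}")
    return errors

def validate_capabilities(d: AgentDraft) -> list[str]:
    errors = [f"unknown tool: {t}" for t in d.tools if t not in KNOWN_TOOLS]
    if not d.provider:
        errors.append("a provider integration is required")
    return errors

STEPS = [("identity", validate_identity), ("capabilities", validate_capabilities)]

def first_failing_step(draft: AgentDraft):
    """Run each step's validator in order and stop at the first step that has errors."""
    for step_name, validator in STEPS:
        errors = validator(draft)
        if errors:
            return step_name, errors
    return None, []  # all checks passed: ready for step 3, the sandbox preview

draft = AgentDraft(name="Support Bot", language="es", tools=["web_search", "fax"])
print(first_failing_step(draft))
```

Validating each step as it is completed is what lets a misconfigured tool surface in step two rather than as a runtime failure after deployment.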

The new flow ships alongside multi-language support in agent definitions. Agents can now operate natively in over 15 languages, with locale-aware behavior that goes beyond simple translation. The agent understands cultural norms, date formats, and conversational patterns specific to each language.

Prompt Workbench Revamp

Prompt engineering is iterative by nature, and iteration without version control is chaos. The revamped Prompt Workbench introduces commit-based version history — think git, but for prompts.

Every change to a prompt is captured as a discrete commit. You can diff any two versions, roll back to a known-good state, and branch prompts for A/B testing. Teams working on the same agent can now collaborate on prompt development without overwriting each other’s work.
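To make the "git, but for prompts" model concrete, here is a toy sketch with content-addressed commits, branches as pointers, rollback by moving a pointer, and diffs via Python's `difflib`. `PromptRepo` and its methods are hypothetical names for illustration; they are not the Prompt Workbench API.

```python
import difflib
import hashlib
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Commit:
    commit_id: str
    parent: Optional[str]
    text: str
    message: str

class PromptRepo:
    """Toy, hypothetical commit history for a single prompt, with named branches."""

    def __init__(self):
        self.commits = {}
        self.branches = {"main": None}

    def commit(self, branch, text, message):
        parent = self.branches[branch]
        # Content-addressed id derived from parent id + prompt text.
        digest = hashlib.sha1(f"{parent}\n{text}".encode()).hexdigest()[:8]
        self.commits[digest] = Commit(digest, parent, text, message)
        self.branches[branch] = digest
        return digest

    def branch(self, new_branch, from_branch="main"):
        # A branch is just a pointer to an existing commit.
        self.branches[new_branch] = self.branches[from_branch]

    def rollback(self, branch, commit_id):
        # Move the branch pointer back; later commits stay in history.
        self.branches[branch] = commit_id

    def head_text(self, branch):
        return self.commits[self.branches[branch]].text

    def diff(self, a, b):
        return list(difflib.unified_diff(
            self.commits[a].text.splitlines(), self.commits[b].text.splitlines(),
            fromfile=a, tofile=b, lineterm=""))

repo = PromptRepo()
v1 = repo.commit("main", "You are a helpful assistant.", "initial")
v2 = repo.commit("main", "You are a helpful assistant.\nAnswer in one sentence.", "add brevity rule")
repo.branch("variant-b")  # A/B branch starting from v2
repo.commit("variant-b", "You are a concise expert.\nAnswer in one sentence.", "B variant")
print("\n".join(repo.diff(v1, v2)))
repo.rollback("main", v1)  # back to the known-good state
print(repo.head_text("main"))
```

Because branches only point at commits, two teammates can iterate on separate branches without either overwriting the other's prompt text.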

ai-evaluation v0.2.2

The SDK gets a significant upgrade. LLM-as-a-Judge is now a first-class evaluation method, letting you use a language model to score outputs against custom rubrics. On the heuristic side, new metrics cover JSON schema validation, string similarity scoring, exact match checking, and aggregation functions for batch evaluations.
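The metric families listed above can be illustrated with small standalone functions. This is a sketch, not the ai-evaluation SDK's API: the function names are hypothetical, the JSON check is a deliberately simplified stand-in for full JSON Schema validation, and `call_model` in the judge is an assumed prompt-to-text callable you would supply.

```python
import json
from difflib import SequenceMatcher
from statistics import mean

def exact_match(output, expected):
    return 1.0 if output.strip() == expected.strip() else 0.0

def string_similarity(output, expected):
    # difflib ratio in [0, 1]; 1.0 means identical strings.
    return SequenceMatcher(None, output, expected).ratio()

def json_schema_valid(output, schema):
    """Simplified check: output must parse as a JSON object whose required keys have the
    given Python types. Full JSON Schema validation covers far more than this."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(data, dict):
        return 0.0
    for key, typ in schema.items():
        if key not in data or not isinstance(data[key], typ):
            return 0.0
    return 1.0

def llm_judge(output, rubric, call_model):
    """LLM-as-a-judge sketch: `call_model` is a hypothetical prompt -> text function
    expected to reply with an integer from 1 to 5."""
    prompt = (f"Rubric:\n{rubric}\n\nResponse:\n{output}\n\n"
              "Reply with a single integer score from 1 to 5.")
    score = int(call_model(prompt).strip())
    return (score - 1) / 4  # normalize 1..5 to 0..1

def aggregate(scores, pass_threshold=0.5):
    # The 0.5 pass threshold is an arbitrary illustrative default.
    return {"mean": mean(scores),
            "pass_rate": sum(s >= pass_threshold for s in scores) / len(scores)}

outputs = ['{"name": "Ada", "age": 36}', '{"name": "Ada"}', "not json"]
scores = [json_schema_valid(o, {"name": str, "age": int}) for o in outputs]
print(aggregate(scores))
```

Normalizing every metric, including the judge's 1 to 5 score, to the same 0 to 1 range is what makes batch aggregation meaningful across heuristic and model-based checks.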

These metrics are composable. Chain them together to build evaluation pipelines that match your specific quality bar.
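One way to read "composable" is that metrics share a signature, so combinators can build new metrics from old ones. The sketch below assumes a `(output, expected) -> score` signature and two hypothetical combinators: a `gate` that zeroes the score when a prerequisite check fails, and a normalized `weighted` average. Neither is taken from the SDK.

```python
import json
from difflib import SequenceMatcher
from typing import Callable

Metric = Callable[[str, str], float]  # (output, expected) -> score in [0, 1]

def is_json(output: str, expected: str) -> float:
    try:
        json.loads(output)
        return 1.0
    except json.JSONDecodeError:
        return 0.0

def similarity(output: str, expected: str) -> float:
    return SequenceMatcher(None, output, expected).ratio()

def exact(output: str, expected: str) -> float:
    return float(output == expected)

def gate(check: Metric, then: Metric) -> Metric:
    """Score 0 unless `check` fully passes; otherwise defer to `then`."""
    def metric(output: str, expected: str) -> float:
        return then(output, expected) if check(output, expected) == 1.0 else 0.0
    return metric

def weighted(pairs: list[tuple[Metric, float]]) -> Metric:
    """Weighted average of several metrics, normalized by the total weight."""
    total = sum(w for _, w in pairs)
    def metric(output: str, expected: str) -> float:
        return sum(m(output, expected) * w for m, w in pairs) / total
    return metric

# Pipeline: output must be valid JSON, then score 3:1 on similarity vs. exact match.
pipeline = gate(is_json, weighted([(similarity, 3), (exact, 1)]))

expected = '{"a": 1}'
for candidate in ['{"a": 1}', '{"a": 2}', "not json"]:
    print(candidate, pipeline(candidate, expected))
```

Putting the hard requirement (valid JSON) in a gate keeps a fluent but unparseable answer from earning partial credit through similarity alone.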

Voice Simulation Expansion

Four new TTS providers (Cartesia, Hume, Neuphonic, and LMNT) join the simulation engine. Each provider brings distinct voice characteristics, from ultra-low-latency synthesis to emotionally expressive speech. Combined with enhanced language and accent support, simulations now cover a far broader range of real-world conversational scenarios.
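With more providers and accents, coverage grows multiplicatively. A simple way to plan runs, sketched here under assumptions, is a Cartesian product of provider, locale, and scenario, with an optional set of (provider, locale) pairs you choose to exclude (for example, to keep a smoke test cheap). The lowercase provider ids, locale codes, and scenario names are hypothetical labels, not the simulation engine's configuration keys.

```python
from dataclasses import dataclass
from itertools import product

PROVIDERS = ["cartesia", "hume", "neuphonic", "lmnt"]  # hypothetical identifiers
LOCALES = ["en-US", "en-IN", "es-MX"]                  # illustrative locale/accent codes
SCENARIOS = ["refund_request", "appointment_booking"]  # made-up scenario names

@dataclass(frozen=True)
class SimulationRun:
    provider: str
    locale: str
    scenario: str

def build_matrix(providers, locales, scenarios, skip=frozenset()):
    """Every (provider, locale, scenario) combination, minus (provider, locale) pairs in `skip`."""
    return [SimulationRun(p, loc, s)
            for p, loc, s in product(providers, locales, scenarios)
            if (p, loc) not in skip]

full = build_matrix(PROVIDERS, LOCALES, SCENARIOS)
# Arbitrary exclusion chosen for illustration only; not a statement about provider support.
runs = build_matrix(PROVIDERS, LOCALES, SCENARIOS, skip={("lmnt", "es-MX")})
print(len(full), len(runs))
```

Generating the matrix explicitly makes it easy to see how many runs (and credits) a broader accent sweep will cost before launching it.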

Detailed voice provider logs capture every request and response, giving teams full observability into how voice synthesis behaves under different conditions. The new traceAI LiveKit SDK extends this observability to real-time voice and video agents built on LiveKit infrastructure.
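As a rough illustration of what per-request provider logging involves, the sketch below wraps any text-to-audio function and records request size, response size, latency, and errors. `ProviderLog` and the fake synthesizers are hypothetical. This is not the traceAI LiveKit SDK or its API.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class ProviderLog:
    entries: list = field(default_factory=list)

    def wrap(self, provider: str, synthesize: Callable[[str], bytes]) -> Callable[[str], bytes]:
        """Return a wrapped `synthesize` that logs every call, successful or not."""
        def logged(text: str) -> bytes:
            start = time.perf_counter()
            entry = {"provider": provider, "request_chars": len(text)}
            try:
                audio = synthesize(text)
                entry.update(status="ok", response_bytes=len(audio))
                return audio
            except Exception as exc:
                entry.update(status="error", error=repr(exc))
                raise
            finally:
                # Runs on both paths, so failed calls are logged with their latency too.
                entry["latency_ms"] = (time.perf_counter() - start) * 1000
                self.entries.append(entry)
        return logged

# Hypothetical stand-ins for real TTS clients.
def fake_tts(text: str) -> bytes:
    return b"\x00" * (len(text) * 10)

def flaky_tts(text: str) -> bytes:
    raise TimeoutError("provider timed out")

log = ProviderLog()
log.wrap("provider-a", fake_tts)("Hello there")
try:
    log.wrap("provider-b", flaky_tts)("Hi")
except TimeoutError:
    pass
for entry in log.entries:
    print(entry)
```

Logging in a `finally` block matters for observability: the timeouts and errors are usually the calls you most need to inspect.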

Simulate Metrics Revamp

The simulation metrics dashboard has been rebuilt from the ground up. Real-time pass/fail rates update as simulations run, with drill-down capabilities that let you inspect individual test cases directly from the metrics view. Custom columns can now be added to scenarios via AI-powered generation or manual input, making it possible to enrich test data without leaving the platform.
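A minimal sketch of the two mechanics described above: a pass rate that updates as each result streams in (keeping failures for drill-down), and adding a custom column to scenario rows. `LiveMetrics`, `add_column`, and the sample rows are hypothetical. The `fn` argument stands in for either manual input or an AI generator, which is not modeled here.

```python
from collections import Counter
from dataclasses import dataclass, field

@dataclass
class LiveMetrics:
    counts: Counter = field(default_factory=Counter)
    failures: list = field(default_factory=list)

    def record(self, case_id, passed, detail=""):
        self.counts["pass" if passed else "fail"] += 1
        if not passed:
            self.failures.append((case_id, detail))  # kept for drill-down

    @property
    def pass_rate(self):
        total = self.counts["pass"] + self.counts["fail"]
        return self.counts["pass"] / total if total else 0.0

def add_column(rows, name, fn):
    """Return new rows with a derived column; `fn` stands in for manual or AI-generated values."""
    return [{**row, name: fn(row)} for row in rows]

metrics = LiveMetrics()
for case_id, passed in [("c1", True), ("c2", False), ("c3", True), ("c4", True)]:
    metrics.record(case_id, passed, detail="" if passed else "agent skipped verification")
    print(f"{case_id}: pass rate so far {metrics.pass_rate:.0%}")

scenarios = [{"id": "c1", "utterance": "I want a refund"},
             {"id": "c2", "utterance": "Cancel my order please"}]
scenarios = add_column(scenarios, "word_count", lambda r: len(r["utterance"].split()))
print(scenarios)
```

Updating running counts per result keeps each refresh O(1), and storing only failures keeps the drill-down list short.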