Outbound Calls, Retell, and Tool Evaluation

Test outbound voice flows, simulate with Retell agents, verify tool calls in simulation, and ship with 50+ evaluation templates in the new ai-evaluation SDK.

Outbound Calling in Simulation

Until now, simulation tested only inbound scenarios: a customer calls your agent, and your agent responds. But many voice agents initiate calls themselves. Appointment reminders, sales outreach, proactive support notifications, and payment collection are all outbound flows with their own challenges.

Outbound calling simulation tests these flows end-to-end. Your agent places the call. A simulator persona answers. The conversation unfolds according to your defined scenario, with the simulator responding naturally to your agent’s prompts and pitches. Test whether your agent handles voicemail correctly. Verify it responds appropriately when a person says they are busy. Confirm it stays compliant with regulatory requirements during outbound sales calls.
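To make the checks above concrete, here is a minimal sketch of how two of them (an opening disclosure and a polite response to a busy callee) could be asserted against a finished transcript. It is not Simulate's API: the `Turn` type, the `check_outbound_call` function, the disclosure phrase, and the "call back" heuristic are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str  # "agent" or "callee" (hypothetical labels)
    text: str


def check_outbound_call(turns, disclosure_phrase, busy_markers=("busy", "bad time")):
    """Return pass/fail flags for one simulated outbound call transcript."""
    agent_turns = [t for t in turns if t.speaker == "agent"]

    # Check 1: the agent's opening turn contains the required disclosure.
    disclosed = bool(agent_turns) and (
        disclosure_phrase.lower() in agent_turns[0].text.lower()
    )

    # Check 2: after the callee first signals they are busy, the next agent
    # turn must offer to call back (a deliberately crude keyword heuristic).
    respected_busy = True
    for i, turn in enumerate(turns):
        if turn.speaker == "callee" and any(
            marker in turn.text.lower() for marker in busy_markers
        ):
            following = next(
                (t for t in turns[i + 1:] if t.speaker == "agent"), None
            )
            respected_busy = (
                following is not None and "call back" in following.text.lower()
            )
            break

    return {"disclosed": disclosed, "respected_busy": respected_busy}


transcript = [
    Turn("agent", "Hi, this is Ava from Acme Dental. This call may be recorded."),
    Turn("callee", "I'm busy right now."),
    Turn("agent", "No problem, I can call back later."),
]
result = check_outbound_call(transcript, "this call may be recorded")
```

In practice, keyword matching like this would likely be replaced by an evaluation template or a model-based judge; the point is only that outbound behaviors can be expressed as explicit, testable expectations.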

This is a meaningful expansion of what Simulate can cover. Inbound and outbound voice agents behave differently, fail differently, and need to be tested differently. Now both are supported.

Retell Integration

Voice agent infrastructure is not one-size-fits-all. Teams building on Retell can now simulate and test their agents with the same depth that Vapi users have had since our voice simulation launch. Retell joins Vapi as the second supported voice provider, and the integration covers the full simulation lifecycle: scenario definition, call execution, transcript capture, and evaluation.

With two voice providers supported, Future AGI is establishing itself as the provider-agnostic testing layer for voice AI. Build on whichever platform fits your use case. Test on Future AGI.

Tool Evaluation in Simulate

Voice agents do more than talk. They look up account information, schedule appointments, process payments, and trigger workflows. When a voice agent calls the wrong tool or passes incorrect parameters, the consequences range from a bad user experience to a compliance violation.

Tool evaluation in Simulate verifies every tool and function call your voice agent makes during a simulation. Did the agent call the right API? Were the parameters correct? Did it handle the response properly? Tool evaluation catches integration errors, parameter mismatches, and logic failures that transcript-level evaluation would miss entirely.
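As an illustration of the first two questions (right tool, right parameters), the sketch below compares an expected sequence of tool calls against the calls recorded during a run. The record shape (`name` plus `arguments`) and the `evaluate_tool_calls` helper are assumptions made for this example, not the format Simulate uses.

```python
def evaluate_tool_calls(expected, actual):
    """Compare expected and recorded tool calls position by position.

    Each call is a dict with "name" and "arguments" keys (assumed shape).
    Returns a list of human-readable findings; an empty list means a pass.
    """
    findings = []
    for i, exp in enumerate(expected):
        if i >= len(actual):
            findings.append(f"missing call #{i}: {exp['name']}")
            continue
        act = actual[i]
        if act["name"] != exp["name"]:
            findings.append(f"call #{i}: expected {exp['name']}, got {act['name']}")
            continue
        # Only the arguments listed in the expectation are checked, so the
        # agent may pass extra optional parameters without failing.
        for key, value in exp["arguments"].items():
            got = act["arguments"].get(key)
            if got != value:
                findings.append(
                    f"call #{i} ({exp['name']}): {key} expected {value!r}, got {got!r}"
                )
    for extra in actual[len(expected):]:
        findings.append(f"unexpected call: {extra['name']}")
    return findings


expected_calls = [
    {"name": "lookup_account", "arguments": {"account_id": "A-1"}},
    {"name": "schedule_appointment", "arguments": {"date": "2025-01-10"}},
]
recorded_calls = [
    {"name": "lookup_account", "arguments": {"account_id": "A-1"}},
    {"name": "schedule_appointment", "arguments": {"date": "2025-01-11"}},
    {"name": "send_sms", "arguments": {}},
]
findings = evaluate_tool_calls(expected_calls, recorded_calls)
```

Here the transcript alone could look fine ("You're booked!") while the findings reveal a wrong date and an unplanned SMS, which is the kind of failure transcript-level evaluation misses.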

This closes a critical gap in voice agent testing. You can now verify both what your agent says and what your agent does.

The ai-evaluation SDK

The ai-evaluation SDK launches with v0.1.5, bringing 50+ evaluation templates directly into your Python environment. Faithfulness, relevance, safety, coherence, completeness, and domain-specific metrics are all available as simple function calls. No dashboard required. No API keys to configure beyond your Future AGI credentials.

Version 0.2.1 follows quickly with two additions. Batch evaluation support processes thousands of items efficiently. Bias detection flags potential fairness issues in your agent’s outputs across demographic groups, topics, and interaction patterns.
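One simple way to think about flagging fairness issues across groups is to compare average evaluation scores per group and flag a large gap. The sketch below shows that idea in plain Python; it does not use the ai-evaluation SDK and is not a description of how its bias detection works. The `score_gap_by_group` function, the record fields, and the 0.1 threshold are all assumptions.

```python
from collections import defaultdict
from statistics import mean


def score_gap_by_group(records, threshold=0.1):
    """Group scores by a 'group' field and flag a large gap between group means.

    records: iterable of dicts with "group" and "score" keys (assumed shape).
    Returns (means_by_group, gap, flagged).
    """
    by_group = defaultdict(list)
    for record in records:
        by_group[record["group"]].append(record["score"])

    means = {group: mean(scores) for group, scores in by_group.items()}
    if not means:
        return {}, 0.0, False

    # Gap between the best- and worst-served group.
    gap = max(means.values()) - min(means.values())
    return means, gap, gap > threshold


records = [
    {"group": "a", "score": 0.9},
    {"group": "a", "score": 0.7},
    {"group": "b", "score": 0.5},
    {"group": "b", "score": 0.5},
]
means, gap, flagged = score_gap_by_group(records)
```

A real fairness analysis would also account for sample size and variance; a raw gap in means is only a first signal worth investigating.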

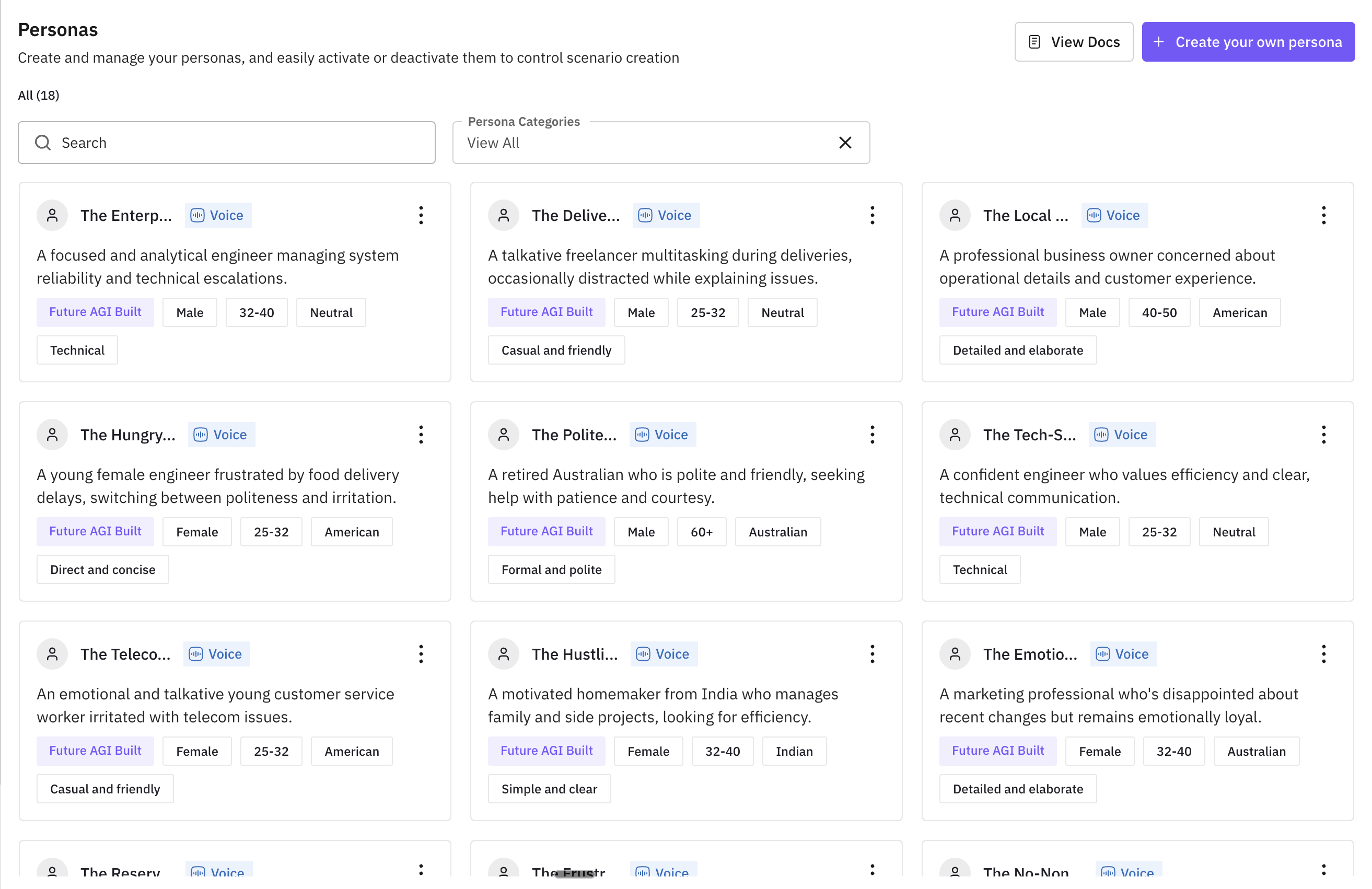

Personas — Three Sources

Simulation is only as good as the personas driving the conversations. The persona system now supports three sources. Pre-built personas cover common archetypes: the impatient caller, the confused elderly user, the technically savvy power user. Custom personas let you define specific demographics, communication styles, and behavioral patterns that match your actual user base. Dataset-derived personas are generated from your real call transcripts, creating simulator callers that behave like your actual customers.

Additional Improvements

Provider transcripts are now available as evaluation attributes, enabling direct comparison between your agent’s internal transcript and the voice provider’s ASR output. Voice output support in Run Prompt and Run Experiment lets you generate and evaluate spoken responses directly from the prompt playground. Scenario management gains three new ways to add rows: manual entry, AI generation, and dataset import. And for teams running iterative tests, completed simulation runs can now have new evaluation criteria applied retroactively without re-executing the calls.
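For the transcript comparison mentioned above, one common measure is word error rate (WER): the word-level edit distance between two transcripts divided by the length of the reference. The sketch below is a generic implementation, not a Future AGI evaluation template; treating the agent's internal transcript as the reference is an assumption made for the example.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        return 0.0 if not hyp else 1.0

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,         # deletion
                    curr[j - 1] + 1,     # insertion
                    prev[j - 1] + cost,  # substitution or match
                )
            )
        prev = curr
    return prev[-1] / len(ref)


internal = "your appointment is at three pm"
provider_asr = "your appointment is at free pm"
wer = word_error_rate(internal, provider_asr)  # one substitution over six words
```

A high WER on a run where the agent behaved oddly suggests the problem may lie in speech recognition rather than in the agent's logic.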

traceAI adds native support for OpenAI’s Agents SDK, capturing tool calls, agent handoffs, and multi-agent orchestration patterns as structured traces. Agent definition version selection enables precise regression testing by letting you target specific configuration versions during simulation runs.