System Metrics, Multimodal Tracing, and Eval Playground

Production-grade system metrics dashboards in Observe, multimodal tracing for AWS Bedrock, and a refined eval playground with standalone evaluation and feedback loops.

What's in this digest

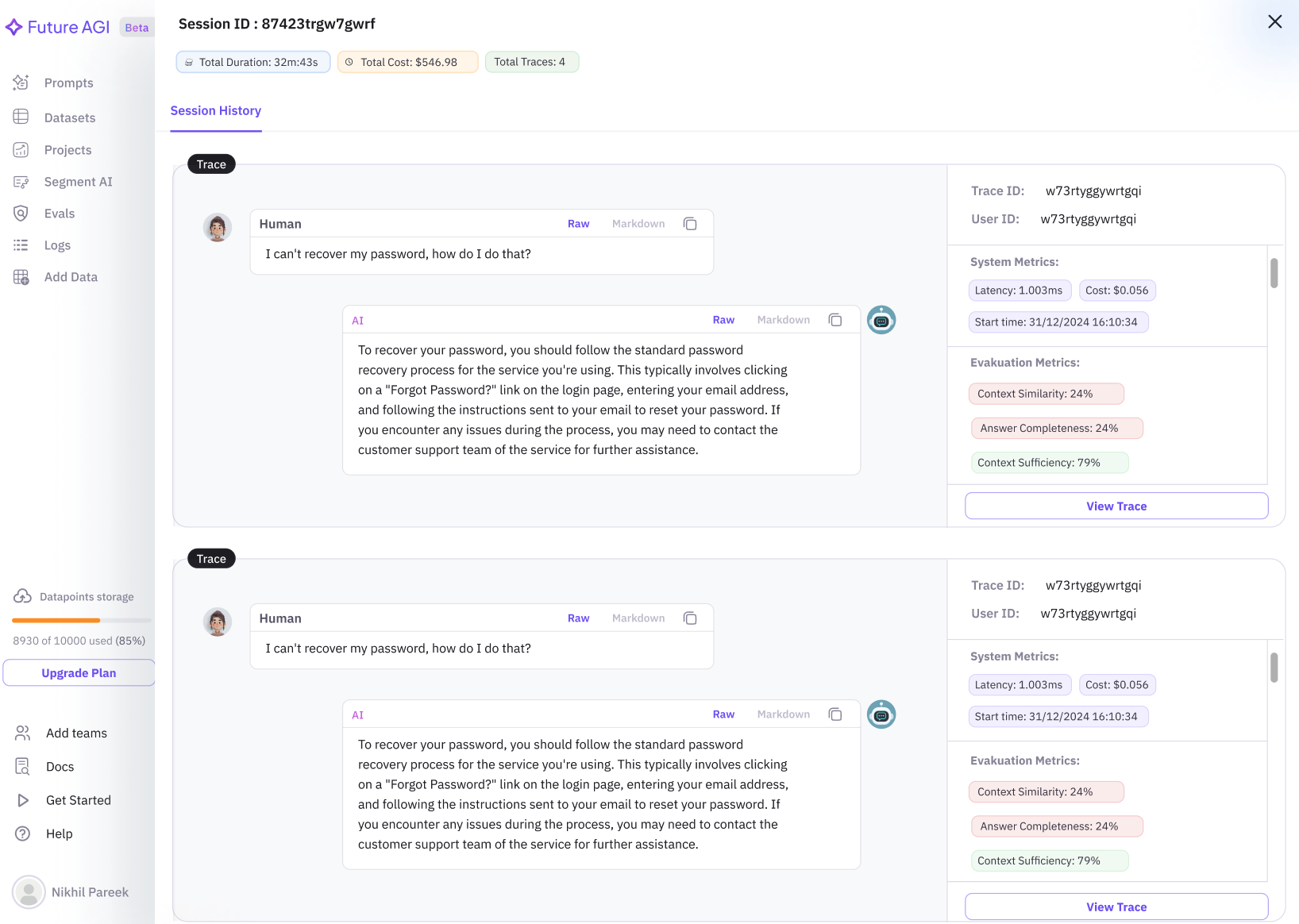

System Metrics — The Full Production Picture

Agent traces tell you what happened inside your AI pipeline. But what was happening to the infrastructure while that pipeline was running? Were you CPU-bound during that latency spike? Did memory pressure cause that timeout? Was throughput degrading before the errors started?

System metrics in Observe answer these questions by placing infrastructure dashboards right alongside your agent traces. No more switching between your observability platform and your APM tool. Everything lives in one view.

The built-in dashboards cover the four metrics that matter most for production agent deployments:

- Latency with p50, p95, and p99 breakdowns by endpoint, model, and operation type

- Throughput showing requests per second with trend lines and anomaly detection

- CPU utilization across your agent infrastructure with per-service breakdowns

- Memory usage with allocation patterns and garbage collection metrics
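
For readers who want a concrete sense of what the p50, p95, and p99 breakdowns summarize, here is a minimal sketch using the nearest-rank percentile method. The function names and sample data are hypothetical, and the method Observe uses internally may differ (for example, it could interpolate between samples).

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it.

    Illustrative only; not necessarily the method used by the dashboards.
    """
    if not samples:
        raise ValueError("samples must be non-empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]

def latency_summary(latencies_ms):
    """Return the three percentiles named in the latency dashboard description."""
    return {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}

# Hypothetical request latencies in milliseconds, with one slow outlier.
latencies_ms = [120, 95, 110, 130, 105, 2400, 115, 100, 125, 90]
summary = latency_summary(latencies_ms)
print(summary)
```

With this sample, the median stays near typical latency while p95 and p99 both land on the single slow request, which is why tail percentiles are tracked separately from the median.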

Each dashboard supports four chart types — line charts for trends, bar charts for comparisons, heatmaps for distribution analysis, and sparklines for compact inline views. Time range selection syncs across all dashboards and the trace view, so you can zoom into a latency spike and see the corresponding traces and system state simultaneously.

The real power is correlation. Click a point on any metric chart and the trace list filters to show traces from that time window. This makes it trivial to connect infrastructure events to agent behavior — the kind of debugging that previously required cross-referencing multiple tools and manually aligning timestamps.
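
Conceptually, the click-to-filter behavior amounts to selecting traces whose timestamps fall inside a window around the clicked point. The sketch below illustrates that idea only; the `Trace` class, the `traces_in_window` function, and the 30-second half-width are all assumptions for illustration, not the platform's data model or API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Trace:
    # Hypothetical minimal trace record; real traces carry far more fields.
    trace_id: str
    started_at: datetime

def traces_in_window(traces, clicked_at, half_width=timedelta(seconds=30)):
    """Keep traces that started within half_width on either side of the clicked timestamp."""
    start, end = clicked_at - half_width, clicked_at + half_width
    return [t for t in traces if start <= t.started_at <= end]

base = datetime(2025, 1, 1, 12, 0, 0)
traces = [
    Trace("a", base),
    Trace("b", base + timedelta(seconds=20)),
    Trace("c", base + timedelta(minutes=5)),
]

# Simulate clicking the chart at 12:00:10; the window is 11:59:40 to 12:00:40.
selected = traces_in_window(traces, clicked_at=base + timedelta(seconds=10))
print([t.trace_id for t in selected])
```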

Multimodal Tracing for AWS Bedrock

AWS Bedrock users working with multimodal models can now trace image inputs and outputs with full fidelity. The new Bedrock multimodal support captures images sent to and generated by models like Amazon Titan Image Generator and Anthropic Claude on Bedrock, recording them as part of the trace span.

In the trace view, image data renders inline. You can see exactly what image was sent to the model, what the model generated, and how it was processed by downstream steps. This is essential for debugging multimodal agents where the visual input or output is the source of unexpected behavior.

The instrumentation is automatic for anyone using our traceAI SDK with Bedrock. No additional configuration is needed — just update to traceAI v0.1.11 and multimodal spans are captured by default.

Eval Playground Improvements

The Eval Playground we shipped two cycles ago was a strong start. This update makes it more capable with three key improvements.

Standalone evaluation mode lets you run evaluations without connecting them to a dataset or experiment. Paste any text, configure your eval, and get a score. This is perfect for quick spot-checks when you want to evaluate a single output without the overhead of a full evaluation pipeline.

Feedback collection on playground results creates a learning loop. When the playground scores an example, you can mark the score as correct or incorrect. This feedback is collected and used to calibrate evaluation accuracy over time, particularly for custom LLM-as-judge evaluations where rubric tuning is critical.
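
One simple way to picture the feedback loop is as an agreement rate per evaluation: the share of scores that reviewers marked as correct. The sketch below is an illustration under that assumption. The `Feedback` class and `agreement_by_eval` function are hypothetical names, and the actual calibration logic is not described here and may work quite differently.

```python
from dataclasses import dataclass

@dataclass
class Feedback:
    # Hypothetical record of one reviewer judgment on a playground score.
    eval_name: str
    score_marked_correct: bool

def agreement_by_eval(feedback):
    """Return, per evaluation, the fraction of scores that reviewers marked as correct."""
    totals = {}
    for item in feedback:
        correct, seen = totals.get(item.eval_name, (0, 0))
        totals[item.eval_name] = (correct + int(item.score_marked_correct), seen + 1)
    return {name: correct / seen for name, (correct, seen) in totals.items()}

feedback = [
    Feedback("tone_judge", True),
    Feedback("tone_judge", False),
    Feedback("tone_judge", True),
    Feedback("tone_judge", True),
    Feedback("relevance", True),
]
rates = agreement_by_eval(feedback)
print(rates)
```

A low agreement rate on a custom LLM-as-judge evaluation would be a natural signal that its rubric needs another tuning pass.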

The scoring visualization is cleaner, with score breakdowns by criterion, distribution histograms for batch evaluations, and clear pass/fail indicators that make results scannable at a glance.

Multi-line Evaluation Graphs

Evaluation trends are most insightful when viewed in context. Multi-line graphs let you plot multiple evaluation metrics on a single chart — overlay hallucination rate against relevance score, or compare factual accuracy across three different models on the same axes.

This makes it easy to spot correlated trends and regressions. When one metric improves but another degrades, the multi-line view surfaces the tradeoff immediately. You can customize colors, toggle individual lines, and set date ranges to focus on specific time periods.
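
The tradeoff the multi-line view surfaces visually can also be expressed as a small check: one metric moved in its good direction while the other moved in its bad direction over the same period. This is a rough sketch with hypothetical function names and made-up values. It compares only the first and last points of each series, so it ignores noise and anything that happens in between.

```python
def net_change(series):
    """Difference between the last and first value of a metric series."""
    return series[-1] - series[0]

def improved(series, higher_is_better):
    delta = net_change(series)
    return delta > 0 if higher_is_better else delta < 0

def degraded(series, higher_is_better):
    delta = net_change(series)
    return delta < 0 if higher_is_better else delta > 0

def is_tradeoff(a, a_higher_is_better, b, b_higher_is_better):
    """True when one metric improved while the other degraded over the same period."""
    return (
        (improved(a, a_higher_is_better) and degraded(b, b_higher_is_better))
        or (degraded(a, a_higher_is_better) and improved(b, b_higher_is_better))
    )

# Illustrative values only: hallucination rate falls (good) while relevance falls (bad).
hallucination_rate = [0.12, 0.10, 0.08]
relevance_score = [0.90, 0.86, 0.81]

tradeoff = is_tradeoff(hallucination_rate, False, relevance_score, True)
print(tradeoff)
```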

SDK and Integration Updates

traceAI v0.1.11 is a significant SDK release that brings the instrumentor count past 25. The headline additions are the Google Gen AI instrumentor for tracing Gemini models and function calling via the Google Generative AI SDK, and the multimodal Bedrock support described above.

The Langfuse evals integration connects the two evaluation ecosystems. Teams already using Langfuse for some evaluations can now route that data into Future AGI for unified analysis alongside native evaluations. This backend integration syncs evaluation results, scores, and metadata.

On the platform side, annotation notes let reviewers attach free-form text explanations to their annotations, providing richer context than labels alone. And draft prompts let you save work-in-progress prompts privately without publishing them to the shared library — no more cluttering the team’s prompt collection with half-finished experiments.