What's in this digest

Call Simulation — A New Category of Testing

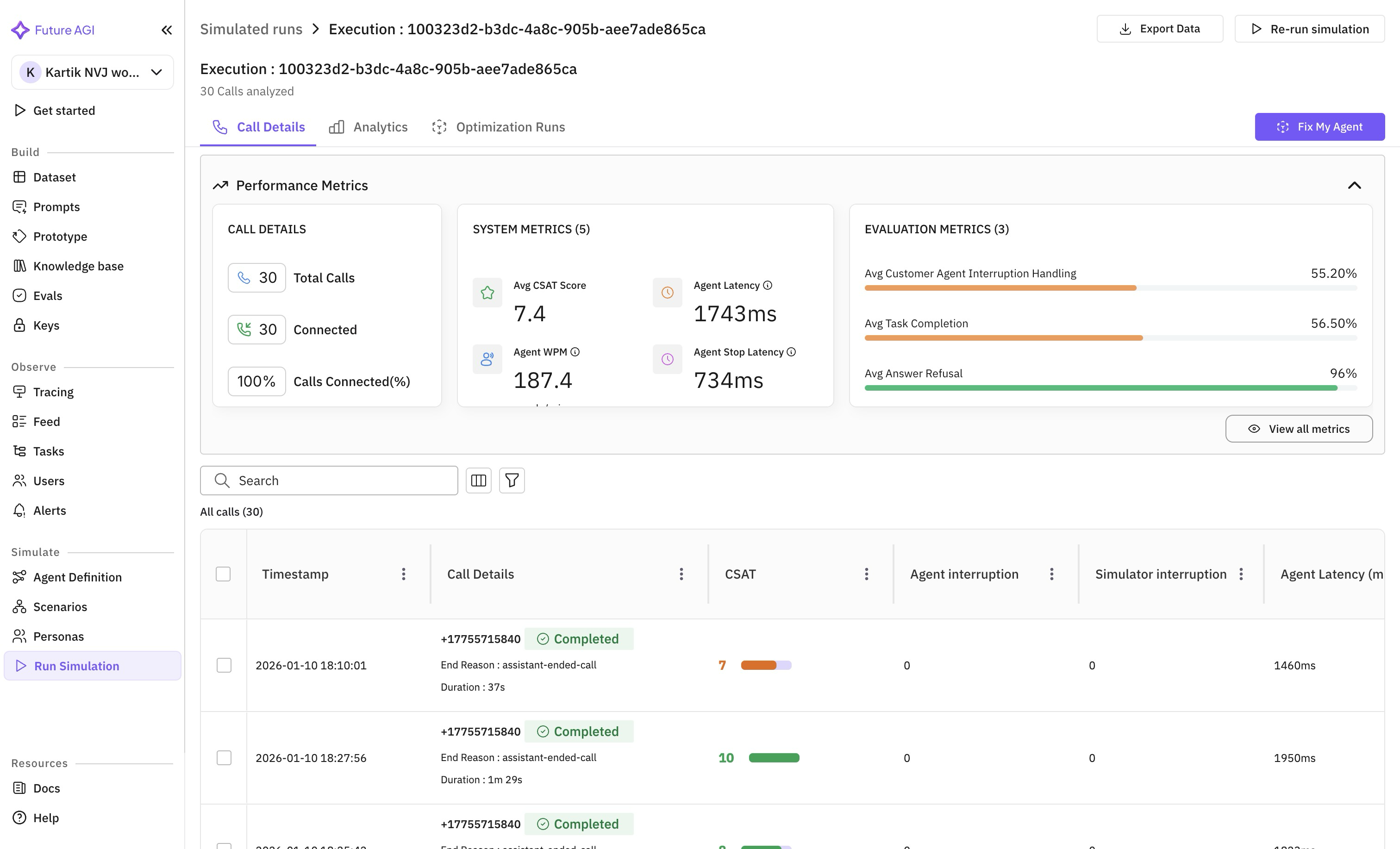

Manual QA for voice agents is expensive, slow, and inconsistent. You hire testers, write scripts, schedule calls, and hope the coverage is broad enough. It never is. Starting today, Future AGI can conduct those calls for you.

Call Simulation introduces AI-powered agents that place real phone calls to your voice agents. These simulator agents follow conversation scenarios, probe edge cases, and evaluate responses in real time. They handle interruptions, long pauses, accent variations, and the kind of conversational chaos that real users bring to every interaction.

The result is a 60-70% reduction in QA costs with dramatically better coverage. Where a human tester might run through 20 scenarios in a day, Call Simulation handles hundreds in the same time, and every run is reproducible.

LiveKit-Powered Infrastructure

Voice testing demands performance that web-based testing never needed. A 500-millisecond delay in a text response is invisible. A 500-millisecond delay in a phone call is a dealbreaker.

We built Call Simulation on LiveKit infrastructure specifically for this reason. Every simulation call operates at sub-second latency, ensuring the conversational dynamics mirror what your real customers experience. Turn-taking, interruptions, and natural speech patterns all behave correctly because the infrastructure treats latency as a first-class concern.

Scenario Management

Building test scenarios from scratch is tedious. The new “Add scenarios from datasets” feature lets you import conversation patterns directly from your existing datasets. Have a collection of real customer transcripts? Turn them into simulation scenarios with a few clicks. Each scenario becomes a repeatable test case that your simulator agents execute faithfully.
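To make the transcript-to-scenario idea concrete, here is a minimal sketch of how one transcript might be split into a repeatable scenario. The `TranscriptTurn` and `Scenario` shapes and the `transcriptToScenario` function are hypothetical illustrations, not Future AGI's actual data model. The sketch assumes the customer's turns become the script the simulator follows and the agent's turns are kept as reference replies.

```typescript
// Hypothetical shapes for illustration only; not Future AGI's actual schema.
type Speaker = "customer" | "agent";

interface TranscriptTurn {
  speaker: Speaker;
  text: string;
}

interface Scenario {
  name: string;
  // Lines the simulator agent says, in order.
  simulatorScript: string[];
  // What the real agent said in the original call, kept for comparison.
  referenceReplies: string[];
}

// Split one transcript into the simulator's script and the reference replies.
function transcriptToScenario(name: string, turns: TranscriptTurn[]): Scenario {
  const clean = turns
    .map((t) => ({ speaker: t.speaker, text: t.text.trim() }))
    .filter((t) => t.text.length > 0); // drop empty turns
  return {
    name,
    simulatorScript: clean.filter((t) => t.speaker === "customer").map((t) => t.text),
    referenceReplies: clean.filter((t) => t.speaker === "agent").map((t) => t.text),
  };
}

// Made-up example transcript.
const refundCall: TranscriptTurn[] = [
  { speaker: "customer", text: "Hi, I was charged twice for my order." },
  { speaker: "agent", text: "Sorry about that. Can I have the order number?" },
  { speaker: "customer", text: "  It's 4417.  " },
  { speaker: "agent", text: "" },
];

const scenario = transcriptToScenario("duplicate-charge", refundCall);
```

Because the function is pure, the same transcript always yields the same scenario, which is what makes each imported case repeatable.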

The simulator agent form and agent definition dropdowns make configuration straightforward. Select your target agent, define the simulator persona, choose your scenarios, and launch. No YAML files, no deployment scripts.

Platform and SDK Updates

This release also brings meaningful improvements across the broader platform. Mixpanel analytics integration is now live, giving teams visibility into how their organization uses Future AGI. Every feature interaction, evaluation run, and simulation session is tracked to help you understand adoption and identify workflow bottlenecks.

For TypeScript teams deploying on Vercel, the new traceAI Vercel instrumentor brings automatic observability to serverless AI functions. Import the instrumentor, wrap your handler, and every LLM call, tool invocation, and response is captured as a trace span without manual instrumentation.
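The wrap-your-handler pattern can be sketched generically as a higher-order function. The code below is not the traceAI API: `withTracing`, `Span`, and the in-memory `spans` array are hypothetical names, used only to show how a wrapper can record a span around a handler without any change to the handler itself.

```typescript
// Illustrative only: a generic tracing wrapper, not the traceAI Vercel instrumentor.
interface Span {
  name: string;
  durationMs: number;
  status: "ok" | "error";
}

// Hypothetical in-memory sink; a real instrumentor would export spans to a backend.
const spans: Span[] = [];

// Wrap an async handler so every call records one span, even when it throws.
function withTracing<Args extends unknown[], Result>(
  name: string,
  handler: (...args: Args) => Promise<Result>,
): (...args: Args) => Promise<Result> {
  return async (...args: Args): Promise<Result> => {
    const start = Date.now();
    let status: Span["status"] = "ok";
    try {
      return await handler(...args);
    } catch (err) {
      status = "error";
      throw err; // re-throw so callers still see the failure
    } finally {
      spans.push({ name, durationMs: Date.now() - start, status });
    }
  };
}

// Stand-in for a serverless function that would call an LLM.
async function answer(question: string): Promise<string> {
  return `echo: ${question}`;
}

const tracedAnswer = withTracing("answer", answer);
```

Calling `tracedAnswer("hi")` behaves like calling `answer("hi")`, with one extra entry appended to `spans` per call.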

Evaluation Improvements

Custom evaluations now support full CRUD operations. Create evaluations tailored to your specific quality criteria, iterate on scoring rubrics, and manage your evaluation library as it grows. The new feedback attachment feature lets you link human judgments directly to evaluation results. Together, these let teams build a continuous improvement loop in which human expertise refines automated evaluation over time.
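One way to picture that loop is to measure how often human reviewers agree with the automated verdict. The sketch below is an assumption-laden illustration: the `EvalResult` and `Feedback` shapes and the `agreementRate` function are hypothetical and do not reflect Future AGI's actual schema or SDK.

```typescript
// Hypothetical shapes for illustration; not Future AGI's actual schema.
interface EvalResult {
  id: string;
  passed: boolean; // verdict from the automated evaluation
}

interface Feedback {
  evalResultId: string; // links the human judgment to one evaluation result
  humanPassed: boolean;
  note?: string;
}

// Share of reviewed results where the human agreed with the automated verdict.
// Returns null when no feedback matches a known result.
function agreementRate(results: EvalResult[], feedback: Feedback[]): number | null {
  const verdicts = new Map<string, boolean>();
  for (const r of results) verdicts.set(r.id, r.passed);

  let reviewed = 0;
  let agreed = 0;
  for (const f of feedback) {
    const auto = verdicts.get(f.evalResultId);
    if (auto === undefined) continue; // feedback for an unknown result is skipped
    reviewed += 1;
    if (auto === f.humanPassed) agreed += 1;
  }
  return reviewed === 0 ? null : agreed / reviewed;
}

// Made-up example data.
const results: EvalResult[] = [
  { id: "r1", passed: true },
  { id: "r2", passed: true },
  { id: "r3", passed: false },
];
const feedback: Feedback[] = [
  { evalResultId: "r1", humanPassed: true },
  { evalResultId: "r2", humanPassed: false, note: "Agent skipped identity check." },
  { evalResultId: "r3", humanPassed: false },
  { evalResultId: "r9", humanPassed: true }, // no matching result
];

const rate = agreementRate(results, feedback);
```

A low agreement rate on a given evaluation is a signal that its scoring rubric needs another iteration.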

Span names now display directly in trace views, a small change that makes a real difference when navigating traces with dozens of nested operations. No more clicking into each span to figure out what it represents.