How to Instrument Your AI Agent in Minutes Using TraceAI: Open-Source LLM Observability for Production in 2026

Learn how to instrument AI agents with TraceAI in 2026. Covers OpenTelemetry setup, auto-instrumentation for OpenAI and LangChain, manual span decoration, and span kinds.


Why Observability Is the Real Fix for AI Agent Failures, Not Better Prompts or New Frameworks

Every day, developers stare at a web of prompts, retrievers, and evaluators, trying to guess why an agent failed. Pipelines call half a dozen models and tools trigger other tools, yet no one can see what actually happens at each step, from input through the chain to the output.

Developers still think the “fix” is better prompts or new frameworks.

LangChain today, CrewAI tomorrow, or yet another agent. But the core problem isn’t orchestration; it’s observability. You can’t improve what you can’t observe.

Understanding an agent means tracing every step of its reasoning: how it interpreted a user query, what context it fetched, which tools it called, how long it spent thinking, and what it cost. That is exactly what Future AGI’s TraceAI makes visible.

Instead of adding another abstraction layer, it exposes what your stack is already doing (every LLM call, every retriever hit, every tool invocation) as standardized, OpenTelemetry-compatible spans.

With TraceAI, debugging stops being a post-mortem. You see your agent’s reasoning trail unfold in real time, complete with context, cost, and sequence, so you can fix what matters instead of guessing.

Why Instrumentation Matters: How Tracing Reveals What Logs Cannot in Multi-Step AI Agent Pipelines

Agentic AI systems aren’t static. They evolve, adapt, and depend on multiple moving parts: models, retrievers, prompts, memory stores, orchestration, and external tools.

Without tracing:

- You can’t debug failures: why did the agent pick the wrong answer?

- You can’t measure latency or token usage across steps.

- You can’t visualize the workflow across multiple frameworks (LangChain, CrewAI, DSPy, etc.).

Traditional logs tell you what happened.

Tracing tells you how it happened.

TraceAI standardizes this process so that every prompt, embedding, retrieval, and response is automatically captured, versioned, and observable.
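Here is a minimal, library-free sketch that makes the logs-versus-traces distinction concrete. It assumes each step has already been recorded as a span carrying its own ID, its parent’s ID, a duration and a token count. The SpanRecord type, its fields and both helpers are hypothetical and are not TraceAI’s schema. Because every span points at its parent, you can roll up token usage per subtree and find the slowest step under an agent directly. A flat log can’t do that without extra bookkeeping.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SpanRecord:
    # Hypothetical span shape for illustration; not TraceAI's schema.
    span_id: str
    parent_id: Optional[str]
    name: str
    duration_ms: float
    tokens: int = 0


def subtree_tokens(spans: list[SpanRecord], root_id: str) -> int:
    """Sum token usage over a span and all of its descendants."""
    children: dict[str, list[SpanRecord]] = {}
    for span in spans:
        if span.parent_id is not None:
            children.setdefault(span.parent_id, []).append(span)

    by_id = {span.span_id: span for span in spans}
    total, stack = 0, [by_id[root_id]]
    while stack:  # iterative depth-first walk of the subtree
        current = stack.pop()
        total += current.tokens
        stack.extend(children.get(current.span_id, []))
    return total


def slowest_child(spans: list[SpanRecord], parent_id: str) -> str:
    """Name of the direct child span with the longest duration."""
    kids = [span for span in spans if span.parent_id == parent_id]
    return max(kids, key=lambda span: span.duration_ms).name


# Example trace: one agent span with four child steps.
trace = [
    SpanRecord("a", None, "agent", 1200.0),
    SpanRecord("r", "a", "retriever", 150.0),
    SpanRecord("l1", "a", "llm:plan", 400.0, tokens=320),
    SpanRecord("t", "a", "tool:search", 300.0),
    SpanRecord("l2", "a", "llm:answer", 350.0, tokens=210),
]
print(subtree_tokens(trace, "a"), slowest_child(trace, "a"))  # 530 llm:plan
```

The sketch sums tokens but not durations. A parent’s wall-clock time already covers its children, and sibling steps may overlap.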

What Is TraceAI: How This Open-Source OpenTelemetry Package Standardizes AI Agent Tracing

TraceAI is an open-source (OSS) package that enables standardized tracing of AI applications and frameworks.

It extends OpenTelemetry, bridging the gap between infrastructure-level traces (API calls, latency, errors) and AI-specific signals like:

- Prompts and responses

- Model name and parameters

- Token usage and latency

- Tool invocations

- Guardrail or evaluator outcomes
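To show how those signals sit on a span, the sketch below builds the attribute set for a single chat call. The keys come from the OpenTelemetry GenAI semantic conventions, which are still experimental and have been renamed between releases. TraceAI’s own attribute names may differ, and the llm_span_attributes helper is hypothetical. Latency is deliberately absent: in OpenTelemetry it comes from the span’s start and end timestamps rather than from an attribute.

```python
from typing import Any


def llm_span_attributes(
    model: str,
    temperature: float,
    input_tokens: int,
    output_tokens: int,
) -> dict[str, Any]:
    """Attributes for one LLM call, keyed per the OTel GenAI conventions."""
    return {
        "gen_ai.operation.name": "chat",
        "gen_ai.request.model": model,
        "gen_ai.request.temperature": temperature,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
    }


attrs = llm_span_attributes("gpt-4o", 0.2, input_tokens=42, output_tokens=17)
total_tokens = attrs["gen_ai.usage.input_tokens"] + attrs["gen_ai.usage.output_tokens"]
print(total_tokens)  # 59
```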

TraceAI works out of the box with orchestration frameworks and model SDKs such as LangChain, CrewAI, and OpenAI. It can export to any OpenTelemetry-compatible backend or directly into Future AGI’s observability platform.

TraceAI Features at a Glance: Standardized Tracing, Framework-Agnostic Support, and Future AGI Native Integration

✅ Standardized Tracing: Maps AI workflows to consistent trace attributes and spans.

✅ Framework-Agnostic: Works with OpenAI, LangChain, Anthropic, CrewAI, DSPy, and many others.

✅ Extensible Plugins: Easily add instrumentation for unsupported frameworks.

✅ Future AGI Native Support: Optimized for full-stack observability with trace-to-eval linkage.

Supported Frameworks: How TraceAI Instruments OpenAI, LangChain, CrewAI, DSPy, Anthropic, and More

| Framework | Package | Description |
|---|---|---|
| OpenAI | traceAI-openai | Traces OpenAI completions, chat, image, and audio APIs |
| LangChain | traceAI-langchain | Instruments chains, tools, retrievers |
| CrewAI | traceAI-crewai | Observes agentic multi-actor pipelines |
| DSPy | traceAI-dspy | Traces declarative program steps |
| Anthropic, Mistral, VertexAI, Groq, Haystack, Bedrock, etc. | - | Full cross-vendor support |

(All are available on PyPI as traceAI-* packages.)

Quickstart: How to Create Your First AI Agent Trace with TraceAI in Five Steps

Step 1: How to Install the TraceAI Package for Your AI Framework

```bash
pip install traceAI-openai
```

Step 2: How to Set Environment Variables for Future AGI and OpenAI API Keys

```python
import os

os.environ["FI_API_KEY"] = "<YOUR_FI_API_KEY>"
os.environ["FI_SECRET_KEY"] = "<YOUR_FI_SECRET_KEY>"
os.environ["OPENAI_API_KEY"] = "<YOUR_OPENAI_API_KEY>"
```

Step 3: How to Register the Tracer Provider and Connect to the Future AGI Observability Pipeline

This connects your app to Future AGI’s observability pipeline (or any OTEL backend):

```python
from fi_instrumentation import register
from fi_instrumentation.fi_types import ProjectType

trace_provider = register(
    project_type=ProjectType.OBSERVE,
    project_name="openai_app",
)
```

Step 4: How to Instrument Your Framework with OpenAIInstrumentor for Automatic Span Capture

```python
from traceai_openai import OpenAIInstrumentor

OpenAIInstrumentor().instrument(tracer_provider=trace_provider)
```

Now every OpenAI call automatically emits spans with attributes such as model name, latency, prompt length, and cost.
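Auto-instrumentors in the OpenTelemetry ecosystem typically work by wrapping a library’s methods at runtime. That way, every call is timed and annotated without touching your application code. The sketch below shows the idea on a stand-in FakeChatClient class. It illustrates the technique and is not how OpenAIInstrumentor is implemented; every name in it is hypothetical.

```python
import functools
import time
from typing import Any

RECORDED_SPANS: list[dict[str, Any]] = []


class FakeChatClient:
    """Stand-in for a vendor SDK client; returns a canned reply."""

    def create(self, model: str, messages: list[dict[str, str]]) -> str:
        return f"[{model}] reply to: {messages[-1]['content']}"


def instrument(cls: type) -> None:
    """Replace cls.create with a wrapper that records a span-like dict per call."""
    original = cls.create
    if getattr(original, "_instrumented", False):
        return  # already patched; avoid recording every call twice

    @functools.wraps(original)
    def wrapper(self, model: str, messages: list[dict[str, str]]) -> str:
        start = time.perf_counter()
        status = "OK"
        try:
            return original(self, model, messages)
        except Exception:
            status = "ERROR"
            raise
        finally:
            # Runs on success and failure, like ending a span.
            RECORDED_SPANS.append({
                "name": f"{cls.__name__}.create",
                "model": model,
                "message_count": len(messages),
                "latency_ms": (time.perf_counter() - start) * 1000,
                "status": status,
            })

    wrapper._instrumented = True
    cls.create = wrapper
```

The _instrumented flag makes instrument() idempotent, so calling it twice does not double-record each call.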

Step 5: How to Interact with Your Framework and Verify Your First Observable AI Trace

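```python
import os

from openai import OpenAI

# The v1+ OpenAI SDK uses a client object (openai.ChatCompletion was removed).
# The client also reads OPENAI_API_KEY from the environment by default.
client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

response = client.chat.completions.create(
    model="gpt-4o",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Tell me a quick AI joke."},
    ],
)

print(response.choices[0].message.content.strip())
```

You’ve just created your first observable AI call.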

Each run will appear as a trace with spans representing prompt input, LLM execution, and response generation.

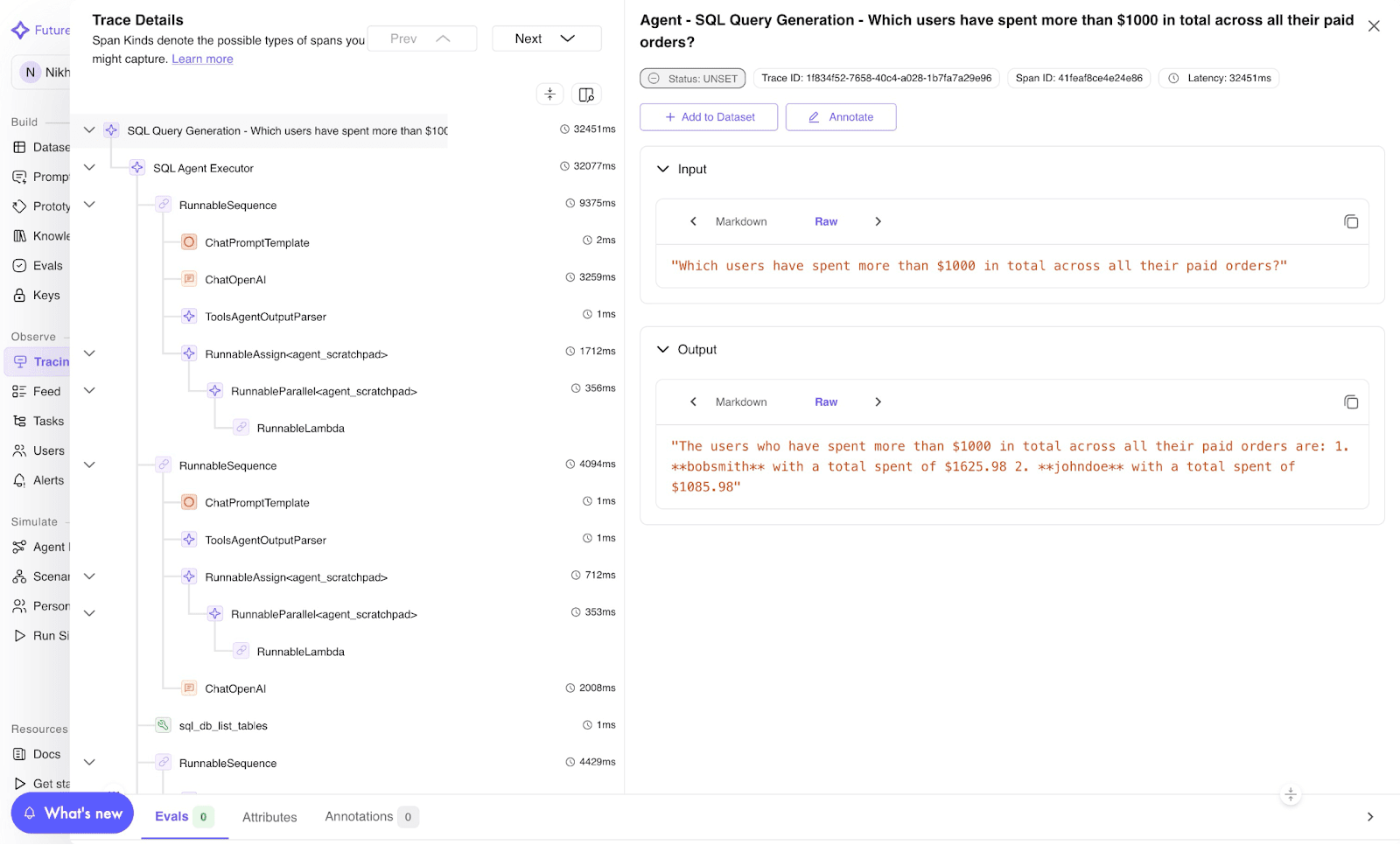

Image 1: TraceAI Trace Visualization Dashboard

Manual Instrumentation with TraceAI Helpers: Fine-Grained Control for Functions, Chains, and Tools

While framework-level auto-instrumentation works for most cases, sometimes you want fine-grained control over what gets traced.

That’s where TraceAI helpers come in: lightweight decorators and context managers that let you manually trace functions, chains, and tools.

Setup: How to Install fi-instrumentation-otel and Initialize FITracer for Manual Tracing

```bash
pip install fi-instrumentation-otel
```

```python
from fi_instrumentation import register, FITracer
from fi_instrumentation.fi_types import ProjectType

trace_provider = register(
    project_type=ProjectType.EXPERIMENT,
    project_name="FUTURE_AGI",
    project_version_name="openai-exp",
)

tracer = FITracer(trace_provider.get_tracer(__name__))
```

Function Decoration: How the Tracer Chain Decorator Automatically Captures Input, Output, and Span Status

```python
@tracer.chain
def my_func(input: str) -> str:
    return "output"
```

✅ Captures input/output automatically

✅ Auto-assigns span status

✅ Ideal for wrapping full functions or chain steps
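Here is a dependency-free sketch that shows roughly what a decorator like tracer.chain does for you: it records the bound inputs, the output and a status for each call. The chain_span decorator and the SPANS list are hypothetical stand-ins. TraceAI emits real OpenTelemetry spans, not dictionaries, and its internals may differ.

```python
import functools
import inspect
from typing import Any, Callable

SPANS: list[dict[str, Any]] = []


def chain_span(func: Callable) -> Callable:
    """Record bound arguments, the return value, and a status for each call."""
    signature = inspect.signature(func)

    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        # Map positional/keyword args to parameter names, e.g. {"input": "hi"}.
        bound = signature.bind(*args, **kwargs)
        span: dict[str, Any] = {
            "name": func.__name__,
            "kind": "CHAIN",
            "input": dict(bound.arguments),
        }
        try:
            result = func(*args, **kwargs)
        except Exception as exc:
            span.update(status="ERROR", error=repr(exc))
            raise
        else:
            span.update(status="OK", output=result)
            return result
        finally:
            SPANS.append(span)  # record the span whether the call succeeded or not

    return wrapper


@chain_span
def my_func(input: str) -> str:
    return "output"
```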

Code Block Tracing: How Context Managers Let You Manually Set Span Attributes, Inputs, and Outputs

```python
from opentelemetry.trace.status import Status, StatusCode

with tracer.start_as_current_span(
    "my-span-name",
    fi_span_kind="chain",
) as span:
    span.set_input("input")
    span.set_output("output")
    span.set_status(Status(StatusCode.OK))
```

✅ Perfect for tracing specific blocks

✅ Lets you set attributes manually (e.g., tool parameters, results)
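The context-manager form is also where span nesting becomes visible: a block opened inside another block becomes its child. Below is a library-free sketch of that behavior. It assumes a contextvars-based "current span", which is a common approach but not necessarily TraceAI’s. The names start_span, ManualSpan and FINISHED, and the attribute keys, are all hypothetical.

As with OpenTelemetry’s default, an exception inside the block marks the span as an error. This sketch also marks clean exits as OK. OpenTelemetry instead leaves them UNSET unless you set a status, which is why the example above calls set_status explicitly.

```python
import contextlib
import contextvars
import itertools
from dataclasses import dataclass, field
from typing import Any, Iterator, Optional

_current_span = contextvars.ContextVar("current_span", default=None)
_span_ids = itertools.count(1)
FINISHED: list = []  # completed spans, in the order they ended


@dataclass
class ManualSpan:
    name: str
    kind: str
    parent_id: Optional[str]
    span_id: str = field(default_factory=lambda: f"s{next(_span_ids)}")
    attributes: dict[str, Any] = field(default_factory=dict)
    status: str = "UNSET"

    def set_input(self, value: Any) -> None:
        self.attributes["input.value"] = value

    def set_output(self, value: Any) -> None:
        self.attributes["output.value"] = value


@contextlib.contextmanager
def start_span(name: str, kind: str = "chain") -> Iterator[ManualSpan]:
    """Open a span whose parent is whichever span is current on entry."""
    parent = _current_span.get()
    span = ManualSpan(name, kind, parent.span_id if parent else None)
    token = _current_span.set(span)  # nested blocks will see this span as parent
    try:
        yield span
        if span.status == "UNSET":
            span.status = "OK"
    except Exception as exc:
        span.status = "ERROR"
        span.attributes["exception.message"] = str(exc)
        raise
    finally:
        _current_span.reset(token)  # restore the previous current span
        FINISHED.append(span)
```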

Span Kinds: How CHAIN, LLM, TOOL, RETRIEVER, AGENT, and EVALUATOR Spans Make Traces Semantically Rich

TraceAI defines semantic span kinds for different components:

| Span Kind | Use Case |
|---|---|
| CHAIN | General logic or function |
| LLM | Model calls |
| TOOL | Tool usage |
| RETRIEVER | Document retrieval |
| EMBEDDING | Embedding generation |
| AGENT | Agent invocation |
| RERANKER | Context reranking |
| GUARDRAIL | Compliance checks |
| EVALUATOR | Evaluation runs |

These kinds make your traces semantically rich and visually distinct in dashboards.
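Because fi_span_kind is passed as a plain string (as in the context-manager example above), a typo such as "retreiver" is easy to make and would give you an inconsistently labeled span. Below is a small sketch that validates kinds against the table and summarizes a trace by kind. The SpanKind enum and the summarize_kinds helper are hypothetical. The lowercase values are an assumption based on the fi_span_kind="chain" usage shown earlier.

```python
from collections import Counter
from enum import Enum


class SpanKind(str, Enum):
    """Span kinds from the table above (hypothetical enum; lowercase values assumed)."""

    CHAIN = "chain"
    LLM = "llm"
    TOOL = "tool"
    RETRIEVER = "retriever"
    EMBEDDING = "embedding"
    AGENT = "agent"
    RERANKER = "reranker"
    GUARDRAIL = "guardrail"
    EVALUATOR = "evaluator"


def summarize_kinds(raw_kinds: list[str]) -> dict[SpanKind, int]:
    """Count spans per kind; SpanKind(...) raises ValueError for unknown kinds."""
    return dict(Counter(SpanKind(kind.strip().lower()) for kind in raw_kinds))
```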

Example Agent Span: How to Decorate Agent Functions for Full Decision Context Visibility

```python
@tracer.agent
def run_agent(input: str) -> str:
    return "processed output"

run_agent("input data")
```

Example Tool Span: How to Decorate Tool Functions with Name, Description, Parameters, and Execution Status

```python
@tracer.tool(
    name="text-cleaner",
    description="Cleans raw text for downstream tasks",
    parameters={"input": "dirty text"},
)
def clean_text(input: str) -> str:
    return input.strip()

clean_text(" hello world ")
```

Every decorator automatically generates spans that include:

- Input and output data

- Tool metadata

- Execution status

All visible in your Future AGI trace view.
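The tool decorator differs from the chain decorator in one way: it takes arguments. That makes it a decorator factory, whose metadata is fixed when the function is defined, while inputs and outputs are captured on each call. Below is a minimal sketch of that pattern. The tool_span factory, the TOOL_SPANS list and the "tool.*" keys are hypothetical, not TraceAI’s API.

```python
import functools
from typing import Any, Callable, Optional

TOOL_SPANS: list[dict[str, Any]] = []


def tool_span(
    name: str, description: str, parameters: Optional[dict[str, Any]] = None
) -> Callable:
    """Decorator factory: static tool metadata plus per-call input/output/status."""

    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: dict[str, Any] = {
                "kind": "TOOL",
                "tool.name": name,
                "tool.description": description,
                "tool.parameters": dict(parameters or {}),
                "input": {"args": args, "kwargs": kwargs},
            }
            try:
                record["output"] = func(*args, **kwargs)
                record["status"] = "OK"
                return record["output"]
            except Exception as exc:
                record["status"] = "ERROR"
                record["error"] = repr(exc)
                raise
            finally:
                TOOL_SPANS.append(record)

        return wrapper

    return decorator


@tool_span(
    name="text-cleaner",
    description="Cleans raw text for downstream tasks",
    parameters={"input": "dirty text"},
)
def clean_text(input: str) -> str:
    return input.strip()
```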

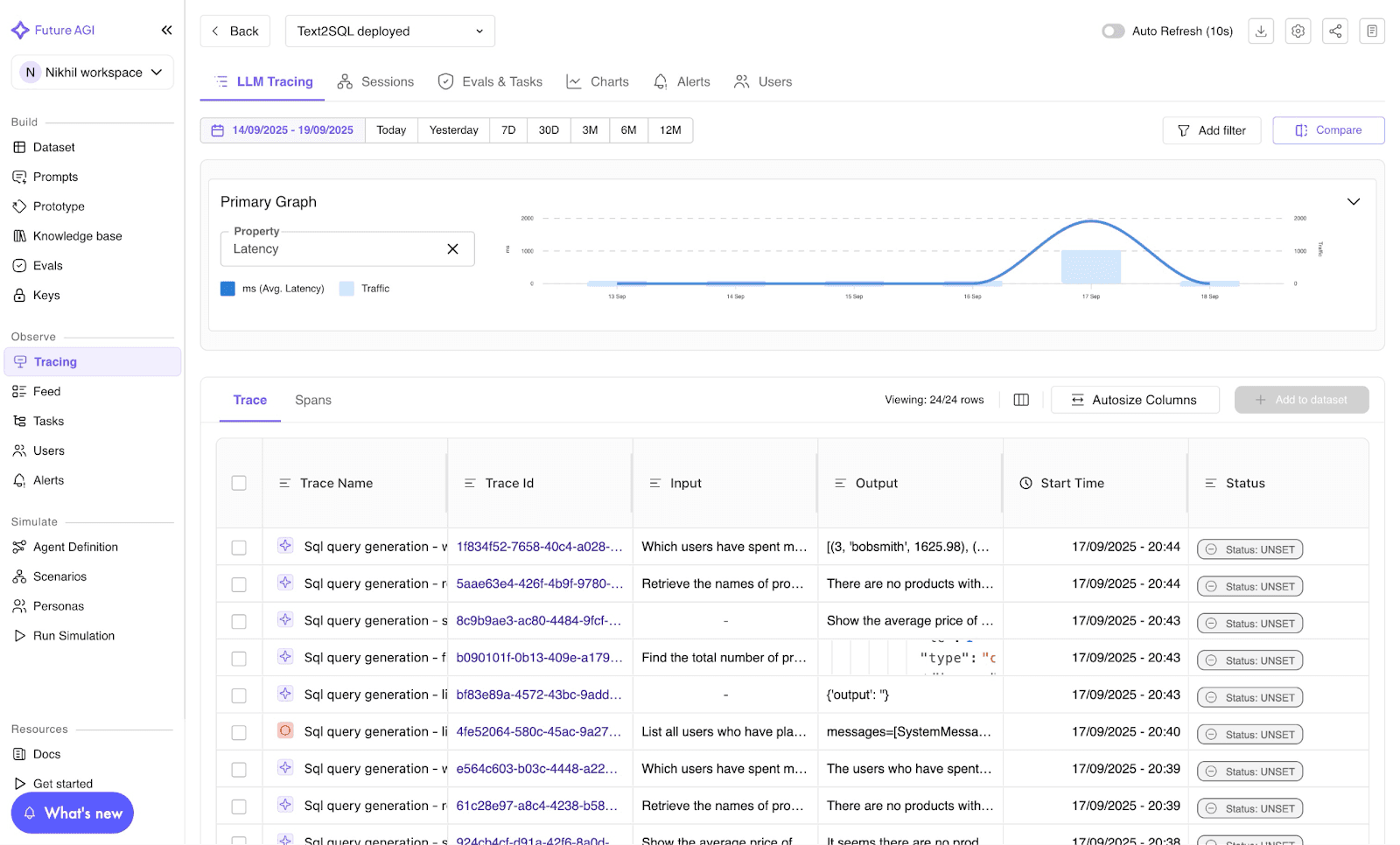

Image 2: Future AGI LLM Tracing Dashboard

Visualizing TraceAI Traces: How to See LLM Calls, Tool Executions, and Agent Spans as Nested Timelines

Connect TraceAI to Future AGI’s Observe platform to visualize spans as nested timelines.

Each node represents a span:

- LLM call → model name, latency, tokens

- Tool execution → input/output details

- Agent span → full decision context

This makes debugging, optimization, and auditing much easier.
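A nested timeline is essentially a depth-first walk of the span tree, ordered by start time. The sketch below renders span dictionaries as indented text. The dictionary keys and render_timeline are hypothetical. Rescanning every span at each level makes it quadratic, which is fine for illustration but not for very large traces.

```python
from typing import Any, Optional


def render_timeline(
    spans: list[dict[str, Any]], parent: Optional[str] = None, depth: int = 0
) -> list[str]:
    """Depth-first render: each span's children are listed under it by start time."""
    children = sorted(
        (span for span in spans if span["parent"] == parent),
        key=lambda span: span["start_ms"],
    )
    lines: list[str] = []
    for span in children:
        indent = "  " * depth
        duration = span["end_ms"] - span["start_ms"]
        lines.append(f"{indent}{span['kind']} {span['name']} ({duration} ms)")
        lines.extend(render_timeline(spans, span["id"], depth + 1))
    return lines


trace = [
    {"id": "a", "parent": None, "kind": "AGENT", "name": "support-agent", "start_ms": 0, "end_ms": 900},
    {"id": "t", "parent": "a", "kind": "TOOL", "name": "search", "start_ms": 420, "end_ms": 880},
    {"id": "l", "parent": "a", "kind": "LLM", "name": "gpt-4o", "start_ms": 10, "end_ms": 410},
]
print("\n".join(render_timeline(trace)))
```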

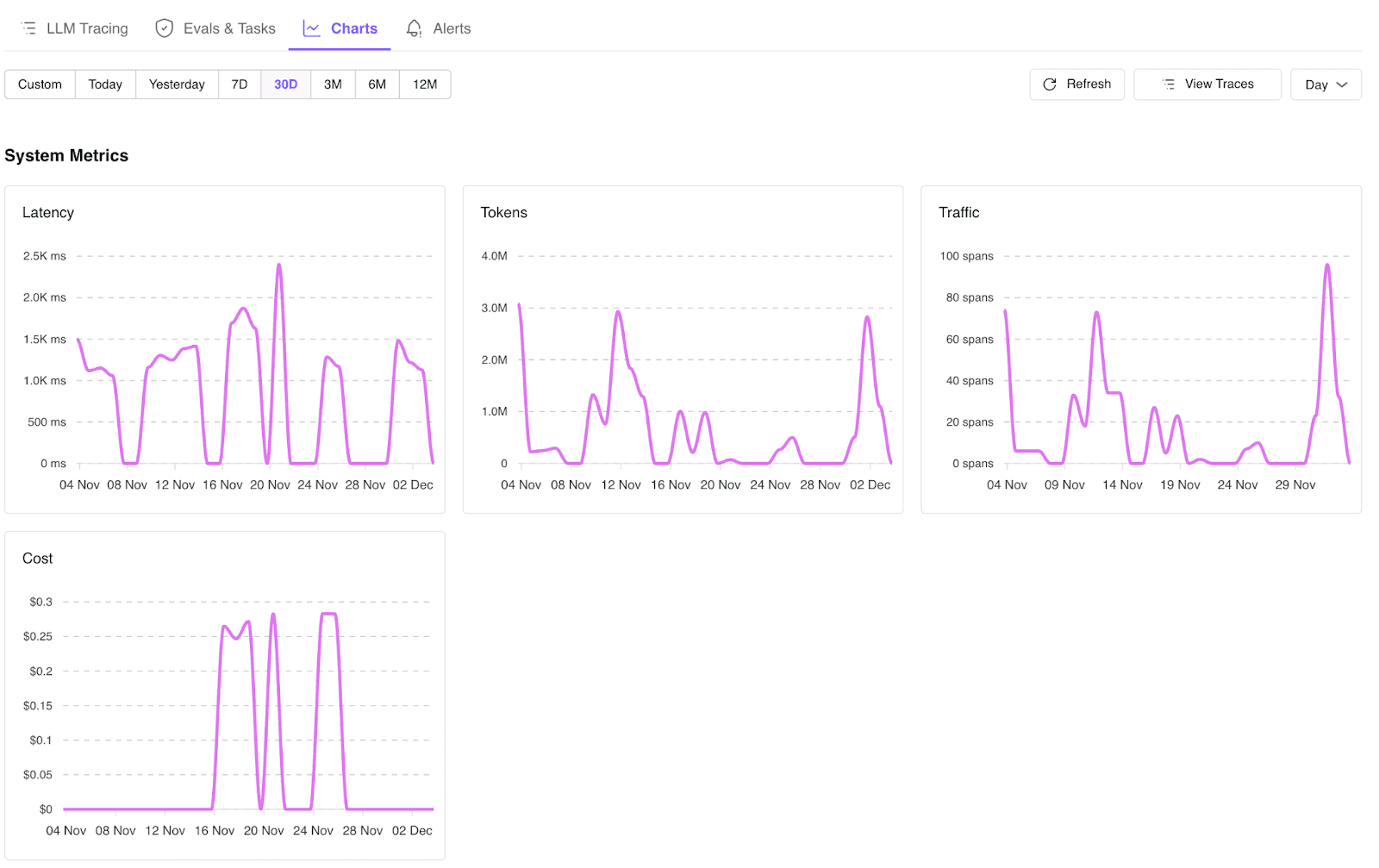

Image 3: TraceAI System Metrics Dashboard

Best Practices for TraceAI Instrumentation: Decorators, Naming, Evaluation Correlation, and Early Setup

✅ Use decorators for simplicity; context managers for precision.

✅ Name spans meaningfully and give them the right kind (e.g., a span named "retriever-step" with fi_span_kind="retriever").

✅ Combine with Future AGI Evaluate to correlate trace performance with eval metrics.

✅ Use environment-based project versions to separate dev/staging/prod.

✅ Add TraceAI early; retrofitting instrumentation later is harder.
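One way to follow the dev/staging/prod advice is to derive the project version name from an environment variable. You then pass the result as project_version_name to register(), as in the setup example earlier. The APP_ENV variable, the allowed values and the version_name_for_env helper below are assumptions for illustration.

```python
import os

VALID_ENVS = ("dev", "staging", "prod")


def version_name_for_env(base: str, env_var: str = "APP_ENV") -> str:
    """Return e.g. 'openai-exp-staging' based on the deployment environment."""
    env = os.environ.get(env_var, "dev").strip().lower()  # default to dev when unset
    if env not in VALID_ENVS:
        raise ValueError(f"{env_var}={env!r} is not one of {VALID_ENVS}")
    return f"{base}-{env}"
```

Failing fast on an unknown value keeps a misconfigured deployment from silently writing traces into the wrong project version.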

Troubleshooting TraceAI: How to Fix Missing Spans, OTEL Backend Issues, and Sensitive Data Exposure

- Missing spans? Verify your trace_provider registration.

- OTEL backend not visible? Ensure your collector endpoint is running.

- Sensitive data exposure? Use redaction utilities before setting inputs/outputs.
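Here is a minimal redaction sketch, assuming simple regular expressions are acceptable for your data. It masks email addresses, OpenAI-style "sk-" keys and North American phone numbers before text reaches span.set_input() or span.set_output(). The redact helper and its patterns are illustrative rather than a TraceAI utility, and real PII redaction usually needs broader, tested rules.

```python
import re

# Illustrative patterns only; order matters (keys are masked before phone numbers).
_PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    (re.compile(r"\bsk-[A-Za-z0-9_-]{16,}\b"), "[API_KEY]"),
    (re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b"), "[PHONE]"),
]


def redact(text: str) -> str:
    """Replace sensitive substrings before they are attached to a span."""
    for pattern, placeholder in _PATTERNS:
        text = pattern.sub(placeholder, text)
    return text
```

In a traced block you would then write span.set_input(redact(user_text)) instead of passing the raw text.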

How to Contribute to TraceAI: Open-Source Community Guidelines and GitHub Contribution Steps

TraceAI is open-source and community-driven.

To contribute:

```bash
git clone https://github.com/future-agi/traceAI
cd traceAI
git checkout -b feature/<your-feature>
```

Then submit a PR!

Join the Future AGI community on LinkedIn, Twitter, or Reddit.

Observability is no longer a hurdle.

The best AI systems don’t just generate answers; they explain how they got there.

TraceAI makes that explanation visible, measurable, and improvable.

Ready to see your agents come alive with full trace visibility?

👉 Get started now: github.com/future-agi/traceAI

Frequently Asked Questions About TraceAI and AI Agent Observability

What is TraceAI in simple terms?

TraceAI is an open-source AI tracing and observability framework built on OpenTelemetry. It provides drop-in instrumentation for LLMs, agents, and AI frameworks so you can capture traces, spans, tokens, prompts, completions, and tool calls from your AI workflows with minimal code changes.

How is TraceAI different from traditional APM or logging tools for AI agent debugging?

Traditional APM tools are optimized for HTTP services and databases, not LLM calls, agents, and retrieval chains. traceAI applies GenAI-specific semantic conventions on top of OpenTelemetry, mapping prompts, completions, tools, RAG steps, and agent actions into structured spans so you can actually debug and optimize AI behavior, not just infrastructure latency.

Which AI frameworks and LLM providers does TraceAI support with out-of-the-box instrumentation?

traceAI ships with 20+ integrations across Python and TypeScript, including OpenAI, Anthropic, AWS Bedrock, Google Vertex AI, Google Generative AI, Mistral, Groq, LangChain, LlamaIndex, CrewAI, AutoGen, SmolAgents, DSPy, Guardrails, Haystack, Vercel AI SDK, Mastra, MCP, and more. You can see the full compatibility matrix in the README and add your own integrations via OpenTelemetry.

How does TraceAI use OpenTelemetry for AI observability and which backends does it support?

traceAI is OpenTelemetry-native. It plugs into your existing OTel setup by registering a tracer provider and exporting spans through standard OTLP exporters (HTTP/gRPC). All LLM, agent, and tool spans follow OpenTelemetry’s GenAI semantic conventions, so you can send the data to any OTel-compatible backend like Jaeger, Datadog, Grafana, or the Future AGI platform.
