What is Tokenization in LLMs? BPE, SentencePiece, tiktoken in 2026

Tokenization explained for 2026 LLMs: BPE, SentencePiece, WordPiece, tiktoken, why tokenizers shape cost, latency, eval scores, and multilingual quality.


A multilingual support agent ships and the bill triples in two weeks. The prompt template did not change. The user mix did. The Brazilian and Indonesian customer cohorts grew 8x, and their prompts tokenize at 1.6x the per-character cost of English in the older cl100k_base vocabulary the team is using. A switch to a model on o200k_base cuts the per-character token cost on those languages by 35%. The team did not touch the prompt, the model size, or the agent logic. The fix was a tokenizer.

This is what tokenization is for in 2026. The tokenizer is the silent boundary between text and the model, and its choice shapes cost, latency, context length, eval scores, and multilingual quality. Most teams treat tokenizers as a black box; production teams that scale do not. This guide is the entry-point explainer covering the major algorithms (BPE, SentencePiece, WordPiece), the canonical libraries (tiktoken, SentencePiece, transformers), and how to count tokens for OpenAI, Anthropic, and Llama models in 2026.

TL;DR: What tokenization is

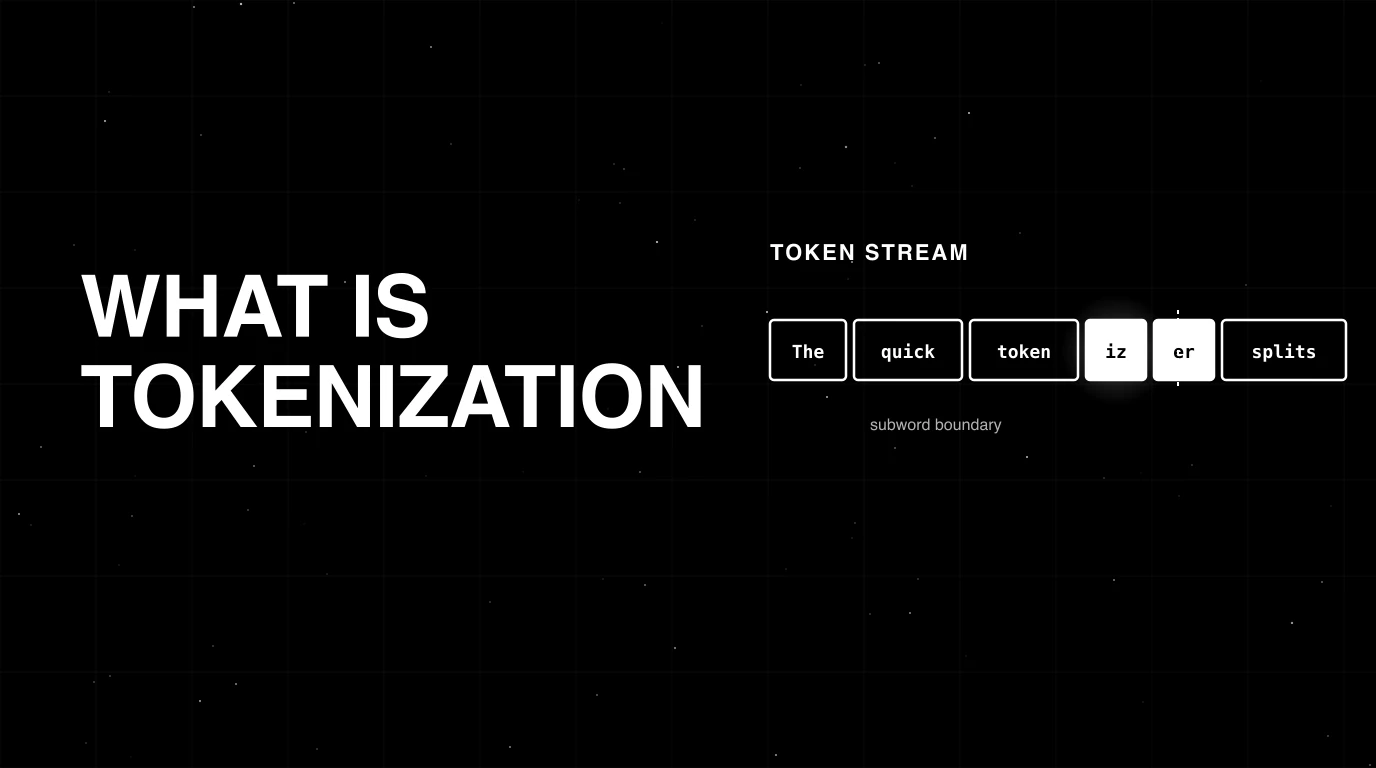

Tokenization is the step that turns text into a sequence of integers an LLM can process. The text is split into tokens (often subwords, sometimes whole words or single bytes), each token maps to an id in a fixed vocabulary, and the model operates on the integer ids during prefill and decode. Detokenization is the reverse. Every LLM has a tokenizer, and the choice shapes context length, cost, latency, and quality on multilingual and code workloads. The dominant algorithm in 2026 is BPE and its variants; SentencePiece remains common across open and multilingual model families for BPE and unigram training; OpenAI’s tiktoken is the canonical token counter for GPT models.

Why tokenization matters in 2026

Three reasons it stopped being an implementation detail.

First, cost. LLM pricing is per-token. A prompt that tokenizes to 1,200 tokens in one vocabulary might tokenize to 800 tokens in another. On a workload processing 10M tokens a day, a 33% tokenization gap is real money. Multilingual workloads make this more acute: older English-heavy vocabularies fragment non-English text 2-3x more than newer multilingual vocabularies.
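The arithmetic in that paragraph can be sketched in a few lines. The token counts (1,200 vs 800) and the 10M tokens/day volume come from the text; the per-token price is a hypothetical placeholder, not any provider's real rate.

```python
def daily_cost(tokens_per_day: float, usd_per_million_tokens: float) -> float:
    """Cost in USD for one day of traffic at a flat per-token price."""
    return tokens_per_day / 1_000_000 * usd_per_million_tokens

# Figures from the paragraph above: the same prompt is 1,200 tokens in
# one vocabulary and 800 tokens in another.
tokens_a, tokens_b = 1_200, 800
reduction = (tokens_a - tokens_b) / tokens_a  # fraction of tokens saved

# HYPOTHETICAL price, for illustration only.
PRICE_USD_PER_MTOK = 2.00
baseline_tokens_per_day = 10_000_000

cost_a = daily_cost(baseline_tokens_per_day, PRICE_USD_PER_MTOK)
cost_b = daily_cost(baseline_tokens_per_day * (1 - reduction), PRICE_USD_PER_MTOK)

print(f"token reduction: {reduction:.1%}")
print(f"daily cost: ${cost_a:.2f} -> ${cost_b:.2f}")
```

The saving scales linearly with volume, so the same one-third gap matters far more at 10B tokens a day than at 10M.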

Second, context. Context windows in 2026 range from 32K on older models to 1M on long-context tiers of Gemini 2.5 and Claude Sonnet 4/4.5; GPT-5 sits at 400K. The window is in tokens, not characters. A 200K-character document fits comfortably in a 128K window in English with cl100k_base; the same document in Hindi with the same tokenizer can spill past the cap. Tokenization decides whether your RAG chunk fits.
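A rough fit check for the scenario above might look like the sketch below. The tokens-per-character ratios are illustrative assumptions: 0.25 is the common "about 4 characters per token" rule of thumb for English, and 0.8 is a made-up stand-in for a heavily fragmented script. Measure real ratios with the target model's tokenizer before relying on anything like this.

```python
import math

def estimated_tokens(n_chars: int, tokens_per_char: float) -> int:
    """Estimate the token count from a character count and a measured ratio."""
    return math.ceil(n_chars * tokens_per_char)

def fits_in_window(n_chars: int, tokens_per_char: float,
                   window_tokens: int, reserve_for_output: int = 0) -> bool:
    """True if the estimated prompt plus reserved output tokens fit the window."""
    needed = estimated_tokens(n_chars, tokens_per_char) + reserve_for_output
    return needed <= window_tokens

DOC_CHARS = 200_000
WINDOW = 128_000

# ASSUMED ratios, for illustration only.
RATIO_ENGLISH = 0.25
RATIO_FRAGMENTED = 0.8

print(fits_in_window(DOC_CHARS, RATIO_ENGLISH, WINDOW))     # English-like text
print(fits_in_window(DOC_CHARS, RATIO_FRAGMENTED, WINDOW))  # fragmented script
```

The `reserve_for_output` parameter is there because the completion shares the same window as the prompt.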

Third, eval. BLEU and ROUGE are computed over token n-grams, so their scores depend on how the text is split. Schema-validation evals depend on the tokenizer not splitting JSON keys awkwardly. Refusal-rate and safety classifiers were trained with one tokenizer and may behave differently when the input was tokenized with another. Tokenizer drift is a quiet source of eval regression in 2026 stacks that swap models without re-tokenizing the eval set.

Tokenization is no longer a black box detail. It is a parameter that shapes the dollars, the seconds, and the score.

The major tokenization algorithms

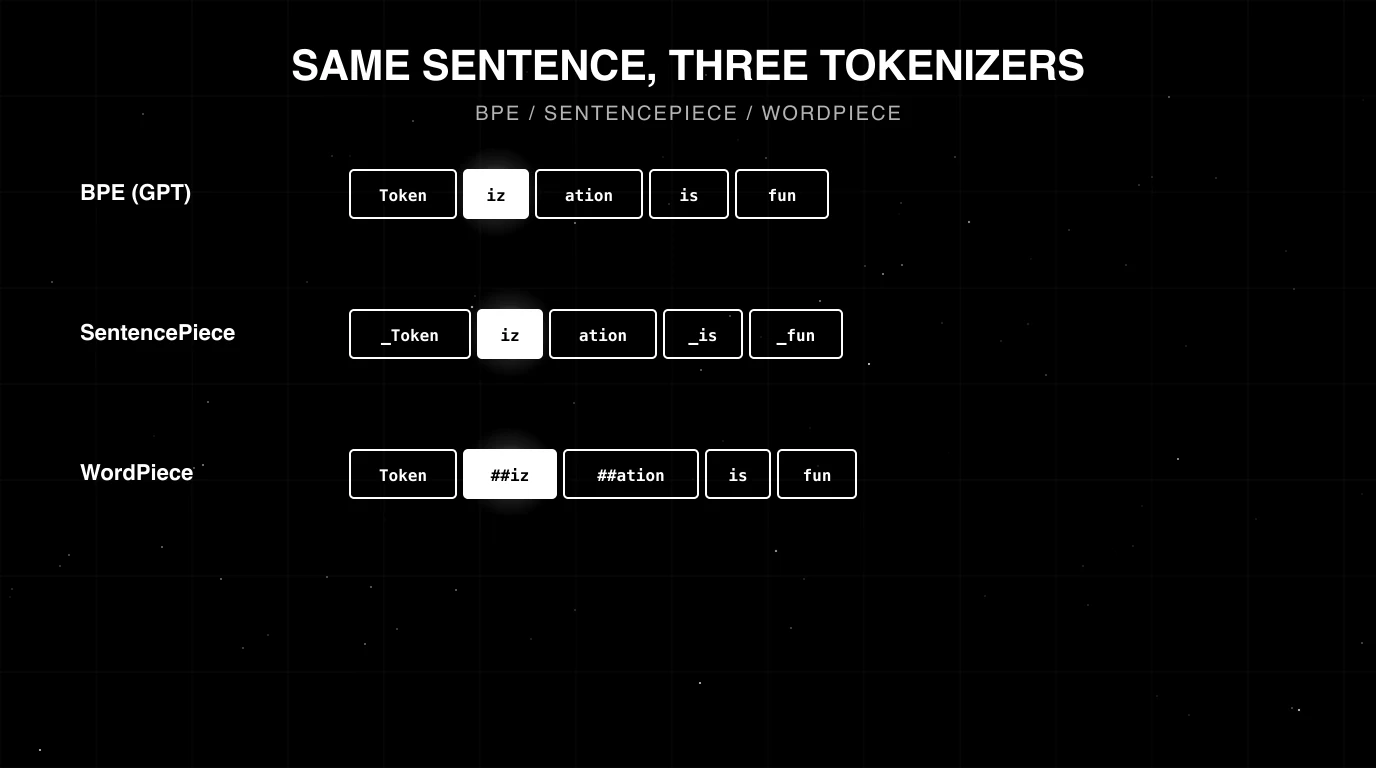

Byte Pair Encoding (BPE)

BPE starts from a vocabulary of single bytes or characters, then iteratively merges the most frequent adjacent pair until the vocabulary hits the target size. The resulting vocabulary contains common whole words (“the”, “and”), useful subword fragments (“tion”, “ed”, “ing”), and rare ids that map to single bytes for handling unseen characters.
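The merge loop described above can be sketched as a toy character-level trainer. This is a minimal illustration, not how production tokenizers are built: the word frequencies are invented, ties are broken by comparing the pairs themselves (an arbitrary choice for determinism), and real implementations add pre-tokenization, byte-level alphabets, and much faster data structures.

```python
from collections import Counter

def train_bpe(words: dict[str, int], num_merges: int):
    """Learn BPE merges from a {word: frequency} dict (toy sketch)."""
    # Each word starts as a tuple of single-character symbols.
    corpus = {tuple(w): f for w, f in words.items()}
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        # Pick the most frequent pair; ties go to the lexicographically larger pair.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        # Rewrite every word with the chosen pair fused into one symbol.
        new_corpus = {}
        for symbols, freq in corpus.items():
            out, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    out.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    out.append(symbols[i])
                    i += 1
            key = tuple(out)
            new_corpus[key] = new_corpus.get(key, 0) + freq
        corpus = new_corpus
    return merges, corpus

# Invented toy corpus.
merges, corpus = train_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, num_merges=4)
print(merges)
print(corpus)
```

After four merges the frequent suffix "est" and the word "low" have each become a single symbol, which is the behaviour the paragraph describes: common words and fragments earn their own ids while rare material stays split.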

GPT-2 popularised byte-level BPE, where the alphabet is the 256 bytes rather than Unicode characters. This avoids the unknown-token problem entirely: any byte sequence can be tokenized. GPT-3, GPT-4, GPT-5, and the Llama 3+ family use byte-level BPE variants.
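To see why a 256-byte alphabet removes the unknown-token problem, consider the sketch below. It only shows the base alphabet (UTF-8 bytes as ids 0 to 255) and the lossless round trip; it does not apply any merges, and the helper names are illustrative.

```python
def to_byte_ids(text: str) -> list[int]:
    """Map text to its UTF-8 bytes; every id is in range(256)."""
    return list(text.encode("utf-8"))

def from_byte_ids(ids: list[int]) -> str:
    """Inverse of to_byte_ids for valid UTF-8 sequences."""
    return bytes(ids).decode("utf-8")

samples = ["hello", "h\u00e9llo", "\u4f60\u597d", "\U0001F642"]  # ASCII, accented, Chinese, emoji
for s in samples:
    ids = to_byte_ids(s)
    # No input falls outside the 256-symbol base alphabet.
    assert all(0 <= i < 256 for i in ids)
    # Decoding recovers the original text exactly.
    assert from_byte_ids(ids) == s
    print(f"{s!r}: {len(s)} chars -> {len(ids)} byte ids")
```

Non-ASCII text costs more than one byte per character before any merges are applied. Scripts that are under-represented in the merge table therefore end up with longer token sequences.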

The original BPE-for-NMT paper is Sennrich, Haddow, and Birch’s 2016 Neural Machine Translation of Rare Words with Subword Units. The byte-level extension is documented in Radford et al.’s GPT-2 paper.

SentencePiece

SentencePiece is a tokenizer library and training framework released by Google in 2018. Two design choices distinguish it.

First, it trains directly on raw text and treats whitespace as a normal symbol, escaped as U+2581 (printed as ▁). This matters for languages without whitespace (Chinese, Japanese, Thai) where a whitespace assumption breaks tokenization. SentencePiece works the same across all languages without separate per-language preprocessing.
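The whitespace-escaping idea can be illustrated without the library itself. This is a simplified sketch with made-up helper names: the real SentencePiece also applies Unicode normalization and, by default, adds a dummy whitespace prefix, neither of which is modelled here.

```python
WS = "\u2581"  # U+2581, printed as "▁"

def escape_whitespace(text: str) -> str:
    """Replace spaces with the visible marker so whitespace is an ordinary symbol."""
    return text.replace(" ", WS)

def detokenize(pieces: list[str]) -> str:
    """Concatenate pieces and turn the marker back into spaces."""
    return "".join(pieces).replace(WS, " ")

escaped = escape_whitespace("Hello world.")
print(escaped)

# A HYPOTHETICAL segmentation of the escaped string into pieces.
pieces = ["Hello", WS + "wor", "ld", "."]
print(detokenize(pieces))
```

Because the marker travels inside the pieces, detokenization is plain string concatenation and needs no language-specific rules about where spaces belong.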

Second, it supports both BPE and unigram language model training. The unigram variant is a probabilistic model: it starts with a large vocabulary, computes the likelihood of each token under a unigram LM, and prunes the lowest-likelihood tokens until the vocabulary hits the target size. The unigram variant is what Gemma uses; Llama 2 uses the BPE variant.
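The scoring side of the unigram model can be sketched as a Viterbi search: given per-token probabilities, find the segmentation with the highest total log-probability. This shows only segmentation under a fixed model, not the training or pruning loop, and the vocabulary and probabilities below are invented for illustration.

```python
import math

def viterbi_segment(text: str, logp: dict[str, float]):
    """Best segmentation of text under a unigram model (max sum of token log-probs)."""
    n = len(text)
    best = [-math.inf] * (n + 1)  # best[i]: best score for text[:i]
    back = [0] * (n + 1)          # back[i]: start index of the last token on that path
    best[0] = 0.0
    for end in range(1, n + 1):
        for start in range(end):
            piece = text[start:end]
            if piece in logp and best[start] + logp[piece] > best[end]:
                best[end] = best[start] + logp[piece]
                back[end] = start
    if best[n] == -math.inf:
        raise ValueError("text cannot be segmented with this vocabulary")
    # Walk the back-pointers from the end to recover the pieces.
    pieces, i = [], n
    while i > 0:
        pieces.append(text[back[i]:i])
        i = back[i]
    return pieces[::-1], best[n]

# HYPOTHETICAL token probabilities.
probs = {"un": 0.10, "related": 0.05, "rel": 0.02, "ated": 0.02}
logp = {tok: math.log(p) for tok, p in probs.items()}

pieces, score = viterbi_segment("unrelated", logp)
print(pieces, round(score, 3))
```

Here "un" + "related" beats "un" + "rel" + "ated" because its product of probabilities is larger. Training repeatedly rescoring the corpus this way is what lets the model decide which tokens can be pruned at little cost.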

The original paper is Kudo and Richardson’s 2018 SentencePiece: A simple and language independent subword tokenizer and detokenizer for Neural Text Processing. The unigram variant is in Kudo’s Subword Regularization.

WordPiece

WordPiece is the algorithm BERT uses. It is a greedy longest-match subword tokenizer. The vocabulary is trained similarly to BPE but with a likelihood-based merge criterion. Subword pieces are prefixed with ## to mark continuation. WordPiece dominated several encoder-only models (BERT, DistilBERT, ELECTRA); RoBERTa uses byte-level BPE rather than WordPiece. WordPiece has been largely supplanted by BPE for generative LLMs.
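Greedy longest-match tokenization with ## continuation markers can be sketched as follows. The vocabulary is a tiny invented one, and the function covers only the inference-time matching for a single word, not vocabulary training or the whitespace and punctuation splitting that comes before it.

```python
def wordpiece_tokenize(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Split one word into the longest matching vocabulary pieces, left to right."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink from the right.
        while end > start:
            piece = word[start:end]
            if start > 0:
                piece = "##" + piece  # mark pieces that continue a word
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            # No piece fits at this position: the whole word becomes unknown.
            return [unk]
        pieces.append(match)
        start = end
    return pieces

# HYPOTHETICAL toy vocabulary.
vocab = {"un", "##aff", "##able", "play", "##ing", "##ed"}

print(wordpiece_tokenize("unaffable", vocab))
print(wordpiece_tokenize("playing", vocab))
print(wordpiece_tokenize("xyz", vocab))
```

The unknown-token fallback in the last call is the weakness that byte-level BPE avoids.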

Character and byte tokenization

Single-character or byte tokenizers exist (Charformer, ByT5, byte-level fallback inside BPE) but rarely serve as the primary tokenizer for production LLMs. The token sequence is much longer at the same character count, so cost and latency are higher; the upside is universal language coverage with no vocabulary mismatch. They are used in some specialised models for code, biology, or low-resource languages.

Tokenizer-by-model: who uses what in 2026

| Model family | Tokenizer | Vocab size | Notes |
|---|---|---|---|
| GPT-3 (base completion models) | tiktoken p50k_base / r50k_base (BPE) | ~50K | Older GPT-2-derived encoding |
| GPT-3.5, GPT-4, GPT-4-turbo | tiktoken cl100k_base (BPE) | ~100K | English-heavy; fragments non-English text |
| GPT-4o, GPT-5 | tiktoken o200k_base (BPE) | ~200K | Multilingual-friendly; fewer tokens on Korean, Chinese, Hindi than cl100k_base |
| Claude 3, Claude 3.5, Claude Sonnet 4 | proprietary (BPE-style) | undisclosed | No public local tokenizer; use count_tokens API |
| Llama 2 | SentencePiece BPE | 32K | Older, smaller vocab |
| Llama 3, Llama 3.1, Llama 4 | tiktoken-style (BPE) | 128K (Llama 3.x); ~202K (Llama 4) | Larger vocab; better multilingual coverage |
| Gemma | SentencePiece (unigram) | 256K | Multilingual-first |
| Gemini 2.5 | proprietary | undisclosed | Use Vertex AI SDK count |
| Mistral, Mixtral | SentencePiece BPE | 32K-128K | Varies by version |
| Qwen 2, Qwen 3 | BPE | ~150K | Chinese-friendly |
| BERT, DistilBERT, ELECTRA | WordPiece | 30K | Encoder-only |
| RoBERTa | byte-level BPE | 50K | Encoder-only; uses GPT-2-style BPE |

For canonical sources see the tiktoken model -> encoding table, the Llama tokenizer page, and Anthropic’s token counting docs.

How to count tokens correctly in 2026

Three rules.

Rule 1. Use the official tokenizer for the target model. Approximations across vocabularies are wrong by 10-40% on non-English text and 20-60% on code.

Rule 2. Pin the tokenizer version. tiktoken adds new encodings; if you upgrade the library and the model’s encoding name changed, your historical cost dashboards now compare two different vocabularies.

Rule 3. Count both prompt and completion. Pricing is asymmetric (output is typically 3-5x the input price), so track them separately. Most gateways (FutureAGI Agent Command Center, Helicone, Portkey, LiteLLM) attribute both natively.
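A minimal sketch of why Rule 3 asks for separate tracking: with asymmetric pricing, a small number of completion tokens can account for a large share of the bill. The `Usage` class and both prices are hypothetical; the 4x output multiplier was picked from inside the 3-5x range mentioned above.

```python
from dataclasses import dataclass

@dataclass
class Usage:
    """Token counts for one request (hypothetical container)."""
    input_tokens: int
    output_tokens: int

def request_cost(usage: Usage, usd_per_mtok_in: float, usd_per_mtok_out: float) -> float:
    """Cost in USD with separate input and output prices per million tokens."""
    return (usage.input_tokens * usd_per_mtok_in
            + usage.output_tokens * usd_per_mtok_out) / 1_000_000

# HYPOTHETICAL prices: output costs 4x input.
PRICE_IN, PRICE_OUT = 1.00, 4.00

usage = Usage(input_tokens=4_000, output_tokens=500)
cost = request_cost(usage, PRICE_IN, PRICE_OUT)
output_share_of_tokens = usage.output_tokens / (usage.input_tokens + usage.output_tokens)
output_share_of_cost = (usage.output_tokens * PRICE_OUT / 1_000_000) / cost

print(f"cost per request: ${cost:.4f}")
print(f"output is {output_share_of_tokens:.0%} of tokens but {output_share_of_cost:.0%} of cost")
```

A dashboard that reports only total tokens would hide that split.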

Code patterns:

```python
# OpenAI (tiktoken)
import tiktoken

enc = tiktoken.encoding_for_model("gpt-4o")  # resolves to o200k_base
n_tokens = len(enc.encode("Hello, world!"))

# Anthropic (count_tokens API; needs ANTHROPIC_API_KEY in the environment)
import anthropic

client = anthropic.Anthropic()
n_tokens = client.messages.count_tokens(
    model="claude-sonnet-4-20250514",
    messages=[{"role": "user", "content": "Hello, world!"}],
).input_tokens

# Llama 3 (transformers; the model repo is gated on Hugging Face)
from transformers import AutoTokenizer

tok = AutoTokenizer.from_pretrained("meta-llama/Meta-Llama-3-8B")
# encode() adds the begin-of-text special token by default
n_tokens = len(tok.encode("Hello, world!"))
```

For multi-message chat prompts (system + user + assistant turns), the model adds special tokens around each message. The count endpoint and the tokenizer’s chat template include those; encoding the raw message text alone does not, and neither do ad-hoc word-count approximations. Use the official path.

Common mistakes when working with tokenization

- Approximating tokens as ~4 characters or ~0.75 words. Wrong by 30%+ on non-English, code, JSON, or any structured text. Use the actual tokenizer.

- Using one tokenizer to estimate cost across providers. OpenAI, Anthropic, and Llama vocabularies are different; cross-vocabulary estimates are wrong.

- Not counting system messages and tool definitions. A 4K-token system prompt is 4K tokens on every call. Many cost dashboards quietly omit it.

- Letting the tokenizer drift between eval and production. If the eval set was tokenized with cl100k_base and the production model is on o200k_base, BLEU/ROUGE numbers do not transfer.

- Treating multilingual workloads as English. Token cost per character can be 2-3x higher in non-English; budget accordingly.

- Forgetting BOM, zero-width, and control characters. They tokenize, often as their own tokens, often unexpectedly. Strip or normalise input.

- Using SentencePiece BPE settings from a Llama 2 codepath on Llama 3. Llama 3 changed tokenizers. Old code paths silently miscount, typically overcounting because the smaller 32K vocabulary splits the same text into more tokens.

- Counting tokens client-side and trusting the result for billing reconciliation. Use the provider’s reported usage.input_tokens / usage.output_tokens in the response. Client-side counts are estimates.
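For the BOM, zero-width, and control-character item in the list above, a conservative cleaning pass might look like this sketch. The function name and the exact set of stripped code points are assumptions. Zero-width joiner and non-joiner (U+200D, U+200C) are deliberately kept because they carry meaning in emoji sequences and in scripts such as Persian.

```python
import unicodedata

# Invisible code points stripped by this sketch (an assumed, deliberately small set).
INVISIBLE = {
    "\ufeff",  # byte order mark / zero-width no-break space
    "\u200b",  # zero-width space
    "\u2060",  # word joiner
}
KEEP_CONTROLS = {"\n", "\t", "\r"}

def clean_for_tokenizer(text: str) -> str:
    """Normalise to NFC and drop invisible and control characters."""
    text = unicodedata.normalize("NFC", text)
    out = []
    for ch in text:
        if ch in INVISIBLE:
            continue
        # "Cc" is the Unicode category for control characters.
        if unicodedata.category(ch) == "Cc" and ch not in KEEP_CONTROLS:
            continue
        out.append(ch)
    return "".join(out)

raw = "\ufeffhello\u200b world\x00\n"
print(repr(clean_for_tokenizer(raw)))
```

Run the same cleaning on both the text you count and the text you send. Otherwise the counted prompt and the billed prompt differ.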

What changed in tokenization in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2024 | OpenAI shipped o200k_base for GPT-4o family | Better multilingual + code; shrank token counts on non-English ~30% |
| 2024 | Llama 3 moved from 32K SentencePiece to 128K tiktoken-style | Multilingual coverage on the OSS side caught up |
| 2025 | Anthropic published the count_tokens Messages API | Production-grade token counting without proxying through the model |
| 2025 | Gemma 2/3 shipped with 256K SentencePiece unigram | OSS multilingual tokenizers crossed 200K |
| 2026 | Most LLM gateways and observability backends report both prompt and completion tokens via OTel gen_ai.usage.* attributes | Cost attribution per-tenant, per-route, per-feature became standard |

How to actually pick and operate a tokenizer in 2026

- Pick the model first. The tokenizer comes with the model; you do not pick a tokenizer in isolation.

- Audit per-language efficiency. Run 10K representative samples through the tokenizer; record tokens-per-character by language. Surprising regressions hide here.

- Pin the tokenizer version in CI. Treat it like a model version.

- Wire token counts into the gateway. Per-tenant, per-feature, per-route attribution. See Best LLM Gateways in 2026.

- Use provider-reported usage for billing. Client-side counts are estimates; provider usage.* values are ground truth.

- Track tokens-per-character drift. A new model release can shift the tokenizer; the cost dashboard will reflect it before the on-call notices.

- Rebuild eval sets when changing tokenizer. Token-based metrics are not portable across vocabularies.

For depth, see LLM Cost Optimization and LLM Cost Tracking Best Practices in 2026.
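The per-language audit from the list above can be sketched as a small aggregation. The `encode` argument is whatever tokenizer you are auditing. The UTF-8 byte encoder below is only a stand-in so the example runs without external dependencies, and the three samples are invented.

```python
from collections import defaultdict
from typing import Callable, Iterable

def tokens_per_char_by_language(
    samples: Iterable[tuple[str, str]],
    encode: Callable[[str], list],
) -> dict[str, float]:
    """Aggregate tokens-per-character for (language, text) samples."""
    tokens = defaultdict(int)
    chars = defaultdict(int)
    for lang, text in samples:
        tokens[lang] += len(encode(text))
        chars[lang] += len(text)
    # Skip languages whose samples were all empty strings.
    return {lang: tokens[lang] / chars[lang] for lang in tokens if chars[lang] > 0}

def byte_encode(text: str) -> list[int]:
    """STAND-IN encoder: one 'token' per UTF-8 byte. Swap in the real tokenizer."""
    return list(text.encode("utf-8"))

# Invented samples; a real audit would use thousands per language.
samples = [("en", "hello"), ("en", "world"), ("pt", "a\u00e7\u00e3o")]
ratios = tokens_per_char_by_language(samples, byte_encode)
print(ratios)
```

Storing these ratios per tokenizer version also gives a baseline for the drift tracking the list mentions: rerun the audit after a model change and compare.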

How to use this with FAGI

FutureAGI is the production-grade gateway and observability stack for teams operating tokenizers in production. The Agent Command Center is itself a BYOK gateway across 100+ providers that attributes prompt and completion tokens separately at every span, with per-tenant, per-feature, per-route, per-prompt-version cuts. Provider usage.* values land in span attributes natively; tokens-per-character drift is a chart, not a CSV join. Cost gates run alongside quality gates in CI: a regression that ships 30% more tokens for the same task blocks the merge.

Span-attached evals via turing_flash (50 to 70 ms p95 for guardrail screening, about 1 to 2 seconds for full eval templates) score quality on every sampled trace, so the join “did the longer prompt actually move the rubric?” is one query rather than three vendor exports. The same plane carries 50+ eval metrics, persona-driven simulation, 18+ guardrails, and Apache 2.0 traceAI instrumentation on one self-hostable surface. Pricing starts free with a 50 GB tracing tier.

Sources

- Sennrich et al. - Neural Machine Translation of Rare Words with Subword Units (2016)

- Kudo and Richardson - SentencePiece (2018)

- Kudo - Subword Regularization (2018)

- tiktoken GitHub repo

- tiktoken model encoding table

- SentencePiece GitHub repo

- Anthropic token counting docs

- Llama tokenizer card

- GPT-2 paper (byte-level BPE)

- OpenTelemetry GenAI semantic conventions

Series cross-link

Read next: LLM Cost Optimization, LLM Cost Tracking Best Practices in 2026, Best Token Cost Tracking Tools in 2026, Embeddings for LLMs

Frequently asked questions

What is tokenization in plain terms?

What is BPE and why is it the dominant subword algorithm?

What is SentencePiece, and how does it differ from BPE?

What is tiktoken?

Why does tokenization affect cost and latency?

How does tokenization break on non-English text?

How does tokenization affect eval scores?

What is the right way to count tokens for OpenAI, Anthropic, and Llama models in 2026?
