Portkey Alternatives in 2026: 6 LLM Gateway and Observability Tools

FutureAGI, LiteLLM, Helicone, OpenRouter, Cloudflare AI Gateway, and Kong AI as Portkey alternatives in 2026. Pricing, OSS license, routing, tradeoffs.


You are probably here because Portkey has been your LLM gateway and you want to compare alternatives on price, OSS posture, eval depth, and self-hosting. This guide walks through six platforms teams move to from Portkey in 2026. They range from a Python proxy at the SDK boundary to a unified open-source stack that ties the gateway to evals, simulation, and guardrails. The recommendations are honest about where each alternative falls short.

TL;DR: Best Portkey alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified gateway, eval, observe, simulate, optimize, guard | FutureAGI | One stack across pre-prod and prod | Free self-hosted (OSS), hosted from $0 + usage | Apache 2.0 |
| Python proxy at the SDK boundary | LiteLLM | Minimal moving parts | Open source free, Cloud usage-based | MIT |
| Gateway-first request analytics | Helicone | Fast OpenAI base URL swap | Hobby free, Pro $79/mo, Team $799/mo | Apache 2.0 |
| Hosted unified API across many providers | OpenRouter | One API key, many models | Pay-as-you-go credits | Closed platform, OSS clients |
| Cloudflare-native gateway | Cloudflare AI Gateway | Free tier and fast deploy | Bundled with Cloudflare Workers | Closed |
| Enterprise API gateway with AI plugins | Kong AI Gateway | Reuses existing Kong infra | Bundled with Kong subscription | OSS Kong Gateway |

If you only read one row: pick FutureAGI when the gateway and the rest of the platform should share a span tree, LiteLLM when the simplest path is a Python proxy, and Helicone when changing the base URL is the fastest fix. For deeper reads, see our LLM Gateways guide, the Agent Command Center page, and the Portkey integration guide.

Who Portkey is and where it stops

Portkey is a hosted AI gateway with provider routing, fallbacks, retries, semantic and simple caching, rate limits, budgets, virtual keys, prompt management, and observability. The Portkey Gateway repo is MIT and self-hostable. The Portkey hosted product adds the control plane, dashboards, prompt management UI, governance, and enterprise features. The docs describe Python and TypeScript SDKs, OpenAI-compatible endpoints, and integrations with Anthropic, Google Vertex, AWS Bedrock, Azure OpenAI, Mistral, Cohere, Groq, Together, and others.

Portkey pricing is straightforward to read at first. The free tier supports 10,000 recorded logs per month and basic features. Production starts at $49 per month with 100,000 recorded logs, with overage of roughly $9 per additional 100,000 logs. Enterprise is custom and includes private cloud, SAML SSO, SOC 2, HIPAA, on-prem deployment, and dedicated support. Verify against the current pricing page, since limits and feature gates change.
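To see how the Production tier scales with volume, here is a minimal cost sketch. It assumes the figures quoted above ($49 base, 100,000 included logs, $9 per additional 100,000) and that overage is billed per started block of 100,000 logs. The rounding rule is an assumption to check against Portkey's live pricing page.

```python
import math

# Assumed figures from the pricing paragraph above; verify before relying on them.
BASE_FEE_USD = 49.0          # Production plan, per month
INCLUDED_LOGS = 100_000      # recorded logs covered by the base fee
OVERAGE_USD_PER_BLOCK = 9.0  # charge per additional block of logs
OVERAGE_BLOCK = 100_000      # overage block size


def portkey_production_monthly_cost(recorded_logs: int) -> float:
    """Estimate the monthly platform bill, assuming each started block of
    100,000 logs over the allowance is billed in full (an assumption)."""
    extra = max(0, recorded_logs - INCLUDED_LOGS)
    blocks = math.ceil(extra / OVERAGE_BLOCK)
    return BASE_FEE_USD + blocks * OVERAGE_USD_PER_BLOCK


for logs in (50_000, 100_000, 1_000_000):
    print(f"{logs:>9,} logs -> ${portkey_production_monthly_cost(logs):,.2f}")
```

At 1,000,000 logs this gives $49 plus nine overage blocks at $9, or $130, before provider token spend, which is paid to the model providers and not included here.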

Be fair about what Portkey does well. The gateway features are deep: virtual keys for budget isolation, semantic cache for prompt deduplication, fallback chains across providers, retries with backoff, prompt management with version history, and an observability dashboard with logs, metrics, and traces. The recent Guardrails 2.0 release moved Portkey into the policy enforcement space alongside the gateway. The hosted UX is polished, the documentation is clean, and the enterprise governance story (virtual keys, budgets, role-based access) is mature.

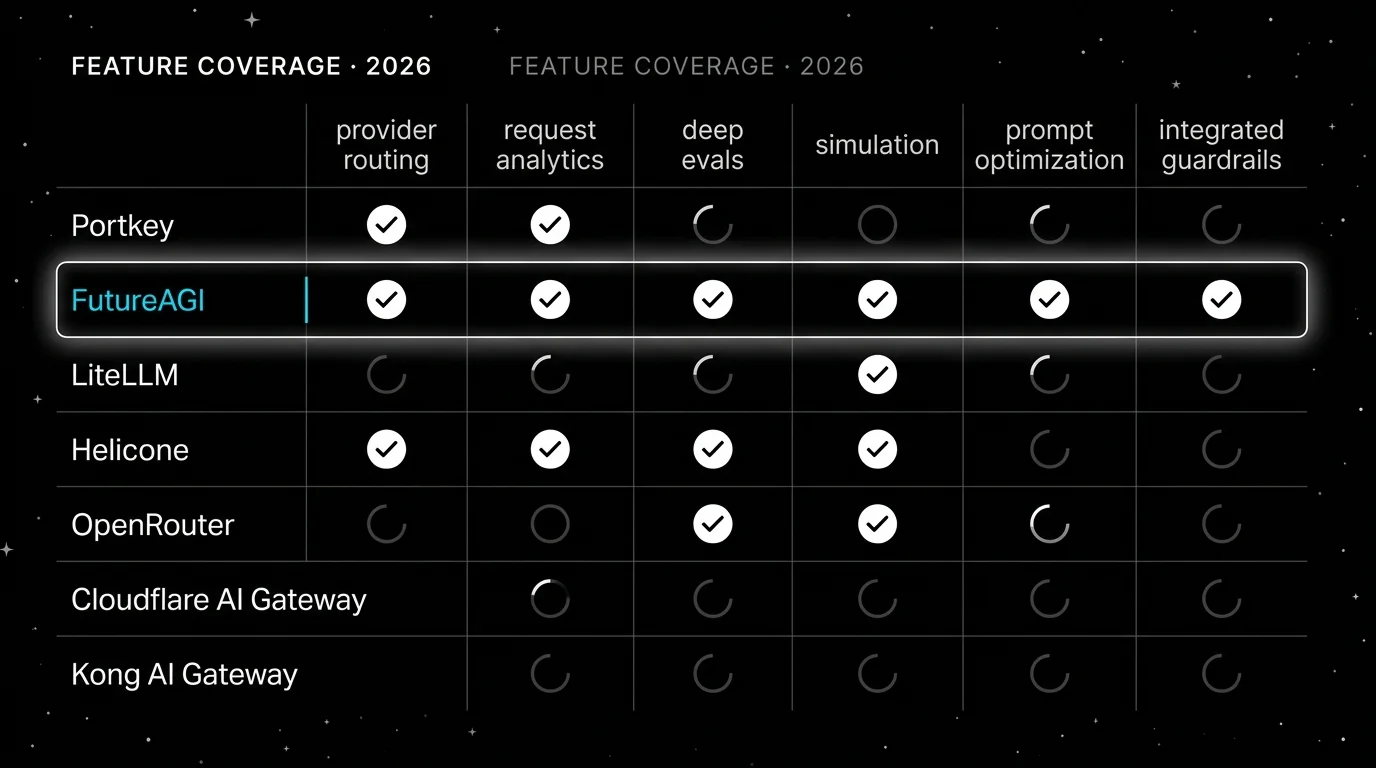

The honest gap is depth on evals and simulation. Portkey has eval surfaces around prompt experiments, but it is not a Braintrust-class closed-loop eval product or a FutureAGI-class unified eval-and-observability stack. There is no first-party simulated-user product for synthetic personas across text and voice. There is no prompt optimization loop that takes failing traces and ships a versioned prompt back through CI gates. There is no integrated dataset versioning for production trace-to-dataset workflows. Each of those is a real reason to compare alternatives even before pricing comes in.

The 6 Portkey alternatives compared

1. FutureAGI: Best for unified gateway + eval + observe + simulate + optimize + guard

Open source. Self-hostable. Hosted cloud option.

Most tools in this list pick one job. Portkey does the hosted gateway. LiteLLM does the Python proxy. Helicone does request analytics. OpenRouter does a credit-based unified API. Cloudflare AI Gateway does Cloudflare-native routing. Kong AI Gateway extends an enterprise API gateway with AI plugins. FutureAGI does the loop, with the gateway as one layer of it. The Agent Command Center gateway and the traceAI tracing layer share the same span tree, eval contract, prompt registry, and guardrail policy engine.

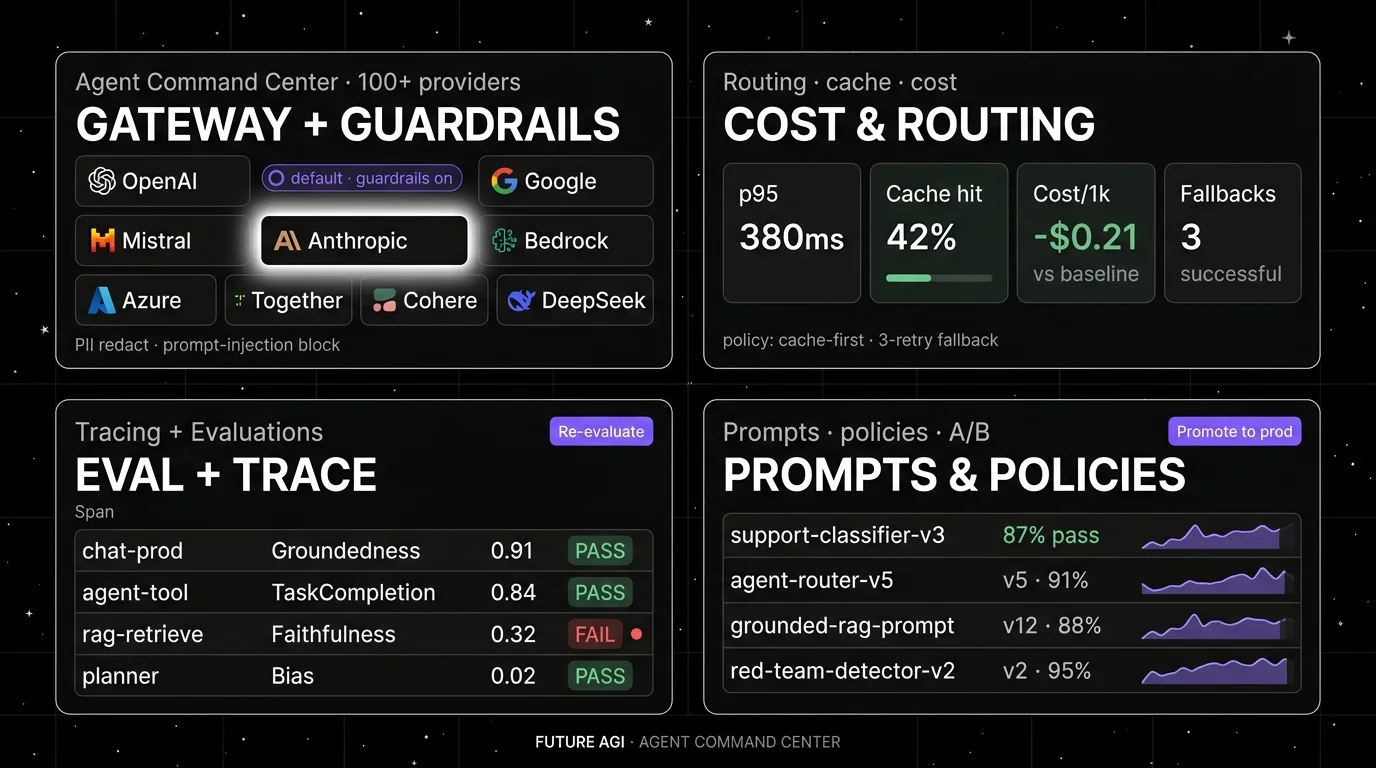

Architecture: gateway plus observability plus evals share the runtime. The Go-based Agent Command Center gateway accepts OpenAI-compatible HTTP, routes across 100+ providers, applies cache policy and rate limits, enforces PII redaction and prompt-injection guardrails, and emits OTel spans into the same ClickHouse-backed trace store that traceAI writes to. Eval scores attach as span attributes, simulated runs against synthetic personas use the same evaluator that judges production, and the prompt optimizer reads failing spans as labeled training examples. The repo is Apache 2.0 and self-hostable. Plumbing under it (Django, React/Vite, Postgres, ClickHouse, Redis, object storage, workers, Temporal) exists so the gateway and the eval loop do not need glue code.
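The idea of eval scores living on the same spans as gateway traffic can be sketched with plain data structures. This is not FutureAGI's schema. The `Span` class, the `eval.<metric>.score` attribute naming, and the `llm.input` / `llm.output` keys are hypothetical. The sketch shows a score attaching as a span attribute and low-scoring spans being pulled out as candidate training examples.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """Hypothetical minimal span: IDs, a name, and free-form attributes."""
    trace_id: str
    span_id: str
    name: str
    attributes: dict[str, object] = field(default_factory=dict)


def attach_eval(span: Span, metric: str, score: float) -> None:
    # Hypothetical attribute key; real platforms define their own naming.
    span.attributes[f"eval.{metric}.score"] = score


def failing_examples(spans: list[Span], metric: str, threshold: float) -> list[dict]:
    """Collect spans scoring below threshold as (input, output, score) rows."""
    key = f"eval.{metric}.score"
    return [
        {
            "trace_id": s.trace_id,
            "input": s.attributes.get("llm.input"),
            "output": s.attributes.get("llm.output"),
            "score": s.attributes[key],
        }
        for s in spans
        if key in s.attributes and s.attributes[key] < threshold
    ]


bad = Span("t1", "s1", "llm.chat", {"llm.input": "q", "llm.output": "a"})
good = Span("t2", "s2", "llm.chat", {"llm.input": "q2", "llm.output": "a2"})
attach_eval(bad, "faithfulness", 0.4)
attach_eval(good, "faithfulness", 0.9)
print(failing_examples([bad, good], "faithfulness", threshold=0.5))
```

Keeping the score on the span, rather than in a separate eval store, is what lets a trace-to-dataset step filter on one record type.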

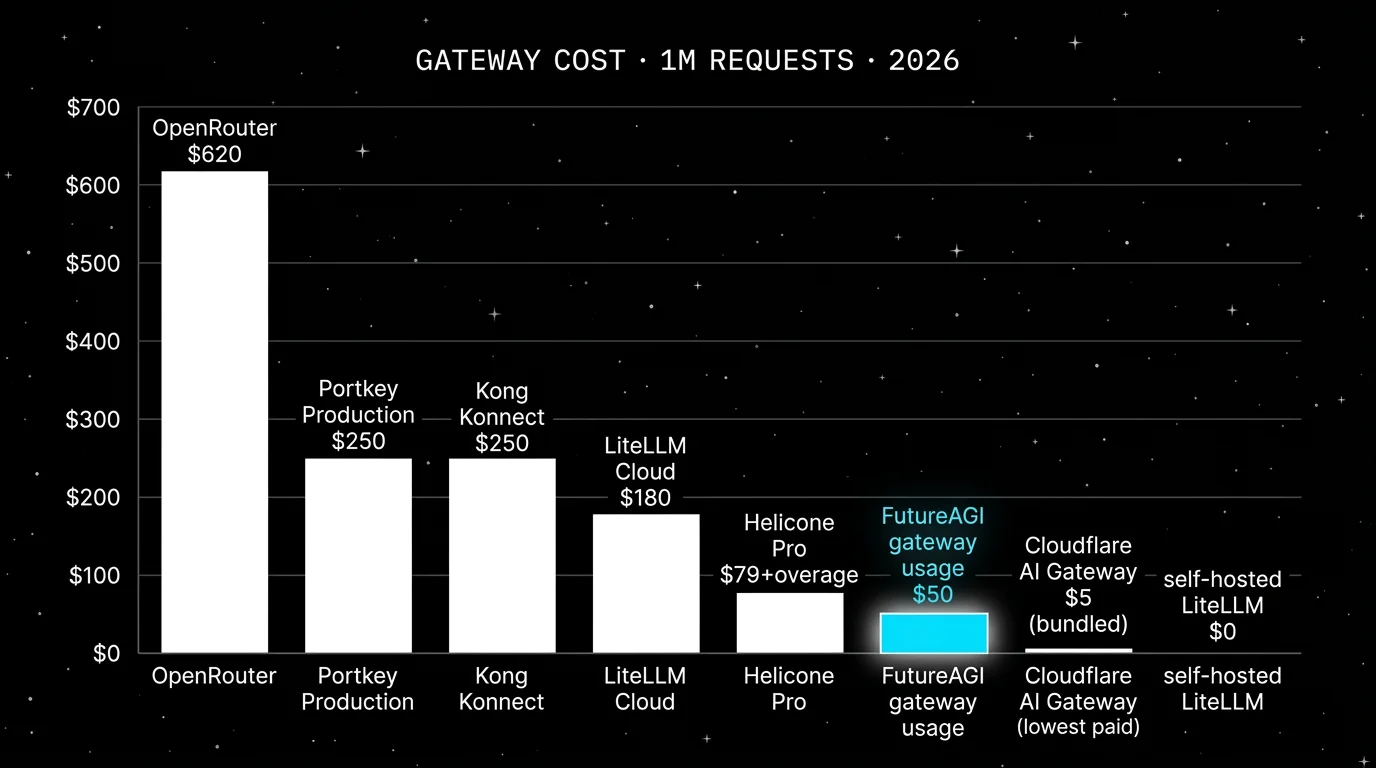

Pricing: FutureAGI starts at $0/month. The free tier includes 50 GB tracing and storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, 1 million text simulation tokens, 60 voice simulation minutes, unlimited datasets, unlimited prompts, unlimited dashboards, 3 annotation queues, 3 monitors, unlimited team members, and unlimited projects. Usage after the free tier starts at $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $1 per 100,000 cache hits, $2 per 1 million text simulation tokens, and $0.08 per voice minute. Boost is $250 per month, Scale is $750 per month, and Enterprise starts at $2,000 per month.
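A quick way to sanity-check a usage bill is to encode the free allowances and overage rates listed above. This sketch assumes usage past the free tier is billed pro rata at those rates and ignores the Boost, Scale, and Enterprise platform fees. Both are assumptions to confirm on the FutureAGI pricing page.

```python
# meter -> (free allowance, price in USD, per this many units), from the list above
RATES = {
    "storage_gb": (50, 2.00, 1),
    "ai_credits": (2_000, 10.00, 1_000),
    "gateway_requests": (100_000, 5.00, 100_000),
    "cache_hits": (100_000, 1.00, 100_000),
    "text_sim_tokens": (1_000_000, 2.00, 1_000_000),
    "voice_minutes": (60, 0.08, 1),
}


def usage_cost(usage: dict[str, float]) -> float:
    """Sum pro-rata overage across meters (assumed linear billing)."""
    total = 0.0
    for meter, amount in usage.items():
        free, price, unit = RATES[meter]
        billable = max(0.0, amount - free)
        total += billable / unit * price
    return round(total, 2)


example = {"storage_gb": 80, "gateway_requests": 1_100_000, "voice_minutes": 160}
print(usage_cost(example))  # 30 GB + 1M requests + 100 min over the free tier -> 118.0
```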

Best for: Pick FutureAGI when the gateway and the rest of the LLM platform should share the same trace tree, the same eval contract, and the same policy engine. The buying signal is teams running Portkey for routing plus a separate eval platform plus a separate guardrail tool, watching the three drift in production. RAG agents, voice agents, support automation, and BYOK LLM-as-judge teams fit this shape.

Skip if: Skip FutureAGI if your immediate need is a polished hosted gateway with governance and the eval workflow lives elsewhere. Portkey is closer to that shape. FutureAGI also has more services to self-host. Use the hosted product if you do not want to operate that surface.

2. LiteLLM: Best for a Python proxy at the SDK boundary

Open source. Self-hostable. LiteLLM Cloud option.

LiteLLM is the right alternative when the simplest path to value is a Python proxy or library that exposes 100+ LLM providers behind an OpenAI-compatible API. It is the lightest-weight gateway option, runs as a single service, and gets you out of provider lock-in without buying a UI.

Architecture: LiteLLM is MIT and supports Anthropic, AWS Bedrock, Google Vertex, Azure OpenAI, Cohere, Mistral, Together, Groq, OpenRouter, Replicate, HuggingFace, and many more. The proxy ships logging callbacks for Langfuse, Braintrust, OpenTelemetry, and others. Auth, rate limits, virtual keys, budgets, and team management are part of the proxy product, with a UI in the LiteLLM Admin app.
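LiteLLM addresses models with a provider prefix (for example `anthropic/...`) so one OpenAI-style call can target any backend. The sketch below illustrates that naming convention only. It is not LiteLLM's internal code, and the `KNOWN_PROVIDERS` set and `Route` type are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    provider: str
    model: str


# Hypothetical registry for this sketch; LiteLLM supports far more providers.
KNOWN_PROVIDERS = {"openai", "anthropic", "bedrock", "vertex_ai", "groq", "openrouter"}


def parse_model(model: str, default_provider: str = "openai") -> Route:
    """Split 'provider/model' on the first slash; bare names use a default."""
    provider, sep, name = model.partition("/")
    if not sep:
        return Route(default_provider, model)
    if provider not in KNOWN_PROVIDERS:
        raise ValueError(f"unknown provider prefix: {provider!r}")
    return Route(provider, name)


print(parse_model("anthropic/claude-3-5-sonnet"))
print(parse_model("gpt-4o"))
```

Splitting on the first slash only keeps nested names such as `openrouter/anthropic/...` intact for the downstream provider.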

Pricing: LiteLLM is open source under MIT and free to self-host. LiteLLM Cloud is a usage-based hosted version of the same proxy.

Best for: Pick LiteLLM if you want a single Python service in front of all your providers, do not need an opinionated UI, and prefer to wire callbacks into your existing eval and observability tools.

Skip if: Skip LiteLLM if you want a polished UI for analytics, prompts, evals, and guardrails out of the box. It is a proxy first. Given the 2025 security advisory and patch, review your security posture before deploying it as the front door for your traffic.

3. Helicone: Best for gateway-first request analytics

Open source. Self-hostable. Hosted cloud option.

Helicone is the right alternative when the fastest path to value is changing the OpenAI base URL and seeing every request. The center of gravity is gateway operations. Note the March 3, 2026 Mintlify acquisition: Helicone services are now in maintenance mode, receiving security updates, new model support, bug fixes, and performance fixes.

Architecture: Helicone is Apache 2.0 and ships an OpenAI-compatible AI Gateway with request logging, provider routing, caching, rate limits, sessions, user metrics, cost tracking, datasets, alerts, reports, HQL, eval scores, feedback, and prompts.
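The "change the base URL" integration works because an OpenAI-compatible gateway accepts the same request body at a different host. This stdlib sketch builds, but does not send, the same chat completion request twice. The gateway URL and the `X-Gateway-Auth` header are hypothetical placeholders; copy the real values from Helicone's docs.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, prompt, extra_headers=None):
    """Build (but do not send) an OpenAI-compatible chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    headers.update(extra_headers or {})
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


direct = build_chat_request("https://api.openai.com/v1", "sk-...", "gpt-4o-mini", "hi")
# Hypothetical gateway URL and auth header; replace with the values Helicone documents.
via_gateway = build_chat_request(
    "https://gateway.example.com/v1", "sk-...", "gpt-4o-mini", "hi",
    extra_headers={"X-Gateway-Auth": "Bearer <gateway-key>"},
)
print(direct.full_url)
print(via_gateway.full_url)
```

Only the host and one header differ. The JSON body is byte-for-byte identical, which is why the swap needs no application changes.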

Pricing: Hobby is free. Pro is $79 per month. Team is $799 per month. Enterprise is custom.

Best for: Pick Helicone if you want request analytics, user-level spend, model cost tracking, caching, fallbacks, and a low-friction gateway. It is a good first tool for teams with live traffic.

Skip if: Helicone will not replace a deep eval workbench by itself. Treat the maintenance-mode status as a roadmap risk to verify directly.

4. OpenRouter: Best for hosted unified API across many providers

Closed platform with OSS clients. Hosted only.

OpenRouter is the right alternative when the requirement is a single API key that fronts hundreds of LLMs with provider failover, normalized JSON, and credit-based billing. It is not a self-hosted gateway and does not aim to compete on observability depth.

Architecture: OpenRouter exposes an OpenAI-compatible API across 200+ models from OpenAI, Anthropic, Google, Mistral, Meta, AWS Bedrock, Azure, Together, DeepSeek, Cohere, and many more. Routing, failover, normalization, and quota live on the OpenRouter side. Client libraries on GitHub are open source even though the platform is not.

Pricing: OpenRouter is pay-as-you-go. You buy credits and provider calls deduct the underlying provider price (no markup on provider pricing). The platform charges a 5.5% credit purchase fee. BYOK has separate rules: the first 1M requests per month are free, then a 5% BYOK fee applies. There is no monthly platform fee for individual usage.
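The two OpenRouter fees are easy to model. This sketch takes the 5.5% credit fee and the BYOK terms from the paragraph above. It assumes the 5% BYOK fee is charged on the equivalent model cost of each request past the first 1M in a month, and it ignores any minimum fee. Confirm both assumptions in OpenRouter's docs.

```python
CREDIT_FEE_RATE = 0.055         # fee on credit purchases, as quoted above
BYOK_FREE_REQUESTS = 1_000_000  # monthly BYOK requests before the fee applies
BYOK_FEE_RATE = 0.05


def credit_purchase_total(credits_usd: float) -> float:
    """Amount paid to load credits_usd of credits (ignores any minimum fee)."""
    return round(credits_usd * (1 + CREDIT_FEE_RATE), 2)


def monthly_byok_fee(requests: int, avg_equivalent_cost_usd: float) -> float:
    """Assumed model: 5% of the equivalent model cost of requests beyond 1M."""
    billable = max(0, requests - BYOK_FREE_REQUESTS)
    return round(billable * avg_equivalent_cost_usd * BYOK_FEE_RATE, 2)


print(credit_purchase_total(100))          # 105.5
print(monthly_byok_fee(1_500_000, 0.002))  # 50.0
```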

Best for: Pick OpenRouter if your team wants one API key, one billing relationship, and access to many models without managing per-provider credentials.

Skip if: Skip OpenRouter if you need self-hosted control, deep observability dashboards, prompt management, evals, simulation, or guardrails.

5. Cloudflare AI Gateway: Best if your stack is on Cloudflare

Closed platform. Bundled with Cloudflare Workers.

Cloudflare AI Gateway is the right alternative when your application already runs on Cloudflare Workers or your team uses Cloudflare for DNS, CDN, and edge compute. The gateway sits at the edge, applies caching, rate limits, retries, fallback, and analytics, and is bundled with Cloudflare Workers usage.

Architecture: AI Gateway exposes a Cloudflare-hosted endpoint that proxies requests to OpenAI, Anthropic, Google AI, Mistral, AWS Bedrock, Azure OpenAI, Cohere, Groq, Replicate, HuggingFace, and Workers AI. Caching, rate limits, request retries, fallbacks, and analytics are first-class. Logs and analytics live in the Cloudflare dashboard.
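In practice, you point a provider SDK at a Cloudflare-hosted base URL laid out as account ID, gateway ID, then provider. The sketch assembles that URL. The account and gateway IDs are placeholders, and the exact provider slugs (for example for Workers AI) should be checked in the Cloudflare AI Gateway docs.

```python
from urllib.parse import quote

CF_GATEWAY_ROOT = "https://gateway.ai.cloudflare.com/v1"


def cloudflare_gateway_base_url(account_id: str, gateway_id: str, provider: str) -> str:
    """Per-provider base URL in the account/gateway/provider layout."""
    parts = [quote(p, safe="") for p in (account_id, gateway_id, provider)]
    return "/".join([CF_GATEWAY_ROOT, *parts])


# Placeholder IDs; use the values from your Cloudflare dashboard.
base = cloudflare_gateway_base_url("my-account-id", "my-gateway", "openai")
print(base + "/chat/completions")
```

The provider-specific path (here `/chat/completions` for OpenAI) is appended unchanged, so existing clients only swap their base URL.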

Pricing: AI Gateway is bundled with Cloudflare Workers usage. There is a generous free tier; paid Workers tiers cover production at low marginal cost. Verify the current logging and analytics retention limits.

Best for: Pick Cloudflare AI Gateway if your application is on Cloudflare Workers or your team already pays for Cloudflare. The deploy story is short and the cost is low.

Skip if: Skip Cloudflare AI Gateway if you need deep prompt management, evals, simulation, or guardrails in the same product. The gateway is excellent at the edge layer; the rest is left to other tools.

6. Kong AI Gateway: Best if Kong is already in production

Open source Kong Gateway. Kong AI plugins. Konnect for hosted control plane.

Kong AI Gateway is the right alternative when Kong is already the API gateway in production and the LLM stack should reuse the same infrastructure. The AI plugins ship inside the existing Kong deploy and apply provider routing, semantic caching, prompt protections, rate limits, and analytics on the same control plane.

Architecture: Kong AI plugins integrate with Kong Gateway (open source under Apache 2.0) and Kong Konnect (hosted control plane). Plugins include AI Proxy, AI Prompt Decorator, AI Prompt Template, AI Request Transformer, AI Response Transformer, and AI Semantic Cache. Providers covered include OpenAI, Anthropic, Cohere, Mistral, Azure OpenAI, AWS Bedrock, and others.
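Because the AI plugins ride on the normal Kong plugin model, enabling one is an Admin API call against an existing service. This sketch builds, but does not send, a request that enables `ai-proxy` for chat routes. The Admin API address (`localhost:8001`), the service name, and the config fields shown should be checked against the AI Proxy plugin reference for your Kong version.

```python
import json
import urllib.request

ADMIN_API = "http://localhost:8001"  # assumed local Kong Admin API address


def ai_proxy_plugin_request(service: str, provider: str, model: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an Admin API call enabling ai-proxy on a service."""
    payload = {
        "name": "ai-proxy",
        "config": {
            "route_type": "llm/v1/chat",
            # Header name/value vary by provider; Bearer auth fits OpenAI.
            "auth": {"header_name": "Authorization", "header_value": f"Bearer {api_key}"},
            "model": {"provider": provider, "name": model},
        },
    }
    return urllib.request.Request(
        url=f"{ADMIN_API}/services/{service}/plugins",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = ai_proxy_plugin_request("llm-service", "openai", "gpt-4o", "sk-...")
print(req.get_method(), req.full_url)
```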

Pricing: Kong Gateway open source is free. Kong AI plugins are bundled with Kong Konnect or Kong Enterprise plans. Verify current pricing on the Kong site since plans change between annual and monthly billing.

Best for: Pick Kong AI Gateway if Kong is already the API gateway and the platform team wants the LLM gateway inside the same control plane. The reuse story is the buying signal.

Skip if: Skip Kong if your team does not run Kong today. The setup cost of standing up Kong purely for the LLM stack rarely beats a dedicated tool.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant workload is gateway plus observability plus evals plus simulation plus guardrails in one open-source stack. Buying signal: Portkey for routing, Langfuse or Braintrust for evals, and a separate guardrail tool drift in production. Pairs with: OTel, OpenAI-compatible HTTP, BYOK judges, and self-hosted deployment.

- Choose LiteLLM if your dominant workload is a Python proxy at the SDK boundary. Buying signal: minimal moving parts, callbacks into your own eval and observability tools. Pairs with: Langfuse, Braintrust, or FutureAGI for the analytics layer.

- Choose Helicone if your dominant workload is gateway-first request analytics. Buying signal: live traffic now and SDK instrumentation later. Pairs with: OpenAI-compatible clients and provider failover.

- Choose OpenRouter if your dominant workload is one API key for many models. Buying signal: prototypes, hackathons, and fast model comparisons. Pairs with: any client SDK and a separate analytics tool.

- Choose Cloudflare AI Gateway if your dominant workload runs on Cloudflare Workers. Buying signal: edge-native deploy and low marginal cost. Pairs with: Workers AI and Cloudflare R2.

- Choose Kong AI Gateway if your dominant workload already routes through Kong. Buying signal: reuse of the existing API gateway. Pairs with: Kong plugins and Konnect.

Common mistakes when picking a Portkey alternative

- Treating “gateway” as a single capability. Portkey, FutureAGI, LiteLLM, Helicone, Cloudflare AI Gateway, and Kong AI cover different surfaces. Routing, virtual keys, budgets, semantic cache, retries, prompt protection, observability, and eval-attached scoring are not all in every product.

- Picking by free-tier limits. Free tiers reset every month and rarely match production volume. Build a real cost model on a representative day.

- Skipping the security review on a self-hosted gateway. Any service that holds provider API keys, sees PII, and routes traffic is in scope for SOC 2, HIPAA, or PCI in regulated industries.

- Migrating without freezing the trace shape. Trace IDs, span IDs, attribute names, timing fields, and cost fields differ across platforms. Lock the schema first.

- Ignoring fallback policy. A gateway without a fallback policy is a single point of failure. Configure fallback behavior before the first incident.

- Pricing only the platform fee. Real cost is platform fee plus provider token spend plus overage plus retention plus seats plus on-call hours.
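A fallback policy is simple to state in code, which makes it easy to review before an incident. This sketch tries each provider in order, retries with exponential backoff, then falls back to the next one. `ProviderError`, the `(name, call)` pair shape, and the retry defaults are hypothetical choices, not any gateway's built-in behavior.

```python
import time
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider call when the gateway should retry or fall back."""


Provider = tuple[str, Callable[[str], str]]  # hypothetical (name, call) pair


def call_with_fallback(
    providers: Sequence[Provider],
    prompt: str,
    retries_per_provider: int = 2,
    base_delay_s: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[str, str]:
    """Try providers in order, retrying each with exponential backoff before
    moving on. Returns (provider_name, response)."""
    errors: list[str] = []
    for name, call in providers:
        for attempt in range(retries_per_provider + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
                if attempt < retries_per_provider:
                    sleep(base_delay_s * 2 ** attempt)  # 0.1s, 0.2s, ...
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky(prompt: str) -> str:
    raise ProviderError("503 from primary")


def healthy(prompt: str) -> str:
    return f"echo: {prompt}"


delays: list[float] = []
print(call_with_fallback([("primary", flaky), ("backup", healthy)], "hi", sleep=delays.append))
```

Injecting `sleep` keeps the policy testable without real waits, and the collected error list gives on-call a readable record of every attempt.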

What changed in the LLM gateway landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway routing, guardrails, cost controls, and trace analytics moved into the same loop. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone remains usable but in maintenance mode. |
| Feb 2026 | Portkey shipped Guardrails 2.0 | Portkey moved into the policy enforcement space. |
| 2025 H2 | LiteLLM security advisory and patch | Reminder that any front-door proxy is in scope for security review. |
| Ongoing 2026 | Cloudflare AI Gateway expanded provider coverage | Cloudflare-native deploys are now competitive on provider breadth. |
| Ongoing 2026 | Kong shipped Semantic Caching plugin | Enterprise API gateways added LLM-aware caching. |

How to actually evaluate this for production

- Run a real traffic mirror. Mirror 5 to 10 percent of production requests through each candidate gateway for 7 days. Compare p50, p95, and p99 latency, success rate, retry rate, fallback rate, cost per 1,000 requests, and token attribution.

- Inspect the trace contract. The gateway and the observability backend must agree on trace ID, span ID, attribute names, request and response payload shape, and cost fields.

- Cost-adjust for your real mix. Real cost is platform fee plus provider token spend plus overage plus retention plus seats plus security review hours.
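The mirror step needs two small pieces: a stable way to select 5 to 10 percent of traffic and a consistent percentile definition for comparing candidates. This sketch assumes each request carries a stable ID and uses nearest-rank percentiles, which is one of several common definitions. Use the same one for every gateway you compare.

```python
import hashlib
import math


def in_mirror_sample(request_id: str, percent: float) -> bool:
    """Deterministically select ~percent% of traffic by hashing a stable ID."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile for p in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_summary(latencies_ms: list[float]) -> dict[str, float]:
    return {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}


mirrored = [f"req-{i}" for i in range(50_000) if in_mirror_sample(f"req-{i}", 10)]
print(len(mirrored) / 50_000)  # close to 0.10
print(latency_summary([float(ms) for ms in range(1, 101)]))
```

Hashing instead of random sampling means a retried request lands in the same bucket, so the mirrored slice stays comparable across the 7-day window.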

How FutureAGI implements gateway routing plus guardrails

FutureAGI is the production-grade gateway-plus-observability platform built around the closed reliability loop that Portkey alternatives stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Gateway: the Agent Command Center fronts 100+ providers with BYOK routing, fallback, latency-aware load balancing, and request caching; cost attribution rolls up per virtual key, per team, and per model.

- Guardrails: 18+ runtime checks (PII, prompt injection, jailbreak, tool-call enforcement, output schema, regex policies) run pre-call and post-call on the gateway, with `turing_flash` screening at 50 to 70 ms p95.

- Tracing and evals: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. Gateway spans land in the same trace tree as agent and retriever spans, and 50+ first-party eval metrics attach as span attributes for outcome, trajectory, and recovery scoring.

- Simulation and prompt optimization: persona-driven scenarios exercise the gateway path before live traffic, and six prompt-optimization algorithms consume failing trajectories as training data, so production regressions feed back into versioned prompts that the CI gate enforces.
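The pre-call and post-call split for guardrails can be sketched with two small checks around a model call. This is a generic illustration, not FutureAGI's implementation. The regex patterns are deliberately minimal and hypothetical, and real PII detection needs far broader coverage.

```python
import json
import re
from typing import Callable

# Hypothetical, deliberately simple patterns for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def pre_call_redact(prompt: str) -> str:
    """Pre-call check: mask emails and US SSNs before the prompt leaves the gateway."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return US_SSN.sub("[SSN]", prompt)


def post_call_schema_ok(output: str, required_keys: set[str]) -> bool:
    """Post-call check: require a JSON object containing every required key."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and required_keys.issubset(data)


def guarded_call(prompt: str, call_model: Callable[[str], str], required_keys: set[str]) -> str:
    output = call_model(pre_call_redact(prompt))
    if not post_call_schema_ok(output, required_keys):
        raise ValueError("model output failed the schema guardrail")
    return output


seen: list[str] = []


def fake_model(prompt: str) -> str:
    seen.append(prompt)  # record what actually reached the "provider"
    return '{"answer": "ok"}'


print(guarded_call("Email jane.doe@example.com about SSN 123-45-6789", fake_model, {"answer"}))
print(seen[0])
```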

Pricing starts free with a 50 GB tracing tier, 100,000 gateway requests, and 100,000 cache hits. Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams comparing Portkey alternatives end up running three or four tools in production: one for the gateway, one for traces, one for evals, one for guardrails. FutureAGI is the recommended pick because gateway, guardrails, tracing, evals, and simulation all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Portkey pricing

- Portkey docs

- Portkey Gateway repo

- FutureAGI pricing

- Agent Command Center docs

- LiteLLM repo

- LiteLLM Cloud pricing

- Helicone pricing

- Helicone joining Mintlify

- OpenRouter docs

- Cloudflare AI Gateway docs

- Kong AI Gateway


