Best Voice AI Models in May 2026: STT, TTS, and Voice Agent Stack

Best Voice AI May 2026: compare Deepgram, Cartesia, ElevenLabs, Retell, and Vapi for STT, TTS, latency budgets, and production voice agents.

Table of Contents

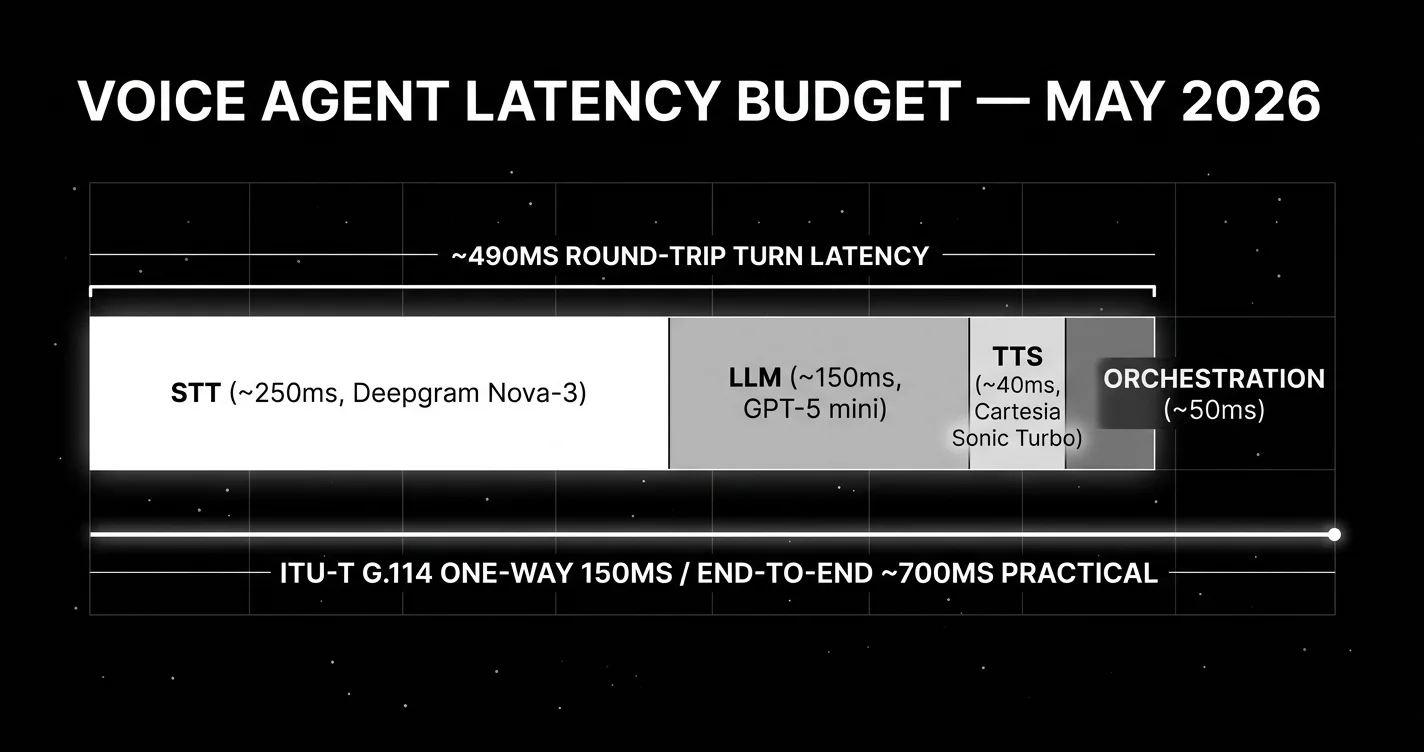

Voice agents are the highest-velocity AI product category in 2026. The components are mature: streaming STT under 300ms, TTS under 100ms first audio, LLM inference under 300ms with the right model. Putting them together to hit the ITU-T G.114 budget for clean voice (150ms one-way mouth-to-ear, with 150-400ms tolerable but degraded) is now an engineering problem, not a research one. This guide picks the components that actually compose into a production voice agent.

TL;DR: Best voice AI per layer, May 2026

| Layer | Best pick | Why | Pricing |

|---|---|---|---|

| Streaming STT (production) | Deepgram Nova-3 | 6.84% WER, sub-300ms streaming | $0.0077/min |

| STT with intelligence | AssemblyAI Universal-2 | 6.88% WER (AssemblyAI benchmark) + summarization, entity, sentiment, entity, sentiment | ~$0.0025/min base |

| Highest batch accuracy | Google Cloud Chirp | 11.6% WER, 125+ languages | varies |

| Open-source STT | Whisper Large V3 | 7.4% WER avg, 99+ languages | self-host |

| Accent / education | ElevenLabs Scribe | ≤5% WER on excellent-accuracy languages with diarization | ElevenLabs tier |

| Turn-taking detection | Deepgram Flux | Purpose-built end-of-turn | bundled |

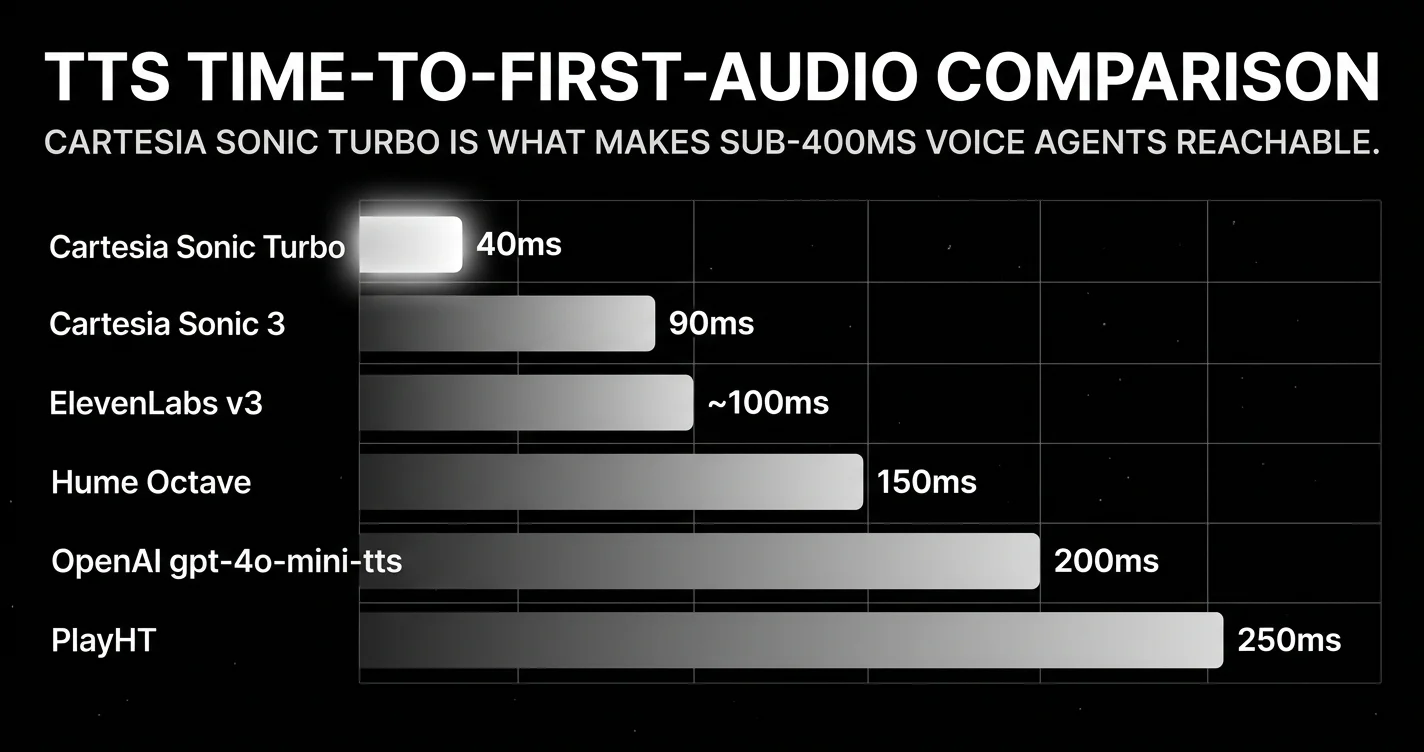

| TTS for sub-300ms agents | Cartesia Sonic Turbo | 40ms TTFA, 15+ languages | premium tier |

| TTS quality and cloning | ElevenLabs v3 Multilingual | 32+ languages, voice cloning | premium |

| TTS instructable voice | OpenAI gpt-4o-mini-tts (instructable) + gpt-realtime (speech-to-speech) | ~200ms, 50+ languages | API |

| TTS emotion | Hume Octave | ~150ms | API |

| TTS long-form | PlayHT | ~250ms, 30+ languages | API |

| Voice agent platform default | Retell AI | ~620ms E2E, $0.07/min, HIPAA included | $0.07/min |

| Voice agent at scale | Vapi | 300M+ cumulative calls, 99.9% uptime | varies |

| Voice quality first | ElevenLabs Conversational | sub-100ms TTS | premium |

| Self-hosted bundle | Deepgram Voice Agent | sub-400ms with Flux | bundled |

| Outbound phone volume | Bland AI | Norm builder, tiered per-minute | tiered |

If you only read one row: Deepgram Nova-3 + Flux for STT, Cartesia Sonic Turbo for TTS, GPT-5 mini or Gemini 3.1 Flash for the LLM, Retell AI to orchestrate. That stack hits sub-700ms end-to-end and runs at production scale today.

The story of voice AI in May 2026

Voice AI was a quiet category for most of 2024 and 2025. Whisper Large dominated STT, ElevenLabs dominated TTS, and the rest of the stack was a research problem. In 2026 every layer hit production maturity at the same time.

On the STT side, Deepgram Nova-3 ships sub-300ms streaming latency at 6.84% WER. AssemblyAI Universal-2 ships streaming with bundled speech intelligence (summarization, entity extraction, sentiment) at a lower base price. ElevenLabs Scribe hits 3.5% WER with diarization for accent and education use cases. Google Cloud Chirp leads batch accuracy at 11.6% WER across 125 languages. Whisper Large V3 remains the open-source default at 7.4% WER average. The differentiation is now along three axes: streaming latency, intelligence on top of transcript, and language coverage.

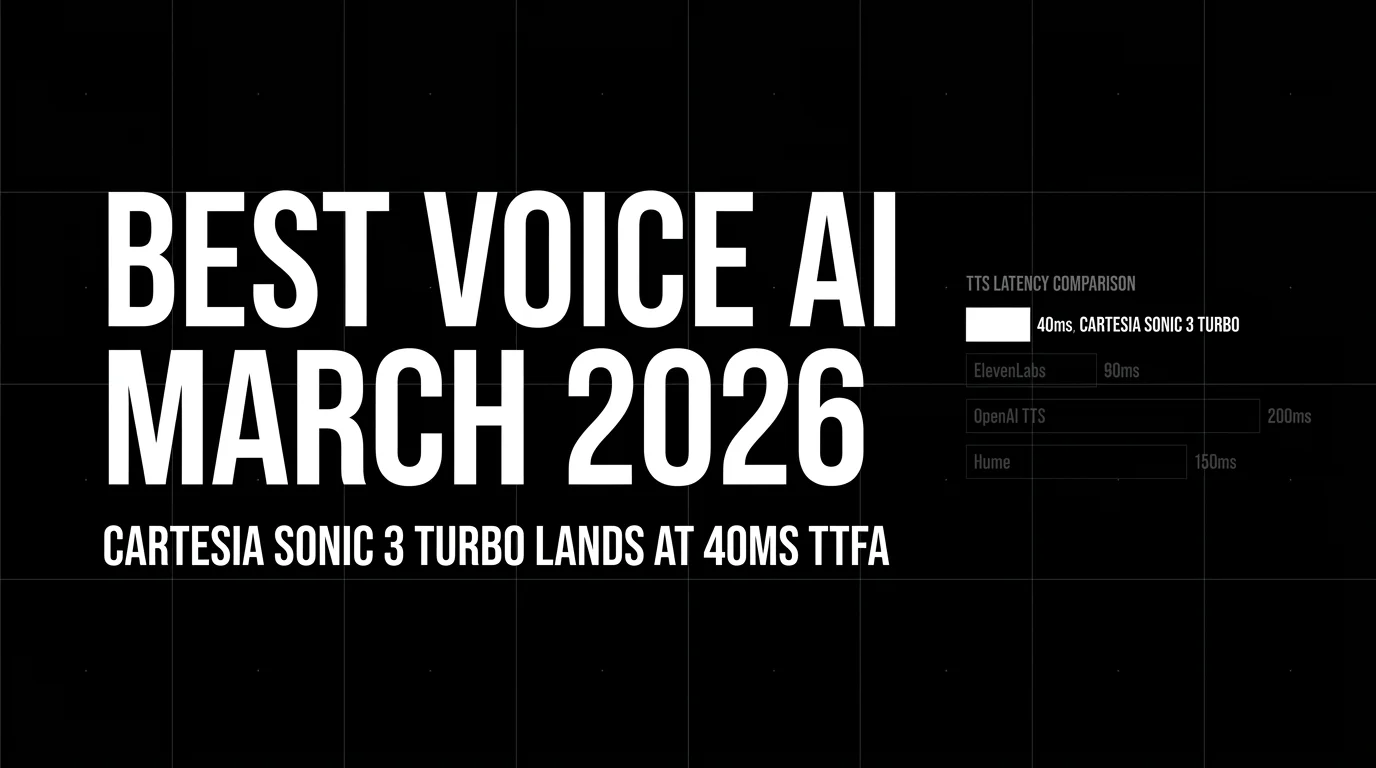

On the TTS side, Cartesia Sonic Turbo ships 40ms time-to-first-audio. That number is the structural fact about voice agents in May 2026. Hitting round-trip turn latency under 500ms requires the lowest-latency TTS (Cartesia Sonic Turbo). ElevenLabs v3 leads quality and cloning. Hume Octave leads emotion. OpenAI gpt-4o-mini-tts (instructable) and gpt-realtime (speech-to-speech) leads instructable voice character. PlayHT leads conversational long-form. The picks split cleanly by axis.

On the orchestration side, Vapi handles 62 million calls per month at 99.99% uptime. Retell AI sits at 620ms measured end-to-end with HIPAA included. ElevenLabs Conversational, Deepgram Voice Agent, and Bland AI each carve a vertical. The platforms ship in days what custom builds ship in quarters.

The sub-300ms ITU-T G.114 threshold for natural conversation is now reachable with the right component picks. Most production teams accept up to 700ms before users notice the delay. Either bar is achievable in May 2026 if you compose the stack correctly.

Best speech-to-text (STT) models in May 2026

Deepgram Nova-3. The streaming STT default

The right pick for any production voice agent that needs streaming transcription with low latency. Nova-3 is the streaming-optimized variant of Deepgram’s Nova family, tuned for sub-300ms latency budgets that voice agents require.

Specs:

- Streaming WER: 6.84% (median across benchmarks)

- Latency: sub-300ms streaming

- Languages: 30+ for streaming, 50+ for batch

- Pricing: $0.0077 per minute streaming ($0.0043/min batch) streaming, $0.0043 per minute batch

- Pairs with Deepgram Flux for end-of-turn detection

Best for: Production voice agents where end-to-end latency is the binding constraint. Real-time captioning. Live conversational AI.

Skip if: You need batch transcription with maximum accuracy across rare languages (use Google Cloud Chirp). You need bundled speech intelligence on top of transcript (use AssemblyAI Universal-2).

AssemblyAI Universal-2. Streaming with intelligence

The pick when transcript alone is not enough. Universal-2 ships streaming STT with summarization, entity detection, and sentiment analysis bundled.

Specs:

- Streaming WER: 14.5%

- Bundled speech intelligence (summarization, entity, sentiment)

- Pricing: ~$0.0025 per minute base, intelligence features add cost

- 99+ languages

Best for: Voice agents where post-call analytics, entity extraction, or summarization are part of the product. Customer support call intelligence. Compliance and audit workflows.

Skip if: Pure transcript accuracy is what you need (use Deepgram Nova-3 or ElevenLabs Scribe).

Google Cloud Chirp. Highest batch accuracy

Google’s batch transcription leader. The right pick when you can wait seconds instead of milliseconds and need maximum accuracy across the broadest language set.

Specs:

- Batch WER: 11.6%

- Languages: 125+

- Pricing: varies by tier and language

Best for: Asynchronous transcription pipelines, long-form content, multi-language workloads where streaming latency does not matter.

Skip if: You need streaming for live voice agents (use Deepgram Nova-3).

Whisper Large V3. The open-source default

The gold standard for self-hosted STT. OpenAI’s Whisper Large V3 has been the open-source baseline since 2023 and continues to lead the open-weight STT category.

Specs:

- WER: 7.4% average across mixed benchmarks

- Parameters: 1.55 billion

- Languages: 99+

- Self-hosted on commodity inference hardware

- Multilingual capabilities strong on European languages, weaker on rare African and Asian languages

Best for: Self-hosted deployments at scale (above 500,000 minutes per month where commercial STT cost exceeds GPU economics). Privacy-sensitive workloads. Edge inference.

Skip if: You need streaming under 300ms (Whisper is batch-optimized). You need bundled intelligence on top of transcript.

ElevenLabs Scribe. Accent and education specialist

The pick when accent normalization and detailed pronunciation feedback matter. Scribe ships with diarization (speaker separation) and is positioned for language-learning and accessibility workloads.

Specs:

- WER: ≤5% on excellent-accuracy languages with diarization

- Strong accent normalization

- Bundled with ElevenLabs subscription tiers

Best for: Language learning, education, accessibility, transcription products that need speaker labels and accent-aware output.

Skip if: You need general-purpose streaming STT (use Deepgram Nova-3).

Deepgram Flux. The turn-taking layer

Not a standalone STT, but worth a card because it solves a problem the rest of the category does not. Generic STT APIs transcribe speech and stop. Flux models when the user has finished speaking and the agent should respond.

Best for: Any production voice agent. Worth more than 1 to 2 points of WER accuracy in production user-experience terms.

Skip if: Your voice product is not turn-based (transcription-only, dictation, etc.).

Best text-to-speech (TTS) models in May 2026

The TTS category is now optimization on three separate axes. Latency, naturalness, and emotion control. They do not move together. Pick by your dominant constraint.

Cartesia Sonic 3. The latency leader

The structural pick for any voice agent that needs sub-300ms end-to-end latency. Cartesia Sonic Turbo ships 40ms time-to-first-audio, which leaves enough latency budget for the rest of the stack.

Specs:

- TTFA: 40ms (Turbo variant), 90ms (standard)

- Languages: 15+

- Voice cloning supported

- Streaming output

Best for: Sub-500ms round-trip turn-latency voice agents. Real-time interactive applications. Telephony where latency drives perceived call quality.

Skip if: Voice quality and cloning fidelity are more important than latency (use ElevenLabs v3).

ElevenLabs v3 Multilingual. Voice quality and cloning leader

The category-defining model for voice quality and cloning. ElevenLabs has dominated the high-end voice synthesis space since 2023 and v3 is their current flagship.

Specs:

- Languages: 32+

- TTFA: sub-100ms

- Voice cloning: best in category

- Emotional depth: strong on multi-language emotion

Best for: Creator content, audiobook generation, branded voice products, voice cloning for character consistency.

Skip if: Sub-50ms TTFA is required (use Cartesia Sonic Turbo).

OpenAI gpt-4o-mini-tts (instructable) and gpt-realtime (speech-to-speech). Instructable voice

The best instructable-voice pick. With GPT-4o audio you can control voice tone, pacing, and character through natural-language instructions in the prompt. Best for teams already in the OpenAI ecosystem.

Specs:

- TTFA: ~200ms

- Languages: 50+

- Natural-language voice control via GPT-4o audio integration

Best for: Voice agents where the LLM should control voice character based on context. OpenAI ecosystem default.

Skip if: You need sub-100ms latency (use Cartesia or ElevenLabs).

Hume Octave. Emotion specialist

The emotion-optimized TTS pick. Hume’s Octave model is purpose-built for prosody and emotional expression in synthesized voice.

Specs:

- TTFA: ~150ms

- Languages: 20+

- Optimized for emotion accuracy

Best for: Mental health products, character voice for games, emotion-sensitive voice content.

Skip if: Cost or latency is the primary constraint.

PlayHT. Conversational long-form

The pick for long-form conversational content. PlayHT optimizes for sustained naturalness across extended audio output, which matters for podcast generation, audiobook synthesis, and long-running voice agents.

Specs:

- TTFA: ~250ms

- Languages: 30+

- Optimized for sustained quality across long output

Best for: Podcast and audiobook generation, long-running interactive voice content.

Sesame Maya and Miles. Open-source

The open-source TTS picks. Sesame is one of the better open-source TTS efforts, with strong English and weaker performance on other languages. Suitable for self-hosted production where commercial TTS costs are prohibitive.

Best for: Self-hosted English TTS at scale. Privacy-sensitive voice products.

Skip if: You need multilingual production-grade voice (use ElevenLabs or Cartesia).

Best voice agent platforms in May 2026

If you are building a voice agent and do not want to wire the STT + LLM + TTS + orchestration stack yourself, the platforms below ship in days what custom builds ship in quarters.

Retell AI. The most-teams default

The right default for most production voice-agent teams. Retell sits at approximately 600-780ms end-to-end across third-party benchmarks, ships with HIPAA at no extra cost, has no platform fee on top of the per-minute rate, and provides both a no-code builder and a developer SDK.

Specs:

- E2E latency: approximately 600-780ms across third-party benchmarks

- Pricing: $0.07 per minute (HIPAA included, no platform fee)

- Compliance: HIPAA, SOC 2

- Builder: no-code visual builder + developer SDK

Best for: Most production voice-agent use cases where sub-700ms is acceptable, HIPAA matters, and a managed platform reduces engineering load.

Skip if: You need sub-500ms end-to-end (use Vapi or roll your own with Cartesia + Deepgram). You need self-hosted (use Deepgram Voice Agent).

Vapi. The scale pick

The right pick at extreme scale. Vapi handles 62 million calls per month at 99.99% uptime SLA with sub-500ms average latency. The infrastructure-grade option.

Specs:

- Volume: 300M+ cumulative calls

- Uptime: 99.99% SLA

- E2E latency: sub-500ms average

- Multi-channel: voice, SMS, chat

Best for: Production voice agents at millions-of-calls-per-month scale. Multi-channel applications.

Skip if: You are below 100K calls per month (Retell is more cost-effective). You need HIPAA without platform-fee math (Retell includes it).

ElevenLabs Conversational. Voice-quality-first agent

The pick when voice quality matters more than orchestration features. ElevenLabs ships agent capabilities on top of their TTS, optimized for voice fidelity.

Specs:

- TTS: ElevenLabs v3 (sub-100ms TTFA, voice cloning)

- E2E latency: depends on STT and LLM picks

Best for: Voice products where the voice itself is the brand, character voice agents, premium consumer voice products.

Skip if: You need extreme low latency (Cartesia + Vapi/Retell). You need broad enterprise compliance (Retell HIPAA, Vapi SLA).

Deepgram Voice Agent. Self-hosted option

The pick when you want bundled pricing and the option to self-host. Deepgram packages Nova-3 + Flux + LLM routing + TTS into a managed or self-hosted bundle.

Specs:

- E2E latency: sub-400ms with Flux

- Bundled: STT + Flux + LLM routing + TTS

- Self-hosted deployment supported

Best for: Teams that want full control of the voice stack, self-hosted compliance, bundled pricing without LLM pass-through surprises.

Skip if: You want managed-service simplicity (use Retell or Vapi).

Bland AI. Outbound phone volume

The pick for outbound phone-volume use cases. Norm agent builder plus tiered per-minute pricing make it a strong fit for operations teams running outbound campaigns at scale.

Best for: Outbound phone agents, sales operations, structured outbound calls.

Skip if: You are building inbound conversational AI (use Retell or Vapi).

Open-source voice agent alternatives

If you do not want a managed agent platform at all, four open-source frameworks compose the STT-LLM-TTS loop yourself with realistic production latency:

- Pipecat (Daily AI). End-to-end latency ~800-950ms across community reports. Strong plugin ecosystem for Cartesia, Deepgram, ElevenLabs, OpenAI.

- LiveKit Agents. Latency ~750-900ms. The same LiveKit voice infrastructure FutureAGI’s

agent-simulateSDK is built on. Ships first-party adapters for every major STT/TTS. - Daily Bots. Daily’s hosted voice agent runtime built on Pipecat. Pricing is per-minute compute plus per-provider passthrough.

- Cartesia Line. Cartesia’s own agent runtime, optimized to keep Sonic Turbo’s 40ms TTFA on the hot path.

Trade-off: you pay platform fees on Vapi/Retell/Bland but skip the integration and reliability work. With OSS, you own the orchestration code, retry logic, barge-in detection, and the on-call when something breaks.

HIPAA tier matrix

HIPAA support is the cleanest pricing differentiator across the five managed platforms. Production teams in healthcare repeatedly hit a $1,000/mo wall on Vapi or ElevenLabs for what Retell or Bland include on the standard plan:

| Platform | HIPAA included? | Where it costs more |

|---|---|---|

| Retell AI | Yes, on standard plans, self-serve BAA | No upcharge |

| Bland AI | Yes, on Build/Scale plans | Plan-tier upgrade |

| Deepgram Voice Agent | Enterprise contract only | BAA via sales |

| Vapi | $1,000/mo add-on | Largest gotcha in the category |

| ElevenLabs Conversational | Enterprise + Zero Retention Mode required | Reportedly $1,000/mo + Enterprise contract |

If your product is in a regulated vertical, this single matrix often dominates the pick.

Vendor WER vs independent benchmarks

Every STT vendor publishes WER numbers on their own benchmark suite. Those numbers do not match what you will see on your traffic. The neutral Artificial Analysis AA-WER index puts Nova-3 at ~18.3% on real-world audio (vs Deepgram’s self-reported 6.84%) and Universal-2 at 5.65-6.7% on cleaner segments (vs AssemblyAI’s self-reported 14.5% on harder material). Run a domain reproduction with your prompts, your accents, and your background-noise distribution before picking.

End-to-end latency budget. The math

Voice agents have a hard latency target: sub-300ms is the ITU-T G.114 threshold for natural conversation. Sub-700ms is the practical production threshold most use cases accept. Hitting either requires component picks that compose to the budget.

The breakdown:

| Component | Typical latency range | Sub-300ms pick | Sub-700ms pick |

|---|---|---|---|

| STT | 200-300ms | Deepgram Nova-3 (sub-300) | Deepgram Nova-3 or AssemblyAI |

| LLM inference | 100-300ms | GPT-5 mini or Gemini 3.1 Flash | GPT-5.5, Claude Opus 4.7, etc. |

| TTS first audio | 40-200ms | Cartesia Sonic Turbo (40ms) | ElevenLabs v3 (sub-100ms) |

| Orchestration overhead | 50-100ms | Tight platform-native | Standard platform |

| Total | sum of above | ~400ms best-case round-trip | ~700ms practical round-trip |

The 40ms Cartesia Sonic Turbo TTFA is what makes sub-300ms reachable. Without it, the rest of the stack does not have enough budget. A typical production stack hitting sub-700ms uses Deepgram Nova-3 (300ms) + GPT-5.5 (200ms) + ElevenLabs Flash v2.5 (~75ms) + 100ms orchestration.

Cost at scale: what 100K minutes/month actually costs

Per-minute list price hides the real production cost. By May 2026 there are now five distinct pricing models depending on which path you pick: managed flat-fee (Retell, Bland), BYO with passthrough (Vapi), pure native audio (OpenAI Realtime API), self-hosted with engineering load (Pipecat + own GPUs), and bundled enterprise (Deepgram Voice Agent).

| Stack | Component pricing | Estimated monthly cost (100K min) |

|---|---|---|

| Retell managed | $0.07/min flat (LLM + STT + TTS bundled) | $7,000 |

| Vapi BYO + Deepgram + GPT-5.5 + ElevenLabs Flash | $0.05/min platform + $0.0043/min STT + ~$0.02/min text LLM + ~$0.04/min TTS (1.5x retry buffer) | $11,500-14,000 |

| OpenAI Realtime API alone (gpt-realtime-1.5) | $32/M input, $64/M output audio tokens; ~3K tokens per min | ~$15,000-25,000 |

| Bland AI Build/Scale tier | Tier-bundled pricing | $6,000-9,000 |

| Self-hosted (Whisper + Claude API + Cartesia + Pipecat) | GPU rental ~$0.001/min + Claude ~$0.025/min + Cartesia ~$0.03/min | $6,000-7,500 + on-call cost |

| Deepgram Voice Agent (enterprise) | $0.075/min bundled, growth concurrency 60 NA / 45 EU | $7,500 + enterprise contract |

The honest framing: under 100K min/month, Retell’s flat $0.07/min usually wins on total cost of ownership because the engineering time saved on LLM-passthrough optimization, retry tuning, and HIPAA paperwork dominates the per-minute delta. Above 1M min/month, BYO economics flip and Vapi or self-hosted starts to dominate. The crossover depends on retry rate and engineering cost.

The Realtime API path is the highest-cost option on this table and the right pick only when prosody matters more than per-minute price (mental health, accessibility, accent-sensitive products). For free-form long conversations, the classic STT-LLM-TTS pipeline still has better task-completion telemetry per public benchmarks.

Decision framework

Choose Retell AI if:

- You are building most-team production voice agents.

- Sub-700ms end-to-end is acceptable.

- HIPAA matters (it is included).

- You want a managed platform with no-code option.

Choose Vapi if:

- You are at scale (>1M calls/month).

- 99.99% uptime SLA is required.

- You need multi-channel (voice + SMS + chat) in one platform.

Choose Deepgram Voice Agent if:

- You want self-hosted deployment.

- You want bundled pricing without LLM pass-through surprises.

- Sub-400ms with Flux end-of-turn detection matters.

Choose ElevenLabs Conversational if:

- Voice quality is the brand.

- Voice cloning fidelity is core to the product.

Choose Bland AI if:

- Outbound phone volume is the workload.

- Norm agent builder fits your operations team.

Roll your own (Cartesia + Deepgram + LLM) if:

- You need sub-300ms end-to-end.

- You have the engineering team to build orchestration.

- The platforms do not support your specific stack (custom LLM, vertical compliance).

Common mistakes when picking voice AI components in May 2026

-

Picking TTS by quality and ignoring latency. ElevenLabs v3 is gorgeous; if you need sub-300ms end-to-end you need Cartesia anyway. Pick latency first when latency is binding.

-

Skipping turn-taking detection. Generic STT APIs do not handle end-of-turn. Voice agents without Flux or equivalent talk over users or sit silent. Worth more than WER accuracy gains.

-

Ignoring the LLM as a latency cost. A 300ms STT plus a 1500ms LLM is not a 300ms voice agent. Pick a fast LLM (GPT-5 mini, Gemini 3.1 Flash) for sub-300ms targets.

-

Building from scratch without budget reality. Custom voice agent stacks take 3-6 months of engineering to reach Vapi or Retell quality. Use a platform unless your stack is genuinely unsupported.

-

Trusting list latency without measuring. Vendor latency numbers are best-case. Measure your real production p50 and p95 latency before committing.

How Future AGI fits

Voice agents fail in production for the same reasons text agents fail. Hallucinations, retry loops, accent edge cases, off-policy responses, prompt injection through transcript contamination. Future AGI’s reliability stack covers voice agents the same way it covers text agents:

- Simulation framework generates voice scenarios (accents, background noise, interruptions, ambiguous prompts) before you ship.

- Turing eval models score every voice agent output across groundedness, hallucination, tool-call accuracy, accent handling, and 50+ production failure modes.

- Runtime guardrails block bad outputs at the gateway in under 100ms (the latency budget for voice agents requires this is fast).

- Error feed clusters live failures so you see “47 instances of accent-X failing on intent-Y” instead of 47 unrelated tickets.

- Optimizer auto-rewrites prompts and policies and re-validates against the regression set.

For voice specifically, the eval models include accent-handling, sentiment-consistency, and tool-call-accuracy axes that text-only frameworks miss.

Sources

STT primary

- Deepgram Nova-3 announcement (6.84% median streaming WER)

- Deepgram Flux conversational STT (model-integrated end-of-turn detection)

- AssemblyAI Universal-2 research blog

- Google Cloud Chirp speech-to-text docs

- OpenAI Whisper Large V3 model card

- ElevenLabs Scribe v2 Realtime

TTS primary

- Cartesia Sonic Turbo (40ms TTFA, primary docs)

- ElevenLabs Flash v2.5 latency (~75ms model inference)

- OpenAI Realtime API + gpt-realtime-1.5 pricing

- Hume Octave

- PlayHT docs

Voice agent platforms

- Vapi (300M+ cumulative calls, 99.9% uptime, sub-500ms claim)

- Retell AI Latency Face-off 2025 (TTFT 180ms, E2E 620ms)

- Retell actual-latency API (p50/p90/p95/p99 telemetry)

- Bland AI pricing tiers

- Deepgram Voice Agent pricing ($0.075/min)

- ElevenLabs Conversational AI HIPAA requirements

Independent benchmarks + standards

- Artificial Analysis AA-WER index (real-world WER)

- ITU-T Recommendation G.114 (one-way transmission time)

Open-source agent frameworks

See also: Best LLMs of May 2026 for the LLM brain in your voice agent. Previous voice post: Best Voice AI of April 2026.

Frequently asked questions

What is the best speech-to-text model in May 2026?

What is the best text-to-speech model for voice agents in May 2026?

What is the best voice agent platform in May 2026?

What end-to-end latency does a production voice agent need in May 2026?

Should I build a voice agent stack from scratch or use a platform?

What is Deepgram Flux and why does turn-taking detection matter?

Best Voice AI April 2026: compare OpenAI Realtime API, Deepgram, Cartesia, ElevenLabs, Vapi, and Retell for STT, TTS, latency, and voice agents.

Best Voice AI March 2026: compare Deepgram, Cartesia, ElevenLabs, Vapi, and Retell across STT, TTS, latency, orchestration, and voice agents.

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.