Best Voice AI Models in March 2026: STT, TTS, and Voice Agent Stack

Best Voice AI March 2026: compare Deepgram, Cartesia, ElevenLabs, Vapi, and Retell across STT, TTS, latency, orchestration, and voice agents.

Table of Contents

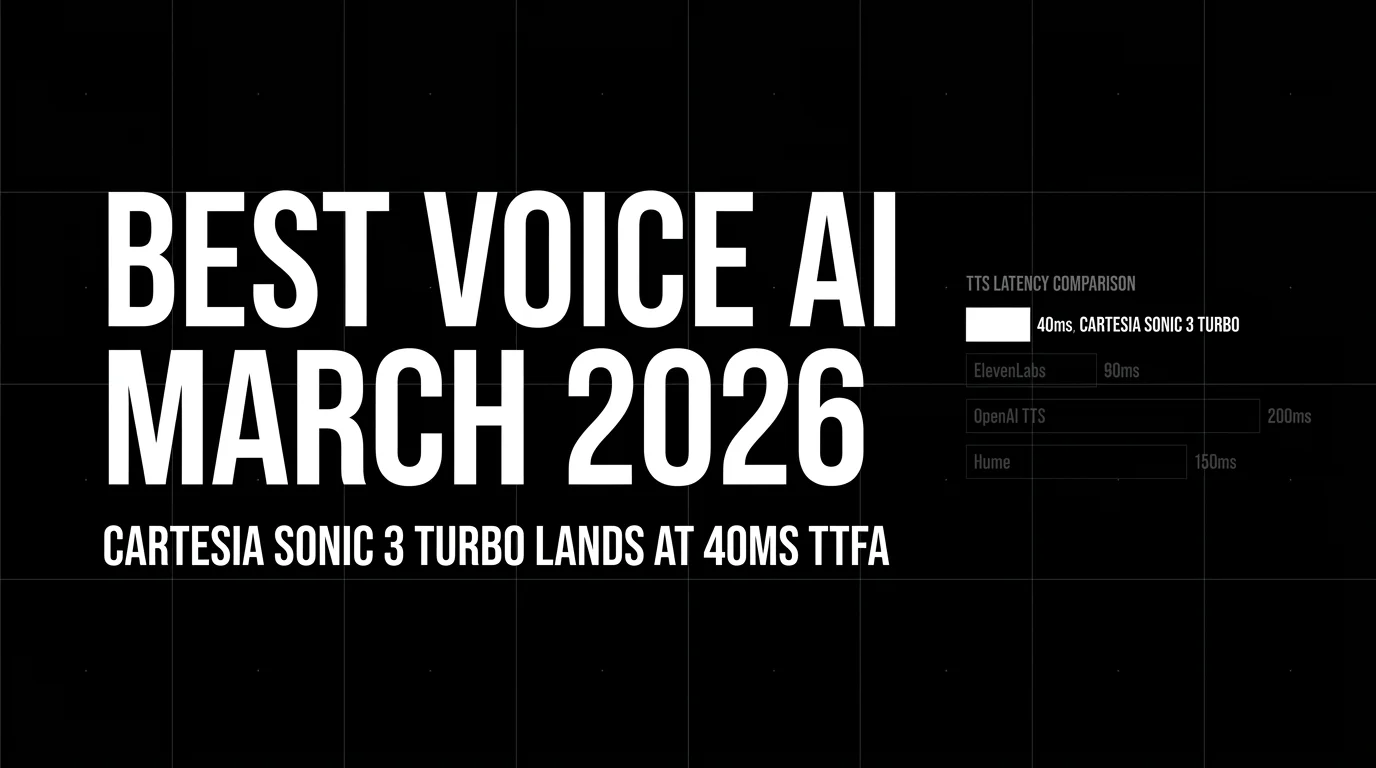

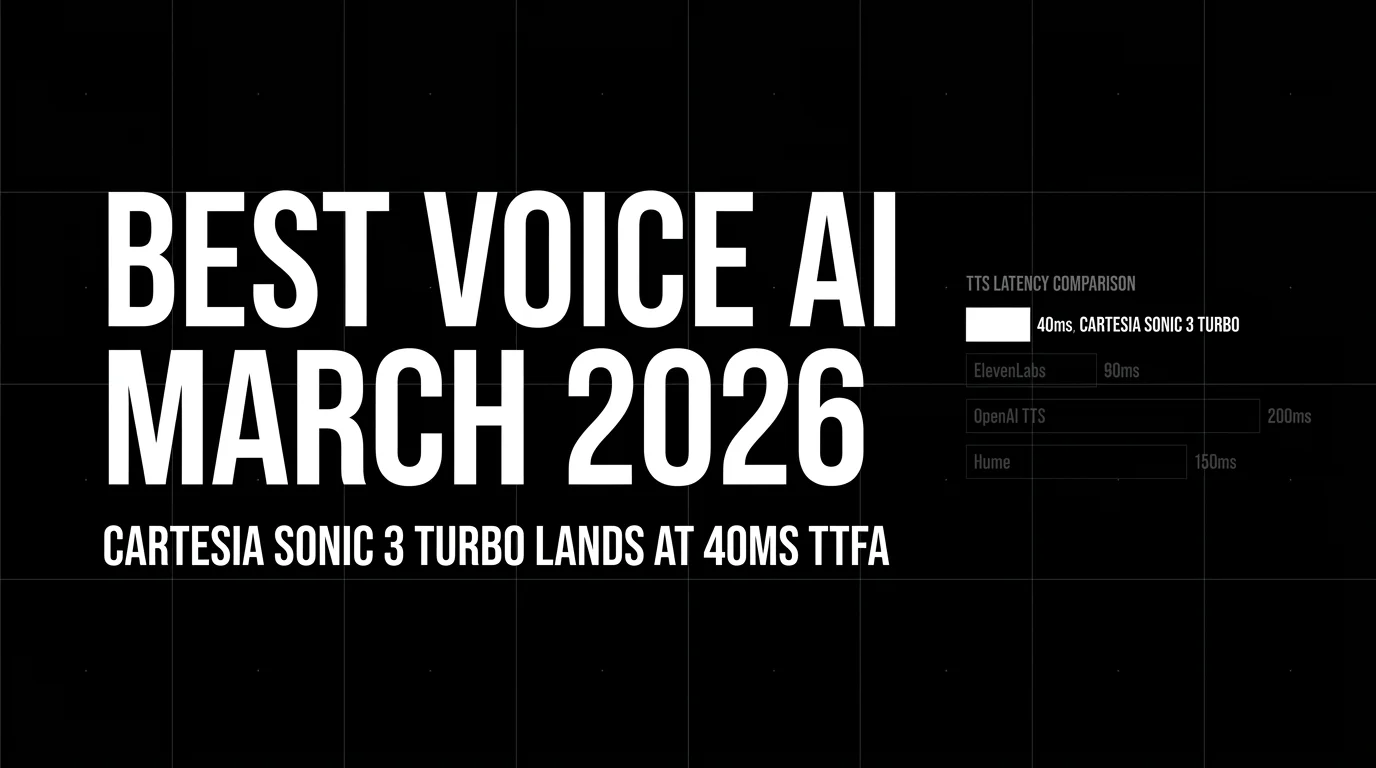

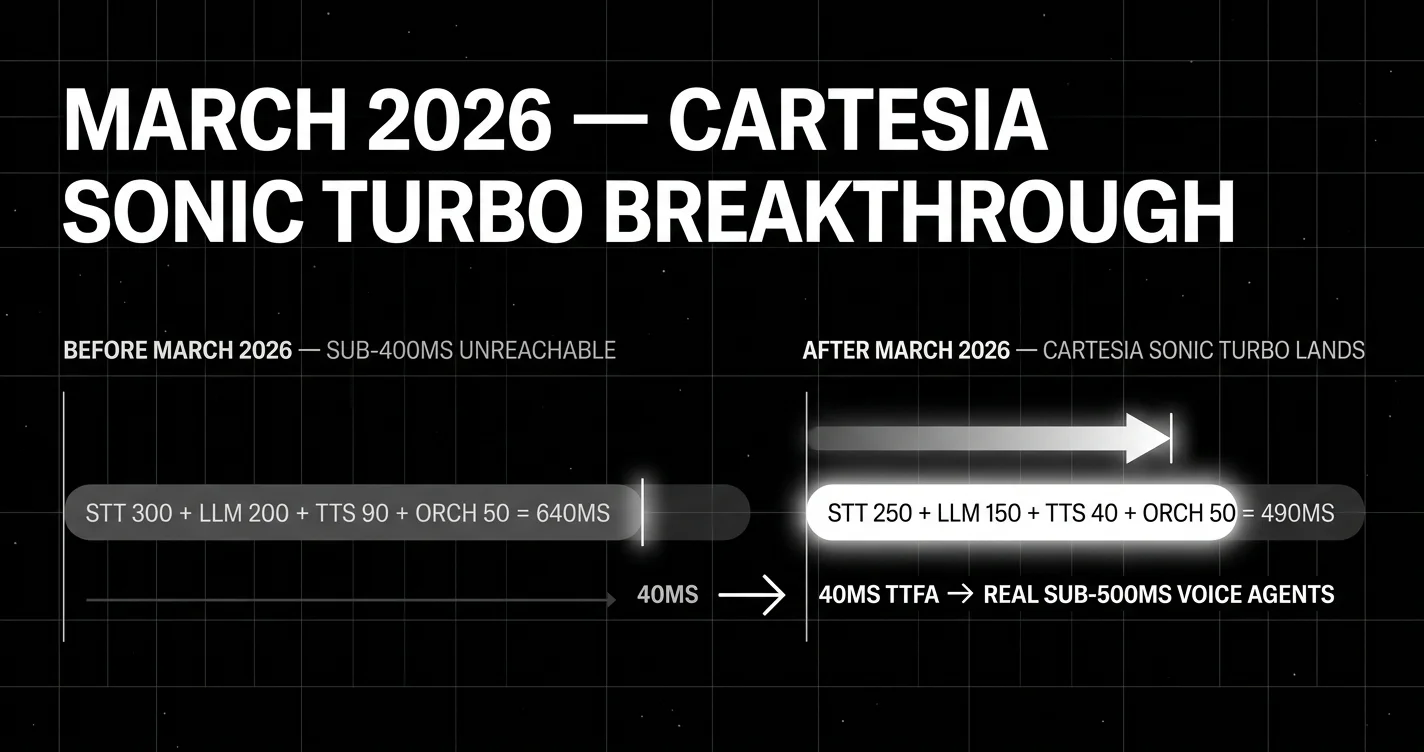

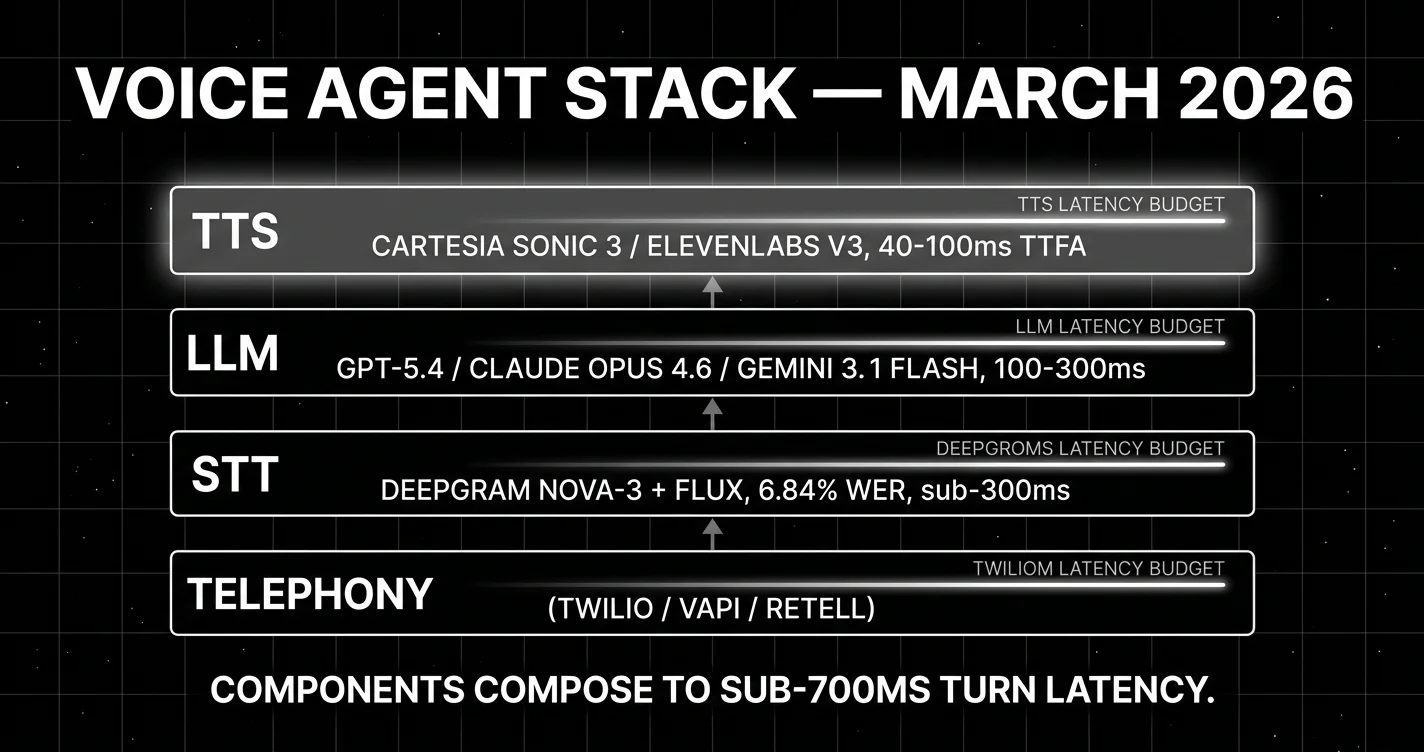

March 2026 is the month before native audio LLMs. The voice-agent stack is STT-LLM-TTS, the components are mature, and sub-700ms end-to-end latency is reachable on managed platforms. Cartesia Sonic Turbo’s 40ms time-to-first-audio (TTFA), launched in March, makes sub-300ms agents reachable for the first time. This guide picks the components for production voice in March.

TL;DR: Best voice AI per layer, March 2026

| Layer | Best pick | Why | Pricing |
|---|---|---|---|
| Streaming STT | Deepgram Nova-3 | 6.84% WER, sub-300ms streaming | $0.0077/min |
| STT with intelligence | AssemblyAI Universal-2 | Streaming + summarization, entity, sentiment | ~$0.0025/min base |
| Batch accuracy across rare languages | Google Cloud Chirp | 11.6% WER, 125+ languages | varies |
| Open-source STT | Whisper Large V3 | 7.4% WER avg, 99+ languages | self-host |
| Turn-taking detection | Deepgram Flux | Purpose-built end-of-turn detection | bundled |
| TTS for sub-300ms agents | Cartesia Sonic Turbo (NEW Mar) | 40ms TTFA | premium |
| TTS quality and cloning | ElevenLabs v3 Multilingual | 32+ languages, voice cloning | premium |
| Voice agent platform default | Retell AI | ~600-780ms E2E, HIPAA included | $0.07/min |
| Voice agent at scale | Vapi | 300M+ cumulative calls, 99.9% uptime | varies |

If you only read one row: Cartesia Sonic Turbo (40ms TTFA, new in March) is the structural change of the month. Sub-300ms end-to-end voice agents are reachable for the first time. The classic stack (Nova-3 + GPT-5.4/Gemini Flash + Cartesia, orchestrated by Retell or Vapi) hits sub-700ms reliably.

The story of voice AI in March 2026

March was a setup month. The components were already strong (Whisper, ElevenLabs, Deepgram, Vapi), but two things moved into place during March:

Cartesia Sonic Turbo launched. The Turbo variant ships 40ms TTFA, down from 90ms on the standard Sonic 3. That single number is what makes sub-300ms end-to-end voice agents reachable. Without Turbo, the latency budget did not have room for STT, LLM, and TTS to compose under 300ms. Worth flagging: the only public load-test of Sonic Turbo’s 40ms claim under traffic comes from Together AI co-marketing material, not an independent third-party benchmark. Run your own load test if you intend to depend on the 40ms floor at concurrency.

Deepgram Flux became the production default for end-of-turn detection. Generic STT APIs do not handle turn-taking, which is the difference between a voice agent that talks over the user and one that does not. Flux paired with Nova-3 fixes the gap. Multilingual Flux GA followed on April 29 with mid-call language switching across 10 languages.

The classic STT-LLM-TTS pipeline is the default production architecture in March. OpenAI’s gpt-realtime-1.5 speech-to-speech endpoint on the Realtime API launches with GPT-5.5 on April 23, so for March, voice agents are pipelines, not single models. That makes component selection load-bearing.

What changed in March 2026 (the structural facts)

| Date | Event | Why it matters |
|---|---|---|
| Mar 1-31 | Cartesia Sonic Turbo public availability | TTFA floor moved from 90ms to 40ms. Sub-300ms voice agents become reachable. |
| Mid-March | Deepgram Flux Multilingual enters preview | End-of-turn detection is no longer English-only. |
| Mar 16 | Mistral Small 4 ships (LLM brain option) | New on-device LLM option for sub-300ms agents. |
| Throughout | ElevenLabs Flash v2.5 stable in production | ElevenLabs’ lowest-latency model at ~75ms model inference. v3 stays voice-quality-focused. |
| Throughout | Vapi 99.9% standard / 99.99% enterprise SLA | The clearest production-grade option at scale. |

Best speech-to-text models in March 2026

Deepgram Nova-3. The streaming STT default

The streaming STT default for production voice agents in March 2026. Deepgram’s Nova-3 announcement reports 6.84% median streaming WER measured across 81 hours of audio in 9 domains, with batch dropping that to 5.26% (a 1.58-point gap, the smallest of any major streaming-and-batch provider). Languages supported in streaming: 30+ at launch.

Specs: 6.84% streaming WER (vendor); ~18.3% on the independent Artificial Analysis AA-WER index; sub-300ms streaming latency; $0.0077/min streaming standard, $0.0043/min batch. Volume discounts kick in around 200K min/month.

Production reality. Customer logos publicly disclosed include NASA (ISS-to-Mission-Control transcription), Spotify, Twilio, Citi, and 1,300+ organizations in total (per Deepgram case studies). Vapi runs on Deepgram for STT inside its voice-agent stack. Production complaints on HN/Reddit cluster around two patterns: WER spikes when users speak over background music, and modest accuracy degradation on heavy-accent or domain-specific audio without keyterm prompting.

Best for: Production voice agents where end-to-end latency is binding, real-time captioning, live conversational AI, deployments above 100K minutes/month where the streaming-batch gap matters less than the absolute latency floor.

Skip if: You need bundled speech intelligence in one call (use AssemblyAI Universal-2). You need the highest batch accuracy across rare languages (use Google Cloud Chirp). You self-host above 200K min/month (Whisper economics flip).

AssemblyAI Universal-2. Best for streaming STT with bundled intelligence

AssemblyAI’s Universal-2 research blog reports 14.5% WER on its challenging mixed-content benchmark (CommonVoice + Fleurs + VoxPopuli + 60 hours of in-house call-center, podcast, broadcast, and webinar audio). The pitch is the bundled intelligence layer: summarization, entity detection, sentiment, and PII redaction ship in the same API call without per-feature surcharges, which is the line item that adds up against Deepgram for analytics-heavy voice products.

Specs: 14.5% WER on AssemblyAI’s mixed-content benchmark (vendor); 5.65-6.7% on cleaner segments via Artificial Analysis; 300-600ms streaming latency (consistently slower than Deepgram in third-party tests); $0.37/hr (~$0.0062/min) batch async, $0.47/hr (~$0.0078/min) streaming.

Production reality. Customer logos: WSJ, NBC Universal, Spotify advertising, CallRail (which reports +23% accuracy lift and 2x customer adoption of Conversation Intelligence after the Universal-2 upgrade), Veed, Descript, Podchaser, Vidyo.AI, EdgeTier. Universal-Streaming has meaningfully higher latency than Deepgram in independent tests; AssemblyAI’s strength is the depth of its transcript features, not the latency floor.

Best for: Voice agents where post-call analytics is part of the product (sentiment tracking, entity extraction, summarization). Customer-support call intelligence. Compliance-heavy verticals that need PII redaction inline.

Skip if: You only need the raw transcript (Deepgram is faster, and the bundled intelligence would go unused). End-to-end latency under 500ms is binding (Universal-Streaming runs 300-600ms).

Whisper Large V3. Best open-source self-hosted STT

OpenAI’s open-weight Whisper Large V3 is the self-hosted default for privacy-sensitive workloads. The model card reports that sequential long-form decoding beats chunked decoding by ~0.5pp WER on batch; community-published benchmarks put it at ~5-8% WER on clean English.

Specs: 7.4% WER average across mixed benchmarks; 1.55B parameters; 99+ languages; 10GB VRAM minimum; no built-in diarization; no native streaming.

Production failure modes worth knowing. Whisper has well-documented hallucination patterns under specific conditions:

- It hallucinates “Subscribe to my channel” or “Thanks for watching” on silence, a YouTube training-data bleed.

- It can enter 20-minute repetition loops on hour-long audio.

- Per Gladia’s audit, bias is 5-6x higher for non-English languages because v3 fine-tuning used auto-annotated low-resource data.

- It has no native streaming. Community workarounds chunk-and-stitch, which adds seconds of lag and boundary errors.

- Deepgram’s audit reports v3 hallucinates 4x more often than v2 on hour-long audio, with median WER spiking to 53.4% in segments where v2 holds 12.7%.
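The silence and repetition failure modes above are usually patched with a post-processing guard on each decoded segment. The sketch below is one hypothetical version, not OpenAI’s or the auditors’ method: the phrase list, the 0.6 `no_speech_threshold`, and `max_repeats` are assumptions to tune on your own audio. It assumes segment dicts with `text` and `no_speech_prob` keys, the shape the open-source `openai-whisper` package returns from `transcribe()`.

```python
import re
from typing import TypedDict


class Segment(TypedDict):
    text: str
    no_speech_prob: float


# Hypothetical phrase list: outputs Whisper is reported to emit on silence.
SILENCE_HALLUCINATIONS = {"subscribe to my channel", "thanks for watching"}


def _normalize(text: str) -> str:
    # Lowercase and drop punctuation so " Thanks for watching!" matches the list.
    return re.sub(r"[^\w\s]", "", text).strip().lower()


def filter_segments(
    segments: list[Segment],
    no_speech_threshold: float = 0.6,  # assumed cutoff, not an OpenAI default
    max_repeats: int = 2,  # keep at most this many identical segments in a row
) -> list[Segment]:
    kept: list[Segment] = []
    repeats = 0
    for seg in segments:
        norm = _normalize(seg["text"])
        # Drop a known silence phrase only when the decoder also thought the
        # window was probably silent; a real "thanks for watching" survives.
        if norm in SILENCE_HALLUCINATIONS and seg["no_speech_prob"] >= no_speech_threshold:
            continue
        if kept and _normalize(kept[-1]["text"]) == norm:
            repeats += 1
            if repeats >= max_repeats:
                continue  # likely a repetition loop: stop emitting copies
        else:
            repeats = 0
        kept.append(seg)
    return kept


segments: list[Segment] = [
    {"text": " Hello there.", "no_speech_prob": 0.1},
    {"text": " Thanks for watching!", "no_speech_prob": 0.9},
] + [{"text": " Okay.", "no_speech_prob": 0.1}] * 4
print([s["text"] for s in filter_segments(segments)])
```

On this input the guard keeps the greeting, drops the silent "Thanks for watching!", and keeps two of the four repeated segments. A guard like this hides symptoms rather than fixing the decoder, so log what it drops.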

Pricing reality. OpenAI hosts Whisper at $0.006/min, but most teams self-host on Together / Replicate / Fal.ai / their own GPUs. Self-hosted breaks even vs Deepgram around 200K minutes/month given GPU rental costs.
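The 200K-minute break-even is a fixed-versus-variable cost comparison: self-hosting pays a roughly flat monthly GPU bill, while the API charges per minute. A minimal sketch under that assumption; the $860/month GPU figure in the example is not a quoted rental price but is back-derived from this article’s own numbers (200K minutes at Deepgram’s $0.0043/min batch rate, the fair comparison since Whisper has no native streaming).

```python
def break_even_minutes(
    gpu_monthly_cost: float,
    api_price_per_min: float,
    self_host_variable_per_min: float = 0.0,
) -> float:
    """Monthly minutes above which self-hosting is cheaper than the API.

    Self-host cost = gpu_monthly_cost + minutes * self_host_variable_per_min
    API cost       = minutes * api_price_per_min
    """
    margin = api_price_per_min - self_host_variable_per_min
    if margin <= 0:
        return float("inf")  # the API is never pricier per minute, so no crossover
    return gpu_monthly_cost / margin


# Hypothetical $860/month GPU bill vs Deepgram batch at $0.0043/min.
print(round(break_even_minutes(860.0, 0.0043)))
```

Because the relationship is linear, doubling the GPU bill doubles the break-even volume, which is why the crossover moves so much with hardware choice.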

Best for: Privacy-sensitive workloads where audio cannot leave your infrastructure. Edge inference. Self-hosted deployments above 200K min/month. Languages where Whisper’s coverage matters more than its accuracy ceiling.

Skip if: You need real-time streaming (it has none). You serve hour-long audio (the repetition-loop failure mode is real). You need production support and an SLA.

Deepgram Flux. Best for end-of-turn detection

Deepgram Flux is the model-integrated end-of-turn detection layer that turns generic STT into voice-agent-ready STT. Generic STT APIs return transcripts but do not signal when the user has actually finished speaking, which is the difference between a voice agent that talks over the user and one that does not. Flux detects end-of-turn in under 400ms and pairs natively with Nova-3.
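For contrast, here is the crude baseline that pipelines without a model-integrated turn signal fall back on: end the turn after a fixed stretch of silence reported by a voice-activity detector (VAD). This is a hypothetical sketch, not how Flux works and not Deepgram’s API; the 20ms frame size and 700ms timeout are assumptions. Its weakness is the reason Flux exists: a user who pauses mid-sentence longer than the timeout gets cut off, and raising the timeout adds that wait to every turn.

```python
from dataclasses import dataclass, field


@dataclass
class SilenceEndpointer:
    """Naive end-of-turn detector: the turn ends after timeout_ms of silence."""

    frame_ms: int = 20     # assumed VAD frame size
    timeout_ms: int = 700  # assumed silence timeout; tune per product
    _silence_ms: int = field(default=0, init=False)
    _heard_speech: bool = field(default=False, init=False)

    def push(self, is_speech: bool) -> bool:
        """Feed one frame's VAD decision; return True when the turn is judged over."""
        if is_speech:
            self._heard_speech = True
            self._silence_ms = 0
            return False
        if not self._heard_speech:
            return False  # silence before the user starts talking is not a turn end
        self._silence_ms += self.frame_ms
        if self._silence_ms >= self.timeout_ms:
            # Reset so the next utterance starts a fresh turn.
            self._heard_speech = False
            self._silence_ms = 0
            return True
        return False


# 200ms of speech followed by 800ms of silence, in 20ms frames.
endpointer = SilenceEndpointer()
frames = [True] * 10 + [False] * 40
turn_ends = [i for i, is_speech in enumerate(frames) if endpointer.push(is_speech)]
print(turn_ends)
```

The detector fires on frame 44, exactly 700ms into the silence. Every turn pays that 700ms before the agent can respond, which is the latency a model-integrated end-of-turn signal is meant to recover.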

Specs: Sub-400ms end-of-turn detection. 50 concurrent connections on the standard tier (Deepgram ran an “OktoberFLUX” promo in October 2025 with free Flux access). Multilingual GA shipped April 29, 2026, after this guide’s March window, covering 10 languages with mid-call switching.

Partner integrations: Vapi, LiveKit Agents, Pipecat, Cloudflare Workers AI, Jambonz. Most of these inherit Flux through their Deepgram STT integration.

Best for: Any production voice agent where the user-speaks-then-pauses cadence matters. Customer support, scheduling agents, anything multi-turn.

Skip if: Your product is transcription-only (no agent turn-taking). You self-host STT (Flux is Deepgram-only).

Best text-to-speech models in March 2026

Cartesia Sonic Turbo. The TTS latency leader at 40ms TTFA (NEW in March)

The structural addition of March 2026. 40ms TTFA opens sub-300ms voice agents.

Specs: 40ms TTFA Turbo (90ms standard), 15+ languages, voice cloning, streaming output.

Best for: Sub-300ms voice agents, real-time interactive applications, telephony.

Skip if: Voice quality and cloning matter more than latency.

ElevenLabs v3 Multilingual. Best for voice quality and cloning

The voice-quality and cloning leader.

Specs: 32+ languages, best-in-category voice cloning. v3 is not real-time-optimized; for sub-100ms latency, use Flash v2.5 (~75ms model inference).

Best for: Creator content, audiobook generation, branded voice products.

OpenAI gpt-4o-mini-tts and gpt-realtime. Best for LLM-controlled instructable voice

The instructable-voice pick.

Best for: OpenAI ecosystem default, LLM-controlled voice character.

Hume Octave. Best for emotion-sensitive voice content

The emotion-optimized pick.

Best for: Mental health products, character voice for games, emotion-sensitive content.

PlayHT. Best for conversational long-form audio

The conversational long-form pick.

Best for: Podcast and audiobook generation.

Sesame Maya and Miles. Best open-source English TTS

The open-source picks. English-strong.

Best for: Self-hosted English TTS at scale.

Best voice agent platforms in March 2026

Retell AI. Most-teams default

The right platform for most teams in March 2026 unless you have specific scale or self-host requirements. Retell publishes a latency face-off benchmark reporting TTFT of 180ms, end-to-end of 620ms, barge-in at 140ms, jitter at 45ms standard deviation, and stream continuity at 99.7%. Worth flagging: the benchmark compares Retell to Google Dialogflow CX, Twilio Voice, and PolyAI, not to Vapi, Bland, Deepgram Voice Agent, or ElevenLabs Conversational. Methodology is Retell-controlled (10-question FAQ scenarios, US-East/West/EU, single-user and 50+ concurrent), and the page itself flags that “Google provides no latency guarantees.” Read it as Retell-vs-legacy-IVR, not Retell-vs-modern-startup.

Specs: ~620ms E2E latency. $0.07/min. HIPAA included on standard plans with self-serve BAA. No platform fee. Both no-code builder and developer SDK. Retell docs expose actual-latency telemetry per call with p50/p90/p95/p99 percentiles (example: p50 ~800ms, p90 ~1200ms in real production traffic).
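If you export per-call latencies (from Retell’s telemetry or your own logs), you can reproduce the p50/p90/p95/p99 view yourself. A minimal sketch using the nearest-rank percentile definition; the sample latencies are made up for illustration, and Retell’s own percentile method is not documented here, so its reported numbers may differ slightly from this calculation.

```python
import math


def nearest_rank_percentile(samples_ms: list[float], p: int) -> float:
    """Smallest sample with at least p% of all samples at or below it."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_report(samples_ms: list[float]) -> dict[str, float]:
    return {f"p{p}": nearest_rank_percentile(samples_ms, p) for p in (50, 90, 95, 99)}


# Illustrative per-call end-to-end latencies in milliseconds (not real data).
calls = [640, 700, 720, 760, 800, 810, 850, 900, 1200, 1900]
print(latency_report(calls))
```

With only ten calls, p95 and p99 both collapse onto the slowest call; tail percentiles need hundreds of calls before they say much about production behavior.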

Best for: Most production voice-agent use cases. Sub-700ms is acceptable. HIPAA matters and you don’t want a separate $1,000/mo add-on. You want a managed platform with both a no-code builder and a developer SDK.

Skip if: Sub-500ms is required. Self-hosted is required (use Deepgram Voice Agent). You’re at sustained 1M+ calls/month (Vapi has more concurrency headroom).

Vapi. The scale pick

The platform built for high-concurrency call volume. Vapi’s homepage reports 300M+ cumulative calls processed, 2.5M+ assistants launched, 500K+ developers, 99.9% standard uptime (99.99% on Enterprise), and sub-500ms latency on its average path. These are vendor-reported figures, not audited.

Specs: 300M+ cumulative calls, 99.9% / 99.99% uptime, sub-500ms latency claim, multi-channel (voice, SMS, chat). Pricing is per-minute compute plus provider passthrough (you pay for STT + LLM + TTS separately). HIPAA is a $1,000/mo add-on, the largest pricing gotcha in the category.

Best for: Millions-of-calls-per-month scale. Multi-channel orchestration where you need voice + SMS + chat in one platform. Teams that want maximum BYO control over the underlying STT/LLM/TTS picks.

Skip if: Below 100K calls/month (Retell is more cost-effective once you price in the platform fee). HIPAA is a hard requirement and you do not want a $1,000/mo add-on (use Retell or Bland).

Deepgram Voice Agent. Best self-hosted voice agent stack

Bundled STT-LLM-TTS stack from Deepgram with sub-400ms end-to-end latency when paired with Flux. Pricing per the Deepgram pricing page is $0.075/min, with growth-tier concurrency at 60 NA / 45 EU. Self-hosted deployment is available via enterprise contract, which is the differentiator vs Vapi/Retell. HIPAA is enterprise-contract-only.

Best for: Self-hosted compliance requirements (regulated verticals where audio cannot leave your infrastructure). Bundled pricing without per-call LLM pass-through. Teams already on the Deepgram STT contract who want to keep the stack vertically integrated.

Skip if: You want a managed platform with the lowest engineering load (use Retell). You need flexibility to mix non-Deepgram components (use Vapi).

ElevenLabs Conversational. Best voice-quality-first agent platform

The voice-quality-first agent platform, with ElevenLabs v3 TTS bundled. The pitch is the voice itself, not the latency floor. Per ElevenLabs’ HIPAA docs, HIPAA support requires an Enterprise plan with Zero Retention Mode, and the add-on reportedly costs $1,000/mo on top.

Best for: Voice products where voice is the brand: character voice agents, premium consumer products, audiobook-style read-aloud experiences. Multi-language consumer voice across 32+ languages.

Skip if: Latency is binding (Cartesia + Vapi/Retell is faster). HIPAA is required and you don’t want an Enterprise contract. You need predictable character-based pricing (premium voices charge 2x credits, failed generations consume credits, and real budgets run 1.4-1.7x list).

Bland AI. Best for outbound phone agents at scale

Outbound phone volume. Norm agent builder. Tiered pricing: Start (10 concurrent / 100 calls/day), Build (50 / 2,000), Scale (100 / 5,000), Enterprise (unlimited). HIPAA included on Build and above.

Best for: Outbound phone agents at scale.
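For outbound campaigns, concurrency caps (the Bland tiers above, or Deepgram Voice Agent’s 60 NA / 45 EU growth-tier limits) often bind before per-minute price does. Little’s law gives a quick estimate: average concurrent calls = arrival rate × average call duration. A minimal sketch, assuming calls are spread evenly over a dialing window and adding a hypothetical 1.5x peak factor for bursts; the tier table mirrors the Bland limits listed above.

```python
import math

# Bland AI tiers as listed above: (name, max concurrent calls, max calls per day).
# Enterprise (unlimited) is the fallback when no listed tier fits.
BLAND_TIERS = [("Start", 10, 100), ("Build", 50, 2_000), ("Scale", 100, 5_000)]


def required_concurrency(
    calls_per_day: int,
    avg_call_minutes: float,
    dialing_window_hours: float,
    peak_factor: float = 1.5,  # assumed headroom for bursts above the average
) -> int:
    """Little's law (L = lambda * W) scaled by a peak factor, rounded up."""
    arrival_per_min = calls_per_day / (dialing_window_hours * 60)
    return math.ceil(arrival_per_min * avg_call_minutes * peak_factor)


def smallest_bland_tier(calls_per_day: int, concurrency: int) -> str:
    for name, max_concurrent, max_daily in BLAND_TIERS:
        if concurrency <= max_concurrent and calls_per_day <= max_daily:
            return name
    return "Enterprise"


# Hypothetical campaign: 1,800 calls/day, 3-minute average, 8-hour dialing window.
needed = required_concurrency(1_800, 3.0, 8.0)
print(needed, smallest_bland_tier(1_800, needed))
```

This campaign needs 17 concurrent lines, which rules out Start (10 concurrent, 100 calls/day) and lands on Build.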

Open-source agent platforms (no platform fees)

If you want to skip managed-platform fees and own the orchestration code, four OSS frameworks are the realistic choices in March 2026:

- Pipecat (Daily). 800-950ms E2E across community reports. Adapters for every major STT/TTS.

- LiveKit Agents. 750-900ms E2E. The same LiveKit voice infrastructure that Future AGI’s agent-simulate SDK uses.

- Daily Bots. Hosted Pipecat. Per-minute compute + provider passthrough.

- Cartesia Line. Cartesia’s runtime, optimized for Sonic Turbo’s 40ms TTFA on the hot path.

Trade-off: you skip platform fees but own the integration, retry logic, barge-in handling, and on-call when the orchestration breaks. For most teams under 100K calls/month, a managed platform like Retell is cheaper once engineering time is priced in.

HIPAA tier matrix

The cleanest pricing differentiator across managed platforms:

| Platform | HIPAA included? | Where it costs more |
|---|---|---|
| Retell AI | Yes, standard plan, self-serve BAA | No upcharge |
| Bland AI | Yes, on Build/Scale | Plan-tier upgrade |
| Deepgram Voice Agent | Enterprise contract only | Sales-led BAA |
| Vapi | $1,000/mo add-on | Largest gotcha in the category |
| ElevenLabs Conversational | Enterprise + Zero Retention | Reportedly $1,000/mo + Enterprise |

If your product is in healthcare or any regulated vertical, this single matrix often dominates the pick.

STT comparison in March 2026

| Provider | Streaming WER (vendor) | AA-WER (independent) | Streaming latency | Pricing |
|---|---|---|---|---|
| Deepgram Nova-3 | 6.84% | ~18.3% | sub-300ms | $0.0077/min streaming ($0.0043/min batch) |
| AssemblyAI Universal-2 | 14.5% | 5.65-6.7% (cleaner segments) | 300-600ms | $0.47/hr (~$0.0078/min) streaming |
| Google Cloud Chirp 3 | 4-7% (clean studio) | 10-15% | 300-600ms | $0.016/min real-time |
| Whisper Large V3 | n/a (batch only) | 5-8% (clean) | n/a | $0.006/min hosted; self-host breaks even ~200K min/mo |
| ElevenLabs Scribe v1 | content-production focused | not yet ranked | batch | bundled credits |

The vendor-vs-AA-WER gap is the trust signal. Vendors publish WER on benchmark suites they may have trained on; the Artificial Analysis index runs an independent suite on real-world audio (AgentTalk, VoxPopuli-Cleaned, Earnings22-Cleaned). Before you commit, reproduce WER on a sample of your own audio that matches your users’ accents and noise conditions.
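Reproducing WER on your own audio needs only reference transcripts and a word-level edit distance: WER = (substitutions + deletions + insertions) / number of reference words. A minimal sketch; the lowercase-and-strip-punctuation normalization is an assumption, and published benchmarks apply their own text normalizers, so absolute numbers will not match vendor tables exactly.

```python
import re


def _words(text: str) -> list[str]:
    # Assumed normalization: lowercase, drop punctuation except apostrophes, split.
    return re.sub(r"[^\w\s']", "", text.lower()).split()


def wer(reference: str, hypothesis: str) -> float:
    ref, hyp = _words(reference), _words(hypothesis)
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] = edit distance from the previous ref prefix to hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # substitution, or match when cost is 0
            )
        prev = curr
    return prev[-1] / len(ref)


# Two substituted words out of seven reference words.
print(wer("book a table for two at seven", "book the table for two at eleven"))
```

Run it over a few hundred utterances from your own traffic and average per word, not per utterance, so long calls weigh in proportionally.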

Whisper-specific failure modes worth knowing in production: it hallucinates “Subscribe to my channel” or “Thanks for watching” on silence (YouTube training data bleed), can enter 20-minute repetition loops on long-form audio, and has no native streaming which makes it unsuitable for conversational voice agents without a chunking workaround.

TTS comparison in March 2026

| Provider | TTFA / latency | Voices | Languages | Voice cloning | Pricing |
|---|---|---|---|---|---|
| Cartesia Sonic Turbo | 40ms TTFA (NEW Mar) | premium voice library | 15+ | yes, 5-second sample | premium tier |
| Cartesia Sonic 3 (standard) | 90ms TTFA | premium voice library | 15+ | yes | premium tier |
| ElevenLabs v3 Multilingual | quality-first, not real-time-optimized | full voice library | 32+ | best-in-category | premium credits |
| ElevenLabs Flash v2.5 | ~75ms model inference | full voice library | 32+ | yes | premium credits |
| OpenAI gpt-4o-mini-tts | ~200ms TTFA | 50+ voices | 50+ | no | ~$0.015/min (estimated) |
| Hume Octave | 150ms TTFA | emotion-tagged | English-strong | yes | premium tier |
| PlayHT | 250ms TTFA | conversational long-form | 30+ | yes | tiered |
| Sesame Maya / Miles (OSS) | varies (self-hosted) | 2 base voices | English-strong | n/a | free |

The two ElevenLabs models are different products. v3 Multilingual is built for voice quality and is explicitly not real-time-optimized. Flash v2.5 is built for low latency. For voice agents, pair Flash v2.5 (~75ms) with the rest of the stack; for content production or premium consumer voice, use v3.

ElevenLabs character pricing has known gotchas. Premium voices charge 2x credit, failed generations consume credits, and real production budgets typically run 1.4-1.7x list price after retries and cloning calls. Build the buffer in.

End-to-end latency budget in March 2026

| Component | Sub-300ms pick | Sub-700ms pick |
|---|---|---|
| STT | Deepgram Nova-3 | Deepgram Nova-3 |
| LLM | GPT-5.4 Mini or Gemini 3.1 Flash | GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro |
| TTS | Cartesia Sonic Turbo (40ms) | ElevenLabs Flash v2.5 (~75ms) |
| Orchestration | Tight platform-native | Standard platform |
| Total | ~300ms achievable | ~700ms achievable |

Cartesia Sonic Turbo’s 40ms TTFA is what makes sub-300ms reachable in March 2026. Without it, the rest of the stack runs out of latency budget.
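The table’s logic is that latency on the critical path is roughly additive: the agent cannot start speaking until STT finalizes the turn, the LLM produces its first tokens, and TTS returns first audio. A minimal sketch of that budget; the STT, LLM, and network figures are hypothetical placeholders to replace with your own measured p50s, and only the 40ms (Sonic Turbo) and 90ms (Sonic 3) TTFA values come from this article.

```python
# Hypothetical per-stage latencies in ms; replace with your own measurements.
BASE_STAGES = {
    "stt_finalize": 100,      # placeholder
    "llm_first_token": 110,   # placeholder
    "network_and_glue": 40,   # placeholder
}


def total_latency(stages: dict[str, int], tts_ttfa_ms: int) -> int:
    """Serial critical path: each stage waits on the previous one, so latencies add."""
    return sum(stages.values()) + tts_ttfa_ms


def headroom(target_ms: int, stages: dict[str, int], tts_ttfa_ms: int) -> int:
    """Positive means the budget holds; negative means it is blown."""
    return target_ms - total_latency(stages, tts_ttfa_ms)


for name, ttfa in (("Sonic Turbo", 40), ("Sonic 3", 90)):
    print(name, total_latency(BASE_STAGES, ttfa), headroom(300, BASE_STAGES, ttfa))
```

With these placeholders, the 50ms TTFA difference is exactly what flips a 300ms target from met (10ms spare) to missed (40ms over). The same arithmetic shows why a 1,500ms LLM sinks any target regardless of TTS.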

Cost at scale: what 100K minutes/month actually costs

Voice agent pricing is the area where list price and real production cost diverge most. The per-minute number on the pricing page does not include LLM passthrough, character-pricing markups for TTS, retry rate, or the HIPAA add-on. Realistic monthly cost at 100K minutes/month, by stack:

| Stack | Component pricing | Estimated monthly cost (100K min) |
|---|---|---|
| Retell managed | $0.07/min flat (LLM + STT + TTS bundled) | $7,000 |
| Vapi BYO + Deepgram + GPT-5.5 + ElevenLabs Flash | Vapi $0.05/min platform + Deepgram $0.0077/min streaming + GPT-5.5 ~$0.02/min (text passthrough) + ElevenLabs ~$0.04/min (with 1.5x retry buffer) | $11,500-14,000 |
| Vapi BYO + Deepgram + Mistral Small + Cartesia Sonic Turbo | Vapi $0.05/min + Deepgram $0.0077/min streaming + Mistral ~$0.005/min + Cartesia ~$0.03/min | $9,000-10,500 |
| Bland AI Build tier | Tier-bundled pricing | $6,000-9,000 (depending on plan + minutes) |
| Self-hosted (Whisper + Claude API + Cartesia + Pipecat) | GPU rental ~$0.001/min + Claude ~$0.025/min + Cartesia ~$0.03/min + free Pipecat | $6,000-7,500 + on-call cost |

The honest framing: under 100K min/month, Retell’s flat $0.07/min usually wins on total cost of ownership because the engineering time saved on LLM-passthrough optimization, retry tuning, and HIPAA paperwork dominates the per-minute delta. Above 1M min/month, BYO economics flip and Vapi or self-hosted starts to dominate. The crossover point depends heavily on your retry rate and how much your engineers cost.
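The table is per-minute arithmetic: add the component rates, multiply by monthly minutes. A minimal sketch that reproduces the component sums; it uses Deepgram’s $0.0077/min streaming list price (a live agent streams audio), and the LLM and TTS rates are this article’s estimates, not quoted prices. The fixed `monthly_ops_cost` term is a hypothetical stand-in for engineering and on-call time, which is what moves the crossover point.

```python
# Per-minute component rates in USD, taken from the cost table above.
STACKS = {
    "Retell managed": {"platform": 0.07},
    "Vapi + Deepgram + GPT-5.5 + ElevenLabs Flash": {
        "platform": 0.05, "stt": 0.0077, "llm": 0.02, "tts": 0.04,
    },
    "Vapi + Deepgram + Mistral Small + Sonic Turbo": {
        "platform": 0.05, "stt": 0.0077, "llm": 0.005, "tts": 0.03,
    },
}


def monthly_cost(
    rates: dict[str, float], minutes: int, monthly_ops_cost: float = 0.0
) -> float:
    """Variable cost (sum of per-minute rates x minutes) plus a fixed ops cost."""
    return sum(rates.values()) * minutes + monthly_ops_cost


for name, rates in STACKS.items():
    print(f"{name}: ${monthly_cost(rates, 100_000):,.0f}")
```

With these rates the three stacks come to about $7,000, $11,770, and $9,270 per month, inside the table’s ranges. Adding a nonzero `monthly_ops_cost` to the BYO stacks shows how quickly engineering time erases their per-minute advantage at this volume.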

ElevenLabs character-pricing reality has well-documented gotchas: 2x credit charge on premium voices, failed generations consume credits, generations under 5 characters still bill at 5-character minimum. Production budgets typically run 1.4-1.7x list price after retries and edge cases. Build the buffer in.
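The multiplier is easy to estimate from your own request log. A minimal sketch that applies the three gotchas listed above (2x credits on premium voices, a 5-character billing minimum, and failed generations still consuming credits); it assumes one credit per character for standard voices, the request mix is hypothetical, and it ignores plan-specific rules, so treat it as a planning aid rather than a reproduction of ElevenLabs’ billing.

```python
from dataclasses import dataclass

MIN_BILLED_CHARS = 5     # generations under 5 characters still bill as 5
PREMIUM_MULTIPLIER = 2   # premium voices charge 2x credits


@dataclass
class TTSRequest:
    chars: int
    premium_voice: bool = False
    attempts: int = 1  # failed attempts consume credits too, so count every try


def billed_credits(req: TTSRequest) -> int:
    # Assumption: one credit per billed character for standard voices.
    per_attempt = max(req.chars, MIN_BILLED_CHARS)
    if req.premium_voice:
        per_attempt *= PREMIUM_MULTIPLIER
    return per_attempt * req.attempts


def list_multiplier(requests: list[TTSRequest]) -> float:
    """Billed credits divided by the naive 'one credit per character' estimate."""
    naive = sum(r.chars for r in requests)
    if naive == 0:
        raise ValueError("no characters requested")
    return sum(billed_credits(r) for r in requests) / naive


# Hypothetical mix: a normal line, a 3-character filler ("Hmm"),
# one premium-voice line, and one line that failed once before succeeding.
requests = [
    TTSRequest(chars=120),
    TTSRequest(chars=3),
    TTSRequest(chars=80, premium_voice=True),
    TTSRequest(chars=200, attempts=2),
]
print(f"{list_multiplier(requests):.2f}x list")
```

This hypothetical mix bills about 1.7x its raw character count, with the retried request and the premium voice doing most of the damage.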

Decision framework

Choose Retell AI for managed default. Sub-700ms, HIPAA, $0.07/min.

Choose Vapi for scale. Millions of calls per month, 99.99% Enterprise SLA.

Choose Deepgram Voice Agent for self-hosted. Bundled pricing, Flux end-of-turn.

Choose ElevenLabs Conversational for voice-quality-first products.

Roll your own (Cartesia + Deepgram + LLM) for sub-300ms. This is the new option in March 2026 thanks to Cartesia Sonic Turbo.

Common mistakes

- Picking TTS by quality and ignoring latency. Sub-300ms requires Cartesia Sonic Turbo.

- Skipping turn-taking detection. Generic STT does not handle end-of-turn, and getting turn-taking right matters more than a point of WER.

- Ignoring the LLM as a latency cost. A 300ms STT plus 1500ms LLM is not a 300ms agent.

- Using a frontier LLM (Claude Opus 4.6, GPT-5.4) when speed matters. Use the Mini or Flash variant.

- Building from scratch without a realistic timeline. Custom stacks take 3-6 months to reach platform quality.

How Future AGI fits

Future AGI provides reliability infrastructure for voice agents. Simulation generates voice scenarios. Eval models score voice outputs. Guardrails block bad outputs at the gateway. Open-source, self-hostable.

Sources

STT primary

- Deepgram Nova-3 announcement (6.84% median streaming WER)

- Deepgram Flux conversational STT

- AssemblyAI Universal-2 research blog

- Whisper Large V3 model card

- Whisper hallucination reports

TTS primary

- Cartesia Sonic Turbo 40ms TTFA (docs)

- ElevenLabs Flash v2.5 latency (~75ms model inference)

- ElevenLabs v3 (quality-first, not real-time)

Voice agent platforms

- Vapi (300M+ cumulative calls, 99.9% uptime)

- Retell AI Latency Face-off 2025 (TTFT 180ms, E2E 620ms)

- Bland AI pricing tiers

- Deepgram Voice Agent pricing ($0.075/min)

- ElevenLabs Conversational AI HIPAA requirements


See also: Best LLMs of March 2026 for the LLM brain. Next voice post: Best Voice AI of April 2026.

