Best Vector Databases for RAG in 2026: 7 Stores Compared

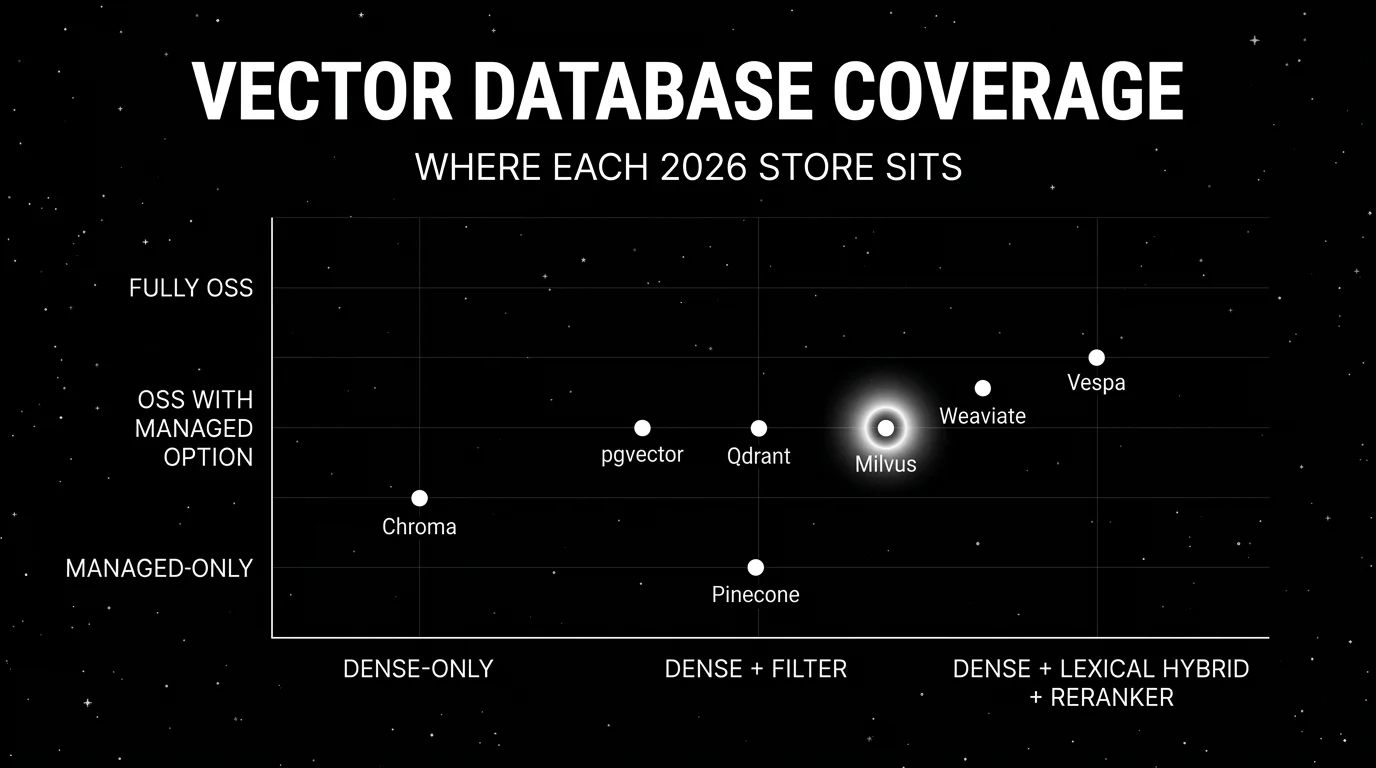

Pinecone, Milvus, Weaviate, Qdrant, pgvector, Chroma, Vespa for RAG in 2026. Compared on recall, latency, hybrid search, OSS license, and eval-friendliness.

Table of Contents

RAG quality lives or dies in retrieval. The reader model can be GPT-5 or Claude Sonnet 4.5, but if the retrieved chunks are wrong the answer will be wrong. Picking a vector database in 2026 is no longer a research decision; it is a production engineering decision with real costs in latency, recall, ops burden, and the size of the chunks the team will end up evaluating. This guide compares seven vector stores commonly evaluated in production RAG procurement, with the tradeoffs that matter when picking which one to standardize on.

TL;DR: Best vector database per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |

|---|---|---|---|---|

| Managed serverless with low ops | Pinecone | Strong dev ergonomics, namespace tenancy | Free tier; Standard from $50/mo | Closed |

| Self-hosted at large scale | Milvus | Distributed cluster with GPU indexing | Free OSS; Zilliz Cloud paid | Apache 2.0 |

| Native hybrid search and graph data | Weaviate | First-party hybrid + module ecosystem | Free OSS; Cloud 14-day trial; Flex from $45/mo | BSD-3 |

| Easy self-host with strong filters | Qdrant | Rust core, fast filtering, native hybrid | Free OSS; Cloud free tier | Apache 2.0 |

| Data already in Postgres | pgvector | One operational footprint | Free OSS | PostgreSQL License |

| Local-first dev with simplest API | Chroma | Fastest from zero to embeddings | Free OSS; Cloud paid | Apache 2.0 |

| Hybrid search at very large scale | Vespa | Production-proven at billion-scale | Free OSS; Vespa Cloud paid | Apache 2.0 |

If you only read one row: pick Pinecone when ops aversion is high. Pick pgvector when the data already lives in Postgres. Pick Qdrant when you want a fast self-hosted store with low setup cost. Everything else is workload-specific.

What a RAG-grade vector database actually needs

Pick a store that covers all six surfaces below. If a store lacks one of these surfaces, budget for adjacent infrastructure.

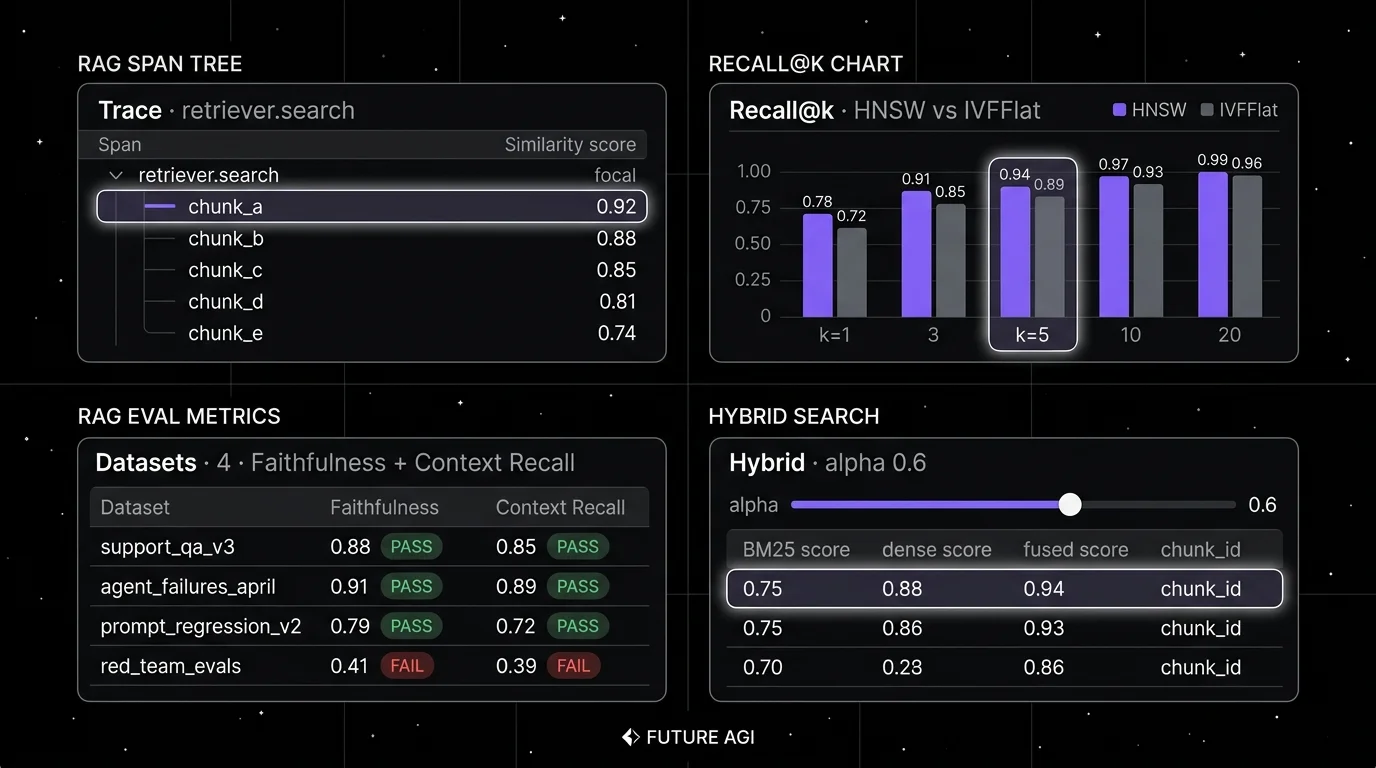

- ANN index that scales. HNSW or DiskANN at minimum, with tunable ef_search and M parameters. The graph index quality determines recall versus latency.

- Hybrid search. BM25 lexical leg fused with dense via RRF or a learned reranker. Dense-only retrieval breaks on rare terms and exact matches.

- Filter performance. Production RAG filters on tenant_id, document_type, language, freshness. The store must support pre-filtered ANN without dropping recall.

- Multi-tenancy. Namespaces, collections, or filter-based tenant isolation that does not pay an N-collection cost in memory or compute.

- Operational footprint. Backups, replication, snapshot, restore, monitoring. The story for self-hosted should not require building a control plane from scratch.

- Trace integration. Spans emit retrieved chunks, similarity scores, and reranker outputs as attributes for downstream eval. Without this, you cannot debug a regression to a specific retrieval miss.

The 7 vector databases compared

1. Pinecone: Best for managed serverless with low ops burden

Closed platform. Managed only.

Use case: RAG pipelines where the team wants serverless vector storage with namespace-level multi-tenancy and zero ops. Pinecone’s Serverless architecture separates storage and compute, billing by storage GB and read/write units. Hybrid search via sparse-dense vectors is supported.

Architecture: Managed service on AWS, GCP, and Azure with regional data planes. Serverless indexes scale storage and compute independently; Dedicated Read Nodes handle high-throughput workloads. Hybrid retrieval uses sparse-dense fusion. The Inference API option for embeddings reduces network hops. Pod-based indexes are now legacy and unavailable to new Standard or Enterprise customers as of August 2025.

Pricing: Pinecone Starter is free with included storage and read/write units (vector capacity depends on embedding dimension and metadata size). Standard is a $50/month minimum with read units, write units, and storage GB billed on top. Enterprise is custom. Verify the latest pricing page before procurement.

OSS status: Closed.

Best for: Teams that want managed vector storage with strong SDK ergonomics, do not need self-hosting, and prioritize zero ops over OSS license control.

Worth flagging: Closed platform with no self-host path. The serverless billing model (read units, write units, storage) requires modeling against actual traffic mix; small mistakes compound. Multi-region replication is enterprise-tier.

2. Milvus: Best for OSS at large scale

Open source. Apache 2.0. Zilliz Cloud managed option.

Use case: Self-hosted RAG at the 100M-10B vector range, with distributed cluster, GPU indexing for billion-scale rebuilds, and hybrid search via BM25 + dense fusion.

Architecture: Distributed cluster with separated query, data, root, and index nodes. Object-storage-backed (S3, MinIO) so storage scales independently of compute. GPU indexing supported via the milvus-cu12 image.

Pricing: Milvus is free OSS. Zilliz Cloud (managed Milvus) has free, serverless, and dedicated options; dedicated pricing depends on compute, runtime, storage, transfer, and add-ons. Verify Zilliz Cloud pricing and the Zilliz cost guide for the latest tier shape.

OSS status: Apache 2.0. Active maintenance with quarterly minor releases.

Best for: Engineering teams with the ops bandwidth to operate a distributed cluster, large datasets, and a need for OSS license control. Strong fit for media, e-commerce, and search-heavy workloads.

Worth flagging: Distributed architecture is more setup than Qdrant or pgvector. The configuration surface (etcd, Pulsar or Kafka, MinIO, multiple node types) is real. Use Zilliz Cloud if the cluster operations are not a fit.

3. Weaviate: Best for native hybrid search and graph data model

Open source. BSD-3. Weaviate Cloud managed option.

Use case: RAG where hybrid search (BM25 + dense) is non-negotiable from day one, and where the data has graph structure (cross-references between objects). Weaviate’s modules ecosystem (text2vec, generative, reranker) reduces glue code.

Architecture: Single-node or distributed; GraphQL and REST APIs. First-class hybrid search with alpha tuning between dense and lexical. Modules for embedding providers (OpenAI, Cohere, Hugging Face), reranker (Cohere Rerank), and generative (OpenAI, Anthropic) reduce wiring code.

Pricing: Weaviate is free OSS. Weaviate Cloud offers a 14-day free trial sandbox; the Flex tier starts at $45/mo plus usage and Enterprise is custom. Verify against Weaviate pricing.

OSS status: BSD-3.

Best for: Teams whose RAG depends on hybrid retrieval and want one store that handles dense, lexical, and graph relations. Strong fit for knowledge-graph-flavored workloads.

Worth flagging: Resource footprint is heavier than Qdrant for the same dataset size. The modules ecosystem is convenient but introduces vendor coupling for reranker and generative paths. Replication and backup ship in the OSS edition; Cloud tiers differ on managed SLA, retention, and disaster-recovery posture.

4. Qdrant: Best for fast self-hosted with strong filter performance

Open source. Apache 2.0. Qdrant Cloud managed option.

Use case: Self-hosted RAG where setup must be fast, filter cardinality is high (per-tenant, per-document-type), and the team wants Rust-grade performance without operating a distributed cluster.

Architecture: Single-node deployment is one binary plus configuration. Distributed cluster supported via raft consensus. Payload index for fast filter-aware ANN. Quantization (scalar, product) reduces memory by 4-32x.

Pricing: Qdrant is free OSS. Qdrant Cloud has a free tier (1 GB RAM, 4 GB disk) plus pay-as-you-go cluster pricing on top; verify Qdrant Cloud pricing.

OSS status: Apache 2.0.

Best for: Teams that want a fast self-hosted vector store with strong filter performance, native hybrid retrieval, who prefer one binary to a distributed cluster, and who value Rust’s performance characteristics.

Worth flagging: Hybrid retrieval is exposed natively through the Query API and BM25 support, with FastEmbed available for sparse and reranker integration. Distributed mode is newer than the single-node path. The OSS path is solid; advanced features (RBAC, audit logs) are Cloud-tier.

5. pgvector: Best when the data already lives in Postgres

Open source. PostgreSQL License.

Use case: Teams whose application database is already Postgres and who want to add vector search without operating a second store. pgvector adds a vector column type, IVFFlat and HNSW indexes, and <-> distance operators.

Architecture: Postgres extension. HNSW indexing landed in v0.5.0; v0.7 added halfvec, sparsevec, binary vector types, and L1 HNSW; v0.8 improved filtered ANN planning and parallel index build (0.8.2 released Feb 2026). Operators for L2, inner product, and cosine distance.

Pricing: Free OSS. Operational cost is the Postgres infrastructure; managed Postgres providers (RDS, Aurora, Neon, Supabase) all support pgvector.

OSS status: PostgreSQL License (MIT-style).

Best for: Engineering teams that prize one operational footprint, where the data has relational structure that benefits from joins, and where the dataset fits comfortably in RAM with HNSW.

Worth flagging: Teams have reported production use into tens of millions of vectors, but you should validate with your own dimensions, filter selectivity, and p95/p99 concurrency before committing. Beyond that, dedicated engines (Milvus, Weaviate, Qdrant) tend to outperform pgvector. Hybrid search requires pairing with Postgres FTS (tsvector) or pg_trgm for the lexical leg. Index rebuilds on a hot Postgres instance can be expensive.

6. Chroma: Best for local-first dev experience

Open source. Apache 2.0. Chroma Cloud managed option.

Use case: Prototypes, notebooks, and dev workflows where the goal is fastest from zero to embeddings. Chroma’s Python and JavaScript clients abstract the boilerplate; the in-process mode runs in a notebook with no server.

Architecture: Multiple client modes (in-memory, persistent, HTTP, async, Cloud). Persistent client supports local file-backed storage. Chroma Cloud entered general availability in 2024-2025. Beyond dense vector queries, Chroma supports metadata filters and document-level full-text contains and regex via where_document; sparse and hybrid search via the Advanced Search API ship in Chroma Cloud, while OSS Chroma can pair with an external sparse retriever or reranker for lexical+dense fusion.

Pricing: Free OSS. Chroma Cloud has paid tiers; verify the latest pricing page.

OSS status: Apache 2.0.

Best for: Engineers prototyping RAG, building demos, or running small production workloads where simplicity beats raw performance.

Worth flagging: Chroma is usually chosen for local-first development; teams with sustained high-concurrency workloads should benchmark it against Qdrant, Weaviate, and managed Chroma Cloud on their own data before standardizing.

7. Vespa: Best for hybrid search at billion-scale

Open source. Apache 2.0. Vespa Cloud managed option.

Use case: Search and recommendation workloads at very large scale where hybrid retrieval (BM25 + dense + learned-rank) plus low-latency feature scoring matters. Vespa is the search engine behind several large consumer products.

Architecture: Distributed cluster with content nodes, container nodes, and admin nodes. Schema-driven with declarative ranking expressions. ColBERT-style late interaction supported via tensor types.

Pricing: Vespa is free OSS. Vespa Cloud starts free for trial and moves to paid tiers; verify the latest pricing page.

OSS status: Apache 2.0.

Best for: Teams whose retrieval workload is search-engine-shaped: high concurrency, billion-scale corpora, complex ranking. Strong fit for media, e-commerce, and ad serving.

Worth flagging: Vespa has the steepest learning curve in this list. The schema and ranking expression model is more involved than Qdrant or Pinecone. For pure RAG with moderate scale, Vespa is overkill. The community is smaller than Milvus or Qdrant.

Decision framework: pick by constraint

- Zero ops, managed serverless: Pinecone, Weaviate Cloud, Qdrant Cloud, Zilliz Cloud.

- Already on Postgres: pgvector.

- Largest scale OSS: Milvus, Vespa.

- Native hybrid search: Weaviate, Vespa, Milvus, Qdrant.

- Fastest self-host setup: Qdrant.

- Notebooks and prototypes: Chroma.

- Strong filter performance: Qdrant, pgvector.

- Search-engine-shaped workload: Vespa.

Common mistakes when picking a vector database

- Picking on benchmark recall. Public benchmarks use idealized embeddings and idealized query sets. Recall on your data with your embeddings, your filter cardinality, and your concurrency is the only number that matters.

- Skipping hybrid search. Dense-only retrieval misses exact matches on rare terms. The recall lift from adding the lexical leg plus a reranker often outweighs picking a different vector DB.

- Underestimating ops cost. Self-hosted Milvus, Vespa, or Weaviate Cluster requires a control plane, monitoring, backups, and on-call. Cost equals not just infra but engineering hours.

- Ignoring multi-tenancy. Shoehorning per-tenant collections into a non-multi-tenant store leads to memory blowup. Verify the cost of N tenants at your N before committing.

- Treating chunk size as orthogonal. Chunk size, overlap, and metadata strategy affect recall more than the vector DB choice in many stacks. Run a chunking ablation before benchmarking stores.

- Pricing only the platform fee. Real cost equals platform fee plus embedding generation, storage, query volume, replication, backup, and the engineering hours to maintain index parameters.

What changed in vector databases in 2026

| Date | Event | Why it matters |

|---|---|---|

| 2025-2026 | Milvus 2.5+ release notes | GPU graph indexing and distributed-rebuild improvements continued landing across the 2.5.x line. |

| Aug 2025 | Pinecone deprecated pod-based indexes | New Standard and Enterprise customers must use serverless with regional data planes and Dedicated Read Nodes. |

| 2024-2026 | Qdrant Query API and BM25 support | Native hybrid retrieval matured from the 1.10 release through subsequent 1.x updates. |

| 2024-2026 | Weaviate replication and agent-friendly modules | Replication, backups, and module APIs made self-hosted production deployments more reliable. |

| 2024-2026 | pgvector 0.7 and 0.8 release notes | New vector types (halfvec, sparsevec, binary) and improved filtered ANN planning made Postgres viable for more RAG workloads. |

| 2024-2026 | Vespa late-interaction (ColBERT) docs | Tensor-based late interaction with reranking became easier to deploy on Vespa. |

How to actually evaluate this for production

-

Pick a labeled query set. At least 200 queries reflecting real failure modes: paraphrase, multi-hop, rare-term, ambiguous-intent. Hand-label the ground-truth chunks.

-

Index the same corpus in each candidate. Same embedding model, same chunk size, same metadata. Hold the reader model constant.

-

Measure retrieval metrics. Recall@k for k=1,3,5,10,20. MRR. p95 and p99 latency at production concurrency. Filter pass-through performance.

-

Run end-to-end RAG eval. Score Faithfulness, Context Recall, Context Precision (Ragas-style metrics) on the same labeled set. The retrieval delta dominates the answer quality delta.

-

Cost-adjust. Real cost equals platform fee plus embedding cost plus storage plus query volume. Self-hosted infra cost includes the engineer-hours to operate it. Run a 3-month projection before procurement.

Sources

- Pinecone pricing

- Milvus GitHub repo

- Zilliz Cloud pricing

- Weaviate pricing

- Weaviate GitHub repo

- Qdrant GitHub repo

- Qdrant Cloud pricing

- pgvector GitHub repo

- Chroma GitHub repo

- Vespa GitHub repo

- Vespa Cloud pricing

- Ragas RAG metrics

Series cross-link

Read next: Best RAG Evaluation Tools, Vector Databases and Knowledge Graphs for RAG, RAG Evaluation Metrics

Frequently asked questions

Which vector database is best for production RAG in 2026?

Does pgvector scale for production RAG?

What is hybrid search and why does it matter for RAG?

How do I evaluate a vector database for RAG?

Which vector databases are open source under OSI definitions?

Should I use a managed vector DB or self-host?

How does pricing compare across managed vector databases?

How do I evaluate retrieval quality across different vector databases?

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.

Best Voice AI May 2026: compare Deepgram, Cartesia, ElevenLabs, Retell, and Vapi for STT, TTS, latency budgets, and production voice agents.

Best LLMs April 2026: compare GPT-5.5, Claude Opus 4.7, DeepSeek V4, Gemma 4, and Qwen after benchmark trust broke and prices compressed fast.