Best No-Code LLM Builders in 2026: 7 Drag-and-Drop Platforms

Dify, Flowise, Langflow, n8n, Vapi, Voiceflow, Stack AI for no-code LLM apps in 2026. Compared on visual builders, agents, voice, OSS license, and pricing.


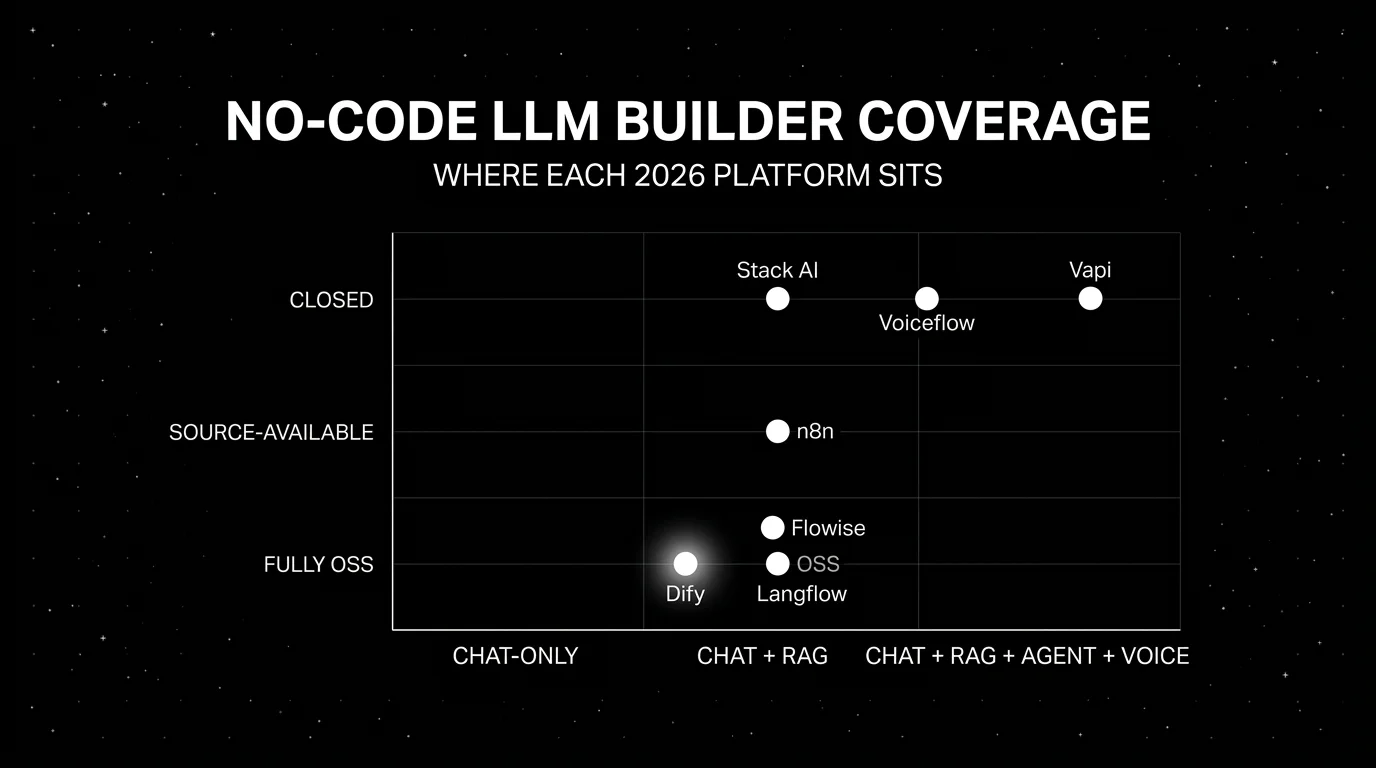

No-code LLM builders sit between the demo notebook and the production engineering team. The audience is product managers, support leads, ops teams, and engineers prototyping under deadline. The 2024 generation of these platforms was thin on observability and brittle on agents. The 2026 generation has matured: Langflow 1.10.x includes native traces, Flowise supports analytics providers including Langfuse, LangSmith, and Phoenix, and voice-native platforms like Vapi target sub-second voice-to-voice latency depending on stack. This shortlist of seven no-code builders prioritizes self-hosting, pricing transparency, tracing or export support, and production deployment paths.

Methodology: this comparison is dated May 2026, scored on six axes (canvas/versioning, multi-step agents and tools, RAG nodes, OTel/OpenInference span emission, deployment and access control, eval surface) using vendor docs, public GitHub repos, and pricing pages. We did not run head-to-head benchmarks; verify against your workflow before procurement.

TL;DR: Best no-code LLM builder per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Source-available chat and RAG | Dify | Strong UI, RAG-native, self-hostable | Sandbox free; Professional ~$59/mo | Source-available (Dify Open Source License, not OSI-approved) |
| LangChain-style flow builder | Flowise | Apache 2.0 core, large node library | OSS free; Cloud requires account | Apache 2.0 core |
| Enterprise integrations + Astra DB | Langflow | DataStax-backed, MIT, Astra DB native | OSS and Langflow Cloud free; Astra DB billed separately | MIT |
| Broader automation including LLM steps | n8n | 1000+ apps and services, AI agents | Cloud Starter 20€/mo billed annually (~$22/mo) | Sustainable Use License |
| Production voice agents | Vapi | Telephony-first, per-minute billing | Per voice minute | Closed |
| Designer-led conversation flows | Voiceflow | Conversation viz, voice + chat | Free trial; business pricing via dashboard or sales | Closed |
| Enterprise compliance (SOC 2, HIPAA) | Stack AI | Closed enterprise platform with on-prem and VPC | Free plan + custom Enterprise | Closed |

If you only read one row: pick Dify for source-available chat and RAG. Pick Vapi for production voice. Pick n8n when LLM steps are part of a broader automation surface that includes Slack, Salesforce, and webhooks.

What a 2026 no-code LLM builder actually needs

Pick a tool that covers all six surfaces below. If a platform lacks one of these surfaces, plan for an external service or custom integration.

- Visual canvas with versioning. Drag-and-drop nodes, reusable subflows, and a way to diff revisions. Visual-only with no version diff is brittle.

- Multi-step agents with tool calls. Conditionals, loops, tool-call nodes, and retry policy. A pipeline that only does input-prompt-output is too thin for 2026.

- RAG nodes. Connectors for vector stores (Pinecone, Weaviate, Qdrant, pgvector, Chroma) and document loaders for PDF, DOCX, web pages.

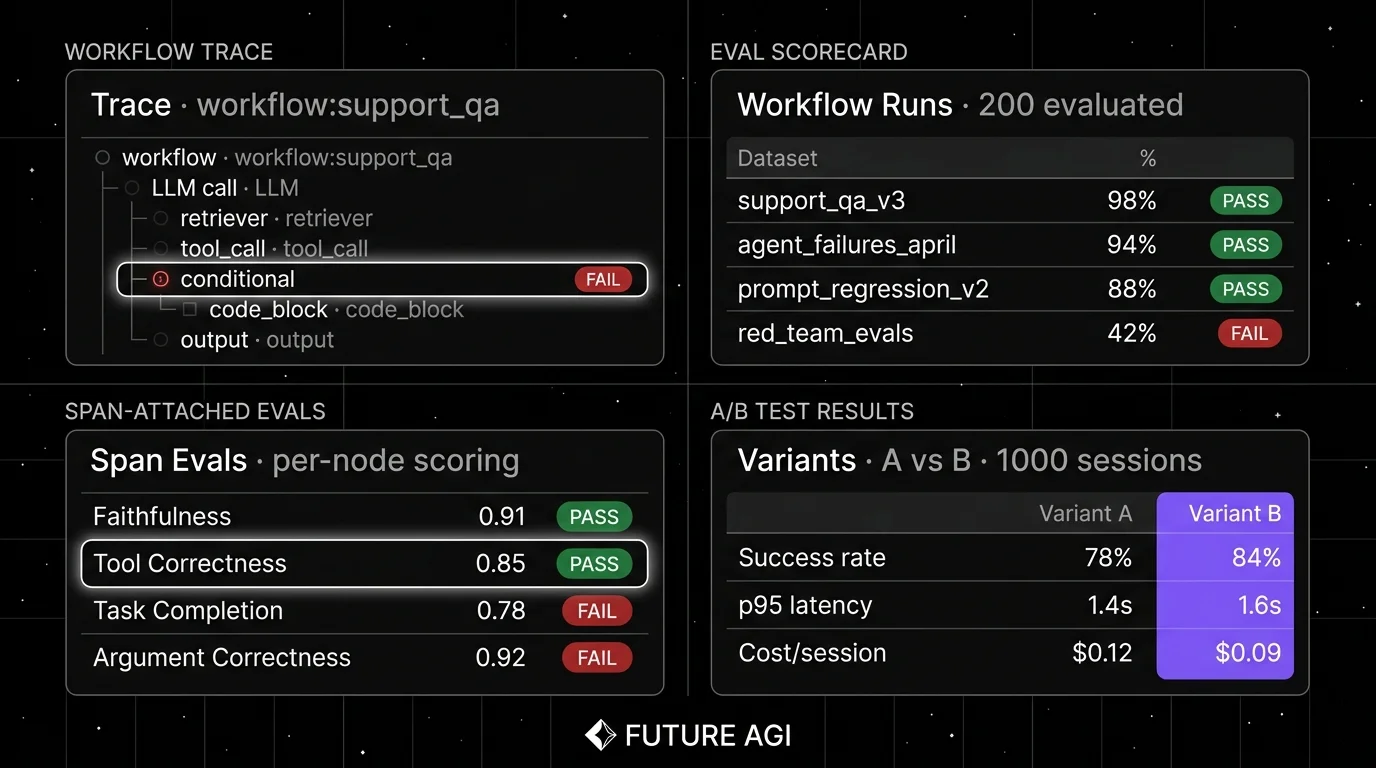

- OTel or OpenInference span emission. Every node emits a span so the workflow can be debugged in an external observability tool.

- Deployment and access control. API endpoints, webhooks, embeds, and role-based access for non-engineering users.

- Eval surface. Either built-in or via clean export to an external eval platform. For production use, require exported traces plus pass/fail or numeric eval scores.
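The six surfaces above can be turned into a simple gap check during shortlisting. The sketch below is illustrative only: the surface identifiers and the `Candidate` type are made-up names, and the example candidate is hypothetical rather than a claim about any real platform.

```python
from dataclasses import dataclass, field

# Identifiers for the six surfaces listed above (names are our own shorthand).
REQUIRED_SURFACES = (
    "canvas_versioning",
    "multi_step_agents",
    "rag_nodes",
    "span_emission",
    "deployment_access_control",
    "eval_surface",
)


@dataclass
class Candidate:
    name: str
    surfaces: set[str] = field(default_factory=set)


def missing_surfaces(candidate: Candidate) -> list[str]:
    """Return the required surfaces the candidate lacks, in checklist order."""
    return [s for s in REQUIRED_SURFACES if s not in candidate.surfaces]


# Hypothetical candidate used only to show the shape of the check.
example = Candidate(
    "example-builder",
    {"canvas_versioning", "multi_step_agents", "rag_nodes"},
)

for gap in missing_surfaces(example):
    print(f"{example.name}: plan an external service for {gap}")
```

Each returned gap is a line item for an external service or custom integration, which keeps the comparison honest when a demo looks complete.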

The 7 no-code LLM builders compared

1. Dify: Best for source-available chat assistants and RAG

Source-available under the Dify Open Source License. Self-hostable. Hosted cloud option.

Use case: Teams building customer-facing chat assistants, internal Q&A, and RAG-over-documents who want a polished UI for non-engineering users plus the option to self-host the data plane. Dify ships agent workflow nodes, RAG with multiple retrieval modes, prompt management, and dataset annotation in one app.

Architecture: Self-hostable via Docker compose. The hosted Cloud runs on AWS. Workflow studio supports nodes for LLM, knowledge retrieval, code (Python or JavaScript), HTTP, and conditional logic. Multi-tenant SaaS shape with workspace and member roles.
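As a rough illustration of what a Python code node might hold, the sketch below deduplicates retrieved chunks before they reach an LLM node. It assumes the node calls a function named `main` and reads a dict of output variables; treat that contract, and the `chunks` and `max_chars` parameter names, as assumptions to check against the current Dify documentation.

```python
def main(chunks: list[str], max_chars: int = 2000) -> dict:
    """Join retrieved chunks into one context string.

    Drops empty and duplicate chunks, then caps the total length so the
    prompt stays within a predictable size.
    """
    seen: set[str] = set()
    unique: list[str] = []
    for chunk in chunks:
        text = chunk.strip()
        if text and text not in seen:
            seen.add(text)
            unique.append(text)
    context = "\n\n".join(unique)[:max_chars]
    return {"context": context, "chunk_count": len(unique)}
```

Keeping such logic in a small pure function makes it easy to test outside the canvas before pasting it into a node.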

Pricing: Dify Cloud Sandbox is free with caps. Professional is around $59/mo. Team is around $159/mo. Enterprise is custom. Verify the latest pricing page before procurement.

OSS status: Dify Open Source License: source-available, based on Apache 2.0 but with additional conditions including a multi-tenant restriction (not OSI-approved).

Best for: Teams that want a self-hostable no-code LLM platform with a polished UI for product managers and support leads, plus enough engineering depth to build agents and RAG.

Worth flagging: Dify’s source-available license blocks building a hosted multi-tenant SaaS on top of Dify without negotiation; reserve OSS framing for the truly Apache-licensed projects. Some advanced features (SSO, granular RBAC) are paid-tier. See Dify vs Flowise vs Langflow for the head-to-head.

2. Flowise: Best for LangChain-style flow building

Open source. Apache 2.0. Hosted cloud option.

Use case: Engineering teams that already think in LangChain primitives (chains, agents, tools, memory) and want a visual canvas that maps 1-to-1 to those primitives. Flowise’s node library mirrors LangChain.js and LangChain Python concepts.

Architecture: Self-hostable via Docker, npm, or as a managed Cloud workspace. Built on Node.js with a React canvas. Components include chains, agents, document loaders, vector stores, embeddings, and LLM nodes. Custom JavaScript components are first-class.

Pricing: Flowise OSS is free. Flowise Cloud pricing requires account verification; tiered governance plans are available.

OSS status: Apache 2.0 for the main codebase, with enterprise-directory and copyright-marked files under a commercial license.

Best for: Engineering teams that want a LangChain-flavored visual canvas where non-engineers can compose flows that engineers can extend with custom nodes.

Worth flagging: The visual representation is JSON, which diffs less cleanly than Python. Multi-tenant SaaS deployment is the team’s responsibility on the OSS path. The agent depth is improving but is shallower than code-based LangGraph for complex stateful workflows.
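One way to make JSON flow exports diff more cleanly is to normalize them before committing. The sketch below sorts keys and drops editor-only fields; the names in `VOLATILE_KEYS` are hypothetical placeholders, so inspect a real export to see which fields actually change between saves.

```python
import json

# Hypothetical editor-state keys; real exports may use different names.
VOLATILE_KEYS = {"selected", "dragging", "positionAbsolute", "updatedDate"}


def strip_volatile(value):
    """Recursively drop volatile keys from dicts; leave other values intact."""
    if isinstance(value, dict):
        return {
            key: strip_volatile(item)
            for key, item in value.items()
            if key not in VOLATILE_KEYS
        }
    if isinstance(value, list):
        return [strip_volatile(item) for item in value]
    return value


def canonical_flow_json(raw: str) -> str:
    """Return a stable serialization of an exported flow for version control."""
    flow = strip_volatile(json.loads(raw))
    return json.dumps(flow, indent=2, sort_keys=True, ensure_ascii=False) + "\n"
```

Running this as a pre-commit step means two exports of the same flow produce identical files, so a diff shows only real changes to nodes and parameters. List order is preserved on purpose, since node order may be meaningful.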

3. Langflow: Best for DataStax-backed enterprise integrations

Open source. MIT. DataStax cloud option.

Use case: Teams that want a visual LangChain-style builder with first-class Astra DB integration and DataStax’s enterprise support story. Langflow was acquired by DataStax in 2024, and the platform has expanded with enterprise features around vector search and observability.

Architecture: Python-based with a React canvas. Self-hostable via pip or Docker. Components mirror LangChain primitives: chains, agents, tools, vector stores, document loaders. Native Astra DB and Hugging Face integrations. Custom Python components supported.

Pricing: Langflow OSS and DataStax-hosted Langflow Cloud are free to start. DataStax Astra DB usage is billed separately if used as the backing vector store; verify Astra DB pricing for current rates.

OSS status: MIT.

Best for: Teams that already use Astra DB or want DataStax’s enterprise procurement path, plus a visual LangChain-style builder.

Worth flagging: The path of least resistance assumes Astra DB as the vector store; Pinecone or Weaviate work but need extra glue. The cloud story is tied to DataStax tenancy. Agent depth is shallower than Dify or Flowise for complex stateful workflows.

4. n8n: Best for broader automation that includes LLM steps

Source-available. Sustainable Use License. Cloud option.

Use case: Teams that want one automation platform across LLM workflows and non-AI workflows: Slack notifications, Salesforce updates, webhook handlers, ETL pipelines. n8n added AI Agent and OpenAI nodes; the LLM surface is one capability among 1000+ apps and services covered through built-in nodes, credential-only nodes, generic HTTP requests, and community nodes.

Architecture: Self-hostable via Docker. Cloud-hosted tiers managed by n8n. Workflow canvas with triggers, action nodes, and conditional logic. AI Agent node added in 2024-2025 for multi-step LLM agents.

Pricing: n8n Cloud Starter is 20€/mo billed annually (roughly $22/mo depending on exchange rate), with paid tiers for governance. Self-hosted is free under the Sustainable Use License (source-available, free for internal use, paid licensing required if hosting a commercial service on top).

OSS status: Sustainable Use License (not OSI-approved). Free for internal company use; commercial hosting requires a license.

Best for: Teams that already use or evaluate n8n for general automation and want LLM steps as part of broader workflows. Strong fit when the bottleneck is integration glue rather than agent depth.

Worth flagging: Sustainable Use License is source-available, not OSI-approved OSS. Read the license before relying on it for commercial deployment. The AI surface is newer than the broader automation surface; agent depth is shallower than Dify or Flowise.

5. Vapi: Best for production voice agents

Closed platform. Per-minute billing.

Use case: Production voice agents on phone numbers (inbound, outbound, IVR replacement) with low-latency turn-taking, telephony integrations, and per-minute pricing. Vapi handles the speech-to-text, the LLM hop, and the text-to-speech with end-to-end latency tuning.

Architecture: Hosted platform with telephony providers (Twilio, Vonage, others). Configurable STT (Deepgram, Whisper), LLM (OpenAI, Anthropic, custom endpoints), and TTS (ElevenLabs, PlayHT, Cartesia). Vapi targets sub-second voice-to-voice latency; tune end-to-end against your provider mix and benchmark in production.
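A latency budget makes the sub-second target testable against a given provider mix. The sketch below simply sums per-hop latencies and assumes the hops run sequentially with no streaming overlap; the stage names and millisecond values are placeholders for illustration, not measurements of Vapi or any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # latency you measured for this hop


def voice_to_voice_ms(stages: list[Stage]) -> float:
    """Rough end-to-end estimate: sum of sequential hop latencies."""
    return sum(stage.latency_ms for stage in stages)


def over_budget(stages: list[Stage], budget_ms: float = 1000.0) -> bool:
    """True when the estimated voice-to-voice latency exceeds the budget."""
    return voice_to_voice_ms(stages) > budget_ms


def slowest_stage(stages: list[Stage]) -> Stage:
    """The hop to tune first."""
    return max(stages, key=lambda stage: stage.latency_ms)


# Placeholder numbers only; replace with values from your own calls.
pipeline = [
    Stage("stt", 250.0),
    Stage("llm_first_token", 450.0),
    Stage("tts_first_audio", 200.0),
    Stage("telephony", 150.0),
]

if over_budget(pipeline):
    print(f"Over budget; tune {slowest_stage(pipeline).name} first")
```

Streaming pipelines overlap stages, so a sum like this tends to overstate real latency; treat it as a planning ceiling and confirm with production measurements.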

Pricing: Vapi billing is per voice minute, with model and provider costs passed through. Verify the latest pricing.

OSS status: Closed platform.

Best for: Teams shipping voice agents to production: customer support automation, outbound qualification, appointment booking, IVR replacement. Strong fit when telephony, latency, and per-minute billing matter.

Worth flagging: Closed platform with no self-host path. The eval and observability story is lighter than dedicated voice eval platforms; pair with a voice-native eval surface for span-attached call quality scores. See Best Voice AI Frameworks.

6. Voiceflow: Best for designer-led conversation flows

Closed platform. Hosted only.

Use case: Conversation design teams that want to visualize chat or voice flows, A/B test variants, and ship to multiple channels (web chat, IVR, WhatsApp, voice). Voiceflow is conversation-design-first, with an editor that resembles a flowchart more than a node-DAG.

Architecture: Hosted platform. Channel adapters for web chat, voice (via Twilio), WhatsApp, Microsoft Teams, and others. Knowledge base nodes for RAG-flavored Q&A.

Pricing: Voiceflow offers a free trial (no credit card required) and usage-based business pricing surfaced inside the dashboard or via sales contact. Verify the latest plan and add-on pricing.

OSS status: Closed platform.

Best for: Teams with conversation designers as the primary platform owner; teams running multi-channel chat and voice from one design surface.

Worth flagging: Closed platform; export options are limited. Confirm the billing unit before committing: per-seat pricing scales poorly for cross-functional teams, and business pricing is quoted through the dashboard or sales. Agent and tool-calling depth is shallower than code-based platforms; use Voiceflow when the conversation tree is the work, not the agent loop.

7. Stack AI: Best for enterprise compliance

Closed platform. Hosted, VPC, and on-prem deployment options.

Use case: Enterprise teams that need SOC 2 and HIPAA compliance, regulated industries (healthcare, finance, legal), and centralized governance over no-code AI workflows. Stack AI’s platform is built around enterprise compliance, access control, and dedicated-infrastructure delivery.

Architecture: Hosted, VPC, and on-prem deployment options with SSO, audit logs, and compliance certifications (SOC 2, HIPAA, GDPR). Visual workflow canvas with LLM, retrieval, document, and integration nodes. White-label deployment available.

Pricing: Stack AI lists a Free plan and a custom Enterprise plan with dedicated, VPC, and on-prem deployment options. Verify current pricing via the pricing page or sales contact.

OSS status: Closed platform.

Best for: Enterprise teams in regulated industries that prioritize compliance, SSO, and audit logs over OSS license control or developer ergonomics.

Worth flagging: Closed platform; OSS license is not on the table. Developer ergonomics are lighter than Dify or Flowise; the audience is the enterprise procurement function more than the engineering team.

Decision framework: pick by constraint

- OSS license control matters: Flowise (Apache 2.0 core) or Langflow (MIT); Dify if its source-available terms fit your deployment.

- LangChain-flavored building blocks: Flowise.

- Astra DB integration: Langflow.

- Broader automation including non-AI nodes: n8n.

- Production voice agents: Vapi.

- Multi-channel conversation design: Voiceflow.

- Enterprise compliance (SOC 2, HIPAA): Stack AI.

- Eval and observability are non-negotiable: Dify, Flowise, Langflow paired with FutureAGI, Phoenix, or Langfuse for span-attached eval scoring on every workflow node.

Common mistakes when picking a no-code LLM builder

- Treating no-code as zero-engineering. Production no-code workflows still need engineers for custom logic, integrations, and observability. The engineering load shifts; it does not disappear.

- Skipping version control. Visual JSON or YAML diffs are noisy. Establish a discipline (export, commit, tag releases) before the first production workflow.

- Picking on demo polish. Demos use clean prompts and idealized failures. Run a domain reproduction with real traces and real failure modes.

- Pricing only the platform fee. Real cost equals platform fee plus model token cost plus the cost of retried runs plus engineering hours to maintain custom nodes.

- Confusing chat-flow with agent-loop. A linear chat flow with conditionals is simpler than a multi-step agent with tools, retries, and state. Map your workflow shape before picking a platform.

- Ignoring eval. A workflow that does not produce scores is a research demo, not a production capability. Wire eval into the workflow before the first deploy.
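The "pricing only the platform fee" mistake is easier to avoid with the arithmetic written down. The sketch below is one possible monthly cost model under simple assumptions: retries are billed like first attempts, engineering time is costed at a flat hourly rate, and voice minutes apply only to voice workloads. All field names are our own, and any numbers you plug in should come from your own usage data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyCostInputs:
    platform_fee: float           # flat subscription, USD per month
    runs: int                     # workflow executions per month
    token_cost_per_run: float     # model spend for one attempt, USD
    retry_rate: float             # extra attempts per run, e.g. 0.10 = 10%
    engineering_hours: float      # upkeep of custom nodes and integrations
    hourly_rate: float            # loaded engineering cost, USD per hour
    voice_minutes: float = 0.0    # voice workloads only
    per_minute_rate: float = 0.0  # USD per voice minute


def monthly_cost(c: MonthlyCostInputs) -> float:
    """Platform fee + model spend including retries + engineering + voice."""
    model = c.runs * c.token_cost_per_run * (1.0 + c.retry_rate)
    engineering = c.engineering_hours * c.hourly_rate
    voice = c.voice_minutes * c.per_minute_rate
    return c.platform_fee + model + engineering + voice


# Placeholder inputs for illustration only.
example = MonthlyCostInputs(
    platform_fee=59.0,
    runs=10_000,
    token_cost_per_run=0.01,
    retry_rate=0.10,
    engineering_hours=10.0,
    hourly_rate=100.0,
)
print(round(monthly_cost(example), 2))
```

With inputs like these the subscription is a small share of the total, which is why comparing platforms on list price alone is misleading.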

What changed in no-code LLM builders as of May 2026

| Date | Event | Why it matters |
|---|---|---|
| 2025-2026 | Dify continued shipping agent workflow nodes and RAG improvements | Multi-step agents and retrieval matured in the visual canvas; verify exact release versions in changelog. |
| 2025-2026 | Flowise continued expanding LangChain.js compatibility | Component coverage moved closer to LangChain feature parity; verify exact release versions in changelog. |
| 2025-2026 | Langflow shipped 1.x release line and enterprise features under DataStax | Native traces and Astra DB integration matured; verify exact 1.x release notes. |
| 2024-2025 | n8n added AI Agent node + OpenAI integration | The automation platform crossed into multi-step agent territory. |
| 2025-2026 | Vapi expanded telephony providers and lowered turn-taking latency | Voice production deployments became more reliable; verify per-release benchmarks. |
| 2025-2026 | Voiceflow expanded knowledge-base nodes | RAG inside conversation design surface improved; verify exact release versions. |

How to actually evaluate this for production

- Pick the workflow shape first. Linear chat, multi-step agent, voice, broader automation. The shape narrows the candidate list before pricing or OSS comparisons matter.

- Run a domain reproduction. Build the same workflow in 2-3 candidates with the same prompts, the same retrievers, and the same failure cases. Hand-label outcomes.

- Measure cost honestly. Real cost equals platform fee plus model token cost plus the cost of retried runs plus eval cost. For voice, add per-minute pricing.

- Test the eval surface. Wire each candidate into FutureAGI, Phoenix, or Langfuse and verify span emission across every workflow node. A workflow you cannot debug in production is not production-ready.
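Verifying span emission across every node can be scripted once a trace is exported. The sketch below assumes a hypothetical export shape: a list of span dicts whose `attributes` carry a `workflow.node_id` key. That attribute name is an assumption, not a field defined by any of the tools named above, so map it to whatever your platform actually emits.

```python
def nodes_without_spans(node_ids: list[str], spans: list[dict]) -> list[str]:
    """Return workflow nodes that emitted no span, in workflow order."""
    traced = {
        span.get("attributes", {}).get("workflow.node_id")  # hypothetical key
        for span in spans
    }
    return [node_id for node_id in node_ids if node_id not in traced]


# Hypothetical workflow and exported trace for one test run.
workflow_nodes = ["retriever_1", "llm_1", "http_1"]
exported_spans = [
    {"name": "root"},
    {"name": "retrieve", "attributes": {"workflow.node_id": "retriever_1"}},
    {"name": "generate", "attributes": {"workflow.node_id": "llm_1"}},
]

for node_id in nodes_without_spans(workflow_nodes, exported_spans):
    print(f"no span emitted for node {node_id}")
```

Running a check like this against each candidate's export during the domain reproduction turns "verify span emission" into a pass/fail result per platform.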

Sources

- Dify GitHub repo

- Dify pricing

- Flowise GitHub repo

- Flowise pricing

- Langflow GitHub repo

- DataStax pricing

- n8n pricing

- n8n GitHub repo

- Vapi pricing

- Voiceflow pricing

- Stack AI pricing

Series cross-link

Read next: Dify vs Flowise vs Langflow, Best Voice AI Frameworks, Generative AI No-Code Platforms

