Best Voice AI Frameworks in 2026: 6 Platforms Ranked for Production

LiveKit Agents, Pipecat, Vapi, Retell, Daily Bots, and OpenAI Realtime API ranked for 2026 by latency, telephony, OSS, and production readiness.

Voice AI frameworks proliferated in 2025 and consolidated in 2026 around three patterns: OSS frameworks with bring-your-own infra (LiveKit Agents, Pipecat), managed platforms with telephony built in (Vapi, Retell, Daily Bots), and the OpenAI Realtime API for single-provider speech-to-speech. This guide ranks six commonly shortlisted frameworks for production voice agents in 2026 across latency, telephony, runtime control, observability, eval integration, and license, with honest tradeoffs for each.

TL;DR: Best voice AI framework per use case

| Use case | Best pick | Why (one phrase) | License | Stars |
|---|---|---|---|---|
| OSS framework with WebRTC plus SIP plus Inference credits | LiveKit Agents | AgentSession primitive plus mature SFU | Apache 2.0 | 10.4k |
| OSS Python pipelines without Cloud lock-in | Pipecat | Pipeline-of-FrameProcessors mental model | BSD-2-Clause | 11.9k |
| Managed voice with telephony and simulator | Vapi | API-first, BYO models, multilingual | Closed managed | n/a |
| Call-center deployment with warm transfer | Retell | Telephony plus analytics plus CRM workflow | Closed managed | n/a |
| Pipecat runtime plus Daily.co transport | Daily Bots | OSS framework plus managed transport | BSD-2-Clause runtime | n/a |
| Speech-to-speech in one provider call | OpenAI Realtime API | Lowest hop count, single provider | Closed API | n/a |

If you only read one row: pick LiveKit Agents for an OSS framework with first-class WebRTC and SIP, Pipecat for Python pipeline ergonomics, and Vapi for managed telephony out of the box. For deeper reads, see LiveKit alternatives, Pipecat alternatives, voice AI evaluation infrastructure, and implementing voice AI observability.

What changed in 2026

Three shifts shaped the voice AI landscape:

TTS first-byte tightened. Cartesia and ElevenLabs Turbo reached sub-200 ms first-byte latency, which removed TTS as the main bottleneck in voice agent loops. LLM token-generation latency is now the dominant cost in the turnaround budget, so speech-to-speech models (OpenAI Realtime), which skip the separate STT and TTS hops, gain an even bigger advantage.
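To make the budget shift concrete, here is a minimal sketch that adds up per-stage latencies for one cascaded turn and reports which stage dominates. Every number is a hypothetical placeholder rather than a measurement of any provider; only the TTS value is chosen to sit under the 200 ms mark mentioned above.

```python
# Illustrative turn budget for a cascaded STT -> LLM -> TTS loop.
# All millisecond values are hypothetical placeholders, not benchmarks.
budget_ms = {
    "network_round_trip": 60,
    "stt_final_transcript": 150,
    "llm_first_token": 350,
    "tts_first_byte": 180,  # assumed to sit under the 200 ms mark
}


def summarize(budget: dict[str, int]) -> tuple[int, str, float]:
    """Return total latency, the dominant stage, and its share of the total."""
    total = sum(budget.values())
    stage = max(budget, key=budget.get)
    return total, stage, budget[stage] / total


total, stage, share = summarize(budget_ms)
print(f"total={total} ms, dominant={stage} ({share:.0%})")
# prints: total=740 ms, dominant=llm_first_token (47%)
```

Swapping in your own measured stage timings shows quickly whether the LLM hop is really the largest term for your provider mix.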

Telephony got serious. LiveKit, Vapi, and Retell all matured native SIP support, inbound and outbound calls, and phone-number provisioning. The telephony story is no longer the differentiator it was in 2024; the differentiator is now turn-taking accuracy, barge-in handling, and call-analytics depth.

Voice eval moved upstream. Pre-prod simulation against persona libraries and span-attached evaluators became standard. Voice agents that ship without simulator coverage are now considered untested. The eval and simulation layer moved closer to the runtime, with OpenTelemetry GenAI semconv spans flowing through both.
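A minimal sketch of what "span-attached" can mean in practice: one record per pipeline stage, with evaluator scores stored as attributes on the same record instead of in a separate system. This uses plain dataclasses, not the OpenTelemetry SDK. `gen_ai.operation.name` and `gen_ai.request.model` are attribute names from the GenAI semantic conventions; the `voice.stage` and `eval.*` keys, the model name, and the helper functions are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    duration_ms: float
    attributes: dict = field(default_factory=dict)


def attach_score(span: Span, evaluator: str, score: float) -> None:
    # "eval.<name>.score" is a hypothetical key, not a standard attribute.
    span.attributes[f"eval.{evaluator}.score"] = score


def failing(spans: list[Span], threshold: float = 0.8) -> list[str]:
    """Names of spans carrying at least one eval score below the threshold."""
    return [
        s.name
        for s in spans
        if any(k.startswith("eval.") and v < threshold
               for k, v in s.attributes.items())
    ]


# One conversational turn, one span per stage (durations are made up).
turn = [
    Span("stt", 140.0, {"voice.stage": "stt"}),
    Span("llm", 360.0, {"gen_ai.operation.name": "chat",
                        "gen_ai.request.model": "example-model"}),
    Span("tts", 170.0, {"voice.stage": "tts"}),
]
attach_score(turn[1], "task_completion", 0.92)
print(failing(turn, threshold=0.95))
```

Because the score lives on the span, the same query that finds slow turns can also find low-scoring ones.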

How to rank voice AI frameworks for production

Use these dimensions, in order of importance:

- Latency story: First-response latency p50, p95, p99. STT, LLM, TTS, network, turn-taking choices all contribute.

- Turn-taking accuracy: End-of-turn detection, barge-in handling, interruption recovery. The agent that responds in 500 ms but mishandles barge-in still fails.

- Telephony support: SIP, inbound and outbound calls, phone-number provisioning, warm transfer if call-center is in scope.

- Runtime control: OSS framework versus managed cloud. Procurement and infra ops constraints push toward one or the other.

- OTel tracing compatibility: GenAI semconv spans for STT, LLM, TTS, plus eval scores attached to spans.

- Eval and simulation integration: Pre-prod persona library, regression test for known failure modes, span-attached scoring.

- License: Apache 2.0 (LiveKit Agents), BSD-2-Clause (Pipecat, including the Pipecat runtime under Daily Bots), closed (Vapi, Retell, OpenAI Realtime API).
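For the latency dimension, a small sketch of how p50, p95, and p99 could be computed from raw first-response samples using only the standard library. The function name and the sample values are hypothetical; the interpolation method is one reasonable choice, and your monitoring stack may compute percentiles differently.

```python
import statistics


def latency_percentiles(samples_ms: list[float]) -> dict[str, float]:
    """p50/p95/p99 of first-response latency, using inclusive quantiles."""
    # n=100 yields 99 cut points; index k-1 holds the k-th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


# Hypothetical samples in milliseconds, with a slow tail.
samples = [420.0, 450.0, 470.0, 480.0, 510.0, 530.0, 560.0, 640.0, 900.0, 1800.0]
print(latency_percentiles(samples))
```

Reporting all three values matters because a healthy p50 can hide a tail that callers experience as dead air.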

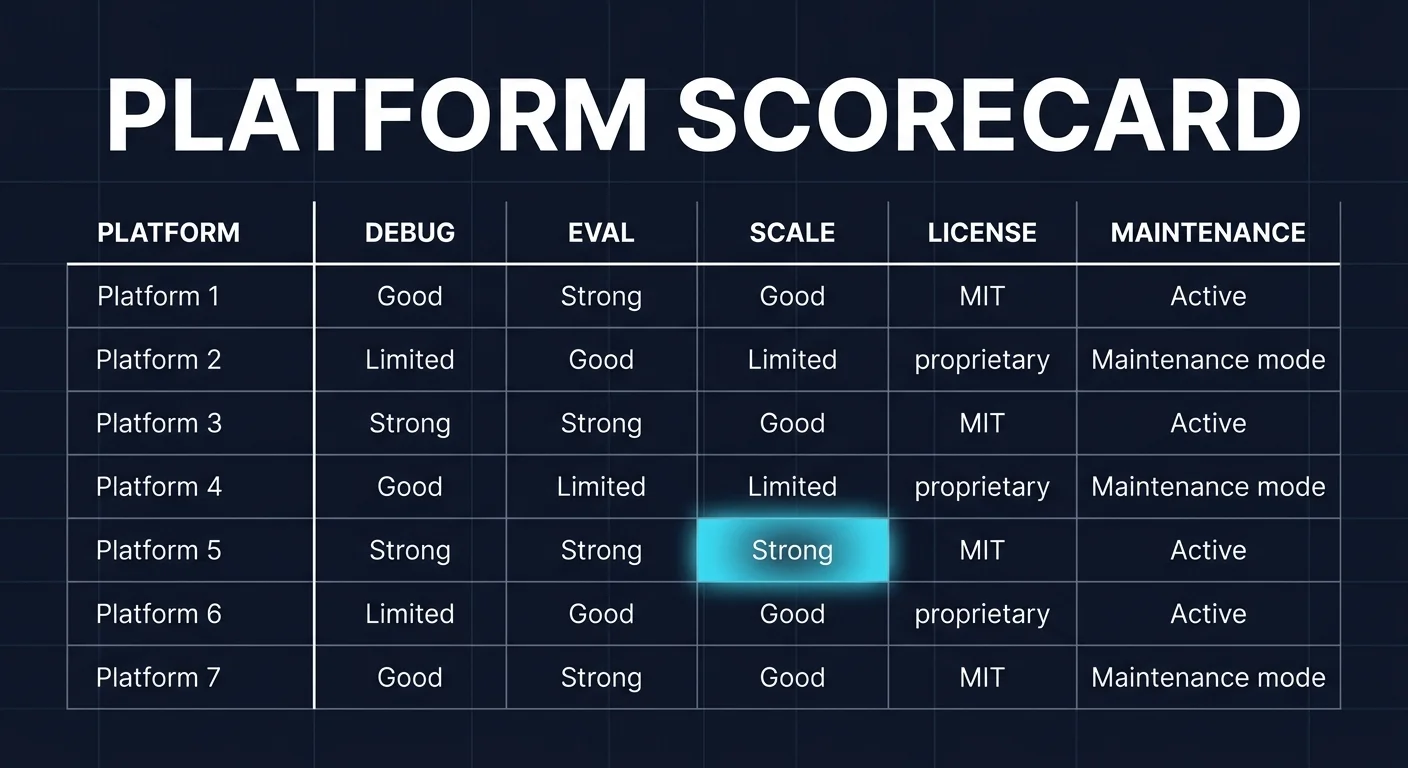

The 6 frameworks ranked

1. LiveKit Agents: Best OSS framework with WebRTC plus SIP

Apache 2.0. Python and TypeScript. 10.4k stars. Latest 1.5.8, May 2026.

LiveKit Agents is the most complete OSS voice framework in this list when telephony, multi-region, and Inference credits matter. The AgentSession primitive orchestrates STT, LLM, TTS, VAD, and turn detection. Native SIP telephony, end-of-turn detection, interruption handling, and noise cancellation are built in. LiveKit Inference credits cover STT, LLM, and TTS providers routed through the LiveKit gateway, which keeps per-call cost predictable.

The SFU is mature and used outside agents for general WebRTC. Self-hosting is realistic but involves running TURN, SFU, telephony peers, and inference paths yourself. LiveKit Cloud Build is free with 1,000 agent-session minutes per month.

Strengths: AgentSession primitive, native SIP, mature SFU, Inference credits, multi-region deployment.

Weaknesses: LiveKit Cloud is the implicit hosted runtime; pure self-hosting raises ops cost; AgentsJS is less mature than the Python core.

2. Pipecat: Best OSS Python pipelines

BSD-2-Clause. Python. 11.9k stars. Latest v1.1.0, April 2026.

Pipecat is a Python framework for real-time voice and multimodal conversational AI. The pipeline-of-FrameProcessors mental model is the cleanest abstraction in voice AI for engineers who think in Unix-style pipes. Transports include Daily.co WebRTC, Twilio Media Streams, FastAPI WebSocket, and Pipecat’s own server transport. Service integrations cover Deepgram, AssemblyAI, OpenAI, Anthropic, Google, Cartesia, ElevenLabs, OpenAI Realtime, and many others.
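The pipeline mental model can be illustrated without the library. The sketch below chains generator functions so that each stage consumes frames and emits frames, in the spirit of Unix pipes. It is not Pipecat's API: `Frame`, the `fake_*` stages, and `run_pipeline` are hypothetical names, and the stages only transform strings.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Frame:
    kind: str     # e.g. "audio", "text", "speech", "control"
    payload: str


Processor = Callable[[Iterable[Frame]], Iterator[Frame]]


def fake_stt(frames: Iterable[Frame]) -> Iterator[Frame]:
    for f in frames:
        # Transform audio frames; pass everything else through untouched.
        yield Frame("text", f"transcript({f.payload})") if f.kind == "audio" else f


def fake_llm(frames: Iterable[Frame]) -> Iterator[Frame]:
    for f in frames:
        yield Frame("reply", f"reply-to[{f.payload}]") if f.kind == "text" else f


def fake_tts(frames: Iterable[Frame]) -> Iterator[Frame]:
    for f in frames:
        yield Frame("speech", f"audio<{f.payload}>") if f.kind == "reply" else f


def run_pipeline(processors: list[Processor], source: Iterable[Frame]) -> list[Frame]:
    stream: Iterable[Frame] = iter(source)
    for processor in processors:
        stream = processor(stream)  # each stage wraps the previous one
    return list(stream)


out = run_pipeline(
    [fake_stt, fake_llm, fake_tts],
    [Frame("audio", "hello"), Frame("control", "interrupt")],
)
print(out)
```

The point of the design is that stages ignore frame kinds they do not handle, so a new processor can be inserted without changing its neighbours.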

Strengths: clean pipeline mental model, transport flexibility, broad service catalog, BSD-2-Clause license.

Weaknesses: telephony, IVR, and warm transfer are integrations you wire yourself; the JS port is less mature than the Python core; no first-class persistence story.

3. Vapi: Best managed voice with telephony and simulator

Closed managed. Hosted cloud.

Vapi is API-first and advertises thousands of configurations. The platform supports 100+ languages, tool calling against your APIs, automated testing through simulated conversations, BYO models with custom API keys, A/B experimentation for prompt and voice variations, and SOC 2, HIPAA, and PCI compliance. Telephony covers inbound and outbound calls. Vapi's published stats list 300M+ calls processed and 500K+ developers.

Strengths: managed telephony, simulator, BYO models, multilingual, compliance posture.

Weaknesses: closed source; observability is its own format; eval pipeline depth depends on Vapi-specific tooling.

4. Retell: Best for call-center deployment

Closed managed. Hosted cloud.

Retell is the right framework when the use case is enterprise call center, with telephony, warm transfer to human agents, post-call analytics, and structured call review. Tools and functions integrate with your APIs. The runtime supports popular STT, TTS, and LLM providers behind one billing relationship.

Strengths: call-center workflow fit, warm transfer, analytics, CRM integrations.

Weaknesses: closed source; opinionated toward telephony; less suitable for in-app voice or chat-style products.

5. Daily Bots: Best Pipecat runtime plus Daily.co transport

Pipecat under BSD-2-Clause. Daily.co-hosted control plane.

Daily Bots runs Pipecat as the agent runtime with Daily.co’s WebRTC transport. The hosted control plane handles deployment, session orchestration, observability, and scaling. STT, LLM, and TTS services are BYOK or routed through Daily.co’s managed integrations. Telephony integrations connect through SIP providers like Twilio.

Strengths: OSS framework with managed infrastructure, predictable per-minute pricing, Daily.co engineering ownership.

Weaknesses: Daily.co transport coupling; non-Daily WebRTC requires more wiring; no managed simulator.

6. OpenAI Realtime API: Best speech-to-speech

Closed API. Hosted only.

The OpenAI Realtime API collapses STT, LLM, and TTS into a single provider call. The model handles VAD, turn detection, interruption, and tool calls inside one session. SDKs cover Python and JavaScript. Pricing is per-minute of audio input and output plus per-token for context.

Strengths: lowest hop count, integrated turn handling, function calling, simple integration.

Weaknesses: OpenAI-only; no BYOK across providers; no custom voice cloning; no framework-level control.

Decision framework

- Choose LiveKit Agents if WebRTC plus SIP plus AgentSession plus Inference credits matter. Buying signal: telephony in scope, multi-region required.

- Choose Pipecat if Python pipelines and OSS framework control are non-negotiable. Buying signal: multi-provider STT or TTS, FastAPI services.

- Choose Vapi if managed telephony plus simulator out of the box matters more than framework control. Buying signal: small to mid team, voice agent as a product.

- Choose Retell for call-center deployment. Buying signal: warm transfer, supervisors, CRM workflows.

- Choose Daily Bots if Pipecat plus Daily.co plus hosted control fits. Buying signal: existing Daily.co usage.

- Choose OpenAI Realtime API for the lowest hop count. Buying signal: latency dominates, single-provider lock-in is acceptable.

Common mistakes when picking a voice AI framework

- Treating “real-time” as the only metric. Latency is necessary but not sufficient. A voice agent that responds in 500 ms but mishandles barge-in still fails.

- Skipping simulation. Voice agents fail under accent drift, network jitter, partial STT outputs, and barge-in. A pre-prod simulator that replays real call transcripts and edits in failure modes catches more than human QA.

- Picking by integration logos. Verify your specific STT, TTS, and LLM combination. Provider rate limits, codec support, streaming-versus-batch differences, and timeout defaults change behavior between vendors.

- Ignoring observability format. If your framework emits non-OTel format, your downstream eval and incident review tools must adapt or stay separate. OTel GenAI semconv compatibility matters for cross-team analytics.

- Pricing only the platform fee. Real cost equals platform fee plus STT minutes plus TTS characters plus LLM tokens plus telephony minutes plus eval token spend plus storage retention.
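The cost sum in the last item can be written down as a small model. This is a sketch under stated assumptions: the field names, the per-1k billing units, and every price and volume below are hypothetical placeholders, not any vendor's rate card.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    platform_fee: float        # flat fee for the month
    stt_minutes: float
    tts_characters: float
    llm_tokens: float
    telephony_minutes: float
    eval_tokens: float
    storage_gb: float


@dataclass
class UnitPrices:
    stt_per_minute: float
    tts_per_1k_chars: float
    llm_per_1k_tokens: float
    telephony_per_minute: float
    eval_per_1k_tokens: float
    storage_per_gb: float


def monthly_cost(u: MonthlyUsage, p: UnitPrices) -> float:
    """Platform fee plus every usage-based term named in the text."""
    return (
        u.platform_fee
        + u.stt_minutes * p.stt_per_minute
        + u.tts_characters / 1000 * p.tts_per_1k_chars
        + u.llm_tokens / 1000 * p.llm_per_1k_tokens
        + u.telephony_minutes * p.telephony_per_minute
        + u.eval_tokens / 1000 * p.eval_per_1k_tokens
        + u.storage_gb * p.storage_per_gb
    )


# Hypothetical volumes and prices, for illustration only.
usage = MonthlyUsage(100.0, 1000, 2_000_000, 5_000_000, 1000, 1_000_000, 10)
prices = UnitPrices(0.01, 0.02, 0.001, 0.01, 0.001, 0.5)
print(round(monthly_cost(usage, prices), 2))
```

Running the same usage profile against each candidate's real prices makes the comparison about total spend, not the headline platform fee.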

What changed in 2026 for voice AI

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | LiveKit Agents 1.5.8 shipped | Latest minor release iterated on noise cancellation and end-of-turn detection. |
| Apr 2026 | Pipecat 1.1.0 released | Pipeline framework continued cadence on transports, services, and FrameProcessor primitives. |
| Mar 2026 | Cartesia and ElevenLabs Turbo TTS gained sub-200 ms first-byte | Turn-around budget tightened across all voice frameworks. |
| Feb 2026 | Vapi expanded to 100+ languages | Multilingual voice agents became practical without per-language model swaps. |
| Jan 2026 | OpenAI Realtime API hardened for production | Speech-to-speech became a credible production path. |
| Jan 2026 | Daily Bots positioned Pipecat as the runtime | Pipecat plus Daily Cloud became a clean managed alternative to LiveKit Cloud. |

How to evaluate voice AI flows

- Run a domain reproduction. Export a representative slice of real call transcripts including barge-in events, accent drift, retrieval misses, and tool-call failures. Replay through each candidate with your STT, LLM, and TTS provider mix.

- Measure reliability under load. Build a Reliability Decay Curve: x-axis is concurrent calls, y-axis is first-response latency p50, p95, p99, dropped sessions, dropped TTS frames, retry count, and tool-call failure rate. Track end-of-turn detection accuracy and barge-in handling under each scenario.

- Cost-adjust against your real shape. Real cost equals platform fee plus STT minutes plus TTS characters plus LLM tokens plus telephony minutes plus eval token spend plus storage retention plus on-call labor.
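The Reliability Decay Curve from the second step can be assembled from raw load-test records. The sketch below groups sessions by concurrency level and computes two of the y-axis series, p95 first-response latency and dropped-session rate. The record shape and the function name are assumptions for illustration; the other metrics named above would be added as further keys in the same way.

```python
import statistics
from collections import defaultdict


def decay_curve(records):
    """records: iterable of (concurrent_calls, first_response_ms, dropped)."""
    by_level = defaultdict(list)
    for level, latency_ms, dropped in records:
        by_level[level].append((latency_ms, dropped))

    curve = []
    for level in sorted(by_level):
        rows = by_level[level]
        # Latency is only meaningful for sessions that were not dropped.
        latencies = [ms for ms, dropped in rows if not dropped]
        p95 = (
            statistics.quantiles(latencies, n=100, method="inclusive")[94]
            if len(latencies) >= 2
            else None  # too few samples to estimate a percentile
        )
        drop_rate = sum(1 for _, dropped in rows if dropped) / len(rows)
        curve.append(
            {"concurrent_calls": level, "p95_ms": p95, "drop_rate": drop_rate}
        )
    return curve


# Hypothetical load-test records at two concurrency levels.
records = [
    (10, 400.0, False),
    (10, 500.0, False),
    (50, 900.0, False),
    (50, 0.0, True),
]
curve = decay_curve(records)
print(curve)
```

Plotting each key against `concurrent_calls` shows where a candidate starts to degrade, which is usually more informative than a single latency figure at low load.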
