Agent Playground Node-to-Node and Speed Improvements

Chain prompts visually with input/output port mapping between nodes and experience a 4x faster frontend across the platform.

What's in this digest

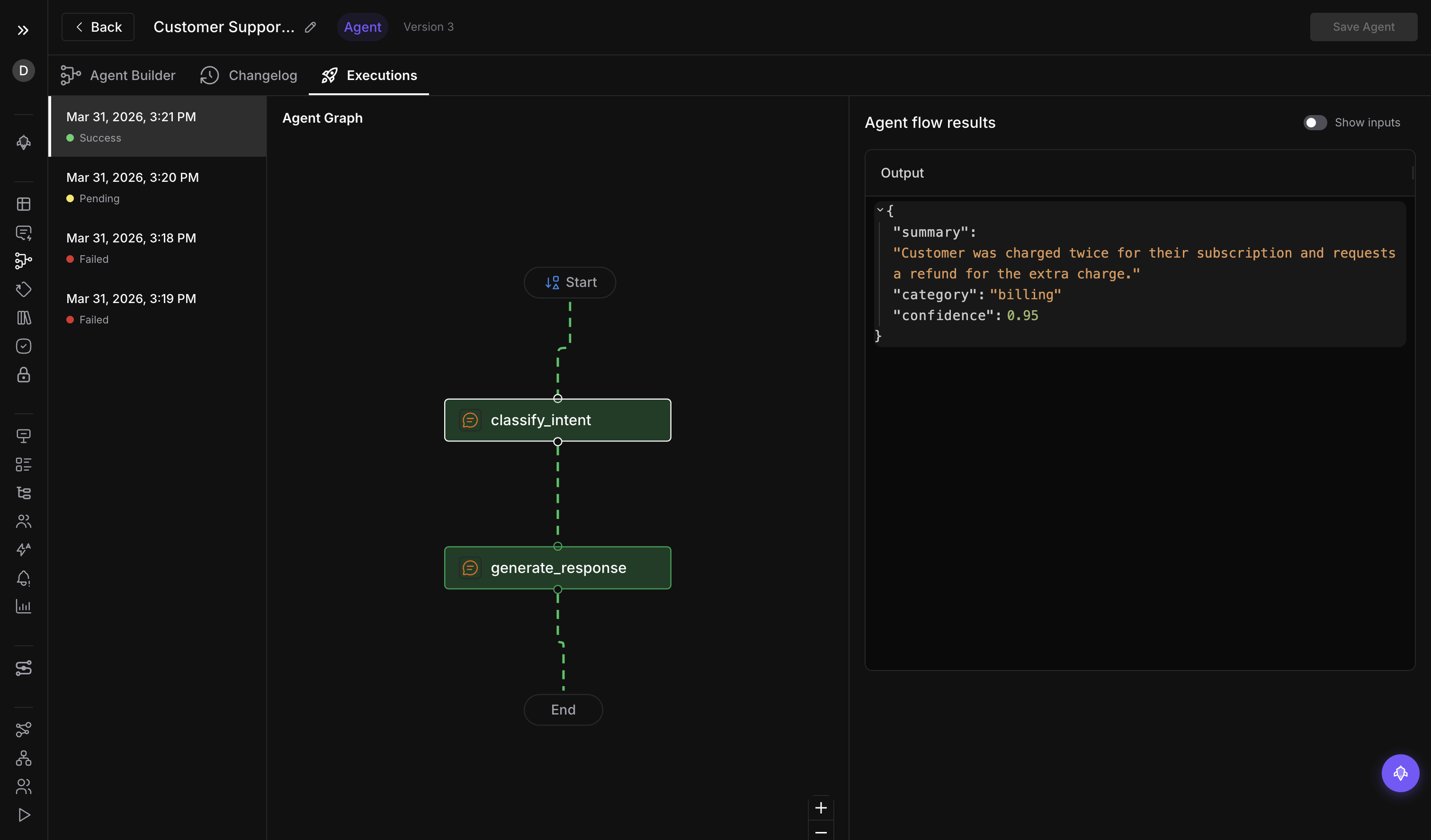

Node-to-Node Connections — Agents That Actually Chain

The Agent Playground launched two months ago with the ability to place nodes on a canvas and configure them individually. What was missing was the connective tissue: the ability to map the output of one node directly into the input of another. That changes today.

Node-to-node connections introduce input/output port mapping between nodes. Each node exposes typed ports — text output, structured data, tool results, image content — and you draw connections between compatible ports to define data flow. The output of a retrieval node feeds into the context port of a generation node. The generation output routes to an evaluation node that scores it. A conditional node inspects the score and decides whether to retry with different parameters or pass the result downstream.

The graph editor validates connections as you draw them, rejecting type mismatches and circular dependencies before they reach a run. Port labels update in real time to show what data is flowing through each connection. When you run the graph, execution traces highlight the active path, so you can see exactly how data moved through your agent.
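To make the two validation rules concrete, here is a minimal sketch of connect-time checking. It is not the Agent Playground's implementation: the `Port` and `Graph` classes, the string-based port types, and the exact-match compatibility rule are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Port:
    node: str   # id of the node that owns the port (hypothetical)
    name: str   # port name, e.g. "context"
    dtype: str  # port type, e.g. "text" or "structured"


@dataclass
class Graph:
    # Adjacency list: source node id -> set of downstream node ids.
    edges: dict = field(default_factory=dict)

    def _reaches(self, start: str, target: str) -> bool:
        """Depth-first search: can `target` be reached from `start`?"""
        stack, seen = [start], set()
        while stack:
            node = stack.pop()
            if node == target:
                return True
            if node in seen:
                continue
            seen.add(node)
            stack.extend(self.edges.get(node, ()))
        return False

    def connect(self, src: Port, dst: Port) -> None:
        """Add an edge src -> dst, rejecting invalid connections."""
        # Assumption: ports are compatible only when their types match exactly.
        if src.dtype != dst.dtype:
            raise ValueError(f"type mismatch: {src.dtype} -> {dst.dtype}")
        # The new edge closes a cycle if src is already reachable from dst.
        if self._reaches(dst.node, src.node):
            raise ValueError(f"cycle: {dst.node} already leads to {src.node}")
        self.edges.setdefault(src.node, set()).add(dst.node)
```

Checking reachability before inserting the edge keeps the graph acyclic at every step, which is what lets an editor refuse a connection while it is still being drawn rather than failing at run time.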

Combined with the version management system — which now supports creating, browsing, comparing, and restoring named versions — teams can iterate on agent architectures with the confidence that every working configuration is preserved and recoverable.

4x Faster Frontend

Four separate optimization PRs landed in this release, collectively delivering a 4x improvement in page load performance across the platform. These are not cosmetic changes. The work includes route-level code splitting so that each page loads only the JavaScript it uses, aggressive data prefetching that starts API calls before navigation completes, virtualized rendering for large lists and tables, and optimized state management that reduces unnecessary re-renders.
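Of these techniques, virtualized rendering is the easiest to show as arithmetic: only the rows inside the viewport, plus a small buffer, are rendered. The sketch below illustrates that calculation under assumed conditions (fixed row height, integer pixel values); the function name and the `overscan` parameter are hypothetical and not taken from the platform's frontend code.

```python
def visible_range(scroll_top, viewport_height, row_height, total_rows, overscan=3):
    """Return the half-open [start, end) range of row indices to render.

    All pixel arguments are assumed to be non-negative integers, and every
    row is assumed to have the same height.
    """
    # Index of the first row that is at least partly visible.
    first = scroll_top // row_height
    # Ceiling division: one past the last row that is at least partly visible.
    last = -(-(scroll_top + viewport_height) // row_height)
    # Render a few extra rows on each side so fast scrolling shows no gaps.
    start = max(0, first - overscan)
    end = min(total_rows, last + overscan)
    return start, end
```

With a 600-pixel viewport and 30-pixel rows, a 10,000-row table renders roughly 26 rows at a time regardless of its total length, which is where the savings on large trace and evaluation tables would come from.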

The impact is most noticeable on the pages teams visit most: trace views, evaluation dashboards, simulation results, and the Agent Playground itself. Pages that previously took two to three seconds to become interactive now respond in under a second. For teams that spend hours each day inside Future AGI, this is the kind of improvement that compounds into meaningful productivity gains.

Annotation Queue UX Overhaul

The annotation queue gets a significant experience upgrade. Multi-assignment lets you assign multiple reviewers to the same items, enabling consensus-based review workflows where agreement between reviewers produces the final label. Prefetching loads the next several items in the background while a reviewer works on the current one, eliminating the loading delay between reviews that previously broke concentration.
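The digest does not say how reviewer agreement is turned into a final label. One common rule is a strict majority vote, sketched below as an assumption; the function name and the `min_agreement` threshold are hypothetical.

```python
from collections import Counter


def consensus_label(labels, min_agreement=0.5):
    """Return the label chosen by more than `min_agreement` of reviewers.

    Returns None when there are no labels or when no label clears the
    threshold, which signals that the item needs another review.
    """
    if not labels:
        return None
    top_label, count = Counter(labels).most_common(1)[0]
    # Strictly greater than the threshold, so a 50/50 split is not consensus.
    if count / len(labels) > min_agreement:
        return top_label
    return None
```

Returning `None` instead of picking a winner on a tie keeps disputed items visible, so they can be routed to an additional reviewer.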

These improvements reduce the friction that made human annotation feel tedious compared to automated evaluation. When annotation is fast and fluid, teams actually do it — and their evaluation pipelines improve as a result.

Voice Observe-to-Simulation Bridge

Production voice calls captured through Observe can now feed directly into Simulation. Select a call, extract its scenario — the conversation flow, the user intent, the edge cases that emerged naturally — and generate a simulation test case from it. This closes the loop between production monitoring and testing, ensuring that every interesting production interaction becomes a repeatable test.

For voice agent teams, this means test coverage that evolves organically with real usage patterns rather than relying solely on scenarios imagined during development.

Infrastructure and Reliability

The ClickHouse trace storage introduced two releases ago now runs on the ReplicatedMergeTree table engine, adding automatic replication and failover to the performance gains already delivered. If a storage node goes down, queries continue against replicas without interruption. This is the high-availability guarantee that production-critical observability requires.

Granular CRUD APIs for the Agent Playground enable programmatic management of agent graphs. Create nodes, define connections, configure ports, and manage edge mappings through the API — useful for teams that generate agent architectures programmatically or maintain agent configurations in version control.
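For teams keeping agent configurations in version control, a stable serialization makes diffs meaningful. The sketch below shows one way to do that; the field names (`id`, `type`, `from`, `to`) are hypothetical and do not reflect the actual Agent Playground API schema.

```python
import json


def graph_to_json(nodes, connections):
    """Serialize a graph description to stable, diff-friendly JSON.

    `nodes` is a list of dicts with an "id" key; `connections` is a list of
    dicts with "from" and "to" keys. Both shapes are assumptions.
    """
    spec = {
        # Sort entries so the output does not depend on input order.
        "nodes": sorted(nodes, key=lambda n: n["id"]),
        "connections": sorted(connections, key=lambda c: (c["from"], c["to"])),
    }
    # sort_keys gives a stable key order inside every object.
    return json.dumps(spec, indent=2, sort_keys=True)
```

Because the output is independent of the order in which nodes and connections were created, two exports of the same graph produce identical files, and a diff shows only real changes.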

Prompt generation and improvement round out the release. Describe a task in natural language and the platform generates a starting prompt. Already have a prompt? One-click improvement suggestions analyze your prompt and propose specific changes to improve clarity, reduce ambiguity, and better constrain model behavior.