LiveKit Alternatives in 2026: 5 Voice AI Frameworks Compared

Pipecat, Vapi, Retell, Daily Bots, and FutureAGI as LiveKit Agents alternatives in 2026. Pricing, OSS license, latency, and real tradeoffs.


You are probably here because LiveKit Agents already runs your voice agent, and now your team is questioning the framework choice. You may want OSS without LiveKit Cloud lock-in, a managed platform where telephony is one click, deeper call analytics, simulator-driven pre-prod testing, or a way to score every voice trace with the same evaluator that judges production. This guide compares the five alternatives engineering teams actually evaluate against LiveKit in 2026, with honest tradeoffs for each.

TL;DR: Best LiveKit alternative per use case

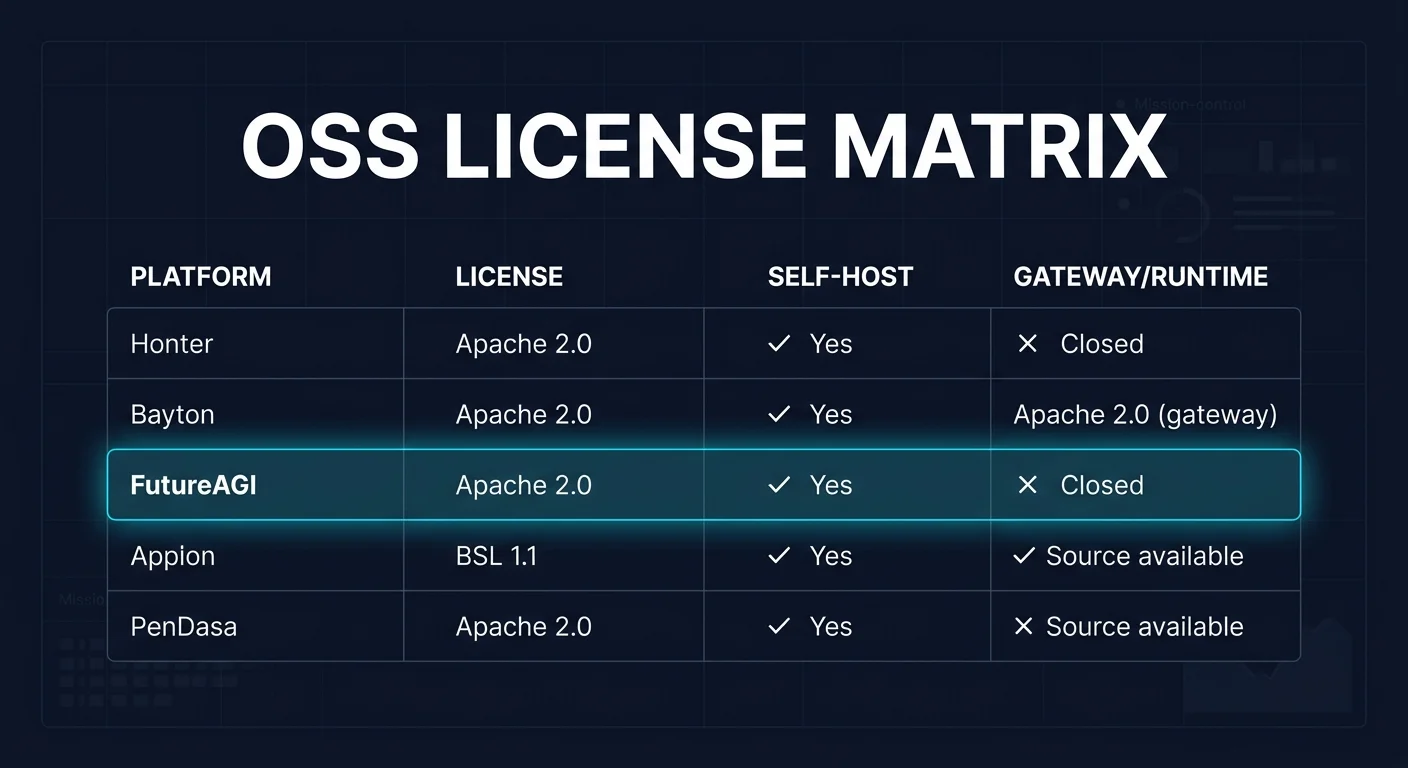

| Use case | Best pick | Why | Pricing | License |
|---|---|---|---|---|
| OSS Python framework, no Cloud lock-in | Pipecat | BSD-2 voice and multimodal pipelines | Free OSS, infra is yours | BSD-2-Clause |
| Managed voice platform with telephony and simulator | Vapi | API-first, BYO models, multilingual | Per-minute, scale-tiered | Closed source |
| Call-center deployment with analytics | Retell | Telephony plus warm transfer plus analytics | Per-minute, model-tiered | Closed source |
| WebRTC voice on Pipecat with hosted control | Daily Bots | Daily.co transport plus Pipecat runtime | Daily.co usage-based | Pipecat BSD-2 |
| Voice eval, simulation, and observability | FutureAGI | Eval and simulation on top of any runtime | Free self-hosted (OSS), hosted from $0 + usage | Apache 2.0 |

If you only read one row: pick Pipecat for OSS framework control, Vapi when telephony plus simulator out of the box matters, and FutureAGI when voice eval and simulation must run alongside whatever runtime the team already chose. For deeper reading, see the voice AI evaluation infrastructure guide and the voice AI observability guide.

Who LiveKit is and where it falls short

LiveKit ships an open-source WebRTC SFU plus a real-time voice and video framework called Agents. The LiveKit Agents repo is Apache 2.0, Python-first (10.4k stars, latest 1.5.8 in May 2026), with a separate AgentsJS for TypeScript. The framework’s AgentSession primitive orchestrates speech-to-text, language model, text-to-speech, voice activity detection, turn detection, and room connection in one place. Native SIP telephony, end-of-turn detection, interruption handling, and noise cancellation are built in.
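
Turn detection is the least visible of those pieces. The simplest baseline is a silence timeout over voice-activity frames. The sketch below is a plain-Python toy that assumes 20 ms VAD frames and a 500 ms timeout, both of which are illustrative values. It is not LiveKit's API or its end-of-turn implementation. It only shows what that component has to decide.

```python
from dataclasses import dataclass, field

FRAME_MS = 20  # assumed VAD frame length in milliseconds (illustrative)


@dataclass
class SilenceEndpointer:
    """Toy end-of-turn detector: a turn ends once enough silence follows speech."""

    silence_timeout_ms: int = 500  # hypothetical threshold; tune per use case
    _heard_speech: bool = field(default=False, init=False)
    _silence_ms: int = field(default=0, init=False)

    def push(self, is_speech: bool) -> bool:
        """Feed one VAD frame; return True on the frame where the turn is judged over."""
        if is_speech:
            self._heard_speech = True
            self._silence_ms = 0  # any speech resets the silence counter
            return False
        if not self._heard_speech:
            return False  # leading silence cannot end a turn that never started
        self._silence_ms += FRAME_MS
        if self._silence_ms >= self.silence_timeout_ms:
            self._heard_speech = False  # reset state for the next user turn
            self._silence_ms = 0
            return True
        return False


def end_of_turn_frames(vad_frames, silence_timeout_ms=500):
    """Return the indices of frames where an end of turn would be emitted."""
    ep = SilenceEndpointer(silence_timeout_ms=silence_timeout_ms)
    return [i for i, is_speech in enumerate(vad_frames) if ep.push(is_speech)]
```

For example, `end_of_turn_frames([False] * 10 + [True] * 5 + [False] * 30)` fires once, 25 silent frames after speech stops. The fixed timeout is the weakness. A user who pauses mid-sentence gets cut off, and a longer timeout adds dead air to every turn. That tradeoff is why frameworks invest in smarter end-of-turn detection.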

Pricing is split between the OSS framework and LiveKit Cloud. The framework is free. LiveKit Cloud’s Build plan is free with 1,000 agent-session minutes per month, LiveKit Inference credits, and one free US local phone number for inbound calling. Higher tiers add more session minutes, multi-region deployment, and enterprise SLAs. Inference credits cover STT, LLM, and TTS providers routed through the LiveKit gateway, which is a useful billing model when you do not want to hold separate Deepgram, ElevenLabs, and OpenAI accounts.

To be fair, LiveKit does a lot well. The SFU is mature and is used outside agents for general WebRTC. AgentSession is the most integrated abstraction in this comparison because it bundles STT, LLM, TTS, VAD, turn detection, and room connection in one primitive. Inference credits make per-call cost predictable. SIP and phone-number provisioning are first-class. The framework is Apache 2.0 and self-hostable end-to-end if you want to operate the SFU yourself. Multimodal support (video and physical AI) takes the runtime beyond voice-only agents, which is uncommon among the voice frameworks compared here.

Where teams start looking elsewhere is less about LiveKit being weak and more about constraints. You may not want LiveKit Cloud as a runtime dependency. You may want telephony and a simulator out of the box without operating WebRTC. You may need call-center primitives like warm transfer, IVR, and post-call analytics that LiveKit leaves to you. You may want voice eval, simulation, and groundedness scoring as part of the loop, not an external tool. You may want the WebRTC transport but a different agent framework. Each of those is a real reason to compare alternatives.

The 5 LiveKit alternatives compared

1. Pipecat: Best OSS Python voice framework

BSD-2-Clause. Self-hostable. Daily.co-supported.

Pipecat is the right alternative when the team wants an open-source Python framework for real-time voice and multimodal pipelines without LiveKit Cloud as a runtime dependency. The pitch is direct: orchestrate audio and video, AI services, transports, and conversation pipelines effortlessly with primitives that read like a Unix pipe.

Architecture: Pipecat is a BSD-2-Clause Python framework (11.9k stars, latest v1.1.0 April 2026) supported by the Daily.co engineering team. Pipelines compose FrameProcessor nodes that pass audio, video, and text frames between transports, STT services, LLMs, TTS services, and custom processors. Transports include Daily.co WebRTC, Twilio Media Streams, FastAPI WebSocket, and Pipecat’s own server transport. Service integrations cover Deepgram, AssemblyAI, OpenAI, Anthropic, Google, Cartesia, ElevenLabs, OpenAI Realtime, Azure, Groq, Together, AWS, and many others. Pipecat Cloud is a managed runtime for production deployments at predictable per-minute pricing.
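
The FrameProcessor idea is easiest to see in miniature. The sketch below is not Pipecat's API. Pipecat's real pipelines are asynchronous and built from its own `Pipeline` and `FrameProcessor` classes. This is a synchronous, plain-Python toy with made-up `Frame` types and hypothetical stand-in STT, LLM, and TTS stages. It only shows how frames flow through composable stages.

```python
from collections.abc import Callable, Iterable, Iterator
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    kind: str     # hypothetical frame types: "audio" or "text"
    payload: str


# A processor consumes a stream of frames and yields a stream of frames.
Processor = Callable[[Iterable[Frame]], Iterator[Frame]]


def fake_stt(frames: Iterable[Frame]) -> Iterator[Frame]:
    """Stand-in STT: turns audio frames into text frames, passes others through."""
    for f in frames:
        if f.kind == "audio":
            yield Frame("text", f.payload.upper())  # pretend transcription
        else:
            yield f


def fake_llm(frames: Iterable[Frame]) -> Iterator[Frame]:
    """Stand-in LLM: answers each text frame with a text reply."""
    for f in frames:
        if f.kind == "text":
            yield Frame("text", f"reply to: {f.payload}")
        else:
            yield f


def fake_tts(frames: Iterable[Frame]) -> Iterator[Frame]:
    """Stand-in TTS: turns text frames into audio frames."""
    for f in frames:
        if f.kind == "text":
            yield Frame("audio", f"<speech:{f.payload}>")
        else:
            yield f


def pipeline(*stages: Processor) -> Processor:
    """Chain stages left to right, like a Unix pipe."""
    def run(frames: Iterable[Frame]) -> Iterator[Frame]:
        stream: Iterator[Frame] = iter(frames)
        for stage in stages:
            stream = stage(stream)
        return stream
    return run


voice_bot = pipeline(fake_stt, fake_llm, fake_tts)
print(list(voice_bot([Frame("audio", "hi")])))
# prints [Frame(kind='audio', payload='<speech:reply to: HI>')]
```

Each stage only sees frames. Replacing `fake_stt` with a different transcription stage therefore changes one argument to `pipeline(...)` and nothing else. That is the provider-swap property teams pick Pipecat for.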

Pricing: Pipecat the framework is free OSS. Pipecat Cloud (managed runtime) and Daily Bots are billed per session minute under Daily.co platform pricing. Self-hosting is free at the framework level; the infrastructure cost is yours.

Best for: Pick Pipecat when Python is the language and the team wants the framework to be theirs. Buying signal: a multi-provider STT or TTS strategy, BYOK provider keys, and a desire to swap services without changing pipeline code. Pairs well with FastAPI services, custom processors, Daily.co transport, and Twilio Media Streams.

Skip if: Skip Pipecat if you want telephony, phone-number provisioning, IVR, warm transfer, and analytics as turnkey features. The framework gives you primitives; the call-center features are integrations you wire. Also skip it if your team is JavaScript or TypeScript first; the JS port is less mature than LiveKit AgentsJS.

2. Vapi: Best managed voice platform with telephony and simulator

Closed source. Managed cloud.

Vapi is the right alternative when you want a managed voice AI platform that handles telephony, simulator-based testing, observability, and BYO-model orchestration in one product. The pitch is that you ship a voice agent without operating WebRTC, SFU, or telephony peers.

Architecture: Vapi is API-first with thousands of configurations and integrations. The platform supports 100+ languages, tool calling against your APIs, automated testing through simulated conversations to identify hallucination risks, BYO models with custom API keys or self-hosted models, A/B experimentation for prompt and voice variations, and SOC 2, HIPAA, and PCI compliance. Telephony covers inbound and outbound calls with phone-number provisioning. The platform stats page lists 300M+ calls processed and 500K+ developers.

Pricing: Vapi pricing is per-minute on a scale-tiered model. Tiers include a free trial and paid plans for production usage. Confirm the current rates and minute-bucket pricing on the Vapi pricing page; voice platforms tune pricing frequently.

Best for: Pick Vapi when telephony plus simulator plus tool calling out of the box matters more than framework-level control. Buying signal: small to mid-sized team, voice agent as a product, no desire to run WebRTC infra. Pairs with: BYO models, third-party API tools, multilingual deployment.

Skip if: Skip Vapi if your team needs full source-level control of the runtime or if your enterprise procurement requires OSI open source for the data path. Skip it also if your eval pipeline needs span-attached scores and OTel GenAI semconv compatibility; Vapi’s observability is its own format. Verify the simulator depth against your real conversation patterns before relying on it as your only pre-prod gate.

3. Retell: Best for call-center deployment with analytics

Closed source. Managed cloud.

Retell is the right alternative when the use case is enterprise call center, with telephony, warm transfer to human agents, analytics, and call review. The pitch is voice agents that fit existing call-center operations, including supervisors, queues, and post-call review.

Architecture: Retell exposes a managed voice AI platform with native telephony, inbound and outbound calls, warm transfer to human agents, post-call analytics, and structured call review. Tools and functions integrate with your APIs. The runtime supports popular STT, TTS, and LLM providers behind one billing relationship. Voice latency is competitive when paired with fast STT and TTS providers.

Pricing: Retell pricing is per-minute with model-tiered rates. Telephony costs are billed separately. Confirm the current rate card before signing.

Best for: Pick Retell when the buying signal is call-center deployment with supervisors, queues, and warm transfer. It pairs well with Salesforce, Zendesk, HubSpot, and other CRM workflows that drive call routing.

Skip if: Skip Retell if your team is shipping a chat-style voice product or a non-telephony use case (in-app voice assistant, kiosk, embedded device). The product is opinionated toward telephony. Also skip it if you need OSS framework control. As with Vapi, the runtime is closed and eval and observability data come in Retell's own format.

4. Daily Bots: Best for WebRTC voice on Pipecat with hosted control

Pipecat runtime under BSD-2. Daily.co-hosted control plane.

Daily Bots is the right alternative when you want Pipecat as the runtime and Daily.co as the WebRTC transport plus hosted control plane. The pitch is an OSS framework on managed infrastructure: Pipecat under the hood, with Daily.co handling session orchestration, observability, and the WebRTC SFU.

Architecture: Daily Bots runs Pipecat as the agent runtime with Daily.co’s WebRTC transport. The hosted control plane handles deployment, session orchestration, observability, and scaling. STT, LLM, and TTS services are BYOK or routed through Daily.co’s managed integrations. Telephony integrations connect through SIP providers like Twilio.

Pricing: Daily Bots is billed via the Daily.co platform on usage-based pricing. The free tier covers prototyping; paid plans cover production volume.

Best for: Pick Daily Bots when you want Pipecat’s framework primitives but do not want to operate WebRTC infrastructure. Buying signal: existing Daily.co usage, a desire for OSS framework code, and a willingness to pay for managed transport and orchestration.

Skip if: Skip Daily Bots if your team needs to run WebRTC with a different SFU or if your transport needs are atypical (Twilio Media Streams without Daily.co, embedded devices, custom WebRTC). Also skip it if you want a fully managed voice platform with telephony, simulator, and analytics out of the box; Vapi or Retell fit better there.

5. FutureAGI: Best for voice eval, simulation, and observability on top of any runtime

Open source. Self-hostable. Hosted cloud option.

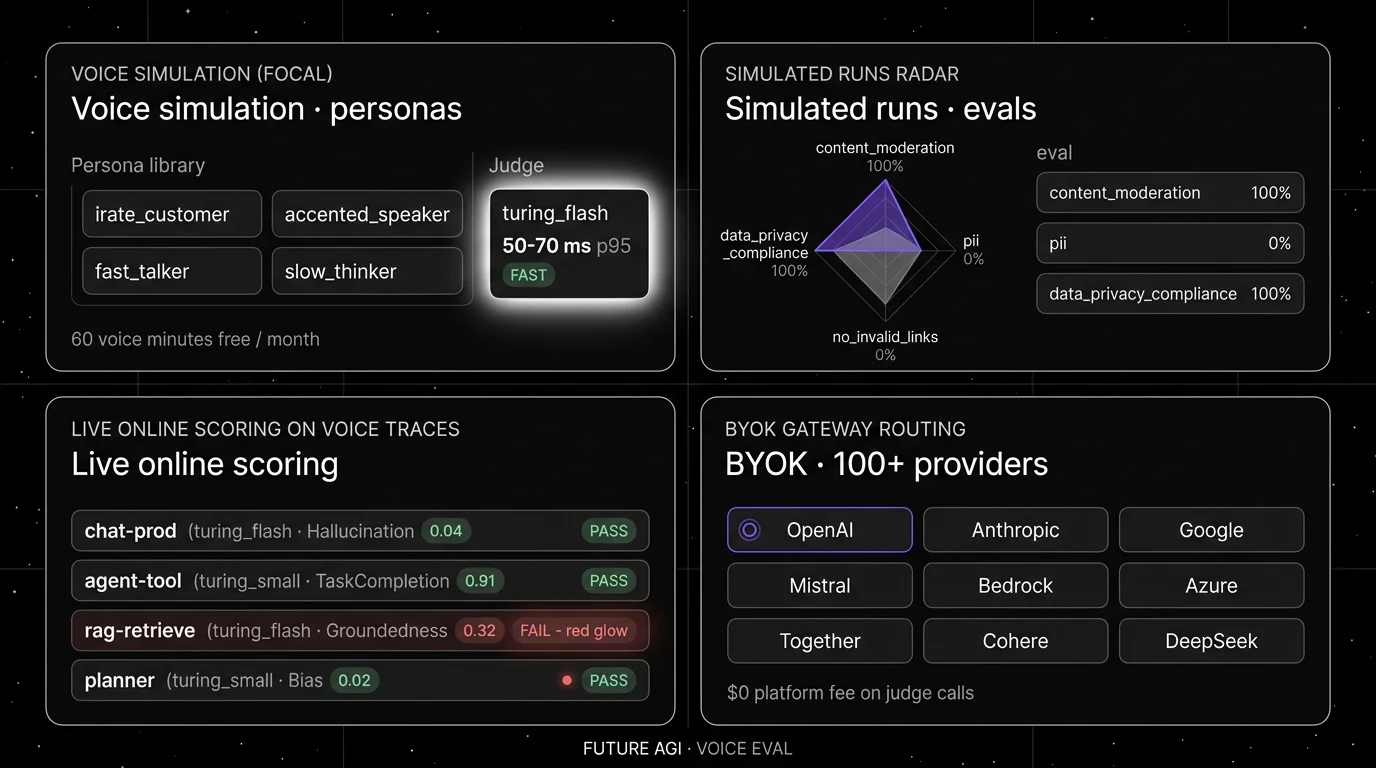

FutureAGI is the right alternative when the gap is not the runtime but the loop around the runtime. Voice agents fail in unique ways: barge-in handling, accent drift, retrieval misses on the wrong turn, hallucinated facts under interruption, and TTS cutoff at the wrong word. FutureAGI runs simulated calls in pre-prod, scores every voice trace with the same evaluator that judges production, and feeds failing turns back into prompts as labeled examples.
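
One way to picture simulated calls is a replayed transcript with failure modes injected into it. The helper below is hypothetical and is not FutureAGI's simulator API. It takes recorded turns and, at a seeded rate, cuts agent turns in half and appends a user interruption to approximate barge-in. Accent drift or STT noise would be further transforms of the same shape.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Turn:
    speaker: str  # "user" or "agent"
    text: str


def inject_barge_in(turns: list[Turn], rate: float, seed: int = 0,
                    interruption: str = "sorry, wait") -> list[Turn]:
    """Return a copy of `turns` in which some agent turns are cut off by the user."""
    rng = random.Random(seed)  # seeded so a failing scenario can be replayed exactly
    out: list[Turn] = []
    for turn in turns:
        if turn.speaker == "agent" and rng.random() < rate:
            words = turn.text.split()
            cut = max(1, len(words) // 2)  # agent is interrupted halfway through
            out.append(Turn("agent", " ".join(words[:cut])))
            out.append(Turn("user", interruption))  # hypothetical interruption text
        else:
            out.append(turn)
    return out
```

Because the perturbation is seeded, the same failing scenario can be replayed after a prompt change. That repeatability is what turns an ad hoc QA script into a regression test.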

Architecture: what closes, not what ships. The public repo is Apache 2.0 and self-hostable. Voice simulation runs personas through your runtime and replays real production traces. Each turn is scored with span-attached evaluators across groundedness, task completion, refusal handling, latency, and conversation drift. Turing eval models include turing_flash (50 to 70 ms p95 for guardrail screening, 2 to 8 credits per call), turing_small (200 to 400 ms, 6 to 12 credits), and turing_large (3 to 5 s, 10 to 30 credits, multimodal across text, image, audio, and PDF). Full eval templates typically complete in about 1 to 2 seconds depending on template complexity, model routing, and input size. traceAI emits OpenTelemetry GenAI semconv spans that live next to your STT, LLM, and TTS spans, so a barge-in failure surfaces inside the trace tree. The plumbing under it (Django, React, the Go-based Agent Command Center gateway, traceAI under Apache 2.0, Postgres, ClickHouse, Redis, object storage, workers, Temporal, OTel across Python, TypeScript, Java, and C#) exists so the loop closes without manual export.
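
The latency figures above matter because a voice loop has two kinds of evaluation. Inline screening must fit inside a turn, while offline scoring can take seconds. The routing sketch below makes two assumptions: the upper end of each quoted range is the worst case, and the tiers are ordered by capability. The function is hypothetical and not part of any FutureAGI SDK.

```python
from typing import Optional

# (tier name, assumed worst-case latency in ms, described as multimodal in the text?)
EVAL_TIERS = [
    ("turing_flash", 70, False),
    ("turing_small", 400, False),
    ("turing_large", 5000, True),
]


def pick_eval_tier(latency_budget_ms: float, needs_multimodal: bool = False) -> Optional[str]:
    """Return the most capable tier whose worst-case latency fits the budget, else None."""
    chosen = None
    for name, worst_ms, multimodal in EVAL_TIERS:
        if worst_ms <= latency_budget_ms and (multimodal or not needs_multimodal):
            chosen = name  # later entries are assumed more capable, so keep overwriting
    return chosen
```

Under these assumptions, a 100 ms inline guardrail budget gets `turing_flash`. Post-call scoring with a multi-second budget can use `turing_large`, the only tier the text describes as multimodal.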

Pricing: FutureAGI starts at $0/month. The free tier includes 60 voice simulation minutes, 1 million text simulation tokens, 50 GB tracing and storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, unlimited datasets, unlimited prompts, unlimited dashboards, 3 annotation queues, 3 monitors, unlimited team members, and unlimited projects. Voice simulation after free is $0.08 per minute. Boost is $250 per month, Scale is $750 per month, Enterprise starts at $2,000 per month.

Best for: Pick FutureAGI when the runtime is already chosen (LiveKit, Pipecat, Vapi, Retell) and the gap is pre-prod simulation, span-attached voice evals, and a closed loop from production failure to next release’s regression test. Buying signal: voice agent failures repeat across releases because eval lives in a notebook.

Skip if: Skip FutureAGI if your immediate need is a voice runtime. FutureAGI is not a runtime. Skip it if you do not run agents in production yet; the eval loop is most useful once real traffic generates real failure modes.

Decision framework: Choose X if…

- Choose Pipecat if Python and OSS framework control are non-negotiable. Buying signal: multi-provider STT or TTS strategy, FastAPI services. Pairs with: Daily.co transport, Twilio Media Streams, custom processors.

- Choose Vapi if telephony plus simulator out of the box matters more than framework control. Buying signal: small to mid-sized team, voice agent as a product. Pairs with: BYO models, third-party API tools.

- Choose Retell if the buying signal is call-center deployment. Buying signal: warm transfer, supervisors, queues, CRM workflows. Pairs with: Salesforce, Zendesk, HubSpot.

- Choose Daily Bots if Pipecat plus Daily.co transport plus hosted control fits the team. Buying signal: existing Daily.co usage, a need for managed WebRTC. Pairs with: Pipecat processors, Twilio SIP for telephony.

- Choose FutureAGI when the runtime is already chosen and the loop is the gap. Buying signal: production voice failures must become regression tests. Pairs with: traceAI, OTel GenAI semconv, BYOK judges.

Common mistakes when picking a LiveKit alternative

- Treating “real-time” as the only metric. Latency is necessary but not sufficient. A voice agent that responds in 500 ms but mishandles barge-in still fails. Test full conversation patterns, not just first-response latency.

- Skipping simulation. Voice agents fail under accent drift, network jitter, partial STT outputs, and barge-in. A pre-prod simulator that replays real call transcripts with injected failure modes catches more than human QA alone.

- Picking by integration logos. Verify your specific STT, TTS, and LLM combination. Provider rate limits, codec support, streaming-versus-batch differences, and timeout defaults change behavior between vendors.

- Ignoring observability format. If your platform emits its own non-OTel format, your downstream eval, monitoring, and incident review tools must adapt or stay separate. OTel GenAI semconv compatibility matters for cross-team analytics.

- Pricing only the platform fee. Real cost equals platform fee plus STT minutes plus TTS characters plus LLM tokens plus telephony minutes plus storage retention plus any per-session fee. A cheaper platform can lose if every retry triggers a new STT session.
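
The pricing point is easy to underestimate until the terms are written out. Below is a minimal per-call cost model. Every rate is a placeholder rather than a vendor quote. The per-STT-session fee is a hypothetical stand-in for whatever charge a provider applies when a session opens. The model shows how a platform with a lower per-minute fee can end up more expensive once retries multiply STT sessions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RateCard:
    """Per-unit prices in USD. All values used with this are placeholders."""
    platform_per_min: float
    stt_per_min: float
    tts_per_1k_chars: float
    llm_per_1k_tokens: float
    telephony_per_min: float
    per_stt_session_fee: float = 0.0  # hypothetical charge each time an STT session opens


def call_cost(rates: RateCard, minutes: float, tts_chars: int,
              llm_tokens: int, stt_sessions: int = 1) -> float:
    """Total cost of one call: every metered component, not just the platform fee."""
    return (
        minutes * (rates.platform_per_min + rates.stt_per_min + rates.telephony_per_min)
        + stt_sessions * rates.per_stt_session_fee  # retries that reopen STT add up here
        + tts_chars / 1000 * rates.tts_per_1k_chars
        + llm_tokens / 1000 * rates.llm_per_1k_tokens
    )


flat = RateCard(0.05, 0.01, 0.02, 0.001, 0.01)
cheap = RateCard(0.03, 0.01, 0.02, 0.001, 0.01, per_stt_session_fee=0.05)
```

Take a 3-minute call with 2,000 TTS characters and 10,000 LLM tokens. At these placeholder rates it costs about $0.26 on `flat` and $0.25 on `cheap`. When three retries open four STT sessions, `cheap` rises to about $0.40. Storage retention and eval spend slot in as additional terms.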

What changed in the voice AI landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | LiveKit Agents 1.5.8 shipped | The latest 1.5.x release iterated on noise cancellation, end-of-turn detection, and inference credits. |
| Apr 2026 | Pipecat 1.1.0 released | The pipeline framework kept its release cadence on transports, services, and FrameProcessor primitives. |
| Mar 2026 | Cartesia and ElevenLabs Turbo TTS reached sub-200 ms first-byte latency | With fast streaming TTS, first-byte latency stopped dominating the latency budget in many stacks; teams still need to benchmark their full provider mix. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Voice eval, simulation, and gateway routing moved into the same loop. |
| Feb 2026 | Vapi expanded to 100+ languages | Multilingual voice agents became practical without per-language model swaps. |
| Jan 2026 | Daily Bots positioned Pipecat as the runtime | Pipecat-plus-Daily-Cloud became a clean managed alternative to LiveKit Cloud. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real call transcripts, including barge-in events, accent drift, retrieval misses, and tool-call failures. Replay the slice through each candidate framework with your STT, LLM, and TTS provider mix. Do not accept a vendor demo dataset.

- Measure reliability under load. Build a Reliability Decay Curve. The x-axis is concurrent calls. The y-axis tracks first-response latency (p50, p95, p99), dropped sessions, dropped TTS frames, retry count, and tool-call failure rate. Also track end-of-turn detection accuracy, barge-in handling, and time-to-detect for primary outages.

- Cost-adjust. Real cost equals platform fee plus STT minutes plus TTS characters plus LLM tokens plus telephony minutes plus eval token spend plus storage retention plus on-call labor. A cheaper voice platform can lose if every retry triggers a new STT session and your call duration distribution skews long.
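
The Reliability Decay Curve reduces to per-concurrency aggregation of load-test results. The sketch below assumes each run records one first-response latency in milliseconds per session, with `None` for a dropped session. It uses nearest-rank percentiles and covers only latency and drops. TTS frame drops, retries, and tool-call failures would be extra columns of the same kind.

```python
import math
from typing import Optional


def nearest_rank(sorted_vals: list[float], p: int) -> float:
    """Nearest-rank percentile of an already sorted, non-empty list."""
    k = max(1, math.ceil(p * len(sorted_vals) / 100))
    return sorted_vals[k - 1]


def decay_curve(runs: dict[int, list[Optional[float]]]) -> list[dict]:
    """One row per concurrency level: drop rate plus p50/p95/p99 first-response latency."""
    rows = []
    for concurrency in sorted(runs):
        samples = runs[concurrency]
        ok = sorted(s for s in samples if s is not None)  # completed sessions only
        row = {
            "concurrency": concurrency,
            "drop_rate": (len(samples) - len(ok)) / len(samples) if samples else 0.0,
        }
        for p in (50, 95, 99):
            row[f"p{p}"] = nearest_rank(ok, p) if ok else None
        rows.append(row)
    return rows
```

Plotting each column against `concurrency` gives the curve. The concurrency level where p99 or the drop rate bends upward is the number to compare across candidates.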

Sources

- LiveKit Agents repo

- LiveKit AgentsJS repo

- LiveKit Agents page

- Pipecat repo

- Pipecat docs

- Daily.co pricing

- Vapi pricing

- Retell pricing

- FutureAGI pricing

- FutureAGI repo

- traceAI repo


