In this session, we dive into how early-stage evaluation, during dataset preparation and prompt iteration, can help you build more reliable GenAI systems.

What you’ll learn:

- Why early evaluation is critical to catching issues before deployment

- How to run multi-modal evaluations across various model outputs

- How to set up custom metrics tailored to your use case

- How to use user feedback and error localization to improve model performance

- How to bring engineering discipline into your AI development process

This webinar is ideal for AI engineers, ML practitioners, and product teams looking to improve reliability, speed, and trust in their AI workflows.

Related Articles

Webinar 01: AI Failures & Smart Evaluation Techniques

Explore why modern AI evaluation is key to building reliable, safe AI agents, featuring smart strategies, failure lessons, and live demos.

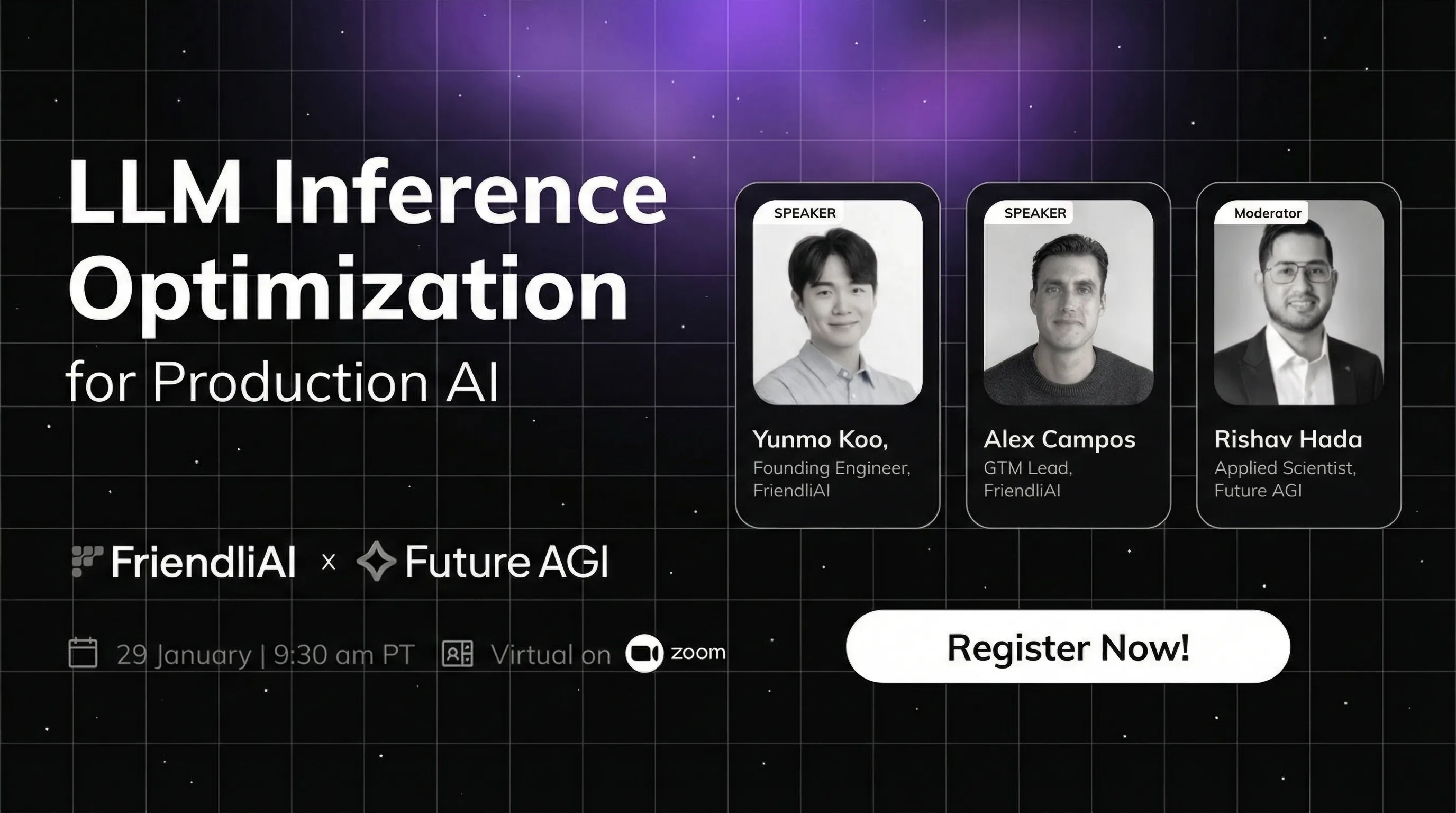

Inference Performance as a Competitive Advantage

Join our webinar on LLM inference optimization with FriendliAI. Learn how to reduce GPU costs by 90% and boost model-serving speed in production AI deployments.

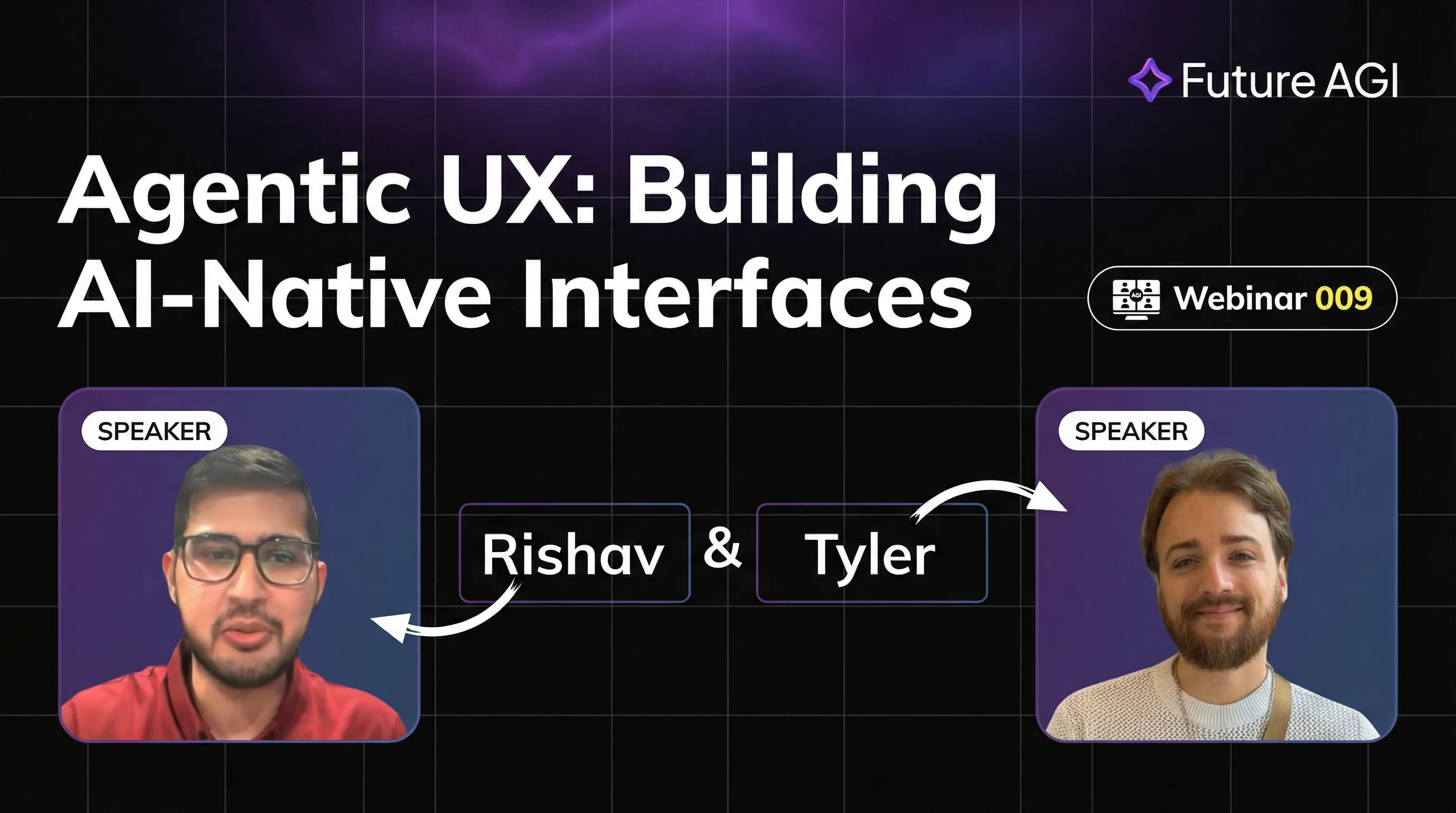

Agentic UX: Building AI-Native Interfaces

Master Agentic UX with the AG-UI protocol. Learn to design AI-native interfaces for seamless agent interactions and build real-time, collaborative AI experiences.