Meet Command Centre: The Control Plane for AI Agents in Production

Why routing, guardrails, and cost controls at the gateway layer fix the problems most teams blame on their LLM provider.

Overview

Most teams building LLM applications treat routing, guardrails, and cost controls as an afterthought. This webinar covers the gateway layer and why getting it wrong is what’s quietly breaking your production deployments, inflating your bills, and leaving your agents exposed to prompt injection attacks.

Watch the Webinar

About the Webinar

The gateway layer is the infrastructure that sits between your application and the LLM. When it is missing or misconfigured, production deployments break quietly, bills inflate, and agents are left exposed to prompt injection attacks. This session walks through each of those failure modes and how to fix them at the gateway.

👉 Who Should Watch

- ML engineers and platform teams shipping LLM applications or multi-agent systems in production

- Security and DevOps teams responsible for governing AI at the infrastructure level

- Engineering leads trying to understand why LLM costs keep rising despite cheaper inference pricing

🎯 What You’ll Learn

- Why agentic tasks trigger 10-20 LLM calls per request and how that compounds cost

- How 10,000-word system prompts burn tokens on every single call and how caching fixes it

- The difference between exact caching and semantic caching, and when each applies

- How complexity routing sends simple queries to cheap models without touching your application code

- Why sequential fallback chains fail in production and how circuit breakers prevent infinite loops

- How inline guardrails intercept prompt injection, PII, and toxicity in under 100 ms without a sidecar

- How to integrate existing guardrail systems like Llama Guard and Azure Content Safety into one gateway

- How RBAC and per-team budget caps give visibility into who is spending what, on which model
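
To make the first two points concrete, here is a rough back-of-the-envelope sketch of how a long system prompt compounds across the calls of a single agentic request, and what prompt caching could save. Every constant in it (tokens per word, price, cache discount) is an illustrative assumption, not a figure from the webinar or from any provider's price list.

```python
# Illustrative arithmetic only: all constants are assumptions, not webinar figures.
TOKENS_PER_WORD = 1.3        # rough rule of thumb for English text (assumption)
PRICE_PER_1K_INPUT = 0.003   # hypothetical input price, USD per 1,000 tokens
CACHED_DISCOUNT = 0.9        # hypothetical: cached prompt tokens cost 90% less


def system_prompt_cost(words: int, calls: int, cached: bool = False) -> float:
    """Input cost in USD of resending one system prompt on every call of a request."""
    tokens = words * TOKENS_PER_WORD
    full_price = tokens / 1000 * PRICE_PER_1K_INPUT
    if not cached:
        # Without caching, every call pays for the whole prompt again.
        return full_price * calls
    # With caching, the first call pays full price to populate the cache;
    # each later call pays only the discounted rate.
    return full_price + full_price * (1 - CACHED_DISCOUNT) * (calls - 1)


# A 10,000-word system prompt on a request that fans out into 15 LLM calls.
uncached = system_prompt_cost(10_000, 15)
cached = system_prompt_cost(10_000, 15, cached=True)
print(f"uncached: ${uncached:.3f} per request, cached: ${cached:.3f} per request")
```

Under these assumed numbers the system prompt alone costs roughly six times more per request without caching. Actual savings depend on your provider's pricing and cache behavior.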

💡 Key Insight

The cost and security problems most teams attribute to their LLM provider are actually gateway problems: missing routing intelligence, absent guardrails, and no spend attribution. The OWASP Top 10 for LLM Applications ranks prompt injection as the #1 risk, and a reported 73% of production deployments are currently exposed. Microsoft Copilot, Perplexity, and ServiceNow all learned this the hard way. The fix lives at the infrastructure layer, not the application layer.

🎙️ Speakers

NVJK Kartik — Data Scientist at Future AGI

Rishav Hada — Senior Applied Scientist at Future AGI

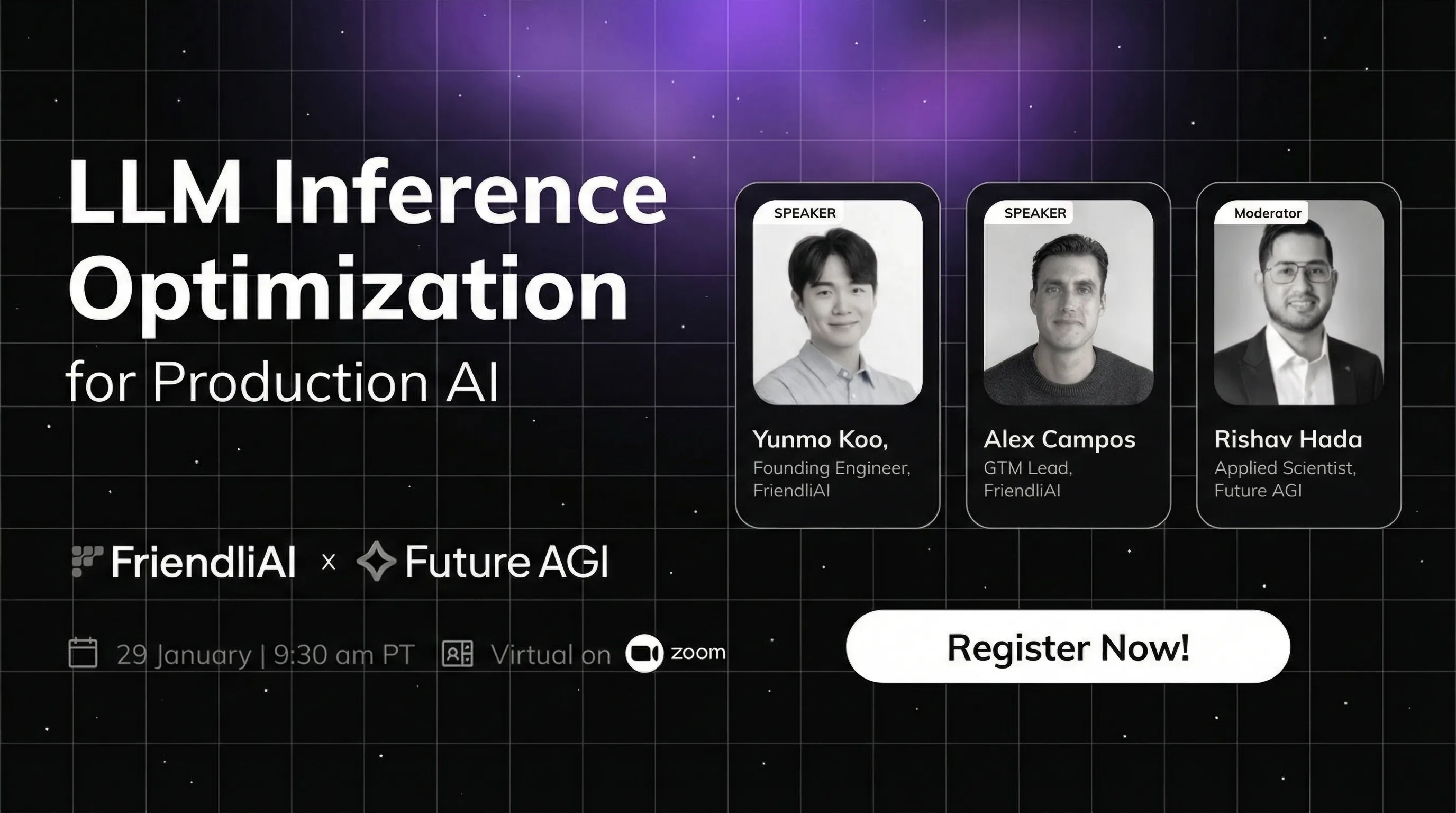

Join our webinar on LLM inference optimization with FriendliAI. Learn to reduce GPU costs 90%, boost model serving speed in production AI deployment.

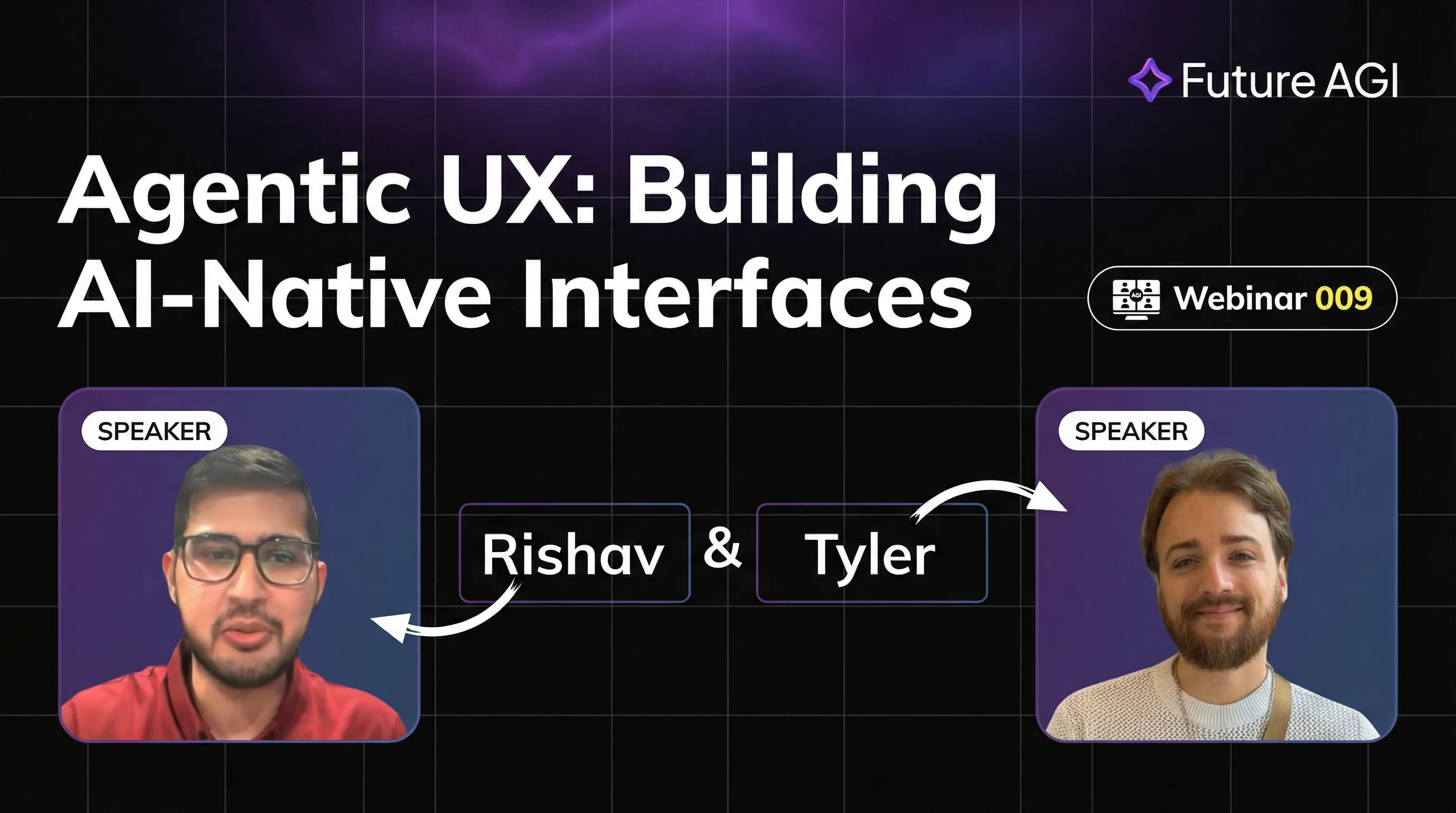

Master Agentic UX with AG-UI protocol. Learn to design AI-native interfaces for seamless agent interactions. Build real-time, collaborative AI experiences.

Build self-optimizing AI agents with eval-driven auto-optimization. Learn 6+ strategies to improve agent performance automatically-no manual tuning needed.